| Issue |

A&A

Volume 675, July 2023

|

|

|---|---|---|

| Article Number | A120 | |

| Number of page(s) | 32 | |

| Section | Cosmology (including clusters of galaxies) | |

| DOI | https://doi.org/10.1051/0004-6361/202346017 | |

| Published online | 07 July 2023 | |

Euclid preparation

XXVIII. Forecasts for ten different higher-order weak lensing statistics

1

Université Paris-Saclay, Université Paris Cité, CEA, CNRS, Astrophysique, Instrumentation et Modélisation Paris-Saclay, 91191 Gif-sur-Yvette, France

2

Institute for Particle Physics and Astrophysics, Dept. of Physics, ETH Zurich, Wolfgang-Pauli-Strasse 27, 8093 Zurich, Switzerland

3

Dipartimento di Fisica e Astronomia “Augusto Righi” – Alma Mater Studiorum Università di Bologna, Via Piero Gobetti 93/2, 40129 Bologna, Italy

4

INAF-Osservatorio di Astrofisica e Scienza dello Spazio di Bologna, Via Piero Gobetti 93/3, 40129 Bologna, Italy

5

INFN-Sezione di Bologna, Viale Berti Pichat 6/2, 40127 Bologna, Italy

6

University Observatory, Faculty of Physics, Ludwig-Maximilians-Universität, Scheinerstr. 1, 81679 Munich, Germany

7

AIM, CEA, CNRS, Université Paris-Saclay, Université de Paris, 91191 Gif-sur-Yvette, France

8

Argelander-Institut für Astronomie, Universität Bonn, Auf dem Hügel 71, 53121 Bonn, Germany

9

INFN-Sezione di Roma, Piazzale Aldo Moro, 2 – c/o Dipartimento di Fisica, Edificio G. Marconi, 00185 Roma, Italy

10

INAF-Osservatorio Astronomico di Roma, Via Frascati 33, 00078 Monteporzio Catone, Italy

11

Johns Hopkins University, 3400 North Charles Street, Baltimore, MD 21218, USA

12

School of Mathematics, Statistics and Physics, Newcastle University, Herschel Building, Newcastle-upon-Tyne NE1 7RU, UK

13

Departamento de Física, Faculdade de Ciências, Universidade de Lisboa, Edifício C8, Campo Grande, 1749-016 Lisboa, Portugal

14

Instituto de Astrofísica e Ciências do Espaço, Faculdade de Ciências, Universidade de Lisboa, Tapada da Ajuda, 1349-018 Lisboa, Portugal

15

Aix-Marseille Université, CNRS, CNES, LAM, Marseille, France

16

Institute of Physics, Laboratory of Astrophysics, École Polytechnique Fédérale de Lausanne (EPFL), Observatoire de Sauverny, 1290 Versoix, Switzerland

17

Dipartimento di Fisica, Sapienza Università di Roma, Piazzale Aldo Moro 2, 00185 Roma, Italy

18

Université Paris-Saclay, CNRS, Institut d’astrophysique spatiale, 91405 Orsay, France

19

INAF-Osservatorio Astrofisico di Torino, Via Osservatorio 20, 10025 Pino Torinese, (TO), Italy

20

Dipartimento di Fisica, Università di Genova, Via Dodecaneso 33, 16146 Genova, Italy

21

INFN-Sezione di Roma Tre, Via della Vasca Navale 84, 00146 Roma, Italy

22

Department of Physics “E. Pancini”, University Federico II, Via Cinthia 6, 80126 Napoli, Italy

23

Instituto de Astrofísica e Ciências do Espaço, Universidade do Porto, CAUP, Rua das Estrelas, 4150-762 Porto, Portugal

24

Dipartimento di Fisica, Universitá degli Studi di Torino, Via P. Giuria 1, 10125 Torino, Italy

25

INFN-Sezione di Torino, Via P. Giuria 1, 10125 Torino, Italy

26

INAF-IASF Milano, Via Alfonso Corti 12, 20133 Milano, Italy

27

Institut de Física d’Altes Energies (IFAE), The Barcelona Institute of Science and Technology, Campus UAB, 08193 Bellaterra, (Barcelona), Spain

28

Port d’Informació Científica, Campus UAB, C. Albareda s/n, 08193 Bellaterra, (Barcelona), Spain

29

Institut d’Estudis Espacials de Catalunya (IEEC), Carrer Gran Capitá 2-4, 08034 Barcelona, Spain

30

Institute of Space Sciences (ICE, CSIC), Campus UAB, Carrer de Can Magrans, s/n, 08193 Barcelona, Spain

31

INAF-Osservatorio Astronomico di Capodimonte, Via Moiariello 16, 80131 Napoli, Italy

32

INFN section of Naples, Via Cinthia 6, 80126 Napoli, Italy

33

Dipartimento di Fisica e Astronomia “Augusto Righi” – Alma Mater Studiorum Universitá di Bologna, Viale Berti Pichat 6/2, 40127 Bologna, Italy

34

Centre National d’Études Spatiales, Toulouse, France

35

Institut national de physique nucléaire et de physique des particules, 3 rue Michel-Ange, 75794 Paris Cédex 16, France

36

Institute for Astronomy, University of Edinburgh, Royal Observatory, Blackford Hill, Edinburgh EH9 3HJ, UK

37

Jodrell Bank Centre for Astrophysics, Department of Physics and Astronomy, University of Manchester, Oxford Road, Manchester M13 9PL, UK

38

ESAC/ESA, Camino Bajo del Castillo, s/n., Urb. Villafranca del Castillo, 28692 Villanueva de la Cañada, Madrid, Spain

39

European Space Agency/ESRIN, Largo Galileo Galilei 1, 00044 Frascati, Roma, Italy

40

Mullard Space Science Laboratory, University College London, Holmbury St Mary, Dorking, Surrey RH5 6NT, UK

41

Instituto de Astrofísica e Ciências do Espaço, Faculdade de Ciências, Universidade de Lisboa, Campo Grande, 1749-016 Lisboa, Portugal

42

Department of Astronomy, University of Geneva, ch. d’Ecogia 16, 1290 Versoix, Switzerland

43

INAF-Istituto di Astrofisica e Planetologia Spaziali, Via del Fosso del Cavaliere, 100, 00100 Roma, Italy

44

Univ. Lyon, Univ. Claude Bernard Lyon 1, CNRS/IN2P3, IP2I Lyon, UMR 5822, 69622 Villeurbanne, France

45

INAF-Osservatorio Astronomico di Trieste, Via G. B. Tiepolo 11, 34143 Trieste, Italy

46

INAF-Osservatorio Astronomico di Padova, Via dell’Osservatorio 5, 35122 Padova, Italy

47

Max Planck Institute for Extraterrestrial Physics, Giessenbachstr. 1, 85748 Garching, Germany

48

Universitäts-Sternwarte München, Fakultät für Physik, Ludwig-Maximilians-Universität München, Scheinerstrasse 1, 81679 München, Germany

49

Leiden Observatory, Leiden University, Niels Bohrweg 2, 2333 CA, Leiden, The Netherlands

50

Jet Propulsion Laboratory, California Institute of Technology, 4800 Oak Grove Drive, Pasadena, CA 91109, USA

51

Technical University of Denmark, Elektrovej 327, 2800 Kgs. Lyngby, Denmark

52

Cosmic Dawn Center (DAWN), Copenhagen, Denmark

53

Institut d’Astrophysique de Paris, 98bis Boulevard Arago, 75014 Paris, France

54

Max-Planck-Institut für Astronomie, Königstuhl 17, 69117 Heidelberg, Germany

55

NASA Goddard Space Flight Center, Greenbelt, MD 20771, USA

56

Université de Genève, Département de Physique Théorique and Centre for Astroparticle Physics, 24 quai Ernest-Ansermet, 1211 Genève 4, Switzerland

57

Department of Physics, PO Box 64, 00014 University of Helsinki, Finland

58

Helsinki Institute of Physics, Gustaf Hällströmin katu 2, University of Helsinki, Helsinki, Finland

59

Institute of Theoretical Astrophysics, University of Oslo, PO Box 1029 Blindern, 0315 Oslo, Norway

60

NOVA optical infrared instrumentation group at ASTRON, Oude Hoogeveensedijk 4, 7991 PD Dwingeloo, The Netherlands

61

Department of Physics, Institute for Computational Cosmology, Durham University, South Road DH1 3LE, UK

62

Université Paris Cité, CNRS, Astroparticule et Cosmologie, 75013 Paris, France

63

Institut d’Astrophysique de Paris, UMR 7095, CNRS, and Sorbonne Université, 98 bis boulevard Arago, 75014 Paris, France

64

European Space Agency/ESTEC, Keplerlaan 1, 2201 AZ Noordwijk, The Netherlands

65

Kapteyn Astronomical Institute, University of Groningen, PO Box 800, 9700 AV Groningen, The Netherlands

66

Department of Physics and Astronomy, University of Aarhus, Ny Munkegade 120, 8000 Aarhus C, Denmark

67

Space Science Data Center, Italian Space Agency, Via del Politecnico snc, 00133 Roma, Italy

68

Institute of Space Science, Bucharest 077125, Romania

69

Dipartimento di Fisica e Astronomia “G.Galilei”, Universitá di Padova, Via Marzolo 8, 35131 Padova, Italy

70

INFN-Padova, Via Marzolo 8, 35131 Padova, Italy

71

Dipartimento di Fisica e Astronomia, Universitá di Bologna, Via Gobetti 93/2, 40129 Bologna, Italy

72

Departamento de Física, FCFM, Universidad de Chile, Blanco Encalada 2008, Santiago, Chile

73

Institut für Astro- und Teilchenphysik, Universität Innsbruck, Technikerstr. 25/8, 6020 Innsbruck, Austria

74

Aix-Marseille Université, CNRS/IN2P3, CPPM, Marseille, France

75

Institut de Ciencies de l’Espai (IEEC-CSIC), Campus UAB, Carrer de Can Magrans, s/n Cerdanyola del Vallés, 08193 Barcelona, Spain

76

Centro de Investigaciones Energéticas, Medioambientales y Tecnológicas (CIEMAT), Avenida Complutense 40, 28040 Madrid, Spain

77

Universidad Politécnica de Cartagena, Departamento de Electrónica y Tecnología de Computadoras, 30202 Cartagena, Spain

78

Institut de Recherche en Astrophysique et Planétologie (IRAP), Université de Toulouse, CNRS, UPS, CNES, 14 Av. Édouard Belin, 31400 Toulouse, France

79

Infrared Processing and Analysis Center, California Institute of Technology, Pasadena, CA 91125, USA

80

INAF-Osservatorio Astronomico di Brera, Via Brera 28, 20122 Milano, Italy

81

Instituto de Astrofísica de Canarias, Calle Vía Láctea s/n, 38204 San Cristóbal de La Laguna, Tenerife, Spain

82

Center for Computational Astrophysics, Flatiron Institute, 162 5th Avenue, 10010 New York, NY, USA

83

School of Physics and Astronomy, Cardiff University, The Parade, Cardiff CF24 3AA, UK

84

Department of Physics and Helsinki Institute of Physics, Gustaf Hällströmin katu 2, 00014 University of Helsinki, Finland

85

Dipartimento di Fisica “Aldo Pontremoli”, Universitá degli Studi di Milano, Via Celoria 16, 20133 Milano, Italy

86

INFN-Sezione di Milano, Via Celoria 16, 20133 Milano, Italy

87

Junia, EPA department, 59000 Lille, France

88

Instituto de Física Teórica UAM-CSIC, Campus de Cantoblanco, 28049 Madrid, Spain

89

CERCA/ISO, Department of Physics, Case Western Reserve University, 10900 Euclid Avenue, Cleveland, OH 44106, USA

90

Laboratoire de Physique de l’École Normale Supérieure, ENS, Université PSL, CNRS, Sorbonne Université, 75005 Paris, France

91

Observatoire de Paris, Université PSL, Sorbonne Université, LERMA, 75005 Paris, France

92

Astrophysics Group, Blackett Laboratory, Imperial College London, London SW7 2AZ, UK

93

SISSA, International School for Advanced Studies, Via Bonomea 265, 34136 Trieste, TS, Italy

94

IFPU, Institute for Fundamental Physics of the Universe, Via Beirut 2, 34151 Trieste, Italy

95

INFN, Sezione di Trieste, Via Valerio 2, 34127 Trieste, TS, Italy

96

Departamento de Astrofísica, Universidad de La Laguna, 38206 La Laguna, Tenerife, Spain

97

Dipartimento di Fisica e Scienze della Terra, Universitá degli Studi di Ferrara, Via Giuseppe Saragat 1, 44122 Ferrara, Italy

98

Istituto Nazionale di Fisica Nucleare, Sezione di Ferrara, Via Giuseppe Saragat 1, 44122 Ferrara, Italy

99

Institut de Physique Théorique, CEA, CNRS, Université Paris-Saclay, 91191 Gif-sur-Yvette Cedex, France

100

Dipartimento di Fisica – Sezione di Astronomia, Universitá di Trieste, Via Tiepolo 11, 34131 Trieste, Italy

101

NASA Ames Research Center, Moffett Field, CA 94035, USA

102

INAF, Istituto di Radioastronomia, Via Piero Gobetti 101, 40129 Bologna, Italy

103

INFN-Bologna, Via Irnerio 46, 40126 Bologna, Italy

104

Université Côte d’Azur, Observatoire de la Côte d’Azur, CNRS, Laboratoire Lagrange, Bd de l’Observatoire, CS 34229, 06304 Nice cedex 4, France

105

Institute for Theoretical Particle Physics and Cosmology (TTK), RWTH Aachen University, 52056 Aachen, Germany

106

Institute for Astronomy, University of Hawaii, 2680 Woodlawn Drive, Honolulu, HI 96822, USA

107

Department of Physics & Astronomy, University of California Irvine, Irvine, CA 92697, USA

108

University of Lyon, UCB Lyon 1, CNRS/IN2P3, IUF, IP2I Lyon, France

109

INFN-Sezione di Genova, Via Dodecaneso 33, 16146 Genova, Italy

110

Department of Astronomy & Physics and Institute for Computational Astrophysics, Saint Mary’s University, 923 Robie Street, Halifax Nova Scotia B3H 3C3, Canada

111

Ruhr University Bochum, Faculty of Physics and Astronomy, Astronomical Institute (AIRUB), German Centre for Cosmological Lensing (GCCL), 44780 Bochum, Germany

112

Univ. Grenoble Alpes, CNRS, Grenoble INP, LPSC-IN2P3, 53, Avenue des Martyrs, 38000 Grenoble, France

113

Department of Physics and Astronomy, University College London, Gower Street, London WC1E 6BT, UK

114

Department of Physics and Astronomy, Vesilinnantie 5, 20014 University of Turku, Finland

115

University of Applied Sciences and Arts of Northwestern Switzerland, School of Engineering, 5210 Windisch, Switzerland

116

Centro de Astrofísica da Universidade do Porto, Rua das Estrelas, 4150-762 Porto, Portugal

117

Institute of Cosmology and Gravitation, University of Portsmouth, Portsmouth PO1 3FX, UK

118

Department of Mathematics and Physics E. De Giorgi, University of Salento, Via per Arnesano, CP-I93, 73100 Lecce, Italy

119

INFN, Sezione di Lecce, Via per Arnesano, CP-193, 73100 Lecce, Italy

120

INAF-Sezione di Lecce, c/o Dipartimento Matematica e Fisica, Via per Arnesano, 73100 Lecce, Italy

121

Institute for Computational Science, University of Zurich, Winterthurerstrasse 190, 8057 Zurich, Switzerland

122

Higgs Centre for Theoretical Physics, School of Physics and Astronomy, The University of Edinburgh, Edinburgh EH9 3FD, UK

123

Université St Joseph; Faculty of Sciences, Beirut, Lebanon

124

Institut für Theoretische Physik, University of Heidelberg, Philosophenweg 16, 69120 Heidelberg, Germany

125

Department of Astrophysical Sciences, Peyton Hall, Princeton University, Princeton, NJ 08544, USA

Received:

30

January

2023

Accepted:

11

April

2023

Recent cosmic shear studies have shown that higher-order statistics (HOS) developed by independent teams now outperform standard two-point estimators in terms of statistical precision thanks to their sensitivity to the non-Gaussian features of large-scale structure. The aim of the Higher-Order Weak Lensing Statistics (HOWLS) project is to assess, compare, and combine the constraining power of ten different HOS on a common set of Euclid-like mocks, derived from N-body simulations. In this first paper of the HOWLS series, we computed the nontomographic (Ωm, σ8) Fisher information for the one-point probability distribution function, peak counts, Minkowski functionals, Betti numbers, persistent homology Betti numbers and heatmap, and scattering transform coefficients, and we compare them to the shear and convergence two-point correlation functions in the absence of any systematic bias. We also include forecasts for three implementations of higher-order moments, but these cannot be robustly interpreted as the Gaussian likelihood assumption breaks down for these statistics. Taken individually, we find that each HOS outperforms the two-point statistics by a factor of around two in the precision of the forecasts with some variations across statistics and cosmological parameters. When combining all the HOS, this increases to a 4.5 times improvement, highlighting the immense potential of HOS for cosmic shear cosmological analyses with Euclid. The data used in this analysis are publicly released with the paper.

Key words: gravitational lensing: weak / methods: statistical / surveys / large-scale structure of Universe / cosmological parameters

© ESO 2023

Open Access article, published by EDP Sciences, under the terms of the Creative Commons Attribution License (http://creativecommons.org/licenses/by/4.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Open Access article, published by EDP Sciences, under the terms of the Creative Commons Attribution License (http://creativecommons.org/licenses/by/4.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

This article is published in open access under the Subscribe to Open model. Subscribe to A&A to support open access publication.

1. Introduction

It is a well-established fact that the Universe is undergoing a phase of accelerated expansion (e.g., Riess et al. 1998; Perlmutter et al. 1999). Understanding what is driving this acceleration in the framework of a spatially flat universe is one of the (if not the) greatest challenges of modern-day cosmology. The concordance Λ cold dark matter (ΛCDM) model performs excellently in fitting the available data, yet the cosmological constant Λ is far from satisfactory from a theoretical point of view. To make things harder for ΛCDM, recent tensions have emerged due to an inconsistency between the values of some parameters from independent data measuring the same quantities in radically different ways. The most debated case is the discrepancy between the Hubble constant H0 as measured from local probes and as inferred from cosmological data sets (see, e.g., Di Valentino et al. 2021, for a review). Another, albeit less significant, example is the disagreement between the cosmic microwave background (CMB) and lensing estimates of the growth of structure parameter,  (e.g., Hildebrandt et al. 2017; Heymans et al. 2021; Amon et al. 2022). Although some unknown systematic effects could have been missed in the analysis, such tensions may also be the first signs that alternative models are needed, relying on either dark energy in a general relativity framework or based on modified gravity (see, e.g., Joyce et al. 2016, and references therein). Discriminating among the plethora of viable candidates is the aim of Stage IV surveys such as the Dark Energy Spectroscopic Instrument (DESI, DESI Collaboration 2016), the Prime Focus Spectrograph (PFS, Takada et al. 2014), the Vera C. Rubin Observatory Legacy Survey of Space and Time (LSST, Ivezić et al. 2019), Euclid (Laureijs et al. 2011), SPHEREx (Doré et al. 2014), and the Nancy Grace Roman Space Telescope (Spergel et al. 2015).

(e.g., Hildebrandt et al. 2017; Heymans et al. 2021; Amon et al. 2022). Although some unknown systematic effects could have been missed in the analysis, such tensions may also be the first signs that alternative models are needed, relying on either dark energy in a general relativity framework or based on modified gravity (see, e.g., Joyce et al. 2016, and references therein). Discriminating among the plethora of viable candidates is the aim of Stage IV surveys such as the Dark Energy Spectroscopic Instrument (DESI, DESI Collaboration 2016), the Prime Focus Spectrograph (PFS, Takada et al. 2014), the Vera C. Rubin Observatory Legacy Survey of Space and Time (LSST, Ivezić et al. 2019), Euclid (Laureijs et al. 2011), SPHEREx (Doré et al. 2014), and the Nancy Grace Roman Space Telescope (Spergel et al. 2015).

In this context, the Euclid mission will play a pivotal role in cosmology by measuring the dark energy equation of state w and the S8 parameter with exquisite precision and accuracy (see, e.g., Laureijs et al. 2011; Euclid Collaboration 2020). This will be achieved by exploiting the clustering of galaxies as well as cosmic shear: the Weak gravitational Lensing (WL) signal of galaxies due to the deflection of light rays by the large-scale structure. In this article, we focus on optimizing the extraction of cosmological information from the cosmic shear probe.

The classical cosmic shear analysis involves measuring the correlations between ellipticities of pairs of galaxies as a function of their separation; an estimator called the two-point correlation functions of the shear (γ-2PCF). Although it benefits from a comprehensive theoretical description, this estimator is only sensitive to the multiscale variance of the lensing field. However, the gravitational collapse of the matter perturbations introduces non-Gaussian features in the shear field. As a consequence, the γ-2PCF and any estimator probing the field up to second order do not contain all cosmological information. This is seen, for example, in the degeneracies between parameters, such as the one between Ωm and σ8, which means the γ-2PCF can only efficiently constrain their combination  . To recover the extra information contained in nonlinear scales, many non-Gaussian estimators – also referred as higher-order statistics (HOS) in contrast to two-point statistics – have been introduced in the literature. Such HOS include higher-order moments (e.g., Van Waerbeke et al. 2013; Gatti et al. 2022; Porth & Smith 2021), peak counts (e.g., Marian et al. 2009; Dietrich & Hartlap 2010; Kacprzak et al. 2016; Martinet et al. 2018, 2021b; Harnois-Déraps et al. 2021), one-point probability distributions (e.g., Barthelemy et al. 2020; Boyle et al. 2021; Liu & Madhavacheril 2019; Thiele et al. 2020), Minkowski functionals (e.g., Kratochvil et al. 2012; Petri et al. 2015; Vicinanza et al. 2019; Parroni et al. 2020), Betti numbers (e.g., Feldbrugge et al. 2019; Parroni et al. 2021), persistent homology (e.g., Heydenreich et al. 2021, 2022), scattering transform coefficients (e.g., Cheng et al. 2020; Cheng & Ménard 2021b), as well as map-level inference (Porqueres et al. 2022; Boruah et al. 2022). Despite their increased complexity, which often requires resorting to numerical simulations to model their cosmology dependence, all the references above have demonstrated that these new statistics have superior constraining power compared to the γ-2PCF. However, each of these new HOS is usually developed and studied by independent teams, which renders a fair comparison between them extremely difficult.

. To recover the extra information contained in nonlinear scales, many non-Gaussian estimators – also referred as higher-order statistics (HOS) in contrast to two-point statistics – have been introduced in the literature. Such HOS include higher-order moments (e.g., Van Waerbeke et al. 2013; Gatti et al. 2022; Porth & Smith 2021), peak counts (e.g., Marian et al. 2009; Dietrich & Hartlap 2010; Kacprzak et al. 2016; Martinet et al. 2018, 2021b; Harnois-Déraps et al. 2021), one-point probability distributions (e.g., Barthelemy et al. 2020; Boyle et al. 2021; Liu & Madhavacheril 2019; Thiele et al. 2020), Minkowski functionals (e.g., Kratochvil et al. 2012; Petri et al. 2015; Vicinanza et al. 2019; Parroni et al. 2020), Betti numbers (e.g., Feldbrugge et al. 2019; Parroni et al. 2021), persistent homology (e.g., Heydenreich et al. 2021, 2022), scattering transform coefficients (e.g., Cheng et al. 2020; Cheng & Ménard 2021b), as well as map-level inference (Porqueres et al. 2022; Boruah et al. 2022). Despite their increased complexity, which often requires resorting to numerical simulations to model their cosmology dependence, all the references above have demonstrated that these new statistics have superior constraining power compared to the γ-2PCF. However, each of these new HOS is usually developed and studied by independent teams, which renders a fair comparison between them extremely difficult.

The Higher-Order Weak Lensing Statistics (HOWLS) project has been initiated to remedy this situation. One of its main aims is, indeed, to test HOS probes by relying on the same mock data, here mimicking those that Euclid will make available. In contrast to some early (e.g., Pires et al. 2009; Hilbert et al. 2012) and recent efforts in the literature (e.g., Zürcher et al. 2022), HOWLS was designed as a challenge to the community, thus attracting contributions from the largest team of HOS experts ever. Individual teams within the Euclid community have applied 24 different algorithms to the same mocks for a total of two second-order statistics (the shear and convergence two-point correlation functions γ-2PCF and κ-2PCF) and ten different HOS: convergence one-point probability distribution (κ-PDF), higher-order convergence moments (HOM), n-th order aperture mass moments  , aperture mass peak counts (peaks), convergence Minkowski functionals (MFs), convergence Betti numbers (BNs), aperture mass persistent homology Betti numbers (pers. BNs) and heatmap (pers. heat.), and convergence scattering transform coefficients (ST). Such a large number is unprecedented and offers the possibility of investigating which one (or which combination) is best suited to be coupled with the standard γ-2PCF probe to narrow down the constraints on cosmological parameters (CPs). Different HOS are in fact sensitive to different scales and features in the convergence (κ) maps and thus they couple to the γ-2PCF in their own way. Moreover, HOWLS can also check for correlations among the various HOS probes, revealing which ones are sufficiently uncorrelated such that their combination does indeed improve the total constraining power. It is also worth stressing that the present paper is only the first in a series. HOWLS will actually serve as a preparation for the application of WL HOS to the Euclid Survey, defining common tools and pipelines for the consortium.

, aperture mass peak counts (peaks), convergence Minkowski functionals (MFs), convergence Betti numbers (BNs), aperture mass persistent homology Betti numbers (pers. BNs) and heatmap (pers. heat.), and convergence scattering transform coefficients (ST). Such a large number is unprecedented and offers the possibility of investigating which one (or which combination) is best suited to be coupled with the standard γ-2PCF probe to narrow down the constraints on cosmological parameters (CPs). Different HOS are in fact sensitive to different scales and features in the convergence (κ) maps and thus they couple to the γ-2PCF in their own way. Moreover, HOWLS can also check for correlations among the various HOS probes, revealing which ones are sufficiently uncorrelated such that their combination does indeed improve the total constraining power. It is also worth stressing that the present paper is only the first in a series. HOWLS will actually serve as a preparation for the application of WL HOS to the Euclid Survey, defining common tools and pipelines for the consortium.

The HOWLS data set is based on the DUSTGRAIN-pathfinder simulations (Giocoli et al. 2018a), designed to model the cosmological dependence of every statistic, and on the Scinet LIght-Cones Simulations (SLICS, Harnois-Déraps et al. 2018) for estimating covariances. We have built realistic Euclid mocks out of these simulations, in particular mimicking the expected galaxy density, intrinsic ellipticities, and redshift distribution. For every mock, we built a convergence map following the Kaiser & Squires (1993) implementation described in Pires et al. (2020). We measured the γ-2PCF in the ellipticity catalogs; the κ-2PCF, κ-PDF, MFs, BNs, ST, and HOM from the convergence maps;  ,

,  , and pers. BNs and pers. heat. from the aperture mass calculated from the shear field; and peaks of aperture mass maps calculated from the reconstructed convergence fields. We then developed two independent analysis pipelines to compute the Fisher information and thus forecast the constraining power of HOS compared to two-point statistics.

, and pers. BNs and pers. heat. from the aperture mass calculated from the shear field; and peaks of aperture mass maps calculated from the reconstructed convergence fields. We then developed two independent analysis pipelines to compute the Fisher information and thus forecast the constraining power of HOS compared to two-point statistics.

This first paper in the HOWLS series is intended to introduce the data set (Sect. 2) and HOS (Sect. 3), and to conduct a Fisher analysis (Sect. 4) to compare them. Forecasts are presented and discussed in Sect. 5. We conclude in Sect. 6 by listing the refinements that we will include in the following HOWLS publications. The HOWLS data set and applied statistics are publicly released with this article1.

2. HOWLS data set

To perform a Fisher analysis, one needs to compute data vector (DV) derivatives with respect to individual CPs. We therefore run the DUSTGRAIN-pathfinder simulations, varying one parameter at a time among Ωm, σ8, and w, for four different values around the fiducial ones. We additionally used the DUSTGRAIN-pathfinder and SLICS to build the covariance matrix necessary to forecast parameter constraints. The simulations are summarized in Table 1 and described in detail below in Sect. 2.1 for DUSTGRAIN-pathfinder and Sect. 2.2 for SLICS.

Simulations used in HOWLS with CP values for Ωm, σ8, and w, and the numbers of realizations.

2.1. DUSTGRAIN-pathfinder simulations

The DUSTGRAIN-pathfinder suite consists of N-body simulations of volume (750 h−1 Mpc)3 filled with Np = 7683 particles, corresponding to a particle mass resolution mp of approximately 8 × 1010h−1 M⊙ (Giocoli et al. 2018a). The standard reference CPs have been set to values consistent with the results of the Planck-2015 (Planck Collaboration I 2016) cosmological data analysis, namely a matter density Ωm of 0.31345, a baryon density Ωb of 0.0491, Hubble constant H0 of 67.31 km s−1 Mpc−1, a scalar spectral index ns of 0.9658, and mean amplitude σ8 of the linear density fluctuations on the 8 h−1 Mpc scale of 0.842. Since the DUSTGRAIN-pathfinder simulations are part of a cosmological data set that also accounts for modified gravity models, the simulations (including those assuming standard general relativity) were carried out with the MG-Gadget code (Puchwein et al. 2013).

For the analyses performed for this work, in addition to the reference ΛCDM, we used 12 other cosmological runs. In particular, we considered cosmological simulations where only one of the CPs Ωm, σ8, and w was varied, either by ±4% and ±16% for σ8 and w, or by +28%/−36% for Ωm to allow for existing data to be reused. When varying Ωm the value of the physical baryon density Ωbh2 was kept fixed in the computation of the linear matter power spectrum adopted in the initial conditions, which was performed by means of the Boltzmann code CAMB (Lewis et al. 2000).

For each cosmological simulation, we built up mass density planes and then shooting-rays in light cones for 128 different line-of-sight realizations (256 in the case of the fiducial cosmology). The past light cones were built using the MapSim routine (Giocoli et al. 2015) following a pyramidal geometry. This method has been used and tested on a variety of cosmological simulations (Tessore et al. 2015; Castro et al. 2018; Giocoli et al. 2018b) and recently compared with other algorithms (Hilbert et al. 2020) finding only percent-level differences for both cosmic shear two-point and peak statistics. The approach we follow in MapSim is based on using several snapshots from a single realization of an N-body simulation to build a light-cone up to a redshift of 4. For the DUSTGRAIN-pathfinder suite there are 21 snapshots available in this redshift range. Given the box length of 750 h−1Mpc, roughly 7 (5) boxes are needed to cover the comoving distance of about 5 (3.6) h−1 Gpc to a source redshift zs of 4 (2). To obtain better redshift sampling, the volume required to construct the light-cone is divided along the line-of-sight into multiple contiguous redshift slices obtained from the individual snapshots. If the redshift slice reaches beyond the boundary of a single box, two lens planes are constructed from a single snapshot. The total number of lens planes up to zs = 4 (zs = 2) is 27 (19). To avoid replicating the same structure along the line of sight, the 7 boxes needed to cover the light-cone are randomized. This randomization procedure allows us to extract multiple realizations from a single simulation. Randomization is achieved by using seeds that act on the simulation boxes based on: (i) changing the location of the observer, typically placed on the center of one of the faces of the box, (ii) redefining the center of the box (taking advantage of periodic boundary conditions), and (iii) changing the signs of the box axes. From each line of sight realization, we then project, using the Born approximation, to construct convergence and shear maps of 5 × 5 deg2 at various source redshifts.

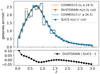

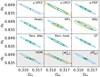

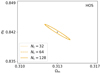

In Fig. 1 we exhibit the κ maps for our 13 simulations considering sources at redshift zs = 2. As can be seen, we display the same line-of-sight realization for the different cosmological simulations: in each subpanel we can recognize by eye the same large-scale structure distribution. More detailed and quantitative statistical information can now be extracted from these maps, for example in Fig. 2 we show the corresponding convergence power spectra, averaged over 128 lines of sight. The left, central and right panels display the Ωm, σ8, and w variations, respectively. The black solid line and gray shaded region – the same in all panels – exhibit the average convergence power spectra and the corresponding dispersion for the reference ΛCDM run.

|

Fig. 1. Convergence maps for sources at zs = 2 of the same light-cone random realization constructed from the DUSTGRAIN-pathfinder simulations used in this work. The region displayed covers a region of approximately 2.5 × 2.5 deg2. |

|

Fig. 2. Convergence power spectra for sources at zs = 2. From left to right, we exhibit the runs that account for Ωm, σ8 and w variations, respectively. The curves show the average over all 128 line of sight realizations, and the gray areas show the scatter of the convergence power spectrum around the mean value for the reference ΛCDM simulation (256 lines of sight) considering a field of view of 5 deg on a side. |

2.2. SLICS simulations

The SLICS are a suite of 924 fully independent N-body simulations specifically designed for the estimation of covariance matrices describing WL observables. They were produced by cubep3m, a Poisson solver that computes the nonlinear evolution of 15363 particles starting from initial conditions created at z = 120 under the Zeldovich approximation (Harnois-Déraps et al. 2013), in boxes of 505 h−1 Mpc on a side. Every run shares the same CPs2 but embodies a unique noise realization, thereby providing a large ensemble ideally suited for sample variance estimation. The matter power spectrum agrees to within 2 percent with the COSMIC EMULATOR (Heitmann et al. 2014) up to k = 3.0 h−1 Mpc at z = 0.6 (Harnois-Déraps & van Waerbeke 2015).

As detailed in Harnois-Déraps et al. (2018), the particles were collapsed on-the-fly into mass sheets at 18 predetermined redshifts, from which WL light-cones were constructed up to a redshift of 3.0 following the standard multiple-plane technique. Specifically, convergence and shear maps of 100 deg2 were constructed under the Born approximation at 18 source planes, and subsequently sampled to generate Euclid-like galaxy mocks with properties listed in Sect. 2.3, similarly to the methods presented in Sect. 2.1. Each plane has a thickness of 257.5 h−1 Mpc, which, according to Zorrilla Matilla et al. (2020), results in sub-dominant biases on cosmic shear statistics for upcoming surveys.

The SLICS simulations are publicly available3 and were used in a number of cosmic shear data analyses including CFHTLenS (e.g., Joudaki et al. 2017), Kilo Degree Survey (Hildebrandt et al. 2017) and Dark Energy Survey (Harnois-Déraps et al. 2021) data, as well as clustering data analyses including 2dFLenS (Blake et al. 2016), GAMA (van Uitert et al. 2018) and BOSS (Xia et al. 2020). Notably, the covariance matrix estimates of two-point functions have been shown to match well the analytical calculations in Hildebrandt et al. (2017) and Harnois-Déraps et al. (2019), leading to comparable constraints on CPs.

Some dissimilarities between the SLICS and the DUSTGRAIN-pathfinder simulations are worth noting here, as they might have a small but nonnegligible impact on the results presented in this paper. The two suites are based on distinct N-body codes, which results in residual differences in the nonlinear clustering. In addition, we note differences in particle count and mass resolution, in the light-cone opening angles, in pixel sizes4 and in the lens randomization procedure. Finally, the shear maps construction pipelines differ in that they are computed directly from the convergence field of view for DUSTGRAIN-pathfinder, but over the full periodic box for SLICS, which eliminates residual edge effects and B-mode leakage. It is worth mentioning that for the DUSTGRAIN-pathfinder runs, we assume void boundary condition, with κ = 0 outside the field of view, when computing the shear field. The only scales that are affected are angular modes l ∼ 105 and change is below one percent. This last caveat has been also highlighted in Hilbert et al. (2020), for a lower resolution map, to have little impact on two-point statistics as also suggested from the good agreement between numerical and theoretical forecasts for γ-2PCF and κ-PDF in the present analysis (see Sect. 4.5).

2.3. Mock galaxy catalogs

We generate mock galaxy catalogs by sampling the shear and convergence planes defined earlier from the DUSTGRAIN-pathfinder and SLICS simulations. This is to achieve a high degree of realism, allowing us to reproduce Euclid survey properties such as the redshift distribution, galaxy density, and shape noise. The procedure closely follows the implementation of Martinet et al. (2021b), but additionally considers magnitudes in the IE band of the Euclid VIS instrument for sampling the redshift distribution in the case of DUSTGRAIN-pathfinder. In contrast to the mentioned article, we do not present results including tomography in the present work. This is delayed to a future HOWLS paper but our mocks already support any tomographic slicing by construction.

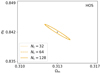

The redshift distributions n(z) of the DUSTGRAIN-pathfinder and SLICS simulations are shown in Fig. 3. They are built from the COSMOS2015 photometric redshift catalogs (Laigle et al. 2016) after a cut in magnitudes IE ≤ 24.5 in the case of DUSTGRAIN-pathfinder and i′≤24.5 for SLICS. This difference is explained by the fact the SLICS mocks were built earlier for Martinet et al. (2021b) than DUSTGRAIN-pathfinder and did not yet include the information about the Euclid VIS magnitudes (computed as a combination of the r, i′, and z′ magnitudes in the present work). Therefore, the DUSTGRAIN-pathfinder n(z) is closer to the expected Euclid n(z). The difference is, however, less than 5%, except in the redshift range z ≤ 0.2, which contains little information with regard to lensing. Additionally, the model of the dependence on cosmology is built from DUSTGRAIN-pathfinder while SLICS enters in the computation of the covariance matrix. This distinction lowers the risk of any impact of the n(z) difference between the two sets of data on the cosmological inference. After the magnitude cut, the COSMOS2015 n(z) is smoothed by fitting the parametrization from Fu et al. (2008) as it was shown in Martinet et al. (2021b) to capture the high-redshift tail better than the standard Smail et al. (1994) fit. The redshift distributions of the DUSTGRAIN-pathfinder and SLICS mocks are fully characterized by

|

Fig. 3. Top: Redshift distributions of the mocks built from the DUSTGRAIN-pathfinder (red dots) and SLICS (blue dots) simulations, normalized to 30 galaxies per arcmin2. These correspond to a Fu et al. (2008) fit to the Laigle et al. (2016) COSMOS2015 catalog after removing galaxies with magnitudes IE ≥ 24.5 and i′≥24.5 for DUSTGRAIN-pathfinder and SLICS, respectively (red and blue histograms). Bottom: Fractional difference between the DUSTGRAIN-pathfinder and SLICS redshift distributions. The difference is always below 5% except for redshifts lower than 0.2. |

and the parameters listed in Table 2.

Parameters of the Fu et al. (2008) redshift distributions of Eq. (1) for the DUSTGRAIN-pathfinder and SLICS mocks, normalized to 30 galaxies per arcmin2.

Shape noise is included by assigning an intrinsic ellipticity to each galaxy. Specifically, we draw each ellipticity component (ϵi, i = {1, 2}) from a Gaussian random distribution centered on 0 and with a dispersion σϵi = 0.26. This reference value (e.g., Euclid Collaboration 2019b) has been measured for a sample of galaxies observed with the Hubble Space Telescope and with similar photometric properties to the expected VIS sample (magnitudes I814 ∼ 24.5, Schrabback et al. 2018).

The impact of shape noise on the cosmological model is minimized by using the same random realization of galaxy intrinsic ellipticities and positions across all cosmologies for a given mock. This means that we have 128 independent realizations of shape noise for the DUSTGRAIN-pathfinder mocks, but these are identical for the 12 cosmologies probed. Conversely, the positions and ellipticities are fully random for any realization used in the covariance matrix computation, either with the DUSTGRAIN-pathfinder or SLICS simulations. This ensures the shape noise contribution to the error budget is faithfully captured. This process of fixing shape noise in the model and leaving it free in the covariance has become standard practice for simulation-based inference with higher-order mass map estimators (e.g., Kacprzak et al. 2016; Martinet et al. 2018; Harnois-Déraps et al. 2021).

These galaxy catalogs are then fed to the Euclid convergence map reconstruction pipeline described in Pires et al. (2020) and in Sect. 2.4 to produce the convergence maps on which most HOS will be measured. These catalogs are also used to compute direct statistics from the shear, specifically γ-2PCFs (Sect. 3.1) and aperture masses (Map, see Sects. 3.4 and 3.8).

2.4. Mass mapping

The statistical properties of the WL field can be assessed by a statistical analysis either of the shear field or of the convergence field. Many HOS are traditionally computed from the κ field. This requires solving a mass inversion problem that consists of reconstructing the convergence κ from the measured shear field γ. Using complex notation, the shear field is written as γ = γ1 + iγ2, and the convergence field as κ = κE + iκB, with κE and κB respectively corresponding to the E- and B-mode components of the field, by analogy with the electromagnetic field.

We can derive the relation between the shear field γ and the convergence field κ in the Fourier domain (Kaiser & Squires 1993) with

where the hat symbol denotes Fourier transforms,  is the complex conjugate, and

is the complex conjugate, and  with

with

with  and ℓi the Fourier counterparts of the angular coordinates θi. The convergence can only be determined up to an additive constant because there is a degeneracy when ℓ1 = ℓ2 = 0 (see e.g., Bartelmann 1995). In practice, we impose that the mean convergence vanishes across the field by setting the reconstructed ℓ = 0 mode to zero.

and ℓi the Fourier counterparts of the angular coordinates θi. The convergence can only be determined up to an additive constant because there is a degeneracy when ℓ1 = ℓ2 = 0 (see e.g., Bartelmann 1995). In practice, we impose that the mean convergence vanishes across the field by setting the reconstructed ℓ = 0 mode to zero.

Assuming the mass inversion is conducted without noise regularization, the same information is contained in the shear field as in the convergence maps. However, it is well known that the Kaiser-Squires inversion creates undesirable artifacts at the borders of the reconstructed convergence maps (see e.g., Seitz & Schneider 1996, 2001; Pires et al. 2020). This is due to the fact that the discrete Fourier transform implicitly assumes periodicity of the image along both dimensions. In a future work, mass mapping systematic effects will be further mitigated and their impact quantified by propagating the errors into CP forecasts using HOS. In addition to border effects and masks, we will test the impact of reduced shear. In this article, however, we assume that the mean ellipticity is an unbiased estimator of the mean shear instead of reduced shear such that we can replace γ by ϵ in Eq. (2), an assumption only correct in the weak regime.

The shear is sampled only at the positions of the galaxies. Therefore, the first step of the mass inversion method is to bin the observed ellipticities of galaxies on a regular pixel grid to create the shear maps. In practice, we bin the galaxies in pixels of size of 0 59, resulting in shear maps of 512 × 512 pixels (for DUSTGRAIN-pathfinder) and 1024 × 1024 pixels (for SLICS) that are then converted into convergence maps using Eq. (2). The statistical analysis is performed only on the E-modes convergence maps because WL only produces E-modes. However, the application of the HOS to the B-modes map can be used to test for residual systematic effects.

59, resulting in shear maps of 512 × 512 pixels (for DUSTGRAIN-pathfinder) and 1024 × 1024 pixels (for SLICS) that are then converted into convergence maps using Eq. (2). The statistical analysis is performed only on the E-modes convergence maps because WL only produces E-modes. However, the application of the HOS to the B-modes map can be used to test for residual systematic effects.

3. Statistics

Many higher-order probes have been proposed, tested, and measured on present-day Stage III lensing maps with promising preliminary results (e.g., Martinet et al. 2018; Gatti et al. 2022; Harnois-Déraps et al. 2021; Heydenreich et al. 2022; Zürcher et al. 2022; Burger et al. 2023). This consideration motivated us to focus our analysis on HOS of scalar fields derived from the shear: convergence and aperture mass. In the following paragraphs, we review the probes we consider, referring the interested reader to the quoted papers for further details. Far from being fully exhaustive, this short review aims at presenting the tools we have used and giving the reader an overview of the many roads that open up when going beyond second-order statistics. A short description of theoretical predictions is given for the 2PCF and the κ-PDF as they are used to validate the simulated derivatives entering the Fisher analysis, see Sect. 4.5. The impatient reader can directly look at Table 3, where we list the HOS we have used together with their abbreviations, the number of independent teams that applied them, and the subsections in which they are described. The fact that each statistic was computed by a different team led to a variety of choices in terms of the filtering of the shear or convergence field. For this publication we do not try to homogenize these choices as we consider it part of each method and list these differences in Table 3.

Statistics that have been applied to the HOWLS data set, with their abbreviation in the present article, the filter and smoothing scale employed, the number of independent teams for each statistic and links to the corresponding subsections.

3.1. Two-point correlation functions

Although useful on their own, HOS are at their best when used in combination with standard second-order statistics, coupling the typically larger signal-to-noise ratio (S/N) of lower-order statistics with the degeneracy-breaking power of HOS. In the following, we therefore quantify both the constraints from each HOS probe alone and the improvement of the constraints from joint second- and higher-order statistics with respect to the second-order-only case.

The most basic cosmic shear observable is the real-space shear two-point correlation functions (γ-2PCF), since it can be estimated by simply multiplying the ellipticities of galaxy pairs and averaging. The shear can conveniently be decomposed into a tangential, γt, and cross – component, γ×, such that

with ℜ and ℑ the real and imaginary parts, ϕ the polar angle of the direction vector between the galaxy position ϑ′ and a reference point ϑ, and the minus sign a convention to have the tangential shear positive around a mass overdensity. The two shear components can then be combined to get two 2PCFs (see, e.g., Kilbinger 2015, and references therein)

where the dependence is only on the angular separation θ on the sky because under the Cosmological Principle cosmic fields are statistically invariant under translation and rotation.

The main virtue of these γ-2PCF is that they can be straightforwardly estimated from the measured ellipticities εi as

where the sum runs over the galaxy pairs with positions on the sky (θi, θj) having angular separation |θi − θj| in a bin centered on θ. The weight wi of the ellipticity εi can be related to the measurement error, and set to zero if the galaxy is in a masked region. In the present article the lensing weights are set to 1, as the impact of masks and shear measurement methods are beyond the scope of this analyis.

The γ-2PCF can be easily computed for a given cosmological model by first going to Fourier space and then converting back into real space. The final result is

where  and

and  are the power spectra of the convergence E and B modes, while Jn(x) is the n-th order spherical Bessel function of the first kind. We note that, in the absence of systematics and neglecting higher-order effects, WL does not produce B modes so that

are the power spectra of the convergence E and B modes, while Jn(x) is the n-th order spherical Bessel function of the first kind. We note that, in the absence of systematics and neglecting higher-order effects, WL does not produce B modes so that  , and ξ± reduce to different Hankel transforms of the same quantity, which can be derived from the matter power spectrum Pδ(ℓ).

, and ξ± reduce to different Hankel transforms of the same quantity, which can be derived from the matter power spectrum Pδ(ℓ).

As done for the shear, we can similarly define the κ-2PCF for the convergence. In particular, this will inherit the same properties of translation and rotation invariance as in the shear case. The main difference is that, with the convergence being a scalar quantity, there is only one single correlation function. In Fourier space, this can be related to the convergence power spectrum as

where δD is the Dirac-δ function and the convergence power spectrum depends only on the modulus of ℓ due to the statistical homogeneity and isotropy. It is then possible to show that  , and the κ-2PCF is given by

, and the κ-2PCF is given by

which is the same as ξ+(θ). In the following, we keep this different label in order to distinguish between the γ-2PCF and κ-2PCF.

In this article, the γ-2PCF has been computed with the ATHENA software (Kilbinger et al. 2014) using 10 logarithmic bins between 0 1 and 300′. The κ-2PCF has been measured using the public code TreeCorr (Jarvis et al. 2004). The minimum separation considered is 0

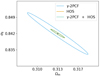

1 and 300′. The κ-2PCF has been measured using the public code TreeCorr (Jarvis et al. 2004). The minimum separation considered is 0 59 (corresponding to the pixel scale) and the maximum separation is approximately 424′ (corresponding to the DUSTGRAIN-pathfinder map diagonal), with 25 bins. As discussed in Sect. 4 several bins are later removed to pass our quality criteria, with the final scale range described in Table 3. We also show these DVs as well as their standard Fisher derivatives with respect to Ωm, σ8, and w in Figs. 4 and 5 for γ-2PCF and κ-2PCF respectively. Finally, we note some difference in the chosen binning by the different teams computing the 2PCFs which leads to some artificial difference between ξ+ and ξκ, for example.

59 (corresponding to the pixel scale) and the maximum separation is approximately 424′ (corresponding to the DUSTGRAIN-pathfinder map diagonal), with 25 bins. As discussed in Sect. 4 several bins are later removed to pass our quality criteria, with the final scale range described in Table 3. We also show these DVs as well as their standard Fisher derivatives with respect to Ωm, σ8, and w in Figs. 4 and 5 for γ-2PCF and κ-2PCF respectively. Finally, we note some difference in the chosen binning by the different teams computing the 2PCFs which leads to some artificial difference between ξ+ and ξκ, for example.

|

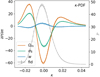

Fig. 4. γ-2PCF ξ+ (top) and ξ− (bottom) DVs and derivatives. The gray lines, whose scale is given by the second axis, correspond to the average DVs computed from the 924 SLICS realizations. The Fisher derivatives (defined in Eq. (47)) are computed from the DUSTGRAIN-pathfinder simulations with large variations of Ωm (orange), σ8 (green), and w (blue). The solid, dashed, and dotted lines respectively correspond to the average over 128, 64, and 32 realizations. The shaded areas represent the uncertainty computed from the 128 DUSTGRAIN-pathfinder realizations, and the gray error bars those of the 924 SLICS realizations. The inclusion of the dashed and dotted lines within the shaded areas highlights the low numerical noise. |

|

Fig. 5. Fiducial DV for κ-2PCF (gray) along with its derivatives with respect to Ωm (orange), σ8 (green), and w (blue). |

3.2. Convergence PDF

The one-point probability distribution function (PDF) of the WL convergence κ encodes vital information about the non-Gaussian late-time density field. In the presence of shape noise, which dominates on small scales, this information can be extracted most conveniently from the PDF of the convergence after smoothing on an angular scale big enough that the variance set by the gravitational clustering is larger than the shape noise contribution. As a one-point statistic, the PDF is straightforward to measure from simulated and real data, as evidenced by recent analyses of density-split statistics and weak lensing moments in the Dark Energy Survey (Friedrich et al. 2018; Gruen et al. 2018; Gatti et al. 2020, 2022), which carry PDF information in a compressed way and can deal with survey mask effects.

When focusing on mildly nonlinear scales, typically corresponding to smoothing scales of about 10′ at low redshifts, this one-point PDF also admits accurate theoretical predictions (see for example Barthelemy et al. 2020; Boyle et al. 2021). The main idea of this theoretical model relies on the fact that, within the Limber approximation, since the WL convergence probes the matter density in a cone whose opening angle is set by the smoothing scale, the κ-PDF can be predicted using cylindrical collapse applied to the 2D slices of the density in the circular cross-sections. Hence, for a set of sources regrouped in redshift bins and with a certain source distribution across the survey n(z), we recall that the theoretical cumulant generating function (CGF) ϕκ, θ at a given angular smoothing scale θ can be expressed as

with ϕcyl the CGF of the density in each individual 2D slice of radius χ(z)θ, and ωn(z) the generalized lensing kernel given a wide distribution of sources following the normalized distribution n(z)

where the Heaviside ℋ ensures that the integrand vanishes for z ≥ zs. ϕcyl is obtained from the cylindrical collapse dynamics as a proxy for the whole nonlinear evolution in cylindrically symmetric configurations (top-hat smoothing) and enforces a specific hierarchy of cumulants. The PDF is then recovered through the inverse Laplace transform of the exponential of the κ CGF as

Note that the cylindrical collapse model is accurate enough to predict the so-called reduced cumulants of the (2D) density field  , where ⟨δn⟩c are the cumulants of the matter density. This means that the model also requires an external input for the prediction of the nonlinear variance of all the 2D slices of the density along the line of sight. Fortunately, the emulation of the matter power spectrum has received lots of attention these past years and can be estimated rather accurately, for example by using the revised Halofit model (Takahashi et al. 2012) or the Euclid Emulator (Euclid Collaboration 2019a). Galaxy shape noise can be included in the theoretical prediction through a convolution of the noiseless PDF as described in Boyle et al. (2021) or directly at the level of the CGF by simple addition of – for example – an associated shape noise variance

, where ⟨δn⟩c are the cumulants of the matter density. This means that the model also requires an external input for the prediction of the nonlinear variance of all the 2D slices of the density along the line of sight. Fortunately, the emulation of the matter power spectrum has received lots of attention these past years and can be estimated rather accurately, for example by using the revised Halofit model (Takahashi et al. 2012) or the Euclid Emulator (Euclid Collaboration 2019a). Galaxy shape noise can be included in the theoretical prediction through a convolution of the noiseless PDF as described in Boyle et al. (2021) or directly at the level of the CGF by simple addition of – for example – an associated shape noise variance  .

.

We extracted the κ-PDF from the simulated maps for two top-hat filters with smoothing scales θs ∈ {4 69, 9

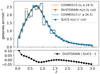

69, 9 37}, corresponding to 8/16 pixels in the DUSTGRAIN-pathfinder simulations. After smoothing the maps with the appropriate top-hat filter, we excluded all pixels whose smoothing circle of radius θs would intersect the patch boundary.5 The PDF was obtained from a histogram using 201 linearly spaced bins in the range κ ∈ [ − 0.1, 0.1]. Cuts were made in the considered range of κ to exclude the extreme tails and keep the DV close to Gaussian as discussed in Sect. 4.2. The precise κ values are given in Fig. 6. The cuts exclude 0.05% and 0.25% of the cumulative probability from the low-κ and high-κ tails, respectively, for the smaller smoothing scale. We subsequently compare the theoretical predictions to the measurements in the simulation in Sect. 4.5.

37}, corresponding to 8/16 pixels in the DUSTGRAIN-pathfinder simulations. After smoothing the maps with the appropriate top-hat filter, we excluded all pixels whose smoothing circle of radius θs would intersect the patch boundary.5 The PDF was obtained from a histogram using 201 linearly spaced bins in the range κ ∈ [ − 0.1, 0.1]. Cuts were made in the considered range of κ to exclude the extreme tails and keep the DV close to Gaussian as discussed in Sect. 4.2. The precise κ values are given in Fig. 6. The cuts exclude 0.05% and 0.25% of the cumulative probability from the low-κ and high-κ tails, respectively, for the smaller smoothing scale. We subsequently compare the theoretical predictions to the measurements in the simulation in Sect. 4.5.

|

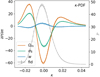

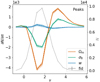

Fig. 6. Fiducial DV for κ-PDF (gray) along with its Fisher derivatives with respect to Ωm (orange), σ8 (green), and w (blue). The κ-PDF was computed on κ maps smoothed with a top-hat filter with a scale of 4 |

One alternative to the κ-PDF is to turn to the (slightly) more involved – but also more easily accessible through observations – modeling of the one-point PDF of the aperture mass from Eq. (13) discussed in Sect. 3.4. The analogous theoretical model was developed in Barthelemy et al. (2021). The next extension would be to include some tomographic information in the analysis. At the level of the PDF one could rely on the ideas developed in Barthelemy et al. (2022) for the joint PDF between – built to be – independent lensing kernels that probe structures along the line of sight, but the generalization to any other lensing observables should be straightforward.

3.3. Higher-order convergence moments

The shear and convergence 2PCF and their harmonic space counterparts, the power spectra, are related to the variance of the lensing fields. It is then natural to ask whether additional information is encoded in moments higher than two, given that they are indicators of the non-Gaussianity of the field. We consider here the second, third, and fourth moments of the convergence field.

Moments of the smoothed WL convergence field κθ can be calculated from weighted averages of the lensing PDF as  , assuming a lensing convergence field of zero mean. The variance ⟨κ2⟩=σ2 fully characterizes a Gaussian one-point distribution, while the skewness ⟨κ3⟩/σ3 and kurtosis ⟨κ4⟩/σ4 − 3 are the most common examples encoding non-Gaussian information. While an analysis of the variance, skewness, and kurtosis promises a significant compression of the κ-PDF DV, higher-order cumulants are known to be very sensitive to the tails of the distribution, rendering their signal and likelihood hard to predict.

, assuming a lensing convergence field of zero mean. The variance ⟨κ2⟩=σ2 fully characterizes a Gaussian one-point distribution, while the skewness ⟨κ3⟩/σ3 and kurtosis ⟨κ4⟩/σ4 − 3 are the most common examples encoding non-Gaussian information. While an analysis of the variance, skewness, and kurtosis promises a significant compression of the κ-PDF DV, higher-order cumulants are known to be very sensitive to the tails of the distribution, rendering their signal and likelihood hard to predict.

An approximated yet accurate formula for the moments can be obtained under the hierarchical ansatz (Bernardeau & Schaeffer 1992; Szapudi & Szalay 1993), using this ansatz to express the bispectrum and trispectrum in terms of the power spectrum and plugging them into the general expression for the convergence moments (see Munshi & Jain 2001; Vicinanza et al. 2019, for details).

It is worth noting that moments are a function of smoothing radius, so one typically considers as a cosmological probe their scaling with θ. In contrast, here we use them for a fixed θ since, in a preliminary analysis, we have found moments obtained from different smoothing radii to be highly correlated. Although some information is still present in the correlated moments, we prefer here to first focus on the choice of the filter radius in our two parameter analysis for which combining moments at different radii is not essential. We, therefore, measure them like the κ-PDF using a top-hat filter with two different values of the aperture radius, namely θ ∈ {4 69, 9

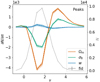

69, 9 37}, and take {⟨κ2⟩(θ),⟨κ3⟩(θ),⟨κ4⟩(θ)} for a fixed θ as our DV (see Fig. 7). To this end, we first define

37}, and take {⟨κ2⟩(θ),⟨κ3⟩(θ),⟨κ4⟩(θ)} for a fixed θ as our DV (see Fig. 7). To this end, we first define

|

Fig. 7. Fiducial DV (gray) along with its derivatives with respect to Ωm (orange), σ8 (green), and w (blue) for HOM computed on κ maps smoothed with a top-hat filter with a scale of 4 |

as the smoothed convergence field. We then take it to the second, third, fourth power and compute its average value over the pixels to compute the data vector for every single map. The mean over the different realizations of the simulations makes up our final DV.

3.4. Higher-order aperture mass moments

Higher-order moments as introduced before were extracted for the smoothed convergence maps. However, it is also possible to compute them for the aperture mass Map map, at sky location ϑ and scale θ given by (Schneider et al. 1998):

where Uθ is a compensated filter function with

The higher-order aperture maps statistics  correlate the aperture masses on n different scales θ1, ⋯, θn, and are defined as

correlate the aperture masses on n different scales θ1, ⋯, θn, and are defined as

The  is closely related to the third-order moment of the convergence maps since both of them are sensitive to the matter bispectrum (Schneider et al. 2005). However, in contrast to other HOS considered in this work, the

is closely related to the third-order moment of the convergence maps since both of them are sensitive to the matter bispectrum (Schneider et al. 2005). However, in contrast to other HOS considered in this work, the  can be directly inferred from the shear and are therefore not affected by systematics induced by the convergence reconstruction. Additionally

can be directly inferred from the shear and are therefore not affected by systematics induced by the convergence reconstruction. Additionally  can be inferred from the shear three-point correlation functions Γ0 = ⟨γ γ γ⟩ and Γ1 = ⟨γ* γ γ⟩, which can be easily estimated even for irregular survey geometries and in the presence of masks (Schneider & Lombardi 2003; Heydenreich et al. 2023). Consequently,

can be inferred from the shear three-point correlation functions Γ0 = ⟨γ γ γ⟩ and Γ1 = ⟨γ* γ γ⟩, which can be easily estimated even for irregular survey geometries and in the presence of masks (Schneider & Lombardi 2003; Heydenreich et al. 2023). Consequently,  is more straightforward to measure in a realistic survey setting than HOS of κ.

is more straightforward to measure in a realistic survey setting than HOS of κ.

We infer the  from the tangential shear γt(ϑ′;ϑ) at position ϑ′ with respect to ϑ. This inference is possible because each filter Uθ is associated with a function Qθ for which

from the tangential shear γt(ϑ′;ϑ) at position ϑ′ with respect to ϑ. This inference is possible because each filter Uθ is associated with a function Qθ for which

The function Qθ is given by

To mimic the application of the  statistics to a survey, we use Eq. (16) and work directly with the simulated shear catalogs. We use the filter function Uθ from Crittenden et al. (2002),

statistics to a survey, we use Eq. (16) and work directly with the simulated shear catalogs. We use the filter function Uθ from Crittenden et al. (2002),

and the scale radii θ ∈ {1 17, 2

17, 2 34, 4

34, 4 69, 9

69, 9 37}.

37}.

Our measurement of  proceeds in two steps following the procedure in Sect. 5.3.1 of Heydenreich et al. (2023). First, we measure Map(ϑ, θ). For this, we employ the convolution theorem to solve the convolution of Qθ and γt in Fourier space. Since the tangential shear γt can be written as

proceeds in two steps following the procedure in Sect. 5.3.1 of Heydenreich et al. (2023). First, we measure Map(ϑ, θ). For this, we employ the convolution theorem to solve the convolution of Qθ and γt in Fourier space. Since the tangential shear γt can be written as

where the vectors are interpreted as complex numbers ϑ = ϑ1 + iϑ2, Eq. (16) transforms into

Therefore, we can calculate both  and γ(ϑ) on a grid, and solve the convolution in Eq. (20) using the Fast Fourier Transform (FFT). To avoid border effects, we cut off a strip of width of 4θ from each border. This large cut-off is needed because the exponential aperture filter is not exactly zero for ϑ > θ, so at distance θ, we still experience border effects from each side of the Map(ϑ, θ) map. Our cut-off means we neglect 0.07% of the total filter power, which we deem acceptable. Second, we measure

and γ(ϑ) on a grid, and solve the convolution in Eq. (20) using the Fast Fourier Transform (FFT). To avoid border effects, we cut off a strip of width of 4θ from each border. This large cut-off is needed because the exponential aperture filter is not exactly zero for ϑ > θ, so at distance θ, we still experience border effects from each side of the Map(ϑ, θ) map. Our cut-off means we neglect 0.07% of the total filter power, which we deem acceptable. Second, we measure  (θ1, θ2, θ3). For this, we multiply Map(ϑ, θ1), Map(ϑ, θ2), and Map(ϑ, θ3) for each line-of-sight and each position ϑ. Then, we average over ϑ, which gives

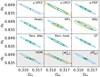

(θ1, θ2, θ3). For this, we multiply Map(ϑ, θ1), Map(ϑ, θ2), and Map(ϑ, θ3) for each line-of-sight and each position ϑ. Then, we average over ϑ, which gives  (θ1, θ2, θ3) for each line-of-sight. This DV is shown in Fig. 8.

(θ1, θ2, θ3) for each line-of-sight. This DV is shown in Fig. 8.

|

Fig. 8. Fiducial DV (gray) along with its derivatives with respect to Ωm (orange), σ8 (green), and w (blue) for |

We estimate the n-th order moments  (Fig. 9) with a different approach. For this we cover the survey footprint with apertures and estimate

(Fig. 9) with a different approach. For this we cover the survey footprint with apertures and estimate  within each aperture (Schneider et al. 1998) as

within each aperture (Schneider et al. 1998) as

|

Fig. 9. Fiducial DV (gray) along with its derivatives with respect to Ωm (orange), σ8 (green), and w (blue) for |

where each wi is the weight of the ellipticity εi and we abbreviated Qθj(|ϑij − ϑ|) ≡ Qθj; ij for an aperture centered at ϑ. Note that the index ik in the kth sum in (21) only considers galaxies that lie within the support of Qθk. By averaging over all apertures in the footprint with appropriate weights wap, one then obtains an unbiased estimate for  . As shown in Porth et al. (2020) and Porth & Smith (2021), one can decompose the nested sums appearing in (21), so that the full estimation process scales linearly with the number of galaxies. In this work, we estimate the connected parts of the aperture-mass statistics for n ∈ {2, 3, 4, 5}, where for n = 3 we take into account all of the different combinations of aperture radii and in the other cases only consider the components for which θ1 = … = θn. In contrast to the FFT-based estimation procedure described above, we employ a polynomial filter function introduced in Schneider et al. (1998) for the direct estimator method,

. As shown in Porth et al. (2020) and Porth & Smith (2021), one can decompose the nested sums appearing in (21), so that the full estimation process scales linearly with the number of galaxies. In this work, we estimate the connected parts of the aperture-mass statistics for n ∈ {2, 3, 4, 5}, where for n = 3 we take into account all of the different combinations of aperture radii and in the other cases only consider the components for which θ1 = … = θn. In contrast to the FFT-based estimation procedure described above, we employ a polynomial filter function introduced in Schneider et al. (1998) for the direct estimator method,

where ℋ(x) denotes the Heaviside function. For our choice of wap, we follow Porth & Smith (2021) who propose a form that approximates an inverse variance weighting scheme. As the mocks used in this work have wi ≡ 1, it can be shown that in this case the weight of an aperture centered at ϑ is equal to the number of multiplet counts within its configuration of aperture radii,

where the indices are bound to the same constraints as in (21). Analogous to the FFT-based estimation procedure we need to avoid border effects; with our choice (22) for the filter function this results in cutting off a strip of width of max({θ1,⋯,θn}) from each border. In the resulting DV (Fig. 9) the first twelve elements correspond to the equal scale statistics of order {2, 4, 5} while the final 20 elements correspond to the third order statistics and are ordered as the DV in Fig. 8.

3.5. Aperture mass peak counts

A different way to probe the convergence field at all statistical orders in a single step is to consider peak counts. As the name itself implies, one is now searching for peaks (i.e., local over-densities) on the smoothed convergence maps.

Some studies only focus on peaks with very large S/N because they are good tracers of massive galaxy clusters (Kruse & Schneider 1999; Marian & Bernstein 2006; Gavazzi & Soucail 2006; Hamana et al. 2015; Miyazaki et al. 2017). The advantage of this approach is that the dependence on cosmology of the WL cluster abundance can be accurately predicted by theoretical models (see e.g., Kruse & Schneider 2000; Bartelmann etal. 2001; Hamana et al. 2004; Marian et al. 2009). Another approach consists of also considering low-amplitude peaks. Since there is no analytical prediction for the full range of S/N peaks, it is necessary to run a large number of N-body simulations to calibrate the dependence of the peak count statistics on cosmology. However, a significant fraction of these peaks arise from large-scale structure projections, and as such, carry additional cosmological information (see e.g., Yang et al. 2013; Lin et al. 2016). Over the past two decades, many cosmological studies have been performed based on the second approach (see e.g., Pires et al. 2009; Dietrich & Hartlap 2010; Martinet et al. 2018, 2021a,b; Peel et al. 2018; Li et al. 2019; Ajani et al. 2020; Harnois-Déraps et al. 2021) and have shown the strength of peak counts in discriminating between cosmological models. In a preliminary Fisher analysis, we tested the two approaches and, as expected, the best results were obtained when including the full range of S/N peaks, which is therefore what we present in the following.

The computation of the peak count statistics requires filtering the convergence maps because of galaxy shape noise. In practice, the peak count can be evaluated at a given scale by convolving the convergence maps with a filter function of a specific scale (i.e., aperture radius). We choose to filter the maps using compensated filters defined in Eq. (14). Compensated filters (e.g., aperture mass filters or wavelet filters) have been preferred over low-pass filters (e.g., Gaussian filters) due to their shapes, which reduce the overlap between different scales and then the correlations between them (see e.g., Lin et al. 2016; Ajani et al. 2020). In Leonard et al. (2012), it is demonstrated that the aperture mass is formally identical to a wavelet transform at a specific scale.

As such, we use the starlet transform (Starck et al. 2007; Leonard et al. 2012) to simultaneously compute five aperture mass maps corresponding to scales of {1 17, 2

17, 2 34, 4

34, 4 69, 9

69, 9 37, 18

37, 18 74}. The starlet transform decomposes the convergence as follows:

74}. The starlet transform decomposes the convergence as follows:

where CJ is a smooth version of the convergence κ and  are the wavelet maps corresponding to a scale of θ = 2i pixels.

are the wavelet maps corresponding to a scale of θ = 2i pixels.

The starlet transform is equivalent to applying the following aperture mass filter to the convergence map (Leonard et al. 2012):

In the aperture mass maps, the peaks are identified as individual pixels higher than their eight neighbors. The edges are discarded from the computation. Once the peaks are detected on each aperture mass map, they are classified depending on their amplitudes with respect to the shape noise. Several implementations of the peaks have been tested. The main differences between the implementations are in the range of the amplitudes of the peaks that are considered, the number of equidistant bins and the size of the edges to be discarded.

The results for the peak counts presented in this study are obtained by sorting the peaks into 14 equidistant bins between −1 and 6 times the dispersion value (see Table 4 and Fig. 10) to ensure it is Gaussian distributed for the Fisher analysis and by discarding a stripe of 1 pixel on each side of the Map map before counting peaks.

|

Fig. 10. Fiducial DV (gray) along with its derivatives with respect to Ωm (orange), σ8 (green), and w (blue) for peaks of aperture mass maps, computed from κ-fields smoothed with a starlet filter with a scale of 2 |

Verification of the Gaussian hypothesis.

3.6. Convergence Minkowski functionals

The HOS probes previously described can be considered extensions of the standard second-order probes since they all aim at probing the convergence field at higher orders to sort out the information contained in its non-Gaussianity. A different way to access this additional constraining power is represented by topological indicators.

Topology is the branch of mathematics that addresses the shapes, boundaries and connectivity of structural features in a field. More precisely, it is concerned with the properties of a geometric object that are preserved under continuous deformations, such as stretching, twisting, crumpling, and bending. Basic examples of topological properties are: the dimension distinguishing between a line and a surface; the compactness differentiating between a straight line and a circle; and connectedness, which separates a circle from two nonintersecting circles.

The first topological statistics we use as a cosmological tool are the Minkowski functionals (MFs). According to Hadwiger’s theorem (Hadwiger 1957), under a few simple requirements, any morphological descriptor of a d-dimensional scalar field is a linear combination of d + 1 MFs Vn with n ranging from 0 to d. The geometrical interpretation of each functional depends on the considered dimension d. Therefore, the morphology of the 2-dimensional convergence scalar field κ(ϑ) = κ(ϑ1, ϑ2) with variance σ2 will be described by the first three MFs6 as

where A represents the map area, ∂Qν is the boundary of the excursion set Qν = {(ϑ1, ϑ2)∣κ(ϑ1, ϑ2)/σ ≥ ν} at a given threshold ν, da and dl are respectively the surface and the line element along ∂Qν, and 𝒦 is the local geodesic curvature of ∂Qν. Qualitatively, one can say that V0(ν) and V1(ν) quantify, respectively, the area and the perimeter of the excursion set Qν, while V2(ν) gives the Euler characteristic, which is the topological quantity measuring the connectivity of the field. For an orientable surface, the latter is defined as χ = 2(𝒜 − ℬ), that is the difference between the number 𝒜 of disconnected regions above the threshold ν and the number ℬ of those below ν, that is the number of holes. We note that in Morse theory the Euler characteristics are also given by the alternating sum of critical point counts and hence related to the peak counts described in the previous section.

Equation (26), applied to the convergence field, can be rewritten in an operative form as

where

with κ,i and κ,ij the first- and second-order partial derivatives of the convergence field with respect to ϑi and ϑiϑj and for i, j = 1, 2.

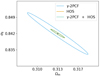

Equation (27) suggests that the zeroth MF V0 can be evaluated by the integration of the Heaviside step function over the whole excursion set Qν. The other MFs V1, V2 have been transformed from line integrals into surface integrals by inserting a delta function δD and the appropriate Jacobian. The integrands of the first and second MFs, including the geodesic curvature 𝒦, are then given by second-order invariants that depend solely on the threshold ν, the field value κ and functions of its first- and second-order derivatives κ,i, κ,ij defined in Eq. (28), (see Sect. 2.3 of Schmalzing & Górski 1998, for a detailed calculation)7.