| Issue |

A&A

Volume 607, November 2017

|

|

|---|---|---|

| Article Number | A95 | |

| Number of page(s) | 27 | |

| Section | Cosmology (including clusters of galaxies) | |

| DOI | https://doi.org/10.1051/0004-6361/201629504 | |

| Published online | 20 November 2017 | |

Planck intermediate results

LI. Features in the cosmic microwave background temperature power spectrum and shifts in cosmological parameters

1 APC, AstroParticule et Cosmologie, Université Paris Diderot, CNRS/IN2P3, CEA/lrfu, Observatoire de Paris, Sorbonne Paris Cité, 10 rue Alice Domon et Léonie Duquet, 75205 Paris Cedex 13, France

2 African Institute for Mathematical Sciences, 6-8 Melrose Road, Muizenberg, 7945 Cape Town, South Africa

3 Agenzia Spaziale Italiana Science Data Center, via del Politecnico snc, 00133 Roma, Italy

4 Agenzia Spaziale Italiana, via del Politecnico snc, 00133 Roma, Italy

5 Aix Marseille Univ., CNRS, LAM, Laboratoire d’Astrophysique de Marseille, 13013 Marseille, France

6 Astrophysics Group, Cavendish Laboratory, University of Cambridge, J J Thomson Avenue, Cambridge CB3 0HE, UK

7 Astrophysics & Cosmology Research Unit, School of Mathematics, Statistics & Computer Science, University of KwaZulu-Natal, Westville Campus, Private Bag X54001, Durban 4000, South Africa

8 CITA, University of Toronto, 60 St. George St., Toronto, ON M5S 3H8, Canada

9 CNRS, IRAP, 9 Av. colonel Roche, BP 44346, 31028 Toulouse Cedex 4, France

10 California Institute of Technology, Pasadena, California, CA 91125, USA

11 Centre for Theoretical Cosmology, DAMTP, University of Cambridge, Wilberforce Road, Cambridge CB3 0WA, UK

12 Computational Cosmology Center, Lawrence Berkeley National Laboratory, Berkeley, California, CA 94720, USA

13 DTU Space, National Space Institute, Technical University of Denmark, Elektrovej 327, 2800 Kgs. Lyngby, Denmark

14 Département de Physique Théorique, Université de Genève, 24 Quai E. Ansermet, 1211 Genève 4, Switzerland

15 Departamento de Astrofísica, Universidad de La Laguna (ULL), 38206 La Laguna, Tenerife, Spain

16 Departamento de Física, Universidad de Oviedo, Avda. Calvo Sotelo s/n, 33007 Oviedo, Spain

17 Department of Astrophysics/IMAPP, Radboud University, PO Box 9010, 6500 GL Nijmegen, The Netherlands

18 Department of Mathematics, University of Stellenbosch, Stellenbosch 7602, South Africa

19 Department of Physics & Astronomy, University of British Columbia, 6224 Agricultural Road, Vancouver, British Columbia, Canada

20 Department of Physics and Astronomy, Dana and David Dornsife College of Letter, Arts and Sciences, University of Southern California, Los Angeles, CA 90089, USA

21 Department of Physics and Astronomy, University of Sussex, Brighton BN1 9QH, UK

22 Department of Physics, Gustaf Hällströmin katu 2a, University of Helsinki, 00014 Helsinki, Finland

23 Department of Physics, Princeton University, Princeton, New Jersey, NJ 08544, USA

24 Department of Physics, University of California, Berkeley, California, CA 94607, USA

25 Department of Physics, University of California, One Shields Avenue, Davis, California, CA 95616, USA

26 Department of Physics, University of California, Santa Barbara, California, CA 93106, USA

27 Department of Physics, University of Illinois at Urbana-Champaign, 1110 West Green Street, Urbana, Illinois, IL 61820, USA

28 Dipartimento di Fisica e Astronomia G. Galilei, Università degli Studi di Padova, via Marzolo 8, 35131 Padova, Italy

29 Dipartimento di Fisica e Astronomia, Alma Mater Studiorum, Università degli Studi di Bologna, Viale Berti Pichat 6/2, 40127 Bologna, Italy

30 Dipartimento di Fisica e Scienze della Terra, Università di Ferrara, via Saragat 1, 44122 Ferrara, Italy

31 Dipartimento di Fisica, Università La Sapienza, P.le A. Moro 2, 00185 Roma, Italy

32 Dipartimento di Fisica, Università degli Studi di Milano, via Celoria, 16, 20133 Milano, Italy

33 Dipartimento di Fisica, Università degli Studi di Trieste, via A. Valerio 2, 34127 Trieste, Italy

34 Dipartimento di Fisica, Università di Roma Tor Vergata, via della Ricerca Scientifica, 1, 00133 Roma, Italy

35 European Space Agency, ESAC, Planck Science Office, Camino bajo del Castillo, s/n, UrbanizaciónVillafranca del Castillo, 28692 Villanueva de la Cañada, Madrid, Spain

36 European Space Agency, ESTEC, Keplerlaan 1, 2201 AZ Noordwijk, The Netherlands

37 Gran Sasso Science Institute, INFN, viale F. Crispi 7, 67100 L’ Aquila, Italy

38 HGSFP and University of Heidelberg, Theoretical Physics Department, Philosophenweg 16, 69120 Heidelberg, Germany

39 Haverford College Astronomy Department, 370 Lancaster Avenue, Haverford, Pennsylvania, PA 19041, USA

40 Helsinki Institute of Physics, Gustaf Hällströmin katu 2, University of Helsinki, 00014 Helsinki, Finland

41 INAF–Osservatorio Astronomico di Padova, Vicolo dell’Osservatorio 5, 35122 Padova, Italy

42 INAF–Osservatorio Astronomico di Trieste, via G.B. Tiepolo 11, 40127 Trieste, Italy

43 INAF/IASF Bologna, via Gobetti 101, 40129 Bologna, Italy

44 INAF/IASF Milano, via E. Bassini 15, 20133 Milano, Italy

45 INFN – CNAF, viale Berti Pichat 6/2, 40127 Bologna, Italy

46 INFN, Sezione di Bologna, viale Berti Pichat 6/2, 40127 Bologna, Italy

47 INFN, Sezione di Ferrara, via Saragat 1, 44122 Ferrara, Italy

48 INFN, Sezione di Roma 2, Università di Roma Tor Vergata, via della Ricerca Scientifica, 1, 00185 Roma, Italy

49 INFN/National Institute for Nuclear Physics, via Valerio 2, 34127 Trieste, Italy

50 Imperial College London, Astrophysics group, Blackett Laboratory, Prince Consort Road, London, SW7 2AZ, UK

51 Institut d’Astrophysique Spatiale, CNRS, Univ. Paris-Sud, Université Paris-Saclay, Bât. 121, 91405 Orsay Cedex, France

52 Institut d’Astrophysique de Paris, CNRS (UMR 7095), 98bis Boulevard Arago, 75014 Paris, France

53 Institute Lorentz, Leiden University, PO Box 9506, Leiden 2300 RA, The Netherlands

54 Institute of Astronomy, University of Cambridge, Madingley Road, Cambridge CB3 0HA, UK

55 Institute of Theoretical Astrophysics, University of Oslo, Blindern, 0371 Oslo, Norway

56 Instituto de Astrofísica de Canarias, C/Vía Láctea s/n, La Laguna, 38205 Tenerife, Spain

57 Instituto de Física de Cantabria (CSIC-Universidad de Cantabria), Avda. de los Castros s/n, 39005 Santander, Spain

58 Istituto Nazionale di Fisica Nucleare, Sezione di Padova, via Marzolo 8, 35131 Padova, Italy

59 Jet Propulsion Laboratory, California Institute of Technology, 4800 Oak Grove Drive, Pasadena, California, CA 91125, USA

60 Jodrell Bank Centre for Astrophysics, Alan Turing Building, School of Physics and Astronomy, The University of Manchester, Oxford Road, Manchester, M13 9PL, UK

61 Kavli Institute for Cosmological Physics, University of Chicago, Chicago, IL 60637, USA

62 Kavli Institute for Cosmology Cambridge, Madingley Road, Cambridge, CB3 0HA, UK

63 LAL, Université Paris-Sud, CNRS/IN2P3, 91898 Orsay, France

64 LERMA, CNRS, Observatoire de Paris, 61 Avenue de l’Observatoire, 75014 Paris, France

65 Laboratoire Traitement et Communication de l’Information, CNRS (UMR 5141) and Télécom ParisTech, 46 rue Barrault, 75634 Paris Cedex 13, France

66 Laboratoire de Physique Subatomique et Cosmologie, Université Grenoble-Alpes, CNRS/IN2P3, 53, rue des Martyrs, 38026 Grenoble Cedex, France

67 Laboratoire de Physique Théorique, Université Paris-Sud 11 & CNRS, Bâtiment 210, 91405 Orsay, France

68 Lawrence Berkeley National Laboratory, Berkeley, California, USA

69 Low Temperature Laboratory, Department of Applied Physics, Aalto University, Espoo, 00076 Aalto, Finland

70 Max-Planck-Institut für Astrophysik, Karl-Schwarzschild-Str. 1, 85741 Garching, Germany

71 Mullard Space Science Laboratory, University College London, Surrey RH5 6NT, UK

72 Nicolaus Copernicus Astronomical Center, Polish Academy of Sciences, Bartycka 18, 00-716 Warsaw, Poland

73 Nordita (Nordic Institute for Theoretical Physics), Roslagstullsbacken 23, 106 91 Stockholm, Sweden

74 Purple Mountain Observatory, Chinese Academy of Sciences, Nanjing 210008, PR China

75 SISSA, Astrophysics Sector, via Bonomea 265, 34136 Trieste, Italy

76 San Diego Supercomputer Center, University of California, San Diego, 9500 Gilman Drive, La Jolla, CA 92093, USA

77 School of Chemistry and Physics, University of KwaZulu-Natal, Westville Campus, Private Bag X54001, Durban, 4000, South Africa

78 School of Physics and Astronomy, Cardiff University, Queens Buildings, The Parade, Cardiff, CF24 3AA, UK

79 School of Physics and Astronomy, Sun Yat-Sen University, 135 Xingang Xi Road, Guangzhou, 510006, PR China

80 School of Physics and Astronomy, University of Nottingham, Nottingham NG7 2RD, UK

81 School of Physics, Indian Institute of Science Educationand Research Thiruvananthapuram (IISER-TVM), Trivandrum 695016, Kerala, India

82 Simon Fraser University, Department of Physics, 8888 University Drive, Burnaby BC, Canada

83 Sorbonne Université-UPMC, UMR 7095, Institut d’Astrophysique de Paris, 98bis Boulevard Arago, 75014 Paris, France

84 Sorbonne Universités, Institut Lagrange de Paris (ILP), 98bis Boulevard Arago, 75014 Paris, France

85 Space Sciences Laboratory, University of California, Berkeley, California, CA 94720, USA

86 The Oskar Klein Centre for Cosmoparticle Physics, Department of Physics, Stockholm University, AlbaNova, 106 91 Stockholm, Sweden

87 UPMC Univ. Paris 06, UMR 7095, 98bis Boulevard Arago, 75014 Paris, France

88 Université de Toulouse, UPS-OMP, IRAP, 31028 Toulouse Cedex 4, France

89 University of Granada, Departamento de Física Teórica y del Cosmos, Facultad de Ciencias, 18071 Granada, Spain

90 Warsaw University Observatory, Aleje Ujazdowskie 4, 00-478 Warszawa, Poland

⋆

Corresponding authors: Silvia Galli, e-mail: This email address is being protected from spambots. You need JavaScript enabled to view it.

; Marius Millea, e-mail: This email address is being protected from spambots. You need JavaScript enabled to view it.

Received: 8 August 2016

Accepted: 10 September 2017

Abstract

The six parameters of the standard ΛCDM model have best-fit values derived from the Planck temperature power spectrum that are shifted somewhat from the best-fit values derived from WMAP data. These shifts are driven by features in the Planck temperature power spectrum at angular scales that had never before been measured to cosmic-variance level precision. We have investigated these shifts to determine whether they are within the range of expectation and to understand their origin in the data. Taking our parameter set to be the optical depth of the reionized intergalactic medium τ, the baryon density ωb, the matter density ωm, the angular size of the sound horizon θ∗, the spectral index of the primordial power spectrum, ns, and Ase− 2τ (where As is the amplitude of the primordial power spectrum), we have examined the change in best-fit values between a WMAP-like large angular-scale data set (with multipole moment ℓ < 800 in the Planck temperature power spectrum) and an all angular-scale data set (ℓ < 2500Planck temperature power spectrum), each with a prior on τ of 0.07 ± 0.02. We find that the shifts, in units of the 1σ expected dispersion for each parameter, are { Δτ,ΔAse− 2τ,Δns,Δωm,Δωb,Δθ∗ } = { −1.7,−2.2,1.2,−2.0,1.1,0.9 }, with a χ2 value of 8.0. We find that this χ2 value is exceeded in 15% of our simulated data sets, and that a parameter deviates by more than 2.2σ in 9% of simulated data sets, meaning that the shifts are not unusually large. Comparing ℓ < 800 instead to ℓ> 800, or splitting at a different multipole, yields similar results. We examined the ℓ < 800 model residuals in the ℓ> 800 power spectrum data and find that the features there that drive these shifts are a set of oscillations across a broad range of angular scales. Although they partly appear similar to the effects of enhanced gravitational lensing, the shifts in ΛCDM parameters that arise in response to these features correspond to model spectrum changes that are predominantly due to non-lensing effects; the only exception is τ, which, at fixed Ase− 2τ, affects the ℓ> 800 temperature power spectrum solely through the associated change in As and the impact of that on the lensing potential power spectrum. We also ask, “what is it about the power spectrum at ℓ < 800 that leads to somewhat different best-fit parameters than come from the full ℓ range?” We find that if we discard the data at ℓ < 30, where there is a roughly 2σ downward fluctuation in power relative to the model that best fits the full ℓ range, the ℓ < 800 best-fit parameters shift significantly towards the ℓ < 2500 best-fit parameters. In contrast, including ℓ < 30, this previously noted “low-ℓ deficit” drives ns up and impacts parameters correlated with ns, such as ωm and H0. As expected, the ℓ < 30 data have a much greater impact on the ℓ < 800 best fit than on the ℓ < 2500 best fit. So although the shifts are not very significant, we find that they can be understood through the combined effects of an oscillatory-like set of high-ℓ residuals and the deficit in low-ℓ power, excursions consistent with sample variance that happen to map onto changes in cosmological parameters. Finally, we examine agreement between PlanckTT data and two other CMB data sets, namely the Planck lensing reconstruction and the TT power spectrum measured by the South Pole Telescope, again finding a lack of convincing evidence of any significant deviations in parameters, suggesting that current CMB data sets give an internally consistent picture of the ΛCDM model.

Key words: cosmology: observations / cosmic background radiation / cosmological parameters / cosmology: theory

© ESO, 2017

1. Introduction

Probably the most important high-level result from the Planck satellite1 (Planck Collaboration I 2016) is the good agreement of the statistical properties of the cosmic microwave background anisotropies (CMB) with the predictions of the six-parameter standard ΛCDM cosmological model (Planck Collaboration XV 2014; Planck Collaboration XVI 2014; Planck Collaboration XI 2016; Planck Collaboration XIII 2016). This agreement is quite remarkable, given the very significant increase in precision of the Planck measurements over those of prior experiments. The continuing success of the ΛCDM model has deepened the motivation for attempts to understand why the Universe is so well-described as having emerged from Gaussian adiabatic initial conditions with a particular mix of baryons, cold dark matter (CDM), and a cosmological constant (Λ).

Since the main message from Planck, and indeed from the Wilkinson Microwave Anisotropy Probe (WMAP; Bennett et al. 2013) before it, has been the continued success of the six-parameter ΛCDM model, attention naturally turns to precise details of the values of the best-fit parameters of the model. Many cosmologists have focused on the parameter shifts with respect to the best-fit values preferred by pre-Planck data. Compared to the WMAP data, for example, Planck data prefer a somewhat slower expansion rate, higher dark matter density, and higher matter power spectrum amplitude, as discussed in several Planck Collaboration papers (Planck Collaboration XV 2014; Planck Collaboration XVI 2014; Planck Collaboration XI 2016; Planck Collaboration XIII 2016), as well as in Addison et al. (2016). These shifts in parameters have increased the degree of tension between CMB-derived values and those determined from some other astrophysical data sets, and have thereby motivated discussion of extensions to the standard cosmological model (e.g. Verde et al. 2013; Marra et al. 2013; Efstathiou 2014; Wyman et al. 2014; Beutler et al. 2014; MacCrann et al. 2015; Seehars et al. 2016; Hildebrandt et al. 2016). However, none of these extensions are strongly supported by the Planck data themselves (e.g. see discussion in Planck Collaboration XIII 2016).

Despite the interest that the shifts in best-fit parameters has generated, there has not yet been an identification of the particular aspects of the Planck data, and their differences from WMAP data, that give rise to the shifts. The main goal of this paper is to identify the aspects of the data that lead to the shifts, and to understand the physics that drives ΛCDM parameters to respond to these differences in the way they do. We chose to pursue this goal with analysis that is entirely internal to the Planck data. In carrying out this Planck-based analysis, we still shed light on the WMAP-to-Planck parameter shifts, because when we restrict ourselves to modes that WMAP measures at high signal-to-noise ratio, the WMAP and Planck temperature maps agree well (e.g. Kovács et al. 2013; Planck Collaboration XXXI 2014). The qualitatively new attribute of the Planck data that leads to the parameter shifts is the high-precision measurement of the temperature power spectrum in the 600 ≲ ℓ ≲ 2000 range2. Restricting our analysis to be internal to Planck has the advantage of simplicity, without altering the main conclusions.

We also investigated the consistency of the differences in parameters inferred from different multipole ranges with expectations, given the ΛCDM model and our understanding of the sources of error. The consistency of such parameter shifts has been previously studied in Planck Collaboration XI (2016), Couchot et al. (2015), and Addison et al. (2016). In studying the consistency of parameters inferred from ℓ < 1000 with those inferred from ℓ> 1000Addison et al. (2016) claim to find significant evidence for internal inconsistencies in the Planck data. Our analysis improves upon theirs in several ways, mainly through our use of simulations to account for covariances between the pair of data sets being compared, as well as the “look elsewhere effect”, and the departure of the true distribution of the shift statistics away from a χ2 distribution.

Much has already been demonstrated about the robustness of the Planck parameter results to data processing, data selection, foreground removal, and instrument modelling choices Planck Collaboration XI (2016). We will not revisit all of that here. However, having identified the power spectrum features that are causing the shifts in cosmological parameters, we show that these features are all present in multiple individual frequency channels, as one would expect from the previous studies. The features in the data therefore appear to be cosmological in origin.

The Planck polarization maps, and the TE and EE polarization power spectra determinations they enable, are also new aspects of the Planck data. These new data are in agreement with the TT results and point to similar shifts away from the WMAP parameters (Planck Collaboration XIII 2016), although with less statistical weight. In order to focus on the primary driver of the parameter shifts, namely the temperature power spectrum, we have ignored polarization data except for the constraint on the value of the optical depth τ coming from polarization at the largest angular scales, which in practice we folded in with a prior on τ.

Our primary analysis is of the shift in best-fit cosmological parameters as determined from: (1) a prior on the value of τ (as a proxy for low-ℓ polarization data) and PlanckTT3 data restricted to ℓ < 8004; and (2) the same τ prior and the full ℓ-range (ℓ < 2500) of PlanckTT data. Taking the former data set as a proxy for WMAP, these are the parameter shifts that have been of great interest to the community. There is of course a degree of arbitrariness in the particular choice of ℓ = 800 for defining the low-ℓ data set. One might argue for a lower ℓ, based on the fact that the WMAP temperature maps reach a signal-to-noise ratio of unity by ℓ ≃ 600, and thus above 600 the power spectrum error bars are at least twice as large as the Planck ones. However, we explicitly selected ℓ = 800 for our primary analysis because it splits the weight on ΛCDM parameters coming from Planck so that half is from ℓ < 800 and half is from ℓ> 8005. Addressing the parameter shifts from ℓ < 800 versus ℓ> 800 is a related and interesting issue, and while our main focus is on the comparison of the full-ℓ results to those from ℓ < 800, we computed and showed the low-ℓ versus high-ℓ results as well. Additionally, as described in Appendix A, we performed an exhaustive search over many different choices for the multipole at which to split the data.

In addition to the high-ℓPlanck temperature data, inferences of the reionization optical depth obtained from the low-ℓPlanck polarization data also have an important impact on the determination of the other cosmological parameters. The parameter shifts that have been discussed in the literature to date have generally assumed a constraint on τ coming from Planck LFI polarization data (Planck Collaboration XI 2016; Planck Collaboration XIII 2016). During the writing of this paper, new and tighter constraints on τ were released using improved Planck HFI polarization data (Planck Collaboration Int. XLVI 2016; Planck Collaboration Int. XLVII 2016). These are consistent with the previous ones, shrinking the error by approximately a factor of two and moving the best fit to slightly lower values of τ. To make our work more easily comparable to previous discussions, and because the impact of this updated constraint is not very large, we have chosen to write the main body of this paper assuming the old τ prior. This also allows us to more cleanly isolate and discuss separately the impact of the new prior, which we do in a later section of this paper.

Our focus here is on the results from Planck, and so an in-depth study comparing the Planck results with those from other cosmological data sets is beyond our scope. Nevertheless, there do exist claims of internal inconsistencies in CMB data (Addison et al. 2016; Riess et al. 2016), with the parameter shifts we discuss here playing an important role, since they serve to drive the PlanckTT best fits away from those of the two other CMB data sets, namely the Planck measurements of the φφ lensing potential power spectrum (Planck Collaboration XVII 2014; Planck Collaboration XV 2016) and the South Pole Telescope (SPT) measurement of the TT damping tail (Story et al. 2013). Thus, we also briefly examine whether there is any evidence of discrepancies that are not just internal to the PlanckTT data, but also when comparing with these other two probes.

The features we identify that are driving the changes in parameters are approximately oscillatory in nature, a part of them with a frequency and phasing such that they could be caused by a smoothing of the power spectrum, of the sort that is generated by gravitational lensing. We thus investigate the role of lensing in the parameter shifts. The impact of lensing in PlanckTT parameter estimates has previously been investigated via use of the parameter “AL” that artificially scales the lensing power spectrum (as discussed on p. 28 of Planck Collaboration XVI 2014; and p. 24 of Planck Collaboration XIII 2016). Here we introduce a new method that more directly elucidates the impact of lensing on cosmological parameter determination.

Given that we regard the ℓ < 2500Planck data as providing a better determination of the cosmological parameters than the ℓ < 800Planck data, it is natural to turn our primary question around and ask: what is it about the ℓ < 800 data that makes the inferred parameter values differ from the full ℓ-range parameters? Addressing this question, we find that the deficit in low-multipole power at ℓ ≲ 30, the “low-ℓ deficit”6, plays a significant role in driving the ℓ < 800 parameters away from the results coming from the full ℓ-range.

The paper is organized as follows. Section 2 introduces the shifts seen in parameters between using Planckℓ < 800 data and full-ℓ data. Section 3 describes the extent to which the observed shifts are consistent with expectations; we make some simplifying assumptions in our analysis and justify their use here. Section 4 represents a pedagogical summary of the physical effects underlying the various parameter shifts. We then turn to a more detailed characterization of the parameter shifts and their origin. The most elementary, unornamented description of the shifts is presented in Sect. 5.1, followed by a discussion of the effects of gravitational lensing in Sect. 5.2 and the role of the low-ℓ deficit in Sect. 5.3. In Sect. 5.4 we consider whether there might be systematic effects significantly impacting the parameter shifts and in Sect. 5.5 we add a discussion of the effect of changing the τ prior. Finally, we comment on some differences with respect to other CMB experiments in Sect. 6 and conclude in Sect. 7.

Throughout we work within the context of the six-parameter, vacuum-dominated, cold dark matter (ΛCDM) model. This model is based upon a spatially flat, expanding Universe whose dynamics are governed by general relativity and dominated by cold dark matter and a cosmological constant (Λ). We shall assume that the primordial fluctuations have Gaussian statistics, with a power-law power spectrum of adiabatic fluctuations. Within that framework the usual set of cosmological parameters used in CMB studies is: ωb ≡ Ωbh2, the physical baryon density; ωc ≡ Ωch2, the physical density of cold dark matter (or ωm for baryons plus cold dark matter plus neutrinos); θ∗, the ratio of sound horizon to angular diameter distance to the last-scattering surface; As, the amplitude of the (scalar) initial power spectrum; ns, the power-law slope of those initial perturbations; and τ, the optical depth to Thomson scattering through the reionized intergalactic medium. Here the Hubble constant is expressed as H0 = 100 h km s-1 Mpc-1. In more detail, we follow the precise definitions used in Planck Collaboration XVI (2014) and Planck Collaboration XIII (2016).

Parameter constraints for our simulations and comparison to data use the publicly available CosmoSlik package (Millea 2017), and the full simulation pipeline code will be released publicly pending acceptance of this work. Other parameter constraints are determined using the Markov chain Monte Carlo package cosmomc (Lewis & Bridle 2002), with a convergence diagnostic based on the Gelman and Rubin statistic performed on four chains. Theoretical power spectra are calculated with CAMB (Lewis et al. 2000).

|

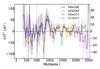

Fig. 1 Cosmological parameter constraints from PlanckTT+τprior for the full multipole range (orange) and for ℓ < 800 (blue) – see the text for the definitions of the parameters. We note that the constraints are generally in good agreement, with the full Planck data providing tighter limits on the parameters; however, the best-fit values certainly do shift. It is these shifts that we seek to explain in this paper. A prior τ = 0.07 ± 0.02 has been used here as a proxy for the effect of the low-ℓ polarization data (with the impact of a different prior discussed later). As a comparison, we also show results for WMAP TT data combined with the same prior on τ (grey). |

2. Parameters from low-ℓ versus full-ℓPlanck data

Figure 1 compares the constraints on six parameters of the base-ΛCDM model from the PlanckTT+τprior data for ℓ < 2500 with those using only the data at ℓ < 800. We have imposed a specific prior on the optical depth, τ = 0.07 ± 0.02, as a proxy for the Planck LFI low-ℓ polarization data, in order to make it easier to compare the constraints, and to restrict our investigation to the TT power spectrum only. As mentioned before, we will discuss the impact of the newer HFI polarization results in Sect. 5.5. The constraints shown are one-dimensional marginal posterior distributions of the cosmological parameters given the data, obtained using the cosmomc code (Lewis & Bridle 2002), as described in Sect. 1, and applying exactly the same priors and assumptions for the Planck likelihoods as detailed in Planck Collaboration XIII (2016).

We see that the constraints from the full data set are tighter than those from using only ℓ < 800, and that the peaks of the distributions7 are slightly shifted. It is these shifts that we seek to explain in the later sections. Figure 1 also shows constraints from the WMAP TT spectrum. As already mentioned, these constraints are qualitatively very similar to those from Planckℓ < 800, although not exactly the same, since WMAP reaches the cosmic variance limit closer to ℓ = 600. Nevertheless, as was already shown by Kovács et al. (2013), Larson et al. (2015), the CMB maps themselves agree very well, and thus the small differences in parameter inferences (the largest of which is a roughly 1σ difference in θ∗) are presumably due to small differences in sky coverage and WMAP instrumental noise. We see that the dominant source of parameter shifts between Planck and WMAP is the new information contained in the ℓ> 800 modes, and that by discussing parameter shifts internal to Planck we are also directly addressing the differences between WMAP and Planck.

Figure 1 shows the shifts for some additional derived parameters, as well as the basic six-parameter set. In particular, one can choose to use the conventional cosmological parameter H0, rather than the CMB parameter θ∗, as part of a six-parameter set. Of course neither choice is unique, and we could have also focused on other derived quantities in addition to six that span the space; for the amplitude, we have presented results for the usual choice As, but added panels for the alternative choices Ase− 2τ (which will be important later in this paper) and σ8 (the rms density variation in spheres of size 8 h-1 Mpc in linear theory at z = 0). The shifts shown in Fig. 1 are fairly representative of the sorts of shifts that have already been discussed in previous papers (e.g. Planck Collaboration XVI 2014; Planck Collaboration XI 2016; Addison et al. 2016), despite different choices of τ prior and ℓ ranges.

To simplify the analysis as much as possible, throughout most of this paper we will choose our parametrization of the six degrees of freedom in the ΛCDM model so that we reduce the correlations between parameters, and also so that our choice maps onto the physically meaningful effects that will be described in Sect. 4. While a choice of six parameters satisfying both criteria is not possible, we have settled on θ∗, ωm, ωb, ns, As e− 2τ, and τ. Most of these choices are standard, but two are not the same as those focused on in most CMB papers: we have chosen ωm instead of ωc, because the former governs the size of the horizon at the epoch of matter-radiation equality, which controls both the potential-envelope effect and the amplitude of gravitational lensing (see Sect. 4); and we have chosen to use As e− 2τ in place of As, because the former is much more precisely determined and much less correlated with τ. Physically, this arises because at angular scales smaller than those that subtend the horizon at the epoch of reionization (ℓ ≃ 10) the primary impact of τ is to suppress power by e− 2τ (again, see Sect. 4).

As a consequence of this last fact, the temperature power spectrum places a much tighter constraint on the combination As e− 2τ than it does on τ or As. Due to the strong correlation between these two parameters, any extra information on one will then also translate into a constraint on the other. For this reason, a change in the prior we use on τ will be mirrored by a change in As, given a fixed As e− 2τ combination. Conversely, the extra information one obtains on As from the smoothing of the small-scale power spectrum due to gravitational lensing will be mirrored by a change in the recovered value of τ (and this will be important, as we will show later). As a result, since we will mainly focus on the shifts of As e− 2τ and τ, we will often interpret changes in the value of τ as a proxy for changes in As (at fixed As e− 2τ), and thus for the level of lensing observed in the data (see Sect. 5.2).

3. Comparison of parameter shifts with expectations

In light of the shifts in parameters described in the previous section, we would of course like to know whether they are large enough to indicate a failure of the ΛCDM model or the presence of systematic errors in the data, or if they can be explained simply as an expected statistical fluctuation arising from instrumental noise and sample variance. The aim of this section is to give a precise determination based on simulations, in particular one that avoids several approximations used by previous analyses.

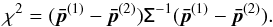

One of the first attempts to quantify the shifts was performed in Appendix A of Planck Collaboration XVI (2014), and was based on a set of Gaussian simulations. More recent studies using the Planck 2015 data have generally compared posteriors of disjoint sets of Planck multipole ranges (e.g. Planck Collaboration XI 2016; Addison et al. 2016). There, the posterior distribution of the parameters shifts given the data is  , with

, with  being the vector of parameter-marginalized means estimated from the multipole range α = 1,2. This posterior distribution is assumed to be a Gaussian with zero mean and covariance Σ = C(1) + C(2), where C(α) are the parameter posterior covariances of the two data sets and both

being the vector of parameter-marginalized means estimated from the multipole range α = 1,2. This posterior distribution is assumed to be a Gaussian with zero mean and covariance Σ = C(1) + C(2), where C(α) are the parameter posterior covariances of the two data sets and both  and C(α) are estimated from MCMC runs. Therefore, there it is assumed that, if one excludes from the parameter vector the optical depth τ for which prior information goes into both sets, the remaining five cosmological parameters are independent random variables. Additionally, to quantify the overall shift in parameters, a χ2 statistic is computed,

and C(α) are estimated from MCMC runs. Therefore, there it is assumed that, if one excludes from the parameter vector the optical depth τ for which prior information goes into both sets, the remaining five cosmological parameters are independent random variables. Additionally, to quantify the overall shift in parameters, a χ2 statistic is computed,  (1)The probability to exceed χ2 is then calculated assuming that it has a χ2 distribution with degrees of freedom equal to the number of parameters (usually five since τ is ignored).

(1)The probability to exceed χ2 is then calculated assuming that it has a χ2 distribution with degrees of freedom equal to the number of parameters (usually five since τ is ignored).

There are assumptions, both explicit and implicit, in previous analyses which we avoid with our procedure. We take into account the covariance in the parameter errors from one data set to the next, and do not assume that the parameter errors are normally distributed. Additionally our procedure allows us to include τ in the set of compared parameters. As we will see, our more exact procedure shows that consistency is somewhat better than would have appeared to be the case otherwise.

3.1. General outline of the procedure

We schematically outline here the steps of the procedure that we apply, with more details being provided in the following section.

First, we choose to quantify the shifts between parameters estimated from different multipole ranges as differences in best-fit values  , that is, the values that maximize their posterior distributions, rather than differences in the mean values

, that is, the values that maximize their posterior distributions, rather than differences in the mean values  of their marginal distributions. We adopt this choice because best-fit values are much faster to compute (they are determined with a minimizer algorithm, while the means require full MCMC chains). We justify this choice by the fact that the posterior distributions of cosmological parameters in the ΛCDM model are very closely Gaussian, so that their means and maxima are very similar. Furthermore, we will consistently compare the shifts in best-fit parameters measured from the data with their probability distribution estimated from the simulations. Therefore we are confident that this choice should not affect our final results.

of their marginal distributions. We adopt this choice because best-fit values are much faster to compute (they are determined with a minimizer algorithm, while the means require full MCMC chains). We justify this choice by the fact that the posterior distributions of cosmological parameters in the ΛCDM model are very closely Gaussian, so that their means and maxima are very similar. Furthermore, we will consistently compare the shifts in best-fit parameters measured from the data with their probability distribution estimated from the simulations. Therefore we are confident that this choice should not affect our final results.

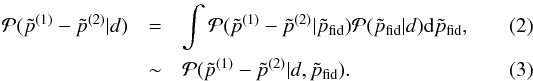

Next, we wish to determine the probability distribution of the parameter shifts given the data, that is,  . Since when estimating

. Since when estimating  we use the same Gaussian prior on τ,

we use the same Gaussian prior on τ,  and

and  are correlated. Therefore, we use simulations to numerically build this distribution. The idea is to draw simulations from the Planck likelihoods

are correlated. Therefore, we use simulations to numerically build this distribution. The idea is to draw simulations from the Planck likelihoods  , where

, where  is a fiducial model. For each of these simulations, we estimate the best-fit parameters

is a fiducial model. For each of these simulations, we estimate the best-fit parameters  for each of the multipole ranges considered. This allows us to build the probability distribution of the shifts in parameters given a fiducial model,

for each of the multipole ranges considered. This allows us to build the probability distribution of the shifts in parameters given a fiducial model,  .

.

The fiducial model we use is the best-fit (the maximum of the posterior distribution) ΛCDM model for the full ℓ = 2–2500 PlanckTT data, with τ fixed to 0.07, and the Planck calibration parameter, yP, fixed to one (see details, for example about treatment of foregrounds, in the next section; yP is a map-level rescaling of the data as defined in Planck Collaboration XI (2016)). More explicitly, we use { Ase− 2τ,ns,ωm,ωb,θ∗,τ,yP } = { 1.886,0.959,0.1438,0.02206,1.04062,0.07,1 }. The reason for fixing τ and the calibration in obtaining the fiducial model is that for the analysis of each simulation, priors on these two parameters are applied, centred on 0.07 and 1, respectively; if our fiducial model had different values, the distribution of best-fits across simulations for those and all correlated parameters would be biased from their fiducial values, and one would need to recentre the distributions; our procedure is more straightforward and clearer to interpret. In any case, our analysis is not very sensitive to the exact fiducial values and we have checked that for a slightly different fiducial model with τ = 0.055, the significance levels of the shifts given in Sect. 3.3 change by <0.1σ8. This allows us to take the final step, which assumes that the distribution of the shifts in parameters is weakly dependent on the fiducial model in the range allowed by its probability distribution given the data,  , so that we can estimate the posterior distribution of the parameter differences given the data from

, so that we can estimate the posterior distribution of the parameter differences given the data from  In fact, the uncertainty on the fiducial model estimated from the data, encoded in

In fact, the uncertainty on the fiducial model estimated from the data, encoded in  , is small (at the percent level for most of the parameters), and we explicitly checked in the τ = 0.055 case that its value does not change our results. Moreover, since we are interested in the distribution of the differences of the parameter best-fits, and not in the absolute values of the best-fits themselves, we expect that this difference essentially only depends on the scatter of the data as described by the Planck likelihood from which we generate the simulations. Since this likelihood is assumed to be weakly dependent on the fiducial model, again roughly in the range allowed by

, is small (at the percent level for most of the parameters), and we explicitly checked in the τ = 0.055 case that its value does not change our results. Moreover, since we are interested in the distribution of the differences of the parameter best-fits, and not in the absolute values of the best-fits themselves, we expect that this difference essentially only depends on the scatter of the data as described by the Planck likelihood from which we generate the simulations. Since this likelihood is assumed to be weakly dependent on the fiducial model, again roughly in the range allowed by  , we expect the distribution of the differences to have a weak dependence on the fiducial model.

, we expect the distribution of the differences to have a weak dependence on the fiducial model.

3.2. Detailed description of the simulations

We now turn to describe these simulations in more detail. The goal of these simulations is to be as consistent as possible with the approximations made in the real analysis (as opposed to, for example, the suite of end-to-end simulations described in Planck Collaboration XI 2016, which aim to simulate systematics not directly accounted for by the real likelihood). In this sense, our simulations are a self-consistency check of Planck data and likelihood products. We will now describe these simulations in more detail.

For each simulation, we draw a realization of the data independently at ℓ < 30 and at ℓ > 309. At ℓ < 30 we draw realizations directly at the map level, whereas for ℓ > 30 we use the plik_lite CMB covariance (described in Planck Collaboration XI 2016) to draw power spectrum realizations. For both ℓ < 30 and ℓ > 30, each realization is drawn assuming a fiducial model.

For ℓ> 30, we draw a random Gaussian sample from the plik_lite covariance and add it to the fiducial model. This, along with the covariance itself, forms the simulated likelihood. The plik_lite covariance includes in it uncertainties due to foregrounds, beams, and inter-frequency calibration, hence these are naturally included in our analysis. We note that the level of uncertainty from these sources is determined from the Planck ℓ < 2500 data themselves (extracted via a Gibbs-sampling procedure, assuming only the frequency dependence of the CMB). Thus, we do not expect exactly the same parameters from plik and plik_lite when restricted to an ℓmax below 2500 because plik_lite includes some information, mostly on foregrounds, from ℓmax < ℓ < 250010. For our purposes, this is actually a benefit of using plik_lite, since it lets us put well-motivated priors on the foregrounds for any value of ℓmax in a way that does not double count any data. Regardless of that, the difference between plik and plik_lite is not very large. For example, the largest of any parameter difference at ℓmax = 1000 is 0.15σ (in the σ of that parameter for ℓmax = 1000), growing to 0.35σ at ℓmax = 1500, and of course back to effectively zero by ℓmax = 2500. Regardless, since our simulations and analyses of real data are performed with the same likelihood, our approach is fully self-consistent.

At ℓ < 30, so as to simulate the correct non-Gaussian shape of the Cℓ posteriors, we draw a map-level realization of the fiducial CMB power spectrum. In doing so, we ignore uncertainties due to foregrounds, inter-frequency calibration, and noise; we will show below that this is a sufficient approximation. For the likelihood, rather than compute the Commander (Planck Collaboration IX 2016; Planck Collaboration X 2016) likelihood for each simulation (which in practice would be computationally prohibitive), we instead use the following simple but accurate analytic approximation. With no masking, the probability distribution of (2ℓ + 1)Ĉℓ/Cℓ is known to be exactly a χ2 distribution with 2ℓ + 1 degrees of freedom (here Ĉℓ is the observed spectrum and Cℓ is the theoretical spectrum). Our approximation posits that, for our masked sky, fℓ(2ℓ + 1)Ĉℓ/Cℓ is drawn from χ2 [fℓ(2ℓ + 1)], with fℓ an ℓ-dependent coefficient determined for our particular mask via simulations, and with Ĉℓ being the mask-deconvolved power spectrum. Approximations very similar to this have been studied previously by Benabed et al. (2009) and Hamimeche & Lewis (2008). Unlike some of those works, our approximation here does not aim to be a general purpose low-ℓ likelihood, rather just to work for our specific case of assuming the ΛCDM model and when combined with data up to ℓ ≃ 800 or higher. While it is not a priori obvious that it is sufficient in these cases, we can perform the following test. We run parameter estimation on the real data, replacing the full Commander likelihood with our approximate likelihood using Ĉℓ and fℓ as derived from the Commander map and mask. We note that this also tests the effect of fixing the foregrounds and inter-frequency calibrations, since we are using just the best-fit Commander map, and it also tests the effect of ignoring noise uncertainties, since our likelihood approximation does not include them. We find that, for both an ℓ < 800 and an ℓ < 2500 run11, no parameter deviates from the real results by more than 0.05σ, with several parameters changing much less than that; hence we find that our approximation is good enough for our purposes. Additionally, in Appendix B we describe a complementary test that scans over many realizations of the CMB sky as well, also finding the approximation to be sufficient.

The likelihood from each simulation is combined with a prior on τ of 0.07 ± 0.02 (with other choices of priors discussed in Sect. 5.5). It is worth emphasizing that the exact same prior is imposed on every simulation, and hence implicitly we are not drawing realizations of different polarization data to go along with the realizations of temperature data that we have discussed above. This is a valid choice because the polarization data are close to noise dominated and therefore largely uncorrelated with the temperature data. We have chosen to do this because our aim is to examine parameter shifts between different subsets of temperature data, rather than between temperature versus polarization, and thus we regard the polarization data as a fixed external prior. Had we sampled the polarization data, the significance levels of shifts would have been slightly smaller because the expected scatter on τ and correlated parameters would be slightly larger. We have explicitly checked this fact by running a subset of the simulations (ones for ℓ < 800 and ℓ < 2500) with the mean of the τ prior randomly draw from its prior distribution for each simulation, that is, we have implicitly drawn realizations of the polarization data. We find that the significance levels of the different statistics discussed in the following section are reduced by 0.1σ or less. We note that this same subset of simulations is described further in Appendix B, where it is used as an additional verification of our low-ℓ approximation.

|

Fig. 2 Differences in best-fit parameters between ℓ < 800 and ℓ < 2500 as compared to expectations from a suite of simulations. The cloud of blue points and the histograms are the distribution from simulations (discussed in Sect. 3), while the orange points and lines are the shifts found in the data. Although the shifts may appear to be generally large for this particular choice of parameter set, it is important to realise that this is not an orthogonal basis, and that there are strong correlations among parameters; when this is taken into account, the overall significance of these shifts is 1.4σ, and the significance of the biggest outlier (Ase− 2τ), after accounting for look-elsewhere effects, is 1.7σ. Figure 3 shows these same shifts in a more orthogonal basis that makes judging these significance levels easier by eye. Choosing a different multipole at which to split the data, or comparing low ℓs versus high ℓs alone, does not change this qualitative level of agreement. We note that the parameter mode discussed in Sect. 3.3 is not projected out here, since it would correspond to moving any data point by less than the width of the point itself. |

3.3. Results

With the simulated data and likelihoods in hand, we now numerically maximize the likelihood for each of the realizations to obtain best-fit parameters. The maximization procedure uses “Powell’s method” from the SciPy package (Jones et al. 2001–2016) and has been tested to be robustby running it on the true data at all ℓ splits, beginning from several different starting points, and ensuring convergence to the same minimum. We find in all cases that convergence is sufficient to ensure that none of the significance values given in this section change by more than 0.1σ, which we consider a satisfactory level.

Using the computational power provided by the volunteers at Cosmology@Home12, whose computers ran a large part of these computations, we have been able to run simulations not just for ℓ < 800 and ℓ < 2500, but for roughly 100 different subsets of data, with around 5000 realizations for each. We discuss some of these results in this section, with a more comprehensive set of tests given in Appendix A.

Figure 2 shows the resulting distribution of parameter shifts expected between the ℓ < 800 and ℓ < 2500 cases, compared to the shift seen in the real data. To quantify the overall consistency, we pick a statistic, compute its value on the data as well as on the simulations, then compute the probability to exceed (PTE) the data value based on the distribution of simulations. We then turn this into the equivalent number of σ, such that a 1-dimensional Gaussian has the same 2-tailed PTE. We use two particular statistics:

-

the χ2 statistic, computing χ2 = Δp Σ-1 Δp, where Δp is the vector of shifts in parameters between the two data sets and Σ is the covariance of these shifts from the set of simulations;

-

the max-param statistic, where we scan for max( | Δp/σp |), that is, the most deviant parameter from the set { θ∗, ωm, ωb, Ase− 2τ, ns, τ }, in terms of the expected shifts from the simulations, σp.

|

Fig. 3 Visually it might seem that the data point in the six-parameter space of Fig. 2 is a much worse outlier than only 1.4σ. One way to see that it really is only 1.4σ is to transform to another parameter space, as shown in this figure. Linear transformations leave the χ2 unaffected, and while ours here are not exactly linear, the shifts are small enough that they can be approximated as linear and the χ2 is largely unchanged (in fact it is slightly worse, 1.6σ). We have chosen these parameters so the shifts are more decorrelated while still using physical quantities. The parameter |

|

Fig. 4 Distribution of two different statistics computed on the simulations (blue histogram) and on the data (orange line). The first is the χ2 statistic, where we compute χ2 for the change in parameters between ℓ < 800 and ℓ < 2500, with respect to the covariance of the expected shifts. The second is a “biggest outlier” statistic, where we search for the parameter with the largest change, in units of the standard deviation of the simulated shifts. We give the probability to exceed (PTE) on each panel. For both statistics, we find that the observed shifts are largely consistent with expectations from simulations. |

In the case of the χ2 statistic, and when one is comparing two nested sets of data (by “nested” we mean that one data set contains the other, that is, ℓ < 800 is part of ℓ < 2500), there is an added caveat. In cases like this, there is the potential for the existence of one or more directions in parameter space for which expected shifts are extremely small compared to the posterior constraint on the same mode. These correspond to parameter modes where very little new information has been added, and hence one should see almost no shift. It is thus possible that the χ2 statistic is drastically altered by a change to the observed shifts that is in fact insignificant at our level of interest. Such a mode can be excited by any number of things, such as systematics, effects of approximations, minimizer errors, etc., but at a very small level. These modes can be enumerated by simultaneously diagonalizing the covariance of expected shifts and the covariance of the posteriors, and ordering them by the ratio of eigenvalues. For the case of comparing ℓ < 800 and ℓ < 2500, we find that the worst offending mode corresponds to altering the observed shifts in { H0, ωm, ωb, Ase− 2τ, ns, τ } by { 0.02, −0.01, 0.02, −0.003, 0.04, 0.01 } in units of the 1σ posteriors from ℓ < 2500. This can change the significance of the χ2 statistic by an amount that corresponds to 0.6σ, despite no cosmological parameter nor linear combination of them having changed by more than a few percent of each σ. To mitigate this effect and hence to make the χ2 statistic more meaningful for our desired goal of assessing consistency, we quote significance levels after projecting out any modes whose ratio of eigenvalues is greater than 10 (which in our case is just the aforementioned mode). We emphasize that removal of this mode is not meant to, nor does it, hide any problems; in fact, in some cases the χ2 becomes worse after removal. The point is that without removing it we would be sensitive to shifts in parameters at extremely small levels that we do not care about. In any case, this mode removal is only necessary for the case of the χ2 statistic and nested data sets, which is only a small subset of the tests performed in this paper.

Results for several data splits are summarized in Table 1, with the comparison of ℓ < 800 to ℓ < 2500 given in the first row and shown more fully in Fig. 4. In this case, we find that the parameter shifts are in fairly good agreement with expectations from simulations, with significance levels of 1.4σ and 1.7σ from the two statistics, respectively. We also note that the qualitative level of agreement is largely unchanged when considering ℓ < 800 versus ℓ> 800 or when splitting at ℓ = 1000.

Of the other data splits shown in Table 1, the ℓ < 1000 versus ℓ> 1000 case may be of particular interest, since it is discussed extensively in Addison et al. (2016). Although not the main focus in their paper, those authors find 1.8σ as the level of the overall agreement by applying the equivalent of our Eq. (1) to the shifts in five parameters, namely { θ∗,ωc,ωb,log As,ns }. This is similar to our result, although higher by 0.2σ. There are three main contributors to this difference. Firstly, although Addison et al. drop τ in the comparison to try to mitigate the effect of the prior on τ having induced correlations in the two data sets, they keep log As as a parameter, which is highly correlated with τ. This means that their comparison fails to remove the correlations, nor does it take them into account. One could largely remove the correlation by switching to Ase− 2τ (which is much less correlated with τ); this has the effect of reducing the significance of the shifts by 0.3σ. Secondly, the Addison et al. analysis puts no priors on the foreground parameters, which is especially important for the ℓ> 1000 part. For example, fixing the foregrounds to their best-fit levels from ℓ < 2500 reduces the significance by an additional 0.2σ. Finally, our result uses six parameters as opposed to five (since we are able to correctly account for the prior on τ); this increases the significance back up by around 0.3σ.

There is an additional point that Addison et al. (2016) fail to take into account when quoting significance levels – and the same issue arises in some other published claims of parameter shifts that focus on a single parameter. This is that one should not pick out the most extreme outlying parameter without assessing how large the largest expected shift is among the full set of parameters. In other words, one should account for what are sometimes called “look elsewhere” effects (see Planck Collaboration XVI 2016, for a discussion of this issue in a different context). Our simulations allow us to do this easily. For example, in the ℓ < 1000 versus ℓ> 1000 case, the biggest change in any parameter is a 2.3σ shift in ωm; however, the significance of finding a 2.3σ outlier when searching through six parameters with our particular correlation structure is only 1.6σ, which is the value we quote in Table 1.

To summarize this section, we do not find strong evidence of inconsistency in the parameter shifts from ℓ < 800 to those from ℓ < 2500, when compared with expectations, nor from any of the other data splits shown in Table 1. We also find that the results of Addison et al. (2016) somewhat exaggerate the significance of tension, for a number of reasons, as discussed above.

As a final note, we show in Table 2 the consistency of various data splits as in Table 1, but using data and simulations that have a prior of τ = 0.055 ± 0.010 instead of τ = 0.07 ± 0.02. In general the agreement between different splits changes by between −0.1 and 0.3σ, thus slightly worse. A detailed discussion of these results will be presented in Sect. 5.5.

Consistency of various data splits, as determined from two statistics computed on data and simulations.

|

Fig. 5 Response of |

4. Physical explanation of the power spectrum response to changing ΛCDM parameters

Having studied the question of the magnitude of the parameter shifts relative to expectations, we now turn to an analysis of why the best-fit model parameters change in the particular way that they do. Understanding this requires reviewing exactly how changes to ΛCDM parameters affect the CMB power spectrum, so that these can be matched with the features in the data that drive the changes. The material in this section is meant as background for the narrative that will come later, and readers may want to skip it on a first reading; nevertheless, the information collected here is not available in any single source elsewhere, and will be important for understanding the relationship between parameters and power spectrum features. The key information is the response of the angular power spectrum to changes in parameters, shown in Fig. 5. In Sect. 5 we will close the loop on how the physics embodied in the curves of Fig. 5 interacts with the residual features in the power spectrum to give the parameter shifts we see in Fig. 1.

The structure in the CMB anisotropy spectrum arises from gravity-driven oscillations in the baryon-photon plasma before recombination (e.g. Peebles & Yu 1970; Zel’dovich et al. 1972). Fortunately our understanding of the CMB spectrum has become highly developed, so we are able to understand the physical causes (see Fig. 5) of the shifts already discussed as arising from the interaction of gravitational lensing, the early integrated Sachs-Wolfe (ISW, Sachs & Wolfe 1967) effect, the potential envelope, and diffusion damping. In this section we review the physics behind the  curves and clarify some interesting interactions by “turning off” various effects. The reader is referred to Peacock (1999), Liddle & Lyth (2000), and Dodelson (2003) for basic textbook treatments of the physics of CMB anisotropies.

curves and clarify some interesting interactions by “turning off” various effects. The reader is referred to Peacock (1999), Liddle & Lyth (2000), and Dodelson (2003) for basic textbook treatments of the physics of CMB anisotropies.

4.1. The matter density: ωm

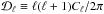

We begin by considering how changes in the matter density affect the power spectrum, leading to the rising behaviour seen in the top left panel of Fig. 5. We note that here we have plotted the linear response in the quantity  rather than Cℓ.

rather than Cℓ.

Since much of the relevant action occurs near horizon crossing, a description of the physics is best accomplished by picking a gauge; we choose the Newtonian gauge here and focus primarily on the potentials Φ and Ψ and the density. Within this picture, the impact of the matter density comes from the “early integrated Sachs-Wolfe effect” (i.e., the evolution of the potentials immediately after last scattering) and from the “potential envelope”. The effect of main interest to us is the latter – the enhancement of power above ℓ ≃ 100 arising due to the near-resonant driving of the acoustic oscillations by decaying potentials as they cross the horizon near, or earlier than, the epoch of matter-radiation equality (Hu & White 1996a, 1997; Hu et al. 1996). Overdense modes that enter the horizon during radiation domination (ρm/ρrad ≪ 1) cannot collapse rapidly enough into their potential wells (due to the large pressure of the radiation) to prevent the potentials from decaying due to the expansion of the Universe. The time it takes the potential to decay is closely related to the time at which the photons reach their maximal compression and hence maximal energy density perturbation. The near-resonant driving of the oscillator, and the fact that the photons do not lose (as much) energy climbing out of the potential well (as they gained falling in), leads to a large increase in observed amplitude of the temperature perturbation over its initial value. For modes that enter the horizon later, the matter density perturbations contribute more to the potentials, which are (partially) stabilized against decay by the contribution of the CDM. This reduces the amplitude enhancement. The net result is an ℓ-dependent boost to the power spectrum amplitude, transitioning from unity at low ℓ to a factor of over ten in the high-ℓ limit. This boost is known as the “potential envelope”. It is not immediately apparent in the power spectrum, due to the effects of damping at high ℓ, but it imprints a large dependence on ωm and can be uncovered if the effects of damping and line-of-sight averaging are removed (e.g. Fig. 7 of Hu & White 1997).

The characteristic scale of the power boost is set by the angular scale, θeq, which is the comoving size of the horizon at the epoch of matter-radiation equality projected from the last-scattering surface. Thus the CMB spectra are sensitive to θeq. In the ΛCDM model θeq depends almost solely on the redshift of matter-radiation equality, zeq (with an additional, very weak, dependence on Ωm). Higher ωm means higher zeq and thus θeq is smaller; the rise in power from low ℓ (modes that entered at z <zeq) to high ℓ (modes that entered at z>zeq) gets shifted to higher ℓ. This shifting of the transition to higher ℓ results in a decrease in power in the region of the transition and thus the shape of the change in  shown in Fig. 5. As we will see in Sect. 5.1, an oscillatory decrease in lower ℓ power (from increasing ωm) will be a key part of our explanation for the parameter shifts. Indeed, once the impact of the low multipoles is reduced by the addition of high-ℓ data, the increase in power near the first peak from a redder spectrum must be countered by a higher ωm (and other shifts, see Sect. 5.3).

shown in Fig. 5. As we will see in Sect. 5.1, an oscillatory decrease in lower ℓ power (from increasing ωm) will be a key part of our explanation for the parameter shifts. Indeed, once the impact of the low multipoles is reduced by the addition of high-ℓ data, the increase in power near the first peak from a redder spectrum must be countered by a higher ωm (and other shifts, see Sect. 5.3).

Additional dependence on ωm comes from the change in the damping scale and how recombination proceeds. The damping scale is the geometric mean of the horizon and the mean free path at recombination, and changing the expansion rate changes this scale (Silk 1968; Hu & Sugiyama 1995b). An increase in ωm corresponds to a decrease in the physical damping scale (which corresponds to a decreased angular scale at fixed distance to last scattering). However, within the range of variation in ωm allowed by Planck, changes in damping are a sub-dominant effect.

Finally, the anisotropies we observe are modified from their primordial form due to the effects of lensing by large-scale structure along the line of sight. One effect of lensing is to “smear” the acoustic peaks and troughs, reducing their contrast (Seljak 1996). The peak smearing by lensing depends on ωm through the decay of small-scale potentials between horizon crossing and the epoch of equality (see e.g. Pan et al. 2014). While ωm is an important contributor to the lensing effect, we will see in Sect. 5.2 that lensing will primarily drive shifts in τ and Ase− 2τ.

4.2. The baryon density: ωb

For the nearly scale-invariant, adiabatic perturbations of interest to us, the presence of baryons causes a modulation in the heights of the peaks in the power spectrum and a change in the damping scale due to the change in the mean free path. Physically a non-zero baryon-photon momentum density ratio, R = 3ρb/ (4ργ), alters the zero-point of the acoustic oscillations away from zero effective temperature (Θ0 + Ψ = 0) to Θ0 + (1−R)Ψ = 0 (see e.g. Seljak 1994; Hu & Sugiyama 1995a; Hu et al. 1997). For non-zero RΨ this leads to a modulation of even and odd peak heights, enhancing the odd peaks (corresponding to compression into a potential well) with RΨ < 0 and reducing the even peaks (corresponding to rarefactions in potential wells). Given only low-ℓ data, such as for WMAP, the relative heights of the first and second peaks, in particular, are important for determining R and therefore ωb. An increase in ωb boosts the first peak relative to the second, as is apparent in the ωb panel of Fig. 5. We will see in Sect. 5.1 that the inclusion of the high-ℓ data will lead to a decrease in ωb, which will be required to better match the ratio of the first and second peaks once the other parameters have shifted.

A change in ωb also changes the mean free path of photons near recombination, and the process of recombination itself, thus affecting the diffusion damping scale. As with an increase in ωm, an increase in ωb decreases the physical damping scale. The angular scale which this corresponds to depends on the distance to last scattering, which can be altered by changing ωb, depending on what other quantities are held fixed. For the choice shown in Fig. 5, we find that the angular scale decreases as well, leading to less damping and the excess of power seen at high ℓ in the ωb panel.

4.3. The optical depth: τ

Reionization in the late Universe recouples the CMB photons to the matter field, but not as tightly as before recombination (since the matter density has dropped by over six orders of magnitude in the intervening period). Scattering of photons off electrons in the ionized intergalactic medium suppresses the power in the primary anisotropies on scales smaller than the horizon at reionization (ℓ ≳ 10) by e− 2τ (Kaiser 1984; Efstathiou 1988; Sugiyama et al. 1993; Hu & White 1996b). Because of this, increasing τ at fixed As e− 2τ keeps the power spectrum at ℓ ≫ 10 nearly constant. The small wiggles in the τ panel are entirely from the increased gravitational lensing power, due to the increase in As necessary to keep As e− 2τ constant. At very low ℓ this increase in As directly boosts anisotropies.

Increasing As e− 2τ at fixed τ results in changes to  that are almost exactly proportional to

that are almost exactly proportional to  , with small corrections due to the second-order effect of gravitational lensing.

, with small corrections due to the second-order effect of gravitational lensing.

4.4. The spectral index, ns, and acoustic scale, θ∗

The final two effects are very easy to understand. A change in the spectral index of the primordial perturbations yields a corresponding change to the observed CMB power spectrum (e.g. Knox 1995). Increasing ns with the amplitude fixed at the pivot point k = k0 = 0.05 Mpc-1, increases (decreases) power at ℓ ≳ ( ≲ ) 550, since modes with k = k0 project into angular scales near ℓ = 550. We will see in Sect. 5.1 that a tilt towards redder spectra (i.e. a decrease in high-ℓ power) will be necessary to best fit the high-ℓ data. Alternatively, as discussed in Sect. 5.3, when not tightly constrained by the ℓ> 1000 data, a higher ns allows a better fit to the “deficit” of power at ℓ < 30.

The predominant effect of altering θ∗ (which, with the other parameters held fixed, is performed by modifying ωΛ) is to stretch the spectrum in the ℓ direction, causing large changes in the rapidly-varying regions of the spectrum between peaks and troughs. We note that the high sensitivity of the power spectrum to this scaling parameter (e.g. Kosowsky et al. 2002) means that small variations in θ∗ can swamp those of other parameters. In Sect. 5.1 we will see that one of the differences between the ℓ < 800 best-fit model and that for ℓ < 2500 is a variation in θ∗ that shifts the third peak in the angular power spectrum slightly to the right, removing some oscillatory residuals.

4.5. The Hubble constant, H0

With these effects in hand it is easy to understand how changes in other parameters, such as H0, impact  . As discussed in Planck Collaboration XVI (2014, Sect. 3.1), the characteristic angular size of fluctuations in the CMB (θ∗) is exceptionally well and robustly determined (better than 0.1%). Within the ΛCDM model this angle is a ratio of the sound horizon at the time of last scattering and the angular diameter distance to last scattering. The sound horizon is determined by the redshift of recombination, ωm, and ωb, so the constraint on θ∗ translates into a constraint on the distance to last scattering, which in turn becomes a constraint on the 3-dimensional subspace ωm–ωb–h. Marginalizing over ωb gives a strong degeneracy between ωm and h, which can be approximately expressed as Ωmh3 = constant (as will be important in Sect. 5.3). For example, an increase in ωm decreases the sound horizon as

. As discussed in Planck Collaboration XVI (2014, Sect. 3.1), the characteristic angular size of fluctuations in the CMB (θ∗) is exceptionally well and robustly determined (better than 0.1%). Within the ΛCDM model this angle is a ratio of the sound horizon at the time of last scattering and the angular diameter distance to last scattering. The sound horizon is determined by the redshift of recombination, ωm, and ωb, so the constraint on θ∗ translates into a constraint on the distance to last scattering, which in turn becomes a constraint on the 3-dimensional subspace ωm–ωb–h. Marginalizing over ωb gives a strong degeneracy between ωm and h, which can be approximately expressed as Ωmh3 = constant (as will be important in Sect. 5.3). For example, an increase in ωm decreases the sound horizon as  (softened by the influence of radiation) and hence the distance to last scattering must decrease, to hold θ∗ fixed. This distance is an integral of 1 /H(z), with H2(z) ∝ {ωm[(1 + z)3−1] + h2} for the dominant contribution from z ≪ zeq. Thus h must decrease in order for the distance to last scattering not to decrease too much.

(softened by the influence of radiation) and hence the distance to last scattering must decrease, to hold θ∗ fixed. This distance is an integral of 1 /H(z), with H2(z) ∝ {ωm[(1 + z)3−1] + h2} for the dominant contribution from z ≪ zeq. Thus h must decrease in order for the distance to last scattering not to decrease too much.

4.6. Lensing

As mentioned earlier, the anisotropies we observe are modified from their primordial form by several secondary processes, among them the deflection of CMB photons by the gravitational lensing associated with large-scale structure (see e.g. Lewis & Challinor 2006, for a review). These deflections serve to “smear” the last scattering surface, leading to a smoothing of the peaks and troughs in the angular power spectrum, as well as generating excess power on small scales, B-mode polarization, and non-Gaussian signatures. Our focus is on the first effect.

Gradients in the gravitational potential bend the paths of photons by a few arcminutes, with the bend angles coherent over degree scales, leading to a pattern of distortion and magnification on the initially Gaussian CMB sky. In magnified regions the power is shifted to lower ℓ, while in demagnified regions it is shifted to higher ℓ. Across the whole sky this reduces the contrast of the peaks and troughs in the power spectrum (while conserving the total power), and generates an almost power-law tail to very high ℓ. The amplitude of the peak smearing is set by (transverse gradients of) the (projected) gravitational potential and this is sensitive to parameters (such as As and ωm), which change its amplitude or shape. The separate topic of CMB lensing through the 4-point functions (to derive  ) is discussed in Sect. 6.3.

) is discussed in Sect. 6.3.

5. Connecting parameter shifts to data to physics

With an understanding of the different ways in which the ΛCDM model parameters can adjust the TT spectrum, we can now begin to try to explain the parameter shifts of main interest for this paper. We start in Sect. 5.1 by showing how the best-fit model has adjusted from its ℓ < 800 solution to match the new data at ℓ> 800. This story tracks more or less chronologically how our best understanding of the ΛCDM model has progressed, since the modes at ℓ ≲ 800 had mostly been measured first with WMAP. Additionally, it highlights the features of the Planck data that are important for driving parameter shifts with respect to the ℓ < 800 best-fit model.

The question answered in Sect. 5.1 is “what caused the parameters to shift from their ℓ < 800 values to their ℓ < 2500 ones?” A different, and also useful, question is “what causes there to be shifts at all, that is, where do the differences come from?”. This puts the ℓ < 800 and ℓ> 800 data on more equal footing, allowing us to pick aspects of each that generate most of the difference between the two. Although the resulting story is not unique, we find that the particular choice we have made results in a helpful explanation. It leads us to identify the connection with gravitational lensing, which we discuss in Sect. 5.2, and of the low-ℓ deficit, which we discuss in Sect. 5.3.

|

Fig. 6 Shifts in the best-fit values of parameters when one considers the multipole range either below or above different values of ℓsplit. This uses the PlanckTT+τprior data combination, with ℓ> 30 computed using plik_lite. The different lines correspond to restricting the data to ℓ <ℓsplit (blue), 30 <ℓ <ℓsplit (green), and ℓ>ℓsplit (orange). These shifts are described in Sect. 5.1. One can see here that excising the ℓ < 30 region moves the low-ℓ parameters closer to the high-ℓ parameters, as discussed in detail in Sect. 5.3. Error bands are the ± 1 and ± 2σ scatter in the simulations away from the input fiducial model. We have chosen to plot this quantity as opposed to posterior constraints on these parameters (which is different because of our prior on τ) because it is these bands that are appropriate for comparing the blue and orange lines against each other. We note that this has the perhaps counter-intuitive effect of having the error bands in the τ panel increase as more data are added. None of the local “spikes” are found to be significant, as can be seen from the bottom panel of Fig. A.1. |

|

Fig. 7 How the best-fit ℓ <ℓmax PlanckTT+τprior ΛCDM model adjusts as ℓmax is increased from 800 to 2500 (going from the top panels to the bottom panels). Left column: all panels show residuals relative to the ℓ < 800 model. Planck power spectrum binned estimates and ± 1σ errors on the CMB spectrum, as extracted with plik_lite, are shown as grey boxes. Note the change in y-axis scale at ℓ = 500, indicated by the vertical dotted line. The solid black line is the best-fit model for ℓ <ℓmax, where ℓmax is different for each panel, as indicated by which of the boxes are shaded darker. The various coloured lines indicate the linear response to the shift in individual parameters between their ℓ < 800 best-fit value and their ℓ <ℓmax one. Right column: identical to the left column, except that the contribution from θ∗ (i.e., the blue line from the corresponding left panel) has been subtracted from the sums, as well as from the actual model and from the data. For reference, the arrows in the top and bottom panels show the locations of the peaks in the power spectrum. |

5.1. From ℓ < 800 to ℓ < 2500

We begin by examining how parameters shift as we increase ℓmax from 800 to 2500. The best-fit parameters from the range ℓ <ℓmax are shown by the solid blue curve in Fig. 6 (where ℓsplit is, in this case, ℓmax). Although eight parameters are displayed in this figure, for the purpose of explaining shifts it is important to consider only six parameters at a time (since there are only six degrees of freedom in the ΛCDM model). We will use the set of six discussed in Sect. 2, for the reasons described there. As a reminder, they are θ∗, ωm, ωb, ns, As e− 2τ, and τ. Focusing on these parameters, one can see in Fig. 6 the following changes:

-

a sharp drop in θ∗ between ℓmax = 800 and 1000;

-

a highly correlated gradual drop in ωb, drop in ns, increase in ωm, and increase in Ase− 2τ across the whole multipole range;

-

an increase in τ between ℓmax = 1000 and 1500.

Figure 7 illustrates even more explicitly how these different multipole ranges cause the parameter shifts. This figure compresses a large amount of information into a combination of ten panels, the full understanding of which requires a slow stepwise explanation. Each of the panels in the left column shows residuals of the data relative to the best-fit ℓ < 800 model. The thick black line is the best-fit model for ℓ <ℓmax, with ℓmax increased in each subsequent panel and represented by the darker data points (varying from ℓmax = 800 in the top panels to ℓmax = 2500 in the bottom panels).

In panel 1a of Fig. 7 we have ℓmax = 800 and thus we see directly the residuals in the ℓ> 800 data with respect to the ℓ < 800 model that cause the parameter shifts of main interest for this work. We will sometimes refer to these features as the “oscillatory residuals”; for definiteness, we are referring to the upward trends at ℓ ≃ { 900,1300,1600,1800 } and downward ones at ℓ ≃ { 1100,1400,1700 }. We note that these oscillations are (roughly) out of phase with the CMB peaks themselves, a point which will be important for future discussion.