| Issue |

A&A

Volume 667, November 2022

|

|

|---|---|---|

| Article Number | A104 | |

| Number of page(s) | 18 | |

| Section | Planets and planetary systems | |

| DOI | https://doi.org/10.1051/0004-6361/202142486 | |

| Published online | 15 November 2022 | |

Semi-supervised standardized detection of extrasolar planets

1

Université Aix-Marseille, CNRS, CNES, LAM,

13007

Marseille, France

e-mail: This email address is being protected from spambots. You need JavaScript enabled to view it.

2

Université Côte-d’Azur, Observatoire de la Côte d’Azur, CNRS,

Lagrange UMR 7293,

CS 34229,

06304,

Nice Cedex 4, France

Received:

19

October

2021

Accepted:

1

July

2022

Abstract

Context. The detection of small exoplanets with the radial velocity (RV) technique is limited by various poorly known noise sources of instrumental and stellar origin. As a consequence, current detection techniques often fail to provide reliable estimates of the significance levels of detection tests in terms of false-alarm rates or p-values.

Aims. We designed an RV detection procedure that provides reliable p-value estimates while accounting for the various noise sources typically affecting RV data. The method is able to incorporate ancillary information about the noise (e.g., stellar activity indicators) and specific data- or context-driven data (e.g. instrumental measurements, magnetohydrodynamical simulations of stellar convection, and simulations of meridional flows or magnetic flux emergence).

Methods. The detection part of the procedure uses a detection test that is applied to a standardized periodogram. Standardization allows an autocalibration of the noise sources with partially unknown statistics (algorithm 1). The estimation of the p-value of the test output is based on dedicated Monte Carlo simulations that allow handling unknown parameters (algorithm 2). The procedure is versatile in the sense that the specific pair (periodogram and test) is chosen by the user. Ancillary or context-driven data can be used if available.

Results. We demonstrate by extensive numerical experiments on synthetic and real RV data from the Sun and αCenB that the proposed method reliably allows estimating the p-values. The method also provides a way to evaluate the dependence of the estimated p-values that are attributed to a reported detection on modeling errors. It is a critical point for RV planet detection at low signal-to-noise ratio to evaluate this dependence. The python algorithms developed in this work are available on GitHub.

Conclusions. Accurate estimation of p-values when unknown parameters are involved in the detection process is an important but only recently addressed question in the field of RV detection. Although this work presents a method to do this, the statistical literature discussed in this paper may trigger the development of other strategies.

Key words: techniques: radial velocities / stars: activity / planets and satellites: detection / methods: statistical

© S. Sulis et al. 2022

Open Access article, published by EDP Sciences, under the terms of the Creative Commons Attribution License (https://creativecommons.org/licenses/by/4.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Open Access article, published by EDP Sciences, under the terms of the Creative Commons Attribution License (https://creativecommons.org/licenses/by/4.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

This article is published in open access under the Subscribe-to-Open model. This email address is being protected from spambots. You need JavaScript enabled to view it. to support open access publication.

1 Introduction

When radial velocity (RV) time series are analysed, exoplanet detection tests aim at deciding whether the time series contains one or several planetary signatures plus noise (the alternative hypothesis, noted ℋ1), or only noise (the null hypothesis, noted ℋ0). With the recent advent of very stable and high-precision spectrographs (Pepe et al. 2010; Jurgenson et al. 2016), instrumental noise has reached levels that are so low (Fischer et al. 2016) that the noise generated by stellar activity has become the limiting factor for the detection of Earth-like planets. Stellar noise is frequency dependent (colored) because stellar variability results from several phenomena that contribute simultaneously to the observations and evolve at different timescales. The main sources of stellar noise that have been identified so far include magnetic cycles, starspots, faculae, inhibition of the convective blueshift by plages, meridional circulation, convective motions of the stellar atmosphere (granulation and supergranulation), and acoustic oscillations. The overall stellar noise affects the frequency range in which planets can be found and may dominate the ~ 10 cm s−1 amplitude level that is typical of an Earth-like exoplanet orbiting a Sun-like star at 1 AU.

The development of techniques to mitigate stellar activity for the study of exoplanets is an active field of research. Several methods and tools have been developed to account for these different sources in the RV time series (see Meunier 2021 for a recent review). First, some specific observation strategies are commonly used to reduce the contribution of short-timescale activity components (Hatzes et al. 2011; Dumusque et al. 2011). Second, to correct for variability that evolves at the stellar rotation period (spots and plages), the most basic technique consists of fitting a model to the RV dataset (e.g., sinusoidal functions at the stellar rotation period (Boisse et al. 2011), or quasi-periodic Gaussian processes (see, e.g., Aigrain et al. 2012; Baluev 2011, 2013b; Rajpaul et al. 2015, 2021; Jones et al. 2022)). To constrain the parameters of these models, activity indicators (e.g., the S-index, Wilson 1968; log R′HK, Noyes et al. 1984; or the bisector span, Queloz et al. 2001) are commonly used if correlations with the RV dataset are found. Some relations with photometry (Aigrain et al. 2012; Yu et al. 2018) or the study of the chromatic dependence of the stellar activity (Meunier et al. 2017; Tal-Or et al. 2018; Dumusque 2018) can also be used. Recent advances in RV extraction processes from stellar spectra in particular are very promising (Davis et al. 2017; Collier Cameron et al. 2021; Cretignier et al. 2021; de Beurs et al. 2022).

However, despite the diversity of existing techniques to reduce the stellar noise, residual noise sources at unknown levels translate into uncertainties in the interpretation of the RV detection tests. In practice, it is difficult to provide a reliable estimation of the significance level of a reported detection. For this reason, examples of a debated detection are numerous (see as examples the cases of HD 41248 b and c, Jenkins & Tuomi 2014; Santos et al. 2014; HD 73256 b, Udry et al. 2003; Ment et al. 2018; AD Leo b, Tuomi et al. 2018; Carleo et al. 2020; Alede-baran c, Hatzes et al. 2015; Reichert et al. 2019; or Kapteyn b and c, Anglada-Escude et al. 2014; Bortle et al. 2021). We discuss the particular case of αCenBb at the end of this paper as an example (Dumusque et al. 2012; Hatzes 2013; Rajpaul et al. 2016; Toulis & Bean 2021).

The main question we address here is the evaluation of reliable significance levels of detection tests in the presence of unknown colored noise. Significance levels are often interpreted through analyses of false-alarm probabilities (FAP) or of p-values. The former are fixed before the tests are conducted, while the latter measure a degree of surprise of the observed test statistic under the null hypothesis. In this work, we consider p-values. The estimation of these values has been amply discussed in the literature for the case when the noise model contains several unknown parameters (Bayarri & Berger 2000). Connections of our approach with the literature are discussed in Sect. 3.3. An accurate estimation of the p-values is critical for designing reliable detection methods of small-planet RV signatures.

Because the sampling grid is irregular, an RV detection is most often performed through the Lomb–Scargle periodogram (LSP; Scargle 1982) or variants of the periodograms (see, e.g., Ferraz-Mello 1981; Cumming et al. 1999; Cumming 2004; Reegen 2007; Bourguignon et al. 2007; Zechmeister & Kürster 2009; Baluev 2013a; Jenkins et al. 2014; Tuomi et al. 2014, Gregory 2016, Hara et al. 2017, 2022). While the marginal distribution of the periodogram components at each frequency is often known for white Gaussian noise (WGN) of known variance (e.g., a χ2 distribution for the LSP), the joint distribution of the periodogram components is much more difficult to characterize because the components exhibit dependences that are dictated by the sampling grid (or equivalently, by the spectral window).

In practice, specific noise models are applied to correct RV data for the activity, and the p-values of the test statistics are evaluated on the RV residuals assuming they follow the statistics of a WGN, or that they have a known covariance matrix. When the noise is considered WGN, some methods are based on approximate analytical expressions (Baluev 2008). Other methods compute the FAP using the formula that would be obtained for independent components, where an ad hoc number of independent frequencies is plugged in (see Horne & Baliunas 1986; Schwarzenberg-Czerny 1998; Cumming 2004, as well as Süveges 2014 and Süveges et al. 2015 for a critical analysis of this approach). Finally, another family of approaches is based on Monte Carlo or bootstrap techniques (Paltani 2004; Schwarzenberg-Czerny 2012), sometimes aided by results from extreme-value distributions (Süveges 2014; Süveges et al. 2015).

In the large majority of cases, however, the noise in RV residuals is not white, but colored and with an unknown power spectral density (PSD). Providing reliable FAP estimates in this situation remains, to our knowledge, an open problem. The assumption that the noise PSD is flat leads logically to increased false alarms in the PSD bumps, and to a loss of detection power in the PSD valleys (e.g., see Fig. 2 in Sulis et al. 2016). This problem is only partially solved in practical approaches where a covariance model is fitted to the data and used to produce residuals that are assumed to be white. For instance, Delisle et al. (2020) recently presented an analytical formulation of the p-value resulting from the generalization of Baluev (2008) to the case of colored noise when the covariance matrix is known. Their results indicate that the FAP estimates are highly dependent on the considered covariance matrix, with variations directly related to the mismatch between the true and assumed covariance matrix. Similar effects were analyzed in Sulis et al. (2016) and in Sect. 3.5 of Sulis (2017). To our knowledge, all methods aimed at evaluating the FAP or p-values associated with periodogram tests assume that the noise is eventually white, either directly or because the model used for the covariance is considered exact, so that the signal can be whitened. Since estimation errors in the noise statistics lead to fluctuations in the p-value estimates, our incomplete knowledge of the noise sources and their statistics represents a fundamental limitation to how much we can trust these estimates. The present work deals precisely with this problem. Another critical point is the consistency of the p-value estimate when different noise models are used in the detection process (this point was very clearly raised by Rajpaul et al. 2016, for instance). The controversy about a low-mass planet detection often resides in the different noise models that are assumed for the data. Because i) noise models can never be exact and ii) the estimated p-values are model dependent, incorporating model errors in the estimation of these values appears to be a relevant (but new) approach, and we follow this path below.

In this paper, we propose a new RV planet detection procedure that can generate reliable estimates of the significance levels of detection tests by means of p-values. The approach is built on a previous study (Sulis et al. 2020), which was limited to the case of regularly sampled time series and primarily considered the effect of granulation noise. Both of these limitations are removed in the present paper.

The approach is flexible and can deal with the main characteristics that are encountered in practice with RV detection: irregular sampling and various noise sources with partially unknown noise statistics. The basic principle of the procedure is to propagate errors on the noise model parameters to derive accurate p-values of detection tests by means of intensive Monte Carlo simulations. The choice of the noise models involved in the procedure is entirely left to the user, as is the choice of the periodogram and detection test. We note that without loss of generality, the procedure can be applied to an RV dataset resulting from any extraction process (including, e.g., the extraction techniques recently described in Collier Cameron et al. 2021 or Cretignier et al. 2021). In addition, the procedure can exploit training time series of stellar or instrumental noise, if these data are available, for instance, through auxiliary observations or realistic simulations.

The core of the new detection approach is presented in Sect. 2 (algorithm 1). The numerical procedure for estimating the p-values of the test statistics produced by algorithm 1 is presented in Sect. 3 (algorithm 2). Validations of the p-value estimation procedure under different configurations are given in Sects. 4 and 5. We conclude with the application of the detection procedure to the RV data of αCenB, where an Earth-mass planet detection has been debated (Sect. 6). In this last section, we show that the proposed detection procedure can be used to evaluate the robustness of a planet detection with respect to specific model errors. Examples of how to use the algorithms we developed in this work are available on GitHub1, and practical details are given in Appendix A.

2 Semi-supervised standardized detection

Our notations are as follows: Bold small (capital) letters without subscript indicate column vectors (matrices). Vector (matrix) components are denoted by subscripts. For instance, we write vector x as x = [x1,…xN]T. We denote by  the estimate of a quantity z. Throughout the paper, the notation ∣ means “conditional on” (and it is not necessarily used for probability densities). Appendix B summarizes the main notations of this work.

the estimate of a quantity z. Throughout the paper, the notation ∣ means “conditional on” (and it is not necessarily used for probability densities). Appendix B summarizes the main notations of this work.

2.1 Hypothesis testing

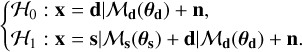

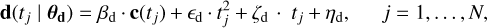

We consider an RV time series under test, x, irregularly sampled at N time instants t = [t1, t2,…, tN]⊤. Our detection problem can be cast as a binary hypothesis problem of the form

(1)

(1)

The signal d accounts for various effects that typically affect RV data. A nonexhaustive list of such effects includes long-term instrumental trends, stellar magnetic activity, or RV trends due to an additional companion in the system. This nuisance signal, which is the unknown mean under ℋ0, cannot be estimated by numerical simulations. While d can be of random origin, it is customary to model it as a deterministic signature (see, e.g., Dumusque et al. 2012). This is our approach in the following. We refer to d as a nuisance signal and write generically that d is generated from some model ℳd with parameters θd.

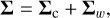

The noise n ~ N(0, Σ) is a zero-mean stochastic noise of unknown covariance matrix Σ that we decompose into

(2)

(2)

with Σw a diagonal covariance matrix whose entries account for possibly different uncertainties on the RV measurement. If they are equal,  , with

, with  the variance of a WGN (noted w below), and Σc the unknown covariance matrix of a colored noise We note that, in this paper, Σc is considered fixed. Temporal variations of Σc such as those considered by Baluev (2015) might in principle be considered in our numerical procedure by sampling according to a time-dependent covariance structure, but this is beyond the scope of this paper. A nonexhaustive list of effects that can be included in n entails meridional circulation, magnetic flux emergence, stellar granulation, supergranulation, and instrumental noise. The choice of which type of RV noise component fits in d or n depends on the assumptions made by the user about the RV time series under test.

the variance of a WGN (noted w below), and Σc the unknown covariance matrix of a colored noise We note that, in this paper, Σc is considered fixed. Temporal variations of Σc such as those considered by Baluev (2015) might in principle be considered in our numerical procedure by sampling according to a time-dependent covariance structure, but this is beyond the scope of this paper. A nonexhaustive list of effects that can be included in n entails meridional circulation, magnetic flux emergence, stellar granulation, supergranulation, and instrumental noise. The choice of which type of RV noise component fits in d or n depends on the assumptions made by the user about the RV time series under test.

Under the alternative hypothesis (one or more planets are present), time series x contains the same noise components d and n, plus a deterministic signal s corresponding to the Keplerian signatures of planets (model ℳs). The number of planets and their Keplerian parameters θs are unknown.

Throughout this paper, we consider the optional case in which context-driven data of the stochastic colored noise n in Eq. (1) can be made available through realistic simulations, for instance. We call these data the null training sample (NTS). By construction, this NTS is free of any possible contamination by the planetary signal.

For example, focusing on the stellar granulation noise, Sulis et al. (2020) showed that 3D magnetohydrodynamical (MHD) simulations can generate realistic synthetic time series of this RV noise source (see also Appendix C). This was also demonstrated in Palumbo et al. (2022) with synthetic stellar spectra simulators. This suggests that using simulations of stellar granulation in the detection process could provide an alternative to the observational strategy of binning spectra over a night (Dumusque et al. 2011). Meunier et al. (2015) and Meunier & Lagrange (2019) showed that while convection (granulation and supergranulation) dominates at high frequencies, where it acts as a power-law noise (~1/f), convection has energy at all frequencies, so that averaging on short timescales is not efficient enough to detect RV signatures of Earth-like planets that are hidden in the convection noise.

Other examples in which NTS can be obtained are 3D MHD simulations of stellar supergranulation (e.g., Rincon & Rieutord 2018), empirical simulations of stellar meridional flows (Meunier & Lagrange 2020), or specific data-driven observations representative of the instrumental noise (e.g., microtelluric lines; Cunha et al. 2014 Seifahrt et al. 2010) affecting the RV data. In general, all realistic synthetic time series of the stochastic colored noise sources affecting the RV data can be included in the NTS.

This setting poses the general question how this NTS might be leveraged to improve the detection process, both in terms of detection power and of the accuracy of the computed p-value. This approach was proposed and analyzed in Sulis et al. (2017a), but with d = 0 and a regular sampling for x in model (1). In this approach, the detection test was automatically calibrated with the NTS through a standardization of the periodogram. While this preliminary work has demonstrated improved performances in terms of power and control of the p-value, it presents two important limitations for RV detection purposes. First, the approach accounts only for noise that can be generated through realistic simulations. Second, the scope was limited to regular sampling. In contrast, the detection procedure proposed below can cope with all components of noise, including instrumental and stellar noises of different origins (d ≠ 0) and any type of time sampling. This general procedure is semi-supervised in the sense that it incorporates ancillary sources of noise that affect the data. Because it is also based on a periodogram standardization, we call this procedure semi-supervised standardized detection (3SD for short). The 3SD procedure contains two algorithms: one for the detection, and one for evaluating the resulting significance levels.

2.2 Periodogram standardization

2.2.1 An NTS is available

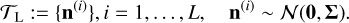

We assume that the NTS, if available, takes the form of L sequences of synthetic time series of noise n in Eq. (1),

(3)

(3)

An efficient way to use this side information in the detection process is to compute standardized periodograms (see Sulis et al. 2020). Let Ω denote the set of frequencies at which the periodogram, noted p, is computed (Ω and the type of periodogram P are defined by the user). We note vk these frequencies and NΩ their number. The periodograms of each of the L series of the NTS, pℓ(vk), with ℓ = 1,…, L can be used to form an averaged periodogram,  , as the sample mean of these L periodograms,

, as the sample mean of these L periodograms,

(4)

(4)

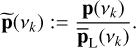

The standardized periodogram is then defined as

(5)

(5)

In the following, p and  denote the vectors concatenating the periodogram ordinates at all frequencies in Ω, and the standardized periodogram is noted

denote the vectors concatenating the periodogram ordinates at all frequencies in Ω, and the standardized periodogram is noted  or simply

or simply  .

.

In the case of regular sampling and when d = 0, Sulis et al. (2020) demonstrated that standardization avoids both a loss of detection power in valleys of the noise PSD and an increase in false alarm rate in its peaks (see Sulis et al. 2016, and Sect.3.5.3 of Sulis 2017). An important point of the present paper is to show that these desirable features also hold in the case of irregular sampling for different models of d and n (see Sects. 4 and 5).

2.2.2 No NTS is available

Simulations (e.g., 3D MHD simulations of stellar granulation) are computationally demanding, and generating very long time series (months or years) may therefore appear beyond reach for standard RV planet searches. It is also worth mentioning that helioseismology has revealed that 3D MHD simulations are unable to reproduce the power of large-scale flows on the Sun (supergranulation), which means that improvements are still needed in this field of research (Hanasoge et al. 2012). For these reasons, we also consider the case in which no NTS of n in Eq. (1) is available. In this case, the periodogram standardization relies on a parametric model ℳn whose parameters are estimated from the RV data. We note that, when an NTS is available, model ℳn is not necessary for the detection process in algorithm 1. Therefore, it was not made explicit in the notation used in model (1). In this approach, synthetic time series of n are generated and used to compute the averaged periodogram used in Eq. (4). Numerous examples of stochastic parametric models exist, and a (nonexhaustive) relevant list for RV data includes Harvey-like functions (Harvey 1985), autoregressive (AR) models (Brockwell & Davis 1991; Elorrieta et al. 2019), or Gaussian processes (Rasmussen & Williams 2005).

A particular noise configuration happens when noise n is white (Σ0 = 0, leading to Σ = σ2I in Eq. (2)). In this case, the standardization of the periodogram in Eq. (5) is simply performed by the estimated variance of the RV time series.

2.3 Considered detection procedure

The detection procedure proposed in this work is described in Fig. 1. We refer to this procedure as algorithm 1 in the following.

The first step (rows 1–4) aims at computing the periodogram p involved in Eq. (5). For this purpose, if the nuisance component d is present in Eq. (1), the algorithm starts by removing it from the time series x by fitting model ℳd (blue block). The residuals are used to compute p (row 4). If d = 0 is not present in Eq. (1), then the blue block is ignored, and we directly compute p with the data under test (x).

In this first step, the algorithm removes the nuisance signals, and detection is performed on the residuals. A more general detection approach such as the generalized likelihood ratio (Kay 1998) would require jointly estimating all unknown parameters under both hypotheses. This approach would lead to different nuisance parameters under ℋ0 and ℋ1 because the parameters of the nuisance signals are consecutively estimated with or without those of the signal (sinusoidal or Keplerian parameters). This procedure can be computationally very expensive or even intractable, however, when a maximum likelihood estimation is required over a large number of parameters. It is therefore discarded in our framework, where nuisance parameters are estimated only under ℋ0. For weak planetary signatures, the perturbation of these signatures in the nuisance parameters is weak, so that the estimation of these parameters does not change much under both hypotheses.

The second step (rows 5–10) aims at computing the averaged periodogram  involved in Eq. (5). For this purpose, if n in Eq. (1) is a colored noise for which an NTS is available (rows 6–7), we directly take the L null training series to compute

involved in Eq. (5). For this purpose, if n in Eq. (1) is a colored noise for which an NTS is available (rows 6–7), we directly take the L null training series to compute  in row 14. If no NTS is available (rows 8–10), we fit model ℳn to the time series under test x and compute a synthetic NTS (called

in row 14. If no NTS is available (rows 8–10), we fit model ℳn to the time series under test x and compute a synthetic NTS (called  ) with the estimated model parameters

) with the estimated model parameters  . In this case,

. In this case,  in row 14 is evaluated after generating a sufficiently large number of synthetic noise time series from ℳn. If noise n is white (rows 11–13), the averaged periodogram in row 14 simply becomes the product of the estimated variance of x

in row 14 is evaluated after generating a sufficiently large number of synthetic noise time series from ℳn. If noise n is white (rows 11–13), the averaged periodogram in row 14 simply becomes the product of the estimated variance of x  by vector 1: = [1… 1]T.

by vector 1: = [1… 1]T.

The final steps of the procedure consist of computing  (row 15) and applying the detection test ⊤ (row 16). The procedure returns the test statistics t(x).

(row 15) and applying the detection test ⊤ (row 16). The procedure returns the test statistics t(x).

2.4 Periodogram and test used in this work

In order to illustrate our detection framework, we mainly consider the Lomb–Scargle periodogram here (Scargle 1982). The numerator and the denominator in Eq. (5) are therefore LSP. For the detection test, we consider the test whose statistics is the largest periodogram peak. This test is referred to as Max test for short. The Max test statistic writes

(6)

(6)

We emphasize that the 3SD detection procedure is not tied to the particular choice of the pair (periodogram and test) selected above. It can indeed be applied as such to other types of periodograms and test statistics that exploit the periodogram ordinates differently (see Sulis et al. 2016, 2017a for examples of other detection tests). Table 1 summarizes examples of input parameters that can be used in algorithm 1.

When algorithm 1 has computed the test statistics of x, the important question is to evaluate its p-value. This is the purpose of Sect. 3.

3 Numerical estimation of the p-values

For an observed test statistics t, the p-value is denoted by pv(t) and defined as

For example, when all parameters are known, the p-value of a test statistics t obtained by the Max test (6) is defined as

(7)

(7)

with  the cumulative distribution function (CDF) of the test statistics under ℋ0.

the cumulative distribution function (CDF) of the test statistics under ℋ0.

A p-value estimation procedure in which all parameters of the statistical model are known under ℋ0 is called an oracle in the statistical literature (see Donoho & Johnstone 1994; Roquain & Verzelen 2022; Mary & Roquain 2021 and references therein). We describe such an oracle procedure in Sect. 3.1.

When unknown nuisance parameters are present under ℋ0, the computation of p-values is not straightforward. A common approach that allows accounting for uncertainties in the model parameters considers the unknown parameters under ℋ0 as random and computes the expectation of p-values over the distribution of a prior parameter. This is the approach of the Monte Carlo procedure proposed in algorithm 2 (see Sect. 3.2). Connections with existing approaches are discussed in Sect. 3.3.

In practice, a desirable feature of the procedure is also that it provides a high-probability interval (e.g., 90%) for the location of the true (i.e., oracle) p-value. The Monte Carlo sampling approach of algorithm 2 naturally provides estimates of such intervals, called 90% intervals below. As a complementary p-value estimate, the highest p-value (upper envelope of the sampled p-values) obtained from the simulation can also be used as a (often overly) conservative estimate (Bayarri & Berger 2000). In practice, a large difference between the two estimates is the sign of a strong dependence of the estimation results on the considered models and parameters.

3.1 Oracle

When all parameters under the null hypothesis are known, which is an ideal situation that is not met in practice, a straightforward way for evaluating the oracle p-values numerically consists of performing algorithm 1 many times (e.g., b) to obtain a set {t(x)}j = 1,…,b of realizations of the test statistics t. From this set, we can compute an empirical estimate of  , called

, called  for short, and an estimated p-value by plugging

for short, and an estimated p-value by plugging  into Eq. (7). The estimation of the p-value can be made arbitrarily accurate because

into Eq. (7). The estimation of the p-value can be made arbitrarily accurate because  converges to

converges to  as b → ∞.

as b → ∞.

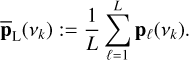

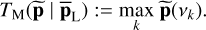

3.2 Algorithm for estimating the p-values

The generic procedure (algorithm 2) is presented in Fig. 2. The final expected p-value  is obtained by sample expectation (row 19) over B p-values

is obtained by sample expectation (row 19) over B p-values  obtained in a major loop (green, rows 1–18). Each such p-value is obtained through a minor loop (rows 5–16) that measures an empirical CDF

obtained in a major loop (green, rows 1–18). Each such p-value is obtained through a minor loop (rows 5–16) that measures an empirical CDF  . This loop (and this estimated CDF) includes an averaging over the priors πd and πw.

. This loop (and this estimated CDF) includes an averaging over the priors πd and πw.

The major loop samples B values of the parameters for n  , which are obtained by parametric bootstrap: a sample NTS is generated according to parameters

, which are obtained by parametric bootstrap: a sample NTS is generated according to parameters  estimated from the data (rows 3 and 4). In the nested minor loop (blue), the algorithm samples a number b of test statistics t produced by algorithm 1 conditionally on

estimated from the data (rows 3 and 4). In the nested minor loop (blue), the algorithm samples a number b of test statistics t produced by algorithm 1 conditionally on  (if n is colored),

(if n is colored),  (if n is white) and on

(if n is white) and on  (if d ≠ 0 in Eq. (1)). These parameter values are obtained by randomly perturbing the corresponding input values

(if d ≠ 0 in Eq. (1)). These parameter values are obtained by randomly perturbing the corresponding input values  and

and  within reasonable uncertainties according to some prior distributions, called πw (row 10) and πd (row 13). If model ℳd involves the use of an ancillary series c, a synthetic series

within reasonable uncertainties according to some prior distributions, called πw (row 10) and πd (row 13). If model ℳd involves the use of an ancillary series c, a synthetic series  is generated in row 14 using the same model parameters

is generated in row 14 using the same model parameters  plus a WGN.

plus a WGN.

Each test statistics is then obtained by applying algorithm 1 to simulated time series x{i,j} (row 16). If n is colored, algorithm 1 takes as input the L simulated NTS  (computed in row 7) for periodogram standardization. If n is white, this vector is sent as empty in algorithm 1.

(computed in row 7) for periodogram standardization. If n is white, this vector is sent as empty in algorithm 1.

A particular case of this algorithm arises when the noise is considered colored with a parametric model ℳn and no NTS is available. This case impacts the setting of L in rows 3 and 7 and is explained in Sect. 5.5.

For πw and πd, we consider Gaussian and uniform priors (with independent components if multivariate) in the simulations below. The component distributions are centered on the parameter values estimated from the data. The scale parameters (widths of the intervals for uniform priors or variances for the Gaussian prior) are estimated from the estimation error bars.

3.3 Connection with the literature

In the statistical literature, several approaches exist for estimating p-values when unknown parameters are involved in the detection process (see Bayarri & Berger 2000 for a review). In these approaches, prior predictive p-values are obtained by averaging over a prior law of the parameters (Box 1980). In contrast, posterior predictive p-values require averaging over the posterior distribution (Guttman 1967; Rubin 1984). Sampling from the posterior would require a computationally involved procedure (e.g., with a Markov chain Monte Carlo method). For this reason, we do not follow the posterior predictive approach here and aim at keeping the sampling process simple. In our approach, this process is different depending on the parameters we consider. We list the different cases below.

For the parameters of ℳn (row 4 of algorithm 2), which correspond to a parametric model of a random process, a natural way of sampling parameters over a distribution that is consistent with our model is to sample the random process with the estimate at hand and to re-estimate the parameters. This is what we do here. This approach also means that we do not have to choose an explicit prior for θn, the parameters of which would need to be set or estimated.

For the other unknown parameters σw and θd in rows 10 and 13 of algorithm 2, we did not use the same strategy for computational reasons. Here, we found it more efficient to sample these parameters from some priors (Gaussian and uniform), whose parameters were estimated from the estimated error bars in an empirical Bayes manner (Efron & Hastie 2016).

The performances of the generic algorithm 2 are evaluated on real and synthetic data in the next three sections.

4 Noise is granulation and WGN

4.1 Objectives

We first consider the simplest case in which the RV time series is only affected by the stochastic colored noise n in Eq. (1), of which a training set τL is assumed to be available (i.e., we set d = 0 in Eq. (1), and ℳd = 0 in algorithms 1 and 2). While this configuration is not realistic for most long-period planet RV searches, where magnetic activity phenomena (defined in d) always play an important role in the observed RV time series, it can be useful for detecting low-mass ultra-short period planets (USP, defined with periods < 1 day). This has been discussed in Sulis et al. (2020). In addition to this very specific case, this section has two goals. The first goal is pedagogical because we illustrate the principle of the detection procedure with the simplest case. The second goal is to validate the proposed p-value estimation procedure when the NTS is composed with MHD simulations of stellar granulation and for irregular sampling. So far, this validation has only been performed for a regular sampling, in which case the FAP and p-values can be computed with analytical expressions (Sulis et al. 2020).

4.2 Validation of the 3SD procedure on solar data

4.2.1 Data, MHD simulations, and sampling grids

The validation of the procedure on real data poses two difficulties. First, establishing a ground-truth reference for the p-value that would be obtained with an NTS composed of genuine stellar convection noise requires implementing the oracle procedure (see Sect. 3.1), which in turn requires a very large number of genuine stellar noise time series. Fortunately, the GOLF/SoHO spectrophotometer has made 25-yr-long time series of solar RV observations available that can be used (e.g., see Appourchaux et al. 2018). Second, in this section, we focus on granulation and instrumental noise. We therefore need to disregard the low-frequency part of the noise PSD that is dominated by the magnetic activity. For this purpose, the detection test is applied to frequencies ν > 56 µHz alone because at frequencies below 56 the PSDs of solar data and MHD simulations start diverging (see Sulis et al. 2020 and Appendix C).

Because GOLF observations cover a very long period of time and because the timescales characteristic for granulation are shorter than a day, we divided the total time series into smaller series and focused on this short-timescale solar variability alone. We selected b = 1410 two-day-long series from the ≈3000 available time series taken during the first 17 yr of GOLF observations (the last years are particularly affected by detector aging).

These time series are regularly sampled (Nreg = 2880 data points) at a sampling rate Δt = 60s. As detailed in Sulis et al. (2020), we filtered out the high-frequency p-modes on each two-day-long series (with periods < 15 min because they are not reproduced by the MHD simulations), and we added a WGN with variance  estimated from the high-frequency plateau visible in the periodogram of the GOLF data (see Appendix C). The resulting dataset constitutes a large set of noise time series from which the oracle reference results can be computed.

estimated from the high-frequency plateau visible in the periodogram of the GOLF data (see Appendix C). The resulting dataset constitutes a large set of noise time series from which the oracle reference results can be computed.

For the implementation of algorithm 2, the available NTS is composed with a 53-day-long noise time series obtained from 3D MHD simulations of solar granulation (Bigot et al., in prep.). After splitting them into two-day-long time series, 26 granulation noise time series are available, of which we randomly selected L = 5 for the standardization. Before running algorithm 2, we applied the same corrections as for the GOLF data to the NTS obtained from MHD simulations (T5) (i.e., filtering of the stellar acoustic modes and addition of an instrumental WGN). The NTS was finally sampled as the observations under test (see the three sampling grids detailed below).

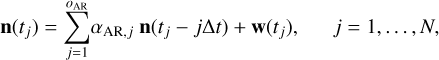

Algorithm 2 also relies on parameters that set the size of the Monte Carlo simulations, B and b (taken here as B = 100 and b = 104) and on model ℳn with associated parameters  . We chose an AR model because it suits our MHD NTS time series well and its parameters are fast to estimate. An AR process is defined as (Brockwell & Davis 1991)

. We chose an AR model because it suits our MHD NTS time series well and its parameters are fast to estimate. An AR process is defined as (Brockwell & Davis 1991)

(8)

(8)

with n(tj) the sample of n at time instant tj, αAR,j ∈] −1; 1[the AR coefficients filter, oAR the AR order, Δt the sampling time step, and w(tj) the sample at time tj of a WGN series w of variance  . The model parameters

. The model parameters  are the AR order and the filter coefficients. The order was selected using Akaike’s final prediction error criterion (Akaike 1969) and led to a value of 10, which remained fixed for the remaining procedure. The filter coefficients (estimated B × b + 1 times) were obtained through the standard Yule-Walker equations (Brockwell & Davis 1991).

are the AR order and the filter coefficients. The order was selected using Akaike’s final prediction error criterion (Akaike 1969) and led to a value of 10, which remained fixed for the remaining procedure. The filter coefficients (estimated B × b + 1 times) were obtained through the standard Yule-Walker equations (Brockwell & Davis 1991).

For this study, we considered three temporal sampling grids. First, we considered a regular sampling grid (Δt = 10 min), where we keep only 10% of the initial GOLF series to make the number of data points N that are comparable to ordinary RV time series. Second, we considered a regular sampling grid (Δt = 60 s) affected by a large central gap: two 2.4 h continuous observations separated by a 43.2 h gap. Third, we considered a random sampling grid, in which 10% of the data points are randomly selected in the initial regular grid of the GOLF series (Δt ∈ [60, 2880] s). In each case, the sampling grid contains N = 288 data points. For the regular (irregular) sampling grids, the standardized periodogram was computed using the classical (Lomb–Scargle) periodogram. They are the same for regular sampling.

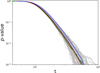

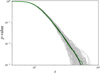

4.2.2 Validation of algorithm 2 on solar data

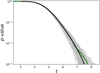

The p-value estimation procedure provided by algorithm 2 results in B = 100 estimates  shown in gray in the top panels of Fig. 3. The average p-value

shown in gray in the top panels of Fig. 3. The average p-value  is shown in black and the p-value of the oracle procedure based on real stellar noise in green. To compute this oracle, we ran algorithm 1 with 1420 GOLF time series that we further permuted ten times by blocks. This led to an effective number of b = 14200 series. To compute each realization of the test, we randomly selected one series to compute the periodogram in row 4 of algorithm 1 and L = 5 other series to compute the averaged periodogram in row 14.

is shown in black and the p-value of the oracle procedure based on real stellar noise in green. To compute this oracle, we ran algorithm 1 with 1420 GOLF time series that we further permuted ten times by blocks. This led to an effective number of b = 14200 series. To compute each realization of the test, we randomly selected one series to compute the periodogram in row 4 of algorithm 1 and L = 5 other series to compute the averaged periodogram in row 14.

The beam of the gray curves represents particular estimates of the unknown relation pv(t) of algorithm 1, with parameters that are consistent with the NTS at hand. The true p-value of this procedure is unknown (for irregular sampling) because evaluating it exactly would require an infinite number of MHD simulations. If the simulations performed in algorithm 2 sample the space of parameters correctly, the truth is likely to lie somewhere in these curves, perhaps close to the mean where the bunch of curves is located.

A comparison of the black and green curves shows that for the three considered sampling grids the approach based on MHD simulations is equivalent to using true stellar noise on average.

This shows that while algorithm 2 only has a reduced number (L = 5) of synthetic time series for the NTS at hand, it can generate a beam of situations whose mean succeeds in evaluating the true p-value of a standardized detection procedure supervised by genuine stellar noise.

We note a slight mismatch in the middle and right panel that probably arises because our ground-truth (GOLF) data contain some effects that were not accounted for in the p-value estimation procedure. Here, the highest p-value (upper envelope of the gray curves) provides a more conservative and more reliable estimate than the mean. The test statistics t corresponding to a mean p-value of 1% are 28.3, 33.6, and 33.5 for the three samplings. For these values, the simulations show that the p-value has an empirical probability of 90% to lie in the range [0.4%, 1.6%], [0.5%, 1.5%], and [0.6%, 1.5%] (panels in the middle row of Fig. 3). At these t values, the oracle p-values correspond to 1.2%, 1.8%, and 1.3%.

Because MHD simulations are made to represent stellar granulation noise with high accuracy, the good match observed in each case between the mean p-value obtained by algorithm 2 and the oracle based on true stellar noise suggests that the true p-value is close to the sample average. We also note that in the case of regular sampling, for which the p-value expression is known and given in Eq. (13) of Sulis et al. (2020), theory, the averaged estimate from algorithm 2, and the oracle with true stellar noise (panel a) match well.

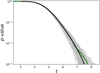

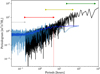

Finally, the bottom panels show the histograms of the distribution of the frequency index in which the maximum of the standardized periodogram was found during the iterations of algorithm 2 of the 3SD procedure (gray) and oracle (green). We show the same distribution, but for the (nonstandardized) LSP in blue, that is, a procedure that would apply the Max test to p instead of  in algorithm 1. Because the noise is colored, the LSP tends to peak at lower frequencies, where the PSD has higher values (see Fig. C.1). Colored noise inexorably causes an increased rate of false alarms at these frequencies, but this effect cannot be quantified or even suspected by the user when the noise PSD is unknown. In contrast, the standardization used in Eq. (5) leads to an autocalibration of the periodogram ordinates, which leads to a more reasonable (uniform) distribution of the frequency index of the maximum peak. These results show that the 3SD procedure is well calibrated and algorithm 2 succeeds in estimating the resulting p-value correctly. Because the distribution of the test statistics is very similar for data that are standardized with true noise and for data that are standardized by means of MHD noise time series, these results also demonstrate that the MHD simulations can efficiently be exploited in place of a real noise NTS for a periodogram standardization.

in algorithm 1. Because the noise is colored, the LSP tends to peak at lower frequencies, where the PSD has higher values (see Fig. C.1). Colored noise inexorably causes an increased rate of false alarms at these frequencies, but this effect cannot be quantified or even suspected by the user when the noise PSD is unknown. In contrast, the standardization used in Eq. (5) leads to an autocalibration of the periodogram ordinates, which leads to a more reasonable (uniform) distribution of the frequency index of the maximum peak. These results show that the 3SD procedure is well calibrated and algorithm 2 succeeds in estimating the resulting p-value correctly. Because the distribution of the test statistics is very similar for data that are standardized with true noise and for data that are standardized by means of MHD noise time series, these results also demonstrate that the MHD simulations can efficiently be exploited in place of a real noise NTS for a periodogram standardization.

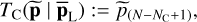

Finally, we carried out a similar validation of the output p-values, but for another pair (periodogram and test). We selected the GLS periodogram (Zechmeister & Kürster 2009) and the test statistics of the  maximum periodogram value, defined as

maximum periodogram value, defined as

(9)

(9)

with NC ∈ ℕ+ a parameter related to the number of periodic components, and  the kth-order statistic of

the kth-order statistic of  . We note that test TC may be more discriminative against the null than the Max test

. We note that test TC may be more discriminative against the null than the Max test  in Eq. (6) for multiple periodic components, as discussed by Chiu (1989) and Shimshoni (1971). The p-values resulting from algorithm 2 computed for NC = 10 in Eq. (9) and for the random sampling grid defined in Sect. 4.2.1 are shown in Fig. 4. These results illustrate that the reliability of the procedure is not tied to the pair (LSP and Max test).

in Eq. (6) for multiple periodic components, as discussed by Chiu (1989) and Shimshoni (1971). The p-values resulting from algorithm 2 computed for NC = 10 in Eq. (9) and for the random sampling grid defined in Sect. 4.2.1 are shown in Fig. 4. These results illustrate that the reliability of the procedure is not tied to the pair (LSP and Max test).

|

Fig. 3 Validation of the 3SD approach for a noise n composed of granulation plus WGN and d = 0 in Eq. (1). We investigate three temporal sampling grids, from left to right: regular, regular with a central gap, and random. Top panels: relation pv(t) for the detection procedure using a genuine NTS based on GOLF solar data (green; oracle). The B = 100 estimates |

5 Noise is magnetic activity, long term trends, granulation and WGN

5.1 Setting and objectives

In practice, many stellar activity processes affect the RV observations (Meunier 2021), and an NTS is not available for all of them. This is the case, for example, of stellar magnetic activity such as starspots and plages. We present a more general application of the 3SD procedure, in which classical techniques that are used to correct for the stellar variability of magnetic sources are coupled with the use of an NTS. We also investigate the case in which no NTS is available at the end of the section.

In this section, we consider as an illustration a nuisance signal d in Eq. (1) that encapsulates a magnetic signature that is modulated with the stellar rotation period plus long-term trends that are modulated with the stellar cycle. Numerous techniques have been developed to estimate the contribution of magnetic activity to RV data (e.g., see Ahrer et al. 2021). We assume that an estimate of the noise activity signature has been obtained by one of these methods in the form of an ancillary time series for d. This ancillary time series is assumed to be the log  activity indicator in the following (but other indicators can be used as well, see Table 1).

activity indicator in the following (but other indicators can be used as well, see Table 1).

The noise component n in Eq. (1) is composed of correlated granulation noise plus WGN. An NTS of n composed with L simulated RV time series obtained from MHD simulations is assumed to be available, except in the last part of this section, in which we relax this assumption.

The remaining section contains four parts. The first two parts describe the synthetic dataset with the computation of oracle p-values, and the validation of the p-value estimation algorithm with the impact of the priors on the computed p-values. In the third part, we study improvements that can be achieved by using generalized extreme values (GEV) to speed up the Monte Carlo simulations in algorithm 2. In the last part, we consider the particular (but practical) case in which no NTS is available for n in Eq. (1).

|

Fig. 4 Validation of algorithm 2 using the GLS periodogram and test TC in Eq. (9). Oracle is shown in green, p-value estimates from algorithm 2 are shown in gray, and their mean value is plotted in black. |

5.2 Synthetic RV time series and oracle p-values

We first created synthetic RV time series used to (i) generate an RV test time series that was later used in the input of algorithm 1, and (ii) compute the oracle p-values that were later used to evaluate the accuracy of the p-value estimates derived from algorithm 2. These time series entail magnetic, granulation, and instrumental noises generated as described below.

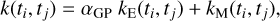

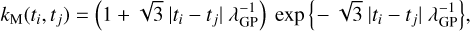

Component d was obtained as the realization of a Gaussian process (GP; Rasmussen & Williams 2005) of kernel

(10)

(10)

with kE and exponential sine square kernel defined as

(11)

(11)

and kM a Matérn-3/2 kernel defined as

(12)

(12)

with {ti, tj} the time coordinates at indexes i, j = 1,…, N. The hyperparameters of kernel k in Eq. (10) were set to αGP = 2, ΓGP = 22 is the scale parameter, PGP = 25 days is the rotation period, and λGP = 1.609 is the characteristic length scale. This GP was implemented using the GEORGE Python package2 (see Ambikasaran et al. 2015 and online documentation3 to find Eqs. (11) and (12)). Similar equations for kernels (11) and (12) can also be found in Eq. (8) of Espinoza et al. (2019) and Eq. (4) of Barragán et al. (2022), for instance.

This GP model with the same parameters was also used to compute the synthetic ancillary time series c: = [c(t1),…, c(tN)]⊤, to which we added a WGN.

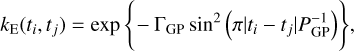

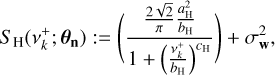

For component n, we created a stochastic colored noise time series with a Harvey function and a WGN. The power spectral density of this noise model has the form (Harvey 1985; Meunier et al. 2015)

(13)

(13)

where the vector θH = [aH, bH, cH]⊤ collects the amplitude, characteristic frequency![Mathematical equation: ${{\bf{\theta }}_{\rm{H}}} = {\left[ {{a_{\rm{H}}},{b_{\rm{H}}},{c_{\rm{H}}},\sigma _{\rm{w}}^2} \right]^ \top }$](/articles/aa/full_html/2022/11/aa42486-21/aa42486-21-eq67.png) , and power of the Harvey function, and

, and power of the Harvey function, and  is the variance of the WGN. Notation

is the variance of the WGN. Notation  means that positive frequencies are considered. We calibrated the parameter vector θH using a fit of the available MHD RV time series (see Sect. 4). In this case and in the following, we used the lmfit Python package (Newville et al. 2014), which makes nonlinear optimizations for curve-fitting problems. This choice was made for simplicity and because the algorithm is fast. For practical RV analyses, the user can optimize the fitting procedure to use a proper Markov chain Monte Carlo estimation procedure, for example.

means that positive frequencies are considered. We calibrated the parameter vector θH using a fit of the available MHD RV time series (see Sect. 4). In this case and in the following, we used the lmfit Python package (Newville et al. 2014), which makes nonlinear optimizations for curve-fitting problems. This choice was made for simplicity and because the algorithm is fast. For practical RV analyses, the user can optimize the fitting procedure to use a proper Markov chain Monte Carlo estimation procedure, for example.

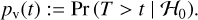

Synthetic time series with a PSD that is exactly given by Eq. (13) can be simulated with the Fourier transform (FT). To compute a synthetic time series from Eq. (13), we generated a WGN w ~ N(0,1), computed the fast FT of w, performed a point-wise multiplication with  , and finally took the real part of the inverse FT. An example of a synthetic ten-daylong time series obtained using models (10) and (13) is shown in Fig. 5 (black). From this time series, we created a large central gap between day 2 and day 8 in the time-sampling grid, and we randomly selected N = 288 data points in the two remaining intervals (gray). This synthetic RV time series is the series that we test in the next three subsections.

, and finally took the real part of the inverse FT. An example of a synthetic ten-daylong time series obtained using models (10) and (13) is shown in Fig. 5 (black). From this time series, we created a large central gap between day 2 and day 8 in the time-sampling grid, and we randomly selected N = 288 data points in the two remaining intervals (gray). This synthetic RV time series is the series that we test in the next three subsections.

To compute the oracle procedure (which has knowledge of the exact parameters that were used to generate the synthetic time series, i.e., θH in Eq. (13) and [αGP, ΓGP, PGP,λGP] in Eq. (10)), we generated 104 synthetic noise time series as described above, and we ran algorithm 1 for each of the series. The resulting oracle ground truth is shown in green in Figs. 6–8 (all panels).

5.3 Validation of algorithm 2

We applied the 3SD procedure on one of the synthetic time series. For this purpose, we assumed a realistic situation in which the noise models ℳd and ℳn that are used as inputs of algorithms 1 and 2 are different from the models that are used to generate the synthetic RV dataset in Sect. 5.2.

Following an example given in Dumusque et al. (2012) and Ahrer et al. (2021), we used for ℳd a simple parametric model4 composed of a linear combination of c and three low-order polynomials,

(14)

(14)

with θd = [βd, ϵd, ζd, ηd]⊤ the corresponding parameter vector.

For the available NTS  , we selected the L = 5 MHD time series described in Sect. 4.2.1. Using the LSP for P, the Max test for T,

, we selected the L = 5 MHD time series described in Sect. 4.2.1. Using the LSP for P, the Max test for T,  , the model (14) for ℳd, no model ℳn, and the synthetic ancillary series c, we then computed algorithm 1 and obtained a test value t.

, the model (14) for ℳd, no model ℳn, and the synthetic ancillary series c, we then computed algorithm 1 and obtained a test value t.

To compute algorithm 2, the inputs parameters (P , T), ℳd, and c were the same as for algorithm 1. For the additional inputs, we took the result from row 2 of algorithm 1 to obtain  , the vector of (four) parameters estimates, along with its scale parameter

, the vector of (four) parameters estimates, along with its scale parameter  . We selected a uniform prior πd for the noise parameters with interval widths set to twice the estimated standard error. We selected an AR model (8) for model ℳn (as in Sect. 4.1), and we fit this model to the NTS to obtain the estimated parameters

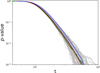

. We selected a uniform prior πd for the noise parameters with interval widths set to twice the estimated standard error. We selected an AR model (8) for model ℳn (as in Sect. 4.1), and we fit this model to the NTS to obtain the estimated parameters  . We finally set up the size of the Monte Carlo simulations to B = 100 and b = 1000. The p-value estimates resulting from algorithm 2 are shown in the left panel of Fig. 6. When they are compared with the oracle p-values, algorithm 2 is shown to provide reliable p-value estimates at any t for colored noise and activity.

. We finally set up the size of the Monte Carlo simulations to B = 100 and b = 1000. The p-value estimates resulting from algorithm 2 are shown in the left panel of Fig. 6. When they are compared with the oracle p-values, algorithm 2 is shown to provide reliable p-value estimates at any t for colored noise and activity.

As a final validation step of algorithm 2, we finally examined the impact of the priors πd (involved in row 13) on the derived p-values. The results are shown in Fig. 7. This example shows a weak impact, with an accurate estimation for any t in almost all cases. At the extreme of 10 σ for the perturbation interval width, the p-value estimate becomes more conservative: at a given value of t, the estimated p-value is biased upward (blue curve). In practice, the influence of the chosen priors on the estimated p-values can always be confirmed by a simulation study of this type.

|

Fig. 5 Example of the synthetic time series generated to validate the 3SD approach when noise equals magnetic activity plus long-term trends, granulation, and WGN. Top: averaged periodograms of the two-day GOLF solar observations used in Sect. 2.4 (green) and the associated MHD training data (red), Harvey model fit to the MHD PSD (yellow), and averaged periodogram of the final synthetic data (GP+Harvey, black). Bottom: example of synthetic RV data generated with a regular sampling (black) based on a GP noise modeling for the magnetic activity (red). For the study, the dataset was also sampled irregularly (gray). |

5.4 Generalized extreme values

Algorithm 2 does not rely on any model for the estimated CDF  of the test statistics in Eq. (6). For the estimated mean p-value to be accurate, it therefore requires the parameter b involved in algorithm 2 to be large (typically b ≥ 1000 or more). As B × b Monte Carlo simulations are involved (with B typically ≥ 100), algorithm 2 is computationally expensive. Fortunately, univariate extreme-value theory shows that the maximum of a set of identically distributed random variables follows a GEV distribution (Coles 2001). This suggests that GEV distributions can be used as a parametric model for the CDF of Eq. (6) to improve the estimation accuracy. This method was used by Süveges (2014) when only white noise is involved in model (1) (i.e., no colored noise or nuisance signal d). Sulis et al. (2017b) extended the work of Süveges (2014) to the case of colored noise. Here, we evaluate the gains that this parameterization can contribute to the bootstrap procedure for the general case of colored noise and magnetic activity signals.

of the test statistics in Eq. (6). For the estimated mean p-value to be accurate, it therefore requires the parameter b involved in algorithm 2 to be large (typically b ≥ 1000 or more). As B × b Monte Carlo simulations are involved (with B typically ≥ 100), algorithm 2 is computationally expensive. Fortunately, univariate extreme-value theory shows that the maximum of a set of identically distributed random variables follows a GEV distribution (Coles 2001). This suggests that GEV distributions can be used as a parametric model for the CDF of Eq. (6) to improve the estimation accuracy. This method was used by Süveges (2014) when only white noise is involved in model (1) (i.e., no colored noise or nuisance signal d). Sulis et al. (2017b) extended the work of Süveges (2014) to the case of colored noise. Here, we evaluate the gains that this parameterization can contribute to the bootstrap procedure for the general case of colored noise and magnetic activity signals.

The GEV CDF depends on three parameters: the location µ ∈ ℝ, the scale σ ∈ ℝ+, and the shape ξ ∈ ℝ,

![Mathematical equation: $G\left( t \right){{\rm{e}}^{ - \left[ {1 + \xi \left( {{{t - \mu } \over \sigma }} \right)} \right]{{_ + ^{ - {1 \over \xi }}}_,}}}$](/articles/aa/full_html/2022/11/aa42486-21/aa42486-21-eq79.png) (15)

(15)

with [x]+: = max(0, x). When ξ ≠ 0, G(t) in Eq. (15) is only defined for  . When ξ = 0, G(t) is derived by taking the limit at t = 0.

. When ξ = 0, G(t) is derived by taking the limit at t = 0.

In algorithm 2, the unknown parameters θGEV: = [ξ,µ,σ]⊤ can be estimated using the j = 1,…, b test statistics t(x{i,j}), for instance, by maximum likelihood. In this case, the maximization can be obtained by an iterative method (Coles 2001). This leads to i = 1,…, B GEV parameters ![Mathematical equation: ${\bf{\hat \theta }}_{GEV}^{\left\{ i \right\}}: = {\left[ {{{\hat \xi }^{\left\{ i \right\}}},{{\hat \mu }^{\left\{ i \right\}}},{{\hat \sigma }^{\left\{ i \right\}}}} \right]^ \top }$](/articles/aa/full_html/2022/11/aa42486-21/aa42486-21-eq81.png) , from which the p-value can be estimated by

, from which the p-value can be estimated by  .

.

The results of the GEV parameterization are shown in the middle and right panels of Fig. 6 (for b = 100 and b = 500). The p-value estimates resulting from the GEV approximation are still given in a fairly tight 90% interval around the oracle. Moreover, when only half of the Monte Carlo realizations are used compared to the initial b value involved in algorithm 2 (b = 500 instead of 1000), a similar precision of the p-value estimates is obtained. Hence, the GEV approximation allows us to halve the computational time of algorithm 2 for the same precision (case b = 500). Reducing the computational time by ten (b = 100) leads to larger 90% intervals at constant p-value (e.g., the interval is ~40% larger at a p-value of 1% in the right panel of Fig. 6).

|

Fig. 6 P-value estimates resulting from algorithm 1 when noise equals magnetic activity plus long-term trends, granulation, and WGN, and where an NTS is available for granulation plus WGN. The mean p-value is shown in black. The oracle, which is the same for all three panels, is shown in green. From left to right: algorithm 2 computed without the GEV, and with the GEV approximation with b = 500 and b = 100. |

|

Fig. 7 P-value estimates resulting for different choices of the priors πd in row 13 of algorithm 2. Gray and black show the results for a Gaussian prior centered on the estimated parameter values with the scale parameters taken as 3 σ, with σ the estimated standard deviation of the parameter estimates. Gray shows examples of p-values |

5.5 Algorithm 2 without NTS (practical case)

We consider now the common situation in which no NTS is available. In this case, the parameters of noise n can be estimated from the data (maybe along with the magnetic activity signal d) through a model ℳn. Standardization is then performed by generating synthetic time series according to ℳn in algorithm 1.

When no NTS is available, two steps in algorithm 2 deserve particular attention. First, in rows 3 and 4, we set L = 1 because the noise parameters estimate  of model ℳn is based on the unique time series under test x. Next, in row 7, as many synthetic time series can be generated that follow model ℳn(θn) as necessary. Hence, in principle, L → ∞. In practice, however, the choice of L is a compromise between estimation error and computation time. For the purpose of making massive Monte Carlo simulations, we used the low value L = 5.

of model ℳn is based on the unique time series under test x. Next, in row 7, as many synthetic time series can be generated that follow model ℳn(θn) as necessary. Hence, in principle, L → ∞. In practice, however, the choice of L is a compromise between estimation error and computation time. For the purpose of making massive Monte Carlo simulations, we used the low value L = 5.

As in Sect. 5.3, we applied the 3SD procedure to one of the synthetic time series described in Sect. 5.2. We first ran algorithm 1 with model ℳd such that

(16)

(16)

For model ℳn, a Harvey function (13) was used. There is no NTS and the pair (P and T) was taken as the LSP periodogram and the Max test.

To compute algorithm 2, the input parameters (P, T), ℳd, ℳn, and c are the same as for algorithm 1. For the additional inputs, we obtain  and

and  from row 2 of algorithm 1 and set πd as in Sect. 5.3. Parameters

from row 2 of algorithm 1 and set πd as in Sect. 5.3. Parameters  were obtained from row 9 of algorithm 1 based on a fit of the LSP of x (as in Dumusque et al. 2011); this implicitly assumes that the LSP provides an acceptable approximation of the true PSD). We finally set up the size of the Monte Carlo simulations as B = 100 and b = 1000. The p-value estimates resulting from algorithm 2 are shown in Fig. 8. The estimated mean p-values and true p-values match very well, similarly to the case with an NTS (Sect. 5.3, see left panel of Fig. 6). This shows that the p-value can be robustly estimated even when no NTS is available.

were obtained from row 9 of algorithm 1 based on a fit of the LSP of x (as in Dumusque et al. 2011); this implicitly assumes that the LSP provides an acceptable approximation of the true PSD). We finally set up the size of the Monte Carlo simulations as B = 100 and b = 1000. The p-value estimates resulting from algorithm 2 are shown in Fig. 8. The estimated mean p-values and true p-values match very well, similarly to the case with an NTS (Sect. 5.3, see left panel of Fig. 6). This shows that the p-value can be robustly estimated even when no NTS is available.

We anticipate, however, that the performances of detection procedures based on estimates of the covariance structure from the data will probably depend on the RV data (noise sources, data sampling, etc.). The comparison of specific cases is left to a future paper.

6 Application to RV exoplanet detection: The debated detection of αCenBb

Alpha Centauri Bb is a debated ~1.13 Earth-mass (minimum mass) planet whose detection was reported around our solar neighborhood star αCenB with an orbital period of 3.1 days (Dumusque et al. 2012). It is challenging to achieve the detection of such a small planet because αCen is a triple star and the planetary RV semi-amplitude (~0.51 m s−1) was at the limit of the instrumental precision of HARPS (~1 m s−1). The observed RV campaign contains N = 459 points, irregularly sampled during a time span of 4 yr between 2008 and 2011. The dataset contains large gaps between the observation years as a result of the visibility of the star, and small gaps that are due to the available nights.

The planet detection has been debated in a lively way: using analysis tools different from the detection paper in Hatzes (2013), using different stellar noise modeling in Rajpaul et al. (2016), and recently using a different technique of randomization inference to measure the p-value of the periodogram peak at 3.2 days by Toulis & Bean (2021).

In this section, we analyze this RV dataset as a case study for the 3SD procedure. The objective of the second part (Sect. 6.2) is also to demonstrate that algorithm 2 offers the possibility of investigating the effects of model errors on the frequency analysis and on the estimated p-values. The purpose is not to argue for or against a chosen noise model, but to study the effects of model mismatch. We first present the results of the 3SD procedure computed with the noise models involved in the detection paper (Dumusque et al. 2012). We then analyze the robustness of the results using a different noise model.

|

Fig. 8 Same as Fig. 6, but for a practical case without NTS for n in Eq. (1). In this case, the covariance structure of the stochastic noise source is estimated from the data with model ℳn given in Eq. (13). |

6.1 3SD procedure with models from the detection paper

In short, the initial model ℳd described in the detection paper (Dumusque et al. 2012) contains three noise components. First, it contains the binary contribution (mB) of αCen A, modeled with a second-order polynomial (three free parameters). Second, it contains the magnetic cycle, modeled with the linear relation of Eq. (16) and  (for which periods < 40 days have been filtered out) taken as ancillary series (mC, one free parameter). Third, it contains the magnetic (rotation modulated) activity (mM), modeled as the sum of sine and cosine waves fitted at the stellar rotation period (~37 days) and its first four harmonics. Because of the stellar differential rotation, the authors fitted each of the 4 yr of observations individually to allow the stellar rotation period estimate to vary slightly from year to year. They also used a different number of harmonics for each of the four RV subsets. We refer to the supplementary material’ of Dumusque et al. (2012) for the global model that is fit to each of the four RV subsets (see their Eqs. p.7). In total, mM contains 19 free parameters.

(for which periods < 40 days have been filtered out) taken as ancillary series (mC, one free parameter). Third, it contains the magnetic (rotation modulated) activity (mM), modeled as the sum of sine and cosine waves fitted at the stellar rotation period (~37 days) and its first four harmonics. Because of the stellar differential rotation, the authors fitted each of the 4 yr of observations individually to allow the stellar rotation period estimate to vary slightly from year to year. They also used a different number of harmonics for each of the four RV subsets. We refer to the supplementary material’ of Dumusque et al. (2012) for the global model that is fit to each of the four RV subsets (see their Eqs. p.7). In total, mM contains 19 free parameters.

The sum of components mB, mC, and mM forms the noise component d in model (1). The authors assumed that component n in (1) is uncorrelated and there is no NTS.

We applied the 3SD procedure to the RV data of αCenB with the following setting for algorithm 1:

the Lomb–Scargle periodogram as P,

the Max test as T,

the set of linearly sampled frequencies as Ω: = [9.4 × 10−4, 215.8] (with Δν = 1.88 × 10−3 µHz, and NΩ = 114750 frequencies in total),

no training time series or parametric model for the stochastic noise component n

,

,the model defined in Dumusque et al. (2012) for component d, recalled above as ℳd,

the ancillary time series c as the 40-days-filtered

time series available from the data release of Dumusque et al. (2012).

time series available from the data release of Dumusque et al. (2012).

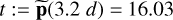

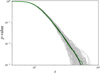

In row 11 of algorithm 1, we obtained a standard deviation  of the data residuals of 1.23 m s−15, in agreement with the value given in Dumusque et al. (2012). The standardized periodogram computed in row 14 is shown in Fig. 9. The algorithm computed a test value t = 16.03 (peak at 3.2 days).

of the data residuals of 1.23 m s−15, in agreement with the value given in Dumusque et al. (2012). The standardized periodogram computed in row 14 is shown in Fig. 9. The algorithm computed a test value t = 16.03 (peak at 3.2 days).

We then ran algorithm 2 to evaluate the p-value of t with same inputs as algorithm 1 that are listed above. In addition, we plugged into algorithm 2 a number of Monte Carlo simulations of B = 100 and b = 1000, a Gaussian prior for πw with a standard deviation of 0.04 m s−1 (1σ estimated error bar), and a uniform prior πd with a 1σ interval width on each of the 23 free parameters of model ℳd.

The t values corresponding to mean p-values of 10, 1, and 0.1% are shown with solid green, blue, and red curves, respectively, in Fig. 9. The 90% intervals are shown with the corresponding colored shaded regions. The value  corresponds to an estimated mean p-value of 0.47% with a 90% interval of [0.1%, 0.7%]. We confirmed that these results are reasonably stable with respect to the prior model πd for the ℳd model parameters in row 13 of algorithm 2 (e.g., a Gaussian instead of a uniform prior with interval widths set to 1σ standard error leads to

corresponds to an estimated mean p-value of 0.47% with a 90% interval of [0.1%, 0.7%]. We confirmed that these results are reasonably stable with respect to the prior model πd for the ℳd model parameters in row 13 of algorithm 2 (e.g., a Gaussian instead of a uniform prior with interval widths set to 1σ standard error leads to  (16.03) = 0.43% with [0.1%, 0.7%] 90% interval).

(16.03) = 0.43% with [0.1%, 0.7%] 90% interval).

Dumusque et al. (2012) assumed that the data residuals (without a planet) are white. While the p-value evaluation procedure used in this paper was not specified, we assume that they used the classical bootstrap technique in which the p-values are evaluated by shuffling the dataset and keeping the observing time dates constant. The t values corresponding to the p-values of 10, 1, and 0.1% computed with this classical method are shown by dashed lines for comparison. The largest periodogram peak at 3.2 days lies in the region of p-values below 0.1%, which is consistent with the result of the publication paper (a p-value of 0.02%).

We conclude from this analysis that if the model assumed by Dumusque et al. (2012) is accurate, the 3.2 days peak is associated with a mean p-value of 0.47%, which is small but nevertheless about 23 times larger than the 0.02% p-value estimate given in the detection paper. In general, the p-value derived without taking the errors on the noise model parameters into account is underestimated.

|

Fig. 9 Standardized Lomb–Scargle periodogram of the a CenB RV residuals. The noise model ℳd used to create these residuals is taken from Dumusque et al. (2012). p-values estimated by a classical bootstrap procedure (see text) are shown with the dashed lines. Mean p-values derived with algorithm 2 are shown with solid lines, and their credibility interval with the shaded regions. The peak at 3.2 days is shown by the gray vertical arrow. |

|

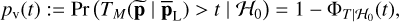

Fig. 10 Impact of noise model errors on the significance levels. Left: Lomb–Scargle periodogram of the α CenB RV residuals. The peak at 3.2 days is shown by the gray vertical arrow. Solid lines are the mean p-values estimated without a model error. The p-value at t: = p(3.2 d) = 24.48 is within the 90% interval [0.1%, 0.7%]. Dotted lines are the mean p-values estimated with a model error (see text). The p-value at t = 24.48 this time is within the 90% interval [59.5%, 64.7%]. Right: distribution of the period index where the maximum periodogram value was found in row 15 of algorithm 2 (zoom at periods <20 days). The standard version of the 3SD procedure (simulating the situation without a model error) is shown in the top panel, and the hybrid version (detection process run with a model error) is shown in the bottom panel. A bias toward specific periods is visible in the bottom panel: the model error leaves residuals in which periodicities are imprinted. |

6.2 Systematic study of noise model errors

We considered another noise model ℳd for d. Following Rajpaul et al. (2016; see their Sect. 4), we still considered a nuisance signal of the form d = mB + mC + mM, but with some modifications. The estimation of the noise model parameters on the RV dataset was now performed in two steps. The binary and long-term trend components mB and mC were fit first. The magnetic activity component mM was fitted in a second step on the data residuals as a Gaussian process with a quasi-periodic covariance kernel of the form of Eq. (10), with kM fixed to 0 and parameters [αGP, ΓGP, PGP] free with PGP in intervals of 30 and 42 days.

When we ran our detection algorithm 1 with this new noise model, we obtained final data residuals of 0.78 m s−1 standard deviation, and the peak at 3.2 days disappeared. Similarly to Rajpaul et al. (2016), we performed an injection test with a planetary signal at 3.2 days with an amplitude of 0.51 m s−1 to verify that the GP noise model does not overfit the planet signal. We found the periodogram peak of the data residuals to be the highest peak, confirming the observations of Rajpaul et al. (2016) that the detection outcome can be very sensitive to the choice of the noise model plugged in the detection algorithm.