| Issue | A&A, Volume 628, August 2019 |
|---|---|
| Article Number | A82 |
| Number of page(s) | 21 |
| Section | Numerical methods and codes |
| DOI | https://doi.org/10.1051/0004-6361/201833143 |
| Published online | 09 August 2019 |

Self-gravitating disks in binary systems: an SPH approach

I. Implementation of the code and reliability tests

1

Dipartimento di Fisica, Sapienza, Università di Roma, P.le Aldo Moro, 5, 00185 Rome, Italy


2

INAF-IAPS, Istituto di Astrofisica e Planetologia Spaziali, Area di Ricerca di Tor Vergata, Via del Fosso del Cavaliere, 100, 00133 Rome, Italy

Received: 2 April 2018

Accepted: 19 June 2019

Abstract

The study of the stability of massive gaseous disks around a star in a nonisolated context is a difficult task and becomes even more complicated for disks that are hosted by binary systems. The role of self-gravity is thought to be significant when the ratio of the disk-to-star mass is non-negligible. To solve these problems, we implemented, tested, and applied our own smoothed particle hydrodynamics (SPH) algorithm. The code (named GaSPH) passed various quality tests and shows good performance, and it can therefore be reliably applied to the study of disks around stars when self-gravity needs to be accounted for. We here introduce and describe the algorithm, including some performance and stability tests. This paper is the first part of a series of studies in which self-gravitating disks in binary systems are allowed to evolve in larger environments such as open clusters.

Key words: protoplanetary disks / hydrodynamics / binaries: general

© ESO 2019

1. Introduction

The study of protoplanetary disks in nonisolated systems has become a relevant topic in numerical astrophysics because several planetary systems and disks around stars inside open clusters have recently been observed. The recent discovery of a Neptune-sized planet that is hosted by a binary star in the nearby Hyades cluster (Ciardi et al. 2018) has opened new perspectives for the study of the evolution of primordial disks that interact with binary stars in a nonisolated environment. In the context of the study of isolated binary systems, we note that most of the classical models have studied low-mass disks, with the result that even considering their self-gravity, no appreciable change is observed in the time evolution of the stellar orbital parameters. Additionally, low-mass disks provide little feedback on the hosting stars, which means that the timescale for the variation in their orbital parameters is larger than the other dynamical timescales that are involved.

To investigate such systems, we built our own smoothed particle hydrodynamics (SPH) code to integrate the evolution of the composite star+gas system. Following the scheme described in Capuzzo-Dolcetta & Miocchi (1998), our code treats gravity by means of a classical tree-based scheme (see also Barnes 1986; Barnes & Hut 1986; Miocchi & Capuzzo-Dolcetta 2002) and adds a proper treatment of the close gravitational interactions of the gas particles. The evolution of a limited number of point-mass objects (which may represent either stars or planets) is treated with a high-order explicit method.

This preliminary work provides an instrument that is not only suited to modeling heavy protoplanetary disks that interact with single and binary stars. It can also be used to study these systems not in isolation, but in stellar systems such as open star clusters.

In the next section we describe our code after preliminarily introducing the numerical framework, and in Sect. 3 we present and discuss some physical and performance tests. In Sect. 4 we describe the disk model we adopted and the application of our code to the study of heavy Keplerian disks. Section 5 is dedicated to the conclusions.

2. Numerical algorithm

In order to study a self-gravitating gas, we developed an SPH code, coupled with a tree-based scheme for the Newtonian force integration. In this section, after a brief recall of some basic theory of gravitational tree codes and of the SPH formalism (Sect. 2.1), we describe the main features of our numerical algorithm (Sect. 2.2). For further technical details, we refer to Appendices A.1 and A.2.

2.1. Basic theory

Introduced for the first time by Lucy (1977) and Gingold & Monaghan (1977), the SPH scheme has been widely adopted to investigate a huge set of astronomical problems that involve fluid systems. An SPH scheme allows integrating the fluid dynamical equations in a Lagrangian approach by representing the system through a set of points, or so-called pseudo-particles. For each particle, a set of fundamental quantities (such as density ρ, pressure P, internal energy u, and velocity v) is calculated by means of an interpolation with a proper kernel function over a suitable neighborhood. For an exhaustive explanation of the method, we refer to various papers in the literature, such as those by Monaghan & Lattanzio (1985) and Monaghan (1988, 2005). Here we recall some basic aspects.

Interpolations are performed with a continuous kernel function W(r, h), whose spread scale is determined by a characteristic length h, called the smoothing length. It can easily be shown (see, e.g., Hernquist & Katz 1989) that under some additional constraints, interpolation errors are limited to the order $O(h^2)$. As kernel function we used the cubic spline that was first adopted by Monaghan & Lattanzio (1985), who developed a formalism that had been introduced by Hockney & Eastwood (1981). This kernel function has the following form:

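$$ \begin{aligned} W(r,h) = \dfrac{1}{\pi h^3} \left\{ \begin{array}{ll} 1 - \dfrac{3}{2} q^2 + \dfrac{3}{4} q^3, & 0 \le q \le 1, \\ \dfrac{1}{4} \left( 2 - q \right)^3, & 1 < q \le 2, \\ 0, & q > 2, \end{array} \right. \qquad q \equiv \dfrac{r}{h}. \end{aligned} $$ (1)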
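As an illustration, the kernel can be sketched in a few lines of Python. This is a minimal sketch that assumes the standard three-dimensional cubic spline of Monaghan & Lattanzio (1985) with normalization 1/(πh³) and support 2h; the function names are illustrative and are not taken from GaSPH. The second function checks numerically that the kernel integrates to unity over its support.

```python
import math

def cubic_spline_kernel(r, h):
    """Cubic spline W(r, h) in 3D with compact support 2h (assumed normalization)."""
    q = r / h
    sigma = 1.0 / (math.pi * h**3)  # 3D normalization constant
    if q <= 1.0:
        return sigma * (1.0 - 1.5 * q**2 + 0.75 * q**3)
    if q <= 2.0:
        return sigma * 0.25 * (2.0 - q)**3
    return 0.0

def kernel_volume_integral(h, n=20000):
    """Midpoint-rule estimate of the integral of 4*pi*r^2*W(r, h) over [0, 2h]."""
    dr = 2.0 * h / n
    total = 0.0
    for k in range(n):
        r = (k + 0.5) * dr
        total += 4.0 * math.pi * r**2 * cubic_spline_kernel(r, h) * dr
    return total
```

Calling `kernel_volume_integral(h)` for any positive `h` should return a value very close to 1, which is the normalization condition required of an interpolating kernel.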

The SPH interpolation involves only a limited set of N′ neighboring particles that are enclosed within the range 2h, therefore the computational effort is expected to scale linearly with the total particle number N. On the other hand, when long-range interactions such as gravity are considered, the computational effort increases because each particle interacts with the whole system. A classical direct N-body code would therefore require a computational weight scaling as $N^2$. However, a suitable gravitational tree-based scheme allows us to efficiently evaluate the Newtonian force by approximating the potential with a harmonic expansion (see Barnes & Hut 1986; Barnes 1986, for a full explanation). For each particle, only the contribution from a local neighborhood is calculated through a direct particle–particle coupling, while the contribution from farther particles is suitably approximated. The following expressions (Eqs. (2) and (3)) represent the approximated potential Φ(r) and the force (per unit mass) a(r) = −∇Φ(r) given by a far cluster of particles,

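$$ \begin{aligned} \Phi (\boldsymbol{r}) = -\dfrac{GM}{r} - \dfrac{G}{2} \dfrac{\boldsymbol{r} \cdot \mathsf{Q} \cdot \boldsymbol{r}}{r^5}, \end{aligned} $$ (2)

$$ \begin{aligned} \boldsymbol{a}(\boldsymbol{r}) = -\dfrac{GM}{r^3} \boldsymbol{r} + G \dfrac{\mathsf{Q} \cdot \boldsymbol{r}}{r^5} - \dfrac{5G}{2} \dfrac{\boldsymbol{r} \cdot \mathsf{Q} \cdot \boldsymbol{r}}{r^7} \boldsymbol{r}. \end{aligned} $$ (3)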

M is the total mass of this ensemble, and r = |r| is the distance of the particle under study from the center of mass of the cluster. The symbol $\mathsf{Q}$ represents the so-called quadrupole tensor, which is associated with the specific cluster. In indexed form, it is given by

$$ \begin{aligned} Q_{ij} = \sum \limits _{k=1}^{N_C} m_k \left( 3 x_i^{(k)} x_j^{(k)} - r_k^2 \delta _{ij} \right), \end{aligned} $$ (4)

where $x_i^{(k)}$ and $x_j^{(k)}$ (i, j = 1, 2, 3) refer to the Cartesian coordinates of the kth particle of mass mk, measured from the center of mass of the cluster, $r_k$ is the distance of the particle from that center, and $\delta_{ij}$ is the Kronecker delta. The summation is performed over all the NC particles that are included in the cluster.
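The quality of the multipole approximation can be checked with a short Python sketch. It assumes the usual traceless quadrupole tensor computed about the center of mass of the cluster and code units with G = 1; all names are illustrative and the routine is not the GaSPH implementation. The approximated potential is compared with the direct sum over the cluster members.

```python
import math

G = 1.0  # gravitational constant in code units (assumption)

def cluster_moments(masses, positions):
    """Total mass, center of mass, and traceless quadrupole tensor of a cluster."""
    M = sum(masses)
    com = [sum(m * p[i] for m, p in zip(masses, positions)) / M for i in range(3)]
    Q = [[0.0] * 3 for _ in range(3)]
    for m, p in zip(masses, positions):
        x = [p[i] - com[i] for i in range(3)]  # coordinates relative to the center of mass
        r2 = sum(c * c for c in x)
        for i in range(3):
            for j in range(3):
                Q[i][j] += m * (3.0 * x[i] * x[j] - (r2 if i == j else 0.0))
    return M, com, Q

def potential_multipole(point, M, com, Q):
    """Monopole plus quadrupole potential of the cluster at `point`."""
    r = [point[i] - com[i] for i in range(3)]
    d = math.sqrt(sum(c * c for c in r))
    rQr = sum(r[i] * Q[i][j] * r[j] for i in range(3) for j in range(3))
    return -G * M / d - 0.5 * G * rQr / d**5

def potential_direct(point, masses, positions):
    """Exact potential from a direct sum over the cluster particles."""
    return -G * sum(m / math.dist(point, p) for m, p in zip(masses, positions))
```

For a point whose distance from the cluster is large compared with the cluster size, the two potentials should agree to a relative error of the order of the cube of the size-to-distance ratio, because the dipole term vanishes in the center-of-mass frame.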

In the following section we describe the main structure and formalism used by GaSPH. Further computational details related to the implementation of the algorithm can be found in Appendices A and B.

2.2. Main structure of the algorithm

A single step to compute the acceleration contains two preliminary phases. One cycle is dedicated to mapping the particles into an octal grid domain. A further cycle, linear in N, is needed to evaluate some key parameters such as the density, ρ, the smoothing length, h, and the pressure, P. A third cycle, the most complicated one, then evaluates the gravitational and hydrodynamical forces together with the internal energy rate $\dot{u}$ of the gas.

GaSPH can also easily treat a system that consists of a set of point masses. To do this, the part of the SPH computations is turned off and the tree scheme alone is used for gravity interactions. On the other hand, a gas can be treated with pure hydrodynamics by turning off the gravitational field and using the SPH formalism alone.

After the main computations of the acceleration a and the energy rate $\dot{u}$, the algorithm updates in time the velocity, position, and internal energy of the gas with a second-order Verlet method. Because of the structure of the second-order technique, the three main computational cycles must be performed twice within a single time iteration to obtain two estimates of a and $\dot{u}$.

In addition, the smooth particles may interact with a small number Nob of additional objects, an ensemble of point masses that mimic stars and/or planets. Unlike the other particles, the motion of these few objects is integrated with a 14th-order Runge–Kutta method by direct particle-to-particle N-body interactions without any approximation for the gravitational field. When Nob is sufficiently small, these operations require little additional computational effort, which scales roughly linearly with the total number of points (including both the number of SPH particles N and the number of objects Nob). For the specific purpose of our investigation, where we have Nob ≤ 2, the general efficiency of the code is not affected.

2.2.1. Particle mapping and density computation

For a set of N equal-mass points, we preliminarily need to subdivide the system into a hierarchical series of subgroups of points in order to apply the multipole approximation for the Newtonian field contribution given by a “cluster”. To do this, we use a classical Barnes-Hut tree-code to map the particles into an octal grid space, according to their positions. We follow in particular the technique adopted by Miocchi & Capuzzo-Dolcetta (2002) by mapping the points through a 3-bit-based codification (see Sect. 2.2.6 for further details).
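The idea of a 3-bit codification can be sketched as follows. In this hypothetical Python example, each level of the octal subdivision contributes three bits to an integer key (one bit per coordinate, telling on which side of the sub-box midpoint the particle lies). The bit ordering and the function name are assumptions made for illustration and need not coincide with the scheme of Miocchi & Capuzzo-Dolcetta (2002).

```python
def octree_key(pos, box_min, box_size, depth):
    """Integer key made of 3 bits per tree level that locates `pos` in the octal grid.

    pos, box_min: (x, y, z) tuples; box_size: edge of the root cube;
    depth: number of subdivision levels to encode.
    """
    key = 0
    lo = list(box_min)      # lower corner of the current box
    size = box_size
    for _ in range(depth):
        size *= 0.5         # edge of the child boxes at this level
        octant = 0
        for axis in range(3):
            bit = 1 if pos[axis] >= lo[axis] + size else 0
            lo[axis] += bit * size          # move to the child that contains pos
            octant = (octant << 1) | bit    # x is the most significant bit (assumed order)
        key = (key << 3) | octant
    return key
```

Particles that share the first 3L bits of their keys lie in the same box of order L, so sorting the particles by key groups them box by box.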

Before the accelerations are computed, SPH particles need a preliminary stage in which densities and smoothing lengths are computed. To perform a good interpolation, we need to keep a fixed number of neighbors for each point. For inhomogeneous fluids, we must therefore use a smoothing length h ≡ h(r, t) that varies in space and in time.

Individual smoothing lengths should be chosen in such a way that the higher the local number density n = ρ/m, the smaller the interpolation kernel radius: $h \propto n^{-1/3}$, in order to have a roughly constant number of neighbors of the given particle. For this purpose, we adopt a commonly used prescription (Hernquist & Katz 1989; Monaghan 2005). For each particle, we start from an initial guess for h, then we vary it until the number of particles that lie within the kernel domain reaches a fixed value N0. We iterate a process in which each time the number of neighbor points, N′, is counted using a certain smoothing length hprev, then we update this length to a new value hnew according to the following formula:

![Mathematical equation: $$ \begin{aligned} h_{\mathrm{new}} = h_{\mathrm{prev}} \dfrac{1}{2} \left[ 1+\left( \dfrac{N_0}{N\prime }\right)^{1/3} \right] . \end{aligned} $$](/articles/aa/full_html/2019/08/aa33143-18/aa33143-18-eq11.gif) (5)


If the fluid were homogeneous, $h_{\mathrm{prev}} (N_0/N')^{1/3}$ would immediately provide the correct value of the smoothing length, without any further iteration. The addend 1 makes the update an average between this estimate and the old smoothing length, which damps excessive oscillations caused by inhomogeneities in the spatial distribution of particles. The iteration is stopped when convergence is reached according to the criterion |N′−N0| ≤ ΔN, where ΔN is a tolerance number. In this regard, Attwood et al. (2007) investigated the acoustic oscillations of some polytrope models around the equilibrium by imposing a constant neighbor number N′ and letting ΔN vary. They found that the fluctuation of N′ within ±ΔN introduced an additional numerical noise that was able to break the stability of this system, giving rise to errors. To prevent errors, the authors found that ΔN should be set to zero, which is the choice we adopt in this paper. Moreover, they showed that the calculation of h according to the iterative process illustrated above and with ΔN = 0 is equivalent to solving for all the particles the 2N-equation system described by the following two equations:

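$$ \begin{aligned} \rho _i&= \sum \limits _{j} m_j W(r_{ij},h_i), \\ h_i&= \delta \left( \dfrac{m_i}{\rho _i} \right)^{1/3}, \end{aligned} $$ (6)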

and to finding the exact solutions of density and smoothing length {ρi, hi | i ∈ [1, N]}, with δ a suitable constant, and rij the mutual distance between the ith and the jth particles. We typically use a number of neighbors N′ = 60, such that δ ≈ 1.2.

When the density ρi is evaluated, the corresponding pressure Pi can be computed by means of a suitable equation of state. Appendix B.1 illustrates further technical details about the neighbor-search procedure.
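The iteration of Eq. (5) can be sketched in Python as follows. This is a minimal illustration, not the GaSPH neighbor search: the brute-force neighbor count, the safeguard for an empty neighborhood, and the iteration cap are assumptions introduced here, and `count_within` is a hypothetical callback that returns the number of neighbors within 2h.

```python
import math

def count_neighbors(positions, i, h):
    """Number of particles (excluding i) within the kernel radius 2h of particle i."""
    return sum(1 for j, p in enumerate(positions)
               if j != i and math.dist(positions[i], p) <= 2.0 * h)

def update_smoothing_length(h_prev, n_count, n_target):
    """One application of Eq. (5): average of h_prev and its homogeneous rescaling."""
    return h_prev * 0.5 * (1.0 + (n_target / n_count) ** (1.0 / 3.0))

def solve_smoothing_length(count_within, h0, n_target=60, tol=0, max_iter=200):
    """Iterate Eq. (5) until |N' - N0| <= tol; returns (h, N')."""
    h = h0
    for _ in range(max_iter):
        n_count = count_within(h)
        if abs(n_count - n_target) <= tol:
            return h, n_count
        if n_count == 0:
            h *= 2.0  # assumed safeguard: enlarge h when no neighbor is found
        else:
            h = update_smoothing_length(h, n_count, n_target)
    raise RuntimeError("smoothing length did not converge")
```

For a particle set stored in `positions`, the callback for particle `i` could be `lambda h: count_neighbors(positions, i, h)`. With `tol=0` the loop reproduces the ΔN = 0 choice, at the price of more iterations in strongly inhomogeneous regions.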

2.2.2. Force calculation and softened interactions

For a generic ith particle, the acceleration ai is computed by adding both the SPH terms and the Newtonian terms in the same iteration. Together with the acceleration, the internal energy rate of the particle is also computed.

To treat a self-gravitating gas with an SPH scheme, a proper treatment of the gravitational potential is necessary to avoid overestimating the gravity field. Particles can be considered as point sources of the Newtonian field when their mutual distance is larger than 2h. Otherwise, their Newtonian interaction is softened consistently with the assumed kernel function (Gingold & Monaghan 1977), so that it vanishes as the interparticle distance approaches zero.

With the cubic spline kernel, we can obtain a different form of the Newtonian interaction between two particles, such that the classical term is softened if the particles approach within a distance of the order of a softening length ϵ = 2h. See the appendix in Hernquist & Katz (1989) for more details, and the appendix in Price & Monaghan (2007) for an explicit expression of the force and the potential. When SPH interaction is turned off, a constant value ϵ is generally used in place of 2h for the softening length. In this case, the total energy is conserved within the numerical error. On the other hand, the Hamiltonian becomes time dependent with SPH systems because the softening length varies in time, and so the energy is no longer conserved. To solve this problem, the equations of motion must be rewritten in a conservative form that takes the variation in h into account. We followed the Hamiltonian formalism as adopted for the first time by Springel & Hernquist (2002) for the hydrodynamical interactions, which was further developed by Price & Monaghan (2007) for the gravitational field. The SPH equations assume the form

![Mathematical equation: $$ \begin{aligned} \dfrac{\mathrm{d} \boldsymbol{v}_i}{\mathrm{d}t}&=- \sum \limits _{j} \frac{1}{2} \left( { g}_{\mathrm{soft}} (r_{ij},h_i) + { g}_{\mathrm{soft}} (r_{ij},h_j) \right) \frac{\boldsymbol{r}_{ij}}{r_{ij}}\nonumber \\&\quad - \sum \limits _{j} m_j \frac{G}{2} \left( \frac{\zeta _i}{\Omega _i} \boldsymbol{\nabla }_i W(r_{ij},h_i) + \frac{\zeta _j}{\Omega _j} \boldsymbol{\nabla }_i W(r_{ij},h_j) \right)\nonumber \\&\quad -\sum \limits _{j} m_j\left( \dfrac{P_i}{ \rho _i^2 \Omega _i} \boldsymbol{\nabla }_i W(r_{ij},h_i) + \dfrac{P_j}{ \rho _j^2 \Omega _j} \boldsymbol{\nabla }_i W(r_{ij},h_j) \right) \nonumber \\&\quad -\sum \limits _{j} m_j \Pi _{ij} \left[\frac{ \boldsymbol{\nabla _i} W(r_{ij},h_i) + \boldsymbol{\nabla }_i W(r_{ij},h_j) }{2}\right] +\dfrac{\mathrm{d} \boldsymbol{v}_{i}^{[\mathrm{stars}]}}{\mathrm{d}t} \end{aligned} $$](/articles/aa/full_html/2019/08/aa33143-18/aa33143-18-eq14.gif) (7)

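$$ \begin{aligned} \dfrac{\mathrm{d} u_i}{\mathrm{d}t} = \dfrac{P_i}{\rho _i^2 \Omega _i} \sum \limits _{j} m_j \boldsymbol{v}_{ij} \cdot \boldsymbol{\nabla }_i W(r_{ij},h_i) + \dfrac{1}{2} \sum \limits _{j} m_j \Pi _{ij} \boldsymbol{v}_{ij} \cdot \left[\frac{ \boldsymbol{\nabla }_i W(r_{ij},h_i) + \boldsymbol{\nabla }_i W(r_{ij},h_j) }{2}\right], \end{aligned} $$ (8)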

where the index i refers to a generic ith particle and the index j in the sums refers to the jth particle that is enclosed within the range 2hM = 2 ⋅ max(hi, hj). The term gsoft represents the softened gravitational force per unit mass mentioned above: it is a function only of the mutual particle distance rij and of the smoothing length h. It tends to zero as rij → 0 and assumes the classical Newtonian form $G m_j / r_{ij}^2$ for rij ≥ 2h. The operator ∇i represents the gradient with respect to the coordinates of the ith particle. The gradient is performed over two different expressions of the kernel W, with two different lengths hi and hj. The terms ζi and Ωi are suitable functions that account for the variation in the smoothed Newtonian potential with respect to the softening length and for the non-uniformity of the softening length itself, respectively. They assume this form for a generic particle of index i:

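$$ \begin{aligned} \Omega _i = 1 - \dfrac{\partial h_i}{\partial \rho _i} \sum \limits _{j} m_j \dfrac{\partial W(r_{ij},h_i)}{\partial h_i}, \end{aligned} $$ (9)

$$ \begin{aligned} \zeta _i = \dfrac{\partial h_i}{\partial \rho _i} \sum \limits _{j} m_j \dfrac{\partial \phi _{\mathrm{soft}}(r_{ij},h_i)}{\partial h_i}, \end{aligned} $$ (10)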

where, as for the system in Eq. (6), the sum extends over the particles that are enclosed within the range 2hi. In Eq. (10), the function ϕsoft represents the softened gravitational potential, such that ∇ϕsoft = −gsoft rij/rij. The potential reaches a constant value as rij → 0 and becomes equal to the Newtonian potential for rij ≥ 2h (for an explicit expression, see, e.g., Price & Monaghan 2007). The terms Ω and ζ are computed in the same neighbor-search iterative loop in which ρ and h are worked out.

Only if the gas interacts with stars does the last term in Eq. (7), ![Mathematical equation: $ \mathrm{d} \boldsymbol{v}_{i}^{[\mathrm{stars}]}/\mathrm{d}t $](/articles/aa/full_html/2019/08/aa33143-18/aa33143-18-eq19.gif) (discussed in Sect. 2.2.4), represent a non-null acceleration that accounts for the Newtonian interaction between the ith particle and the point masses. The function Πij, which we discuss in the following section, characterizes the well-known artificial viscosity. The expression of the equation of motion, Eq. (7), guarantees a symmetric exchange of linear momentum between the particles.
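The limiting behavior of the softened force can be made concrete with a short Python sketch. For a spherically symmetric kernel, the softened attraction at distance r equals the Newtonian attraction of the kernel mass enclosed within r, which can be integrated in closed form for the cubic spline. The sketch assumes the cubic spline normalized as 1/(πh³), code units with G = 1 by default, and illustrative function names; the expressions actually used in the code are those referenced in Price & Monaghan (2007).

```python
def _outer_primitive(q):
    """Primitive of 0.25*(2-q)^3*q^2, used for the branch 1 < q <= 2."""
    return 0.25 * (8.0 * q**3 / 3.0 - 3.0 * q**4 + 1.2 * q**5 - q**6 / 6.0)

def enclosed_mass_fraction(q):
    """Fraction of the cubic-spline kernel mass inside radius r = q*h."""
    if q <= 1.0:
        return 4.0 * (q**3 / 3.0 - 0.3 * q**5 + 0.125 * q**6)
    if q <= 2.0:
        # 19/120 is the integral of the inner branch up to q = 1
        return 4.0 * (19.0 / 120.0 + _outer_primitive(q) - _outer_primitive(1.0))
    return 1.0

def g_soft(r, h, m=1.0, G=1.0):
    """Magnitude of the softened attraction of a source of mass m at distance r."""
    if r == 0.0:
        return 0.0
    return G * m * enclosed_mass_fraction(r / h) / r**2
```

With these definitions, `g_soft` grows linearly with r near the origin, so it vanishes at zero separation, and it reduces exactly to G m / r² for r ≥ 2h.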

2.2.3. Artificial viscosity

In high-compression regions such as shock wavefronts, the velocity gradient may be so strong that two layers interpenetrate and the hydrodynamical equations may not be integrated correctly and generate unphysical effects. Additional artificial pressure terms are a possible solution for this problem. In our code, we added an artificial term by adopting the same classical schematization as Monaghan (1989), which corresponds to introducing a suitable artificial viscosity that dampens the velocity gradient when two particles approach. Practically, a viscous-pressure term Πij is included in Eqs. (7) and (8). It assumes the expression

$$ \begin{aligned} \Pi _{ij} = \left\{ \begin{array}{ll} \dfrac{-\alpha \bar{c}_{ij} \mu _{ij} + \beta \mu _{ij}^2}{\bar{\rho }_{ij}}, & \boldsymbol{v}_{ij} \cdot \boldsymbol{r}_{ij} < 0, \\ 0, & \boldsymbol{v}_{ij} \cdot \boldsymbol{r}_{ij} \ge 0, \end{array} \right. \end{aligned} $$ (11)

where $\mu_{ij} = \bar{h}_{ij}\, \boldsymbol{v}_{ij} \cdot \boldsymbol{r}_{ij} / (r_{ij}^2 + \eta^2 \bar{h}_{ij}^2)$. The dot product vij ⋅ rij involves the relative velocity and the distance of a pair of particles i − j. Only approaching pairs of particles, for which vij ⋅ rij < 0, contribute to the artificial viscosity. The parameter η is a suitable term to prevent singularities when two particles approach very closely (we use the typical value of η = 0.1). The terms $\bar{h}_{ij}$, $\bar{\rho}_{ij}$, and $\bar{c}_{ij}$ represent the average values of the smoothing length, $(h_i + h_j)/2$, the density, $(\rho_i + \rho_j)/2$, and the speed of sound, $(c_{\mathrm{s}i} + c_{\mathrm{s}j})/2$, respectively. We set β = 2α. In this simple formulation, the artificial viscosity is activated throughout the fluid, but in two circumstances it should be damped to prevent unphysical effects: in regions where shear dominates, and in regions where the velocity gradient is low.
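A pairwise evaluation of the viscous term can be sketched in Python as follows. The sketch assumes the classical Monaghan-type form, in which Πij is built from pair-averaged smoothing length, density, and sound speed, with β = 2α and η = 0.1 as stated in the text; the function signature is illustrative only.

```python
def artificial_viscosity(r_ij, v_ij, h_i, h_j, rho_i, rho_j, cs_i, cs_j,
                         alpha=1.0, eta=0.1):
    """Viscous pressure term Pi_ij for one particle pair (assumed classical form).

    r_ij, v_ij: relative position and velocity (x_i - x_j, v_i - v_j) as 3-tuples.
    """
    beta = 2.0 * alpha
    v_dot_r = sum(v * r for v, r in zip(v_ij, r_ij))
    if v_dot_r >= 0.0:
        return 0.0                      # receding pair: no artificial viscosity
    h_bar = 0.5 * (h_i + h_j)
    rho_bar = 0.5 * (rho_i + rho_j)
    cs_bar = 0.5 * (cs_i + cs_j)
    r2 = sum(r * r for r in r_ij)
    mu = h_bar * v_dot_r / (r2 + eta**2 * h_bar**2)  # negative for approaching pairs
    return (-alpha * cs_bar * mu + beta * mu**2) / rho_bar
```

Because μij is negative for approaching particles, both contributions are positive, so the term acts as an extra pressure that opposes the compression.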

For two shearing layers of fluid, the relative velocity between the particles leads to an approach that is interpreted by the artificial viscosity of Eq. (11) as a compression. This incorrect interpretation causes the code to overestimate the strength of the viscous interaction. To prevent false compressions, Balsara (1995) multiplied the term μij by a proper switching coefficient:

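$$ \begin{aligned} f_i = \dfrac{|\boldsymbol{\nabla } \cdot \boldsymbol{v}|_i}{|\boldsymbol{\nabla } \cdot \boldsymbol{v}|_i + |\boldsymbol{\nabla } \times \boldsymbol{v}|_i + 10^{-4} c_{\mathrm{s} i}/h_i}, \end{aligned} $$ (12)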

in which the divergence of velocity and the velocity curl are evaluated for a particle of index i as

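$$ \begin{aligned} (\boldsymbol{\nabla } \cdot \boldsymbol{v})_i = -\dfrac{1}{\rho _i} \sum \limits _{j} m_j \boldsymbol{v}_{ij} \cdot \boldsymbol{\nabla }_i W(r_{ij},h_i), \qquad (\boldsymbol{\nabla } \times \boldsymbol{v})_i = \dfrac{1}{\rho _i} \sum \limits _{j} m_j \boldsymbol{v}_{ij} \times \boldsymbol{\nabla }_i W(r_{ij},h_i). \end{aligned} $$ (13)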
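The effect of the switch can be illustrated with a small Python sketch. It assumes the commonly used form of the Balsara factor, in which a small term proportional to cs/h prevents a division by zero; the value 10⁻⁴ of that coefficient and the function names are assumptions made here for illustration.

```python
def balsara_factor(div_v, curl_v_norm, cs, h, eps=1.0e-4):
    """Balsara-type switch: close to 1 in pure compression, 0 in pure shear.

    div_v: velocity divergence; curl_v_norm: modulus of the velocity curl;
    eps * cs / h: small regularizing term (assumed value of eps).
    """
    div_abs = abs(div_v)
    return div_abs / (div_abs + curl_v_norm + eps * cs / h)

def pair_balsara_factor(f_i, f_j):
    """Pair-averaged factor that multiplies mu_ij."""
    return 0.5 * (f_i + f_j)
```

A particle in a purely shearing flow (zero divergence, finite curl) gets a factor of exactly zero, while a particle in a curl-free compression gets a factor very close to one.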

We implemented the term f by multiplying μij by the average value $\bar{f}_{ij} = (f_i + f_j)/2$. Further problems may arise far away from high-compression regions. In the classical formulation of Πij, α = 1 = const. (e.g., in Monaghan 1992). In this scheme, the viscosity acts with the same effectiveness in every region, while we would expect the artificial term to be efficient only where it is needed, that is, close to the shock fronts. To solve this problem, we used the same formalism as was introduced by Morris & Monaghan (1997) and further developed by Rosswog et al. (2000) by considering an individual αi for each particle that follows the time-variation equation,

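$$ \begin{aligned} \dfrac{\mathrm{d} \alpha _i}{\mathrm{d}t} = -\dfrac{\alpha _i - \alpha _{\min }}{\tau _{\alpha }} + S_i, \end{aligned} $$ (14)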

where Si = max(−(∇ ⋅ v)i, 0) (αmax − αmin) represents a “source” term that increases in the proximity of the shock front; αmin represents a minimum threshold value for α, and αmax represents its maximum. The (increasing) rate of the viscosity coefficient is driven by a characteristic timescale $\tau_\alpha = h_i/(b\, c_{\mathrm{s}})$ that depends on how the fluid allows the perturbations to propagate through the resolution length. The individual viscosity coefficients αi and αj, when referred to a generic i − j particle pairing, are averaged in the same way as was done with the other quantities.

For a gas with γ = 5/3, a good value for the b coefficient can be set such that $5 \le b^{-1} \le 10$ (Morris & Monaghan 1997). For our tests, we set αmax = 2, αmin = 0.1 and $b^{-1} = 5$. These are the most commonly adopted values in the literature for a wide class of problems involving collapse, merging stars, or protoplanetary disks (see, e.g., Rosswog & Price 2007; Stamatellos et al. 2011; Hosono et al. 2016). The implementation of the artificial viscosity term (Eqs. (12)–(14)), together with its form implemented in Eqs. (7) and (8), may affect the accuracy of the code in preserving the total angular momentum. In Sect. 3.1.5 we discuss how this form of viscosity, with different choices of the coefficients αmin and b, guarantees the conservation of the angular momentum.
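The behavior of the individual viscosity coefficient can be illustrated by integrating its rate equation for a single particle. The following Python sketch assumes a decay toward αmin on the timescale τα = h/(b cs) plus the source term Si as written in the text, and uses a simple explicit Euler update, which is an assumption made here for brevity and not necessarily the integrator used in GaSPH.

```python
def alpha_rate(alpha, div_v, h, cs, alpha_min=0.1, alpha_max=2.0, b=0.2):
    """Time derivative of the viscosity coefficient: decay term plus source term."""
    tau = h / (b * cs)                                   # decay timescale (b^-1 = 5)
    source = max(-div_v, 0.0) * (alpha_max - alpha_min)  # active only under compression
    return -(alpha - alpha_min) / tau + source

def evolve_alpha(alpha, div_v, h, cs, dt, n_steps, **params):
    """Explicit Euler integration of alpha at fixed div_v, h, and cs (illustrative)."""
    for _ in range(n_steps):
        alpha += dt * alpha_rate(alpha, div_v, h, cs, **params)
    return alpha
```

With zero divergence the coefficient relaxes exponentially to αmin, whereas a negative divergence (compression) switches the source on and makes α grow.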

2.2.4. Additional stellar objects

We calculated a direct point-to-point interaction for the mutual interaction between stars and to couple stars with SPH particles. The equation of motion of a generic p-th star takes the following form:

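$$ \begin{aligned} \dfrac{\mathrm{d} \boldsymbol{v}_p}{\mathrm{d}t} = - \sum \limits _{j} \frac{1}{2} \left( { g}_{\mathrm{soft}} (r_{pj},\epsilon _{\rm s}) + { g}_{\mathrm{soft}} (r_{pj},h_j) \right) \frac{\boldsymbol{r}_{pj}}{r_{pj}} - \sum \limits _{s \ne p} { g}_{\mathrm{soft}} (r_{ps},\epsilon _{\rm s}) \frac{\boldsymbol{r}_{ps}}{r_{ps}}, \end{aligned} $$ (15)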

where gsoft(r, ϵ) represents the Newtonian acceleration, which takes the form discussed above in Sect. 2.2.2. Force softening is applied to the stars as well, with a constant softening length ϵs = const. The gravity is thus softened when the mutual distance approaches ϵs. The first summation is extended over all SPH particles, while the index s in the second sum refers to the generic stars.

Similarly, the equation of motion, Eq. (7), when referred to the ith gas particle contains the following sum:

![Mathematical equation: $$ \begin{aligned} \dfrac{\mathrm{d} \boldsymbol{v}_i^{[\mathrm{stars}]}}{\mathrm{d}t} = - \sum \limits _{s} \frac{1}{2} \left( { g}_{\mathrm{soft}} ( r_{i\mathrm{s}} ,\epsilon _{\rm s}) + { g}_{\mathrm{soft}} (r_{i\mathrm{s}} ,h_i) \right) \frac{ \boldsymbol{r}_{i\mathrm{s}}}{r_{i\mathrm{s}} }, \end{aligned} $$](/articles/aa/full_html/2019/08/aa33143-18/aa33143-18-eq32.gif) (16)


where the index s again refers to the stars, and ris is the distance vector between a gas particle and a star.

2.2.5. Time integration and time-stepping

To evolve the gas system in time, we adopted a second-order integration method that is similar to a classical second-order Runge–Kutta scheme but is at the same time very similar to a leap-frog integrator: the well-known velocity-Verlet method (see Andersen 1983; Allen & Tildesley 1989, for detailed references). The Verlet method is based on a trapezoidal scheme coupled with a predictor-corrector technique for the estimation of v and u. The structure of this scheme is very similar to that of classical symplectic leap-frog algorithms, although it requires two computations of the force at every time iteration (see Appendix A.1). Nevertheless, the general velocity-Verlet method applied to gas evolution shows some advantages compared to the symplectic algorithm of the same order. Like a standard Runge–Kutta method, velocity and positions are updated in synchronized steps, without the Δt/2 shift. This feature provides a good flexibility in problems that are approached with nonuniform time-steps that involve the interaction of the gas component with other components that are integrated with different methods, as in our case. Various applications of velocity-Verlet methods in SPH schemes are found in the literature, for example, in Hubber et al. (2013) or in Hosono et al. (2016).
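The synchronized structure of the method can be seen in a minimal Python sketch of a velocity-Verlet step. The sketch is written for a one-dimensional particle with an acceleration that depends on position only; in the gas case the forces also depend on v and u, which is why a predictor-corrector stage and a second force evaluation are needed. Function names are illustrative.

```python
def velocity_verlet_step(x, v, a, accel, dt):
    """Advance position and velocity by dt; returns the new (x, v, a)."""
    x_new = x + v * dt + 0.5 * a * dt * dt   # position update with the old acceleration
    a_new = accel(x_new)                     # force evaluation at the new position
    v_new = v + 0.5 * (a + a_new) * dt       # trapezoidal velocity update
    return x_new, v_new, a_new

def integrate(x, v, accel, dt, n_steps):
    """Integrate for n_steps; position and velocity stay synchronized in time."""
    a = accel(x)
    for _ in range(n_steps):
        x, v, a = velocity_verlet_step(x, v, a, accel, dt)
    return x, v
```

For a harmonic oscillator, `integrate(1.0, 0.0, lambda x: -x, 0.01, 10000)` should keep the energy (v² + x²)/2 close to its initial value over many periods, with a bounded error of order Δt² and no secular drift.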

The additional point masses are ballistic elements, whose equation of motion needs to be integrated with very high precision to avoid secular trends that are typical of few-body gravitational problems. Although the SPH precision is at only second order, we decided to integrate the Newtonian motion of the (few) stars and planets in the system with a 14th-order Runge–Kutta method that was recently developed by Feagin (2012) through the so-called m-symmetry formalism. The method consists of 35 force computations per time-step, and in analogy with the well-known second- and fourth-order RK methods, it updates the velocities and the positions by suitable linear combinations of 35 different Kr and Kv coefficients (see Appendix A.2 for further details).

For the gas, we chose the time-step Δt following a criterion similar to the standard Courant–Friedrichs–Lewy (CFL) criterion that is commonly adopted for SPH systems (see, e.g., Monaghan 1992), together with some additional criteria. A global time-step Δtmin can be determined by taking the minimum between the following two quantities:

![Mathematical equation: $$ \begin{aligned} \Delta t_{\mathrm{term}} = \min \limits _i \left( \dfrac{C ~h }{c_{\mathrm{s} i} + h_i |\boldsymbol{\nabla }\cdot \boldsymbol{v}|_i + \varphi \alpha _i \left[ c_{\mathrm{s} i} + 2 \max \limits _{j}(\mu _{ij}) \right] } ~,~ C_{{u}} \dfrac{u_i }{\dot{u}_i } \right) \end{aligned} $$](/articles/aa/full_html/2019/08/aa33143-18/aa33143-18-eq33.gif) (17)

(18)

where cs is the sound speed and C is a coefficient whose typical value lies between 0.1 and 0.4; we usually choose 0.15. Moreover, Cu, Ca, and Cd are coefficients to be set < 1. We chose Cu = 0.04, Ca = 0.15, and Cd = 0.02. Finally, the coefficient φ typically ranges from 0.6 to 1.2 (we adopt φ = 1.2 throughout). Similarly to the control of the variation in kinetic energy, we control the time variation of the thermal energy $u_i$ in a single time-step by limiting it to a certain fraction Cu = 0.04. The index i refers to an individual time-step Δti that is related to a specific particle.
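The per-particle argument of the minimum in Eq. (17) can be sketched in Python as follows. The default coefficients are those quoted in the text (C = 0.15, Cu = 0.04, φ = 1.2); taking the absolute value of the energy ratio and returning infinity for a vanishing energy rate are assumptions added here, and the names are illustrative.

```python
def thermal_timestep(h, cs, div_v, alpha, mu_max, u, u_dot,
                     C=0.15, C_u=0.04, phi=1.2):
    """Time-step limit of one particle from the CFL-like and thermal-energy criteria.

    mu_max: largest mu_ij over the neighbors j, as it enters Eq. (17).
    """
    signal = cs + h * abs(div_v) + phi * alpha * (cs + 2.0 * mu_max)
    dt_cfl = C * h / signal
    # assumed safeguard: no thermal limit when the energy rate vanishes
    dt_u = C_u * abs(u / u_dot) if u_dot != 0.0 else float("inf")
    return min(dt_cfl, dt_u)

def global_thermal_timestep(particles):
    """Minimum over all particles; each entry is a tuple of thermal_timestep arguments."""
    return min(thermal_timestep(*p) for p in particles)
```

As a usage example, a particle with h = 1, cs = 1, no compression, α = 0.1, and a slowly varying internal energy is limited by the CFL-like term, 0.15/1.12 ≈ 0.134 in code units.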

For the point-particle phase in the system (i.e., stars or planets), we chose a characteristic time-step, Δt, that is defined as

(19)


where we use Cob = 0.15. The various quantities with the index s of course characterize a specific star particle.

For a homogeneous medium, the integration can be performed with a global time-step, that is, the lowest value among the gas and star time-steps. Generally, the particles have different resolutions hi and different accelerations, which leads to a wide class of typical evolution timescales. For some particles, the integration might therefore be made with different Δti, which avoids the explicit force calculation at every time iteration and saves some computing time. We adopted a technique that was implemented in several N-body algorithms, such as the classical TREESPH (Hernquist & Katz 1989) or the multi-GPU-parallelized N-body code HiGPUs (Capuzzo-Dolcetta et al. 2013). We assigned to each point a time-step as a negative two-power fraction of a reference time $\Delta t_{\max}$ (it can be a fixed quantity, or it may change periodically during the simulation). The particle motion is updated periodically according to their Δti in such a way that after an integration time Δtmax, all of them are synchronized (further details are explained in Appendix A.1). Particle mapping and sorting are performed every time for every particle as well, independently of their individual time-step. Thus, the configuration of the tree grid, together with the total mass and quadrupole moment of the boxes, is computed at every single step Δtmin. Similarly, at each minimum time-step iteration, the gravitational interactions between gas and stars are computed even during “inactive” stages of the gas. Thus, the acceleration of the inactive SPH particles is split into two terms: one is given by a fixed non-updated hydrodynamical and self-gravity term, and the other is given by a constantly updated gas-star gravitational force.
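The block time-step assignment can be sketched in Python as follows. The sketch assumes that each particle is given the largest step of the form Δtmax/2ⁿ that does not exceed the step required by its own criteria, and that a particle on level n is advanced once every 2^(nmax − n) minimum steps; the function names and the cap on the number of levels are illustrative choices.

```python
def timestep_level(dt_required, dt_max, max_level=30):
    """Smallest n such that dt_max / 2**n <= dt_required (capped at max_level)."""
    level, dt = 0, dt_max
    while dt > dt_required and level < max_level:
        dt *= 0.5
        level += 1
    return level

def is_active(step, level, deepest_level):
    """True if a particle on `level` is updated at minimum-step index `step`."""
    stride = 2 ** (deepest_level - level)   # number of minimum steps per particle step
    return step % stride == 0
```

With `deepest_level = 3`, for instance, particles on level 3 are advanced at every minimum step, those on level 1 every four minimum steps, and all particles are synchronized again after eight minimum steps, that is, after Δtmax.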

In our scheme, the stars and planets do not follow an individual time-step scheme, and their mutual interactions are computed at every single step Δtmin, even when Δtstars ≠ Δtmin. Furthermore, we force the particles that lie close to the stars, within a tolerance distance, to be integrated at every time iteration. In practice, we compute the distance of a generic ith particle from the stars, and we also predict this distance at the following time iteration. When these values are lower than a tolerance of κϵob (with a constant κ ≥ 2), the particle time-step drops to Δtmin. For our practical purposes, we used a small number of objects (in the current investigation, Nob ≤ 2); thus, the 35-stage RK scheme requires a relatively short CPU time (less than 2% of the total).

In gas problems that involve strong shocks, the use of individual time-steps may lead to large errors. Even though the CFL conditions are satisfied, strong velocity gradients may produce a large discrepancy in time-steps between close particles, which then evolve on very different timescales. This may create strong asymmetries in the mutual hydrodynamical interactions, which causes unphysical discontinuities in velocity and pressure. Following the idea of Saitoh & Makino (2009), we limit for each pair of neighboring ith and jth particles the ratio of time-steps, Δti/Δtj ≤ A. These investigators have shown that a good compromise is reached by the choice of A = 4, which gives good results without severely affecting the efficiency of the code.
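A time-step limiter of this kind can be sketched as follows. This is only an illustration under stated assumptions, not the scheme of Saitoh & Makino (2009) as coded in GaSPH: the function `limit_timesteps`, the explicit neighbor lists, and the repeated sweeps until no step changes are all hypothetical choices made to keep the example short.

```python
def limit_timesteps(dt, neighbors, A=4.0):
    """Reduce time-steps until dt[i] <= A * dt[j] for every neighbor pair.

    dt        : list of tentative time-steps, one per particle
    neighbors : neighbors[i] is the list of indices of particle i's neighbors
    A         : maximum allowed ratio between neighboring time-steps
    """
    dt = list(dt)
    changed = True
    while changed:  # sweep until every pair satisfies the limit
        changed = False
        for i, nbrs in enumerate(neighbors):
            for j in nbrs:
                cap = A * dt[j]
                if dt[i] > cap:
                    dt[i] = cap
                    changed = True
    return dt

# Three particles in a row; the last one sits in a shock and has a tiny step.
limited = limit_timesteps([1.0, 1.0, 0.01], [[1], [0, 2], [1]])
```

Because A = 4 is a power of two, a limiter of this form keeps the steps on the power-of-two grid when the input steps already lie on it.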

2.2.6. Approximation of the gravitational field: opening criterion

The decomposition of the system into a series of clusters is performed by the tree algorithm through a recursive octal subdivision: the root cube is divided into eight sub-boxes of order L = 1, each one subdivided into a further eight cubes of order L = 2, and so on. A tree structure is thus constituted, made of several nested boxes, each of which contains a group of particles. To calculate the acceleration of an ith particle, the algorithm walks along the tree, starting from the low-order cubes toward the highest order cubes (that contain just one particle), and evaluates the distance between the particle and the center of mass of the boxes. Each time a box is probed, the code decides to open it and probes its internal cubes only if the well-known opening criterion is satisfied,

$$ \frac{D_L}{r} > \theta , $$ (20)

where r is the distance between the ith particle and the center of mass of the box, θ is the so-called opening angle parameter, for which reasonable values range between 0.3 and 1 (see Sect. 3.2, dedicated to performance tests), and DL = D0 × 2^(−L) is the side length of the box. In the opposite case, the algorithm approximates the gravitational field by adding to the acceleration of the ith particle just the contribution of the box (given by Eq. (3)). With this scheme, the net amount of computation scales as N log N, which is far smaller than N² for large N.

With ra the geometrical center of a certain cube and rCM its center-of-mass position, we may find a very large offset ΔCM ≡ |ra − rCM| under specific circumstances. With a center of mass far away from the box center, some errors may arise in the force approximation because a cube can be considered “far enough” from a particle according to the opening criterion even though some of the points enclosed in the box may still be very close to the particle (see Fig. 1). These close particles are not treated individually, and the whole box gives its approximated multipole contribution to the particle acceleration. The acceleration is therefore calculated with less accuracy than might be expected. A first way to avoid these errors is to check whether the particle lies very close to a box (as was done, e.g., by Springel 2005). If the test particle is inside a cube or close to its borders according to a certain tolerance, the box is always opened, regardless of whether Eq. (20) is satisfied.

|

Fig. 1. Schematic 2D example of the lack of accuracy in the field computation caused by a large offset ΔCM. The center of gravity is far away from the ith particle, and the cube is not opened. Nevertheless, some particles that lie near the edge of the box, such as the jth and the kth points, are very close to the ith point, but their direct contribution is missed, which results in a loss of accuracy. |

We can furthermore optionally modify the opening criterion in our code by taking the offset term into account. We may use the following rule to open a box:

(21)


This prescription is equivalent to the classic opening criterion, but with an effective opening angle θ′< θ, to guarantee that every close box is opened. In some peculiar cases in which ΔCM is large (i.e., comparable with the length of the semidiagonal of the box), as in the example of Fig. 1, the effective opening angle is considerably smaller than θ. In Sect. 3.2 we show that for a typical value of θ = 0.6, the adoption of the new criterion does not require too much additional computational effort, especially when many particles are involved.
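The two opening rules can be compared with a short Python sketch. The standard test is taken in the usual Barnes-Hut form DL/r > θ. The form used for the offset-corrected rule, DL/(r − ΔCM) > θ, is an assumption made for illustration only: it is one common way of including the offset and it reproduces the behavior described in the text (an effective angle θ′ = θ(1 − ΔCM/r) < θ), but it is not guaranteed to be the exact expression of Eq. (21). The function name `must_open` is hypothetical.

```python
import math

def must_open(particle, box_com, box_center, box_side, theta=0.6,
              use_offset=False):
    """Return True if the box has to be opened for this particle.

    particle, box_com, box_center : 3D coordinates (tuples)
    box_side                      : side length D_L of the cube
    use_offset                    : include the offset between the geometric
                                    center and the center of mass (assumed form)
    """
    r = math.dist(particle, box_com)
    if use_offset:
        delta_cm = math.dist(box_center, box_com)
        r = r - delta_cm  # assumed form of the corrected distance
    if r <= 0.0:
        return True  # particle inside or too close: always open
    return box_side / r > theta

# A unit box whose center of mass is displaced away from the particle.
particle = (1.5, 0.0, 0.0)
com = (-0.4, 0.0, 0.0)
center = (0.0, 0.0, 0.0)
standard = must_open(particle, com, center, 1.0)
corrected = must_open(particle, com, center, 1.0, use_offset=True)
```

In this configuration, which mimics Fig. 1, the standard test leaves the box closed, while the offset-corrected test opens it.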

3. Code testing

We illustrate here some basic physics tests (Sect. 3.1) and a series of performance tests (Sect. 3.2). In Sect. 3.1 we apply GaSPH to two basic problems: (i) a non-hydrodynamical system, characterized by a cluster of point-mass particles distributed according to a Plummer profile, and (ii) a classical shock-wave problem. These quality tests are followed by some applications to hydrodynamical systems at equilibrium. First, we treat some polytropes with finite radius. Then, we compare our algorithm with a well-known hydrodynamical tree-based code (Gadget-2) in the case of a gaseous Plummer sphere. In Sect. 3.2 we analyze the computational efficiency and the accuracy of our code in different contexts.

3.1. Tests with gas and pressureless systems

3.1.1. Turning off the SPH: the evolution of a pressureless system

In order to test the stability of our numerical method, we performed a series of simulations in which we placed a set of points according to the standard Plummer configuration (Plummer 1911), which is often adopted to study the distribution of stars in globular clusters. The Plummer sphere is pressureless, so that the particles interact only though gravity, and there is no SPH interaction. As units of measurement, we chose the total mass M and the gravitational constant G, and we placed an ensemble of N = 105 particles in a Plummer distribution with core radius R = 1 and cutoff radius Rout = 10R. The particles had equal masses m = N−1 and equal softening length ϵ, chosen as a fraction of the central mean interparticle distance:  (with αs ∈ [0.2, 1.0]). Starting the Plummer distribution at the virial equilibrium, we integrated its time evolution for 50 mean crossing-times τc. This parameter is defined as the initial ratio between the half-mass radius and the mean dispersion velocity

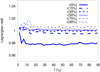

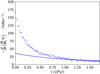

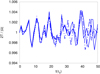

(with αs ∈ [0.2, 1.0]). Starting the Plummer distribution at the virial equilibrium, we integrated its time evolution for 50 mean crossing-times τc. This parameter is defined as the initial ratio between the half-mass radius and the mean dispersion velocity  . Figure 2 shows the virial ratio

. Figure 2 shows the virial ratio  as a function of time and compares four runs made with different combinations of the opening angle θ (0.6; 1.0) and ϵ (0.2; 0.5). The four results illustrated in Fig. 2 do not show any relevant difference: the virial ratios oscillate within a small fraction < 0.5%, especially for the configuration with θ = 1 and αs = 0.5, which was expected to be the worst case.

as a function of time and compares four runs made with different combinations of the opening angle θ (0.6; 1.0) and ϵ (0.2; 0.5). The four results illustrated in Fig. 2 do not show any relevant difference: the virial ratios oscillate within a small fraction < 0.5%, especially for the configuration with θ = 1 and αs = 0.5, which was expected to be the worst case.
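As an illustration of the diagnostic, the following Python sketch evaluates a virial ratio by direct summation. The definition 2K/|W| is an assumption (the exact expression used in the paper is not recoverable from the text), the function name `virial_ratio` is hypothetical, and the optional Plummer-type softening is only one possible way of including ϵ.

```python
import math

def virial_ratio(masses, positions, velocities, G=1.0, eps=0.0):
    """Return 2K/|W| for a set of point masses (direct O(N^2) summation)."""
    kinetic = sum(0.5 * m * (vx * vx + vy * vy + vz * vz)
                  for m, (vx, vy, vz) in zip(masses, velocities))
    potential = 0.0
    n = len(masses)
    for i in range(n):
        for j in range(i + 1, n):
            d2 = sum((positions[i][k] - positions[j][k]) ** 2
                     for k in range(3))
            # Plummer-type softening (assumed form); eps = 0 gives Newton.
            potential -= G * masses[i] * masses[j] / math.sqrt(d2 + eps * eps)
    return 2.0 * kinetic / abs(potential)

# Two equal masses on a circular orbit are exactly virialized.
masses = [0.5, 0.5]
positions = [(-0.5, 0.0, 0.0), (0.5, 0.0, 0.0)]
velocities = [(0.0, -0.5, 0.0), (0.0, 0.5, 0.0)]
q = virial_ratio(masses, positions, velocities)
```

For the two-body check, K = 0.125 and W = −0.25, so that the ratio equals 1.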

|

Fig. 2. Oscillation of the virial ratio as a function of time for different choices of code configuration parameters for the simulation of a Plummer distribution of 10⁵ equal-mass particles. The continuous line shows θ = 0.6, ϵ = 0.2; the dashed line represents θ = 1.0, ϵ = 0.2; the dotted line shows θ = 0.6, ϵ = 0.5; and the dash-dotted line denotes θ = 1.0, ϵ = 0.5. |

We note that we do not work with a classical high-precision N-body code such as NBODY6 (see, e.g., Aarseth 1999). The Newtonian force is approximated by means of both the multipole expansion, which is applied when particles are sufficiently far apart, and the softening-length damping, which acts when the particles approach within a distance of about ϵ. Despite these approximations, acceptable results can be obtained in a noncollisional system like our Plummer distribution, and the results lie within reasonable errors.

3.1.2. Sedov–Taylor blast wave

To test the code with strong shock waves, we simulated the effects of a point explosion on a homogeneous infinite hydrodynamical medium with constant density ρ0 and null pressure. If an amount of energy E0 is injected at a certain point r0, an explosion occurs and then a radial symmetric shock wave propagates outward. Sedov (1959) investigated this problem and found a simple analytical law for the time evolution of the shock front:

$$ r_s(t) = \left( \frac{E_0 \, t^2}{a \, \rho_0} \right)^{1/5} , $$ (22)

where rs is the radial position of the front relative to the point of the explosion r0, while a is a function of the adiabatic constant γ (it is close to 0.5 for γ = 5/3, and it approaches 1 for γ = 7/5). Furthermore, the fluid density immediately behind the shock front (r ≤ rs) has the following radial profile:

(23)

(23)

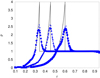

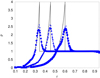

where Gγ is an analytical function of the relative radial coordinate r/rs. As in many previous works (see, e.g., Rosswog & Price 2007; Tasker et al. 2008), we set the initial conditions for a homogeneous and static medium (ρ0 = 1, v = 0) by placing 10⁶ equal-mass particles in a cubic lattice structure, confined in a box with x, y, and z coordinates each ranging from −1 to 1. γ was set to 5/3, and the explosion was simulated by giving an amount of energy E0 = 1 to the origin of the system. An exact point explosion cannot be reproduced with an SPH system because its spatial resolution is determined by the kernel support. We therefore needed to inject the energy in a small region with the same scale as 2h. We thus gave at a time t* the energy E0 to the particles that were enclosed in a sphere with radius R = 2h. Figure 3 shows three different radial average density profiles ρ(r, t′) that correspond to the times t = 0.05, t = 0.1, and t = 0.2. The results are compared with the analytical solution. Although the position of the front follows the expected law of Eq. (22), the peak does not reach the expected strong-shock value ρ0(γ + 1)/(γ − 1) = 4ρ0.
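The expected front positions for the three output times can be estimated with a few lines of Python. The sketch assumes the standard Sedov scaling rs = (E0 t²/(a ρ0))^(1/5) and the strong-shock density jump (γ + 1)/(γ − 1); the numerical value a = 0.49 for γ = 5/3 is an assumption consistent with "close to 0.5" in the text, and the function names are hypothetical.

```python
def shock_radius(t, e0=1.0, rho0=1.0, a=0.49):
    """Radius of the Sedov-Taylor shock front at time t.

    a = 0.49 is an assumed value of the gamma-dependent constant
    for gamma = 5/3.
    """
    return (e0 * t ** 2 / (a * rho0)) ** 0.2

def post_shock_density(rho0=1.0, gamma=5.0 / 3.0):
    """Density just behind a strong shock (Rankine-Hugoniot limit)."""
    return rho0 * (gamma + 1.0) / (gamma - 1.0)

radii = {t: shock_radius(t) for t in (0.05, 0.1, 0.2)}
peak = post_shock_density()
```

With these assumptions the front lies at rs ≈ 0.35, 0.46, and 0.61 at the three times, well inside the box of half-side 1, and the ideal peak density is 4ρ0.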

|

Fig. 3. Radial density profiles of the Sedov–Taylor blast wave at several times (increasing rightward) t = 0.05 (circles), t = 0.1 (triangles), and t = 0.2 (squares) in a simulation with N = 10⁶. The results obtained using a higher resolution (N = 3 375 000) are plotted with dotted lines. The full lines represent the classical Sedov–Taylor self-similar solutions. |

Intrinsic errors in the kernel-smoothed approximation of the physical quantities cause the density to spread out and follow a wider distribution than the true profile. This corresponds to a smoothing of the vertical discontinuity and thus to a lower density peak. The same figure shows a comparison with results obtained from a further test that was made with the same system, but using a higher resolution (N = 3 375 000). The peak of the curve clearly reaches a higher value.

3.1.3. Polytropes at equilibrium

We tested our code in the case of hydrodynamic self-gravitational systems by building static polytropes with different indexes (n = 1, n = 3/2, and n = 2). A generic polytrope of index n constitutes a radially symmetric system whose equation of state follows the expression

$$ P = K_n \, \rho^{1 + 1/n} , $$ (24)

where the density is parametrized as ρ(r)/ρ0 = θⁿ(r), and ρ0 is the central value. The static radial solution θ(r) can be found by writing an equilibrium condition between the hydrostatic pressure gradient and the gravitational forces, from which the well-known Lane–Emden equation can be obtained (an exhaustive treatment can be found, e.g., in Chandrasekhar 1958):

$$ \frac{\alpha^2}{r^2} \frac{d}{dr} \left( r^2 \frac{d\theta}{dr} \right) = -\theta^n , $$ (25)

with α² = (n + 1)Kn ρ0^((1−n)/n)/(4πG), and Kn a suitable normalization coefficient. For an index n ∈ (0, 5), the system has a finite radius and the coefficient Kn depends, through α², both on the radius R and on the total mass M. We set both of them to 1 in our tests, implying K1 ≈ 0.637, K3/2 ≈ 0.424, and K2 ≈ 0.365.
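The quoted values of Kn can be cross-checked numerically. The following Python sketch integrates the Lane–Emden equation in its dimensionless form (ξ = r/α) with a fixed-step fourth-order Runge–Kutta scheme and then applies the standard relations R = αξ1, M = 4πα³ρ0(−ξ1² dθ/dξ|ξ1), and α² = (n + 1)Kn ρ0^((1−n)/n)/(4πG). It is an independent check under these textbook assumptions, not part of GaSPH; the function names and the step size are arbitrary choices.

```python
import math

def lane_emden_surface(n, h=1e-3):
    """Integrate the Lane-Emden equation with RK4 up to the first zero.

    Returns (xi1, m1) with xi1 the dimensionless radius and
    m1 = -xi1**2 * dtheta/dxi evaluated at xi1.
    """
    def rhs(xi, theta, dtheta):
        # theta is clipped at zero so that theta**n stays real near the surface
        return dtheta, -max(theta, 0.0) ** n - 2.0 * dtheta / xi

    xi = h  # start slightly off-center using the series expansion
    theta = 1.0 - xi ** 2 / 6.0 + n * xi ** 4 / 120.0
    dtheta = -xi / 3.0 + n * xi ** 3 / 30.0
    while True:
        k1 = rhs(xi, theta, dtheta)
        k2 = rhs(xi + h / 2, theta + h / 2 * k1[0], dtheta + h / 2 * k1[1])
        k3 = rhs(xi + h / 2, theta + h / 2 * k2[0], dtheta + h / 2 * k2[1])
        k4 = rhs(xi + h, theta + h * k3[0], dtheta + h * k3[1])
        new_theta = theta + h / 6 * (k1[0] + 2 * k2[0] + 2 * k3[0] + k4[0])
        new_dtheta = dtheta + h / 6 * (k1[1] + 2 * k2[1] + 2 * k3[1] + k4[1])
        if new_theta <= 0.0:
            # linear interpolation to the surface theta = 0
            frac = theta / (theta - new_theta)
            xi1 = xi + frac * h
            d1 = dtheta + frac * (new_dtheta - dtheta)
            return xi1, -xi1 ** 2 * d1
        xi, theta, dtheta = xi + h, new_theta, new_dtheta

def polytropic_constant(n, mass=1.0, radius=1.0, G=1.0):
    """K_n for a polytrope of index n with given total mass and radius."""
    xi1, m1 = lane_emden_surface(n)
    alpha = radius / xi1
    rho0 = mass / (4.0 * math.pi * alpha ** 3 * m1)
    return 4.0 * math.pi * G * alpha ** 2 * rho0 ** ((n - 1.0) / n) / (n + 1.0)

k_values = {n: polytropic_constant(n) for n in (1.0, 1.5, 2.0)}
```

For M = R = G = 1 this check gives values that agree with the three coefficients quoted in the text to the stated precision; for n = 1 the result reduces to the analytical value 2/π.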

We tested the ability of our code to let a system spontaneously relax into a polytrope configuration, following the prescription adopted in Price & Monaghan (2007). Starting from a homogeneous sphere of particles placed in a lattice structure, the system was allowed to evolve by forcing the pressure to follow Eq. (24). We forced the SPH system to relax by damping the velocities with an additional acceleration adamp = −0.05 v, until the kinetic energy decreased to a small fraction (1%) of the total energy. A standard nonconstant α was chosen for the artificial SPH viscosity, with α0 = 0.1, and the number of neighbors was set to 110.

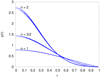

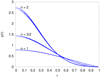

A correct treatment of self-gravity and hydrodynamic interactions among SPH particles, together with the choice of the equation of state (24), allows the system to acquire the density profile ρ0θⁿ, which is the solution of Eq. (25). Figure 4 shows the three radial density profiles we obtained for the different polytropic indexes. The resolution, related to the particle number, affects the accuracy of the code in correctly sampling the profile ρ(r), especially in the central denser regions. Mainly for the higher index n = 2, a higher particle number is needed to let the numerical density approach the theoretical expected value at a specific accuracy level. We used 10 000 particles for the models with n = 1 and n = 3/2, while the polytrope with index n = 2 was built with 20 000 particles.

|

Fig. 4. Equilibrium analytical solutions of the density profiles ρ(r) related to three different models of polytropes with indexes n = 1, n = 3/2, and n = 2, drawn as solid curves. Comparison with the computed profiles (dots). |

3.1.4. Gaseous Plummer distribution

We tested the equilibrium of a static gas density distribution according to the Plummer function:

$$ \rho (r) = \rho_0 \left[ 1 + \left( \dfrac{r}{a} \right)^2 \right]^{-5/2} , $$ (26)

with the central density defined by ρ0 = 3M/(4πa³), where M and a are the total mass of the system and a characteristic length, respectively. We set M = 1 and a = 1 (G = 1 in internal code units) so that the half-mass radius of the system is r(50%) ≈ 1.3. In a static configuration with a null velocity field, the gas SPH particles compensate for the mutual self-gravity with a pressure gradient that results from a temperature distribution T(r) = κρ(r)^(1/5). Here κ is a constant that is calibrated by taking the equation of state of a perfect gas, P = (γ − 1)ρu, into account and by imposing the virial equilibrium between the gravitational energy W and the total thermal energy U, that is, |W| = 2U. We placed 50 000 particles according to a Monte Carlo sampling of the distribution in Eq. (26). A realistic distribution has an infinite radius, therefore we used a cutoff at a radial distance r ≈ 22, such that the distribution contained 99.8% of the mass of an infinitely extended Plummer sphere. Figure 5 shows the time variation of some Lagrangian radii that contain 5%, 15%, 30%, 50%, 75%, and 90% of the total system mass for an integration time of 90 central free-fall timescales (τ0 = (3π/(32Gρ0))^(1/2) ≈ 1 in our code units).
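A Monte Carlo sampling of Eq. (26) can be sketched in Python by inverting the cumulative mass profile of the Plummer sphere, M(r)/M = r³/(r² + a²)^(3/2). The sketch is only an illustration: the function names, the seed, and the rejection of radii beyond the cutoff are assumptions and do not necessarily reflect how the initial conditions of the paper were generated.

```python
import math
import random

def sample_plummer_radii(n, a=1.0, r_cut=22.0, seed=0):
    """Draw n radii from a Plummer profile truncated at r_cut.

    Inversion of M(r)/M = X gives r = a / sqrt(X**(-2/3) - 1).
    """
    rng = random.Random(seed)
    radii = []
    while len(radii) < n:
        x = rng.random()
        if x == 0.0:
            continue  # avoid division by zero at the exact center
        r = a / math.sqrt(x ** (-2.0 / 3.0) - 1.0)
        if r <= r_cut:  # reject the few points beyond the cutoff
            radii.append(r)
    return radii

def central_free_fall_time(mass=1.0, a=1.0, G=1.0):
    """tau_0 = sqrt(3 pi / (32 G rho_0)) with rho_0 = 3 M / (4 pi a^3)."""
    rho0 = 3.0 * mass / (4.0 * math.pi * a ** 3)
    return math.sqrt(3.0 * math.pi / (32.0 * G * rho0))

radii = sorted(sample_plummer_radii(50000))
half_mass_radius = radii[len(radii) // 2]
tau0 = central_free_fall_time()
```

The median radius of the sample reproduces the half-mass radius r(50%) ≈ 1.3 quoted in the text, and τ0 ≈ 1.11 in code units.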

|

Fig. 5. Lagrangian radii as a function of time for a hydro-Plummer distribution at equilibrium (see Sect. 3.1.4). The radii are normalized to their respective initial values. |

We compared the results with the well-known gravitational SPH code Gadget-2 (Springel 2005). Figures 6 and 7 show a comparison between the radial density profiles obtained by the two algorithms with the same choice of the main parameters. The α viscosity coefficient was set constant and equal to 1. The reported density was computed at t = 90 τ0, even though the system reached an acceptable equilibrium state already within a few units of τ0 after several slight oscillations.

|

Fig. 6. Radial density profile for a Plummer distribution with 50 000 SPH particles (solid line). The results obtained with Gadget-2 are also shown (dashed line). The analytical Plummer profile is plotted with a dotted line. The density is in units such that ρ0 = 3/(4π). |

|

Fig. 7. Logarithmic ratio ρN/ρA of the numerical to analytical radial density profile for a Plummer distribution with 50 000 SPH particles (solid line). The results obtained with Gadget-2 are also shown (dashed line). |

In Fig. 7 we can distinguish three main radial zones for r ≤ 0.3, 0.3 < r < 2, and r ≥ 2. In the middle zone, the codes are in good agreement and provide a density profile that deviates by less than 2% from the analytical model. For r < 0.3, Gadget-2 describes a density that deviates by up to 6% from the expected value, while our program has a maximum deviation of 11%. For both models, these higher errors can be ascribed to the fact that the system contains only about 2% of the total mass (and thus 2% of the total particles) within the radial distance r = 0.3, which causes a poor sampling of the potential inside the sphere. Consequently, the system tends to shrink slightly. In the outward zone, the deviations can reach significantly higher values with both codes because the density values are far lower than in the central zone. We therefore conclude that in the context of a standard physical environment, the two codes show a satisfactory agreement overall.

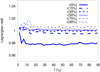

3.1.5. Artificial viscosity and angular momentum conservation

A nonconstant artificial viscosity may lead to nonconservation of the angular momentum, L. The actual conservation of this quantity was tested with different settings of the artificial viscosity parameters in Eq. (14), integrating the time evolution of a system that was very similar to the one described in the previous section. We used the same Plummer distribution (see Eq. (26)), with M = 1 and a = 1, made of 50 000 SPH particles. The same thermal energy profile was adopted, but scaled down by a factor 1/2, so that  . We converted the (subtracted) thermal energy into kinetic energy by assigning to each ith particle a clockwise azimuthal velocity, with absolute value

. We converted the (subtracted) thermal energy into kinetic energy by assigning to each ith particle a clockwise azimuthal velocity, with absolute value  (where ui was the original specific thermal energy characterizing the Plummer system used in Sect. 3.1.4), and a direction parallel to the X, Y plane. The system thus acquired a nonzero vertical component of the angular momentum,

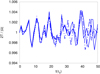

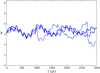

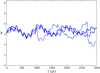

(where ui was the original specific thermal energy characterizing the Plummer system used in Sect. 3.1.4), and a direction parallel to the X, Y plane. The system thus acquired a nonzero vertical component of the angular momentum,  . The virial equilibrium was still formally preserved because gravitational potential energy and thermokinetic energy were the same such that |W|=2(K + U), but the (new) angular rotation triggered changes in the density distribution. We integrated in time for about 100 initial central free-fall timescales τ0 (which is on the same order as the azimuthal dynamical timescale, taken as the ratio rc/v(rc)≈1.4, where rc is the initial radius at which the density drops by a factor 1/2). We performed three different simulations by varying in the α rate Eq. (14) the parameters αmin and b. We set αmin = 0.1, b−1 = 5, αmin = 0.02, b−1 = 5, and αmin = 0.1, b−1 = 7. The angular rotation changes the configuration of the system, which causes the initial Plummer density distribution to become flatter perpendicularly to the z-axis, while the whole system expands. During an initial phase of about 20τ0, the distribution underwent some rapid variation followed by a slow secular evolution. Figure 8 shows the quantity (Lz−Lz0)/|Lz0|, which represents the variation as a function of time of the component Lz compared to its initial value Lz0. The three lines refer to the different choices of the parameters αmin and b. The curves show a conservation of the angular momentum within 10−3 up to 100 evolution timescales. In particular, the choice of αmin = 0.1, b−1 = 7, compared with the other configurations, gives a stronger variation of Lz during the first phases, while it shows a lower change rate during the secular evolution of the system. On the other hand, a low value αmin = 0.02 gives rise to a better conservation in the initial phases and a higher deviation during later stages. 
We observed in all three simulations that the two components Lx and Ly maintain values compared to Lz within a relative error of 10−3.

. The virial equilibrium was still formally preserved because gravitational potential energy and thermokinetic energy were the same such that |W|=2(K + U), but the (new) angular rotation triggered changes in the density distribution. We integrated in time for about 100 initial central free-fall timescales τ0 (which is on the same order as the azimuthal dynamical timescale, taken as the ratio rc/v(rc)≈1.4, where rc is the initial radius at which the density drops by a factor 1/2). We performed three different simulations by varying in the α rate Eq. (14) the parameters αmin and b. We set αmin = 0.1, b−1 = 5, αmin = 0.02, b−1 = 5, and αmin = 0.1, b−1 = 7. The angular rotation changes the configuration of the system, which causes the initial Plummer density distribution to become flatter perpendicularly to the z-axis, while the whole system expands. During an initial phase of about 20τ0, the distribution underwent some rapid variation followed by a slow secular evolution. Figure 8 shows the quantity (Lz−Lz0)/|Lz0|, which represents the variation as a function of time of the component Lz compared to its initial value Lz0. The three lines refer to the different choices of the parameters αmin and b. The curves show a conservation of the angular momentum within 10−3 up to 100 evolution timescales. In particular, the choice of αmin = 0.1, b−1 = 7, compared with the other configurations, gives a stronger variation of Lz during the first phases, while it shows a lower change rate during the secular evolution of the system. On the other hand, a low value αmin = 0.02 gives rise to a better conservation in the initial phases and a higher deviation during later stages. We observed in all three simulations that the two components Lx and Ly maintain values compared to Lz within a relative error of 10−3.
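The conservation diagnostic plotted in Fig. 8 amounts to evaluating the total angular momentum at each output and comparing its z-component with the initial value. A minimal Python sketch, with hypothetical function names and a one-particle example, is:

```python
def angular_momentum(masses, positions, velocities):
    """Total angular momentum L = sum_i m_i (r_i x v_i), as (Lx, Ly, Lz)."""
    lx = ly = lz = 0.0
    for m, (x, y, z), (vx, vy, vz) in zip(masses, positions, velocities):
        lx += m * (y * vz - z * vy)
        ly += m * (z * vx - x * vz)
        lz += m * (x * vy - y * vx)
    return lx, ly, lz

def fractional_variation(lz, lz0):
    """Quantity shown in Fig. 8: (Lz - Lz0) / |Lz0|."""
    return (lz - lz0) / abs(lz0)

# A single particle moving clockwise in the X, Y plane has Lz < 0.
l0 = angular_momentum([2.0], [(1.0, 0.0, 0.0)], [(0.0, -3.0, 0.0)])
drift = fractional_variation(-6.003, l0[2])
```

A clockwise rotation gives a negative Lz, which is why the variation is normalized to the absolute value |Lz0|.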

|

Fig. 8. Fractional variation in the z-component of the angular momentum for our simulated rotating Plummer model (see Sect. 3.1.5). We plot the results obtained by varying some configuration parameters: αmin = 0.1, b⁻¹ = 5 (full line), αmin = 0.02, b⁻¹ = 5 (dotted line), and αmin = 0.1, b⁻¹ = 7 (dash-dotted line). |

3.2. Code performance

In order to analyze the computational efficiency of our algorithm as a function of the particle number, we performed several tests by measuring the average CPU time that was spent for a single run by the main routines. We studied the performance of the GaSPH code in three different contexts:

- (1) A system with pure self-gravity and zero pressure, adding a comparison with the results of Gadget-2.

- (2) A system with self-gravity and SPH pressure.

- (3) A system similar to that of case 2, but with the addition of 20 point star-like external objects.

We performed the tests by placing a set of N particles with the same Plummer density profile as adopted in Sect. 3.1.1. The program was tested on an Intel® Core™ i7-4710HQ architecture with 6 MB of cache memory and 16 GB of DDR3L RAM with a data-transfer speed of 1600 MHz.

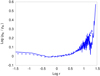

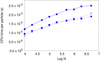

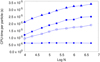

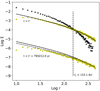

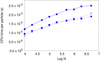

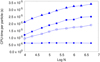

For a standard tree code without SPH, the computational time per particle is expected to be linear in log N because the overall time scales as N log N. To increase the efficiency and save considerable memory resources, we can also use a simpler formalism that considers only the first “monopole” term, −GM r/r³, that appears on the right-hand side of Eq. (3). This technique was also adopted in Gadget-2; it reduces the complexity of the algorithm by avoiding the computation of the quadrupole tensor. The suppression of the quadrupole term decreases the computational time, with a minor cost in terms of accuracy. For a purely self-gravitating system, Fig. 9 shows the CPU time needed for a single particle force calculation as a function of the base-10 logarithm of the particle number N (ranging from 10⁴ to 5 × 10⁶). Choosing an opening angle θ = 0.6, we performed a series of force evaluations by considering a simple pressureless system, with particles interacting only through the Newtonian field. The computational times measured using our code (averaged over a reasonable number, ≥30, of equal tests) are comparable with the average CPU times measured using Gadget-2. The figure also shows the results of a second series of runs with GaSPH performed with the quadrupole term included in the gravitational field. Including this term requires an additional CPU time of about 30%. Figure 10 compares the previous CPU times with the times needed by GaSPH for a full self-gravitating SPH system, with the quadrupole term included in the computation and the same value of θ = 0.6. The times per particle for the density computation routine are also shown. In computing the acceleration, the additional time per particle is fairly independent of N, as can be seen in the figure, because the close SPH interactions are always made over a fixed number of neighboring points, which we set to 60 in this example. For the same reason, the average time per particle needed to calculate the density is also expected to be constant, as Fig. 10 shows. The calculation of ρ and h requires an iterative process in which the routine for each particle is called several times. The CPU times illustrated in the figure are the average values per single iteration. To determine the optimum value of h, the code typically requires no more than two iterations.

|

Fig. 9. CPU time per particle for the pure gravitational force calculation in monopole and quadrupole approximations at different N. Gadget-2 results (empty circles) are compared to our code results (squares connected with dashed lines) in the same monopole approximation. The continuous line refers to the performance of GaSPH with the quadrupole term included in the field. |

|

Fig. 10. GaSPH average CPU times per particle as a function of N. Comparison of different routines: SPH neighbor-search routine averaged for a single iteration (circles), pure gravity computation with monopole term (empty squares) and with quadrupole term (filled squares), self-gravity computation up to quadrupole and including the SPH terms (triangles). The units are the same as in Fig. 9. |

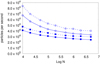

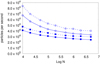

The optional introduction of the offset term ΔCM in the opening criterion (as discussed in Sect. 2.2.6) causes in some cases a considerable reduction of the effective angle θ. Consequently, the number of direct particle-to-particle interactions increases, which decreases the code performance. Figure 11 illustrates the code efficiency in terms of the number of particles processed per second. The results, related to the two acceleration routines (pure self-gravity and self-gravity with SPH) shown in the previous graph, are compared with other results obtained by including the offset term ΔCM in the opening criterion of Eq. (21). A substantial but not drastic worsening in performance can be observed. For instance, using 5 × 10⁶ SPH particles and including ΔCM, the code computes the accelerations at a rate of ≈24 000 particles per second (about 17% slower than the case without ΔCM). Computations were made with θ = 0.6 and including the quadrupole terms. The performance of the same two force subroutines (pure gravity and gravity plus hydrodynamics) was also studied at different values of θ (CPU times per particle as a function of N are shown in Fig. 12).

|

Fig. 11. Number of processed particles per second. The continuous lines and the dashed lines refer to results with and without the correction term ΔCM, respectively. We show the simple gravity field calculation (empty circles) and the full self-gravity routine with hydrodynamics (squares). The quadrupole term is considered for the gravity field. θ = 0.6. |

|

Fig. 12. Computational time per particle vs. log N for various values of the opening angle θ. The pure tree gravitational algorithm and the full SPH+gravity algorithm are shown as dashed and solid lines, respectively. The quadrupole term is included in the force evaluation. The ΔCM offset term is not considered by the opening criterion. |

Smaller angles should provide higher precision at the cost of a longer computational time. On the other hand, with larger angles there are fewer direct point-to-point interactions and we gain in efficiency, but we expect a lower accuracy. We evaluated the accuracy of our tree code by measuring a “mean relative error” in computing the accelerations according to the prescription suggested by Hernquist (1987), with different conditions of particle number N and opening angle θ. The prescription consists of a comparison of the three components of the acceleration vector, as computed by means of the tree scheme, with the “exact” values computed by direct summation. A mean error is computed by averaging over all the N particles. Then, the relative error is computed as follows:

(27)

(27)

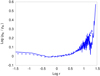

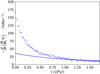
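The comparison between an approximated and a directly summed acceleration can be illustrated with a small Python sketch. It does not reproduce Eq. (27): the error measure used here (norm of the difference divided by the norm of the exact vector) is a simplified stand-in, the function names are hypothetical, and only the monopole term is considered.

```python
import math

def direct_acceleration(target, masses, points, G=1.0):
    """Newtonian acceleration at `target` by direct summation."""
    ax = ay = az = 0.0
    for m, p in zip(masses, points):
        dx, dy, dz = p[0] - target[0], p[1] - target[1], p[2] - target[2]
        r3 = (dx * dx + dy * dy + dz * dz) ** 1.5
        ax += G * m * dx / r3
        ay += G * m * dy / r3
        az += G * m * dz / r3
    return ax, ay, az

def monopole_acceleration(target, masses, points, G=1.0):
    """Acceleration from the total mass placed at the center of mass."""
    mtot = sum(masses)
    com = tuple(sum(m * p[k] for m, p in zip(masses, points)) / mtot
                for k in range(3))
    return direct_acceleration(target, [mtot], [com], G)

def relative_error(approx, exact):
    """Simplified error measure: |approx - exact| / |exact|."""
    num = math.sqrt(sum((a - e) ** 2 for a, e in zip(approx, exact)))
    return num / math.sqrt(sum(e * e for e in exact))

# A "box" of side 1 that contains two equal masses at opposite faces.
masses = [0.5, 0.5]
points = [(-0.5, 0.0, 0.0), (0.5, 0.0, 0.0)]
errors = {}
for r in (2.0, 10.0):
    target = (r, 0.0, 0.0)
    errors[r] = relative_error(monopole_acceleration(target, masses, points),
                               direct_acceleration(target, masses, points))
```

For this deliberately unfavorable two-point box, the monopole error drops from about 17% at a distance of twice the box side to below 1% at ten times the box side, which illustrates why smaller opening angles give more accurate forces.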

Figure 13 shows these relative errors, obtained with GaSPH, as a function of the CPU time. The figure does not show the relative errors for each single component, but shows the mean values, computed by a simple average over the three Cartesian components. Results for several setup configurations are illustrated. The figure shows the results in three different panels, according to the value of N (N = 10⁴, N = 10⁵, and N = 10⁶). For each value of N, we used different combinations of the parameter θ (0.4, 0.6, 0.8) with different opening criteria (Eq. (20) or Eq. (21)) and different multipole approximations (only the monopole term, or also including the quadrupole term). As the data show, the approximation of the field with the quadrupole moment always represents the best choice in terms of performance because at the same error, it requires a shorter CPU time than the monopole approximation. On the other hand, the choice of the new opening criterion gives a smaller improvement of the error with respect to the benefits that are obtained by switching from the monopole to the quadrupole term.

Fig. 13. Tree code relative errors Err(ak) (averaged over all the three Cartesian coordinates) for the gravitational field computation as a function of the CPU time. The data are illustrated for different particle numbers (N = 10⁴, N = 10⁵, and N = 10⁶) in panels a, b, and c. In each panel, the full line connects the points related to a computation of the gravitational field made with the quadrupole approximation, while the dashed line refers to computations made by using just the monopole term. The shape of the “void” markers distinguishes different choices of θ with the “standard” criterion (Eq. (20)): θ = 0.8 (void squares), θ = 0.6 (void triangles), and θ = 0.4 (void circles). Results for different opening angles with the “opening law” (Eq. (21)) criterion are also marked: θ = 0.8 (solid squares), θ = 0.6 (solid triangles), and θ = 0.4 (solid circles).

For lower particle numbers, a better computational performance without loss of accuracy can be obtained by including the quadrupole approximation and the (more expensive) opening criterion given in Eq. (21), together with a suitable change of the angle θ. We focus, for example, on the simulation setups characterized by θ = 0.6 with either opening criterion, monopole approximation, and N = 10⁴ or N = 10⁵. The change θ = 0.6 → θ = 0.8, together with the use of criterion (21), represents a good choice and provides better performing simulations without degrading the precision of the algorithm. We obtain the same advantage when we pass, similarly, from θ = 0.4 to θ = 0.6. On the other hand, for N = 10⁶ (panel c in Fig. 13), the results obtained with the monopole and with the quadrupole approximation differ less than in the cases with lower N. The choice of a larger opening angle (passing from θ = 0.6 to θ = 0.8 or from θ = 0.4 to θ = 0.6) together with the use of the new opening criterion can give a better performance even though the accuracy slightly decreases.

If we wish to preserve the high efficiency of the tree code by keeping the CPU time scaling as N log N, an angle θ ≥ 0.3 must be chosen (Hernquist 1987). The choice of θ = 0.6 in quadrupole approximation or the choice of θ = 0.4 in monopole approximation together with criterion (21) represents a satisfactory option because it provides relative errors of at most about 10⁻³.

We then added Nob = 20 stars to the SPH distribution. As explained in the previous section, a 14th-order explicit method was applied to calculate the evolution of these objects, and given their low number in comparison to N, very little additional CPU time is expected. Table 1 reports the percentage of workload related to the main relevant subroutines for the new gas+stars system: the tree-building + particle-sorting routine, the density computation routine, the acceleration routine, and the star evolution routine. In addition, we report the rest of the time needed for the basic operations (such as v, u, and r updating, energy computation, and time-step computation). Different work balances are shown for several values of N. The percentage of workload related to the tree-building routine is stable at the order of 6% at different N. In contrast, the work needed by the density routine becomes less and less relevant as N increases, while the gravity+SPH computation becomes increasingly important. The computational effort to treat the evolution of stars, together with their interaction with the gas, is due both to the pure N-body RK coupling, which is expected to scale as Nob², and to the time for coupling each star with each SPH particle, which is expected to be linear in N. Nevertheless, Table 1 shows that 20 stars contribute very little to the total CPU time. For the specific purposes of our current work, we used at most two stars, and their contribution to the code effort is therefore far lower than 2% to 3%.
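The two scalings mentioned above can be made concrete with a small counting sketch. It only counts pairwise interactions per force evaluation, under the assumption that the star-star coupling is a direct sum over unique pairs and that each star is coupled directly to every SPH particle; it says nothing about actual CPU time, and the function name is hypothetical.

```python
def star_interaction_counts(n_gas, n_stars):
    """Number of pairwise interactions involving stars in one force evaluation.

    star_star : unique star-star pairs (quadratic in n_stars)
    star_gas  : star-particle pairs (linear in n_gas at fixed n_stars)
    """
    star_star = n_stars * (n_stars - 1) // 2
    star_gas = n_stars * n_gas
    return {"star_star": star_star, "star_gas": star_gas}

# With few stars, the star-gas term dominates the stellar cost and grows
# linearly with the number of SPH particles.
counts_20 = star_interaction_counts(100_000, 20)
counts_2 = star_interaction_counts(100_000, 2)
```

Going from 20 stars to 2 stars reduces the star-gas count by a factor of 10, which is consistent with the expectation that a binary adds a negligible load.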

Table 1. Work profiling (as a percentage of the total) of GaSPH, tested on a Plummer gas distribution with 20 stars and different numbers of SPH particles (first column).

4. Protoplanetary disks

This section is dedicated to the description of some tests performed on two main problems involving protoplanetary disks. The first test is to compare the numerical integration of a disk around one star with the analytical prediction (Sect. 4.1). The second test involves a self-gravitating disk that interacts with a binary star (Sect. 4.2).

4.1. Protoplanetary disks around one star

4.1.1. Disk model

Here we illustrate the general setup we used to model a protoplanetary disk in equilibrium around a star of mass Ms = 1 M⊙. According to the classical flared-disk model (see, e.g., Garcia 2011; Armitage 2011), we let the disk revolve around the central object with a roughly Keplerian frequency $\Omega_k = \sqrt{G M_s / R^3}$ (where R is the cylindrical coordinate $R = \sqrt{x^2 + y^2}$ in the reference frame centered on the central object). The disk evolution is essentially driven by secular viscous dissipation. According to the well-known α-disk model (Shakura & Sunyaev 1973), the turbulence in the internal disk is schematized by means of a pseudo-viscosity of the following form:

$$\nu = \alpha_{\rm SS}\, c_s H. \qquad (28)$$

This kinematic viscosity perturbs the fluid equations by leading to a net transport of matter inward and an outward flux of angular momentum. αSS represents a characteristic efficiency coefficient for the momentum transport, while H = cs/Ωk represents the characteristic vertical pressure scale height of the disk. The viscous evolution is usually much slower than the dynamical evolution (the characteristic secular timescale is ∝ r²/ν, which typically is 2 or 3 orders of magnitude larger than the dynamical timescale). This modeling of the turbulence is basically dimensional and is made by mainly taking dynamical turbulence processes into account. Thus, αSS extends over a wide range of variability (typically between 10⁻⁴ and 10⁻²). When the disk self-gravity is strong enough, another important effect arises that is due to the gravitational perturbations. Several works (see, e.g., Mayer et al. 2002; Boss 1998, 2003) have numerically estimated the gravitational timescale in a protoplanetary disk, which is of the same order as its dynamical time. They showed that under certain conditions, matter can undergo instabilities and eventually condense to form clumps in 10³ to 10⁴ yr, which may give rise to gaseous planets. It can be shown that the disks maintain their equilibrium state against collapse according to the Toomre criterion,

$$Q = \frac{c_s\, \Omega_e}{\pi G \Sigma} > 1, \qquad (29)$$

where Σ is the disk surface density and Ωe represents the epicyclic frequency, which is approximately equivalent to Ωk for Keplerian disks (see Binney & Tremaine 1987; Toomre 1964, for a detailed study). The Toomre factor is a general coefficient that quantifies the predominance of the gravitational processes over the typical thermal and dynamical actions.
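As a worked illustration of the quantities introduced in this subsection, the following Python sketch evaluates the Keplerian frequency, the pressure scale height H = cs/Ωk, the α viscosity, and the Toomre parameter. It assumes the standard forms ν = αSS cs H and Q = cs Ωe/(πGΣ), with Σ the disk surface density; the function names and the default CGS value of G are choices made here for illustration and are not taken from GaSPH.

```python
import math

G_CGS = 6.674e-8  # gravitational constant in cm^3 g^-1 s^-2

def keplerian_frequency(m_star, radius, G=G_CGS):
    """Keplerian angular frequency around a point mass m_star."""
    return math.sqrt(G * m_star / radius**3)

def scale_height(c_s, omega_k):
    """Vertical pressure scale height H = c_s / Omega_k."""
    return c_s / omega_k

def alpha_viscosity(alpha_ss, c_s, h):
    """Shakura-Sunyaev kinematic viscosity, nu = alpha_SS * c_s * H."""
    return alpha_ss * c_s * h

def toomre_q(c_s, omega_e, sigma, G=G_CGS):
    """Toomre parameter Q = c_s * Omega_e / (pi * G * Sigma)."""
    return c_s * omega_e / (math.pi * G * sigma)

# Example in code units (G = 1, M_s = 1, R = 1), so that Omega_k = 1.
omega = keplerian_frequency(1.0, 1.0, G=1.0)
h = scale_height(0.05, omega)
nu = alpha_viscosity(0.01, 0.05, h)
q = toomre_q(0.05, omega, 0.005, G=1.0)  # Omega_e taken equal to Omega_k
```

Using Ωe ≈ Ωk in the last line follows the Keplerian approximation stated in the text; for a massive disk the epicyclic frequency would have to be computed from the actual rotation curve.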

We initially let our disk revolve with an azimuthal velocity that depends both on the mass of the central star Ms and on the internal mass of the disk itself, M(R). The cumulative mass M(R) can be neglected only for low disk masses MD ≪ Ms. The shape of the disk in the direction perpendicular to the revolving midplane depends on the vertical pressure scale height H, such that pressure and density scale with a Gaussian profile exp(−z²/2H²). Here a local vertically isothermal approximation was used because we assumed that any radiative input energy from the star is efficiently dissipated away: the cooling times are far shorter than the dynamical timescales. The disk is thus vertically isothermal, and the temperature depends only on the radial distance from the central star.
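A minimal sketch of this initial setup is given below. It assumes a rotation curve of the form v = sqrt(G (Ms + M(R)) / R), which neglects any pressure-gradient correction, a cumulative mass M(R) = 2π ∫ Σ(R′) R′ dR′ evaluated with the trapezoidal rule, and a Gaussian vertical density normalized so that its vertical integral equals Σ. These forms and all function names are assumptions made for illustration, not the GaSPH source.

```python
import math

def enclosed_disk_mass(sigma, r_in, r, n=1000):
    """Cumulative disk mass 2*pi * integral of sigma(R') R' dR' from r_in to r.

    sigma : callable returning the surface density at a given radius
    n     : number of trapezoidal sub-intervals
    """
    if r <= r_in:
        return 0.0
    dr = (r - r_in) / n
    total = 0.0
    for i in range(n + 1):
        rp = r_in + i * dr
        weight = 0.5 if i in (0, n) else 1.0
        total += weight * sigma(rp) * rp
    return 2.0 * math.pi * total * dr

def azimuthal_velocity(r, m_star, m_enclosed, G=1.0):
    """Circular velocity from the star plus the disk mass inside r."""
    return math.sqrt(G * (m_star + m_enclosed) / r)

def vertical_density(z, sigma, h):
    """Gaussian vertical profile rho(z) = rho_0 * exp(-z^2 / (2 H^2))."""
    rho_0 = sigma / (math.sqrt(2.0 * math.pi) * h)
    return rho_0 * math.exp(-z * z / (2.0 * h * h))
```

For a low-mass disk, `m_enclosed` is negligible and the velocity reduces to the Keplerian value, in line with the condition MD ≪ Ms stated above.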

We set the thermal disk profile according to the well-known flared-disk model, for which the ratio H/R increases with R (see Garcia 2011; Dullemond et al. 2007, for a full clarification). The disk temperature thus follows the profile

$$T(R) = T_0 \left(\frac{R}{R_0}\right)^{-q}, \qquad (30)$$

which is commonly used by setting q = 1/2, while R0 represents a scale length. We used a slightly different slope q = 3/7, adopted by D’Alessio et al. (1999) by assuming that the thermal processes in the inner layers of the disk do not affect its dynamical stability.
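The link between the temperature slope and the flaring of the disk can be checked numerically. The sketch below assumes a power-law temperature T = T0 (R/R0)^(−q), a sound speed proportional to the square root of T, and a Keplerian frequency around a point mass, so that H/R scales as R^((1−q)/2). The names `t0`, `c0`, and the functions themselves are hypothetical and work in code units with G = 1.

```python
import math

def temperature(r, t0, r0, q):
    """Power-law temperature profile T = T0 * (R / R0)^(-q)."""
    return t0 * (r / r0) ** (-q)

def aspect_ratio(r, c0, r0, q, m_star=1.0, G=1.0):
    """Disk aspect ratio H/R for a locally isothermal disk.

    c0 is the sound speed at R0; since c_s is proportional to sqrt(T),
    c_s = c0 * (R / R0)^(-q/2). H = c_s / Omega_k with Keplerian Omega_k.
    """
    c_s = c0 * (r / r0) ** (-q / 2.0)
    omega_k = math.sqrt(G * m_star / r**3)
    return c_s / (omega_k * r)

# For any q < 1 the aspect ratio grows outward, i.e., the disk is flared.
inner = aspect_ratio(1.0, 0.05, 1.0, 3.0 / 7.0)
outer = aspect_ratio(2.0, 0.05, 1.0, 3.0 / 7.0)
```

With q = 3/7 the exponent of H/R is 2/7, slightly steeper than the 1/4 obtained for q = 1/2.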

With this temperature profile (independent of t and z), the gas pressure follows a barotropic equation of state. This choice represents a rough approximation of the cooling processes and allows us to model self-gravitating disks in equilibrium only when Q > 2, which excludes disk models with a state of marginal stability (Q ≈ 1). In a realistic model of a disk without the isothermal approximation, when the Toomre parameter approaches unity, the loss of thermal energy due to radiative cooling processes leads to matter aggregation, which in turn causes shock waves that heat the gas again. If the disk is capable of retaining a sufficient amount of the additional thermal energy that is generated, the collapse only causes some spiral instabilities that do not increase exponentially. The collapse process is thus arrested and the disk reaches a meta-stable state. Every time that a gravitational instability occurs, it is further dissipated by the heat back-production in this meta-state. For a detailed treatment, see, for example, Kratter & Lodato (2016). Conversely, the isothermal equation adopted by our model forces the system to cool down with infinitely high efficiency, and to expel all the additional thermal energy that is generated by the compression of matter. Thus, in regions where 1 ≤ Q < 2, the density increases and the collapse process is not halted by production of heat. Our model of a disk in equilibrium is thus limited to masses MD for which the self-gravity guarantees the condition Q ≥ 2.