| Issue | A&A Volume 684, April 2024 |
|---|---|
| Article Number | A192 |
| Number of page(s) | 32 |
| Section | Numerical methods and codes |
| DOI | https://doi.org/10.1051/0004-6361/202348687 |
| Published online | 24 April 2024 |

FARGOCPT: 2D Multiphysics code for simulating disk interactions with stars, planets, and particles★

1 Institut für Theoretische Astrophysik, Zentrum für Astronomie (ZAH), Universität Heidelberg, Albert-Ueberle-Str. 2, 69120 Heidelberg, Germany

e-mail: rometsch@uni-heidelberg.de

2 Institut für Astronomie und Astrophysik, Universität Tübingen, Auf der Morgenstelle 10, 72076 Tübingen, Germany

3 Fakultät für Physik, Universität Duisburg-Essen, Lotharstraße 1, 47057 Duisburg, Germany

4 Leibniz-Institut für Astrophysik Potsdam (AIP), An der Sternwarte 16, 14482 Potsdam, Germany

5 Universitäts-Sternwarte, Fakultät für Physik, Ludwig-Maximilians-Universität München, Scheinerstr. 1, 81679 München, Germany

Received: 21 November 2023

Accepted: 29 January 2024

Context. Planet-disk interactions play a crucial role in the understanding of planet formation and disk evolution. There are multiple numerical tools available to simulate these interactions, including the commonly used FARGO code and its variants. Many of the codes have been extended over time to include additional physical processes, with a focus on their accurate modeling.

Aims. We introduce FARGOCPT, an updated version of FARGO that incorporates other previous enhancements to the code, to provide a simulation environment tailored to studies of the interactions between stars, planets, and disks. It is meant to ensure an accurate representation of planet systems, hydrodynamics, and dust dynamics, with a focus on usability.

Methods. The radiation-hydrodynamics part of FARGOCPT uses a second-order upwind scheme in 2D polar coordinates, supporting multiple equations of state, radiation transport, heating and cooling, and self-gravity. Shocks are considered using artificial viscosity. The integration of the N-body system is achieved by leveraging the REBOUND code. The dust module utilizes massless tracer particles, adapted to drag laws for the Stokes and Epstein regimes. Moreover, FARGOCPT provides mechanisms to simulate accretion onto stars and planets.

Results. The code has been tested in practice in the context of multiple studies. Additionally, it comes with an automated test suite for checking the physics modules. It is available online.

Conclusions. FARGOCPT offers a unique set of simulation capabilities within the current landscape of publicly available planet-disk interaction simulation tools. Its structured interface and underlying technical updates are intended to assist researchers in ongoing explorations of planet formation.

Key words: hydrodynamics / methods: numerical / protoplanetary disks / planet–disk interactions / binaries: close / novae, cataclysmic variables

© The Authors 2024

Open Access article, published by EDP Sciences, under the terms of the Creative Commons Attribution License (https://creativecommons.org/licenses/by/4.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.


This article is published in open access under the Subscribe to Open model. Subscribe to A&A to support open access publication.

1 Introduction

Planet migration is a crucial component of our understanding of planet formation. Besides analytical or semi-analytical calculations, one way to study it is via hydrodynamic calculations coupled with gravitational N-body simulations. Specifically, in the context of planet formation, the shape of the hydrodynamic object resembles a thin, flared disk. The computer program FARGO (Masset 2000) is meant to simulate these protoplanetary disks by numerically solving the hydrodynamics equations on a staggered grid using a second-order upwind scheme. It includes a special algorithm, with the same name, which can relax the time step constraint by making use of the axial symmetry of the disk flow, thereby reducing the computational cost of simulations. The specific form of the hydrodynamic equations solved by the program is stated in Sect. 2.

Because FARGO is tailored to the study of protoplanetary disks, the code uses a cylindrical grid, reflecting the major symmetry of the system. One main assumption used in the code is that the simulated disks are thin in the sense that their vertical extent is small compared to the radial distance from the central star. This is the justification for approximating the three-dimensional (3D) disk with a two-dimensional (2D) representation. Using this approximation significantly reduces the required time for simulations of protoplanetary disks and enables the long-term study of these systems. In its original form, the code employed the (locally) isothermal assumption, in which the temperature is assumed to be fixed in time and only dependent on the distance from the central star. This allows for the energy equation to be solved analytically, while additionally reducing the computational cost of the simulation.

Over the past two decades, studies have shown that additional effects play an important role in the evolution of protoplanetary disks and their interaction with embedded planets (for overviews, see Kley & Nelson 2012; Baruteau et al. 2014; Paardekooper et al. 2023). The relevant physical processes include the heating and cooling of the gas (e.g., Baruteau & Masset 2008a; Kley & Crida 2008; Paardekooper & Papaloizou 2008; Lega et al. 2014; Masset 2017), self-gravity (e.g., Pierens & Huré 2005; Baruteau & Masset 2008b), and radiation transport (e.g., Morohoshi & Tanaka 2003; Paardekooper & Mellema 2006). We have added all of these effects to our version of the FARGO code and made them fully controllable from the input file and verified their correctness through extensive testing. We also extended the number of physical quantities that are evaluated during the simulation and written to output files.

While there are multiple options to run planet-disk interaction simulations including the aforementioned effects, we have recognized a need for a simulation code that is not only able to simulate the relevant physical processes accurately, but is also easy to use. This is especially important for students and researchers who are not experts in modifying and compiling C/C++ or FORTRAN codes in a typical Linux environment. These skills can pose a significant hurdle when running simulations.

The primary aim behind publishing this code is to provide a comprehensive simulation tool for planet-disk interactions that remains accessible and useful to both students and more senior researchers. To this end, the testing suite for the physical modules is fully automated with pass-fail tests so it can be run after every future modification of the code to ensure that it is still working as intended.

On the physics side, a significant enhancement is the incorporation of radiation physics. Furthermore, the introduction of a particle module enables in-depth studies on the impact of embedded planet systems on the structure and dust distribution of planet-forming disks, a task that is currently often performed to model disk observations. We also added cooling and mass inflow functions and an updated equation of state specifically tailored to study cataclysmic variable systems. Additionally, the code can simulate the disk centered around any combination of N-body objects. This enables, for instance, simulations of circumbinary disks in the center-of-mass frame or simulations of a circumsecondary disk in a binary star system.

Simulation programs for planet-disk interaction can, broadly speaking, be organized into two main categories: Lagrangian and Eulerian methods. Lagrangian methods trace the dynamics of the disk by following the motion of a large number of particles. The most common example of this is the Smoothed Particle Hydrodynamics (SPH) method, with a prominent example being the PHANTOM code (Price et al. 2018).

The Eulerian methods solve the hydrodynamics equations on a grid, which can either be fixed or dynamic. In this category, again, we can distinguish between two main approaches used in astrophysics: methods that require artificial viscosity and Godunov methods (Woodward & Colella 1984). Either of these methods can be implemented as a finite-volume or finite-difference method. Godunov methods solve the Riemann problem at the cell interfaces to compute the fluxes through the interfaces. This approach allows for an accurate treatment of shocks and an approximate treatment of smooth flows. Prominent examples include the PLUTO code (Mignone et al. 2007) and the Athena++ code (Stone et al. 2020).

Schemes based on artificial viscosity can be derived as a finite-difference scheme from Taylor series expansions of the Euler equations (Woodward & Colella 1984). This technique assumes that the solution is smooth, which is not the case at discontinuities such as shocks. It approximates shocks by smearing out discontinuities into smooth regions of strong gradients via artificial viscosity. The ZEUS code (Stone & Norman 1992) and the FARGO code (which is based on the ZEUS code) are prominent examples of this approach. While these codes were classified as finite-difference schemes, they employed a staggered grid and implemented their advection step by splitting the domain into cells and computing the fluxes through the cell interfaces. The cell as a basic geometrical unit and the sharing of fluxes among adjacent cells, which ensures the conservation of the advected quantities to machine-precision by construction, warrant the classification of the advection scheme as “finite-volume” (Anderson et al. 2020). We lean towards this classification because it rests on an intrinsic property of the scheme. However, the crucial distinction seems to be the one between Godunov methods and methods that require artificial viscosity and the FARGO code family falls into the latter category.

While Godunov methods are (formally) more accurate when handling discontinuities such as shocks, the artificial viscosity methods are better at modeling smooth flows and, in practice, they are often more robust and easier to use. Both approaches have their advantages and disadvantages and the choice of which method to use depends on the specific problem at hand. For a specific example, see Ziampras et al. (2023b) who used both PLUTO and FARGO3D to investigate the buoyancy waves in the coorbital region of small-mass planets.

The original FARGO code was introduced by Masset (2000). Based on this code, other groups developed their version of the FARGO code. Examples include FARGO2D1D (Crida et al. 2007), GFARGO (Regály et al. 2012; Masset 2015), FARGOCA (Lega et al. 2014), FARGO_THORIN (Chrenko et al. 2017), the official successor FARGO3D (Benítez-Llambay & Masset 2016), as well as FARGOADSG (Baruteau & Masset 2008a,b; Baruteau & Zhu 2016), which is the predecessor of our code.

In this work, we present FARGOCPT, the FARGO version developed by members of the Computational Physics group at the University of Tübingen since 2012. Publications from this group, using the code, include Müller & Kley (2012, 2013), Picogna & Kley (2015), Rometsch et al. (2020, 2021), and Jordan et al. (2021).

Furthermore, we present novel approaches for handling challenging aspects of planet-disk interaction simulations. We introduce a new method for handling the indirect term suitable for simulations centered on individual stars in binary systems (Sect. 3.5), a local viscosity stabilizer for use in the context of cataclysmic variables (Sect. 3.9.2), and a correction for dust diffusion treated by stochastic kicks (Sect. 3.12.2).

This document is structured as follows. First, the physical problem at hand is sketched out in Sect. 2. Then, the newly introduced physics modules are described in Sect. 3. Software engineering and usability aspects of the code are discussed in Sect. 4. Finally, we present our conclusions in Sect. 5 with a discussion of the code. The appendix includes a presentation of various test cases included in the automatic test suite.

2 Physical system

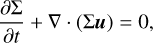

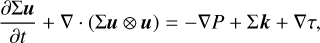

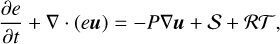

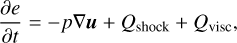

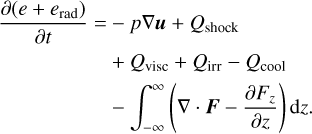

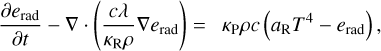

The FARGOCPT code is a computer program that numerically solves the vertically integrated radiation-hydrodynamics equations in the one-temperature approximation coupled with an N-body system. They are expressed as:

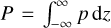

(1)

(1)

(2)

(2)

(3)

(3)

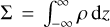

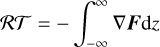

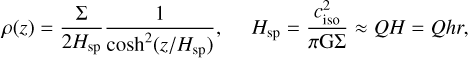

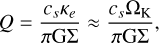

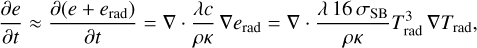

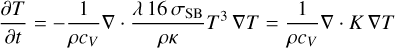

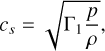

with the surface density, $\Sigma = \int_{-\infty}^{\infty} \rho \, \mathrm{d}z$, as the vertically integrated gas volume density, ρ, the gas velocity, u, the vertically integrated internal energy density, e = Σє, with the specific internal energy, є, the vertically integrated pressure, $P = \int_{-\infty}^{\infty} p \, \mathrm{d}z$, accelerations, k, due to external forces (e.g., due to gravity), the viscous stress tensor, τ, and heat sinks and sources, S (see Sect. 3.8). The last term represents the radiation transport:

$$\mathcal{RT} = -\int_{-\infty}^{\infty} \nabla \cdot \mathbf{F} \, \mathrm{d}z, \tag{4}$$

with the radiation flux, F, in three dimensions. In the code, the vertical component of the radiation flux is split off and treated with an effective model, see Sect. 3.8. The code handles radiation hydrodynamics using the one-temperature approach which is discussed in more detail in Sect. 3.8.5.

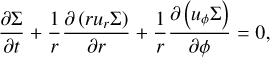

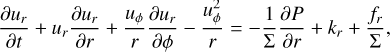

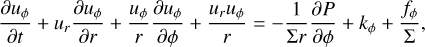

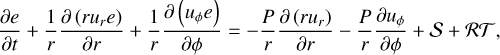

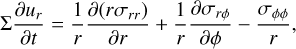

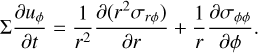

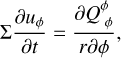

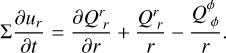

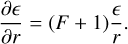

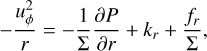

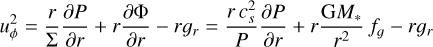

In polar coordinates (r, ϕ), to which the code is tailored, the equations are expressed as (e.g., Masset 2002):

(5)

(6)

(7)

(8)

where ƒr and ƒϕ are the radial and azimuthal forces per unit area due to viscosity (see Eqs. (82) and (83)). For a rotating coordinate system, we follow the conservative formulation of Kley (1998) and add the respective terms to the two momentum equations.

The left-hand sides of these equations are the transport step and the right-hand sides are the source terms. Following the scheme of the ZEUS code (Stone & Norman 1992), the transport step is solved by a finite-volume method based on an upwind scheme with a second-order slope limiter (van Leer 1977) and the code can make use of the FARGO algorithm (Masset 2000) to speed up the simulation. The source terms are updated as described in Sect. 3.1 using first-order Euler steps or implicit updates. The definitions for the heating and cooling term, S, and the radiation transport term, 𝓡𝒯, are given in Sect. 3.8.
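As a rough illustration of such a transport step, the following sketch advects a quantity on a one-dimensional periodic grid using upwind fluxes with a van Leer (harmonic mean) slope. It is not taken from FARGOCPT: the uniform grid, the constant positive velocity, and the function names `van_leer_slope` and `advect_periodic` are assumptions made for this example, and the staggered polar grid of the code is ignored.

```python
def van_leer_slope(q_left, q_center, q_right):
    """Harmonic-mean slope; zero at local extrema to avoid new oscillations."""
    d_minus = q_center - q_left
    d_plus = q_right - q_center
    if d_minus * d_plus <= 0.0:
        return 0.0
    return 2.0 * d_minus * d_plus / (d_minus + d_plus)


def advect_periodic(q, velocity, dx, dt):
    """Advance q by one step for a constant velocity >= 0 on a periodic grid."""
    courant = velocity * dt / dx
    if not 0.0 <= courant <= 1.0:
        raise ValueError("time step violates the CFL condition")
    n = len(q)
    slopes = [van_leer_slope(q[i - 1], q[i], q[(i + 1) % n]) for i in range(n)]
    # Flux through the right interface of cell i; cell i is the upwind cell.
    flux = [velocity * (q[i] + 0.5 * (1.0 - courant) * slopes[i]) for i in range(n)]
    # Each flux leaves one cell and enters its neighbour, so the sum of q
    # is conserved by construction.
    return [q[i] - dt / dx * (flux[i] - flux[i - 1]) for i in range(n)]


initial = [1.0] * 8 + [2.0] * 8 + [1.0] * 8
state = initial
for _ in range(20):
    state = advect_periodic(state, velocity=1.0, dx=1.0, dt=0.5)
print(sum(initial), sum(state))
```

Because every interface flux is subtracted from one cell and added to the adjacent one, the total of the advected quantity changes only at the level of round-off, which is the property that motivates the finite-volume classification discussed in Sect. 1.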

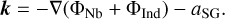

The external accelerations k are due to the gravitational forces from the star(s) and planets, correction terms in case of a non-inertial frame, and the self-gravity of the disk:

(9)

The interaction of the disk with the N-body objects is considered via the gravitational potential, as expressed in Eq. (15). Self-gravity of the gas is considered as an acceleration (see Sect. 3.7).

The N-body objects feel the gravitational acceleration a exerted by the gas. This is computed by summation of the smoothed gravitational acceleration over all grid cells. We refer to Sect. 3.6 for formulas and details about the smoothing. Because the simulation can be run in a non-inertial frame of reference, the correction terms are applied as detailed in Sect. 3.5.

Finally, FARGOCPT features a particle module based on Lagrangian super-particles, where a single particle might represent any number of physical dust particles or solid bodies. The particles feel the gravity from the N-bodies and interact with the gas through a gas-drag law. Additionally, dust diffusion is modeled to account for the effects of gas turbulence. The particle module is described in detail in Sect. 3.12. The next section describes the various physics modules that were added to the code.

3 Improvements of the physics modules

We built upon the original FARGO code by Masset (2000) and its improved version FARGOADSG. It already included treatment of the energy equation (Baruteau & Masset 2008a), self-gravity (Baruteau & Masset 2008b), and a Lagrangian particle module (Baruteau & Zhu 2016). We advanced these modules, added new physics modules, and added features to improve the usability of the code. For a detailed description of the FARGOADSG code, and with it of the underlying hydrodynamics part of the code presented here, we recommend Chap. 3 of Baruteau (2008). This section starts with outlining the order of operations in the operator splitting approach and then describes the various new features and changes.

3.1 Order of operations and interaction of subsystems

This section details the order in which the physical processes are considered during one iteration in the code and how they interact. For the update step, we used sequential operator splitting (also known as Lie-Trotter splitting) where possible, meaning that we always use the most up-to-date quantities from the previous operators when applying the current operator. This is the simplest and oldest splitting scheme and has better accuracy than applying all operators using the quantities at the beginning of the step. The latter scheme is known as additive splitting (e.g., Geiser et al. 2017). Each time step starts with accretion onto the planets. Conceptually, this is the same as performing planet accretion at the end of the time step, except for the first and last iteration of the simulation.
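The difference between the two splitting variants can be illustrated with a toy problem. The sketch below is not part of FARGOCPT; it assumes a scalar quantity subject to two decay operators, each advanced with a forward Euler sub-step, and all function names are hypothetical.

```python
import math


def decay_operator(rate):
    """Return a forward Euler update for dy/dt = -rate * y."""
    def apply(y, dt):
        return y - rate * y * dt
    return apply


def sequential_step(y, operators, dt):
    """Lie-Trotter splitting: each operator sees the output of the previous one."""
    for op in operators:
        y = op(y, dt)
    return y


def additive_step(y, operators, dt):
    """Additive splitting: every operator starts from the state at the step start."""
    return y + sum(op(y, dt) - y for op in operators)


def integrate(step, y0, operators, dt, n_steps):
    y = y0
    for _ in range(n_steps):
        y = step(y, operators, dt)
    return y


operators = [decay_operator(1.0), decay_operator(2.0)]
exact = math.exp(-3.0)  # analytic solution of dy/dt = -(1 + 2) y at t = 1
error_sequential = abs(integrate(sequential_step, 1.0, operators, 0.01, 100) - exact)
error_additive = abs(integrate(additive_step, 1.0, operators, 0.01, 100) - exact)
print(error_sequential, error_additive)
```

In this toy setup, the sequential variant ends closer to the analytic solution than the additive one, although both remain first-order accurate in time.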

Then, the code computes the gravitational forces between the N-body objects and the gas, between the N-body objects and the dust particles, and the self-gravity of the disk. At this stage, the indirect term, namely, the corrections for the non-inertial frame, is computed and added to the gravitational interaction. These are then applied to the subsystems by updating the velocities of the N-body objects, updating the acceleration of the dust particles, and updating the potential of the gas. Experience shows that for the interaction between N-body and gas the positions of the N-body objects have to be at the same time as the gas. From this point on, the N-body system and the particle system evolve independently in time until the end of the time step.

The gas velocities are first updated by the self-gravity acceleration and then by the N-body gravity potential, the pressure gradient, and, in the case of the radial update, also the centrifugal acceleration. At this step, the code updates the internal energy of the gas with compression heating. It is important to perform the energy update at this step before the viscosity is applied to avoid instabilities. Then, the code sequentially updates the velocities and the internal energy further by artificial viscosity, viscosity (both of which depend on the gas velocities), as well as heating and cooling terms. The heating and cooling steps are applied simultaneously for numerical stability. Finally, the internal energy is updated by radiation transport. Once all the forces and source terms have been applied, the transport step is conducted. Boundary conditions and the wave-damping zone are applied at the appropriate sub-steps throughout the hydrodynamics step.
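The order of the hydrodynamics sub-steps described above can be summarized in a short sketch. The names in `HYDRO_SUBSTEPS` and the function `run_hydro_step` are hypothetical labels chosen for this example and do not correspond to identifiers in the FARGOCPT source; boundary conditions and wave damping are omitted.

```python
# Hypothetical labels for the sub-steps, listed in the order given in the text.
HYDRO_SUBSTEPS = (
    "self_gravity_kick",
    "nbody_potential_pressure_centrifugal_kick",
    "compression_heating",
    "artificial_viscosity",
    "viscosity",
    "heating_and_cooling",
    "radiation_transport",
    "transport",
)


def run_hydro_step(state, operators):
    """Apply the sub-steps sequentially; each one receives the updated state."""
    for name in HYDRO_SUBSTEPS:
        state = operators[name](state)
    return state


# Usage with placeholder operators that only record when they were called.
call_log = []


def make_placeholder(name):
    def operator(state):
        call_log.append(name)
        return state
    return operator


placeholders = {name: make_placeholder(name) for name in HYDRO_SUBSTEPS}
run_hydro_step({}, placeholders)
print(call_log)
```

Passing the output of one operator directly into the next is what makes this a sequential splitting; in particular, compression heating acts before either viscosity operator modifies the state.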

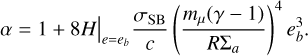

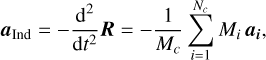

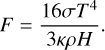

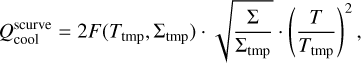

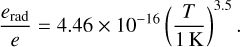

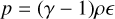

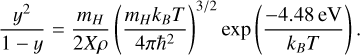

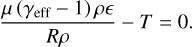

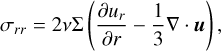

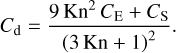

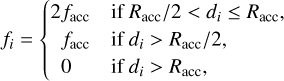

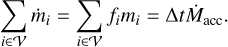

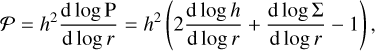

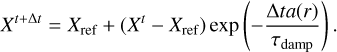

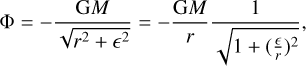

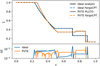

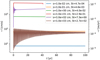

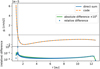

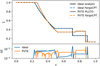

A detailed diagram of the order of the operations during each time step and the interactions between the subsystems is shown in Fig. 1. The diagram illustrates how each of the subsystems is advanced in time and between which sub-steps information is exchanged between the subsystems. The next paragraphs guide the reader through the diagram starting from the top, explaining the meaning of the differently shaped patches, arrows, and lines. Each of the three subsystems is colored differently: the N-body system is shown in green, the hydrodynamics in blue, and the dust particles in orange. A colored patch represents the “state” of a subsystem. Elliptical patches indicate the initial state at the beginning of a time step, parallelograms indicate the intermediate states, and colored rectangles with rounded corners indicate the final state at the end of the time step. Each state is labeled with variable names. The respective subscript indicates the sub-step during the operator splitting. For example, the energy is updated from en, first to ea, then to eb and after additional steps finally to en+1. These variables will be used in Sect. 3.8 to refer to the sub-steps. A variable printed in bold text indicates that the variable was changed by the last operation.

A white rectangle with rounded corners indicates an “intermediate calculation”, the result of which is subsequently used in multiple operations. For example, the calculation of the indirect term is used in all three subsystems. Finally, the rectangles with bars on the sides indicate an “operation” that changes the state of a system.

Double lines trace the change of a subsystem throughout the time step. Lines with arrows attached at the end emerge from a state patch or an intermediate calculation and end in an operation or intermediate calculation patch. The large rectangle with bars shaded in grey at the right side of the diagram illustrates the sub-steps involved in the hydrodynamics part of the simulation. Except for the irradiation operation which requires the position of the N-bodies, this part of the simulation is independent of the N-body system.

The shaded rectangle with rounded corners in the upper third of the diagram illustrates the various gravitational interactions between the subsystems. The information indicated by arrows that end at the borders of this patch can be applied in any of the contained intermediate calculations.

The operations are ordered from the top to bottom in the order they are applied during the time step. This means that an operation or a state is generally only influenced by states above it or at the same level. Please follow the arrows to get a sense of the order of operations.

Fig. 1 Order of operations in the operator splitting scheme showing the evolution of the N-body, hydro, and particle subsystems throughout one iteration step. Single-line arrows mean that the originating object is used in the calculation of the destination. Double lines indicate the evolution of one of the subsystems from the initial state (ellipse) over intermediate states (parallelogram) to the final state (round shape). Each rectangle with rounded corners is a computation of an intermediate quantity using the state of the subsystem to which it is connected. The rectangles with bars on the sides indicate an operation that changes the state of a system. The variables that were changed by an operation are indicated in boldface in the intermediate states.

Table 1 Possible cases for the disk gravity.

3.2 N-body module

The original FARGO code includes a Runge-Kutta fifth-order N-body integrator, which was used with the same time step as the hydro simulations. This could lead to situations in which the time step was too large for the N-body simulation, for instance, during close encounters in simulations of multiple migrating planets. Instead of implementing our own N-body code with adaptive time-stepping, we incorporated the well-established and well-tested REBOUND code (Rein & Liu 2012) with its 15th order IAS15 integrator (Rein & Spiegel 2015) into FARGOCPT. REBOUND is called during every integration step of the hydro system to advance the N-body system for the length of the CFL time step. During this time, multiple N-body steps might be performed as needed. The interaction with the gas is incorporated by adding Δt a to the velocities of the bodies before the N-body integration.
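The coupling described above, a velocity kick by the disk acceleration followed by an N-body integration that may take several sub-steps, can be sketched as follows. This is a simplified illustration and not the FARGOCPT implementation: the functions `kick`, `substep_count`, and `advance_nbody` are hypothetical, the integrator is passed in as a user-supplied callable standing in for REBOUND, and fixed sub-steps replace the adaptive step size of IAS15.

```python
import math


def kick(velocities, disk_accelerations, dt):
    """Add dt * a_disk to the velocity of every body."""
    return [
        (vx + dt * ax, vy + dt * ay)
        for (vx, vy), (ax, ay) in zip(velocities, disk_accelerations)
    ]


def substep_count(dt_hydro, dt_nbody_max):
    """Number of equal N-body sub-steps needed to cover one hydro step."""
    return max(1, math.ceil(dt_hydro / dt_nbody_max))


def advance_nbody(positions, velocities, disk_accelerations,
                  dt_hydro, dt_nbody_max, nbody_step):
    """Kick by the disk gravity, then integrate over the full hydro step."""
    velocities = kick(velocities, disk_accelerations, dt_hydro)
    n_sub = substep_count(dt_hydro, dt_nbody_max)
    h = dt_hydro / n_sub
    for _ in range(n_sub):
        # nbody_step stands in for the actual N-body integrator.
        positions, velocities = nbody_step(positions, velocities, h)
    return positions, velocities


def free_drift(positions, velocities, h):
    """Trivial stand-in integrator without mutual gravity, for demonstration."""
    moved = [(x + h * vx, y + h * vy) for (x, y), (vx, vy) in zip(positions, velocities)]
    return moved, velocities


print(advance_nbody([(0.0, 0.0)], [(1.0, 0.0)], [(0.0, 2.0)], 0.1, 0.03, free_drift))
```

Applying the kick once with the full hydro time step keeps the gas and the N-body system synchronized at the beginning and end of each step, while the sub-stepping lets the N-body part resolve shorter dynamical timescales.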

We changed the central object from an implicit object, which was assumed to be of mass 1 in code units placed at the origin, to a moving point mass. It is now treated exactly like any other N-body object, notably including gravitational smoothing, which was not applied to the star before. This solves an inconsistency between the interaction of disk and planets and of the disk and star. This is necessary as the smoothing simulates the effect of the disk being stratified in the vertical direction and makes the potential behave more like it would in 3D (Müller et al. 2012).

Changing the star from being an implicit object to an explicit one, we changed all the relevant equations, which now include the mass of the central object and the distance to it.

3.3 Gravitational interactions

The dominant physical interaction between planets and a disk is gravity. In principle, any object that has mass causes a gravitational acceleration on any other object. In our simulation, N-body objects are considered to have a mass and particles are considered to be massless. The gas disk can be configured in one of three states. First, it can be massless and not accelerate the N-bodies. Second, the disk can be massive and accelerate the N-bodies, the particles, and itself (self-gravity included). Third, it can be massive and accelerate the N-bodies, but not itself or the particles (self-gravity ignored).

The first two cases are consistent whereas the third case is inconsistent. However, it is a commonly used approximation. The reason for this is that the computation of the (self)gravity of the gas disk is computationally expensive.

In the third case, when the forces on the N-bodies due to the gas are computed using the full surface density, the N-bodies feel the full mass of the disk interior to their orbit, while gas on the same orbit does not feel this mass. Consequently, their respective equilibrium state angular velocities differ which causes a non-physical shift in resonance locations and, thus, in the torque and migration rate experienced by the planets (Baruteau & Masset 2008b). This mismatch can be alleviated by simply subtracting the azimuthal average of the surface density in the calculation of the force. This is done in the code in the case that self-gravity is disabled.
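A minimal sketch of this correction is given below. It is not the FARGOCPT implementation: the function name `subtract_azimuthal_mean`, the nested-list layout `sigma[i_r][i_phi]`, and the assumption of uniform azimuthal spacing (so that a plain mean per ring is appropriate) are choices made for this example.

```python
def subtract_azimuthal_mean(sigma):
    """Return the surface density with the mean of each radial ring removed.

    sigma[i_r][i_phi] is assumed to hold the surface density on a polar grid
    with uniform azimuthal spacing.
    """
    result = []
    for ring in sigma:
        ring_mean = sum(ring) / len(ring)
        result.append([value - ring_mean for value in ring])
    return result


# An axisymmetric ring contributes nothing after the subtraction, while
# azimuthal perturbations (e.g., spiral arms) are retained.
sigma = [
    [1.0, 1.0, 1.0, 1.0],
    [2.0, 4.0, 2.0, 4.0],
]
print(subtract_azimuthal_mean(sigma))
```

With the axisymmetric component removed, only the non-axisymmetric part of the disk enters the force on the N-bodies, so that planets and gas respond to the same enclosed mass.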

Our code does not support a configuration in which only one of the N-bodies feels the disk, such as a situation in which the planet feels the disk but the star does not. This is done intentionally to avoid nonphysical systems. Likewise, the indirect term caused by the disk is always included if the disk has mass (cases 2 and 3).

Whether the disk is considered to have mass is configured by the “DiskFeedback” option. If this is set to “yes”, the disk is considered to have mass; otherwise, it is treated as massless. The three cases and the value of the code parameters are summarized in Table 1.

3.4 Coordinate center

Because the star is treated like any other N-body object and it is able to move, we need to define the coordinate center of the hydro domain. In principle, we can choose an arbitrary reference point. However, because the N-body system dominates the gravity, we choose the center of mass of any combination of N-bodies as the coordinate center.

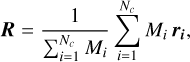

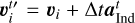

With the N-body objects located at ri, with i = 1,2,…, Np, the coordinate center, R, can now be set as:

$$\mathbf{R} = \frac{\sum_{i=1}^{N_\mathrm{c}} m_i \mathbf{r}_i}{\sum_{i=1}^{N_\mathrm{c}} m_i}, \tag{10}$$

where Nc is the number of point masses whose center of mass is chosen to be the coordinate center. For example, Nc = Np selects the full N-body system as a reference, Nc = 1 selects the primary as a center and Nc = 2 selects a stellar binary or the center of mass of a star-planet system.
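The choice of the coordinate center can be illustrated with a short sketch. The function `coordinate_center` and the example masses and positions are hypothetical; the only assumption taken from the text is that the center is the center of mass of the first Nc bodies.

```python
def coordinate_center(masses, positions, n_center):
    """Center of mass of the first n_center bodies (2D positions)."""
    selected_masses = masses[:n_center]
    selected_positions = positions[:n_center]
    total_mass = sum(selected_masses)
    x = sum(m * px for m, (px, _) in zip(selected_masses, selected_positions)) / total_mass
    y = sum(m * py for m, (_, py) in zip(selected_masses, selected_positions)) / total_mass
    return x, y


# Equal-mass binary with a low-mass planet further out (illustrative values).
masses = [1.0, 1.0, 0.001]
positions = [(-0.5, 0.0), (0.5, 0.0), (3.0, 0.0)]

print(coordinate_center(masses, positions, 1))  # centered on the primary
print(coordinate_center(masses, positions, 2))  # centered on the binary
print(coordinate_center(masses, positions, 3))  # full N-body system
```

Only the last choice corresponds to the center of mass of the whole N-body system; the other two generally define accelerated frames.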

The center of mass of the full N-body system is likely the most desirable of such choices because it can be an inertial frame that does not require considering fictitious forces. This eliminates the need for an extra acceleration term to correct for the non-inertial frame, the so-called indirect term, which can be the source of numerical instabilities. At least it eliminates the need for the indirect term resulting from the N-body system, which generally dominates the indirect term, although contributions from the disk gravity might still need to be considered. One application is the simulation of circumbinary disks, in which the natural coordinate center is the center of mass of the binary stars.

3.5 Shift-based indirect term

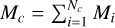

The correction forces needed because of a non-inertial frame are typically called the indirect term in the context of planet-disk interaction simulations. Based on the definition of the coordinate center in Eq. (10), the corresponding acceleration can formally be written as:

$$\mathbf{a}_\mathrm{ind} = -\ddot{\mathbf{R}} = -\frac{1}{M_\mathrm{c}} \sum_{i=1}^{N_\mathrm{c}} m_i \mathbf{a}_i, \tag{11}$$

where we used $\ddot{\mathbf{r}}_i = \mathbf{a}_i$, defined $M_\mathrm{c} = \sum_{i=1}^{N_\mathrm{c}} m_i$, and assumed that the derivatives of the masses are negligible. The individual $\mathbf{a}_i$ should include all accelerations from forces acting on a specific N-body, including gravity from all sources, other N-bodies or the disk.

Usually, this correction term is evaluated at the start of a time step, yielding a vector $\mathbf{a}_\mathrm{ind}$. As outlined in Sect. 2, this is then added to the velocities of the N-bodies as a sort of Euler step, $\mathbf{v}_i \rightarrow \mathbf{v}_i + \mathbf{a}_\mathrm{ind} \, \Delta t$, before integration of the N-body system. For the disk, the indirect term is applied through the potential. This update is first-order in time. As long as the magnitude of the indirect term is small compared to direct gravity, this choice appears to be good enough.

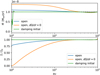

Using an Euler step as above is not sufficient in simulations in which the indirect term becomes stronger than the direct gravity. This can be the case in simulations of circumbinary disks centered on one of the stars, a setup that is useful for the study of circumstellar disks in binary star systems. In such simulations, the disk can become unstable with the Euler method.

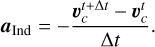

As a solution, we compute the indirect term as the average acceleration felt by the coordinate center during the whole time step. The average acceleration is computed from the actual shift needed to keep the coordinate center put. Therefore we call this method the “shift-based indirect term”. In our code, this is achieved by making a copy of the N-body system after the N-body velocities have been updated by disk gravity, integrating the copy in time and computing the net acceleration from the velocities as:

$$\mathbf{a}_\mathrm{ind} = -\frac{\mathbf{v}_\mathrm{c}(t + \Delta t) - \mathbf{v}_\mathrm{c}(t)}{\Delta t},$$ (12)

where $\mathbf{v}_\mathrm{c}$ is the velocity of the coordinate center. The code then discards the N-body system copy, applies this indirect term to the N-body, hydro, and particle systems, and integrates them. In this way, the acceleration of the center and the indirect term cancel out and the system stays put much better compared to the old method. The computation of the acceleration is handled this way because the IAS15 integrator is a predictor-corrector scheme that does not produce an effective acceleration that would be accessible from the outside. Using the acceleration from an explicit Runge-Kutta scheme would produce the same results.
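The shift-based procedure can be illustrated with a minimal Python sketch. It is not the FARGOCPT implementation: a simple leapfrog step stands in for the IAS15 integrator, the disk contribution is omitted, and all function names (`accelerations`, `leapfrog`, `shift_based_indirect_term`) as well as the code units with $G = 1$ are assumptions made for illustration.

```python
import numpy as np

G = 1.0  # gravitational constant in code units (assumption)


def accelerations(pos, mass):
    """Direct-sum gravitational accelerations of all bodies on each other."""
    acc = np.zeros_like(pos)
    for i in range(len(mass)):
        for j in range(len(mass)):
            if i != j:
                d = pos[j] - pos[i]
                acc[i] += G * mass[j] * d / np.linalg.norm(d) ** 3
    return acc


def leapfrog(pos, vel, mass, dt):
    """One kick-drift-kick step; a stand-in for the IAS15 integrator."""
    vel = vel + 0.5 * dt * accelerations(pos, mass)
    pos = pos + dt * vel
    vel = vel + 0.5 * dt * accelerations(pos, mass)
    return pos, vel


def shift_based_indirect_term(pos, vel, mass, dt, center=0):
    """Negative mean acceleration of the coordinate center over one step.

    A copy of the system is integrated and then discarded; only the
    velocity change of the central body is kept.
    """
    _, vel_copy = leapfrog(pos.copy(), vel.copy(), mass, dt)
    return -(vel_copy[center] - vel[center]) / dt


# Star at the origin with a Jupiter-like companion on a circular orbit.
pos = np.array([[0.0, 0.0], [1.0, 0.0]])
vel = np.array([[0.0, 0.0], [0.0, 1.0]])
mass = np.array([1.0, 1e-3])
a_ind = shift_based_indirect_term(pos, vel, mass, dt=1e-3)
```

For a small time step, `a_ind` approaches the negative of the instantaneous acceleration of the central body, which is what the Euler-type indirect term uses. The two differ when the acceleration changes appreciably during the step.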

We tested both the old and the shift-based indirect term implementations and could not find any discernible difference in the dynamics of systems with embedded planets as massive as 10 Jupiter masses around a solar-mass star. However, the shift-based indirect term enables simulations of circumbinary disks centered on one of the stars, which was previously not possible. Even resolved simulations of circumsecondary disks (centered on the secondary star) are possible when the latter is less massive than the primary star. For the impact of this new scheme on the simulation of circumbinary disks, we refer to Jordan et al. (in prep.).

3.6 Gravity and smoothing

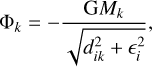

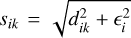

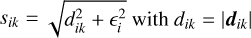

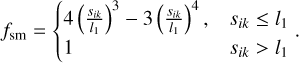

For modeling the gravity, we used the common scale-height-dependent smoothing approach. This approach accounts for the fact that a gas cell in 2D, which represents a vertically extended disk, experiences a weaker gravitational pull from an N-body object than the same cell would in a truly razor-thin 2D disk (Müller et al. 2012). The potential due to a point mass, $k$, with mass, $M_k$, at a distance, $d_{ik}$, from the center of a cell, $i$, is then given by:

$$\Phi_k = -\frac{G M_k}{\sqrt{d_{ik}^2 + \epsilon_i^2}},$$ (13)

where $G$ is the gravitational constant, $d_{ik}$ is the distance between the point mass and the cell, and $\epsilon_i = \alpha_\mathrm{sm} H_i$ is the smoothing length with the smoothing parameter, $\alpha_\mathrm{sm}$, and the cell scale height, $H_i$. According to Müller et al. (2012), $\alpha_\mathrm{sm}$ should be between 0.6 and 0.7 to accurately describe gravitational forces and torques, which is why we apply it to all N-bodies, even the central star. Masset (2002) found that a factor of 0.76 most closely reproduces type I migration rates of 3D simulations. We note that $\epsilon_i$ has to be evaluated with the scale height at the location of the cell, not the location of the N-body object (Müller et al. 2012).
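As a small illustration of the scale-height-dependent smoothing, the following Python sketch evaluates a Plummer-type smoothed potential with the smoothing length taken from the scale height at the cell. The function name `smoothed_potential` and the default `alpha_sm = 0.6` are assumptions for this example and do not reflect the naming or defaults of the actual code.

```python
import numpy as np


def smoothed_potential(G, M_k, d_ik, H_i, alpha_sm=0.6):
    """Smoothed point-mass potential felt by cell i.

    d_ik : distance between point mass k and the cell center
    H_i  : scale height at the location of the cell (not of the point mass)
    """
    eps_i = alpha_sm * H_i  # smoothing length of the cell
    return -G * M_k / np.sqrt(d_ik**2 + eps_i**2)


# Far from the point mass the smoothing is negligible,
# close to it the potential stays finite.
phi_far = smoothed_potential(1.0, 1.0, 10.0, 0.05)
phi_center = smoothed_potential(1.0, 1.0, 0.0, 0.05)
```

The potential is always shallower than the unsmoothed $-GM_k/d_{ik}$, which mimics the weaker pull on vertically extended gas.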

When the gravity of planets inside the disk is also considered for computing the scale height (Sect. 3.11), the scale height and the smoothing length in Eq. (13) become smaller in the vicinity of the planet. There, the smoothing can then become too small such that numerical instabilities occur.

To remedy this issue, we added an additional smoothing adapted from Klahr & Kley (2006). We found this to be a necessity when simulating binaries where one component enters the simulation domain due to an eccentric orbit or small inner domain radius. It is applied as a factor to the potential in Eq. (13) and is given by:

![${\rm{\Phi }}_k^{{\rm{sm}}} = {{\rm{\Phi }}_k}\left\{ {\matrix{ {\left[ {{{\left( {{{{s_{ik}}} \over {{_i}}}} \right)}^4} - 2{{\left( {{{{s_{ik}}} \over {{_i}}}} \right)}^3} + 2\left( {{{{s_{ik}}} \over {{_i}}}} \right)} \right]\,\,\,\,\,{\rm{if}}{s_{ik}} < {d_{{\rm{sm}},k}}} \cr {1\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,\,{\rm{otherwise;}}} \cr } } \right.$](/articles/aa/full_html/2024/04/aa48687-23/aa48687-23-eq21.png) (14)

where $s_{ik} = \sqrt{d_{ik}^2 + \epsilon_i^2}$ is the $\epsilon$-smoothed distance and $d_{\mathrm{sm},k}$ is another smoothing length, separate from $\epsilon_i$. This smoothing is purely numerically motivated and guarantees numerical stability close to the planet. It has no effect outside $d_{\mathrm{sm},k}$. The smoothing length, $d_{\mathrm{sm},k}$, is a fraction of the Roche radius, $R_\mathrm{Roche}$, which is the distance from the point mass to its L1 point with respect to point mass, $k = 1$. For the case that the mass of the N-body particles changes, for example, with planet accretion, we compute $R_\mathrm{Roche}$ using one Newton-Raphson iteration to calculate the dimensionless Roche radius, starting from its value during the last iteration. For low planet masses, $R_\mathrm{Roche}$ reduces to the Hill radius.
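The Newton-Raphson update of the dimensionless Roche radius can be sketched as follows. The sketch assumes a circular two-body configuration in the corotating frame with unit separation and unit total mass. The residual is the standard force balance along the line joining the two bodies, and the function names are hypothetical. The actual code performs a single iteration per call starting from the previous value, whereas the example also shows the fully converged result.

```python
def l1_residual(x, mu):
    """Net force per unit mass at distance x from the secondary (toward the primary).

    mu = m_secondary / (m_primary + m_secondary); separation and G*M_total are 1.
    """
    return -(1.0 - mu) / (1.0 - x) ** 2 + mu / x**2 + (1.0 - mu - x)


def l1_residual_derivative(x, mu):
    """Derivative of l1_residual with respect to x."""
    return -2.0 * (1.0 - mu) / (1.0 - x) ** 3 - 2.0 * mu / x**3 - 1.0


def dimensionless_roche_radius(m_primary, m_secondary, x_start=None, n_iter=1):
    """Newton-Raphson iterations for the L1 distance in units of the separation.

    Without a starting value, the Hill radius is used as the initial guess.
    """
    mu = m_secondary / (m_primary + m_secondary)
    x = (mu / 3.0) ** (1.0 / 3.0) if x_start is None else x_start
    for _ in range(n_iter):
        x = x - l1_residual(x, mu) / l1_residual_derivative(x, mu)
    return x


# One iteration per call, reusing the previous value as the starting point.
x_roche = dimensionless_roche_radius(1.0, 1e-3)
x_roche = dimensionless_roche_radius(1.0, 1e-3, x_start=x_roche)
```

Because the mass of an accreting planet changes slowly, the value from the previous call is already close to the root, so a single iteration per call is usually enough to track it.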

In many FARGO code variants, the gravity of the central star is not smoothed. However, the smoothing is required because the disk is vertically extended. The gravitational potential of the central star should therefore also be smoothed; otherwise, it is overestimated. This results in a deviation of order $10^{-4}$ for the azimuthal velocity compared to a non-smoothed stellar potential. Although this deviation is small, we believe that the stellar potential should be smoothed for consistency. A downside of this choice is that there exists no known analytical solution for the equilibrium state of a disk around a central star with a smoothed potential.

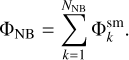

The full N-body system with NNB members then has the total potential:

(15)

(15)

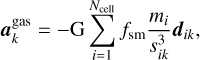

Having covered how the point masses affect the gas, the following paragraphs describe how the planets are affected by the gas. For a given point mass, k, at position, rk, the gravitational acceleration exerted onto the point mass by the disk is:

(16)

where $\mathbf{d}_{ik} = \mathbf{r}_k - \mathbf{r}_i$ is the distance vector between the planet and the cell, $m_i$ is the mass of a grid cell, $i$, and $s_{ik}$ is the smoothed distance between the cell and the point mass. It is given by $s_{ik} = \sqrt{d_{ik}^2 + \epsilon_i^2}$.

Again, the acceleration includes $\epsilon_i$ to account for the finite vertical extent of the disk. The same coefficient is used for the potential. The additional factor is the analog to Eq. (14) and is given by:

(17)

We note that the interaction is not symmetric. The acceleration of N-body objects due to the gas is computed using direct summation while the gravitational potential in Eq. (15) enters into the momentum expressions in Eqs. (6) and (7) via a numerical differentiation. Because we computed the smoothing length using the scale height at the location of the cell (as we argue should be done on physical grounds, e.g., Müller et al. 2012), the differentiation causes extra terms that depend on the smoothing length.

To alleviate this issue, we implemented computing the acceleration of the gas due to the N-body objects by direct summation, which has negligible computational overhead. In this way, the interaction is fully symmetric and no additional terms are introduced. As of now, we are unaware of how much this asymmetry affects the results of simulations of planet–disk interaction and the issue is left for future work.
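The symmetry obtained with direct summation can be checked with a short Python sketch. It assumes a Plummer-type force law with the smoothing length of the cell used for both directions of the interaction. It omits the additional close-range factor of Eq. (17), and the function name `pair_accelerations` is hypothetical.

```python
import numpy as np


def pair_accelerations(G, M_k, r_k, m_i, r_i, eps_i):
    """Mutual accelerations of point mass k and gas cell i by direct summation.

    The same smoothing length eps_i (from the scale height at the cell)
    enters both accelerations, so the forces are equal and opposite.
    """
    d_ik = r_k - r_i                               # vector from cell to point mass
    s_ik = np.sqrt(np.dot(d_ik, d_ik) + eps_i**2)  # smoothed distance
    a_on_body = -G * m_i * d_ik / s_ik**3
    a_on_cell = +G * M_k * d_ik / s_ik**3
    return a_on_body, a_on_cell


a_body, a_cell = pair_accelerations(
    G=1.0,
    M_k=1e-3,
    r_k=np.array([1.0, 0.0]),
    m_i=1e-6,
    r_i=np.array([0.9, 0.1]),
    eps_i=0.03,
)
# Momentum exchange cancels: M_k * a_body + m_i * a_cell = 0.
net_force = 1e-3 * a_body + 1e-6 * a_cell
```

When the gas acceleration is instead obtained by numerically differentiating a potential whose smoothing length varies from cell to cell, this cancellation is no longer exact.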

3.7 Self-gravity

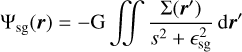

Calculating the gravitational potential of a thin (2D) disk is a complex task. Indeed, it requires the vertical averaging of Poisson’s equation, which in general is not feasible. Due to this limitation, in thin disk simulations, we often resort to a Plummer potential approximation for the gas, taking the following form:

$$\Phi_\mathrm{sg}(\mathbf{r}) = -G \iint_\mathrm{disk} \frac{\Sigma(\mathbf{r}')}{\sqrt{s^2 + \epsilon_\mathrm{sg}^2}}\,\mathrm{d}^2 r',$$ (18)

with $s = \|\mathbf{r} - \mathbf{r}'\|$, the gravitational constant, $G$, and a smoothing length, $\epsilon_\mathrm{sg}$. Contrary to a common belief, the role of this smoothing length is not to avoid numerical divergences at the singularity, $s = 0$. While it indeed fulfills this function, its main purpose is to account for the vertical stratification of the disk. In other terms, it permits gathering the combined effects of all disk vertical layers in the midplane. Without such a smoothing length, the magnitude of the self-gravity (SG) acceleration would be overestimated. In this context, many smoothing lengths have been proposed, but the most widely used is the one proposed by Müller et al. (2012). Based on an analytic approach, they suggested that the softening should be proportional to the gas scale height, $\epsilon_\mathrm{sg} = 1.2 H_g$, to correctly capture SG at large distances.

Direct computation of the potential according to Eq. (18) is prohibitive since it requires N2 operations. Fortunately, assuming a logarithmic spacing in the radial direction and a constant disk aspect ratio, h = H/r = const., the potential can be recast as a convolution product, which can be efficiently computed in order N log(N) operations (Binney & Tremaine 1987) thanks to fast Fourier methods (Frigo & Johnson 2005). Such a method was implemented for the SG accelerations by Baruteau (2008). We use the same method and the module used in FARGOCPT is based on the implementation in FargoADSG (Baruteau & Masset 2008b).
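The idea of replacing the direct sum by a convolution evaluated with fast Fourier transforms can be demonstrated on a reduced problem. The Python sketch below treats a single ring of cells at fixed radius, for which the smoothed potential is a circular convolution in azimuth. The radial direction with logarithmic spacing, which the full method also treats as a convolution, is left out. The function names and the use of NumPy's FFT instead of FFTW are assumptions made for this example.

```python
import numpy as np


def ring_potential_direct(mass, r, eps, G=1.0):
    """O(N^2) direct summation of the smoothed potential on a ring of radius r."""
    n = len(mass)
    phi = 2.0 * np.pi * np.arange(n) / n
    pot = np.zeros(n)
    for j in range(n):
        s2 = 2.0 * r**2 * (1.0 - np.cos(phi[j] - phi))  # squared chord length
        pot[j] = -G * np.sum(mass / np.sqrt(s2 + eps**2))
    return pot


def ring_potential_fft(mass, r, eps, G=1.0):
    """O(N log N) evaluation of the same sum as a circular convolution."""
    n = len(mass)
    dphi = 2.0 * np.pi * np.arange(n) / n
    kernel = -G / np.sqrt(2.0 * r**2 * (1.0 - np.cos(dphi)) + eps**2)
    return np.fft.irfft(np.fft.rfft(kernel) * np.fft.rfft(mass), n=n)


rng = np.random.default_rng(42)
cell_mass = rng.random(64)
pot_direct = ring_potential_direct(cell_mass, r=1.0, eps=0.06)
pot_fft = ring_potential_fft(cell_mass, r=1.0, eps=0.06)
```

Both functions return the same values up to round-off. The convolution form only works because the kernel depends on the azimuthal difference alone, which is the same requirement that constrains the admissible forms of the smoothing length.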

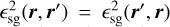

The traditional choice for the smoothing length is  where B is a constant. This choice, however, has two drawbacks. First, it breaks the r − r′ symmetry of the gravitational interaction, which violates Newton’s third law of motion. As a consequence, a nonphysical acceleration in the radial direction is manifested (Baruteau 2008). Second, even if the choice of B = 1.2 h minimizes the errors at large distances (Müller et al. 2012) this, nonetheless, results in SG underestimation at small distances, independent of the value of B (Rendon Restrepo & Barge 2023). This underestimation can quench gravitational collapse.

where B is a constant. This choice, however, has two drawbacks. First, it breaks the r − r′ symmetry of the gravitational interaction, which violates Newton’s third law of motion. As a consequence, a nonphysical acceleration in the radial direction is manifested (Baruteau 2008). Second, even if the choice of B = 1.2 h minimizes the errors at large distances (Müller et al. 2012) this, nonetheless, results in SG underestimation at small distances, independent of the value of B (Rendon Restrepo & Barge 2023). This underestimation can quench gravitational collapse.

FARGOCPT includes two improvements to alleviate those problems. The asymmetry problem can be alleviated by choosing a smoothing length that fulfills the symmetry requirement  . Additionally, it can be shown that the Fourier scheme is still applicable for a more general form of the smoothing length

. Additionally, it can be shown that the Fourier scheme is still applicable for a more general form of the smoothing length  This is fulfilled if the smoothing length is a Laurent series in the ratio

This is fulfilled if the smoothing length is a Laurent series in the ratio  and a Fourier series in ϕ − ϕ' (which additionally captures the 2π periodicity in ϕ). Testing has shown, that the azimuthal dependence is negligible, so we only consider the constant term from the Fourier series. Furthermore, the radial dependence is only weak, so we only consider the first two terms of the Laurent series. This leads to the following form of the smoothing length:

and a Fourier series in ϕ − ϕ' (which additionally captures the 2π periodicity in ϕ). Testing has shown, that the azimuthal dependence is negligible, so we only consider the constant term from the Fourier series. Furthermore, the radial dependence is only weak, so we only consider the first two terms of the Laurent series. This leads to the following form of the smoothing length:

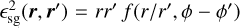

(19)

(19)

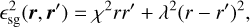

with two positively defined coefficients χ and λ. These two parameters depend on the aspect ratio h and can be precom-puted for a given grid size by numerically minimizing the error between the 2D approximation and the full 3D summation of the gravitational acceleration. This requires specifying the vertical stratification of the disk. Assuming that the gravity from the central object is negligible compared to the disk SG, the vertical stratification is a Spitzer profile:

(20)

(20)

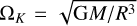

with the Toomre parameter

(21)

(21)

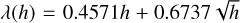

with the epicycle frequency $\kappa_e$. Furthermore assuming a grid with $r_\mathrm{max}/r_\mathrm{min} = 250/20$, the fit formulae for the coefficients are $\chi(h) = -0.7543 h^2 + 0.6472 h$ and  . This is the formula used in FARGOCPT. For grids with substantially different ratios of outer to inner boundary radius, the minimization procedure has to be repeated and the constants changed. This smoothing length leads to a symmetric self-gravity force and has reduced errors for both small and large distances.

In the case of a non-constant aspect ratio, we use the mass-averaged aspect ratio, which is recomputed after several time steps. An additional benefit of the symmetric formulation is improved conservation of angular momentum, because it removes the self-acceleration present when using the old smoothing length.
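A mass-averaged aspect ratio can be computed in a few lines. The following Python sketch assumes cell-centered arrays for surface density, cell area, and scale height on a polar grid. The function name and array layout are illustrative and not taken from the code.

```python
import numpy as np


def mass_averaged_aspect_ratio(sigma, cell_area, scale_height, radius):
    """Mass-weighted mean of h = H / r over all grid cells."""
    cell_mass = sigma * cell_area
    return np.sum(cell_mass * scale_height / radius) / np.sum(cell_mass)


# Two cells with masses 1 and 3 and aspect ratios 0.1 and 0.2.
h_mean = mass_averaged_aspect_ratio(
    sigma=np.array([1.0, 3.0]),
    cell_area=np.array([1.0, 1.0]),
    scale_height=np.array([0.1, 0.4]),
    radius=np.array([1.0, 2.0]),
)
```

The resulting single value of $h$ can then be used to evaluate the fit formulae for the smoothing coefficients.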

In the limit of weak self-gravity, $Q \geq 20$, Rendon Restrepo & Barge (2023) corrected the underestimation of SG at small distances by introducing a space-dependent smoothing length, which matches the exact 3D SG force with an accuracy of 0.5%. However, they did not correct the symmetry issue. Despite this oversight, they showed that their correction can lead to the gravitational collapse of a dust clump trapped inside a gaseous vortex (Rendon Restrepo & Gressel 2023) or maintain a fragment bound by gravity (Rendon Restrepo et al. 2022). In their latest work, Rendon Restrepo et al. (in prep.) found the exact kernel for all SG regimes, which makes the use of a smoothing length obsolete:

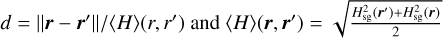

![$K = {1 \over {\sqrt \pi }}{\left\langle H \right\rangle ^{ - 2}}{d \over 8}\exp \left( {{{{d^2}} \over 8}} \right)\left[ {{K_1}\left( {{{{d^2}} \over 8}} \right) - {K_0}\left( {{{{d^2}} \over 8}} \right)} \right],$](/articles/aa/full_html/2024/04/aa48687-23/aa48687-23-eq36.png) (22)

(22)

where K0 and K1 are modified Bessel functions of the second kind,  with Hsg defined in Eq. (23). This Kernel remains compatible with the aforementioned convolution product and fast Fourier methods. Although it is computationally expensive to compute the kernel using Bessel functions, it can be precomputed for locally isothermal simulations, thus, it has to be computed only once. For radiative simulations in which the aspect ratio changes, it can be updated only every so often making the method computationally feasible. Finally, this solution shares the properties of the solution presented above making the SG acceleration symmetric and removing the self-acceleration.

with Hsg defined in Eq. (23). This Kernel remains compatible with the aforementioned convolution product and fast Fourier methods. Although it is computationally expensive to compute the kernel using Bessel functions, it can be precomputed for locally isothermal simulations, thus, it has to be computed only once. For radiative simulations in which the aspect ratio changes, it can be updated only every so often making the method computationally feasible. Finally, this solution shares the properties of the solution presented above making the SG acceleration symmetric and removing the self-acceleration.

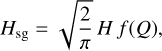

When considering SG, the balance between vertical gravity and pressure that sets the scale height needs to include the SG component as well. This effect can be included in the standard vertical density stratification ρ(z) = Σ/(2πH) exp (−1/2(z/H)2) to good approximation by adjusting the definition of the scale height (Bertin & Lodato 1999, see their Appendix A). In the case that the SG option is turned on, the standard scale height is replaced by:

(23)

(23)

![${f(Q) = {\pi \over {4Q}}\left[ {\sqrt {1 + {{8{Q^2}} \over \pi }} - 1} \right],}$](/articles/aa/full_html/2024/04/aa48687-23/aa48687-23-eq39.png) (24)

(24)

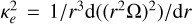

with the Toomre $Q$ parameter from Eq. (21). In the code, we multiply the result of the standard scale height computation by the factor $\sqrt{2/\pi}\, f(Q)$. The epicycle frequency $\kappa_e$ in the Toomre parameter in Eq. (21) is calculated as $\kappa_e^2 = \frac{1}{r^3} \frac{\partial (r^4 \Omega^2)}{\partial r}$ (e.g., Binney & Tremaine 1987) with the angular velocity of the gas $\Omega$.
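The behavior of the scale height correction can be checked numerically. The Python sketch below evaluates $f(Q)$ from Eq. (24) and a correction factor $\sqrt{2/\pi}\, f(Q)$. The prefactor is an assumption of this example, chosen such that the factor tends to one when self-gravity becomes negligible ($Q \to \infty$). The function names are hypothetical.

```python
import math


def f_toomre(Q):
    """f(Q) as given in Eq. (24)."""
    return math.pi / (4.0 * Q) * (math.sqrt(1.0 + 8.0 * Q * Q / math.pi) - 1.0)


def scale_height_factor(Q):
    """Multiplicative correction to the standard scale height (assumed form)."""
    return math.sqrt(2.0 / math.pi) * f_toomre(Q)


factor_weak_sg = scale_height_factor(1.0e6)   # self-gravity negligible
factor_strong_sg = scale_height_factor(1.0)   # marginally stable disk
```

For large $Q$ the factor approaches one, so the standard scale height is recovered, while for $Q \approx 1$ the disk is compressed to roughly half its non-self-gravitating thickness.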

For simulations of collapse in self-gravitating disks, we added the OpenSimplex algorithm to our code to initialize noise in the density distribution. The noise is intended to break the axial symmetry of the disk and thereby help gravitational instabilities to develop.

3.8 Energy equation and radiative processes

The original FARGO (Masset 2000) code did not include treatment of the energy equation. This was added in various later incarnations of the FARGO code, including FargoADSG (Baruteau & Masset 2008a) on which this code is based. In this section, we outline the procedure of how the energy update is performed in FARGOCPT. Refer to the grey box on the right in Fig. 1 for a visual representation. The subscripts of the variables in this section refer to the ones in Fig. 1, intended to help the reader in locating the sub-steps.

The energy update step due to compression or expansion, shock heating, viscosity, irradiation, and radiative cooling or β-cooling is implemented using operator splitting. Our scheme consists of a mix of implicit update steps.

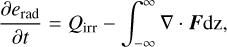

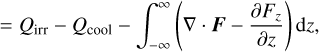

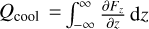

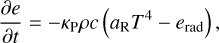

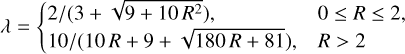

In principle, we perform the energy update on the sum of internal energy density $e$ and the radiation energy density $e_\mathrm{rad} = aT^4$, thus assuming a perfect coupling between the ideal and the photon gas, the so-called one-temperature approximation (see Sect. 3.8.5 for more detail). Separately, these quantities change according to:

(25)

(26)

(27)

with the 3D radiation energy flux $\mathbf{F}$, viscous heating $Q_\mathrm{visc}$, shock heating as captured by artificial viscosity $Q_\mathrm{shock}$, irradiation $Q_\mathrm{irr}$, and radiative losses at the disk surfaces $Q_\mathrm{cool}$. Note that the vertical cooling part $Q_\mathrm{cool}$ is split off from the integral over $\nabla \cdot \mathbf{F}$.

In principle, the total energy update is then:

(28)

We split this update into three main parts applied in the following order. The first line represents shock heating and compression heating (see Sect. 3.8.1), the second line represents heating and cooling (see Sect. 3.8.2), and the third line represents the radiation transport (see Sect. 3.8.5).

The following subsections review the details of this process.

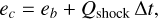

3.8.1 Compression heating and shock heating

The pressure term is updated first following D’Angelo et al. (2003) with an implicit step using an exponential decay ensuring stability (see their Eq. (24)). The update is expressed as:

$$e_b = e_a \exp\left[-(\gamma - 1)\,\Delta t\,\nabla \cdot \mathbf{u}_n\right],$$ (29)

with the adiabatic index, $\gamma$. As a next step, shock heating is treated in the form of heating from artificial viscosity:

(30)

with Qshock being the right-hand-side of Eq. (88) where Σ = Σa and u = ub.
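The stability benefit of the exponential form in Eq. (29) can be seen by comparing it with an explicit Euler update of the same term. The Python sketch below uses hypothetical function names and scalar inputs for a single cell. It only covers the compression step and leaves out shock heating.

```python
import math


def compression_update(e_a, gamma, dt, div_u):
    """Exponential update of the internal energy due to compression, Eq. (29)."""
    return e_a * math.exp(-(gamma - 1.0) * dt * div_u)


def explicit_euler_update(e_a, gamma, dt, div_u):
    """Explicit first-order update of the same term, shown for comparison."""
    return e_a * (1.0 - (gamma - 1.0) * dt * div_u)


# Strong expansion with a deliberately large time step.
e_exponential = compression_update(1.0, gamma=1.4, dt=10.0, div_u=1.0)
e_explicit = explicit_euler_update(1.0, gamma=1.4, dt=10.0, div_u=1.0)
```

With these inputs the explicit update yields a negative energy, while the exponential form remains positive for any time step.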

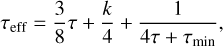

3.8.2 Heating and cooling

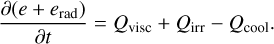

This step considers the update due to viscosity, irradiation, and cooling. The relevant part from Eq. (28) is

(31)

(31)

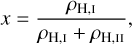

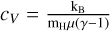

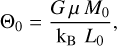

To arrive at the expression for this update step, we first insert explicit expressions for the two energies. The internal energy density is e = ρcVT with the specific heat capacity  , where kB is the Boltzmann constant, mH is the mass of a hydrogen atom, and µ is the mean molecular weight. The radiation energy density is given by

, where kB is the Boltzmann constant, mH is the mass of a hydrogen atom, and µ is the mean molecular weight. The radiation energy density is given by  with the Stefan-Boltzmann constant σSB and the speed of light c. Then, we use a first-order discretization for the time derivative and rearrange it according to the time index of T. Finally, we convert to an expression for the internal energy e arriving at:

with the Stefan-Boltzmann constant σSB and the speed of light c. Then, we use a first-order discretization for the time derivative and rearrange it according to the time index of T. Finally, we convert to an expression for the internal energy e arriving at:

(32)

(32)

This implicit energy update is stable in our testing. The heating and cooling rates are calculated as described in the following sections. Each of the terms is optional and can be configured by the user. Cooling rates are discussed in Sects. 3.8.3 and 3.8.4, irradiation is discussed in Sect. 3.8.6, and viscous heating is discussed in Sect. 3.9.

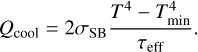

3.8.3 Radiative Cooling

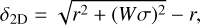

For radiative cooling, we take the same approach as (Müller & Kley 2012) where energy can escape through the disk surfaces and the energy transport from the disk midplane to the surfaces is modeled using an effective opacity. The associated cooling term is

(34)

(34)

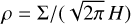

The minimum temperature Tmin, defaulting to 4 K, is set to take into account that the disk does not cool towards a zero Kelvin region but rather towards a cold environment slightly warmer than the cosmic microwave background. This can be important in the outer regions of the disk and effectively sets a temperature floor. For the effective opacity we used (Hubeny 1990; Müller & Kley 2012; D’Angelo & Marzari 2012):

(35)

where $k = 2$ for an irradiated disk and  for a non-irradiated disk (D’Angelo & Marzari 2012),  is the optical depth calculated from the Rosseland mean opacity $\kappa$, and $\tau_\mathrm{min} = 0.01$ is a floor value to capture optically very thin cases in which line opacities become dominant (Hubeny 1990).

There are three options to compute the opacity in the code: lin (Lin & Papaloizou 1985), bell (Bell & Lin 1994), and constant for a constant opacity. For the first two, see Müller & Kley (2012) for more details. Additional opacity laws can be implemented by extending the code with a function that returns the opacity $\kappa(\rho, T)$ as a function of the temperature and volume density.

In the case of β-cooling (Gammie 2001), the term

(36)

is added to $Q_\mathrm{cool}$, where $e_\mathrm{ref}$ is the reference energy to which the energy is relaxed. This reference can either be the initial value or prescribed by a locally isothermal temperature profile. We note that β-cooling is a misnomer as it is not exclusively a cooling process but rather a relaxation process towards a reference state. If the temperature is lower than in the reference state, the energy is increased, that is, heating occurs.
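The relaxation character of β-cooling can be made explicit with a short Python sketch. It assumes the commonly used form in which the energy relaxes towards the reference value on the timescale $t_\mathrm{cool} = \beta / \Omega_\mathrm{K}$ (Gammie 2001). The exact expression used in the code may differ in detail, and the function name is hypothetical.

```python
def beta_cooling_rate(e, e_ref, omega_k, beta):
    """Contribution to the cooling term for relaxation towards e_ref.

    Positive values remove energy (cooling), negative values add energy (heating).
    """
    return (e - e_ref) * omega_k / beta


rate_hot = beta_cooling_rate(e=2.0, e_ref=1.0, omega_k=1.0, beta=10.0)
rate_cold = beta_cooling_rate(e=0.5, e_ref=1.0, omega_k=1.0, beta=10.0)
```

A cell hotter than the reference state yields a positive rate and is cooled, while a cell colder than the reference state yields a negative rate and is heated.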

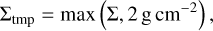

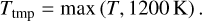

3.8.4 S-curve cooling

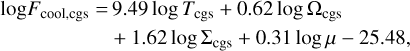

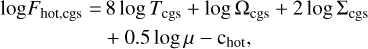

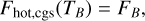

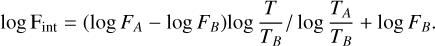

For the specific case of simulating cataclysmic variables, we also included the option to compute $Q_\mathrm{cool}$ according to Ichikawa & Osaki (1992) and Kimura et al. (2020). Their model splits the cooling function into a cold, radiative branch and a hot, convective branch. They used opacities based on Cox & Stewart (1969) and a vertical radiative flux, namely, the radiative loss from one surface of the disk, of:

(37)

for the optically thin regime to derive the radiative flux of the cold branch:

(38)

where the subscript cgs indicates the value of the respective quantity in cgs units. The cold branch is valid for temperatures, $T < T_A$, where $T_A$ is the temperature at which:

(39)

The radiative flux of the hot branch is derived by assuming that disk quantities do not vary in the vertical direction (one-zone model) for an optically thick disk:

(40)

Using Kramers' law for the opacity of ionized gas, the radiative flux can be approximated as:

(41)

where the constant $c_\mathrm{hot} = 25.49$ was used in Ichikawa & Osaki (1992) and $c_\mathrm{hot} = 23.405$ in Kimura et al. (2020). The difference between the two constants is that the first leads to weaker cooling compared to the latter. The hot branch is valid for temperatures $T > T_B$, where $T_B$ is the temperature at which:

(42)

where $\log F_B = \max(K, \log F_A)$ with (based on Fig. 3 in Mineshige & Osaki 1983)

$$K = 11 + 0.4 \log\left[\frac{2 \times 10^{10}}{r_\mathrm{cm}}\right].$$ (43)

The radiative flux in the intermediate branch is given by an interpolation between the cold and hot branches:

(44)

The cooling term is then given by $Q_\mathrm{cool} = 2F$ due to the radiation from both sides of the disk, where $F$ is the radiative flux pieced together from the cool, intermediate, and hot branches as described above. These prescriptions were developed for the conditions inside an accretion disk, and we found that they lead to numerical issues when applied to the low-density regions outside a truncated disk. We therefore opted to modulate the radiative flux with a square root function for densities below $\Sigma_\mathrm{thresh} < 2$ g cm$^{-2}$ and a square function for temperatures below $T_\mathrm{thresh} < 1200$ K:

(45)

(46)

(47)

3.8.5 In-plane radiation transport using FLD

The last term in Eqs. (3) and (28), the in-plane radiation transport, is treated using the flux-limited diffusion (FLD) approach (Levermore & Pomraning 1981; Levermore 1984). The method allows us to treat radiation transport as a diffusion process in both the optically thick and the optically thin regime. Our implementation builds upon Kley (1989), Kley & Crida (2008), and Müller (2013) and uses the successive over-relaxation (SOR) method to solve the linear equation system involved in the implicit energy update.

In principle, the process of radiation transport is described by the evolution of a two-component gas consisting of an ideal gas and a photon gas which have thermal energy densities e = egas and erad, respectively (Mihalas & Mihalas 1984). In the flux-limited diffusion approximation, their coupled evolution is given by (Kley 1989; Commerçon et al. 2011; Kolb et al. 2013):

(48)

(48)

(49)

(49)

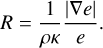

where λ is the flux limiter. We use the flux limiter presented in Kley (1989, see this reference for alternatives) which is given by:

(50)

(50)

with the dimensionless quantity

(51)

(51)
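For orientation, the flux limiter attributed to Kley (1989) is commonly quoted in the piecewise form implemented in the following Python sketch. It is an assumption of this example that Eq. (50) takes exactly this form. The function name is hypothetical, and `R` denotes the dimensionless quantity of Eq. (51).

```python
import math


def flux_limiter_kley89(R):
    """Piecewise flux limiter as commonly quoted from Kley (1989)."""
    if R <= 2.0:
        return 2.0 / (3.0 + math.sqrt(9.0 + 10.0 * R * R))
    return 10.0 / (10.0 * R + 9.0 + math.sqrt(180.0 * R + 81.0))


lam_thick = flux_limiter_kley89(0.0)      # optically thick limit
lam_switch = flux_limiter_kley89(2.0)     # value at the branch transition
lam_thin = flux_limiter_kley89(1.0e8)     # optically thin limit
```

The limiter tends to 1/3 in the optically thick limit, which recovers classical diffusion, and to $1/R$ in the optically thin limit, which caps the flux at the free-streaming value. Both branches agree at $R = 2$.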

In FARGOCPT, we use the one-temperature approximation, meaning that we assume that the photon gas and the ideal gas instantaneously equilibrate their temperatures, Tgas = T and Trad. This approximation allows us to reduce Eqs. (48) and (49) into a single equation for the total thermal energy, e + erad. We further assume that erad is negligible against e (and the same for their time derivatives) yielding:

(52)

(52)

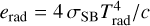

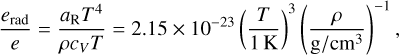

where we used  . To see that erad is indeed negligible against e, we assume that the gas is a perfect gas and consider the ratio of energy densities:

. To see that erad is indeed negligible against e, we assume that the gas is a perfect gas and consider the ratio of energy densities:

(53)

(53)

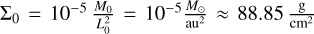

where we used µ = 2.35 and γ = 1.4. Assuming further a minimum mass solar nebula (MMSN; Hayashi 1981) density with Σ(r) = 1700 g/cm2 (r/au)−3/2, the ratio becomes:

(54)

(54)

The dependence on the radius cancels out in the calculation of $\rho$ from $\Sigma$ because of the specific exponent of $-3/2$ of the MMSN. Now, we can see that $e_\mathrm{rad}/e$ ranges from $4.7 \times 10^{-9}$ at $T = 100$ K to $1.6 \times 10^{-4}$ at $T = 2000$ K. Thus, the approximation is justified in the context of planet-forming disks.

Then, we again make use of the assumption that the gas and the photon gas equilibrate their temperatures instantaneously, namely, $T = T_\mathrm{rad}$, and use the fact that $\rho$ can be considered constant during the radiation transport part of the operator splitting scheme. Furthermore, we assume the opacity $\kappa$ to be constant during the radiation transport step, although in principle it depends on $T$. Equation (52) can then be recast into an equation for $T$ yielding:

(55)

with  . This diffusion equation is then discretized and the resulting linear equation system is solved using the SOR method, resulting in an updated temperature $T'$. Details of the discretization and implementation of the SOR solver can be found in Appendix 1 of Müller (2013). Finally, we assume that the gas is a perfect gas and update its internal energy density by using the new temperature,

(56)

Here, $e_e$ is an energy surface density again (also see Fig. 1), as opposed to the energy volume densities in the rest of the section above.
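The role of the SOR solver in an implicit diffusion step can be illustrated on a strongly simplified problem. The Python sketch below solves one backward-Euler step of a 1D diffusion equation with constant coefficient and fixed boundary temperatures. It does not reproduce the polar-grid discretization or the flux-limited coefficients of the actual solver. The function name, the relaxation parameter `omega = 1.5`, and the convergence criterion are assumptions of this example.

```python
import numpy as np


def implicit_diffusion_sor(T, alpha, omega=1.5, tol=1e-12, max_iter=10_000):
    """Solve (1 + 2 alpha) T'_i - alpha (T'_{i-1} + T'_{i+1}) = T_i with SOR.

    alpha = K dt / dx^2 is the dimensionless diffusion number.
    The first and last entries are kept fixed (Dirichlet boundaries).
    """
    T_new = T.copy()
    for _ in range(max_iter):
        max_change = 0.0
        for i in range(1, len(T) - 1):
            gauss_seidel = (T[i] + alpha * (T_new[i - 1] + T_new[i + 1])) / (1.0 + 2.0 * alpha)
            updated = (1.0 - omega) * T_new[i] + omega * gauss_seidel
            max_change = max(max_change, abs(updated - T_new[i]))
            T_new[i] = updated
        if max_change < tol:
            break
    return T_new


# Linear temperature profile with a hot spot in the middle.
T_old = np.linspace(100.0, 300.0, 21)
T_old[10] = 500.0
T_updated = implicit_diffusion_sor(T_old, alpha=2.0)
```

The result agrees with a direct solution of the same tridiagonal system. The boundary values remain at their prescribed temperatures while the hot spot is smoothed out.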

We note that our implementation uses the 3D formulation. Other implementations of midplane radiation transport (also the one described in Müller 2013) use the surface density instead and have to introduce a factor  (sometimes also chosen as 2H) to link the surface density to the volume density which depends on the vertical stratification of the disk. However, if we assume ρ (or equivalently H) to be constant throughout the radiative transport step, this factor cancels out in the end and the two approaches are equivalent. For the sake of simplicity, we use the 3D version and assume that all horizontal radiation transport is confined to the midplane. Before the radiation transport step, we compute

(sometimes also chosen as 2H) to link the surface density to the volume density which depends on the vertical stratification of the disk. However, if we assume ρ (or equivalently H) to be constant throughout the radiative transport step, this factor cancels out in the end and the two approaches are equivalent. For the sake of simplicity, we use the 3D version and assume that all horizontal radiation transport is confined to the midplane. Before the radiation transport step, we compute

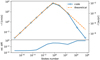

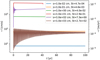

The FLD implementation is verified with two separate tests. The first test, presented in Appendix D.8, checks the physical part: a disk equilibrates to two different temperatures enforced at the inner and outer boundaries. The second test, presented in Appendix D.9, checks the SOR diffusion solver by comparing the numerical results of a 2D diffusion process against the available analytical solution. We note that the current implementation of the FLD module only considers a perfect gas, so it should not be used together with the non-constant adiabatic index (see Sect. 3.8.7) without further testing or modifications.

3.8.6 Irradiation

The irradiation term is computed as the sum of the irradiation from all N-body objects. This allows for simulations in which planets or a secondary star irradiate the disk. An N-body object is considered to be irradiating if it is assigned a temperature and a radius in the config file.

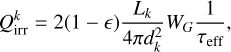

For each single source with index k, the heating rate due to irradiation is computed following Menou & Goodman (2004) and D’Angelo & Marzari (2012) as:

(57)

(57)

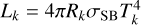

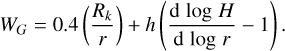

with the disk albedo ϵ, which is set to 1/2, the luminosity of the source, Lk, the distance to the source, dk, and the effective optical depth, τeff. The luminosity is calculated as Lk = 4π Rk² σSB Tk⁴, with the Stefan-Boltzmann constant, σSB, the radius, Rk, and the temperature, Tk, of the source. The effective optical depth, τeff, is given in Eq. (35). The remaining factor, WG, is a geometrical factor that accounts for the disk geometry in the case of a central star (Chiang & Goldreich 1997) and includes terms for regions close to the source (first term) and far from the source (second term). It is given by:

(58)

We assume that the flaring of the disk is constant in time and has the value of the free parameter specified for the initial conditions. Properly accounting for the disk geometry would require ray-tracing from all sources to all grid cells, which is computationally expensive. Finally, the total irradiation heating rate is given by:

(59)

where the sum runs over all irradiating objects.
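To illustrate how irradiating sources are selected and how their luminosities follow from radius and temperature, we give a short Python sketch. It assumes the black-body relation L = 4π R² σSB T⁴; the dictionary keys are hypothetical and do not correspond to actual FARGOCPT config options. The heating rate itself is not reproduced here.

```python
import math

SIGMA_SB = 5.670374419e-8  # Stefan-Boltzmann constant in W m^-2 K^-4


def luminosity(radius, temperature):
    """Black-body luminosity of a sphere with the given radius (m) and temperature (K)."""
    return 4.0 * math.pi * radius**2 * SIGMA_SB * temperature**4


def irradiating_sources(nbodies):
    """Return name and luminosity of every object that has both a radius and a temperature."""
    sources = []
    for body in nbodies:
        radius = body.get("radius")
        temperature = body.get("temperature")
        if radius and temperature:
            sources.append({"name": body["name"],
                            "luminosity": luminosity(radius, temperature)})
    return sources


# Hypothetical system: a Sun-like star and a planet without assigned temperature.
nbodies = [
    {"name": "star", "radius": 6.957e8, "temperature": 5772.0},
    {"name": "planet"},
]
sources = irradiating_sources(nbodies)
```

Only the star is treated as a source in this example; the total heating rate would then be obtained by summing the per-source rates over the entries of `sources`.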

3.8.7 Non-constant adiabatic Index

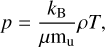

In astrophysics, it is very common to treat matter as an ideal gas, for which the following equation, also known as the ideal gas law, holds:

$p = {{\rho k_{\rm B} T} \over {\mu m_{\rm u}}},$ (60)

with the pressure, p, the Boltzmann constant, kB, the mean molecular weight, µ, the density, ρ, the temperature, T, and the atomic mass unit, mu. In the case of an ideal gas, the assumption is that there are no interactions between the gas particles and that pressure is only exerted by interactions with the boundary of the volume containing the gas. This is very often a good approximation, especially in the case of accretion disks, where densities are low.

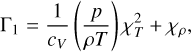

A further assumption that is commonly made is that of a perfect gas, for which the pressure and sound speed are related to ρ and T by the same constant adiabatic index:

$\gamma = {{c_p} \over {c_V}},$ (61)

which is the ratio of the specific heat capacity at constant pressure to that at constant volume, with γ = 5/3 for a monatomic gas and γ = 7/5 for a diatomic gas (e.g., Vaidya et al. 2015). The pressure and sound speed of a perfect gas are:

$p = (\gamma - 1)\rho\epsilon,$ (62)

$c_{\rm s} = \sqrt{\gamma {p \over \rho}}.$ (63)

The assumption of a perfect gas is a good approximation at 300 ≲ T ≲ 1000–2000 K, with some density dependence (see Fig. 1 of D’Angelo & Bodenheimer 2013). At lower or higher temperatures, contributions by rotational and vibrational degrees of freedom, or changes in the chemical composition such as the dissociation and ionization of hydrogen, would need to be taken into account. Confusingly, the perfect gas is often referred to as the ideal gas in the literature.
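As a consistency check between the ideal gas law and the perfect gas relations, consider the following Python sketch. It assumes the standard forms p = ρ kB T / (µ mu), p = (γ − 1) ρ ϵ, and cs = √(γ p / ρ), with ϵ the specific internal energy; the function names and the example values are chosen for illustration only.

```python
import math

K_B = 1.380649e-23        # Boltzmann constant in J/K
M_U = 1.66053906660e-27   # atomic mass unit in kg


def ideal_gas_pressure(rho, T, mu):
    """Pressure from the ideal gas law."""
    return rho * K_B * T / (mu * M_U)


def perfect_gas_specific_energy(T, mu, gamma):
    """Specific internal energy of a perfect gas with constant adiabatic index."""
    return K_B * T / (mu * M_U * (gamma - 1.0))


def perfect_gas_pressure(rho, eps, gamma):
    """Pressure of a perfect gas from density and specific internal energy."""
    return (gamma - 1.0) * rho * eps


def perfect_gas_sound_speed(p, rho, gamma):
    """Adiabatic sound speed of a perfect gas."""
    return math.sqrt(gamma * p / rho)


# Illustrative values: molecular gas (mu = 2.35, gamma = 7/5) at 300 K.
rho, T, mu, gamma = 1.0e-9, 300.0, 2.35, 7.0 / 5.0
eps = perfect_gas_specific_energy(T, mu, gamma)
p = perfect_gas_pressure(rho, eps, gamma)
c_s = perfect_gas_sound_speed(p, rho, gamma)
```

Both routes to the pressure agree by construction, and the sound speed for these values is of the order of 1 km/s.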

In the following, we outline the case of a general ideal gas in which the single constant adiabatic index is replaced with two other quantities. Hence, in Eqs. (62) and (63) the constant γ will be replaced by the effective adiabatic index γeff and the first adiabatic exponent Γ1, respectively.

With these changes, the equation of state can account for the dissociation and ionization processes of hydrogen, as well as rotational and translational degrees of freedom at lower temperatures. Such an equation of state was already implemented in PLUTO by Vaidya et al. (2015), which serves as the basis for the changes in our code. In the PLUTO code, this equation of state is called the “PVTE” equation of state, which stands for pressure-volume-temperature-energy. We adopted the same name for the equation of state in FARGOCPT.

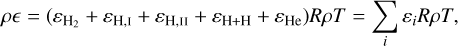

We start by writing the total internal energy density ρє of an ideal gas as a summation of several contributions:

(64)

with R = kB/mH. These contributions are given by (compare Table 1 from Vaidya et al. 2015):

![$\eqalign{ & {\varepsilon _{{\rm{H}},{\rm{I}}}} = {3 \over 2}X(1 + x)y\left( {{\rm{translational energy for hydrogen}}} \right), \cr & {\varepsilon _{{\rm{He}}}} = {3 \over 8}Y\left( {{\rm{translational energy for helium}}} \right), \cr & {\varepsilon _{{\rm{H}} + {\rm{H}}}} = 4.48{\rm{eVXy}}/\left( {2{k_B}T} \right)\left( \matrix{ {\rm{dissociation energy for}} \hfill \cr {\rm{molecular hydrogen}} \hfill \cr} \right), \cr & {\varepsilon _{{\rm{H}},{\rm{II}}}} = 13.6{\rm{eV}}Xxy/\left( {{k_B}T} \right)\left( \matrix{ {\rm{ionization energy for}} \hfill \cr {\rm{atomic hydrogen}} \hfill \cr} \right), \cr & {\varepsilon _{{{\rm{H}}_2}}} = {{X(1 - y)} \over 2}\left[ {{3 \over 2} + {T \over {{\zeta _v}}}{{{\rm{d}}{\zeta _v}} \over {{\rm{d}}T}} + {T \over {{\zeta _r}}}{{{\rm{d}}{\zeta _r}} \over {{\rm{d}}T}}} \right]\left( \matrix{ {\rm{internal}} \hfill \cr {\rm{energy for molecular hydrogen}} \hfill \cr} \right), \cr} $](/articles/aa/full_html/2024/04/aa48687-23/aa48687-23-eq94.png)

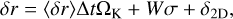

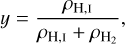

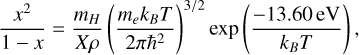

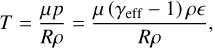

where X (defaulting to 0.75 in the code) and Y = 1 − X are the hydrogen and helium mass fractions and y and x are the hydrogen dissociation and ionization fractions, defined as:

(65)

ζv and ζr are the partition functions of vibration and rotation of the hydrogen molecule and are described in D’Angelo & Bodenheimer (2013). If we assume local thermodynamic equilibrium, then y and x can be computed by using the following two Saha equations:

(67)

(68)

After applying the ideal gas law and inserting Eq. (64), the pressure-internal energy relation becomes:

(69)

(69)

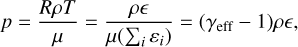

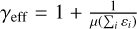

where γeff is the effective adiabatic index and µ is the mean molecular weight, given by:

![$\mu = {4 \over {2X(1 + y + 2xy) + Y}}.$](/articles/aa/full_html/2024/04/aa48687-23/aa48687-23-eq101.png) (70)
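The limiting cases of the mean molecular weight can be checked with a few lines of Python. The sketch assumes the form µ = 4 / [2X(1 + y + 2xy) + Y] as given by Vaidya et al. (2015); the function name is hypothetical.

```python
def mean_molecular_weight(x, y, X=0.75):
    """Mean molecular weight for ionization fraction x, dissociation fraction y,
    and hydrogen mass fraction X (helium mass fraction Y = 1 - X)."""
    Y = 1.0 - X
    return 4.0 / (2.0 * X * (1.0 + y + 2.0 * x * y) + Y)


mu_molecular = mean_molecular_weight(x=0.0, y=0.0)  # H2 and He
mu_atomic = mean_molecular_weight(x=0.0, y=1.0)     # fully dissociated, neutral
mu_ionized = mean_molecular_weight(x=1.0, y=1.0)    # fully dissociated and ionized hydrogen
```

For X = 0.75, these evaluate to 4/1.75, 4/3.25, and 4/6.25, respectively, so µ decreases as hydrogen dissociates and ionizes, as expected from the growing number of particles per unit mass.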

Now, by using the relation from Eq. (69) an equation for the temperature can be derived:

(71)

which can be solved for a given internal energy and density as a root-finding problem:

(72)
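The root-finding step can be sketched with a simple bisection in Python. The energy function below is a hypothetical monotonic stand-in for the internal energy of Eq. (64), not the actual equation of state (in particular, it ignores the density), and bisection is only one possible choice of root finder.

```python
import math


def toy_specific_energy(T, rho):
    """Hypothetical stand-in for the specific internal energy; NOT the real EoS.
    It grows monotonically with T and mimics an extra energy sink near 2000 K."""
    R = 8.25e3  # roughly k_B / m_H in J/(kg K)
    extra = 1.0 / (1.0 + math.exp(-(T - 2000.0) / 200.0))
    return R * T * (1.5 + extra)


def temperature_from_energy(eps, rho, energy_func, T_min=1.0, T_max=1.0e5, rel_tol=1e-10):
    """Solve energy_func(T, rho) - eps = 0 for T by bisection."""
    def f(T):
        return energy_func(T, rho) - eps

    lo, hi = T_min, T_max
    if f(lo) > 0.0 or f(hi) < 0.0:
        raise ValueError("target energy is outside the bracketed temperature range")
    while hi - lo > rel_tol * hi:
        mid = 0.5 * (lo + hi)
        if f(mid) > 0.0:
            hi = mid  # root lies in the lower half
        else:
            lo = mid  # root lies in the upper half
    return 0.5 * (lo + hi)


# Round trip: compute the energy at a known temperature and recover that temperature.
rho = 1.0e-9
eps = toy_specific_energy(1800.0, rho)
T_recovered = temperature_from_energy(eps, rho, toy_specific_energy)
```

Bisection is robust because the internal energy increases monotonically with temperature at fixed density, so the bracket always contains exactly one root.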

The sound speed is given by:

$c_{\rm s} = \sqrt{\Gamma_1 {p \over \rho}},$ (73)

where Γ1 is the first adiabatic exponent, which is defined as:

(74)

where the temperature and density exponents are defined by:

(75)

Since the computational effort to compute γeff, Γ1, and µ for every cell at every time step is very high, we precompute them to create lookup tables. During the simulation, the values of γeff, Γ1, and µ are interpolated from the lookup tables for given densities and internal energies. How these tables can be implemented is also explained in Vaidya et al. (2015).
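A lookup table of this kind can be sketched in Python as follows. The sketch assumes a uniform grid in log density and log internal energy with bilinear interpolation, which is one common choice and not necessarily the layout used in FARGOCPT; the tabulated function is a hypothetical smooth stand-in for γeff.

```python
import math


def linspace(start, stop, n):
    """Return n equally spaced values from start to stop (inclusive)."""
    step = (stop - start) / (n - 1)
    return [start + i * step for i in range(n)]


def build_table(func, log_rho_grid, log_e_grid):
    """Precompute func(rho, e) on a grid that is uniform in log10(rho) and log10(e)."""
    return [[func(10.0**lr, 10.0**le) for le in log_e_grid] for lr in log_rho_grid]


def locate(grid, value):
    """Index of the lower cell edge and fractional offset, clamped to the grid."""
    step = grid[1] - grid[0]
    pos = (value - grid[0]) / step
    i = min(max(int(math.floor(pos)), 0), len(grid) - 2)
    t = min(max(pos - i, 0.0), 1.0)
    return i, t


def interpolate(table, log_rho_grid, log_e_grid, rho, e):
    """Bilinear interpolation of the table in log space."""
    i, t = locate(log_rho_grid, math.log10(rho))
    j, s = locate(log_e_grid, math.log10(e))
    return ((1.0 - t) * (1.0 - s) * table[i][j] + t * (1.0 - s) * table[i + 1][j]
            + (1.0 - t) * s * table[i][j + 1] + t * s * table[i + 1][j + 1])


def toy_gamma_eff(rho, e):
    """Hypothetical smooth stand-in for the effective adiabatic index."""
    return 1.4 - 0.3 / (1.0 + 1.0e7 / e) + 0.005 * math.log10(rho / 1.0e-9)


log_rho_grid = linspace(-12.0, -6.0, 13)
log_e_grid = linspace(5.0, 9.0, 41)
table = build_table(toy_gamma_eff, log_rho_grid, log_e_grid)
gamma_interp = interpolate(table, log_rho_grid, log_e_grid, 3.3e-10, 2.7e7)
```

The table is built once before the simulation starts, so the cost per cell and time step reduces to two index computations and a handful of multiplications.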

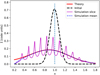

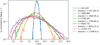

As our code is 2D, we require the scale height to compute the densities used for reading the adiabatic indices from the lookup table. To compute the scale height, in turn, our method requires the adiabatic indices. This results in a cyclic dependency. We found that this is not an issue, as successive time steps naturally act as an iterative solver for this problem. Additionally, we always compute the scale height twice, before and after updating the adiabatic indices, and we perform this iteration twice per time step. We tested our implementation using the shock tube test. Because there is no analytical solution for the shock tube test with non-constant adiabatic indices, we compared our results against results generated with the PLUTO code. The test is shown in Fig. D.3 under the label ‘PVTE’ and we find good agreement between our implementation and the implementation by Vaidya et al. (2015).

3.9 Viscosity

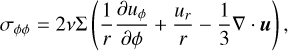

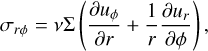

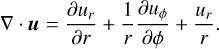

Viscosity is implemented as an operator splitting step (see Fig. 1 for its context). The full viscous stress tensor reads (see, e.g., Shu 1992):

![${\sigma _{ij}} = 2\mu \left[ {{1 \over 2}\left( {{{\partial {u_i}} \over {\partial {x_j}}} + {{\partial {u_j}} \over {\partial {x_i}}}} \right) - {{{\delta _{ij}}} \over 3}\nabla \cdot u} \right] + \zeta {\delta _{ij}}\nabla \cdot u,$](/articles/aa/full_html/2024/04/aa48687-23/aa48687-23-eq107.png) (76)

where i, j ∈ {1,2} indicate the spatial directions, µ and ζ are the shear and bulk viscosity, respectively, and δij is the Kronecker δ. The shear viscosity is given by µ = νΣ with the kinematic viscosity denoted by ν. In our case, ζ is neglected, although artificial viscosity (see Sect. 3.9.1) reintroduces a bulk viscosity. The kinematic viscosity is either given by a constant value or by the α-prescription (Shakura & Sunyaev 1973), for which:

$\nu = \alpha c_{\rm s} H,$ (77)

with the disk scale height, H.

The relevant elements in polar coordinates of the viscous stress tensor are:

(78)

(79)

(80)

(81)

The momentum update is then performed according to Kley (1999; see also D’Angelo et al. 2002) as:

(82)

(83)

Finally, the energy update due to viscosity (D’Angelo et al. 2003) is given by:

![${{\partial e} \over {\partial t}} = {{Q_{\rm visc}} \over {\Delta t}} = {1 \over {2\nu\Sigma}}\left[ \sigma_{rr}^2 + 2\sigma_{r\phi}^2 + \sigma_{\phi\phi}^2 \right] + {{2\nu\Sigma} \over 9}(\nabla \cdot u)^2,$](/articles/aa/full_html/2024/04/aa48687-23/aa48687-23-eq115.png) (84)

where Qvisc is to be used in the energy update in Eq. (32).
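The right-hand side of Eq. (84) translates directly into code. The following Python sketch evaluates it for a single cell and checks it against pure Keplerian shear; the function name is hypothetical, and the shear stress used in the example, σrφ = −(3/2) νΣΩ, is the standard result for a Keplerian rotation profile and is stated here as an assumption.

```python
def viscous_heating_rate(sigma_rr, sigma_rphi, sigma_phiphi, div_u, nu, surface_density):
    """Energy change rate de/dt due to viscous dissipation, following Eq. (84)."""
    two_nu_sigma = 2.0 * nu * surface_density
    stress_term = (sigma_rr**2 + 2.0 * sigma_rphi**2 + sigma_phiphi**2) / two_nu_sigma
    divergence_term = two_nu_sigma / 9.0 * div_u**2
    return stress_term + divergence_term


# Pure Keplerian shear in code units: no divergence, only the r-phi stress is nonzero.
nu, surface_density, omega = 1.0e-5, 2.0, 1.0
sigma_rphi = -1.5 * nu * surface_density * omega
heating = viscous_heating_rate(0.0, sigma_rphi, 0.0, 0.0, nu, surface_density)
```

For this case, the expression reduces to the familiar (9/4) νΣΩ² heating rate of a Keplerian disk.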

3.9.1 Tscharnuter and Winkler artificial viscosity

The role of artificial viscosity is to handle (discontinuous) shock fronts in finite-difference schemes. This is achieved by smoothing the shock front over several grid cells by adding a bulk viscosity term. Tscharnuter & Winkler (1979) raised concerns about the formulation of artificial viscosity introduced by Von Neumann & Richtmyer (1950), as it can produce artificial pressure even if there are no shocks (e.g., Bodenheimer et al. 2006, Sect. 6.1.4). Tscharnuter & Winkler (1979) then proposed a tensor artificial viscosity, analogous to the viscous stress tensor, that is independent of the coordinate system and frame of reference. For our implementation of this artificial viscosity, we follow Stone & Norman (1992, Appendix B) who added two additional constraints on the artificial viscosity: the artificial viscosity constant must be the same in all directions and the off-diagonal elements of the tensor must be zero to prevent artificial angular momentum transport. We note that there is also an artificial viscosity described in the main text of Stone & Norman (1992), sometimes referred to as the “Stone and Norman” artificial viscosity, which does not have these properties and is only applicable in Cartesian coordinates. Nonetheless, it is sometimes used in cylindrical and spherical coordinates.

In our case, we use the version suited for curvilinear coordinates and the artificial viscosity pressure tensor is given by:

![${\bf Q} = \begin{cases} l^2 \Sigma (\nabla \cdot {\bf u}) \left[ \nabla \otimes {\bf u} - {1 \over 3}(\nabla \cdot {\bf u}){\bf I} \right] & {\rm if}\ \nabla \cdot {\bf u} < 0, \\ 0 & {\rm otherwise}, \end{cases}$](/articles/aa/full_html/2024/04/aa48687-23/aa48687-23-eq116.png) (85)

where l = q Δx is the distance over which shocks are smoothed, with the dimensionless parameter q near unity and the cell size Δx. The cell size is given by Δx = max(Δxa, Δxb), where a and b indicate the grid of cell centers and interfaces, respectively. The contribution to the momentum equation is:

(86)

(87)

Finally, the shock heating caused by the artificial viscosity is given by:

![${{\partial e} \over {\partial t}} = -l^2 \Sigma (\nabla \cdot u) {1 \over 3} \left[ \left( {{\partial u_r} \over {\partial r}} \right)^2 + \left( {{\partial u_\phi} \over {r \partial \phi}} + {{u_r} \over r} \right)^2 + \left( {{\partial u_\phi} \over {r \partial \phi}} + {{u_r} \over r} - {{\partial u_r} \over {\partial r}} \right)^2 \right].$](/articles/aa/full_html/2024/04/aa48687-23/aa48687-23-eq119.png) (88)
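The shock heating of Eq. (88), together with the compression switch of Eq. (85), can be sketched per cell in Python. The velocity derivatives are assumed to be given (their finite-difference evaluation on the staggered grid is omitted), the divergence is taken in polar coordinates as ∂ur/∂r + ur/r + ∂uφ/(r ∂φ), and the function and argument names are hypothetical.

```python
def artificial_viscosity_heating(dur_dr, duphi_rdphi, ur_over_r, surface_density, cell_size, q):
    """Shock heating rate de/dt from the tensor artificial viscosity, following Eq. (88).

    dur_dr      : radial derivative of the radial velocity
    duphi_rdphi : azimuthal derivative of the azimuthal velocity, divided by r
    ur_over_r   : radial velocity divided by r
    q           : dimensionless smoothing parameter of order unity
    """
    div_u = dur_dr + duphi_rdphi + ur_over_r
    if div_u >= 0.0:
        return 0.0  # artificial viscosity only acts in compressed regions
    smoothing_length = q * cell_size
    radial = dur_dr
    azimuthal = duphi_rdphi + ur_over_r
    bracket = radial**2 + azimuthal**2 + (azimuthal - radial) ** 2
    return -smoothing_length**2 * surface_density * div_u / 3.0 * bracket


# A purely radial compression heats the gas; an expansion does not.
heating_compression = artificial_viscosity_heating(-1.0, 0.0, 0.0, 1.0, 0.1, 1.0)
heating_expansion = artificial_viscosity_heating(+1.0, 0.0, 0.0, 1.0, 0.1, 1.0)
```

Because the prefactor contains −(∇ · u) and the bracket is a sum of squares, the heating is non-negative wherever the switch is active.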

To ensure the stability of these updates, we use a CFL constraint analogous to the one in Stone & Norman (1992, see their Sect. 4.6):

(89)

(89)

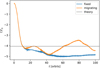

3.9.2 Local viscosity stabilizer

We found numerical instabilities in simulations of disks in close binary systems. In these systems, the disk is truncated by tidal forces (e.g., Artymowicz & Lubow 1994). At this truncation radius, the strong density gradients can cause numerical instabilities in the viscosity update step, which drastically reduce the time step.

To prevent these instabilities, we designed a damping method that checks whether the viscosity update is too large and unstable and then reduces the update to a stable size. This method has the advantage that it is a local per-cell update that can be dropped into the existing code with only one modification to the update step. An alternative solution would be to implement a fully implicit viscosity update step based on solving a linear system of equations, which would have required substantial changes to the viscosity update step of our code. Furthermore, this implicit update would be computationally more expensive, whereas the overhead of the local damping method is negligible. Because the instability is numerical and confined to only a small region, we argue that the damping method is a valid solution.

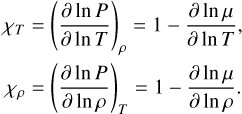

For our method, we interpret the viscosity update as a diffusion process. As we are only looking at a single cell, we treat the velocities of the neighboring cells as constant. We then can write the velocity update due to viscosity in the form of:

(90)

(90)

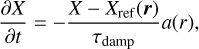

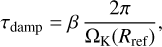

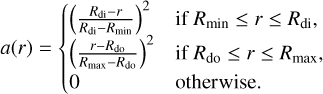

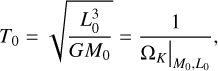

where, in the nomenclature of Fig. 1, ut+Δt = ud and ut = uc. The analytical solution to this equation is an exponential relaxation to an equilibrium velocity. When the explicit update overshoots the equilibrium velocity, the method becomes unstable. One option to avoid the instability is to add Δt · c1 > −1 to the CFL criteria, but this effectively freezes the simulation. Instead, the code can now be configured to: