| Issue |

A&A

Volume 621, January 2019

|

|

|---|---|---|

| Article Number | A36 | |

| Number of page(s) | 31 | |

| Section | Cosmology (including clusters of galaxies) | |

| DOI | https://doi.org/10.1051/0004-6361/201833775 | |

| Published online | 03 January 2019 | |

Weak-lensing shear measurement with machine learning

Teaching artificial neural networks about feature noise⋆

1 Argelander-Institut für Astronomie, Auf dem Hügel 71, 53121 Bonn, Germany

e-mail: mtewes@astro.uni-bonn.de

2 Institute of Physics, Laboratory of Astrophysics, Ecole Polytechnique Fédérale de Lausanne (EPFL), Observatoire de Sauverny, 1290 Versoix, Switzerland

Received:

4

July

2018

Accepted:

7

November

2018

Cosmic shear, that is weak gravitational lensing by the large-scale matter structure of the Universe, is a primary cosmological probe for several present and upcoming surveys investigating dark matter and dark energy, such as Euclid or WFIRST. The probe requires an extremely accurate measurement of the shapes of millions of galaxies based on imaging data. Crucially, the shear measurement must address and compensate for a range of interwoven nuisance effects related to the instrument optics and detector, noise in the images, unknown galaxy morphologies, colors, blending of sources, and selection effects. This paper explores the use of supervised machine learning as a tool to solve this inverse problem. We present a simple architecture that learns to regress shear point estimates and weights via shallow artificial neural networks. The networks are trained on simulations of the forward observing process, and take combinations of moments of the galaxy images as inputs. A challenging peculiarity of the shear measurement task, in terms of machine learning applications, is the combination of the noisiness of the input features and the requirements on the statistical accuracy of the inverse regression. To address this issue, the proposed training algorithm minimizes bias over multiple realizations of individual source galaxies, reducing the sensitivity to properties of the overall sample of source galaxies. Importantly, an observational selection function of these source galaxies can be straightforwardly taken into account via the weights. We first introduce key aspects of our approach using toy-model simulations, and then demonstrate its potential on images mimicking Euclid data. Finally, we analyze images from the GREAT3 challenge, obtaining competitively low multiplicative and additive shear biases despite the use of a simple training set. We conclude that the further development of suited machine learning approaches is of high interest to meet the stringent requirements on the shear measurement in current and future surveys. We make a demonstration implementation of our technique publicly available.

Key words: methods: data analysis / gravitational lensing: weak / cosmological parameters

A copy of the code is also available at the CDS via anonymous ftp to cdsarc.u-strasbg.fr (130.79.128.5) or via http://cdsarc.u-strasbg.fr/viz-bin/qcat?J/A+A/621/A36

© ESO 2019

1. Introduction

Images of distant galaxies appear slightly distorted, typically at the percent level, as light bundles reaching the observer are differentially deflected owing to gravitational lensing by massive structures along the line of sight. Since galaxies come in a variety of intrinsic shapes, inclinations, and orientations, these weak distortions are not identifiable on individual sources. In this sense, galaxies give us only a very noisy view of the distortion field. However, despite this intrinsic “shape noise”, the weak lensing (WL) effect imprints spatial correlations on the apparent galaxy shapes. Observing these spatial correlations, ideally as a function of redshift, allows us to infer properties of the large-scale matter structure of the Universe, and how this structure has grown over time.

This probe, known as cosmic shear, is one of the main scientific drivers for surveys poised to explore dark matter and dark energy, such as KiDS1 (de Jong et al. 2015), the Dark Energy Survey (DES, The Dark Energy Survey Collaboration 2016), the ESA Euclid2 mission (Laureijs et al. 2011), and NASA’s Wide Field InfraRed Survey Telescope WFIRST3. Kilbinger (2015) and Mandelbaum (2018) provide recent reviews on the field, with a particular focus on the analysis methods to interpret the data from wide field surveys.

The statistical uncertainty of cosmic shear measurements, which is related to the finite number of galaxies probing the shear field, decreases with the increasing sky coverage and depth of the surveys. To make full use of large surveys, the accuracy of the data analysis methods must therefore be high enough to avoid that systematic errors dominate the error-budget of the cosmological parameter inference (Refregier 2003). For Euclid, surveying 15 000 square degrees of extra-galactic sky, the resulting accuracy requirements are unprecedented. These requirements flow down, on the observational side, to (1) the determination of redshifts and (2) the measurement of shear. The cosmology community is working intensively on both aspects and on the required algorithmic improvements, often addressing effects that could previously be neglected due to the limited survey size.

Regarding the problem of photometric redshift determination, “empirical” and machine learning methods are now considered as at least equivalent to traditional template-fitting methods in terms of precision and accuracy. They are also complementary, as they are based on fundamentally different principles and assumptions. Several applications of artificial neural networks (NN) yield highly competitive results, especially when predicting redshift probability distributions (e.g., Bilicki et al. 2018; Bonnett 2015). Furthermore, D’Isanto & Polsterer (2018) demonstrate how deep convolutional NNs can infer redshifts by directly processing multiband image data at the pixel level, as compared to using fluxes measured in apertures.

The shear measurement problem has not yet seen a similar evolution toward machine learning methods. The problem of shear measurement is also referred to as “shape measurement” in the literature, as the shape (more precisely the ellipticity) of galaxies yields an estimator for the lensing shear. There are two traditional categories of shear measurement techniques: (1) methods based on the measurement of weighted quadrupole moments of the observed light distribution and (2) methods that forward-fit a model. Mathematically, these categories share strong similarities (Simon & Schneider 2017), and both have to tackle the same sources of biases in order to serve as accurate shear estimators.

The most prominent observational issues are the deformation of the sheared galaxy light distribution by the telescope optics and atmospheric seeing (often seen as convolution by a point-spread function PSF), the pixellation of the image by the detector, and the pixel noise. Information about the original galaxy shape and the shear is lost or compromised by each of these effects. Even for space-based instruments, the shape of the PSF varies over the field of view and in time, and the PSF for each galaxy must therefore first be reconstructed with high fidelity before shear can be estimated. In addition, the low signal-to-noise ratio (S/N) of the galaxy images leads to biases that have to be accounted for, as shear estimators are not linear functions of the image pixel values (see, e.g., Refregier et al. 2012). A large variety of shear measurement methods have been developed to deal with these effects, notably in context of the public Shear Testing Program (STEP) and the GRavitational lEnsing Accuracy Testing (GREAT) challenges (Heymans et al. 2006; Massey et al. 2007; Bridle et al. 2010; Kitching et al. 2012; Mandelbaum et al. 2015). Today’s state-of-the-art shape measurement methods involve various forms of simulation-based calibration to account for different biases (e.g., Fenech Conti et al. 2017; Huff & Mandelbaum 2017; Jee et al. 2016), yet without embracing a full machine learning approach. The computational cost of the shape measurement process is also of importance, with Euclid set to observe about 1.5 billion galaxies. Rigorously testing a method will typically imply applying it to simulations larger than the survey itself, underlining the need for fast algorithms.

In this paper, we use supervised machine learning (ML) to address the problem of shear measurement, building upon the few previous applications of ML to this specific problem (Gruen et al. 2010; Tewes et al. 2012; Graff et al. 2014, see also4Springer et al. 2018). Specifically, we simulate noisy and PSF-convolved galaxy images with known shear, and train NNs to regress shear estimates based on features of these images, so to minimize shear prediction biases rather than shear errors. While we participated in the GREAT3 challenge (Mandelbaum et al. 2015) with an early attempt of this approach under the name MegaLUT, the present paper describes a fundamentally revised methodology. The development of a machine learning approach is motivated by

-

the low CPU cost of ML predictions, as compared for example to iterative forward-fitting methods (either frequentist or Bayesian),

-

the unavoidable need for some form of shear calibration via image simulations for any state-of-the-art technique, due to practical effects such as galaxy blends and CCD charge transfer inefficiency,

-

the potential of simulation-driven methods to easily embrace further complex bias sources not identified at the moment, without affecting the initial formalism,

-

the possibility to control and penalize the tradeoff between sensitivity of the method to parameters affecting bias, such as prior knowledge of galaxies, and the bias itself.

A distinctive aspect of this ML application is the noisiness of the data. For the low-S/N galaxies of interest to cosmic shear studies, the measurement uncertainty on the shape of individual sources is larger than the WL distortion we wish to recover accurately. The cost function of the training algorithm and the structure of the training data must therefore be adapted so that the NNs can learn to correct for biases resulting from the propagation of noisy inputs through them.

To ease the analysis and comparison with other methods, the present work is limited to the prediction of point estimates and weights for each component of the shear. This was also the format adopted by GREAT3 (Mandelbaum et al. 2014). The large number of source galaxies in weak lensing surveys led the community to (so far) favor these over shear probability distributions. Furthermore, traditional shape measurement methods only produce point estimates, and they are also easier to analyze, for example when computing correlation functions. The situation is however changing, with several current methods adopting some more descriptive probabilistic formalisms (e.g., Bernstein & Armstrong 2014). We see the implementation presented in this paper as a stepping stone toward a probabilistic machine learning approach.

This article is organized as follows: we introduce the required WL-formalism in Sect. 2. In Sect. 3, we describe how NNs can be trained to achieve accurate predictions in the presence of noise in their inputs. The input features measured on galaxy images are presented in Sect. 4. We then detail how we connect these steps to form a shear measurement method in Sect. 5. The method is demonstrated on simple simulations in Sect. 6, and with a variable PSF in Sect. 7. We apply it to more realistic Euclid-like simulations with source selection in Sect. 8, and on GREAT3 data in Sect. 9. Lastly, we offer perspectives for the practical integration in a shear measurement pipeline in Sect. 10, and summarize in Sect. 11.

2. Formalism of weak gravitational lensing

In the following we give minimal definitions of the formalism of weak lensing and its estimation. Recent reviews include Kilbinger (2015) and Bartelmann & Maturi (2017), and a comprehensive introduction can be found in Schneider et al. (2006).

2.1. Shear and ellipticity

The weak-lensing distortion seen in a given field of view can locally be approximated as a linear transformation between the “true” unlensed coordinates and the observed coordinates, expressed by a Jacobian matrix. In coordinates centered respectively on the unlensed and observed source, this local transformation is often written as

where g1 and g2 are the two components of the reduced5 shear, causing a change in the ellipticity of observed galaxies, and where κ is the convergence, describing the change in their apparent size. It is often convenient to write the shear as a complex number g = g1 + g2i.

Most traditional methods measuring the lensing shear deal with expressions for the ellipticity of a galaxy, as for example with KSB (Kaiser et al. 1995; Hoekstra et al. 1998). In contrast, our proposed method constructs a direct estimator of the shear signal g as defined above. Inevitably, this estimator will be noisy, due to shape noise (see Sect. 1). But it does not require a formal description of the ellipticity at any stage of the process. Indeed, the notion of the ellipticity of a galaxy is not trivial, as real galaxies have complex morphologies without simple elliptical isophotes, not to mention pixellation and noise. For idealized galaxies with elliptical isophotes, we do however define an ellipticity in the following, which we use in the analysis of the sensitivity of our method and for some experiments. For such a galaxy, with semi-major axis a and semi-minor axis b, we follow the notation of the GREAT3 challenge (Mandelbaum et al. 2014) and define the ellipticity ε as a complex number of modulus |ε|=(1 − b/a)/(1 + b/a) and a phase determined by the position angle ϕ of the major axis such that ε1 = |ε|cos(2ϕ) and ε2 = |ε|sin(2ϕ). With this definition, and considering only weak shear |g|≪1, the observed ellipticity εobs of an idealized lensed galaxy is related to its true intrinsic ellipticity, εtrue, by εobs ≈ εtrue + g. The ellipticity εobs of each galaxy subject to some shear is a noisy but unbiased estimator of this shear, ⟨εobs⟩≈g, under the assumption that the source galaxies are intrinsically randomly oriented, that is ⟨εtrue⟩=0.

2.2. Biases and sensitivity of shear estimation

Biases of a shear estimator ĝ are commonly quantified using a linear bias model following Heymans et al. (2006), decomposing the bias into a multiplicative part, μ, and an additive part, c, for each component,

Given shear measurements on simulations with known true shears, estimates of these biases μ and c are obtained by fitting a line to the shear estimation residuals  against the true shear value6. The commonly used components i = {1, 2} of the shear and the biases μi

and ci

are defined by the coordinate grid used in Eq. (1), usually the image pixel grid. The first (second) component describes deformations along the axes (along the diagonals) of this grid. In addition, following GREAT3 conventions (Mandelbaum et al. 2015) and in line with Fenech Conti et al. (2017), we use the indices i = { + , × } to relate to components in a frame rotated to be aligned with the anisotropy of the PSF. The estimation of these PSF-oriented biases is done on simulations with variable orientation of the PSF. More precisely, to estimate those biases, one first rotates the components 1 and 2 of ĝ and gtrue by −2θ, where θ is the position angle of the PSF anisotropy, and then performs the linear regressions on these rotated components. We stress that we focus in this paper on probing the bias of the shear measurement method only, assuming for instance that the PSF is perfectly known.

against the true shear value6. The commonly used components i = {1, 2} of the shear and the biases μi

and ci

are defined by the coordinate grid used in Eq. (1), usually the image pixel grid. The first (second) component describes deformations along the axes (along the diagonals) of this grid. In addition, following GREAT3 conventions (Mandelbaum et al. 2015) and in line with Fenech Conti et al. (2017), we use the indices i = { + , × } to relate to components in a frame rotated to be aligned with the anisotropy of the PSF. The estimation of these PSF-oriented biases is done on simulations with variable orientation of the PSF. More precisely, to estimate those biases, one first rotates the components 1 and 2 of ĝ and gtrue by −2θ, where θ is the position angle of the PSF anisotropy, and then performs the linear regressions on these rotated components. We stress that we focus in this paper on probing the bias of the shear measurement method only, assuming for instance that the PSF is perfectly known.

In line with the above linear bias model, the numerous sources for bias are also often categorized into “multiplicative” and “additive” (Mandelbaum 2018). For example, the size of the PSF and the noise in the images are sources for multiplicative bias, as both effects tend to make galaxies look rounder and therefore less sheared (see, e.g., Melchior & Viola 2012). A typical source for an additive bias is the imperfect correction for an anisotropic PSF, leading to a net shift in the measured galaxy ellipticity. We refer to Massey et al. (2013) for a more comprehensive list of biases and studies of their propagation into cosmic shear results. These authors also establish that Stage IV weak-lensing experiments (Albrecht et al. 2006) require multiplicative (additive) biases and the uncertainty on these biases to be on the order of |μ|≲2 × 10−3 (|c|≲2 × 10−4).

In this paper, we will perform evaluations of μ and c in different bins of “true” parameters potentially affecting the bias, such as the intrinsic size of the galaxies. This is made possible by carrying out numerical experiments using simulated data. It is crucial to be aware that any binning or selection according to some noisy “observed” parameters might lead to shear estimation biases due to selection effects. For example, the estimate of the size or the S/N of a galaxy can in practice depend on the orientation and magnitude of the shear. For a discussion of selection biases, see, e.g., Fenech Conti et al. (2017). An illustration of the intricate dependencies of biases on true and observed parameters can be found in Pujol et al. (2017).

As mentioned by Hoekstra et al. (2017), an important goal for a shear measurement method should be to minimize the sensitivity |∂μ/∂p| to any parameter p potentially affecting the multiplicative bias μ of a measurement. A tradeoff between this sensitivity and the overall bias will have to be made. Let us consider some extreme examples. Suppose that a method shows a strong multiplicative bias on a given set of simulations. Applying a plain multiplicative scaling to all its shear estimates will apparently remove this overall bias. However, the sensitivity of this method to the galaxy population and simulation parameters might be increased by this rough rescaling. The rescaled method would therefore show a low bias on these particular simulations, but a potentially unacceptable sensitivity to the actual galaxy profiles. As another example, we can consider a method strongly driven by a prior on the galaxy shapes, but failing to use some of the available information from the observed data. While this method might show a low sensitivity to some parameters of the observed galaxy population, it would certainly have a biased overall response to shear, and a strong sensitivity to its prior assumptions. When designing a shear measurement method, both sensitivity and integrated biases should therefore be kept under control simultaneously.

3. Accurate regressions from artificial neural networks in presence of feature noise

In this section, we describe how we train neural networks to perform accurate regressions despite noisy input features, building upon ideas from Gruen et al. (2010). We keep this part generic to any inverse problem, and will introduce the particular application to weak-lensing shear measurement in Sect. 5.

3.1. The inverse regression problem

A standard feedforward neural network (NN) with N input nodes and one output node can be seen as a “free-form” fitting function of ℝN → ℝ (see, e.g., Tagliaferri et al. 2003, for an introduction to NNs and applications to astronomy). As such, the property of a NN to be nonlinear in its inputs (also called features) is explicitly desired, to allow for flexibility of the fitting function. A natural consequence of this nonlinearity is that if noisy realizations of input data are to be propagated through the NN, the resulting distribution of outputs might well differ from the noise distribution of the inputs. In particular, the expectation value of the output can be offset from the output which would be obtained from noise-free or less noisy inputs, leading to a net noise bias. We note that this holds for any nonlinear estimator.

Let us consider a NN of sufficient capacity, that is flexibility to adapt to the data, for a given regression problem. The NN regression is parametrized by all the weights and biases of the network’s nodes. For a fixed network architecture, the shape of this regression is then entirely determined by the training of the network. This training consists in optimizing the network parameters so as to minimize a cost function which compares network predictions to some known truth or “target” values. A simple and common choice for such a cost function is the mean square error (MSE) between the network predictions and the target values, in analogy to an ordinary least squares or maximum likelihood method. When fitting a model to noisy observations that depend on noiseless explanatory variables, the MSE does lead to the usually desired fitting curve (or hypersurface, in case of several input nodes). The latter traces, in the limit of many observations, the average values of the observed variable in bins of the explanatory variables.

In this work, our use of NNs is however “inverse”. We want to regress estimates for the explanatory variable (the NN target) based on noisy observations of the dependent variables (the NN inputs), a problem known in statistics as an inverse regression or calibration. We provide a illustrated example of an inverse regression and the terminology in Appendix A.

As mentioned by Gruen et al. (2010), it is counterproductive in such a situation to train a NN to minimize a MSE expressed between targets and individual predictions of the explanatory variable. We can however formulate other cost functions which explicitly favor accuracy in the predictions of the explanatory variable, when facing noise in the observed dependent variables. For this, the training data has to be structured so that the neural network can experience several realizations of the noise in the dependent variables for each value of the target explanatory variable.

3.2. Training with realizations and cases

To structure our training data, we introduce the distinction between “realizations” and “cases”:

-

A training realization is a single observation of the noisy dependent variables, for a particular (known) value of the explanatory variable. Measurements of the dependent variables resulting from a physical process give us such realizations, except that the value for the explanatory variable is usually not known.

-

A training case is an ensemble of realizations obtained for the same value of the explanatory variable. In other words, for our application of NNs, it is an ensemble of (input, target) pairs all sharing the same target value. When the training data is entirely simulated, cases can easily be generated to contain as many realizations as desired.

The training data therefore consists of an ensemble of cases, each containing an ensemble of realizations. Cost functions can now take advantage of this structure. We define the mean square bias (MSB) cost function, which penalizes the estimated prediction bias over the realizations in each case, as7

where p groups all the parameters (weights and biases) of the NN, D represents the training inputs containing the input vector Di, k for each of the nrea realizations in each of the ncase cases, o(p, Di, k) is the NN output for each realization, and t represents the training targets (with the target tk of each case). With the same notations, the classical MSE cost function making no distinction between realization and cases would be written

The apparently small difference between MSB and MSE is therefore that the MSB averages the NN outputs over the realizations in each case before comparing them to the target values. For both cost functions, the NN still learns how to predict one output for each realization. Let us note some consequences of the MSB cost function, which plays an important role in this paper.

First, in the limit of a sufficiently large number of realizations per case, the MSB does not penalize scatter in the predictions. A network trained to minimize MSB will, as desired, trade precision for accuracy, but it could potentially go beyond the optimal use of information and introduce additional unnecessary noise in its predictions. In practice, one can control this behavior, as well as potential overfitting to the training data, by limiting the capacity of the NN (i.e., limiting the number of its nodes and/or layers).

Second, one has to acknowledge that an inverse regression problem might simply not have an “accurate” solution, in the sense of a solution with vanishing MSB. If the observed dependent variables (the NN input features) do not carry information about the explanatory variable (the NN target) the corresponding target values will not be accurately estimated. And even if this information is still there, given a finite number of realizations and cases, a sufficiently strong noise in the input features will lead to biased predictions. We note that this might affect some “difficult” realizations only, while other regions of parameter space allow for sufficient accuracy.

More generally, not only the accuracy but also the achievable precision of the predictions might vary from one realization to another. In situations where the noisiness of an observed realization can be estimated from the observation itself, we can therefore further mitigate the effect of noise and extract more information by going beyond the prediction of point estimates. In this paper, we explore the simplest extension to the prediction of point estimates, by including the prediction of weights.

3.3. Predicting weights

Ideally, the prediction of weights and point estimates should be learned simultaneously. To maximize insight, we propose in the scope of this work the use of a separate NN for the weight prediction, in addition to the NN predicting the point estimates. The two networks are trained successively. In the first step, the NN yielding point estimates is trained using the MSB cost function. Then, the second NN is trained to predict an optimal weight for each realization, in order to increase the accuracy of each case. For this second NN, with parameters pW and exclusively positive outputs w, we define the mean square weighted bias (MSWB) cost function

where O contains the predicted point estimates oi, k = o(p, Di, k) obtained through the first NN. A pecularity of this cost function is that no explicit target values for the weights is given. Furthermore, by construction, the weights w minimizing the MSWB might have an arbitrary scale. We impose both the positivity and an upper bound to the weights by using an activation function ℝ → (0, 1) for the output layer of this second NN.

The training data (D, t) for the weight training can have a different structure of realizations and cases than the training data for the point estimates. It is always possible to obtain the point estimate predictions O from the first NN by running it on the training data of the second NN. We can thus make use of two training datasets, each optimized for its purpose.

We will further discuss the properties and behavior of NNs trained with the MSB and MSWB cost functions and the importance of the distributions of cases and realizations in Sect. 5, in the context of the practical application to weak lensing shear estimation.

3.4. Neural network implementation, training optimization algorithm, and committees

All results of this paper are obtained using an experimental custom NN library implemented in python, which we make publicly available (see Appendix B). In the following, we briefly summarize details and default settings of the NNs. If not stated otherwise through this paper, these configurations were chosen based on previous experience or trial-and-error attempts. We do not claim that these choices are optimal, and expect many other configurations to yield equivalent or better results.

For both types of networks (point estimates and weights), we use small fully-connected NNs with typically two hidden layers of five nodes each. All input and hidden nodes use the hyperbolic tangent f(x)=tanh(x) activation function. For the output layer, we use an identity activation function for the prediction of point estimates, and a variant of a sigmoid, f(x)=1/(1 + exp(−4x)) for the weight-predicting networks. We follow the conventional practice to deal with highly heterogeneous feature scales, and prepend a normalization (or whitening) of the input data vectors to our networks (Graff et al. 2014). This normalization independently scales and shifts the features seen by each node of the input layer, so that, for the training data, all inputs cover the interval [ − 1, 1].

Instead of using the conventional back-propagation (Rumelhart et al. 1986), we train our networks with a Broyden-Fletcher-Goldfarb-Shanno (BFGS) iterative optimization algorithm (Nocedal & Wright 2006, and references therein) in its scipy implementation8. The use of an algorithm that is agnostic of the network details, and therefore computes all required gradients numerically, allows for easy experimentation with cost functions and also with unconventional nodes, such as product units (Durbin & Rumelhart 1989; Schmitt 2002). To increase the efficiency of the training, we implement a caching mechanism for the results computed by each layer of the network. We also use so-called mini-batch optimization (see, e.g., Nielsen 2015), that is we randomly select a “batch” of typically 25% of the training cases, perform several (typically 30) optimization iterations on this batch, and iteratively pursue with the next randomly selected batch.

We start the training iterations from a randomized initial parameter state, with network weights and biases drawn from a centered normal distribution with a standard deviation of 0.1. Owing to this random initialization as well as the mini-batch optimization, networks trained on exactly the same data yield different estimators. We exploit this stochastic behavior to increase the robustness of our training procedure, by systematically using so-called committees of typically eight NNs in place of single networks. After the parallel training of such a committee, and a repeated evaluation of the performance of each member on an independent validation dataset during the training, we retain the best half of the members to form our final estimator. This allows in particular to reject badly converged optimizations, and to verify the overall stability of the training procedure (see also Zhou et al. 2002). We take averages of the predictions made by the retained committee members as output of a committee. For the weight-predicting NNs described in Sect. 3.3, the unconstrained scale of the predicted weights could potentially require a prior normalization. In practice, we observe however that the use of the sigmoid output activation function results in members predicting weights of very similar scales.

Finally, we note that our implementation allows to individually mask realizations of each case, which is important to handle failures of the input feature measurements, discussed in the next section.

4. Feature measurement on galaxy images

The raw data of a weak-lensing study consists of survey images. In this section we describe how we measure a small set of features based on moments of the observed galaxy light profiles from which the shear is to be inferred. Those features will serve as input to the machine learning algorithm, potentially together with information from a PSF-model, multiband photometry, or other relevant parameters. For this exploratory work we deliberately opt for a small number of selected features describing the galaxy images, to ease experimentation, efficiency, and also to set a benchmark. Deep-learning approaches with convolutional NNs, which directly learn filters to extract optimal galaxy features from image pixels are an obvious alternative (Tuccillo et al. 2018; D’Isanto & Polsterer 2018). However, we expect that few simple “hand-crafted” features9 are sufficient to capture a very large fraction of the shear information from the noisy galaxy images of interest to a weak lensing analysis, especially on simple simulations.

4.1. Adaptive weighted moments

To describe the galaxy shapes we use statistics based on moments computed with an adaptive elliptical Gaussian weight function (in contrast to the circular weight function used in Tewes et al. (2012), which we observe to yield less precise results). We employ the well-tested and efficient implementation offered by the HSM module of the GalSim software package (Bernstein & Jarvis 2002; Hirata & Seljak 2003; Mandelbaum et al. 2012; Rowe et al. 2015, and references therein). The same or very similar moment computations are used in other shape measurement techniques, such as DEIMOS (Melchior et al. 2011) and the methods directly implemented within GalSim.

To stress the computational nature of these features and connect them with the HSM implementation, we denote them in a fixed-width font. We define the following moment-based features:

-

adamom_flux corresponds to the total source flux of the bestfit elliptical Gaussian profile (ShapeData.moments_amp in GalSim), expressed in ADU. This is a biased estimate of the flux of any realistic (i.e., non-Gaussian) galaxy profile, but such biases have no direct consequences for ML input features.

-

adamom_g1 and adamom_g2 are components of the observed ellipticity (ShapeData.observed_shape.g1/2 in GalSim), which would correspond, for a simple elliptical Gaussian profile and without PSF, noise, and pixellation, to the ellipticity defined in Sect. 2 as an estimator for shear.

-

adamom_sigma gives a measurement of the radial extension of the profile, in units of pixels (ShapeData.moments_sigma). In the case of a circular Gaussian profile, it would estimate its standard deviation.

-

adamom_rho4 gives a weighted radial fourth moment of the image, measuring the concentration, i.e., a kurtosis, of the light profile (ShapeData.moments_rho4 in GalSim).

4.2. Noise measurement and signal-to-noise ratio

The S/N of galaxy images is a key quantity when assessing the quality of a shear measurement. A scientific analysis of a shear catalog will tend to include galaxies with a S/N as low as tolerable, for a given shear measurement technique. Unfortunately, S/N measurements mentioned across the literature are often difficult to compare, as the observational definition of a S/N is not trivial and not always fully described. In the following, we present the simple observational S/N that we use to evaluate our method.

First, we quantify the background pixel noise for each target galaxy using a rescaled median absolute deviate (MAD) to estimate the standard deviation (see, e.g. Rousseeuw & Croux 1993)

where ξi are the pixel values (in ADU) along the edge of a “stamp” of sufficient size centered on the target galaxy. Generalizations of this procedure, for better precision, are easily conceivable if required. The robust MAD statistic has the advantage, over a plain standard deviation, that potential field stars, galaxies, or image artifacts on the stamp edge have a reduced impact.

In the second step, we combine this background pixel noise measurement with the results from the adaptive moment measurements described above to obtain a S/N. Naturally, our definition of S/N follows from the CCD equation (see, e.g., Chromey 2010), and we choose a circular aperture with a radius of three times the measured half-light radius of the source as effective area for the background noise contribution. More precisely,

where

and G is the gain in electrons per ADU. For a Gaussian profile, the numerical factor  would rescale the standard deviation into the desired half-light radius. This choice of effective aperture Aeff has a strong influence on the S/N, and might seem arbitrary as galaxy light profiles are not Gaussian. We observe however that this definition gives results within a few percent of the ratio FLUX_AUTO/FLUXERR_AUTO given by the SExtractor software (Bertin & Arnouts 1996, 2010), for all simulations considered in this paper. The advantage of defining our own measure of S/N based on the described simple input features is to ease reproducibility and to avoid introducing the dependency on an additional software.

would rescale the standard deviation into the desired half-light radius. This choice of effective aperture Aeff has a strong influence on the S/N, and might seem arbitrary as galaxy light profiles are not Gaussian. We observe however that this definition gives results within a few percent of the ratio FLUX_AUTO/FLUXERR_AUTO given by the SExtractor software (Bertin & Arnouts 1996, 2010), for all simulations considered in this paper. The advantage of defining our own measure of S/N based on the described simple input features is to ease reproducibility and to avoid introducing the dependency on an additional software.

To mimic “sky-limited” observations, simulated images are sometimes drawn purely with a stationary Gaussian noise. In this approximation, Eq. (7) simplifies to

We show some simulated sources with Gaussian profiles for different S/N and sizes in Fig. 1 (see also Fig. 18 for an illustration with PSF-convolved elliptical Sérsic profiles).

|

Fig. 1. Illustration of the S/N on simple Gaussian profiles with Gaussian pixel noise. The fluxes are chosen so that the average S/N, measured on many realizations of each source, matches the scale given on the left. |

We note that for ML shear measurement, a measured S/N is potentially an interesting input feature of each galaxy, especially if the number of features needs to be small (Tewes et al. 2012). However, in the following, we will not use the S/N as input feature, but provide instead separately the more fundamental flux and size measurements to the ML algorithm, complemented by a sky noise measurement if required. This use of flux instead of S/N allows, in particular, for testing a single training on test sets with different noise levels, or for training on data with a lower noise than the actual observations. Nevertheless, we will extensively use the observed S/N defined above in the analysis of shear estimation biases.

5. Machine learning shear estimation

We now describe how we use and train NNs to predict an estimate for the shear of each galaxy, using the NN cost functions and the input features introduced in the previous sections. We focus on the core principles of the ML approach, and defer for now the numerous complications that a full shear measurement pipeline has to face.

Recall that we consider here the prediction of point estimates of the shear components ĝi, i ∈ {1, 2}, and associated weights which we denote wi. We will predict these point estimates and weights with independent NNs that are trained with different cost functions. For the sake of simplicity, we also distribute the predictions related to the two components to independent networks, instead of considering networks with multiple output nodes. We therefore train four scalar estimators, each consisting of a committee of several NNs for increased robustness.

Depending on the conditions in which the shear estimation method is to be applied, such as ground- or space-based data, variability of the sky background, instrumental effects in the data, selection of the source galaxies, accuracy to be achieved, different ways to setup and train these estimators can be considered. In the following, we present and motivate one simple fiducial approach in generic terms, using two different “training sets”, that is forward-simulations of observed galaxies with known shear.

5.1. Step I: shear point estimates with low conditional bias

We start by training the two shear point estimators ĝi. A simple toy-model choice of the input features could be measures of the ellipticity components, the flux, the size of the observed galaxy image, the noise of the sky background, and the ellipticity and size of the PSF model at the location of the considered galaxy. These eight input values summarize key information required by the shear estimator to account for the PSF shape and noise bias. We note that a different approach to inform the ML about the variability of a space-telescope PSF is discussed in Sect. 7.

We use the MSB cost function (Eq. (3)), which, muting the explicit dependency on the training data, takes the form

with p designating the parameters of the estimator. Recall that the networks for the two components of ĝi are entirely independent. For each component, ĝjk(p) designates the predicted value for the realization j of the case k.

The structure of the training set, that is its composition of cases and realizations, is the next most important choice. To train the estimator to be both accurate and as insensitive as possible to the distribution of true galaxy properties, we aim at penalizing its “conditional” bias, that is its bias in any subregion of this true parameter space. In other words, we aim at a potential estimator which would be accurate for any PSF, and any true galaxy size, elongation, flux, etc.

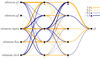

We generate a training set as illustrated in Fig. 2. Within each case k, the realizations share the same true shear  (the target value for the training), but also the same value for other explanatory variables that we can request the estimator to attempt to become insensitive to, given the information it obtains from its input features. Consequently, each case contains only one true galaxy combined with one particular PSF, always seen under the same shear. While other aspects of the data, such as the position angle of the galaxy, its exact position on the pixel grid, and the realization of the pixel noise do have a direct influence on the shear estimate, they have to be dealt with statistically. Indeed, a shear estimator cannot be insensitive to the intrinsic orientation of a galaxy, which is degenerate with the shear. This orientation acts as a form of unavoidable shape noise for the shear measurement. Therefore, within each case, we draw nrea realizations of these noise sources, and train the estimator to yield unbiased predictions despite this noise and pixellation.

(the target value for the training), but also the same value for other explanatory variables that we can request the estimator to attempt to become insensitive to, given the information it obtains from its input features. Consequently, each case contains only one true galaxy combined with one particular PSF, always seen under the same shear. While other aspects of the data, such as the position angle of the galaxy, its exact position on the pixel grid, and the realization of the pixel noise do have a direct influence on the shear estimate, they have to be dealt with statistically. Indeed, a shear estimator cannot be insensitive to the intrinsic orientation of a galaxy, which is degenerate with the shear. This orientation acts as a form of unavoidable shape noise for the shear measurement. Therefore, within each case, we draw nrea realizations of these noise sources, and train the estimator to yield unbiased predictions despite this noise and pixellation.

|

Fig. 2. Illustration of the structure of a training set to train a shear estimator ĝi with an MSB cost function. The horizontal frames correspond to different “cases”, each containing different “realizations” of a galaxy. All galaxies of a case are simulated with the same true shear, and the same PSF. Despite the circular symmetry of the PSFs used in this illustration, the typical cosmic shear is too small to be noticed by eye. |

The required value nrea to sufficiently average-out the noise effects with respect to a significant bias can be reduced by noise cancellation techniques. With such techniques, a controlled ensemble of compensating samples is taken, to improve the precision on the bias of a case beyond what would be achieved by randomly drawing the realizations. In Fig. 2, the intrinsic orientations of the galaxies are rotated in regular intervals on a ring in the (ε1, ε2)-plane, so that the average intrinsic ellipticity within each case exactly vanishes (following Nakajima & Bernstein 2007). Such techniques have become known as shape noise cancellation (see, e.g., Mandelbaum et al. 2014, and references therein).

Let us consider again the cost function. If a hypothetical estimator would achieve a zero MSB cost, for an infinite amount of realizations per case, this estimator could be said to be fully insensitive to the distribution of galaxy and PSFs it is presented with, among the population it was trained on. It is important to acknowledge that this is not possible in practice for all regions of this “true” parameter space: consider the example of an intrinsically small, unresolved galaxy, whose observed shape will not carry shear information. The PSF, the noise, and the pixellation lead to a loss of information which cannot be compensated for by the point estimator.

This limitation has important consequences. The presence of “difficult” cases in the training set, such as unresolved galaxies without useable shear information, or cases for which the precision is insufficient, can affect the performance of the estimator even in “easier” regions of parameter space. Indeed, the cases are connected by the one scalar MSB cost function summarizing the whole training set. If the features do not allow the NN to differentiate well enough between these “difficult” and “easy” cases, or if ML capacity is insufficient to exploit the features, the ML might have to settle with bad performance on easy cases in order to avoid excessively bad scores on the difficult ones. It’s a price of the simple sequential point estimate and weight training explored in this paper.

The role of the second step is to build a function that downweights galaxies from which an unbiased estimate cannot be obtained.

5.2. Step II: weight prediction

Given the estimators ĝi, i ∈ {1, 2}, we now train independent NNs to predict the associated weights wi. We use an MSWB cost function from Eq. (5), which can be written, separately for each component of the shear and its estimators, as

We recall that the w are constrained to the interval (0, 1) by design of the NNs, and that the estimates ĝ are to be computed ahead of the training of the weight-predictor, for each galaxy in the training data.

Again, putting aside technical details of the ML algorithm, we consider the choice of input features and the structure of the training data. Regarding the input features, it could seem intuitive that a small set of features, describing for example the observed size and flux, is sufficient to optimally down-weight low-S/N source galaxies for which an unbiased shear estimation cannot be achieved. After all, if the ĝi achieve low conditional biases, the act of removing intrinsically small and faint galaxies from the sample cannot introduce any additional biases. This reasoning is however wrong, as we don’t have access to any true galaxy parameters, which are uncorrelated with the shear. Instead, the input features, including the measurement of size and flux, are based on the observed galaxy and will inevitably show dependencies on the shear, at some level. Using such a small set of features would lead to a shear-dependent weighting, and thereby lead to biases even if the ĝi itself is accurate. Weighting acts in this regard exactly as any selection function, leading to selection biases (Miller et al. 2013; Kaiser 2000; Bernstein & Jarvis 2002).

Two conclusions can be drawn from this observation. First, it is justified to maintain the full set of features when training the weight-estimator, so that the weights can exploit the full information from each source to counter selection effects. Second, if selection biases prior to the shape measurement are affecting the data, this step of training the weights is a natural place to inform the ML-alogrithm about the selection function. Applications in Sects. 6 and 8 will illustrate this point.

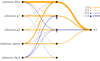

We structure the training data for the weights as illustrated in Fig. 3. The realizations within a case still all share the same true shear and PSF, but now also sample ideally the full population of observed galaxies. By this structure, we therefore aim at predicting weights so that, for any shear and any PSF, the overall shear prediction error (both statistical and bias) gets minimized.

|

Fig. 3. Structure of a training set to train a weight estimator, wi, with a MSWB cost function. Within each case, this training informs the method about approximated distributions of properties of the source galaxies and selection functions. |

We stress that the introduction of these weights, estimated on the noisy observations, to the shear estimation formalism will potentially re-introduce some small conditional biases that we attempted to minimize in Sect. 5.1. For example, the different realizations of a galaxy shown in Fig. 2 will get slightly different weights. Given the loss of information in the observation process, it is expected that a shear estimator cannot be fully insensitive to the true galaxy parameters. The approach presented here attempts to minimize this sensitivity to the smallest achievable level.

Furthermore, we stress that the trained weight-estimator does depend on the distribution of galaxy properties in the training data. To pick again an extreme example for the purpose of illustration, the prevalence of unresolved galaxies (or mis-identified stars) in the source population will influence how conservative the rejection of small observed galaxies needs to be in order to avoid biases.

Finally, we note that in the context of the weight training described above, shape-noise cancellation (SNC) should in general not be used in the simulated datasets. As the shape-measurement precision increases with S/N, SNC is more efficient on high-S/N than on low-S/N galaxies. On simulations with SNC, the training of wi would easily learn to exploit this, by yielding weights that exaggeratedly favor bright and large galaxies. Weights trained in this fashion are closer to optimal weights for ellipticity measurement than to weights for shear measurement, and would have to be corrected before being applicable to real survey data. For simplicity, we consider in this paper the direct estimation of weights for shear estimation, and therefore avoid when feasible the use of SNC in our training and validation sets.

5.3. On the estimation of ellipticity, size, magnification or other parameters

Variants of the described approach can be used to estimate parameters other than shear, such as galaxy shape model parameters. More generally, any parameter defined by a measurement on an idealized source, as would be seen with an infinite-resolution and noise-free imaging system, can serve as target for the ML output.

Let us first consider the estimation of the ellipticity components of idealized galaxy images, as defined before PSF-convolution and noise (see Sect. 2.1). Recall that real galaxy profiles have no simply-defined ellipticity. However, if the use of galaxies with complex morphology is required, ellipticity measurements can be used as target values in an identical manner. Figure 4 illustrates a simulation structure to train a point estimator for ellipticity. Cases cover a variety of galaxies and PSFs, and the realizations within each case differ only in noise and their exact positioning on the pixel grid. No shear is added to the training simulations. The set of input features remains unchanged from the previous examples, and the NNs are trained with an MSB cost function. Under the hypothesis of the idealized galaxy morphology, the resulting point estimate of the ellipticity can be seen as an estimate for the shear. Associated weights for this use can then be trained exactly as done in Sect. 5.2.

|

Fig. 4. Structure of a training set to train an ellipticity estimator, which can serve as a shortcut to the training of a shear estimator, when considering galaxies with simple elliptical profiles. |

Such a prediction of ellipticity is of particular interest, as it provides a computational shortcut for shear estimation, and we will later use it to pretrain NNs. The advantage, with respect to training a shear point estimator, is that fewer realizations per case are needed to achieve comparable results, as the shape noise has been removed from the problem.

Another estimator of interest is the angular size of a galaxy, again before PSF-convolution and noise effects. The availability of an accurate size measurement is mandatory for galaxy size-magnification studies (Schmidt et al. 2011), which suffer from the same instrumental bias sources as the ones affecting shear measurements. An approach to directly predict a magnification estimator could also be explored, in analogy to the shear estimator presented in this paper. Doing so, the ML algorithm could learn to exploit physical correlations between galaxy properties (see, e.g., Huff & Graves 2013) while compensating for the observational correlations introduced by the measurement process on noisy images. Discussing these estimators in more detail is, however, beyond the scope of this paper.

5.4. Practical notes on the training convergence and data

The successful training of supervised ML alogrithms typically requires some experimentation with hyperparameters, such as the size of the NNs and the size of a training set. In the following, we briefly list important observations and advices which ease the methodical optimization of the architecture and training of the neural networks. While some of these suggestions might seem elementary to ML-practitioners, we detail them in the particular context of the presented galaxy shape measurement problem. We assert that these principles are useful for any ML shear measurement approach.

Validation set. Arguably the most important idea is to always use a separate validation dataset to evaluate a training performed on some training dataset. This validation set can be simulated in the similar way as the training set, but should otherwise be independent (i.e., contain different cases and realizations). Monitoring the cost function value on both validation and training sets during the training allows the detection of training convergence and potential overfitting of the ML algorithm. Overfitting could happen if the training set is too small (in terms of nrea and ncase) and/or if the NN-capacity is unnecessarily high. Validation sets with both structures shown in Figs. 2 and 3 are useful. The first one can be used to test the achieved quality of the point shear estimator, and the second one to test the overall shear estimate including the predicted weights.

Start with small NNs. The experimentation should start with few and simple features and a very low-capacity network, to obtain a benchmark solution. Before adding features, or increasing the NN capacity by adding nodes, a validation set of sufficient size on which one can clearly visualize the limitation of the benchmark solution should be available. We would like to point out that the shear estimation problem based on the input features described in this paper does not require a large capacity. Indeed, the dependency of the shear estimate on the observed features is rather smooth, and no discontinuities are expected.

Training set adjustments. It is often advantageous to use different source galaxy property distributions in the training set than in the data one wishes to process. In particular, when training the shear point estimator with MSB (Sect. 5.1 and Fig. 2), cases from which no unbiased shear estimates can be expected may harm the training and should be avoided. A typical example is given by unresolved galaxies, with an intrinsic extension much smaller than the PSF. Their observed features will carry no shear information. Even a small number of these cases can dominate the cost function value, and lead the NNs to overfit and yield biased estimates on much easier cases. For the same type of training, it can also be beneficial to fill the true parameter space relatively uniformly, and to extend the range of true simulation parameters (such as shear, galaxy flux, and size) beyond what the real-sky data contains. We stress that the training set for the weight prediction should mimic the real-sky data and therefore include all problematic cases in a representative way. The same is true for an overall validation set.

Pretraining on “simpler” data. The computational cost to train the point estimator with MSB can be reduced by starting the NN training on data from simulations with an artificially reduced noise. The low noise allows for a smaller training set, and therefore a faster training, in many cases by at least an order of magnitude. The motivation for such a pretraining is that the NNs can learn for instance how to perform the PSF correction in an efficient way. Afterward, the pretrained NNs are further optimized to correct for noise bias on the conventional training set, requiring far less iterations than if no pretraining was done. If an input feature informs the NNs about the noise, it can be necessary to alter this feature during the pretraining, so that the values encountered by the NNs when training on low-noise data approximatively correspond to the values seen on the conventional simulations.

Amount of simulated data. Ideally, the size of a training set should be increased up to a point at which no further improvement of predictions made on an even larger validation sets is seen. As a rule of thumb, the validation set size needed to probe biases to some desired accuracy gives a good indication of the required training set size. For example, as the different shear “cases” of a constant-shear GREAT3 branch contain 10 000 galaxies each (including shape noise cancellation), one needs a training set with about as many realizations to obtain satisfactory results.

6. Application to fiducial toy-model simulations

As a first proof of concept of the proposed machine learning approach, we demonstrate it on a simple set of easily reproducible simulations, which we call “fiducial”. We stress that the main purpose of these simulations is to allow qualitative examinations and comparisons. We do not seek to optimize or explore every aspect of the algorithm at this stage, and focus instead on illustrating the core ideas introduced in the previous sections with small NNs. We defer experiments based on more realistic simulations to Sect. 8.

6.1. Fiducial image simulation parameters

We use GalSim (Rowe et al. 2015) to generate training and validation data in the form of Sérsic profiles (Sérsic 1963) convolved with a Gaussian PSF, on stamps of 64 by 64 pixels. For these first experiments the PSF is circular with a standard deviation of 2.0 pixels, while we introduce a non-stationary PSF in Sect. 7. Table 1 lists the simple distributions of true galaxy parameters that we use through all these experiments. For efficiency reasons, we couple the size and flux distributions by first drawing a surface-brightness parameter S for each galaxy, and then computing the true flux F = S ⋅ πR2, where R is the true half-light radius of the Sérsic profile. This choice allows us to reduce the generation of undetectable galaxies with large size and a low flux. For each realization of a stamp, the true position of the galaxy is uniformly drawn within one pixel around the stamp center, to simulate random pixellation. We mimic background-limited noise conditions by drawing stationary pixel noise from a normal distribution with zero mean and a standard deviation of 1.0 counts.

Galaxy parameters of the fiducial experiments.

Figure 5 shows the distribution of average observed S/N (as computed via Eq. (9)) and of the relative frequency of feature measurement successes on these fiducial simulations. The points in the two panels represent cases from a dataset as shown in Fig. 2: both statistics are computed over many orientations and noise realizations of the same true galaxies.

|

Fig. 5. Evolution of the S/N (top panel) and of the selection function imposed by the feature measurement (bottom panel) as function of the parameters R and S of the fiducial simulations. Each point is computed from 10 000 rotated realizations of a galaxy, convolved by the stationary circular PSF used in Sect. 6.2 (dataset VP). |

Roughly 30% of the galaxies drawn from the fiducial parameters have a S/N below 10. We intentionally design the fiducial simulations to include those galaxies, and even to reach into regions of parameter space in which the feature measurement regularly fails due to the noise. Our feature measurement via adaptive weighted moments imposes a selection on the galaxies, analogously to what the detection of sources in a real survey would do. Any adaptive or non-trivial feature measurement will show a similar behavior. With these fiducial simulations, we can therefore make a demonstration of handling a selection function with the ML-predicted weights.

6.2. Training and validation datasets

All training and validation data are drawn based on the fiducial parameter distributions introduced above. The feature measurement on the simulated galaxy images directly follows their generation, and might not always succeed, due to the pixel noise. Individual galaxy realizations for which this happens are simply masked out from the catalog. We generate four different datasets, which we describe in the following.

-

TP designates the dataset used to train the shear point estimators, with a structure as illustrated in Fig. 2. We draw 5000 independent galaxies and shears (“cases”), and generate 2000 realizations of each galaxy, for a total of 10 million stamps. Prior to the shearing, the PSF-convolution and the pixellation, the galaxy is rotated for each realization so that the position angle of the galaxy uniformly and regularly describes a full circle per case. We then remove about 30% of the cases that have ⟨S/N⟩< 10 from this dataset, as motivated below.

-

VP serves as an intermediate validation dataset for the point estimators. It has the same structure and number of cases as the above TP, but with 10 000 realizations per case, amounting to 50 million stamps. This allows for a higher precision of the bias analysis.

-

TW serves to train the weight estimators, with a structure as illustrated in Fig. 3. We draw 200 cases of different shears, and 100 000 realizations of different galaxies per case (20 million stamps), without any shape noise cancellation scheme.

-

VO is an overall validation dataset. It has the same structure and size as TW, and we again opt for not using shape noise cancellation.

These datasets are drawn with different initializations of the random number generators.

To summarize, the training of the point estimates will use a dataset avoiding “difficult” cases of small and faint galaxies, while the training of the weights uses the full fiducial parameter space. Clearly, some of the small and faint galaxies within the parameter space do not allow accurate ĝi estimates, even with 2000 realizations. Their presence in TP would perturb the training process, as the NNs would attempt to fit these outliers instead of obtaining accurate ĝi on the “easy” cases (see Sect. 5.4). We stress that this selection based on the average S/N per case rejects entire cases, and not only some particular realizations. It does not introduce the biases and complications that a selection on individual measurements would bring. Furthermore, we note that the use of this particular threshold on ⟨S/N⟩ is somewhat arbitrary, and that more optimized selections based on true galaxy parameters could result in a better performance.

On average, and with the demonstration scripts which we make available, the generation of one 64-by-64-pixel stamp takes about 10 ms, and the feature measurement takes 3 ms on a contemporary CPU.

6.3. Machine learning shear estimation

As input for all NNs, we use the five features adamom_g1, adamom_g2, adamom_sigma, adamom_flux, adamom_rho4 described in Sect. 4.1. We do not inform the NNs about the PSF and the background noise, given that these are stationary in the present section.

In a first step, we train the two point estimators ĝ1 and ĝ2 on the dataset TP. For each component, we use a committee of eight individual NNs with two hidden layers of five nodes, and one output node. Figure 6 illustrates one of these NNs. All other parameters concerning the NN setup follow the description given in Sect. 3.4.

|

Fig. 6. Visualization of one NN from the committee predicting a shear point estimate ĝ1, trained for the fiducial experiment. The NN nodes are represented by black squares, in a configuration with only two hidden layers of five nodes each. The connections between these nodes depict the values of the NN weights, by their thickness and color. The NN biases are visualized by the triangles below the nodes. The legend gives the scale of these elements. From the relative amplitude of the weights, one can observe, for example, that adamom_g2 has relatively low impact, while adamom_sigma plays an important role in the prediciton of ĝ1. All input features are normalized prior to entering the NNs (see Sect. 3.4 for details). |

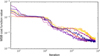

The evolution of the MSB cost function during the training is shown in Fig. 7. After about 10 000 iterations no further improvement is seen for the best committee members in this particular setup, and we stop the training.

|

Fig. 7. Evolution of the MSB cost function value during the training of the shear point estimation for the fiducial experiment. Each color shows a different committee member. Evaluations on a validation set are shown with dotted lines. Owing to the implemented mini-batch algorithm (Sect. 3.4), the cost function is not always monotonically decreasing. |

Before training the weight estimators, we inspect the achieved quality of this first step by applying the point estimators to the VP validation set. Figure 8 presents a first quality check of ĝ1, visualizing estimation biases per case as a function of source galaxy parameters. Some panels show results for a subset of cases selected according to their ⟨S/N⟩. As first observation, we note that the simple networks succeed in predicting accurate shear point estimates for almost all ⟨S/N⟩> 10 cases. Remarkably, this performance even extrapolates to some low-⟨S/N⟩ cases from regions of parameter space not seen during the training.

|

Fig. 8. Overview of the shear point estimation for the fiducial simulations. Each point is a case of the VP validation set. Top panels: residuals of the unweighted average ĝ1 as function of the true shear |

Based on the ⟨S/N⟩> 10 cases of the same dataset, Fig. 9 shows the multiplicative and additive bias terms (Eq. (2)) obtained by unweighted linear regression, in various bins of source galaxy parameters. Given the dependence on these galaxy parameters, we refer to the resulting biases as “conditional”. As for the training, the ⟨S/N⟩-cut of the validation set is arbitrary, but this simple threshold allows us to focus on the cases which matter most for a real shear measurement application. In most bins of Fig. 9, the conditional multiplicative biases μ have amplitudes close to 2 × 10−3 (shown by the shaded horizontal band), while the additive biases c are on the order of 10−4. The most significant multiplicative biases are observed for galaxies with large Sérsic indices (i.e., the most centrally concentrated light profiles). This is easily explained, as the concentration of the light profile, related to the feature adamom_rho4, is particularly difficult to measure for small and faint galaxies. In parts of parameter space in which features are unreliable (adamom_rho4, for example), the NNs will tend toward predictions satisfying an average galaxy. In Fig. 9, this directly leads to conditional bias trends, typically with a balance between positive and negative multiplicative biases. We note that when considering average biases over an entire source galaxy population, these conditional biases might cancel out, at the price of some sensitivity to this source population. It is also important to stress that the above analysis purposely avoids selection biases by disregarding cases for which ⟨S/N⟩< 10. Such a selection cannot be made on individual realizations, leading us to the next training step.

|

Fig. 9. Multiplicative ( μi) and additive (ci) bias parameters characterizing the ĝi point estimates, for the fiducial experiment, as function of galaxy parameters. The four panels visualize the dependence of these bias terms on the average S/N per case, the true half-light radius R, the Sérsic index, and the intrinsic ellipticity ε of the galaxies. In each panel, the μi and ci are shown respectively with solid and dotted lines, in black for the first component, in red for the second (see legend). The bin limits of source galaxy parameters divide the population into quantiles and are indicated with light vertical lines, except for the Sérsic index, which follows a discrete distribution. The parameters are shown on a linear scale within the shaded area, and using a logarithmic scale outside of this region. Error bars depict 1σ uncertainties originating from the validation set. In addition, the training itself introduces some noise, so that details of these curves vary depending on the realization of the training set, without changing the qualitative observations discussed in the text. |

We proceed by training the weight estimators, using the TW dataset without any additional selection, which in a real application would mimic the observed data as closely as possible. We again use committees with eight NNs, but reduce the capacity of the NNs to a single hidden layer of five nodes. Figure 10 shows an example of this small network structure. For the present demonstration, larger NNs did not yield significantly better results. The MSWB cost function, computing weighted averages of the shear point estimates per case, ceases to decrease after few hundred iterations.

We then run the predictions ĝi and wi from both steps on the remaining VO dataset. Figure 11 summarizes the results of this overall analysis of the shear estimates. The two leftmost panels show shear residuals on each of the 200 cases, obtained by averaging the point estimates ĝi alone accross each case, ignoring the weights. Significant percent-level multiplicative shear biases can clearly be seen. They can be attributed mainly to (1) noise and pixellation bias on small and low-S/N galaxies, and (2) the potentially shear-dependent selection function imposed by the feature measurement. The central panels of this figure show residuals of the weighted average shear of each case, on the same data. The use of weights reduces the overall multiplicative bias by an order of magnitude to the level of 10−3, with additive biases on the order of 10−4. We conclude from this experiment that the weight estimators have successfully learned to down-weight “difficult” galaxies, and to mostly cancel out remaining biases of the point estimates.

|

Fig. 11. Overall validation of the shear estimation of the fiducial experiment with stationary PSF, demonstrating the achieved performance on galaxies selected only by the measurability of the input features (see Fig. 5). Leftmost panels: residuals of the unweighted average point estimates as function of the true shear component (one point per case of the VO dataset); central panels: residuals of the full shear estimates including the weights. Sums and averages are computed over the realizations within each case. The bias parameters μi and ci obtained from linear regressions are indicated within the panels. Rightmost panels: weight values for a random subsample of galaxies, as function of the measured features adamom_sigma and adamom_flux (top), and the true parameters R and S (bottom). The equivalent distributions of the respective other weight components are highly similar. |

The right panels of Fig. 11 illustrate the distribution of weights for random individual galaxies. One can observe that adamom_flux has a major influence on the weight value, but that other features in addition to adamom_sigma also contribute to the estimator. We stress that this reliance on other features is essential if we want the weights to counter biases introduced by any shear-sensitive selection function, which might have a complicated dependency on galaxy parameters.

As a side effect, the weights also introduce sensitivity to the true galaxy parameters. In Fig. 12, we present an analysis of the conditional biases similar to Fig. 9, but now taking into account the weights, and for the full fiducial parameter space without any cut in ⟨S/N⟩. The interpretation of these conditional biases of the weighted point estimates is not trivial. The points in each bin show the multiplicative and additive biases one would obtain if all source galaxies would have their true properties within this particular bin, instead of following the distributions used for the weight training. This is a very pessimistic point of view, as it assumes that we largely ignore the true galaxy properties. Instead, for any practical application, the training set for the weights would be chosen to mimic the target galaxy population as accurately as possible. The analysis shown in Fig. 12 therefore gives a first idea about the sensitivity to the knowledge of source galaxies, in particular how important this knowledge is for training the weights. It is reassuring to observe that the weights do not completely invalidate the very low sensitivity of the point estimates to the galaxy parameters. Impressively, the conditional multiplicative biases seen in Fig. 12, without any cut in S/N, are still sub-percent for the large majority of slices through true parameter space. Nevertheless, comparing Figs. 9 and 12, one might be led to wonder if the use of weights is not detrimental. We stress again that a selection of galaxies based on ⟨S/N⟩, as done in Fig. 9, is not possible for real data. A substitute selection based on S/N (or any other combination of observed features) will likely lead to selection biases, which can however be mitigated by the use of weights, as we will illustrate in Sect. 8.

|

Fig. 12. Conditional biases of the full shear estimator (point estimates and weights) on the fiducial experiment. As discussed in the text, these conditional biases give a very pessimistic view, as the population within each bin strongly differs from the assumed overall population with which the weights are trained. The analysis uses the VP dataset, and includes all galaxies for which features could be measured, without any cut in ⟨S/N⟩. A weighted least squares regression is performed in each bin to obtain μ and c. |

7. Correcting for a non-stationary PSF

In the following, we demonstrate two alternative paths along which the ML method can correct for a variable PSF. For both situations, we assume that the PSF is exactly known, and regard the creation of a suitable PSF model to be a separate problem.

The first approach is oriented toward dealing with a well-determined diffraction-limited PSF of a space-based telescope. We assume that a model of the PSF across the field exists and is applicable to many exposures of a survey. Given such a PSF model, one can directly use the field position of each galaxy as input features to an ML shear estimation. The ML algorithm is trained on simulations that are generated using the same PSF model, and thereby directly learns how the PSF should be corrected for depending on the location of a source on the detector. The advantage of using the source position as an input feature is that it optimally encodes, given the model, the knowledge about the PSF, and naturally provides a machine-learned interpolation of the PSF anywhere in the field. This approach can be generalized for a PSF model that depends, for example, on time or galaxy color.

The second approach is better suited for the stochastic atmospheric PSF of a ground-based survey. For such data, a separate PSF model is usually constructed for each exposure, based on images of stars in the field. It is intractable to train an ML algorithm to individually learn the spatial dependence of the PSF for each exposure. But instead, one can train the ML to perform a PSF correction based on input features that directly describe the PSF shape. For example, moment-based shape parameters describing the ellipticity and size of the PSF could be used, or parameters describing the PSF or its variability from a reference PSF in terms of an orthogonal basis. The ML is then to be trained with a range of PSFs encountered in the whole survey, and naturally learns to interpolate the PSF shape parameters.

7.1. Implementation and simulations