| Issue |

A&A

Volume 591, July 2016

|

|

|---|---|---|

| Article Number | A2 | |

| Number of page(s) | 19 | |

| Section | Cosmology (including clusters of galaxies) | |

| DOI | https://doi.org/10.1051/0004-6361/201628278 | |

| Published online | 01 June 2016 | |

High resolution weak lensing mass mapping combining shear and flexion⋆

1 McWilliams Center for Cosmology, Department of Physics, Carnegie Mellon University, Pittsburgh, PA 15213, USA

e-mail: flanusse@andrew.cmu.edu

2 Laboratoire AIM, UMR CEA-CNRS-Paris, Irfu, SAp, CEA Saclay, 91191 Gif-sur-Yvette Cedex, France

3 Department of Physics and Astronomy, University College London, Gower Place, London WC1E 6BT, UK

Received: 9 February 2016

Accepted: 12 March 2016

Aims. We propose a new mass mapping algorithm, specifically designed to recover small-scale information from a combination of gravitational shear and flexion. Including flexion allows us to supplement the shear on small scales in order to increase the sensitivity to substructures and the overall resolution of the convergence map without relying on strong lensing constraints.

Methods. To preserve all available small scale information, we avoid any binning of the irregularly sampled input shear and flexion fields and treat the mass mapping problem as a general ill-posed inverse problem, which is regularised using a robust multi-scale wavelet sparsity prior. The resulting algorithm incorporates redshift, reduced shear, and reduced flexion measurements for individual galaxies and is made highly efficient by the use of fast Fourier estimators.

Results. We tested our reconstruction method on a set of realistic weak lensing simulations corresponding to typical HST/ACS cluster observations and demonstrate our ability to recover substructures with the inclusion of flexion, which are otherwise lost if only shear information is used. In particular, we can detect substructures on the 15′′ scale well outside of the critical region of the clusters. In addition, flexion also helps to constrain the shape of the central regions of the main dark matter halos.

Key words: gravitational lensing: weak / methods: data analysis / galaxies: clusters: general / dark matter

Our mass mapping software, called Glimpse2D, is made freely available at http://www.cosmostat.org/software/glimpse

© ESO, 2016

1. Introduction

Probing the mass distribution of the various structural components of the Universe is a fundamental tool for constraining cosmological models. Gravitational lensing, which is sensitive to the total mass content, is particularly well suited to this task. One of the strengths of gravitational lensing is its ability to probe the dark matter content from the largest cosmological scales down to the galaxy scale, providing valuable constraints on all intermediary scales.

In particular, on the galaxy cluster scale, gravitational lensing is an established tool for measuring the total mass distribution and investigating the nature of dark matter (e.g. Clowe et al. 2006; Massey et al. 2015). While the combination of gravitational shear and strong lensing constraints has proven very successful to resolve the fine structure of galaxy clusters (Bradac et al. 2005; Cacciato et al. 2006; Merten et al. 2009), strong lensing is only effective within the Einstein radius of the cluster, which represents for most clusters only a fraction of their total area. Outside of this region, constraints from gravitational shear are generally very poor below the arcminute scale, which makes the detection of substructures extremely unlikely. A promising avenue to bridge the gap between shear and strong lensing and to help resolve substructures outside of the innermost regions of galaxy clusters is gravitational flexion, which has already been shown to provide valuable constraints on these intermediate scales (Leonard et al. 2007).

Measuring flexion has proven to be a difficult task in practice (Viola et al. 2012; Rowe et al. 2013). Originally, flexion estimators were derived from shapelet coefficients (Goldberg & Bacon 2005; Bacon et al. 2006). Another approach relied on moments of galaxy images (Okura et al. 2007; Goldberg & Leonard 2007; Schneider & Er 2008). More recently, a different approach to flexion estimation, called the analytic image method (AIM), has been proposed by Cain et al. (2011) based on forward-fitting analytical galaxy image models. Among the benefits of this promising new method are a complete invariance under the mass-sheet degeneracy and a better characterisation of the measurement errors.

Very few methods have been developed for mass mapping that includes flexion. The first reconstruction technique using flexion has been proposed in Bacon et al. (2006) as an extension of the Kaiser-Squires Fourier estimator (Kaiser & Squires 1993). A parametric reconstruction of Abell 1689 was attempted in Leonard et al. (2007) and Leonard et al. (2009), Leonard & King (2010) later proposed an extension of aperture mass filters to the case of flexion. Joint reconstruction based on maximum likelihood methods combining shear, flexion and strong lensing have also been proposed by Er et al. (2010) and most recently in Cain et al. (2016).

Recovering high resolution mass-maps from weak lensing is a difficult problem for two main reasons: the irregular sampling of the lensing field and the low signal-to-noise ratio (S/N) on small scales. While the lensing equations can be most easily inverted when the field is fully sampled on a regular grid, for instance using a Kaiser-Squires estimator, in practice the shear and flexion fields are only sampled at the position of the background galaxies, which are not regularly distributed. In that case the lensing equations are no longer directly invertible and mass mapping becomes an ill-posed inverse problem, i.e., the solution is not unique and/or extremely unstable to measurement noise. To avoid this problem, a usual approach is to bin or smooth the input shear or flexion on a regular grid, which not only serves as a regularisation of the inverse problem but also acts as a denoising step by suppressing smaller scales, where the noise typically dominates. Although this reasoning holds for shear alone, adding flexion information greatly increases the S/N of structures on small scales, in which case binning or smoothing the input data can destroy relevant information.

The aim of this paper is to propose a new mass mapping methodology that is specifically designed to recover small-scale information from weak lensing by avoiding any binning or smoothing of the input data and supplementing shear information with flexion to increase the sensitivity to substructures. We address the mass mapping problem as a general ill-posed inverse problem which we regularise using a wavelet-based sparsity prior on the recovered convergence map. Similar sparse regularisation methods have already been proposed in the context of weak lensing mass mapping in Pires et al. (2009) for inpainting missing data and Leonard et al. (2012, 2014) for 3D mass mapping. In this work, the sparse regularisation framework not only allows us to solve the lensing equations even at very low sampling rates but it also provides a very robust multi-scale noise regularisation. Furthermore, despite the irregular sampling of the data, our algorithm relies on fast Fourier estimators to invert the lensing equations, by treating the computation of the Fourier transform (which is ill-posed in this case) as part of the same inverse problem. This makes our approach far more efficient than the costly finite differencing schemes usually used in maximum likelihood algorithms. Finally, the proposed algorithm accounts for reduced shear and flexion and incorporates individual redshift measurements of background sources.

This paper is structured as follows. We present a short overview of the weak lensing formalism and motivate the interest of flexion in Sect. 2. In Sect. 3 we introduce and demonstrate the effectiveness of our sparse regularisation approach in the simplified setting of reconstructing the convergence from shear alone. Then, in Sect. 4, we incorporate the non-linearity induced by the reduced shear, we add redshift information for individual galaxies and we extend the framework to include flexion. Finally, in Sect. 5 we demonstrate on realistic cluster simulations that our method is successful at reconstructing the surface mass density and illustrate the impact of the additional flexion information for the recovery of small scale substructures.

2. Weak gravitational lensing formalism

2.1. Weak lensing in the linear regime

Because gravitational lensing is sensitive to the total matter content of structures, it is an ideal probe to map the dark matter distribution. The presence of a massive lens along the line of sight will induce a distortion of the background galaxy images which can be described by a coordinate transformation (Bartelmann 2010) between the unlensed coordinates β and the observed image coordinates θ:  (1)where ψ is the 2D lensing potential encoding the deflection of light rays by the gravitational lens. The lensing potential can be seen as generated from a source term, through the following Poisson equation:

(1)where ψ is the 2D lensing potential encoding the deflection of light rays by the gravitational lens. The lensing potential can be seen as generated from a source term, through the following Poisson equation:  (2)where κ is the convergence, a dimensionless surface density. If we consider a single thin lens, κ can be derived from the surface mass density of the lens Σ as:

(2)where κ is the convergence, a dimensionless surface density. If we consider a single thin lens, κ can be derived from the surface mass density of the lens Σ as:  (3)where Σcritic is the critical surface density defined by a ratio of distances between the observer, the lens and the source:

(3)where Σcritic is the critical surface density defined by a ratio of distances between the observer, the lens and the source:  (4)In this expression, DS, DL and DLS are respectively the angular diameter distances to the source, the lens and between the lens and the source.

(4)In this expression, DS, DL and DLS are respectively the angular diameter distances to the source, the lens and between the lens and the source.

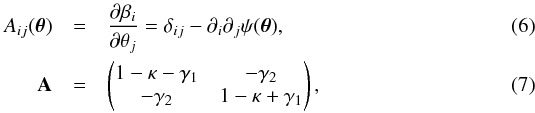

If the lensing effect is weak enough, the coordinate transformation can be approximated using a Taylor expansion to first order of Eq. (1) as:  (5)where A is the amplification matrix, defined in terms of the derivatives of the lensing potential and which takes the form:

(5)where A is the amplification matrix, defined in terms of the derivatives of the lensing potential and which takes the form:  where γ is the complex shear, causing anisotropic distortions whereas κ only produces an isotropic magnification effect. However, in practice, when using galaxy ellipticities as a measurement of the lensing effect, neither the convergence nor the shear are directly observable. Indeed, the net contribution of weak lensing to the ellipticity of a galaxy is not the shear γ but the reduced shear g defined as

where γ is the complex shear, causing anisotropic distortions whereas κ only produces an isotropic magnification effect. However, in practice, when using galaxy ellipticities as a measurement of the lensing effect, neither the convergence nor the shear are directly observable. Indeed, the net contribution of weak lensing to the ellipticity of a galaxy is not the shear γ but the reduced shear g defined as  . The amplification matrix can equivalently be expressed as a function of the reduced shear by factorising a term (1−κ) which yields:

. The amplification matrix can equivalently be expressed as a function of the reduced shear by factorising a term (1−κ) which yields:  (8)Note that in the weak lensing regime, for κ ≪ 1, the reduced shear can be approximated to the actual shear, γ ≃ g. However, this assumption no longer holds for the purpose of mapping galaxy clusters where it is critical to correctly account for the reduced shear.

(8)Note that in the weak lensing regime, for κ ≪ 1, the reduced shear can be approximated to the actual shear, γ ≃ g. However, this assumption no longer holds for the purpose of mapping galaxy clusters where it is critical to correctly account for the reduced shear.

2.2. Gravitational flexion

The previous expressions for the coordinate transformations hold if the convergence and shear fields are assumed to be constant on the scale of the observed source images. When this assumption is not verified, the expansion of the coordinate transformation can be pushed to second order (Goldberg & Bacon 2005):  (9)where the third order lensing tensor Dijk can be derived from Dijk = ∂kAij. This additional term gives rise to third order derivatives of the lensing potential which can be summarised as a spin-1 field ℱ = ∇κ and a spin-3 field

(9)where the third order lensing tensor Dijk can be derived from Dijk = ∂kAij. This additional term gives rise to third order derivatives of the lensing potential which can be summarised as a spin-1 field ℱ = ∇κ and a spin-3 field  respectively called first and second flexion.

respectively called first and second flexion.

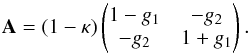

Similarly to the case of the reduced shear, these two flexion components are not directly observable. Instead, only the reduced flexion fields are measured from galaxy images:  (10)Furthermore, estimating in practice the second flexion G is extremely delicate and measurements are generally noise dominated and discarded. As a result, in the rest of this work, we will only include measurements of the reduced first flexion F.

(10)Furthermore, estimating in practice the second flexion G is extremely delicate and measurements are generally noise dominated and discarded. As a result, in the rest of this work, we will only include measurements of the reduced first flexion F.

Throughout this work, we assume reduced shear and flexion measurement provided by the AIM method of Cain et al. (2011). In particular, we will use σF = 0.029 arcsec-1 for the dispersion of flexion measurements, as reported by Cain et al. (2011) on HST data.

2.3. mass mapping using Fourier estimators

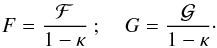

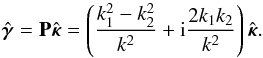

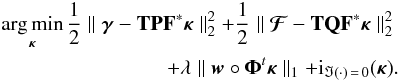

The most well known inversion technique for weak lensing mass mapping is the Kaiser-Squires inversion (Kaiser & Squires 1993) which consists in applying a minimum variance filter in Fourier space to both components of the shear, yielding one estimate of the convergence field with minimum noise variance, given by  (11)where

(11)where  . This estimator is only valid for k2 ≠ 0 and as a result the mean value of the field cannot be recovered, which corresponds to the well-known mass sheet degeneracy. Assuming white Gaussian noise of variance

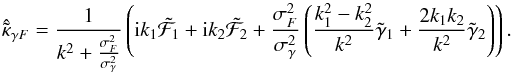

. This estimator is only valid for k2 ≠ 0 and as a result the mean value of the field cannot be recovered, which corresponds to the well-known mass sheet degeneracy. Assuming white Gaussian noise of variance  on the input shear field, the Kaiser-Squires estimator features a simple flat noise power spectrum:

on the input shear field, the Kaiser-Squires estimator features a simple flat noise power spectrum:  (12)Although this inversion technique has some very desirable properties including linearity and minimum variance, it has one major shortcoming, namely that is not well defined on bounded domains, even less so on domains with complex geometries and missing data, which occurs in actual surveys. Some alternatives to the simple Kaiser-Squires have also been developed to mitigate some of it shortcomings (Pires et al. 2009; Deriaz et al. 2012) using iterative schemes.

(12)Although this inversion technique has some very desirable properties including linearity and minimum variance, it has one major shortcoming, namely that is not well defined on bounded domains, even less so on domains with complex geometries and missing data, which occurs in actual surveys. Some alternatives to the simple Kaiser-Squires have also been developed to mitigate some of it shortcomings (Pires et al. 2009; Deriaz et al. 2012) using iterative schemes.

Similarly to the Kaiser-Squires inversion for the shear, Bacon et al. (2006) proposed a minimum variance filter for estimating the convergence from first flexion ℱ. As the flexion is only the gradient of the convergence, these two quantities can be related in the Fourier domain as:  (13)With the same approach as the Kaiser-Squires inversion, the two components of the first flexion can be combined in order to yield a minimum variance estimator of the convergence, which takes the simple expression:

(13)With the same approach as the Kaiser-Squires inversion, the two components of the first flexion can be combined in order to yield a minimum variance estimator of the convergence, which takes the simple expression:  (14)Note that, just as with the Kaiser-Squires inversion, this expression is only valid for k1 ≠ 0 and k2 ≠ 0, which means that the flexion reconstruction is still subject to the mass sheet degeneracy. Contrary to the previous Kaiser-Squires inversion, this estimator does not have a flat noise power spectrum. Indeed, assuming uncorrelated flexion measurements with intrinsic variance

(14)Note that, just as with the Kaiser-Squires inversion, this expression is only valid for k1 ≠ 0 and k2 ≠ 0, which means that the flexion reconstruction is still subject to the mass sheet degeneracy. Contrary to the previous Kaiser-Squires inversion, this estimator does not have a flat noise power spectrum. Indeed, assuming uncorrelated flexion measurements with intrinsic variance  , the variance of this estimator is:

, the variance of this estimator is:  This makes the reconstruction of mass maps from flexion alone using this estimator very problematic on large scales where the noise will always dominate the signal. This means that applying any low-pass smoothing actually reduces the S/N of the reconstruction.

This makes the reconstruction of mass maps from flexion alone using this estimator very problematic on large scales where the noise will always dominate the signal. This means that applying any low-pass smoothing actually reduces the S/N of the reconstruction.

|

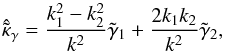

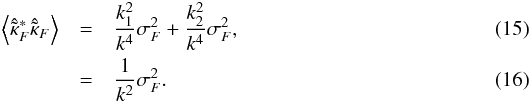

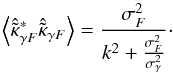

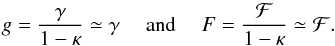

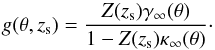

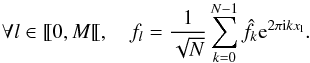

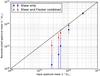

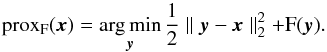

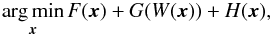

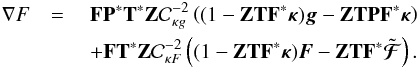

Fig. 1 Noise power spectra of the minimum variance estimators for shear alone, flexion alone and shear and flexion combined. The vertical red line indicates the arcminute scale. These noise power spectra assume σF = 0.029 arcsec-1, σγ = 0.3 and ng = 80 gal/arcmin2 with 14 arcsecs pixels. |

Figure 1 shows the noise power spectra of a shear inversion alone and flexion inversion alone, the arcminute scale is marked by the vertical line. As can be seen, the flexion noise power-spectrum is much more favourable than the shear on small scales. However, it is only worth considering adding flexion information if the scale at which these two power spectra cross is still relevant. This scale only depends on the ratio of shear and flexion intrinsic dispersion and we find it to be around 65 arcsec for σF = 0.029 arcsec-1 and σγ = 0.3. As a result, based solely on considerations about the relative noise power spectra using these Fourier estimators, we argue that flexion can be used to help resolve sub-arcmins structures.

It is clear from Fig. 1 that although flexion can help constrain small features, it is not competitive with respect to the shear on scales larger than 1 arcmin. To simultaneously benefit from both shear and flexion, Bacon et al. (2006) proposed a minimum variance filter combining both measurements. Although they present reconstructions using this combined filter, they do not explicitly provide its expression, which can easily be derived and takes the form:  (17)The noise variance of this estimator is now:

(17)The noise variance of this estimator is now:  (18)As can be seen, on large scales, i.e. for small k we recover asymptotically the flat shear noise power spectrum while on small scales we recover the flexion estimator noise power spectrum. Figure 1 illustrates the noise power spectrum of this combined estimator (blue line) in realistic conditions. We see that the combined estimator starts improving over the shear alone around the arcminute scale.

(18)As can be seen, on large scales, i.e. for small k we recover asymptotically the flat shear noise power spectrum while on small scales we recover the flexion estimator noise power spectrum. Figure 1 illustrates the noise power spectrum of this combined estimator (blue line) in realistic conditions. We see that the combined estimator starts improving over the shear alone around the arcminute scale.

|

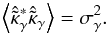

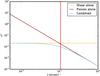

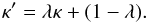

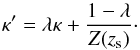

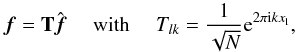

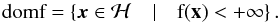

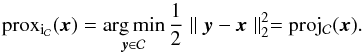

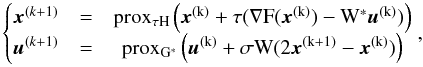

Fig. 2 Top: simulated 10 × 10 arcmin2 field containing a massive cluster a) and its reconstruction using the combined minimum variance estimator b). Bottom: reconstructions using the shear alone Kaiser-Squires estimator c) and the flexion alone minimum variance estimator d).The noise level correspond to σF = 0.04 arcsec-1, σγ = 0.3 and ng = 50 gal/arcmin2 with 6 arcsecs pixels. |

An example of reconstruction using this combined estimator is shown in Fig. 2. The input convergence map is reconstructed from shear alone, flexion alone and combining shear and flexion. As expected, the shear alone reconstruction is very noisy, especially on small scales because of its flat noise power spectrum. On the contrary, the flexion alone reconstruction is noise dominated on scales larger than 1 arcmin. However, by combining both shear and flexion information, the noise is effectively suppressed on small scales and not amplified on large scales which makes the cluster and some of its substructure clearly identifiable without any additional filtering. Note however that this simple example only illustrates the noise properties of these estimators but is unrealistic in the sense that it does not consider the problem of missing data.

These Fourier estimators are only valid in the weak lensing regime and should only be applied when the convergence is weak (when κ ≪ 1) such that the observed reduced shear and flexion can be approximated to the true shear and flexion:  (19)Of course this assumption no longer holds at the close vicinity of galaxy clusters and it is therefore important to properly account for reduced shear and flexion for the purpose of mapping galaxy clusters. In those situations, κ is no longer negligible and the reconstruction problem becomes non-linear. As was noted in Seitz & Schneider (1995), it is still possible to recover the convergence using the previous linear estimators by iteratively solving the inversion problem, using at each iteration the previous estimate of the convergence to correct the measured reduced shear:

(19)Of course this assumption no longer holds at the close vicinity of galaxy clusters and it is therefore important to properly account for reduced shear and flexion for the purpose of mapping galaxy clusters. In those situations, κ is no longer negligible and the reconstruction problem becomes non-linear. As was noted in Seitz & Schneider (1995), it is still possible to recover the convergence using the previous linear estimators by iteratively solving the inversion problem, using at each iteration the previous estimate of the convergence to correct the measured reduced shear: ![\begin{equation} \kappa_{n+1}(\bm{\theta}) -\kappa_0 = \frac{1}{\pi} \int \mathrm{d} \bm{\theta}^\prime (1 - \kappa_n(\bm{\theta}^\prime)) \Re \left[D^*(\bm{\theta} - \bm{\theta}^{\prime}) g(\bm{\theta}^\prime) \right] , \label{eq:reducedShearCorrection} \end{equation}](/articles/aa/full_html/2016/07/aa28278-16/aa28278-16-eq61.png) (20)where D(θ) is the Kaiser-Squires convolution kernel and the constant κ0 accounts for the fact that the constant of the convergence is not constrained by the data. This iterative process is generally found to converge quickly to the solution. Of course, a similar procedure can be implemented to correct the reduced flexion and we will use the same principle in the reconstruction algorithm presented in the next section.

(20)where D(θ) is the Kaiser-Squires convolution kernel and the constant κ0 accounts for the fact that the constant of the convergence is not constrained by the data. This iterative process is generally found to converge quickly to the solution. Of course, a similar procedure can be implemented to correct the reduced flexion and we will use the same principle in the reconstruction algorithm presented in the next section.

2.4. Mass-sheet degeneracy and redshift dependency

One of the most notorious issues in weak lensing mass mapping is the so-called mass-sheet degeneracy (Seitz & Schneider 1996). This degeneracy is due to the fact that the shear is left invariant by the addition of a constant mass-sheet to the lens surface density. As a result, the observed reduced shear is invariant under the following λ-transformation:  (21)Indeed, if g′ is the reduced shear generated by κ′ then:

(21)Indeed, if g′ is the reduced shear generated by κ′ then:  (22)By the same mechanism, the reduced flexion F is also invariant under the same λ-transformation: adding the flexion information will not affect the mass-sheet degeneracy.

(22)By the same mechanism, the reduced flexion F is also invariant under the same λ-transformation: adding the flexion information will not affect the mass-sheet degeneracy.

This issue is particularly problematic for measuring the mass of galaxy clusters from weak-lensing. Indeed, these measurements are typically performed on small fields where it cannot simply be assumed that the convergence goes to zero outside the field without biasing the measurement (Bartelmann 1995).

However, although it is true that from shear alone the mass-sheet degeneracy can not be lifted, it can nonetheless be mitigated when including additional information about the relative distances between the lens and the different sources. Indeed, as was pointed out in Bradac et al. (2004), knowledge of individual photometric redshifts of background galaxies is enough to constrain the mass-sheet for strong enough lenses.

Let us consider γ∞(θ) and κ∞(θ) the shear and convergence of a given lens at redshift zl, for sources at infinite redshift. The actual shear and convergence applied to a galaxy at a specific redshift zs can be expressed as κ(θ,zs) = Z(zs)κ∞(θ) and γ(θ,zs) = Z(zs)γ∞(θ) where Z is a cosmological weight, function of the redshifts of the source and lens, which can be expressed as a ratio of two critical surface densities:  (23)where H is the Heaviside step function, which only accounts for the fact that sources located at lower redshift than the lens are not lensed, and

(23)where H is the Heaviside step function, which only accounts for the fact that sources located at lower redshift than the lens are not lensed, and  is the critical surface density for sources at infinite redshift.

is the critical surface density for sources at infinite redshift.

If we now consider the reduced shear measured on a galaxy at a specific redshift zs as a function of κ∞ and γ∞, it takes the form:  (24)This expression alone is just a rewriting of the measured reduced shear, which makes explicit the dependency on the redshift of the source. Therefore, the convergence is still subject to the mass-sheet degeneracy which can now be made explicit in term of zs:

(24)This expression alone is just a rewriting of the measured reduced shear, which makes explicit the dependency on the redshift of the source. Therefore, the convergence is still subject to the mass-sheet degeneracy which can now be made explicit in term of zs:  (25)It is easily shown that such a transformation leaves the measured reduced shear invariant. However, it is specific to a given source redshift zs and the reduced shear measured on a galaxy at a different redshift

(25)It is easily shown that such a transformation leaves the measured reduced shear invariant. However, it is specific to a given source redshift zs and the reduced shear measured on a galaxy at a different redshift  is not invariant under the same transformation. As a result, simultaneously constraining the convergence to fit the reduced shear at different redshifts formally leads to λ = 1 and breaks the degeneracy. In practice however, this mechanism is only effective at lifting the degeneracy in regions where the convergence is strong enough. Indeed, in the linear regime the transformation κ′ = κ + λ has no dependency on the redshift of the source. Therefore, the mass-sheet degeneracy can only be lifted in the neighbourhood of strong enough lenses, where the weak lensing approximation no longer holds.

is not invariant under the same transformation. As a result, simultaneously constraining the convergence to fit the reduced shear at different redshifts formally leads to λ = 1 and breaks the degeneracy. In practice however, this mechanism is only effective at lifting the degeneracy in regions where the convergence is strong enough. Indeed, in the linear regime the transformation κ′ = κ + λ has no dependency on the redshift of the source. Therefore, the mass-sheet degeneracy can only be lifted in the neighbourhood of strong enough lenses, where the weak lensing approximation no longer holds.

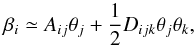

For the rest of this work, we will assume knowledge of the redshift zL of the lens as well as individual photometric redshifts for the sources. For a source with a given photo-z distribution pi(z), we define the following lensing weight Zi relating the convergence of the source and the convergence at infinite redshift:  (26)where Σcritic(z) is the critical density for a lens at redshift zL and a source at redshift zs = z while

(26)where Σcritic(z) is the critical density for a lens at redshift zL and a source at redshift zs = z while  is the critical density for sources at infinite redshift. With this definition, the reduced shear gi and reduced flexion Fi for a given source can be described as a function of the shear, flexion and convergence for sources at infinite redshift γ∞, F∞ and κ∞:

is the critical density for sources at infinite redshift. With this definition, the reduced shear gi and reduced flexion Fi for a given source can be described as a function of the shear, flexion and convergence for sources at infinite redshift γ∞, F∞ and κ∞:  (27)Finally, for convenience in the rest of the paper, we introduce the diagonal matrix Z = diag(Z1,Z2,...,ZN), with N the number of galaxies in the survey.

(27)Finally, for convenience in the rest of the paper, we introduce the diagonal matrix Z = diag(Z1,Z2,...,ZN), with N the number of galaxies in the survey.

3. A new mass mapping methodology

3.1. Dealing with irregularly sampled data

The global inversion methods presented in the previous section all assume knowledge of the shear on a grid. However, the shear is only sampled in practice at randomly distributed galaxy positions. Therefore a first step in all of these methods is to bin the shear catalogue in order to have a sufficient number of galaxies per pixel prior to performing the inversion. Even so, some regions are bound to remain empty because of various masks applied to the data. The simplest and most common procedure to handle missing data consists in applying a large Gaussian smoothing to the binned shear maps with a kernel larger than the typical size of the masks. Obviously, this strategy is far from optimal as it irremediably destroys small scale information.

A more sophisticated approach was proposed in Pires et al. (2009), based on sparse inpainting. This method regularises the inversion in the case of missing data by imposing on the solution a sparsity prior in a discrete cosine transform (DCT) dictionary. In effect, the resulting inpainted map features minimal B-modes leaking and unbiased power spectrum and bispectrum with respect to the original map. This method as been employed to map galaxy clusters in Jullo et al. (2014) where the authors advocate using a very fine binning of the data in order to have on average one galaxy per pixel.

We propose in this section a new method for reconstructing the convergence map, based on Fourier estimators, which does not require any binning or smoothing of the input shear. Although this approach allows us to preserve small-scale information which would otherwise be lost, it does turn the reconstruction problem into an ill-posed inverse problem, which we will address using sparse regularisation

3.1.1. Non-equispaced discrete fourier transform

When using Fourier based methods, the fundamental motivation behind binning or smoothing is to regularise the discrete fourier transform (DFT) of the shear, which is not well defined for data sampled on an irregular grid and which is known in this case as a non-equispaced discrete fourier transform (NDFT). The NDFT is of course no longer an orthogonal transform and is usually not even invertible. We will consider the case where only the spatial nodes  are arbitrary while the frequency nodes k = ˜0,N˜ are N regularly spaced integers. Computing the NDFT from a set of Fourier coefficients

are arbitrary while the frequency nodes k = ˜0,N˜ are N regularly spaced integers. Computing the NDFT from a set of Fourier coefficients  simply amounts to evaluating the trigonometric polynomials:

simply amounts to evaluating the trigonometric polynomials:  (28)This operation can more conveniently be expressed using matrix notations as:

(28)This operation can more conveniently be expressed using matrix notations as:  (29)where T is the NDFT matrix. Note that in the case of equispaced spatial nodes such that

(29)where T is the NDFT matrix. Note that in the case of equispaced spatial nodes such that  , this operation corresponds to the conventional DFT and T reduces to the Fourier matrix F defined as:

, this operation corresponds to the conventional DFT and T reduces to the Fourier matrix F defined as:  (30)This Fourier matrix is unitary and its inverse is simply its Hermitian conjugate: F-1 = F∗. On the contrary, for non-equispaced spatial nodes x, the operator T is typically neither orthogonal nor admits an inverse. Still, one can consider the adjoint NDFT operator T∗:

(30)This Fourier matrix is unitary and its inverse is simply its Hermitian conjugate: F-1 = F∗. On the contrary, for non-equispaced spatial nodes x, the operator T is typically neither orthogonal nor admits an inverse. Still, one can consider the adjoint NDFT operator T∗:  (31)Although this adjoint operation no longer corresponds to the inverse of the transform, it can be used in practice to estimate the inverse when the problem remains over-determined through a least squares optimisation of the form:

(31)Although this adjoint operation no longer corresponds to the inverse of the transform, it can be used in practice to estimate the inverse when the problem remains over-determined through a least squares optimisation of the form:  (32)This problem can be efficiently solved using iterative algorithms (in particular using a conjugate gradient) which involve the computation of both T and T∗.

(32)This problem can be efficiently solved using iterative algorithms (in particular using a conjugate gradient) which involve the computation of both T and T∗.

It is therefore important to have fast algorithms for the computation of the NDFT and its adjoint. Note that a naive evaluation of the sum in Eq. (28) would scale as  which is prohibitively large for most applications (including for our mass mapping problem). In this work, we use a fast approximate algorithm1 to evaluate the NDFT and its adjoint, called NFFT (Keiner et al. 2009), which only scales as

which is prohibitively large for most applications (including for our mass mapping problem). In this work, we use a fast approximate algorithm1 to evaluate the NDFT and its adjoint, called NFFT (Keiner et al. 2009), which only scales as  where ϵ is the desired accuracy. For a given sampling of the frequency space (i.e. for a given N), the NFFT only linearly scales with the number M of spatial nodes xl, which in our case will correspond to the number of galaxies in the survey.

where ϵ is the desired accuracy. For a given sampling of the frequency space (i.e. for a given N), the NFFT only linearly scales with the number M of spatial nodes xl, which in our case will correspond to the number of galaxies in the survey.

Although the DFT can still be estimated for irregularly sampled data by solving Eq. (32) when the problem remains over-determined, the under-determined problem is ill-posed and cannot be directly solved using a least-squares but requires an additional regularisation.

3.1.2. Sparse regularisation of inverse problems

For the specific application of weak lensing mass mapping, the inverse problem of estimating the Fourier transform of the signal for irregular samples can be severely ill-posed when the galaxy density is low. In that case, a simple least-squares such as Eq. (32) is not able to recover a satisfying estimate of  without additional prior. To regularise this inversion, we propose in this paper to use a so-called analysis-based sparsity prior. We introduce here the concepts of sparse regularisation in a general setting before specialising them to the weak lensing mass mapping problem in the next section.

without additional prior. To regularise this inversion, we propose in this paper to use a so-called analysis-based sparsity prior. We introduce here the concepts of sparse regularisation in a general setting before specialising them to the weak lensing mass mapping problem in the next section.

Let us consider a general linear inverse problem of the form:  (33)where A is a linear operator, y are the measurements, contaminated by an additive Gaussian noise n, and x is the unknown signal we wish to recover from the measurements y. Ill-posed inverse problems typically occur when the operator A is not invertible or extremely badly conditioned, in which case the solution may not be unique and is at least extremely unstable to measurement noise. In order to recover a meaningful estimate of the unknown signal some additional prior information is required to help restrict the space of possible solutions. One particularly powerful instance of such a prior is the sparsity prior which assumes that the signal can be represented in an suitable domain using only a small number of nonzero coefficients (Starck et al. 2015).

(33)where A is a linear operator, y are the measurements, contaminated by an additive Gaussian noise n, and x is the unknown signal we wish to recover from the measurements y. Ill-posed inverse problems typically occur when the operator A is not invertible or extremely badly conditioned, in which case the solution may not be unique and is at least extremely unstable to measurement noise. In order to recover a meaningful estimate of the unknown signal some additional prior information is required to help restrict the space of possible solutions. One particularly powerful instance of such a prior is the sparsity prior which assumes that the signal can be represented in an suitable domain using only a small number of nonzero coefficients (Starck et al. 2015).

More formally, let us consider a signal x, it can be analysed using a dictionary Φ to yield a set of analysis coefficients α, such that α = Φ∗x. For instance, in the case of the Fourier dictionary, Φ would correspond to the DFT matrix F and α would be the Fourier coefficients of the signal x. A signal is considered sparse in this analysis framework if only a small number of its coefficients α are non-zero.

Under a sparsity prior, the solution of the inverse problem stated in Eq. (33) can be recovered as the sparsest possible signal  still compatible with the observations. This can be formalised as a convex optimisation problem of the form:

still compatible with the observations. This can be formalised as a convex optimisation problem of the form:  (34)The first term in this expression in this minimisation problem is a quadratic data fidelity term while the second term promotes the sparsity of the solution by penalising the ℓ1 norm of the analysis coefficients of x.

(34)The first term in this expression in this minimisation problem is a quadratic data fidelity term while the second term promotes the sparsity of the solution by penalising the ℓ1 norm of the analysis coefficients of x.

Thanks to very recent advances in the field of convex optimisation and proximal theory, we now have at our disposal very efficient algorithms for solving this optimisation problem, which had remained for a long time largely intractable. In the application presented in this paper, we chose to implement an adaptation of the primal-dual algorithms introduced in Condat (2013), Vu (2013). We direct the interested reader to Sect. A for a few elements of proximal calculus and details concerning this specific algorithm. A more in depth introduction to proximal theory can be found in Starck et al. (2015).

3.2. Sparse recovery algorithm for weak lensing mass mapping

We now consider the specific case of weak lensing mass mapping from irregularly sampled data and we propose a sparse recovery algorithm to reconstruct the convergence map from noisy shear data.

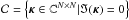

Consider a lensing survey with Ng galaxies. We can write the expression of the shear γ = (γi)i ∈ ˜0,Ng˜ at the position of each galaxy given a convergence map κ as  (35)In this expression, F is the Fourier matrix and T is the NDFT matrix defined for arbitrary spatial nodes x placed at the position of each galaxy in the survey. The diagonal operator P implements the transformation from convergence to shear in Fourier space:

(35)In this expression, F is the Fourier matrix and T is the NDFT matrix defined for arbitrary spatial nodes x placed at the position of each galaxy in the survey. The diagonal operator P implements the transformation from convergence to shear in Fourier space:  (36)Of course, this expression is not defined for k1 = k2 = 0, which corresponds to the well known mass-sheet degeneracy and by convention we will set the mean to 0. We are using complex notations for both shear and convergence, with in particular κ = κE + iκB where κE and κB are respectively E- and B-modes maps.

(36)Of course, this expression is not defined for k1 = k2 = 0, which corresponds to the well known mass-sheet degeneracy and by convention we will set the mean to 0. We are using complex notations for both shear and convergence, with in particular κ = κE + iκB where κE and κB are respectively E- and B-modes maps.

This expression is only formally correct if the Fourier transform is evaluated on the R2 plane. In practice however, we consider finite fields and rely on a DFT to compute the Fourier transform which imposes artificial periodic conditions. The result of Eq. (36) is therefore a circular convolution of the convergence field and the Kaiser-Squires kernel. In practice, when evaluating this expression, we apply sufficient zero-padding to the convergence field κ in order to minimise the impact of these periodic boundary conditions. As the Kaiser-Squires kernel decays to the inverse angular distance squared, the quality of the approximation due to the circular convolution quickly improves with the amount of zero-padding.

An important point to stress is that the operator P is unitary, with P∗P = Id, just like the Fourier matrix F, and as such is readily invertible. Solving Eq. (35) therefore reduces to the inversion of the NDFT operator, which is the only difficult step. As mentioned in the previous section, if the spatial nodes are not regularly spaced, estimating the inverse NDFT is in the general case an ill-posed inverse problem.

Our aim is to apply the sparse regularisation framework introduced in Sect. 3.1.2 to the inversion of the NDFT operator, thus yielding an estimate of the convergence. We consider the following sparse optimisation problem: ![\begin{equation} \vspace*{2.2mm}\bar{\bm{\kappa}} = \argmin_{\bm{\kappa}} \frac{1}{2} \parallel \Sigma^{-\frac{1}{2}}_{\gamma} \left[\bm{\gamma} - \mathbf{T} \mathbf{P} \mathbf{F}^* \bm{\kappa} \right] \parallel_2^2 + \lambda \parallel \bm{w} \circ \bm{\Phi}^* \bm{\kappa} \parallel_1 + {\rm i}_{\Im(\cdot) = 0}(\bm{\kappa}) . \label{eq:conv_sparse_rec_lin} \vspace*{2.2mm} \end{equation}](/articles/aa/full_html/2016/07/aa28278-16/aa28278-16-eq143.png) (37)The first term is a quadratic data fidelity term, where Σγ is the covariance matrix of the individual shear measurements, generally assumed to be diagonal as we are treating galaxies independently. The second term is an analysis-based sparsity constraint where Φ is a dictionary providing a sparse representation of the signal we want to recover, ° is the Hadamard product (term by term product), and w is a vector of weights allowing us to adaptively adjust the ℓ1 ball based on the local level of noise (see Sect. 3.4). Finally, the last term imposes the imaginary part of the solution (i.e. B-modes) to vanish. Remember that the indicator function

(37)The first term is a quadratic data fidelity term, where Σγ is the covariance matrix of the individual shear measurements, generally assumed to be diagonal as we are treating galaxies independently. The second term is an analysis-based sparsity constraint where Φ is a dictionary providing a sparse representation of the signal we want to recover, ° is the Hadamard product (term by term product), and w is a vector of weights allowing us to adaptively adjust the ℓ1 ball based on the local level of noise (see Sect. 3.4). Finally, the last term imposes the imaginary part of the solution (i.e. B-modes) to vanish. Remember that the indicator function  of a set

of a set  is defined by

is defined by ![\begin{equation} i_{\mathcal{C}}(x) = \begin{cases}\vspace*{2.2mm} 0 & \mbox{if } x \in \mathcal{C} \\[2.2mm] + \infty & \mbox{otherwise} . \vspace*{2.2mm} \end{cases} \end{equation}](/articles/aa/full_html/2016/07/aa28278-16/aa28278-16-eq150.png) (38)Here,

(38)Here,  where ℑ is the imaginary part, and N × N is the size of the reconstruction grid. As a result any solution with a non-zero imaginary part is excluded. This additional constraint is crucial as the irregular galaxy sampling leads to important leakage between E- and B-modes. As E-modes are constrained by the sparsity prior, most of the signal would tend to leak towards B-modes without this additional term. Vanishing B-modes is of course a completely physically motivated prior as gravitational lensing only produces E-modes, but this also means that this method will be sensitive to spurious B-modes resulting from uncorrected systematics which will contaminate the recovered signal.

where ℑ is the imaginary part, and N × N is the size of the reconstruction grid. As a result any solution with a non-zero imaginary part is excluded. This additional constraint is crucial as the irregular galaxy sampling leads to important leakage between E- and B-modes. As E-modes are constrained by the sparsity prior, most of the signal would tend to leak towards B-modes without this additional term. Vanishing B-modes is of course a completely physically motivated prior as gravitational lensing only produces E-modes, but this also means that this method will be sensitive to spurious B-modes resulting from uncorrected systematics which will contaminate the recovered signal.

We efficiently solve this problem by adopting a primal-dual algorithm following Condat (2013), Vu (2013). The specialisation of this algorithm to the weak lensing mass mapping problem is presented in Sect. A and summarised in algorithm 1.

The solution of this optimisation problem tends to be biased as a well-known side effect of the ℓ1 sparsity constraint. In order to correct for this bias and improve the quality of the solution, we implement a two-steps approach.

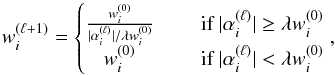

We first apply the reweighted-ℓ1 strategy from Candès et al. (2008) which relies on iteratively solving Eq. (37), adjusting each time the weights w based on the previous estimate of the solution. This procedure is described below:

-

1.

Set the iteration countℓ = 0 and initialise the weights w(0) according to the procedure described in Sect. 3.4.

-

2.

Solve the weighted ℓ1 minimisation problem of Eq. (37) using Algorithm 1, yielding a solution κ(ℓ).

-

3.

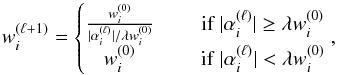

Update the weights based on the wavelet transform of the solution α(ℓ) = Φtκ(ℓ):

(39)

(39) -

4.

Terminate on convergence. Otherwise, increment ℓ and go to step 2.

In practice we find that 3 or 5 re-weightings are generally sufficient to reach a satisfying solution. However, despite improving the quality of the solution, this reweighting scheme does not completely correct for the ℓ1 amplitude bias.

The second correction step involves using this reweighted-ℓ1 solution to define the support Ω of the solution in the analysis dictionary, which can then be used in place of the ℓ1 constraint to recover an unbiased solution. This support is defined as: ![\begin{equation} \Omega_i = \begin{cases} 1, & \textrm{if } [\mathbf{\Phi}^*\kappa^{(l)}]_i > \lambda w_i^{(0)} \\ 0, & \textrm{otherwise}. \end{cases} \end{equation}](/articles/aa/full_html/2016/07/aa28278-16/aa28278-16-eq173.png) (40)Using the support to constrain the solution, the recovery problem becomes:

(40)Using the support to constrain the solution, the recovery problem becomes: ![\begin{equation} \bar{\bm{\kappa}} = \argmin_{\bm{\kappa}} \frac{1}{2} \parallel \Sigma^{-\frac{1}{2}}_{\gamma} \left[\bm{\gamma} - \mathbf{T} \mathbf{P} \mathbf{F}^* \bm{\kappa} \right] \parallel_2^2 + i_{\Omega}\left( \bm{\Phi}^* \bm{\kappa} \right) + i_{\Im(\cdot) = 0}(\bm{\kappa}) . \end{equation}](/articles/aa/full_html/2016/07/aa28278-16/aa28278-16-eq174.png) (41)where iΩ is the indicator function of the support Ω. This problem preserves the sparsity of the solution of the reweighted-ℓ1 iterative scheme but no longer penalises the amplitude of the analysis coefficients, which leads to an unbiased solution. This problem can still be solved using Algorithm 1 simply by replacing the Soft-Thresholding operation on line 6 by a multiplication with the support Ω.

(41)where iΩ is the indicator function of the support Ω. This problem preserves the sparsity of the solution of the reweighted-ℓ1 iterative scheme but no longer penalises the amplitude of the analysis coefficients, which leads to an unbiased solution. This problem can still be solved using Algorithm 1 simply by replacing the Soft-Thresholding operation on line 6 by a multiplication with the support Ω.

3.3. Choice of dictionary

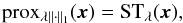

|

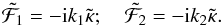

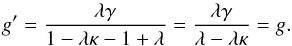

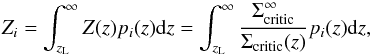

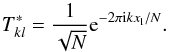

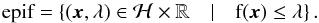

Fig. 3 Comparison of reconstruction using starlets or a combination of starlets and Battle-Lemarié wavelets as the dictionary Φ. The Battle-Lemarié atoms are very effective at penalising isolated pixels. |

For any sparse regularisation method, an appropriate choice of dictionary is important to the quality of the result. This is especially true for noise dominated problems where the prior takes prevalence when the data is not constraining. Previous sparsity based methods developed for weak lensing mass mapping either employed starlets for denoising (Starck et al. 2006) or DCT for inpainting (Pires et al. 2009). Indeed, at small scale, the non-Gaussian convergence signal essentially generated by isolated galaxy clusters is well represented using the starlet dictionary which features isotropic atoms, adapted to the average circular profile of dark matter halos. On the other hand, on large scales, the DCT is more efficient at capturing the Gaussian part of the convergence signal and has proven to be an excellent dictionary to inpaint missing data due to masks without altering the power spectrum of the reconstructed maps.

In this work, we aim at reconstructing the convergence map on small scales and we therefore adopt the starlet dictionary for the reasons stated above. However, we find that when using the starlet alone, details at the finest scale are not sufficiently constrained, in particular spurious isolated pixels tend to contaminate the solution, as shown on Fig. 3a. To help penalise this unwanted behaviour, we build a hybrid dictionary by concatenating to the starlet dictionary the first scale of an undecimated bi-orthogonal wavelet transform, more specifically a Battle-Lemarié wavelet of order 5. Contrary to starlet atoms, Battle-Lemarié wavelets are much more oscillatory and have a larger support (formally infinite but with an exponential decay). This makes them relatively inefficient at sparsely representing singularities such as isolated pixels, which are therefore more strongly penalised by the sparsity prior.

To illustrate the benefits of using this hybrid dictionary, we compare in Fig. 3 the results of our reconstruction algorithm in a simple noiseless case when using starlets alone or the hybrid dictionary described above, all other parameters being kept fixed. As this simple qualitative comparison demonstrates, starlets alone tend to create small pixel-sized artefacts, even in the absence of noise. These are completely eliminated by the inclusion of the Battle-Lemarié wavelets.

We add that although we find this dictionary to be effective at regularising the mass mapping inversion, it remains generic and was not specifically designed for an optimal representation of convergence maps. More specific dictionaries could be used just as well and would potentially improve further our results. It has for instance been shown that application specific dictionaries built using Dictionary Learning (DL) perform better than wavelets for the recovery of astronomical images Beckouche 2013.

3.4. Adjusting the sparsity constraint

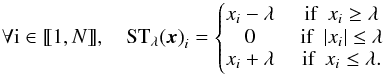

|

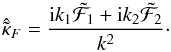

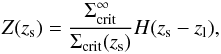

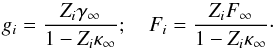

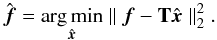

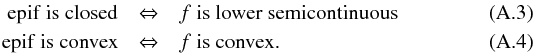

Fig. 4 Standard deviation maps w used to scale the sparsity constraint, obtained by propagating the shear noise to the wavelet coefficients. We show here noise maps for four successive starlet scales. |

A recurring issue with sparse recovery problems such as the one stated in Eq. (37) is the choice of the regularisation parameter λ. There is unfortunately no general rule indicating how to set this parameter in practice. For this application, we adopt the approach that was proposed in Paykari et al. (2014), which consists in defining this parameter with respect to the noise level.

Formally, the parameter λ scales the ℓ1 ball used in the sparsity constraint. In practice, it defines the level of Soft Thresholding applied to the dual variable α (see line 6 in Algorithm 1) and therefore discriminates between significant and non-significant coefficients. While this threshold can be set according to a given sparsity model of the signal to recover, for noise dominated problems, it is much more crucial to define this threshold with respect to the noise level. As the noise statistics vary across the field, depending on the specific galaxy distribution, we introduce a vector of weights w (found in the ℓ1 term in Eq. (37)) with the purpose of locally scaling the sparsity constraint based on the standard deviation of the noise propagated to the coefficients α. For each wavelet coefficient αi we set the weight wi to the estimated standard deviation σ(αi). As a result, the level of Soft Thresholding applied to each coefficient αi is  and accounts for noise variations across the field. As the threshold is proportional to the standard deviation of the noise, it can be interpreted as an hypothesis test to determine if a coefficient is due to signal or noise, assuming Gaussian statistics for the noise, which adds a powerful detection aspect to the sparsity constraint.

and accounts for noise variations across the field. As the threshold is proportional to the standard deviation of the noise, it can be interpreted as an hypothesis test to determine if a coefficient is due to signal or noise, assuming Gaussian statistics for the noise, which adds a powerful detection aspect to the sparsity constraint.

To estimate this noise level, and therefore set the weights w, it is first necessary to understand how the noise in the data propagates to the dual variable α. By considering Algorithm 1, it can be seen that the noise at the level of the shear γN is propagated to the wavelet coefficients through the operation Φ∗ℜ(FP∗T∗γN). In practice, we estimate the standard deviation of wavelet coefficients by generating Monte-Carlo noise simulations, obtained by keeping the galaxies at their observed position while randomising their orientation. Note that this step needs only to be performed once, outside of the main iteration of Algorithm 1. An example of the resulting standard deviation maps for different wavelet scales is shown on Fig. 4. As can be seen, on the finest scales, the noise level has important fluctuations across the field and the contribution of individual galaxies can be seen. On larger scales, these local fluctuations are smoothed out and the noise level becomes much more homogeneous. Using this strategy therefore allows us to tune locally the sparsity constraint to take into account the specific galaxy distribution of the survey and leaves only one free parameter λ.

3.5. Numerical experiment on noiseless data

The algorithm presented in this section is only meant to address the irregular sampling of the shear and the presence of noise, the complete problem being solved in the next section. In this simplified setting, we present a small numerical experiment to verify the algorithm’s effectiveness at solving the linear inverse problem.

|

Fig. 5 Test convergence map generated from an N-body simulation. The clusters are located at zl = 0.3 while the source plane is placed at zs = 1.2. |

We simulated a 10 × 10 arcmin2 field, containing a group of galaxy clusters extracted from the Bolshoi N-body simulations (Klypin et al. 2011, see Sect. 5.1, for more details), as shown on Fig. 5. These clusters are placed at redshift zl = 0.3 and we simulates a lensing catalogue with randomly distributed sources on a single lens plane at redshift zs = 1.2. Note that we only simulated shear measurements from the input convergence map and not the reduced shear.

We consider here the noiseless inpainting problem where the input shear is exactly known at the position of the sources. Of course this problem is unrealistic but does illustrate the impact of missing data on the mass mapping inversion using Fourier estimators.

|

Fig. 6 Reconstruction of the input convergence map using 30 galaxies per square arcminute using a Kaiser-Squires estimator b−c) and sparse recovery d). The top left panel shows the mask applied to the binned shear map with empty pixels marked in white. In this test, 93% of the pixels are masked. |

We generated a shear catalogue with a density of 30 galaxies per square arcminute which corresponds to a typical Euclid density. To exacerbate the effects of missing data, we reconstructed the input convergence map on a grid with 3 arcsec pixels. Binning the input catalogue at this resolution corresponds to an average of 0.075 galaxies per pixels, which means mostly empty pixels as illustrated by the mask in Fig. 6a where empty pixels are shown in white. A direct Kaiser-Squires inversion with such a mask is shown on Fig. 6b which corresponds to the solution of the inverse problem in the absence of regularisation. In order to recover the main structures we also show the result of a Gaussian smoothing with a kernel of 0.25 arcmin on Fig. 6c. Note that on this last figure the colour scale is adjusted for better visualisation but the amplitude of the reconstruction is an order of magnitude below the input signal smoothed with the same kernel. As illustrated by these plots, small scale information is lost with this approach and although using larger bins would regularise the inversion these details would still be lost.

We then applied Algorithm 1 to the same catalogue. As the weighting scheme presented in Sect. 3.4 is not meant to be used on noiseless data, we use a noisy version of the shear data to estimate the weights w and we set the regularisation parameter λ to a very low value (λ = 0.01) to apply the algorithm on noiseless data. The solution of the optimisation problem is shown on Fig. 6. The sparse recovery algorithm constitutes a major improvement over a simple Kaiser-Squires inversion and is able to recover pixel scale details despite 93% of the field being masked. Several reasons can explain these surprising results. First and foremost, the shear information is non local and even small structures formally impact the shear across all the field, albeit with an amplitude decaying to the square of the angular distance. The second reason is the use of isotropic wavelets which provide a sparse representation of the true convergence map while being morphologically distinct from the response of individual galaxies, which is quadrupolar. As a result, the sparsity constraint greatly favours the true convergence map, which is smooth with small isotropic features, over anisotropic artefacts resulting from the irregular shear sampling.

The point of this numerical experiment is to show that although the shear field is randomly sampled at a relatively low rate, high frequency information can still be recovered using our method. Of course, in practice small-scale details are generally lost in the considerable amount of noise coming from intrinsic galaxy ellipticities, but not necessarily at the close vicinity of the center of galaxy clusters where the shear signal can become significant even on small scales. The ability to reconstruct small-scale details is even more relevant when including flexion information as the noise power spectrum of the flexion estimator drops at small scales.

4. Sparse recovery for the complete problem: the Glimpse2D algorithm

In the previous section, we have introduced an algorithm based on sparse regularisation for solving the simplified linear inversion problem. Although not realistic, this problem is still an important step towards the complete surface density reconstruction that we address now in this section. We detail how the algorithm of the previous section can be modified to take into account reduced shear, redshift information for individual galaxies, and flexion information.

4.1. Handling the reduced shear

The problem addressed in the previous section assumed knowledge of the shear, in which case the inverse problem remains linear. While this assumption can be made in the weak regime, it breaks down at the vicinity of the structures (galaxy clusters) we are interested in mapping. Although the method presented in the previous section no longer directly applies, we present in this section how it can be extended to take into account the reduced shear  , which makes the inversion problem non-linear.

, which makes the inversion problem non-linear.

Throughout this paper, we will restrict ourselves to the case | g | ≤ 1 for simplicity, assuming that the sources which do not verify this condition can be identified and excluded from the sample. The method presented here could be extended using an iterative procedure to identify and thus treat accordingly sources lying in the region | g | > 1.

Replacing the shear by the reduced shear in the inversion problem stated in Eq. (37) yields: ![\begin{equation} \argmin_{\bm{\kappa}} \frac{1}{2} \parallel \Sigma^{-\frac{1}{2}} \left[\bm{g} - \frac{\mathbf{T} \mathbf{P} \mathbf{F}^* \bm{\kappa}}{1 - \mathbf{T} \mathbf{F}^*\bm{\kappa}} \right] \parallel_2^2 + \lambda \parallel \bm{w} \circ \bm{\Phi}^t \bm{\kappa} \parallel_1 +{\rm i}_{\Im(\cdot) \,=\, 0}(\bm{\kappa}) . \label{eq:algo_rec_non_lin} \end{equation}](/articles/aa/full_html/2016/07/aa28278-16/aa28278-16-eq188.png) (42)Note that at the denominator the NDFT operator is only used to evaluate the convergence at the position of each galaxy. In this form, the full problem cannot be directly addressed using the algorithm presented in the previous section as the operator to invert is no longer linear. Nonetheless, following a common strategy to handle this non-linearity, the term

(42)Note that at the denominator the NDFT operator is only used to evaluate the convergence at the position of each galaxy. In this form, the full problem cannot be directly addressed using the algorithm presented in the previous section as the operator to invert is no longer linear. Nonetheless, following a common strategy to handle this non-linearity, the term  can be factored out and be interpreted as a diagonal covariance matrix, which depends on the signal κ:

can be factored out and be interpreted as a diagonal covariance matrix, which depends on the signal κ: ![\begin{eqnarray} \argmin_{\bm{\kappa}} \frac{1}{2} \parallel \mathcal{C}_\kappa^{-1} \left[(1 - \mathbf{T} \mathbf{F}^* \bm{\kappa}) \bm{g} - \mathbf{T} \mathbf{P} \mathbf{F}^* \bm{\kappa} \right] \parallel_2^2 \nonumber\\ + \lambda \parallel \bm{w} \circ \bm{\Phi}^t \bm{\kappa} \parallel_1 + {\rm i}_{\Im(\cdot) \,=\, 0}(\bm{\kappa}) . \label{eq:algo_rec_non_lin_linearised} \end{eqnarray}](/articles/aa/full_html/2016/07/aa28278-16/aa28278-16-eq190.png) (43)If the factor

(43)If the factor  is kept fixed, the problem is now linear and can be solved once again using the same class of algorithms as in the previous section. By iteratively solving this linearised problem, updating each time the matrix

is kept fixed, the problem is now linear and can be solved once again using the same class of algorithms as in the previous section. By iteratively solving this linearised problem, updating each time the matrix  with the current estimate of κ we can recover the solution of the original problem stated in Eq. (42). This is for instance the strategy adopted in Merten et al. (2009).

with the current estimate of κ we can recover the solution of the original problem stated in Eq. (42). This is for instance the strategy adopted in Merten et al. (2009).

4.2. Including redshift information

So far we have not given any consideration to the fact that lensing sources are not located on a single plane but are distributed in redshift. As was described in Sect. 2.4, for a lens at a given redshift, the amplitude of the lensing effect experienced by each source will depend on its own redshift. Let us note Z the diagonal matrix of weights Zi introduced in Sect. 2.4, the reduced shear can be computed from the convergence κ using the Fourier operators introduced thus far as:  (44)where κ is understood to be the convergence at infinite redshift. With this new operator, the full inversion problem becomes:

(44)where κ is understood to be the convergence at infinite redshift. With this new operator, the full inversion problem becomes: ![\begin{eqnarray} \argmin_{\bm{\kappa}} \frac{1}{2} \parallel \mathcal{C}_\kappa^{-1} \left[(1 - \bm{Z} \mathbf{T} \mathbf{F}^* \bm{\kappa}) \bm{g} - \bm{Z}\mathbf{T} \mathbf{P} \mathbf{F}^* \bm{\kappa} \right] \parallel_2^2 \nonumber\\ + \lambda \parallel \bm{w} \circ \bm{\Phi}^t \bm{\kappa} \parallel_1 +{\rm i}_{\Im(\cdot) \,=\, 0}(\bm{\kappa}) . \label{eq:algo_rec_non_lin_linearised_z} \end{eqnarray}](/articles/aa/full_html/2016/07/aa28278-16/aa28278-16-eq194.png) (45)where the matrix

(45)where the matrix  now becomes

now becomes  . This is simply a generalisation of Eq. (43), which can be recovered when no redshift information is available by setting Z = Id, and can be solved exactly in the same way.

. This is simply a generalisation of Eq. (43), which can be recovered when no redshift information is available by setting Z = Id, and can be solved exactly in the same way.

The main advantage of using redshift estimates for individual galaxies is the proper scaling of the resulting convergence map, which can be translated into a physical surface mass density map Σ of the lens plane:  (46)The mass of the lens can then be estimated by integrating Σ within a given radius. The second advantage of using individual redshifts is that it can help mitigate the mass-sheet degeneracy, as was described in Bradac et al. (2004) and presented in Sect. 2.4. We stress however that this degeneracy cannot be completely lifted using the method presented here as, of the two terms in the gradient of the χ2 in Eq. (A.14), we only retain the one that is insensitive to the mean. Therefore, the mean value of the field remains unconstrained by the data using our algorithm. Nevertheless, we expect the additional redshift information to locally break the degeneracy and help recover the correct amplitude for the most significant structures.

(46)The mass of the lens can then be estimated by integrating Σ within a given radius. The second advantage of using individual redshifts is that it can help mitigate the mass-sheet degeneracy, as was described in Bradac et al. (2004) and presented in Sect. 2.4. We stress however that this degeneracy cannot be completely lifted using the method presented here as, of the two terms in the gradient of the χ2 in Eq. (A.14), we only retain the one that is insensitive to the mean. Therefore, the mean value of the field remains unconstrained by the data using our algorithm. Nevertheless, we expect the additional redshift information to locally break the degeneracy and help recover the correct amplitude for the most significant structures.

4.3. Improving angular resolution with flexion

We demonstrated in the previous section that our sparse recovery algorithm is capable of reconstructing small scale details in the absence of noise despite the irregular galaxy sampling. In practice however, the shear is noise dominated on those scales, which makes recovering high frequency information from shear measurements unlikely.

As was explained in Sect. 2.2, while the Kaiser-Squires estimator for shear has a flat noise power spectrum, the noise power spectrum of the minimum variance estimator for flexion has a 1 /k2 dependency. As a result, although flexion measurements are generally very noisy, the noise level of the estimated map eventually drops below that of the shear on sufficiently small scales. Flexion can therefore bring useful information but only below a given scale and is very complementary to shear.

Our aim is therefore to improve the mass-map reconstruction on small scales by extending our reconstruction method to incorporate flexion information. In the interest of simplicity, we consider here only the linear problem without redshift information, to highlight the difficulties inherent to the inclusion of flexion. The full problem is solved in the next section.

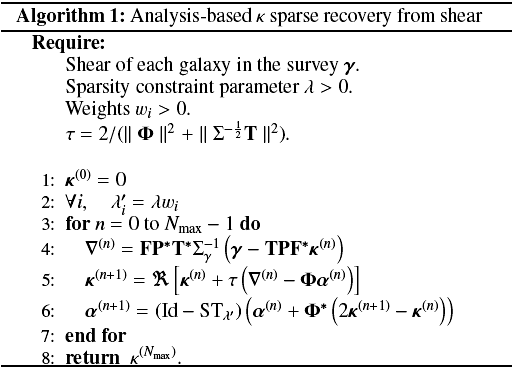

Following the approach developed in the first section, we address the problem using Fourier estimators. We first introduce the diagonal operator Q implementing the transform from convergence κ to first flexion ℱ in Fourier space, defined as:  (47)where we use complex notations for the flexion with ℱ = ℱ1 + iℱ2. Contrary to the shear operator P, this operator Q is not unitary but is still invertible:

(47)where we use complex notations for the flexion with ℱ = ℱ1 + iℱ2. Contrary to the shear operator P, this operator Q is not unitary but is still invertible:  (48)To extend the algorithm presented in the previous section, a first straightforward approach would be to simply add a flexion term to the χ2 of Eq. (37) and solve the following problem:

(48)To extend the algorithm presented in the previous section, a first straightforward approach would be to simply add a flexion term to the χ2 of Eq. (37) and solve the following problem:  (49)Although formally correct, solving this problem using the primal-dual algorithm presented in the previous section leads to a number of technical issues linked to the fact that the operator Q, being not unitary, now contributes to the difficulty of the inverse problem. In particular, this impacts our ability to robustly identify significant coefficients in the gradient of the data fidelity term (key to the regularisation strategy presented in the previous section), which is now affected by a mixture of a flat shear noise and a flexion noise with a power spectrum in k2. Furthermore, even without considering the regularisation, the inversion of the operator Q, which is essentially a 2D gradient, requires a large number of iterations if solved with a standard gradient descent. These considerations make the algorithm much slower and far less robust to noise than when solving the problem from shear alone.

(49)Although formally correct, solving this problem using the primal-dual algorithm presented in the previous section leads to a number of technical issues linked to the fact that the operator Q, being not unitary, now contributes to the difficulty of the inverse problem. In particular, this impacts our ability to robustly identify significant coefficients in the gradient of the data fidelity term (key to the regularisation strategy presented in the previous section), which is now affected by a mixture of a flat shear noise and a flexion noise with a power spectrum in k2. Furthermore, even without considering the regularisation, the inversion of the operator Q, which is essentially a 2D gradient, requires a large number of iterations if solved with a standard gradient descent. These considerations make the algorithm much slower and far less robust to noise than when solving the problem from shear alone.

This needs not be the case however as the inverse of this operator is explicit in Fourier space and these difficulties can be avoided if we make proper use of this explicit inverse. We propose therefore to address the combined shear and flexion reconstruction as the following sparse optimisation problem: ![\begin{eqnarray} \argmin_{\bm{\kappa}, \tilde{\bm{\mathcal{F}}}} \frac{1}{2} \parallel \bm{\gamma} - \mathbf{T} \mathbf{P} \mathbf{F}^* \bm{\kappa} \parallel_2^2 + \frac{1}{2} \parallel \bm{\mathcal{F}} - \mathbf{T} \mathbf{F}^* \tilde{\bm{\mathcal{F}}} \parallel_2^2 \nonumber\\ + \lambda \parallel \bm{w} \circ \mathbf{\Phi}^t \bm{\kappa} \parallel_1 + {\rm i}_{\mathrm{Im}(\mathbf{R})}\left( \left[\begin{matrix} \bm{\kappa} \\ \tilde{\bm{\mathcal{F}}} \end{matrix} \right] \right) , \label{eq:conv_sparse_rec_lin_flex} \end{eqnarray}](/articles/aa/full_html/2016/07/aa28278-16/aa28278-16-eq206.png) (50)where we introduce the application

(50)where we introduce the application ![\hbox{$\mathbf{R}: \mathbb{R}^{N \,\times\, N} \rightarrow \mathbb{C}^{2 N\, \times\, N}, \ \bm{\kappa} \mapsto \left[\begin{matrix} \bm{\kappa} \\ \mathbf{F} \mathbf{Q} \mathbf{F}^* \bm{\kappa} \end{matrix} \right]$}](/articles/aa/full_html/2016/07/aa28278-16/aa28278-16-eq207.png) , with N × N the size of the reconstruction grid.

, with N × N the size of the reconstruction grid.

Thanks to the inclusion of the auxiliary variable  we have now decoupled the problem of the inversion of the NDFT operator T from the conversion between flexion and convergence which is now addressed implicitly in the last term. Remember that the indicator function

we have now decoupled the problem of the inversion of the NDFT operator T from the conversion between flexion and convergence which is now addressed implicitly in the last term. Remember that the indicator function  is infinite outside of the set

is infinite outside of the set  and therefore exclude any solution which do not belong to

and therefore exclude any solution which do not belong to  . In our case, we require the solution to be in the image of the operator R:

. In our case, we require the solution to be in the image of the operator R:![\begin{equation} \mathrm{Im}(\mathbf{R}) = \left\lbrace \left[\begin{matrix} \bm{\kappa} \\ \tilde{\bm{\mathcal{F}}} \end{matrix} \right] \in \mathbb{C}^{2 N \,\times\, N} | \exists \bm{\kappa} \in \mathbb{R}^{N \, \times\, N}, \quad \tilde{\bm{\mathcal{F}}} = \mathbf{F} \mathbf{Q} \mathbf{F}^* \bm{\kappa} \right\rbrace. \label{eq:image_of_R} \end{equation}](/articles/aa/full_html/2016/07/aa28278-16/aa28278-16-eq210.png) (51)The constraint iIm(R) therefore implies two conditions. First, the recovered convergence has to be real, which is equivalent to enforcing the vanishing B-modes condition already used for the shear alone inversion problem. The second is that the recovered flexion

(51)The constraint iIm(R) therefore implies two conditions. First, the recovered convergence has to be real, which is equivalent to enforcing the vanishing B-modes condition already used for the shear alone inversion problem. The second is that the recovered flexion  needs to match the flexion derived from the recovered convergence, which makes the connection between the two variables κ and

needs to match the flexion derived from the recovered convergence, which makes the connection between the two variables κ and  . Interestingly, the implementation of this constraint in the primal-dual algorithm naturally gives rise to the minimum variance estimator for shear and flexion introduced in Eq. (17) and therefore will ensure that shear and flexion are optimally combined at each iteration of the algorithm to maximise the S/N of features present in the signal on all scales.

. Interestingly, the implementation of this constraint in the primal-dual algorithm naturally gives rise to the minimum variance estimator for shear and flexion introduced in Eq. (17) and therefore will ensure that shear and flexion are optimally combined at each iteration of the algorithm to maximise the S/N of features present in the signal on all scales.

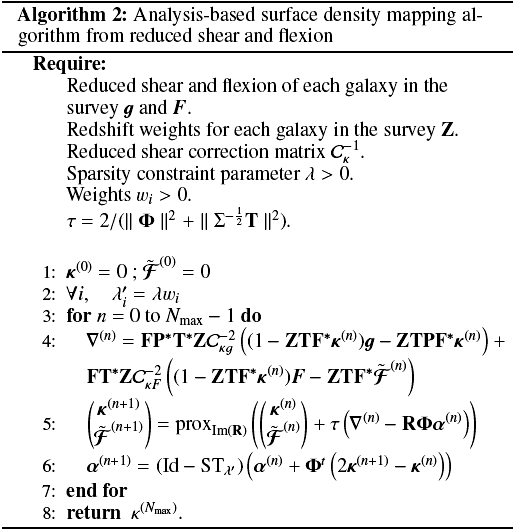

4.4. Complete surface density mapping algorithm

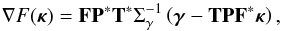

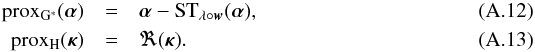

We now present the complete reconstruction algorithm, called Glimpse2D, combining reduced shear g and reduced flexion F, and taking into account individual redshift estimates for the sources. The complete problem we aim to solve is the following: ![\begin{eqnarray} \argmin_{\bm{\kappa}, \tilde{\bm{\mathcal{F}}}} &&\! \!\!\!\!\frac{1}{2} \parallel \mathcal{C}_{\kappa g}^{-1} \left[(1 - \bm{Z} \mathbf{T} \mathbf{F}^* \bm{\kappa}) \bm{g} - \bm{Z}\mathbf{T} \mathbf{P} \mathbf{F}^* \bm{\kappa} \right] \parallel_2^2 \nonumber \\ && + \frac{1}{2} \parallel \mathcal{C}_{\kappa F}^{-1} \left[(1 - \bm{Z} \mathbf{T} \mathbf{F}^* \bm{\kappa}) \bm{F} - \bm{Z} \mathbf{T} \mathbf{F}^* \tilde{\bm{\mathcal{F}}} \right] \parallel_2^2 \label{eq:full_conv_optim} \\ && + \lambda \parallel \bm{w} \circ \bm{\Phi}^t \bm{\kappa} \parallel_1 + {\rm i}_{\mathrm{Im}(\mathbf{R})}\left( \left[\begin{matrix} \bm{\kappa} \\ \tilde{\bm{\mathcal{F}}} \end{matrix} \right] \right) \nonumber , \end{eqnarray}](/articles/aa/full_html/2016/07/aa28278-16/aa28278-16-eq215.png) (52)where the non-linear correction matrices

(52)where the non-linear correction matrices  and