| Issue | A&A, Volume 556, August 2013 | |
|---|---|---|
| Article Number | A109 | |
| Number of page(s) | 20 | |
| Section | Cosmology (including clusters of galaxies) | |
| DOI | https://doi.org/10.1051/0004-6361/201321575 | |
| Published online | 05 August 2013 | |

High-precision simulations of the weak lensing effect on cosmic microwave background polarization

AstroParticule et Cosmologie, Univ Paris Diderot, CNRS/IN2P3, CEA/Irfu, Obs. de Paris, 75230 Paris, France


Received: 26 March 2013

Accepted: 14 June 2013

Abstract

We studied the accuracy, robustness, and self-consistency of pixel-domain simulations of the gravitational lensing effect on the primordial cosmic microwave background (CMB) anisotropies due to the large-scale structure of the Universe. In particular, we investigated the dependence of the precision of the results on some crucial parameters of these techniques and propose a semi-analytic framework to determine their values so that the required precision is a priori assured and the numerical workload simultaneously optimized. Our focus was on the B-mode signal, but we also discuss other CMB observables, such as the total intensity, T, and E-mode polarization, emphasizing differences and similarities between all these cases. Our semi-analytic considerations are backed up by extensive numerical results. Those are obtained using a code, nicknamed lenS2HAT – for lensing using scalable spherical harmonic transforms (S2HAT) – which we have developed in the course of this work. The code implements a version of the previously described pixel-domain approach and permits performing the simulations at very high resolutions and data volumes, thanks to its efficient parallelization provided by the S2HAT library – a parallel library for calculating spherical harmonic transforms. The code is made publicly available.

Key words: cosmic background radiation / large-scale structure of Universe / gravitational lensing: weak / methods: numerical

© ESO, 2013

1. Introduction

The cosmic microwave background (CMB) anisotropies in both temperature and polarization are one of the most studied signals in cosmology and one of the major available sources of constraints on early-Universe physics. After having decoupled from matter and been set free at the time of recombination, CMB photons propagated nearly unperturbed throughout the Universe. The large-scale structures (LSS) emerging in the Universe in the post-recombination period have, however, left their imprints on them, which are referred to as secondary anisotropies. In particular, the gravitational pull of the growing matter inhomogeneities has deviated the paths of primordial CMB photons, modifying somewhat the pattern of the CMB anisotropies observed today. This weak lensing effect on the CMB (see Lewis & Challinor 2006 for an extensive review) therefore offers a unique probe of the matter distribution at intermediate redshift where the forming LSS were still in the nearly-linear regime. Because this effect depends on the cumulative matter distribution in the Universe, it is expected to be particularly efficient in constraining all the parameters affecting the growth of LSS, such as neutrino masses and dark energy physics (de Putter et al. 2009; Das & Linder 2012; Hall & Challinor 2012).

The first observational evidence of the CMB lensing signal was indirect and was obtained through cross-correlation of the CMB maps with high-redshift mass tracers (Smith et al. 2007; Hirata et al. 2008). More recently, more direct measurements have become available, thanks to the latest generation of high-precision, high-resolution ground-based CMB temperature experiments, which have collected high-quality data and made possible a direct reconstruction of the power spectra of this deviation using CMB alone (Das et al. 2011; van Engelen et al. 2012). Even more recently, this has been further elaborated on by the Planck results based on the first 15 months of the total intensity data collected by the mission (Planck Collaboration 2013).

The forthcoming next generation of low-noise CMB polarization experiments such as EBEX (Oxley et al. 2004), POLARBEAR (Kermish et al. 2012), SPTpol (McMahon et al. 2009), and ACTpol (Niemack et al. 2010) and their future upgrades (e.g., POLARBEAR-II, Tomaru et al. 2012) will be able to target a CMB observable most affected by weak lensing – the B-mode polarization. Indeed, primordial CMB gradient-like polarization (E-modes) is converted into curl-like polarization (B-modes) by gravitational lensing (Zaldarriaga & Seljak 1998), and this lensing-induced signal is expected to completely dominate the primordial one at least at small angular scales. The lensing-generated B-modes are interesting because of their sensitivity to the large-scale structure distribution, but also because they are the main contaminant of any primordial B-mode signal, which is expected in many models of the very early Universe, and which is one of the major goals of the current and future CMB observations. Since sensitivities of the CMB polarization arrays are rapidly improving, the experiments aiming at setting constraints on values of the tensor-to-scalar ratio parameter r ≲ 10⁻² are expected to be ultimately limited by the lensing signal (e.g., Errard & Stompor 2012). This acts as an extra noise source with a white spectrum shape on large scales and an amplitude of approximately 5 μK-arcmin, which could in principle be separated from the primordial signal with the help of an accurate de-lensing procedure (Kesden et al. 2002; Seljak & Hirata 2004; Smith et al. 2012).

The high quality of forthcoming datasets requires the development, testing and validation through simulations of data-analysis tools capable of fully exploiting the amount of information present there. An important part of this effort involves simulating very accurate, high-resolution maps of the CMB total intensity and polarization, covering a large fraction of the sky and with lensing effects included. The relevant approaches have been studied in the past (e.g. Lewis 2005; Basak et al. 2009; Lavaux & Wandelt 2010) and resulted in devising and demonstrating an overall framework for such simulations, as well as in two publicly available numerical codes (Lewis 2005; Basak et al. 2009). Because the computations involved in such a procedure are inherently very time-consuming, the proposed implementations of those ideas unavoidably involve trade-offs between calculation precision and their feasibility, giving rise to a number of problems, practical and more fundamental, which need to be carefully resolved to ensure that these techniques produce high-quality, reliable results. The main objective of this paper is to provide comprehensive answers to some of these problems, with special emphasis on those arising in the context of high-precision and high-reliability simulations of the B-mode component of the CMB polarization signal.

2. Simulating weak lensing of the CMB

2.1. Algebraic background

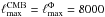

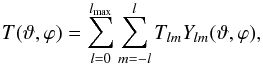

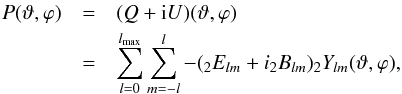

The CMB radiation is completely described by its brightness temperature and polarization

fields on the sky, T(ϑ,ϕ) and

P(ϑ,ϕ). Since both fields are (nearly) Gaussian, they

are characterized by their power spectra after their harmonic expansion in a proper basis.

Temperature is a scalar field and can be conveniently expanded in terms of scalar

spherical harmonics,  (1)while polarization

is described by the Stokes parameters Q and U, which are

coordinate-dependent objects, that behave like a spin-2 field on the sphere under

rotations (Zaldarriaga & Seljak 1997; Kamionkowski et al. 1997). The polarization field must

therefore be expanded in terms of spin-2 spherical harmonics,

(1)while polarization

is described by the Stokes parameters Q and U, which are

coordinate-dependent objects, that behave like a spin-2 field on the sphere under

rotations (Zaldarriaga & Seljak 1997; Kamionkowski et al. 1997). The polarization field must

therefore be expanded in terms of spin-2 spherical harmonics,

,

,

(2)where

(2)where

and

and  are the gradient and curl harmonic components of a spin-2 field, whose general definitions

for and arbitrary spin-s field are

are the gradient and curl harmonic components of a spin-2 field, whose general definitions

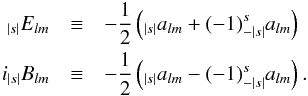

for and arbitrary spin-s field are  (3)Weak

gravitational lensing shifts the light rays coming from an original direction

(3)Weak

gravitational lensing shifts the light rays coming from an original direction

on the last scattering surface to the observed direction

on the last scattering surface to the observed direction

,

inducing a mapping between the two directions through the so-called displacement field

d, i.e., for a CMB observable

X ∈ { T,Q,U }

,

inducing a mapping between the two directions through the so-called displacement field

d, i.e., for a CMB observable

X ∈ { T,Q,U }  (4)Hereafter, we use a

tilde to denote a lensed quantity, we also use a tilde over a multipole number of a lensed

quantity, i.e.,

(4)Hereafter, we use a

tilde to denote a lensed quantity, we also use a tilde over a multipole number of a lensed

quantity, i.e.,  ,

to distinguish it from a multipole number of its unlensed counterpart.

,

to distinguish it from a multipole number of its unlensed counterpart.
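The relation between the spin-weighted coefficients and their gradient and curl components can be illustrated with a minimal sketch. It assumes the sign convention of Zaldarriaga & Seljak (1997), generalized to spin s with a factor (−1)^s; the function names `spin_to_eb` and `eb_to_spin` are hypothetical and are not part of any code described in the paper.

```python
import numpy as np

def spin_to_eb(a_plus, a_minus, s=2):
    """Gradient (E) and curl (B) coefficients from the spin +s and -s ones.

    Assumed convention (Zaldarriaga & Seljak 1997 for s = 2):
        E = -(a_{+s} + (-1)^s a_{-s}) / 2
        B =  i (a_{+s} - (-1)^s a_{-s}) / 2
    """
    sign = (-1) ** s
    e_lm = -0.5 * (a_plus + sign * a_minus)
    b_lm = 0.5j * (a_plus - sign * a_minus)
    return e_lm, b_lm

def eb_to_spin(e_lm, b_lm, s=2):
    """Inverse of spin_to_eb under the same assumed convention."""
    sign = (-1) ** s
    a_plus = -(e_lm + 1j * b_lm)
    a_minus = -sign * (e_lm - 1j * b_lm)
    return a_plus, a_minus

# Round trip on random complex coefficients (illustrative data only).
rng = np.random.default_rng(0)
a_p = rng.normal(size=8) + 1j * rng.normal(size=8)
a_m = rng.normal(size=8) + 1j * rng.normal(size=8)
e_lm, b_lm = spin_to_eb(a_p, a_m)
back_p, back_m = eb_to_spin(e_lm, b_lm)
```

Other conventions differ from this one only by signs, so the round trip remains a useful consistency check whichever convention a given SHT library adopts.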

The displacement field is a vector field on the sphere and can be decomposed into a

gradient-free and a curl-free component. In most cases we can neglect the gradient-free

component and consider the displacement field d as the gradient of the

so-called lensing potential Φ(ϑ,ϕ), the projection of the 3D

gravitational potential Ψ on the 2D unit sphere. This quantity can be computed with

Boltzmann codes (e.g. CAMB1 or CLASS2), from galaxy surveys or N-body

simulations (Carbone et al. 2008; Das & Bode 2008),

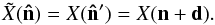

(5)Here

η∗ is the comoving distance to the last scattering surface,

η is the co-moving distance, dA is the

co-moving angular diameter distance. The lensing potential is expected to be correlated on

a large scale with temperature anisotropies and E-modes of polarization

through the integrated Sachs-Wolfe effect; this correlation mainly affects the large

angular scales and is of the order of 1% at ℓ ≈ 100 and will thus be

neglected in the following analysis.

(5)Here

η∗ is the comoving distance to the last scattering surface,

η is the co-moving distance, dA is the

co-moving angular diameter distance. The lensing potential is expected to be correlated on

a large scale with temperature anisotropies and E-modes of polarization

through the integrated Sachs-Wolfe effect; this correlation mainly affects the large

angular scales and is of the order of 1% at ℓ ≈ 100 and will thus be

neglected in the following analysis.

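Since the lensing potential is a scalar function and can be expanded into canonical spherical harmonics, its gradient (a spin-1 curl-free field) can be easily computed in the harmonic domain with a spin-1 spherical harmonic transform (SHT):

$${}_{|1|}d^{E}_{\ell m} = \sqrt{\ell(\ell+1)}\;\Phi_{\ell m}, \qquad {}_{|1|}d^{B}_{\ell m} = 0, \qquad (6)$$

with the superscripts E and B denoting the gradient and curl harmonic components of the displacement field.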
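As an illustration of how the displacement is obtained from the potential in the harmonic domain, the following minimal sketch scales the potential coefficients by √(ℓ(ℓ+1)) and sets the curl part to zero. The function names are hypothetical, the overall sign depends on the spin-1 convention of the SHT library in use, and the array layout (one multipole per coefficient, passed explicitly) is an assumption made for simplicity.

```python
import numpy as np

def displacement_coefficients(phi_lm, ells):
    """Gradient-type spin-1 coefficients of d = grad(Phi); the curl part vanishes.

    phi_lm : harmonic coefficients of the lensing potential
    ells   : multipole number of each coefficient (same length as phi_lm)
    """
    ells = np.asarray(ells, dtype=float)
    d_grad = np.sqrt(ells * (ells + 1.0)) * np.asarray(phi_lm)
    d_curl = np.zeros_like(d_grad)
    return d_grad, d_curl

def displacement_power(cl_phi):
    """Power spectrum of the displacement, l(l+1) C_l^{PhiPhi}, for l = 0..len-1."""
    ells = np.arange(len(cl_phi), dtype=float)
    return ells * (ells + 1.0) * np.asarray(cl_phi)

# Illustrative input: unit potential coefficients at l = 0, 1, 2.
d_grad, d_curl = displacement_coefficients(np.ones(3), [0, 1, 2])
cl_dd = displacement_power(np.ones(3))
```

The monopole of the potential does not contribute to the displacement, which the vanishing ℓ = 0 entry makes explicit.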

2.2. Pixel-domain simulations

2.2.1. Basics

Because typical deviations of CMB photons are on the order of a few arcminutes (although coherent over the degree scale), we can work in the Born approximation, i.e., considering this deviation as constant between the observed direction, $\hat{n}$, and the original one, $\hat{n}'$, and evaluate the displaced field along the unperturbed direction.

In practice this means that to compute the lensed CMB at a given point it is sufficient to compute the unlensed CMB at another position on the sky. This observation provides the basis for the pixel-based approaches to simulating lensing effects on the CMB maps. For every direction on the sky corresponding to a pixel center, these methods first identify the displaced direction and then compute the corresponding sky signal value, which is used to replace the original value at the pixel center. The implementations of this approach typically involve the following main steps (Lewis 2005; Basak et al. 2009; Lavaux & Wandelt 2010):

-

1.

Generating a random realization of the harmonic coefficients of the unlensed CMB map and its synthesis.

-

2.

Generating a random realization of the harmonic coefficients of the lensing potential and then of the spin-1 displacement field in the harmonic domain. Synthesizing the displacement field.

-

3.

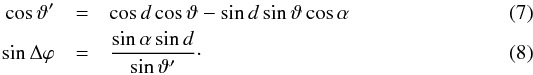

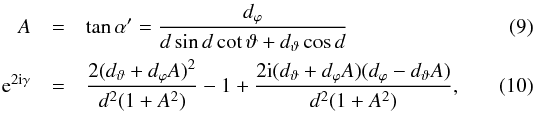

Sampling the displacement field at pixel centers and, for each of them, computing the coordinates of a displaced direction on the sky using the spherical triangle identities. Defining α as the angle between the displacement vector and the eϑ versor, such that d = d cosα eϑ + d sinα eϕ, the value of a lensed field, i.e., T, Q and U, in a direction (ϑ,ϕ) is given by the unlensed field at (ϑ′,ϕ + Δϕ), where

$$\cos\vartheta' = \cos d\,\cos\vartheta - \sin d\,\sin\vartheta\,\cos\alpha, \qquad \sin\Delta\phi = \frac{\sin\alpha\,\sin d}{\sin\vartheta'}.$$

-

4.

Computing temperature and polarization fields at displaced positions.

-

5.

Re-assigning the temperature and polarization from the displaced to new positions to create the simulated lensed map sampled on the original grid. For the polarization, we also need to multiply the lensed field by an extra factor taking into account the different orientation of the basis vectors at the two points. Calling γ the difference between the angles between eϑ and the geodesic connecting the two points, the lensed polarization field is given by the unlensed field at the displaced position multiplied by this γ-dependent factor (Eq. 11).

-

6.

Smoothing and, potentially, re-pixelizing the lensed map to match a particular experimental resolution, if needed.
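Step 3 of the list above can be sketched as follows. This is a minimal illustration, not the lenS2HAT implementation: it uses the standard spherical law of cosines and law of sines for the triangle formed by the north pole, the pixel center and the displaced point, and the function name `displaced_direction` is hypothetical. It assumes small displacements and directions away from the poles, where sin ϑ′ does not vanish and |Δϕ| stays below π/2.

```python
import numpy as np

def displaced_direction(theta, phi, d_theta, d_phi):
    """Displaced direction (theta', phi + dphi) for a displacement with
    components d_theta, d_phi along e_theta and e_phi (all in radians)."""
    d = np.hypot(d_theta, d_phi)          # displacement modulus
    alpha = np.arctan2(d_phi, d_theta)    # angle between d and e_theta
    cos_theta_new = (np.cos(d) * np.cos(theta)
                     - np.sin(d) * np.sin(theta) * np.cos(alpha))
    theta_new = np.arccos(np.clip(cos_theta_new, -1.0, 1.0))
    # Law of sines; valid away from the poles and for |dphi| < pi/2.
    sin_dphi = np.sin(alpha) * np.sin(d) / np.sin(theta_new)
    dphi = np.arcsin(np.clip(sin_dphi, -1.0, 1.0))
    return theta_new, phi + dphi

# A displacement purely along e_theta changes only the colatitude ...
t1, p1 = displaced_direction(1.0, 0.5, 1e-3, 0.0)
# ... while on the equator a displacement along e_phi changes only phi.
t2, p2 = displaced_direction(np.pi / 2, 0.5, 0.0, 2e-3)
```

A production code would need a separate treatment of pixels close to the poles, where the arcsine branch used here is no longer sufficient.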

2.2.2. Challenges and goals

There are two main, closely intertwined challenges involved in implementing the approach detailed in the previous section. The first one is related to the bandwidths of fields used in, or produced as a result of, the calculation, and in particular to the need to impose them on fields that are either not naturally band-limited or are band-limited but with bandwidths too high to be acceptable from the point of view of computational efficiency. The other challenge arises from step 4 of the algorithm: the displaced directions do not correspond in general to pixel centers of any iso-latitudinal grid on the sphere, and thus the lensed values of the CMB signal cannot be computed with the aid of a fast SHT algorithm, and a more elaborate and computationally costly approach is needed.

We emphasize that both these problems should be looked at from the perspective of the efficiency of the numerical calculations as well as accuracy of the produced results. We discuss them in some detail below.

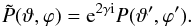

Signal bandwidths.

Because the lensing procedure needs to be applied prior to any instrumental response function convolution, the relevant sky signals on all but the last steps above require using a resolution sufficient to support the signal all the way to its intrinsic bandwidth, $\ell^{X}_{\max}$, where X is either T for the total intensity, or P for the polarization, or Φ for the gravitational potential. However, because mathematically the lensing effects can be seen as a convolution in the harmonic domain (Hu 2000; Okamoto & Hu 2003; Hu & Okamoto 2002) of the CMB signal, either the total intensity, T, or the polarization, P, and of the potential, Φ, the bandwidth of the resulting lensed field will be broader than that of any unlensed fields and is given roughly by $\ell^{\tilde{X}}_{\max} \approx \ell^{X}_{\max} + \ell^{\Phi}_{\max}$. Consequently, the lensed map produced in step 5 should have its resolution appropriately increased to eliminate potential power aliasing effects. The resolution of the unlensed maps produced in steps 1–5 should then coincide with that of the lensed signal but with the number of harmonic modes set by $\ell^{X}_{\max}$ and $\ell^{\Phi}_{\max}$, respectively.
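The broadening of the band limit and the resulting aliasing can be illustrated with a one-dimensional Fourier analogy. This is only an analogy, not a spherical-harmonic calculation: a product of two band-limited signals is a convolution in the Fourier domain, so its highest mode is the sum of the two band limits, and sampling it on a grid that supports only the original band folds the extra power back to lower modes. The band limits (40 and 15) and grid sizes are arbitrary illustrative choices.

```python
import numpy as np

def active_modes(samples, tol=1e-10):
    """Indices of the Fourier modes whose amplitude exceeds tol."""
    amplitude = np.abs(np.fft.rfft(samples)) / len(samples)
    return [int(k) for k in np.nonzero(amplitude > tol)[0]]

def sample_product(n_samples, band_signal=40, band_lens=15):
    """Samples of cos(band_signal x) * cos(band_lens x) on a regular grid."""
    x = 2.0 * np.pi * np.arange(n_samples) / n_samples
    return np.cos(band_signal * x) * np.cos(band_lens * x)

# Fine grid (Nyquist mode 128): power appears at 40 - 15 and 40 + 15.
fine_modes = active_modes(sample_product(256))
# Coarse grid (Nyquist mode 32): mode 55 is aliased to 64 - 55 = 9.
coarse_modes = active_modes(sample_product(64))
```

On the coarse grid the power that belongs to mode 55 shows up at mode 9, inside the range one would have considered safely resolved, which is the one-dimensional counterpart of the power aliasing discussed above.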

One of the problems arising in this context is related to the fact that the unlensed sky signals, T, P and Φ, considered here are not truly band-limited even if their power at the small scales decays quite abruptly as a result of Silk damping. Picking an appropriate value for the bandwidth is therefore a matter of a compromise between the precision of the final products and the calculation cost, with both these quantities being quite sensitive to the chosen value, and which will depend in general on a specific application. We emphasize that the presence of the high-ℓ power decay plays a dual role in our considerations here. On the one hand, it ensures that the lensing effect at sufficiently large scale can be computed with an arbitrary precision by simply choosing the bandwidth values sufficiently high. On the other hand, it does introduce an extra complexity in defining a set of sufficient conditions that ensure the required precision, because these will typically be different in the regime of the high signal power and that of the damping tail. In either case, though, it is clear that whatever the selected bandwidths, the amplitudes of the harmonic modes of the lensed signal close to the highest value of $\tilde{\ell}$ supported by the employed pixelization will generally be unavoidably misestimated, and satisfactory precision can only be achieved for harmonic modes lower than some limiting multipole. From the practitioner’s perspective the main problem is therefore, given some precision criterion, ε, which we wish to be fulfilled by the harmonic modes of the lensed signal X up to some value of $\tilde{\ell}$, how to determine the required bandwidths of the unlensed signals, $\ell^{Y}_{\max}$, where X and Y can be the same, e.g., in the case of the T or E signal lensing, or different, e.g., for the potential field or B-modes.

One effect of these considerations is that if it is the maps of the lensed signals that are of interest as the final product of the calculation, then the biased high-ℓ modes should either be filtered out or suppressed before the map is synthesized from its harmonic coefficients. To ensure that this does not adversely affect the resolution of the final map, the bias should affect only angular scales much smaller than the expected final resolution of the map as produced in step 6 of the algorithm. If the latter is defined by the experimental beam resolution, one therefore needs to ensure that no bias is present for all multipoles up to $\tilde{\ell} \sim 1/\sigma_{\rm beam}$, where σbeam is an experimental beam width.

Interpolations.

Interpolation is the most popular workaround for the need to directly calculate values of the unlensed fields for every displaced direction, which typically will not correspond to grid points of any iso-latitudinal pixelization. Three interpolation schemes have been considered to date in the context of the polarized signals. Lewis (2005) proposed a generic modified bicubic interpolation and demonstrated that it seems to work satisfactorily in a number of cases. This approach together with the direct summation are both implemented in the publicly available code LensPix³. Two other methods have been proposed more recently. Basak et al. (2009) implemented the general interpolation scheme, which recasts a band-limited function on the sphere as a band-limited function on the 2D torus where a non-equispaced fast Fourier transform (NFFT) algorithm is used to compute the field at the displaced positions. This method would be arbitrarily precise if the sky signals were strictly band-limited. However, the choice of NFFT can become a bottleneck for this algorithm since its numerical workload scales with the number of pixels squared, and its memory requirements are huge. As it is, the NFFT software can be run only on shared-memory architectures, making it more difficult to resolve both these problems. Consequently, the issue of the bandwidth values becomes of crucial importance for the performance and applicability of the method, and its relevance, in particular in the context of simulations of upcoming and future high-resolution experiments, needs to be investigated in more detail.

Lavaux & Wandelt (2010) proposed a fast pixel-based method using the spectral characteristics of the field to be lensed to compute the weighting coefficient for the interpolation of this field, without using any spherical harmonic algorithm. Its accuracy is set by the number of neighboring pixels used to interpolate the field at a given point.

|

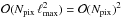

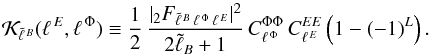

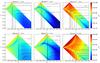

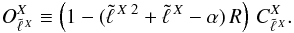

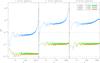

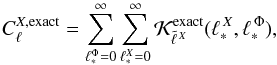

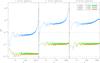

Fig. 1 Examples of the CMB B-modes lensing calculation and involved numerical effects. All panels show the recovered B-modes power spectrum overplotted over the theoretical B-mode spectrum computed with CAMB (color line). The bandwidth of the E-modes and the potential Φ is the same in all the panels and set to 2500, while the resolution of the maps used for simulating the lensed signal increases progressively from left to right. ECP pixelization has been used in all cases. The recovered B-spectrum overestimates the theoretical curve in the left panel due to the power-aliasing effect, while it underestimates it in the result recovered for much higher resolution as shown on the right. The nearly perfect recovery shown in the middle panel is merely accidental and results from the insufficient signal bandwidth (right panel) that compensates the extra contribution of the aliasing effect (left panel). The spectrum in the right panel is aliasing-free because it does not change anymore with the increasing resolution. |

In addition, Hirata et al. (2004) used in their work a polynomial interpolation scheme of arbitrary order and precision, which has been shown to successfully produce temperature maps (Hirata et al. 2004; Das & Bode 2008) but has not been tested for the polarized case.

Any interpolation in this context is not without its dangers because interpolations tend to smooth the underlying signals. For a genuinely band-limited function this could in principle be avoided as in, e.g., Basak et al. (2009). However, for the actual CMB signals the bandwidth is only approximate and is a function of the required precision and specific application; the sampling density and interpolation scheme therefore need to be chosen very carefully to render reliable results. Again, the choice of appropriate bandwidth values is therefore central for a successful resolution of this problem.
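The smoothing introduced by interpolation can be made quantitative in a simple one-dimensional case. The sketch below is an illustration only and does not correspond to any of the schemes cited above: it linearly interpolates cos(kx) at the midpoints of a regular grid of spacing h, where the interpolated value is exactly cos(kx)·cos(kh/2), so the amplitude is suppressed by cos(kh/2) and the suppression grows with the wavenumber. The function name and the grid length are hypothetical choices.

```python
import numpy as np

def midpoint_linear_interp_gain(k, h, n_grid=200):
    """Amplitude ratio of cos(k x) after linear interpolation at grid midpoints."""
    grid = np.arange(n_grid, dtype=float) * h
    mid = grid[:-1] + 0.5 * h
    interpolated = np.interp(mid, grid, np.cos(k * grid))
    exact = np.cos(k * mid)
    # Least-squares estimate of the amplitude ratio interpolated / exact.
    return float(np.dot(interpolated, exact) / np.dot(exact, exact))

gain_low = midpoint_linear_interp_gain(k=0.5, h=1.0)   # well-sampled mode
gain_high = midpoint_linear_interp_gain(k=2.0, h=1.0)  # poorly sampled mode
```

Reducing h, i.e., sampling more densely, brings the gain back toward unity for a fixed k, which is the motivation for overpixelizing the sky before interpolating.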

Numerical workload.

Numerical cost of the direct calculation per direction is given by $\mathcal{O}(\ell_{\max}^{2})$ and corresponds to the cost of calculating an entire set of all ℓ and m modes of associated, scalar or spin-weighted, Legendre functions. For Npix directions the overall cost is about $\mathcal{O}(N_{\rm pix}\,\ell_{\max}^{2}) \propto \mathcal{O}(\ell_{\max}^{4})$ and is therefore prohibitive for any values of Npix and ℓmax of interest. Here, we assumed a relation, $N_{\rm pix} \propto \ell_{\max}^{2}$, typically fulfilled for the full-sky pixelization with a proportionality coefficient on the order of a few, as is the case for both the HEALPix⁴ pixelization (Górski et al. 2005) and ECP. The interpolations can cut on this load, trimming it to the one needed to compute a representation of the signals on an iso-latitudinal grid, with complexity $\mathcal{O}(\ell_{\max}^{3})$, plus the cost of the interpolation itself, which differs between the generic schemes and the NFFT-based one, in both cases with a potentially large pre-factor. Nevertheless, this is clearly a more favorable scaling than the one of the direct method and, as has been shown in the past, makes such calculations feasible in practice. We note, however, that for the sake of the precision of the interpolation one may need to overpixelize the sky, meaning using a higher value of Npix than what would normally be needed to support the harmonic modes all the way to ℓmax. Hereafter, we denote the overpixelization factor in each of the two directions, θ and φ, as κ. Consequently, the number of pixels used is given by κ² Npix, where Npix is the standard full-sky number of pixels as determined by the selected value of ℓmax.
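The scalings discussed above can be compared with a short order-of-magnitude sketch. All pre-factors are dropped, so only ratios are meaningful. The HEALPix relation Npix = 12 Nside² is standard; the choice ℓmax = 2 Nside is a common convention assumed here for illustration, and the function names are hypothetical.

```python
def healpix_npix(nside):
    """Number of pixels of a full-sky HEALPix map."""
    return 12 * nside * nside

def direct_cost(n_pix, lmax):
    """Direct summation: all (l, m) modes evaluated for every direction."""
    return n_pix * lmax ** 2

def sht_cost(lmax):
    """Spherical harmonic transform on an iso-latitudinal grid."""
    return lmax ** 3

def overpixelized_npix(n_pix, kappa):
    """Pixel count after overpixelizing by kappa in each of the two directions."""
    return kappa ** 2 * n_pix

nside = 1024
lmax = 2 * nside                      # assumed band limit for this resolution
n_pix = healpix_npix(nside)           # equals 3 * lmax**2 for this choice
speedup = direct_cost(n_pix, lmax) / sht_cost(lmax)
n_pix_dense = overpixelized_npix(n_pix, kappa=2)
```

With these assumptions the ratio of the two costs grows linearly with ℓmax, which is why the direct approach becomes impractical at high resolution even before overpixelization is taken into account.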

Goals and methodology.

This paper has two main goals. One is to study internal consistency and convergence of the pixel-domain simulations in the context of the currently viable cosmologies. The other is to study the dependence of the precision of these simulations on some of its most important parameters.

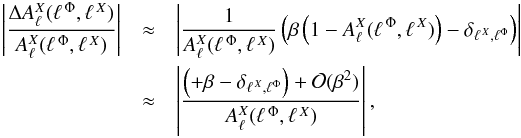

In previous works, analyses of this sort have usually been restricted to comparisons of power spectra of the lensed maps derived by a lensing simulation code and the theoretical predictions computed via an integration of the Boltzmann equation, as implemented in the publicly available codes, CAMB and CLASS. In these works, the effort has been made to find a set of the code parameters for which the resulting spectrum is consistent with the theoretical expectations. Such comparisons are without doubt an important part of a code and method validation. However, they are limited to the cases of the gravitational potentials, Φ, derived in a linear theory, and are not applicable in some other cases where the potential is obtained by some other means, such as N-body simulations. In addition, they may on occasion be misleading because the numerical effects can easily conspire to deliver a spectrum tantalizingly close to the desired one, without any reassurance that the map of the lensed sky characterized by it has correct other statistical properties, such as higher-order statistics. This is particularly likely and consequential for the B-mode spectrum given its low amplitude and the lack of characteristic, fine-scale features. An example of such a conspiracy is shown in Fig. 1, where the power deficit at the high-ℓ end caused by the oversmoothing due to the interpolation nearly perfectly compensates the extra power aliased into the ℓ-range of interest as a consequence of too crude a resolution of the final map.

We therefore propose to study the robustness of the simulated results by demonstrating their convergence and internal stability with respect to sky sampling and band-limit changes, as expressed by two parameters introduced earlier: the upper value of the signal band, ℓmax, and the overpixelization factor, κ. Only once the convergence is reached do we compare the results to those computed by other means, if any are available. We note that the convergence tests do not have to, and should not in general, be restricted to the power spectra comparison only and could instead involve other metrics more directly relevant to the simulated maps themselves. In all such tests it is typically required to consider maps with extreme resolutions, which has been traditionally prohibitive for numerical reasons. We overcome this problem with the help of a high-performance lensing code, lenS2HAT, which we have developed for this purpose.
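The convergence strategy described above can be sketched generically. The following is a minimal illustration under stated assumptions, not the procedure implemented in lenS2HAT: `simulate` stands for any user-supplied routine returning a lensed power spectrum for a given (ℓmax, κ), the settings are assumed to be ordered by increasing cost, the spectrum is assumed to be non-zero over the checked range, and the power-spectrum metric is used only because it is the simplest one to write down.

```python
import numpy as np

def first_converged(simulate, settings, ell_check, tol=1e-2):
    """Return the first (lmax, kappa) whose spectrum differs from that of the
    previous setting by less than tol (maximum relative change up to ell_check)."""
    previous = None
    for setting in settings:
        spectrum = np.asarray(simulate(*setting))[: ell_check + 1]
        if previous is not None:
            change = float(np.max(np.abs(spectrum - previous) / np.abs(previous)))
            if change < tol:
                return setting, change
        previous = spectrum
    return None, None  # no convergence within the tested settings

# Toy stand-in for a lensing simulation: the bias decays as 100 / lmax.
truth = np.linspace(1.0, 2.0, 101)

def toy_simulate(lmax, kappa):
    return truth * (1.0 + 100.0 / lmax)

settings = [(1000, 1), (2000, 1), (4000, 2), (8000, 2), (16000, 4)]
best, last_change = first_converged(toy_simulate, settings, ell_check=100)
```

In a realistic test the stand-in would be replaced by the actual simulation, and the metric could be swapped for a map-level one, as suggested above.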

Our second goal, i.e., to study the dependence of the calculation precision on the two crucial parameters, ℓmax and κ, is complementary and is aimed at providing meaningful and practically useful guidelines on how to select the values of these parameters prior to performing any numerical tests given some predefined precision targets. In this context, we present an in-depth semi-analytical analysis of the impact of these parameters on the lensed signal recovery. Though ultimately these guidelines may need to be confirmed numerically case-by-case, e.g., using the convergence tests as discussed earlier, they could be of significant help in providing a reasonable starting point for such tests.

Finally, we also present a simple, high-performance parallel implementation of the pixel-domain approach, lenS2HAT, which is capable of reaching extremely high sample density on the sphere thanks to its efficient parallelization and numerical implementation, and which has been instrumental in accomplishing all the other goals of this work.

3. Exploring the bandlimits

3.1. CMB lensing in the harmonic domain

This section addresses the second of goals, as stated above, and describes a

semi-analytic study of the impact of the assumed bandwidth values on the precision of the

lensed signal. Our discussion is based on the model of Hu

(2000) and focuses on the lensed B-mode signal that is obtained

obtained as a result of the lensing acting upon the primordial E-mode

signal, and is the main target of this paper. Similar considerations can be made, however,

for other CMB observable spectra and we present some relevant results calculated for these

cases (see Sect. 3.2 for some more details). Using

the results of Hu (2000), we represent the lensed

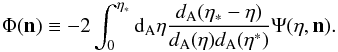

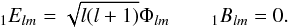

B-mode signal as  (12)where

(12)where

is a spin-2 coupling kernel (see Hu 2000 for a full

expression),

is a spin-2 coupling kernel (see Hu 2000 for a full

expression),  and

and

and

and

denote the

unlensed power spectra of the E mode polarization and of the

gravitational potential, respectively. This formula can be obtained by a second-order

series expansion around undisplaced direction, which is expected to be accurate to within

1% for multipoles

denote the

unlensed power spectra of the E mode polarization and of the

gravitational potential, respectively. This formula can be obtained by a second-order

series expansion around undisplaced direction, which is expected to be accurate to within

1% for multipoles  and

then for

and

then for  , where the CMB amplitude

is small and can be modeled by its gradient only, while in the intermediate scales its

precision degrades to nearly 5%. The reliability of this analytical model is discussed

later in Sect. 4.3.1. We can now introduce 1D

kernels,

, where the CMB amplitude

is small and can be modeled by its gradient only, while in the intermediate scales its

precision degrades to nearly 5%. The reliability of this analytical model is discussed

later in Sect. 4.3.1. We can now introduce 1D

kernels,  ,

defined as

,

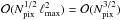

defined as  (13)Summed over

ℓ E for a fixed

(13)Summed over

ℓ E for a fixed

, these

give the lensed B-mode power contained in the mode

, these

give the lensed B-mode power contained in the mode

, Eq.

(12), while for a fixed

ℓ E they define the power spectrum of the

lensed B-modes signal, generated via lensing from the E

polarization signal that contains non-zero power in a single mode

ℓ E, and with its amplitude as given by

, Eq.

(12), while for a fixed

ℓ E they define the power spectrum of the

lensed B-modes signal, generated via lensing from the E

polarization signal that contains non-zero power in a single mode

ℓ E, and with its amplitude as given by

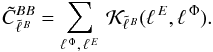

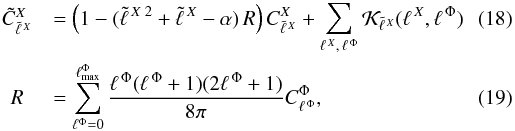

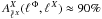

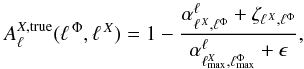

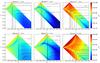

. The kernels are

displayed in Fig. 2 together with their analogs for

the total intensity and E-polarization signals. We find that the kernels

computed for different values of

. The kernels are

displayed in Fig. 2 together with their analogs for

the total intensity and E-polarization signals. We find that the kernels

computed for different values of  are

similar, just shifted with respect to each other accordingly. The change in the amplitude

simply reflects the change in the assumed power of the E signal, which in

turn follows that of the actual E power spectrum. The kernels are flat

for values

are

similar, just shifted with respect to each other accordingly. The change in the amplitude

simply reflects the change in the assumed power of the E signal, which in

turn follows that of the actual E power spectrum. The kernels are flat

for values  and

decay as a power law for

and

decay as a power law for  ,

displaying a sharp dip at

,

displaying a sharp dip at  .

Similar observations can be made for the T and E

kernels, with the exception that unlike their E and T

counterparts, the B kernels are not peaked around the dip. This behavior

is related to the fact that the lensed B-modes signal we discuss here,

described by Eq. (12), is generated by the

E-polarization, while the main effect of the lensing on

T and E is imprinted on these signals themselves. A

direct consequence of this is that for any lensed B-modes spectrum mode a

contribution from local unlensed multipoles will be less dominant, as is the case for the

T and E signals, and nonlocal contributions will be

relatively more important and therefore required to be accounted for in high-precision

calculations.

.

Similar observations can be made for the T and E

kernels, with the exception that unlike their E and T

counterparts, the B kernels are not peaked around the dip. This behavior

is related to the fact that the lensed B-modes signal we discuss here,

described by Eq. (12), is generated by the

E-polarization, while the main effect of the lensing on

T and E is imprinted on these signals themselves. A

direct consequence of this is that for any lensed B-modes spectrum mode a

contribution from local unlensed multipoles will be less dominant, as is the case for the

T and E signals, and nonlocal contributions will be

relatively more important and therefore required to be accounted for in high-precision

calculations.
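For orientation, the structure of Eqs. (12) and (13) can be sketched in the all-sky notation of Hu (2000), assuming no primordial B modes. The symbol $\mathcal{K}^{B}$ for the 1D kernel, the index ordering, and the definition $L \equiv \ell_B + \ell_E + \ell_\Phi$ are assumptions made here for illustration, and the normalization should be checked against Hu (2000) before any quantitative use.

```latex
\begin{align}
\tilde{C}^{BB}_{\ell_B}
  &= \frac{1}{2\,(2\ell_B+1)} \sum_{\ell_E,\,\ell_\Phi}
     \left|{}_2F_{\ell_B \ell_\Phi \ell_E}\right|^2
     C^{\Phi\Phi}_{\ell_\Phi}\, C^{EE}_{\ell_E}
     \left[1-(-1)^{L}\right],
  \qquad L \equiv \ell_B + \ell_E + \ell_\Phi, \\
\mathcal{K}^{B}_{\ell_B \ell_E}
  &\equiv \frac{C^{EE}_{\ell_E}}{2\,(2\ell_B+1)} \sum_{\ell_\Phi}
     \left|{}_2F_{\ell_B \ell_\Phi \ell_E}\right|^2
     C^{\Phi\Phi}_{\ell_\Phi} \left[1-(-1)^{L}\right],
  \qquad
  \tilde{C}^{BB}_{\ell_B} = \sum_{\ell_E} \mathcal{K}^{B}_{\ell_B \ell_E}.
\end{align}
```

Written this way, fixing $\ell_E$ and scanning $\ell_B$ gives the response to a single unlensed E mode, which is the reading of the 1D kernels used in the text.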

|

Fig. 2 1D lensing kernels. The lensed power for the T, E, and B spectra is computed assuming a delta-like spectrum with power in a single mode ℓ′ = 10, 50, 100, 500, 1000, 2000, 3000, 4000, 5000, and 6000 in the unlensed CMB spectra. The blue dashed line represents the reference lensed spectra as computed by CAMB. The sum of all single-mode contributions for ℓ′ ∈ [0,∞] would reproduce the lensed spectra. For the T and E cases, only the subdominant contribution of the convolution part is shown for visualization purposes and offset terms are ignored (see Sect. 3.2 and Eq. (20)). The comparison of 1D kernel shapes for T, E, and B for ℓ′ = 1000 is shown in the bottom-right panel: the peculiar shape of each type of kernel drives the locality and amplitude of the contribution to the lensed spectra. |

Indeed, owing to the flat plateau of the kernels at the low-ℓ end, in principle all high-ℓ unlensed modes contribute to the lensed power at the low-ℓ end. The magnitude of their contribution is modulated by the shape of the unlensed E spectrum and therefore eventually becomes negligible only because of the Silk damping, i.e., lack of power at small angular scales in the unlensed fields. Nevertheless, we can expect that nearly all the modes of the unlensed E spectrum up to the damping scale have to be included in the calculation to ensure high-precision recovery of the lensed B-mode spectrum at the low-ℓ end. Given some specific target precision, we could and should fine-tune the required E-spectrum bandwidth, and whatever value is selected here, the bandwidth for the potential field will have to be at least the same.

For high-ℓ modes of the lensed B-mode spectrum, the non-locality of the power transfer due to lensing is even more striking, as due to the low amplitudes of the E spectrum the local contributions are additionally suppressed, and the long power-law tails of the contributions from large and intermediate angular scales, $\ell_E \lesssim 1000$, are evidently dominant. Less evident is the fact that the E power from even smaller angular scales may also be relevant. The contributions from each of these modes may appear small (Fig. 2), but are potentially non-negligible due to the large number of those modes. A high-precision recovery of the high-ℓ tail of the lensed B-mode spectrum will therefore need a careful assessment of the importance of all these contributions. Nevertheless, a generic expectation would be that the bandwidth of the unlensed E-mode spectrum will have to be higher than the highest value of the lensed B-mode signal multipole for which high precision is required, and potentially higher than the scale of Silk damping. Because these very high multipoles of the lensed B spectrum are expected to have a significant contribution from relatively low multipoles of the unlensed E signal, i.e., for which $\ell_E \ll \ell_B$, given the triangular relations, Eq. (16), and the definition of the kernels, Eq. (13), we can conclude that the bandwidth of the potential field used in the simulations will have to be at least as large as $\ell_B$.

There are two main conclusions to be drawn here. First, it is clear that a high-fidelity simulation of the B-polarization power spectrum, even in a restricted range of angular scales, will require broad bandwidths, potentially all the way up to the scale of Silk damping, for both the unlensed E-mode polarization signal and the gravitational potential. However, these bandwidth values are not expected to depend very strongly on the maximal B-mode multipole that we want to recover, at least as long as the latter is not too high. Second, because the expected bandwidths are broad, it is important to optimize them to ensure efficiency of the numerical codes without affecting the precision of the results.

Thanks to the peaked character of the respective kernels, the lensed modes of the T and E spectra are typically dominated by a local contribution coming from the immediate vicinity of the mode. This in general permits setting the bandwidth for the potential shorter than the mode of the lensed spectrum to be computed. By contrast, the unlensed T and E spectra have to be known at least up to the multipole of interest of the lensed spectrum (X = T or E), augmented by the assumed bandwidth of the potential. These observations reflect the usual rule of thumb (e.g., Lewis 2005), indicating that lower bandwidth values can be used in these two cases for the same required accuracy.

|

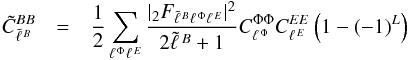

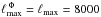

Fig. 3 Lensing kernels |

3.2. Accuracy

In this section we aim at turning the consideration presented above into more

quantitative prescriptions concerning the bandwidths of the input fields used in the

simulations. For this reason we introduce 2D kernels,

, defined as,

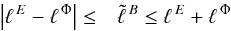

, defined as,  (14)These define for a

given value of ℓB a contribution of the

E power at

ℓ = ℓ E and the Φ power

at ℓ = ℓ Φ to the amplitude of the lensed

B-modes spectrum at that

(14)These define for a

given value of ℓB a contribution of the

E power at

ℓ = ℓ E and the Φ power

at ℓ = ℓ Φ to the amplitude of the lensed

B-modes spectrum at that  , which

can then be computed by summing over ℓ E and

ℓ Φ, i.e.,

, which

can then be computed by summing over ℓ E and

ℓ Φ, i.e.,  (15)The sum in this

equation involves in principle an infinite number of terms and therefore would have to be

truncated in any numerical work, either explicitly, e.g., by setting finite limits in the

formula above, or implicitly, e.g., by selecting the bandwidths, pixel sizes, etc., in the

pixel-domain codes. We therefore used these kernels to study the precision problems

involved in this type of calculations. As the expressions for the kernels are approximate,

so will be our conclusions. However, as our goal is to provide guidelines on how to select

the correct values for the simulations codes, this should not pose any problems. We will

return to this point later in this section.

(15)The sum in this

equation involves in principle an infinite number of terms and therefore would have to be

truncated in any numerical work, either explicitly, e.g., by setting finite limits in the

formula above, or implicitly, e.g., by selecting the bandwidths, pixel sizes, etc., in the

pixel-domain codes. We therefore used these kernels to study the precision problems

involved in this type of calculations. As the expressions for the kernels are approximate,

so will be our conclusions. However, as our goal is to provide guidelines on how to select

the correct values for the simulations codes, this should not pose any problems. We will

return to this point later in this section.

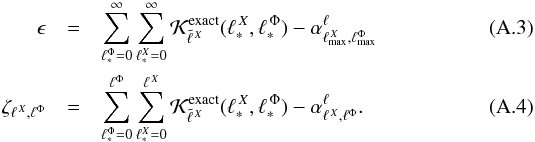

We show a sample of the kernels in Fig. 3. These are computed for selected values of the lensed multipole for which the approximations involved in their computation are expected to be valid. We note that all elements of the kernel vanish if the quantity L, defined in the previous section, is even, as do those for which the triangular relation

$|\ell_B - \ell_E| \le \ell_\Phi \le \ell_B + \ell_E$ (16)

is not satisfied. This last fact is a consequence of the Wigner 3-j symbols in the expressions for the spin-2 coupling kernel (Hu 2000). Within these restrictions it is apparent from Fig. 3 that each multipole $\ell_B$ of the lensed B-mode spectrum receives contributions from a wide range of harmonic modes of both the E and Φ spectra, extending to values of $\ell_E$ and $\ell_\Phi$ significantly higher than $\ell_B$ and roughly independent of the latter value, at least as long as it is not too high. For its higher values a non-negligible fraction of the contribution starts to come from progressively higher multipoles of both E and Φ. Clearly, these trends are consistent with what we have inferred earlier with the help of the 1D kernels.
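The two selection rules quoted above (odd L and the triangular relation) can be turned into a small filter that tells which kernel elements may be non-zero at all. This is a minimal sketch; it assumes that L is the sum of the three multipoles, as in Hu (2000), and the function names are hypothetical.

```python
def kernel_can_be_nonzero(ell_b: int, ell_e: int, ell_phi: int) -> bool:
    """Return True if the B-mode kernel element may be non-zero.

    Two conditions from the text: the triangular relation of Eq. (16)
    must hold, and L = ell_b + ell_e + ell_phi (assumed definition)
    must be odd.
    """
    triangle = abs(ell_b - ell_e) <= ell_phi <= ell_b + ell_e
    odd_sum = (ell_b + ell_e + ell_phi) % 2 == 1
    return triangle and odd_sum


def allowed_ell_phi(ell_b: int, ell_e: int) -> list:
    """List the ell_phi values that pass both selection rules."""
    lo, hi = abs(ell_b - ell_e), ell_b + ell_e
    return [l for l in range(lo, hi + 1)
            if kernel_can_be_nonzero(ell_b, ell_e, l)]
```

For a fixed pair of E and B multipoles only every second value of the potential multipole inside the triangle survives, which halves the number of terms in a direct evaluation of the double sum.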

As also observed earlier, we find the B-mode kernels qualitatively different from those computed for the lensed total intensity and E-mode polarization signals, Fig. 3. The latter are more localized in harmonic space, with the bulk of the power coming mainly from scales for which $\ell_{T,E}$ is relatively close to the considered lensed multipole.

We note that all the 2D kernels are positive5 and therefore including more terms in the sum, Eq. (15), will always improve the precision of the result. From the efficiency point of view one may want to include in the sum first the terms corresponding to the largest 2D kernel amplitudes, because they provide the largest contribution to the final lensed result, and then add those with progressively smaller kernel amplitudes until the required precision is reached. This approach would in principle ensure that the best accuracy is achieved with the smallest number of included terms, and may therefore look like a potentially attractive option from the perspective of optimizing the calculations. In practice, however, recurrence formulae are usually employed in the calculations, e.g., either those needed to compute spherical harmonics in the case of the pixel-domain codes or those needed to calculate the 3-j symbols in a direct application of Eq. (15). All the terms up to a given bandwidth are therefore at our disposal at any time, and it seems efficient and useful to capitalize on them by including all of them in the calculation. Consequently, we estimated what degree of precision can be achieved by including all the contributions up to some specific bandwidth values for the E and Φ multipoles.

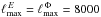

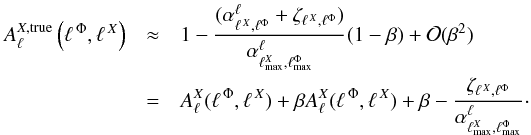

For the B-modes spectrum we therefore hereafter express the precision of

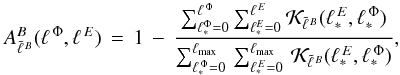

the calculations as  (17)where the sums in

the denominator should in principle extend over the infinite range of values of

ℓ, but for practical reasons are truncated to

ℓmax = 8000, which for the range of lensed multipoles of

interest in this work,

(17)where the sums in

the denominator should in principle extend over the infinite range of values of

ℓ, but for practical reasons are truncated to

ℓmax = 8000, which for the range of lensed multipoles of

interest in this work,  ,

should be sufficient.

,

should be sufficient.
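The accuracy function can be evaluated for all pairs of cutoffs at once with two cumulative sums. The sketch below assumes that the accuracy is the fraction of the reference double sum that is missed by the truncation, which matches the description in the text but not necessarily the exact form of Eq. (17); the kernel used here is a toy array, not a physical one.

```python
import numpy as np


def accuracy_map(kernel: np.ndarray) -> np.ndarray:
    """Fractional power missed for every pair of cutoffs.

    kernel[i, j] is a non-negative 2D kernel value for one lensed
    multipole, with i indexing ell_E and j indexing ell_Phi, tabulated
    up to the reference band limit (8000 in the text).  Entry [i, j] of
    the result is the missing fraction when the double sum is truncated
    at ell_E <= i and ell_Phi <= j.
    """
    partial = np.cumsum(np.cumsum(kernel, axis=0), axis=1)
    total = partial[-1, -1]
    return 1.0 - partial / total


# Toy kernel (illustrative only): smooth, positive, decaying.
ell = np.arange(64)
toy_kernel = np.exp(-ell[:, None] / 10.0) * np.exp(-ell[None, :] / 20.0)
acc = accuracy_map(toy_kernel)
```

Because the kernel is positive, the resulting map decreases monotonically along both axes, so contours of constant accuracy can be read off directly.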

|

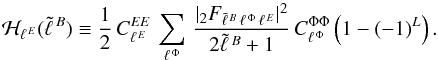

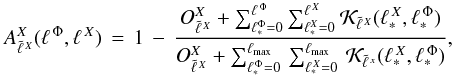

Fig. 4 Lensing kernels |

This expression can be generalized to all lensed CMB spectra, but in this case our model has to take into account that the main effect of lensing is to reshuffle the power of the signal and not to convert it into some other component. Therefore the total variance of the signal has to be conserved (e.g., Blanchard & Schneider 1987). In this case the lensed power spectra of X = T or E can be written as the sum of a convolution term and an offset term proportional to the unlensed spectrum, where the offset depends on a factor R and on an integer α that is different for each CMB spectrum:

- α = 4 for X = E
- α = 0 for X = T
- α = 2 for X = TE.
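As a reminder of where R and α enter, the corresponding all-sky expressions of Hu (2000) have the following structure. This is a sketch under stated assumptions: the symbol $\mathcal{K}^{X}$ for the 2D convolution kernel is introduced here for illustration only, and the exact form should be checked against Hu (2000).

```latex
\begin{align}
\tilde{C}^{X}_{\ell}
  &= \left[\,1 - \left(\ell^{2} + \ell - \alpha\right) R\,\right] C^{X}_{\ell}
     + \sum_{\ell',\,\ell_\Phi} \mathcal{K}^{X}_{\ell;\,\ell' \ell_\Phi}, \\
R &\equiv \frac{1}{2} \sum_{\ell_\Phi}
     \ell_\Phi \left(\ell_\Phi + 1\right) \frac{2\ell_\Phi + 1}{4\pi}\,
     C^{\Phi\Phi}_{\ell_\Phi}.
\end{align}
```

The first term is the offset term and the second the convolution term; with α = 0, 4, and 2 the prefactor reduces to the T, E, and TE cases listed above.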

We note that the factor R is a smooth function of the cutoff value of

the sum over ℓ Φ, which quickly becomes nearly constant for

,

Fig. 5. Hereafter, we therefore precompute it once

assuming

,

Fig. 5. Hereafter, we therefore precompute it once

assuming  and use it in all subsequent

calculations. The generalized expression for the accuracy function in Eq. (17) would then be

and use it in all subsequent

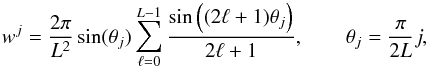

calculations. The generalized expression for the accuracy function in Eq. (17) would then be  (20)where for

shortness we have introduced

(20)where for

shortness we have introduced  We

note that for cosmological models of the current interest, the factor R

is typically found to be on the order of

We

note that for cosmological models of the current interest, the factor R

is typically found to be on the order of  and thus the term

and thus the term  is expected to be

negative for most of the values of

is expected to be

negative for most of the values of  in the

range of interest here, see Fig. 5.

in the

range of interest here, see Fig. 5.
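The saturation of R with the cutoff can be checked with a cumulative sum. The sketch below assumes the definition of R from Hu (2000), i.e., half the sum of ℓ(ℓ+1)(2ℓ+1)/(4π) times the potential power spectrum; the input spectrum is a toy shape with an arbitrary amplitude, not a cosmological model.

```python
import numpy as np


def offset_factor_R(cl_phi: np.ndarray) -> np.ndarray:
    """Cumulative R as a function of the cutoff in ell_Phi.

    cl_phi[l] is the lensing-potential power spectrum at multipole l.
    Uses R = 1/2 * sum_l l(l+1)(2l+1)/(4 pi) * C_l, the definition
    assumed here from Hu (2000).
    """
    ell = np.arange(cl_phi.size, dtype=float)
    terms = 0.5 * ell * (ell + 1.0) * (2.0 * ell + 1.0) / (4.0 * np.pi) * cl_phi
    return np.cumsum(terms)


# Toy spectrum (illustrative shape only, arbitrary amplitude).
ell = np.arange(8001, dtype=float)
cl_toy = np.zeros(ell.size)
cl_toy[2:] = 1e-7 / (ell[2:] ** 4 * (1.0 + (ell[2:] / 100.0) ** 2))
R_of_cutoff = offset_factor_R(cl_toy)
```

The last element of `R_of_cutoff` is the value one would precompute once and reuse, and comparing it with earlier elements shows how quickly the sum saturates for the chosen spectrum.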

In Fig. 3 black solid lines represent the expected error estimates, as expressed by the accuracy function, for a number of selected values ranging from 25% to 0.01%. We note that for the range shown, only the sub-percent values of the accuracy are likely to be somewhat biased due to the assumed cutoff in the denominator of Eqs. (17) or (20), an effect which is therefore largely irrelevant for our considerations here. The fact that our accuracy definition is based on an approximate formula is also not a problem because any potential (and small, Challinor & Lewis 2005) discrepancy would affect both the numerator and denominator of Eqs. (17) and (20) in the same way. It can therefore be shown that, to first order in the amplitude of the discrepancies, the precision of our accuracy criterion improves progressively when the estimated level of the accuracy tends to 0, and is degraded to the percent level only for large values of the latter, i.e., when it is well outside of the region of any interest for the high-precision simulations considered here (see Appendix A).

The differences in the shape of the lensing kernels result in differences in the accuracy contours for different lensed signals and their multipoles, as shown in Figs. 3 and 4. In particular, for lensed B-modes, the contribution of large-scale power of the CMB to the lensed signal is more significant. In spite of these differences, we find that the overall contours share a similar shape made of two lines, nearly aligned with the plot axes, which meet at a right angle. Consequently, if one of the two bandwidths is fixed, then the accuracy that can be reached by such a computation will be limited and, moreover, starting from some value of the other bandwidth, nearly independent of its value. This has two consequences. First, if the attainable precision is not satisfactory given our goals, it can be improved only by increasing the value of the first bandwidth appropriately. Second, the value of the second bandwidth can be tuned to ensure nearly the best possible accuracy, given the fixed value of the first bandwidth, while keeping it much lower than what the triangular relation, Eq. (16), would imply. This could lead to a tangible gain in terms of the numerical workload needed to reach some specific accuracy. Turning this reasoning around, we could think of optimizing both bandwidths to minimize the cost of the computation for a desired precision. From this perspective, taking the turnaround point of the contour for a given accuracy may look like the optimal choice. However, this choice would merely minimize the sum of both bandwidths (or some monotonic function of each of them) for the given accuracy, which may or may not be relevant for a specific case at hand. Instead, we may rather select the bandwidths to explicitly minimize the actual computational cost of whatever code we plan on using. We present specialized considerations of this sort in the next section.
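The selection described above, picking the pair of bandwidths that meets a target accuracy at the lowest cost, amounts to a constrained search over the accuracy map. The following is a minimal sketch; the accuracy map is built from a toy kernel and `toy_cost` is a hypothetical cost model, not the cost function of any particular code.

```python
import numpy as np


def cheapest_bandwidths(acc, target, cost):
    """Return (ell_x, ell_phi, cost) of the cheapest pair meeting the target.

    acc[i, j]  : accuracy (fractional error) for cutoffs ell_x = i, ell_phi = j
    target     : required accuracy, e.g. 1e-3
    cost(i, j) : user-supplied cost model (hypothetical here)
    """
    ok = np.argwhere(acc <= target)
    if ok.size == 0:
        raise ValueError("target accuracy not reachable on this grid")
    costs = np.array([cost(i, j) for i, j in ok])
    best = int(np.argmin(costs))
    i, j = ok[best]
    return int(i), int(j), float(costs[best])


# Toy accuracy map from a smooth, positive, decaying kernel.
ell = np.arange(64)
kernel = np.exp(-ell[:, None] / 10.0) * np.exp(-ell[None, :] / 20.0)
partial = np.cumsum(np.cumsum(kernel, axis=0), axis=1)
acc = 1.0 - partial / partial[-1, -1]


def toy_cost(ell_x, ell_phi):
    """Hypothetical cost model: cubic in each bandwidth."""
    return float(ell_x) ** 3 + float(ell_phi) ** 3


ell_x, ell_phi, c = cheapest_bandwidths(acc, 0.05, toy_cost)
```

Swapping `toy_cost` for a model of the actual code changes the optimum without changing the search, which is the point made in the text about the turnaround point of the contour not being optimal in general.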

On a more general level, we find that the standard rule of thumb, interpreting the effects of lensing as a convolution of the unlensed CMB signal with a relatively narrow, Δℓ ~ 500, convolution kernel due to the lensing potential, applies only for the T and E signals, and even in these cases only to low and intermediate values of the lensed multipole and only as long as a computation precision on the order of 1% is sufficient. For higher values of the lensed spectrum multipoles or higher levels of the desired accuracy in the case of T and E, and for all multipoles of the B-polarization signal, the required bandwidths of both the respective unlensed CMB signal and the gravitational potential are more similar, and indeed the latter bandwidth is often found to be broader.

We note that an analysis of this sort is somewhat more prone to problems in the case of the TE power spectrum, since the lensing kernels are not always positive because they contain the products of two different Wigner 3-j coefficients and TE power spectra, which may be non-positive, rendering the corresponding accuracy function not strictly monotonic. Hereafter, we excluded this spectrum from our analysis, noting that any band-limit prescriptions derived for T and E will also apply directly to TE.

4. Numerical analysis

In this section, we present results of simulations of lensed polarized maps of the CMB anisotropies and their spectra. We address two aspects here. First, we numerically study the self-consistency of the pixel-domain approach to simulating the lensing effect. Second, we demonstrate how the considerations from the previous section can be used to optimize the numerical calculations involved in these simulations.

We start this section by introducing a new implementation of the pixel-domain algorithm, which we refer to as lenS2HAT.

4.1. lenS2HAT

lenS2HAT is a simple implementation of the pixel-domain algorithm for simulating effects of lensing on the CMB anisotropies. The hallmark of the code is its algorithmic simplicity and robustness, with its performance rooted in efficient, memory-distributed parallelization. The code is therefore particularly well-adapted to massively parallel supercomputers. Its implementation follows the blueprint described in Lewis (2005) and summarized in Sect. 2.2.1. The main features of the code are listed below.

Grids.

The code can produce lensed maps in a number of pixelizations used in cosmological applications, but internally it uses grids based on the equidistant cylindrical projection (ECP) pixelization, where grid points, or pixel centers, are arranged in a number of equidistant iso-latitudinal rings, with points along each ring assumed to be equidistant. This pixelization supports a perfect quadrature for band-limited functions, which in the context of this work permits minimizing undesirable leakages that typically plague codes of this type. It can be shown (Driscoll & Healy 1994) that an ECP grid made of 2L iso-latitudinal rings, each with 2L points and a weight given by Eq. (21), is required and sufficient to ensure a perfect quadrature for any function with a band not larger than L.
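The quadrature property can be illustrated with the ring weights of Driscoll & Healy (1994). The sketch below is written in one common normalization, in which the weights sum to 2 (the integral of 1 over cos θ); the ring positions θ_j = πj/(2L) and this normalization are assumptions and may differ from the convention of Eq. (21).

```python
import numpy as np


def ecp_ring_weights(L: int) -> np.ndarray:
    """Colatitude quadrature weights for 2L equidistant rings.

    Rings sit at theta_j = pi * j / (2L), j = 0..2L-1 (an assumed
    convention).  The weights are normalized so that
    sum_j w_j f(cos theta_j) equals the integral of f over [-1, 1]
    for polynomials f of degree below 2L.
    """
    j = np.arange(2 * L)
    theta = np.pi * j / (2 * L)
    k = np.arange(L)
    series = np.sin(np.outer(theta, 2 * k + 1)) / (2 * k + 1)
    return (2.0 / L) * np.sin(theta) * series.sum(axis=1)


L = 8
w = ecp_ring_weights(L)
x = np.cos(np.pi * np.arange(2 * L) / (2 * L))  # cos(theta) on the rings
```

Multiplying these weights by the azimuthal spacing 2π/(2L) gives per-pixel weights for integrals over the full sphere.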

Interpolation.

For the interpolation, the code employs the nearest grid point (NGP) assignment, i.e., we assign to every deflected direction a value of the sky signal computed at the nearest center of a pixel of the assumed pixelization scheme, so that the respective sky signal values can be calculated at the speed of the fast spherical harmonic transforms. The NGP assignment is extremely quick and simple, but it requires the computations to be performed at a very high resolution to ensure that the results are reliable. The resolution required for this will in general depend on the intrinsic sky signal prior to the lensing procedure, as well as on the resolution of the final maps to be produced, as is discussed in Sect. 4.2. As discussed above, in a typical case these are expected to be very high and the computations involved in the problem may quickly become very expensive. Nevertheless, as we show in Sect. 4.8, the overall computational time in this case is only somewhat longer than that involved in some other interpolation schemes, while the memory requirement can be significantly lower. However, the major advantage of this scheme for the purpose of this work is its simplicity and in particular the fact that its precision is driven by a single parameter defining the grid resolution.
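The NGP assignment itself reduces to rounding the deflected angles to grid indices. The sketch below is for a scalar field on an equidistant grid; the grid convention (ring i at colatitude πi/n_rings, pixel k at longitude 2πk/n_phi) and the function names are assumptions made for illustration, and the rotation of the polarization components that a full code needs is not included.

```python
import numpy as np


def ngp_indices(theta, phi, n_rings, n_phi):
    """Nearest-grid-point lookup on an equidistant (ECP-like) grid.

    Assumed grid: ring i at colatitude pi * i / n_rings (i = 0..n_rings-1),
    pixel k at longitude 2 pi * k / n_phi (k = 0..n_phi-1).
    theta, phi are the deflected directions in radians.
    """
    i = np.rint(np.asarray(theta) * n_rings / np.pi).astype(int)
    i = np.clip(i, 0, n_rings - 1)
    k = np.rint(np.asarray(phi) * n_phi / (2.0 * np.pi)).astype(int) % n_phi
    return i, k


def lens_map_ngp(unlensed, theta_defl, phi_defl):
    """Assign to each deflected direction the value of the nearest pixel."""
    n_rings, n_phi = unlensed.shape
    i, k = ngp_indices(theta_defl, phi_defl, n_rings, n_phi)
    return unlensed[i, k]
```

The only accuracy control in such a scheme is the grid resolution, which is why the oversampled grid for the unlensed field matters so much in practice.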

|

Fig. 5 Example of the behavior of the offset term,

|

|

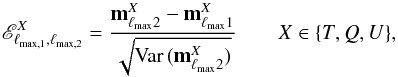

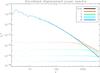

Fig. 6 Numerical cost gain by using the optimized set of ℓ Φ,ℓ E parameters compared with assuming ℓ Φ = ℓ E as a function of the accuracy of the computed spectrum for several values of the highest multipole of interest. An oversampling factor of κ = 8 was assumed to compute the cost function. |

|

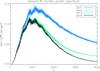

Fig. 7 Left column: examples of band-limit requirements for unlensed CMB and lensing potential as a function of obtainable accuracy, assuming they are equal, on several multipoles of lensed T, E and B spectra. Right column: summary of the optimized choice of bandwidth parameters for CMB (dot-dashed) and Φ field (dashed) compared with the cost function of the algorithm, as defined in Eq. (22). The diagonal bandwidth parameters are shown as a solid line for comparison. |

Spherical harmonic transforms.

To sidestep the problem of computing spherical harmonic transforms with a huge number of grid points and a very high band limit, lenS2HAT resorts to parallel computers and massively parallel numerical applications. With these becoming quickly more ubiquitous and affordable this solution is becoming progressively more attractive.

Parallelization of the fast spherical harmonic transforms is difficult due to the character of the input and output objects and the involved computations, where a calculation of each output datum requires knowledge of, and access to, all input data. This is clearly not straightforward to achieve without extensive data redundancy, as done e.g., in LensPix or parallel routines of HEALPix, or complex data exchanges between the CPUs involved in the computation. To avoid such problems in our implementation we used the publicly available scalable spherical harmonic transform (S2HAT) library6. This library provides a set of routines designed to perform harmonic analysis of arbitrary spin fields on the sphere on distributed memory architectures (though it has an efficient performance even when working in the serial case). It has a nearly perfect memory scalability obtained via a memory distribution of all main pixel and harmonic domain objects (i.e., maps and harmonic coefficients), and ensures very good load balance from the memory and calculation points of view. It is a very flexible tool that allows a simultaneous, multi-map analysis of any iso-latitude pixelization, symmetric with respect to the equator, with pixels equally distributed in the azimuthal angle, and provides support for a number of pixelization schemes, including the above mentioned ECP; see Szydlarski et al. (2011) for more details. The core of the library is written in F90 with a C interface and it uses the message passing interface (MPI) to institute distributed memory communication, which ensures its portability. The latest release of the library also includes routines suitable for general purpose graphic processing units (GPGPUs) coded in CUDA (Hupca et al. 2012; Szydlarski et al. 2011; Fabbian et al. 2012).

We emphasize that if a sufficient resolution can indeed be attained, the approach implemented here can produce results with essentially arbitrary precision. In the following we demonstrate that this is indeed the case for the described code.

4.2. Code parameters

4.2.1. Overview

In this section we describe how we fixed the essential parameters of the code. We first emphasize important relations between them. A detailed description of the procedures used to assign specific values to them is given in the following sections.

1. We start by defining a target in terms of the highest harmonic mode of the lensed signal that we aim to recover and its desired precision, ε. We then use the reasoning from Sect. 3.2 to translate this requirement into corresponding bandwidths, ℓ X and ℓ Φ, of the relevant unlensed signals, X and Φ. These ensure that the precision of all modes of the lensed signal up to the target multipole will be not lower than ε, barring any unaccounted-for numerical inaccuracies. The values of ℓ X and ℓ Φ are then used to estimate the bandwidth of the output, lensed map.

2. We then simulate two unlensed maps, mX and mΦ, of the signal X and the potential field, Φ, with their band limits set to ℓ X and ℓ Φ, as estimated earlier. The number of pixels of the displacement map, mΦ, is equal to that in the output map of the lensed signal and, for the ECP grid, is therefore set by the band limit of the lensed map. The number of pixels in the X-signal map, mX, then follows from this number and from κ, an overpixelization factor introduced in Sect. 2.2.1 and discussed in detail below, Sect. 4.2.4. For simplicity, we assume that the grid for which the unlensed field X is computed is a subgrid of the grid used for Φ.

3. The reassignment procedure (step 5 of the algorithm, Sect. 2.2.1) is then straightforwardly performed, leading to a map containing power potentially up to the estimated bandwidth of the lensed map, which may need to be filtered down to the target band limit, as initially required.

4.2.2. Intrinsic bandwidths

We employ the procedure described earlier in this work in Sect. 3.2 to set the intrinsic band limits. Instead of using generic

predictions, we aim at optimizing their values to ensure the lowest possible

computational overhead. To do so we need to provide a model of the cost of numerical

calculations involved in lenS2HAT. This is dominated by large spherical

harmonic transforms, one needed to calculate the map of Φ and the other to calculate

that of signal X. Given the parameters introduced above and because the

total cost of a spherical harmonic transform is proportional to

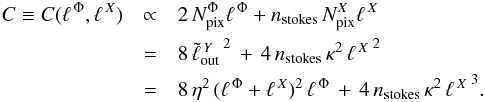

Npix ℓmax we therefore

obtain  (22)Here

nstokes stands for the number of signal maps, that we aim

to produce and is equal 1 – T-only, 2 – E and

B, or 3 – T, E, and

B, while for the field Φ the pre-factor is fixed and equal to 2,

reflecting the number of components of a vector field on the sphere. In deriving the

last equation above we have assumed that

(22)Here

nstokes stands for the number of signal maps, that we aim

to produce and is equal 1 – T-only, 2 – E and

B, or 3 – T, E, and

B, while for the field Φ the pre-factor is fixed and equal to 2,

reflecting the number of components of a vector field on the sphere. In deriving the

last equation above we have assumed that  . This is justified below,

as are the values that should be adopted for η and κ.

The expression above includes neither the cost of the interpolation nor reshuffling, but

because in both these cases the number of involved operations is proportional to

Npix, their cost is negligible with respect to that of the

transforms.

. This is justified below,

as are the values that should be adopted for η and κ.

The expression above includes neither the cost of the interpolation nor reshuffling, but

because in both these cases the number of involved operations is proportional to

Npix, their cost is negligible with respect to that of the

transforms.

Solving for the optimized values of the bandwidths, which simultaneously ensure the desired precision, ε, at a selected multipole, involves minimizing the cost function in Eq. (22) subject to the accuracy constraint given by Eqs. (17) and (20). This is implemented as follows. First, we define a grid of levels of the cost function and for each level calculate the best accuracy achievable on its corresponding contour. If this accuracy for some of the levels is close to our target, we find a corresponding pair of bandwidth values, (ℓ Φ, ℓ X), which then defines our optimized solution. If none of the accuracies is sufficiently close to the required precision, we take two levels for which the assigned accuracies bracket the target value and insert an intermediate level for which we calculate the corresponding best accuracy. We repeat this procedure until the best accuracy found for the newly added contour is sufficiently close to the target one. We then use it to find the pair of the optimized bandwidths as above. As mentioned before, in general, the two optimized bandwidth values will not be equal. This appears to be particularly the case when simulating the CMB spectra at very high multipoles and especially in the cases involving the B modes, which have broader kernels and are more demanding in terms of bandwidth requirements. The procedure yields a runtime gain of nearly 40% in the range of accuracy of interest for lensed B multipoles close to 4000, especially if high oversampling is required. For temperature and E-mode polarization, where less extra power is required in Φ to obtain an accurate result, the gain is nearly 20%–30%. Figure 7 shows the dependence of the optimized bandwidth parameters on the required accuracy imposed at different lensed multipoles of the T, E, and B spectra, in the right column, and contrasts them with the bandwidths obtained in the case when both of them are assumed to be equal. In Fig. 6 we show typical runtime gains as a function of the required accuracy.
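The level-refinement procedure described above can be illustrated with a minimal sketch. Everything specific in it is an assumption made for illustration only: `toy_cost` is a hypothetical stand-in for Eq. (22), `toy_error` is a hypothetical stand-in for the accuracy constraint of Eqs. (17) and (20), and the function names, grids, and tolerances are not taken from lenS2HAT. The sketch only shows the logic of evaluating the best accuracy on each cost contour and inserting intermediate levels between two bracketing ones.

```python
import math

def toy_cost(l_phi, l_x, n_stokes=2, kappa=2.0, eta=1.25):
    # Hypothetical cost model (NOT Eq. 22): two transforms on a grid whose
    # size is set by the assumed lensed band limit eta * (l_x + l_phi).
    l_out = eta * (l_x + l_phi)
    return (n_stokes * kappa**2 * l_x + 2.0 * l_phi) * l_out**2

def toy_error(l_phi, l_x, l_target=4000.0):
    # Hypothetical accuracy model: the error decays as either bandwidth
    # grows beyond the target multipole.
    return (math.exp(-(l_x - l_target) / 400.0)
            + math.exp(-(l_phi - l_target) / 800.0))

def l_phi_on_contour(level, l_x, lo=0.0, hi=1.0e6):
    # Solve toy_cost(l_phi, l_x) == level for l_phi by bisection;
    # the toy cost increases monotonically with l_phi.
    if toy_cost(lo, l_x) > level:
        return None  # this l_x alone already exceeds the cost level
    for _ in range(100):
        mid = 0.5 * (lo + hi)
        if toy_cost(mid, l_x) < level:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

def best_on_contour(level, l_x_grid):
    # Best (smallest) error reachable on the contour cost == level.
    best = None
    for l_x in l_x_grid:
        l_phi = l_phi_on_contour(level, l_x)
        if l_phi is None:
            continue
        candidate = (toy_error(l_phi, l_x), l_phi, l_x)
        if best is None or candidate[0] < best[0]:
            best = candidate
    if best is None:
        raise ValueError("cost level too low for the given l_x grid")
    return best

def optimize_bandwidths(eps, levels, l_x_grid, rel_tol=0.05, max_iter=60):
    # Each entry is (level, best_error, l_phi, l_x), sorted by level.
    results = [(lvl,) + best_on_contour(lvl, l_x_grid) for lvl in sorted(levels)]
    for _ in range(max_iter):
        for res in results:
            if abs(res[1] - eps) <= rel_tol * eps:
                return res
        # Insert an intermediate level between the two levels whose best
        # accuracies bracket the target.
        for i, (a, b) in enumerate(zip(results, results[1:])):
            if a[1] > eps > b[1]:
                mid = math.sqrt(a[0] * b[0])
                results.insert(i + 1, (mid,) + best_on_contour(mid, l_x_grid))
                break
        else:
            raise ValueError("target accuracy is not bracketed by the levels")
    raise RuntimeError("no level met the tolerance within max_iter refinements")

level, err, l_phi, l_x = optimize_bandwidths(
    1e-3, [1e12, 1e13, 1e14], range(4000, 12001, 50))
```

With these toy models the returned pair has unequal bandwidths, which mirrors the qualitative statement above; the actual values carry no physical meaning.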

We note here that, whether we choose to optimize the bandwidths or simply assume that they are equal, imposing a certain accuracy level at some multipole ensures that the same accuracy requirement will be fulfilled for all lower multipoles.

4.2.3. Lensed map band-limit

For the resolution of the final map, we note that in the absence of numerical effects, such as those due to the pixelization and interpolation, the lensing procedure would be described by Eq. (12) and the bandwidth of the lensed map would simply be given by ℓ X + ℓ Φ. In the presence of the numerical effects, the output map will have an effective bandwidth typically higher than that, which will lead to some power aliasing at the high-ℓ end if this theoretical band limit is imposed. We find this to be indeed the case in our numerical calculations. However, we also find that once the overpixelization factor is set correctly, the aliasing is localized to at most 25% of the bandwidth and is therefore easy to deal with in post-processing, e.g., step 6 of the algorithm outlined in Sect. 2.2.1. Consequently, we used η (ℓ X + ℓ Φ), with η = 1.25, as the band limit in our numerical simulations.
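As a small worked example of the band-limit choice, the sketch below computes the extended band limit and then filters harmonic coefficients back to a lower cutoff, in the spirit of the post-processing step mentioned above. It assumes that the band limit is η times the theoretical value ℓ X + ℓ Φ; the function names, the dictionary layout of the coefficients, and the numerical values are hypothetical and chosen only for illustration.

```python
import math

def lensed_band_limit(l_x, l_phi, eta=1.25):
    # Assumed relation: band limit = eta * (l_x + l_phi), rounded up.
    return math.ceil(eta * (l_x + l_phi))

def filter_to_band_limit(alm, l_cut):
    # Keep only coefficients with l <= l_cut; alm maps (l, m) -> complex.
    return {(l, m): a for (l, m), a in alm.items() if l <= l_cut}

# Illustrative bandwidths: the theoretical limit is 3000 + 5000 = 8000,
# so the map is analysed up to 1.25 * 8000 = 10000.
l_out = lensed_band_limit(3000, 5000)

# Toy coefficients at a few multipoles; only l <= 4000 survive the filter.
alm = {(2, 0): 1.0 + 0.0j, (4000, 1): 0.5j, (9000, 3): 0.1 + 0.0j}
filtered = filter_to_band_limit(alm, 4000)
```

Modes between the retained cutoff and the extended band limit are the ones that may carry aliased power, which is why they are discarded rather than used.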

It is important to emphasize that NGP is one of the sources of the aliasing because, like some of the other ad hoc procedures proposed in this context, it does not preserve the bandwidth of the interpolated function. Clearly, an interpolation that preserves the function bandwidth would be a significant improvement for this type of algorithm, provided that it comes without prohibitive numerical cost. We leave such an investigation to future work.
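The effect of NGP assignment and of the oversampling factor can be illustrated with a one-dimensional toy model. This is only a sketch under simplifying assumptions: a periodic band-limited signal on a regular grid replaces the sphere, and the signal, the displacement, and all names are hypothetical. The value at each displaced position is read from the nearest point of a grid that is κ times finer than the output grid and is compared with the exact value.

```python
import math

def signal(x, k_max=8):
    # Toy band-limited periodic signal; the phases k*k are arbitrary.
    return sum(math.cos(k * x + k * k) / k for k in range(1, k_max + 1))

def displacement(x, amplitude=0.05):
    # Toy smooth displacement field.
    return amplitude * math.sin(3.0 * x)

def ngp_rms_error(kappa, n_out=64):
    # The unlensed signal is tabulated on a grid kappa times finer than
    # the output grid of n_out points.
    n_fine = kappa * n_out
    h = 2.0 * math.pi / n_fine
    fine_map = [signal(j * h) for j in range(n_fine)]
    sq_sum = 0.0
    for i in range(n_out):
        x = 2.0 * math.pi * i / n_out
        x_displaced = x + displacement(x)
        j = round(x_displaced / h) % n_fine  # nearest grid point, periodic
        sq_sum += (fine_map[j] - signal(x_displaced)) ** 2
    return math.sqrt(sq_sum / n_out)

errors = {kappa: ngp_rms_error(kappa) for kappa in (1, 2, 4, 8)}
```

In this toy setting the rms error of the NGP lookup shrinks as κ grows, because the distance between a displaced position and its nearest grid point is bounded by half the fine-grid spacing.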

|

Fig. 8 Comparison between the E-mode power spectra of the input displacement field (black) and the displacement field after NGP assignment for several values of the oversampling factor κ. The input displacement is computed on an ECP grid with a number of pixels Npix = 16 384², while the discretized one is the result of an NGP assignment on a grid of κ²Npix pixels. With progressively higher resolution the extra power due to discretization becomes negligible and the two spectra become almost indistinguishable. The discretization-induced error power spectrum is shown as a dotted line for reference; both E and B modes of the discretization error have the same power spectra. |

4.2.4. Overpixelization factor