| Issue | A&A Volume 663, July 2022 |
|---|---|
| Article Number | A81 |
| Number of page(s) | 18 |
| Section | Extragalactic astronomy |
| DOI | https://doi.org/10.1051/0004-6361/202243354 |
| Published online | 14 July 2022 |

Evaluating the feasibility of interpretable machine learning for globular cluster detection

1 European Space Agency, European Space Research and Technology Centre, Advanced Concepts Team, Keplerlaan 1, 2201 AZ Noordwijk, The Netherlands

e-mail: dominik.dold@esa.int

2 European Space Agency, European Space Research and Technology Centre, Keplerlaan 1, 2201 AZ Noordwijk, The Netherlands

e-mail: katja.fahrion@esa.int

Received: 17 February 2022

Accepted: 31 March 2022

Extragalactic globular clusters (GCs) are important tracers of galaxy formation and evolution because their properties, luminosity functions, and radial distributions hold valuable information about the assembly history of their host galaxies. Obtaining GC catalogues from photometric data involves several steps which will likely become too time-consuming to perform on the large data volumes that are expected from upcoming wide-field imaging projects such as Euclid. In this work, we explore the feasibility of various machine learning methods to aid the search for GCs in extensive databases. We use archival Hubble Space Telescope data in the F475W and F850LP bands of 141 early-type galaxies in the Fornax and Virgo galaxy clusters. Using existing GC catalogues to label the data, we obtained an extensive data set of 84929 sources containing 18556 GCs and we trained several machine learning methods both on image and tabular data containing physically relevant features extracted from the images. We find that our evaluated machine learning models are capable of producing catalogues of a similar quality as the existing ones which were constructed from mixture modelling and structural fitting. The best performing methods, ensemble-based models such as random forests, and convolutional neural networks recover ∼90−94% of GCs while producing an acceptable amount of false detections (∼6−8%), with some falsely detected sources being identifiable as GCs which have not been labelled as such in the used catalogues. In the magnitude range 22 < m4_g ≤ 24.5 mag, 98−99% of GCs are recovered. We even find such high performance levels when training on Virgo and evaluating on Fornax data (and vice versa), illustrating that the models are transferable to environments with different conditions, such as different distances than in the used training data. 
Apart from performance metrics, we demonstrate how interpretable methods can be utilised to better understand model predictions, recovering that magnitudes, colours, and sizes are important properties for identifying GCs. Moreover, comparing colour distributions from our detected sources to the reference distributions from input catalogues finds great agreement and the mean colour is recovered even for systems with fewer than 20 GCs. These are encouraging results, indicating that similar methods trained on an informative sub-sample can be applied for creating GC catalogues for a large number of galaxies, with tools being available for increasing the transparency and reliability of said methods.

Key words: galaxies: star clusters: general / methods: data analysis / galaxies: formation / galaxies: evolution

© ESO 2022

1. Introduction

Globular clusters (GCs) are massive, dense star clusters that can be found in almost all galaxies (see the reviews by Brodie & Strader 2006; Forbes et al. 2018a; Beasley 2020). With typical masses between 10⁴ and 10⁶ M⊙, and compact sizes (effective radii of ∼3−10 pc), GCs are extremely dense stellar systems that are detectable in distant galaxies.

In the last decades, photometric surveys have produced extensive catalogues of GC candidates (e.g. Jordán et al. 2007a, 2015; Cantiello et al. 2020). These catalogues typically report coordinates, luminosities, and colours in at least two bands for individual GCs and enable exploration of a large variety of science cases related to the host galaxies and their assembly. For example, the luminosity or mass functions of GC systems have long been used as distance indicators (e.g. Richtler 2003; Lomelí-Núñez et al. 2022). Additionally, it has been established that the number of GCs is tightly correlated with the total dark matter halo mass of a galaxy (Harris et al. 2017a; Forbes et al. 2018b), which is often interpreted as a consequence of hierarchical merging (e.g. Valenzuela et al. 2021). Similarly, the radial profiles of GC systems correlate with dark matter halo properties (e.g. Hudson & Robison 2018; Reina-Campos et al. 2021; De Bórtoli et al. 2022). Moreover, GC colours are regarded as valuable indicators of a galaxy’s merger history. Many galaxies exhibit a bimodal colour distribution with a blue and a red population (e.g. Brodie & Strader 2006), which is often interpreted as stemming from two populations with different metallicities and different origins: a metal-poor, blue population that was born in dwarf galaxies that have since been accreted and a red, metal-rich population that formed in situ in the host galaxy (e.g. Ashman & Zepf 1992; Côté et al. 1998; Beasley et al. 2002). Although this strict division into accreted and in situ formed GCs might be too simplistic (e.g. Forbes & Remus 2018; Fahrion et al. 2020a), GC colours are nonetheless valuable tracers of the underlying host metallicity (e.g. Geisler et al. 1996; Forbes & Forte 2001; Fahrion et al. 2020b).

Due to their wide applicability, obtaining GC catalogues remains a relevant task for ongoing studies covering an ever-growing number of galaxies. However, the techniques to obtain these catalogues have remained the same for many years and usually involve multiple steps, including source detection, cleaning based on photometric properties, and magnitude and colour cuts (e.g. Jordán et al. 2007a; Harris et al. 2017b; Cantiello et al. 2020; Lomelí-Núñez et al. 2022). Works based on ground-based photometry usually rely on a large number of photometric filters as most extragalactic GCs appear unresolved in seeing-limited data, requiring that the colour-colour space is explored to remove contaminants such as background galaxies and foreground Milky-Way stars (e.g. D’Abrusco et al. 2016; Cantiello et al. 2018). In contrast, photometric surveys with the Hubble Space Telescope (HST) have exploited the fact that GCs are marginally resolved in HST data out to many tens of megaparsecs and thus require fewer photometric bands (Jordán et al. 2007a, 2015). However, in these studies the individual sources need to be modelled to infer their sizes, which can be time-consuming for a large number of initial detections.

For upcoming space-based wide-field survey facilities such as Euclid (Laureijs et al. 2011) or the Nancy Grace Roman Space Telescope (Spergel et al. 2015), such classical techniques might not be the best choice due to the amount of data that has to be analysed. For this reason, it is necessary to devise and test new methods of obtaining GC catalogues. In this paper, we apply and test a range of machine learning techniques that are capable of handling large data sets on galaxies with existing photometric GC catalogues to explore the performance with regard to classical methods.

In recent years, many authors have approached astrophysical problems with machine learning, showing the general interest in such techniques for today’s and future applications. To name just a few recent examples, machine learning has been used for classification of supernovae (e.g. Fremling et al. 2021), inference of galaxy halo properties (von Marttens et al. 2021; Villanueva-Domingo et al. 2021), cosmological predictions (Li et al. 2021; Villaescusa-Navarro et al. 2021), or galaxy identification and classification (e.g. Müller & Schnider 2021; Tarsitano et al. 2022; Ćiprijanović et al. 2021).

Additionally, machine learning has been applied for star cluster science, both for individual galaxies and with data of larger surveys. Bialopetravičius et al. (2019) tested convolutional neural networks (CNNs) on mock images of artificial resolved clusters and Wang et al. (2021) applied CNNs to identify star cluster candidates in M 31. Additionally, Bialopetravičius & Narbutis (2020a,b) explored CNNs to identify and study young star clusters in M 83 from multi-band HST observations. Wei et al. (2020) and Whitmore et al. (2021) demonstrated that deep learning can be used to identify young star clusters in HST data of the Physics at High Angular Resolution in Nearby GalaxieS (PHANGS)-HST survey, while Pérez et al. (2021) presented a machine learning pipeline based on CNNs to identify (young) star clusters in HST data from the Legacy Extra Galactic Ultraviolet Survey (LEGUS). These studies apply various machine learning methods with success and typically report ≳90% success fractions for identifying bright, symmetric star clusters. While the aforementioned works focused on young or resolved star clusters, identification of (old) GCs with machine learning methods has been recently studied by Mohammadi et al. (2022). In this work, random forest (RF) and Localized Generalized Matrix Learning Vector Quantization (LGMLVQ) classifiers are used to identify ∼500 GCs and ultra-compact dwarf galaxies in multi-wavelength ground-based photometric data of ∼7700 sources in the Fornax galaxy cluster with promising results (see also Saifollahi et al. 2021).

In this paper, we aim to extend previous work on star cluster identification by applying and testing the performance of a range of different machine learning methods on space-based HST data of non-star forming galaxies for which GC catalogues exist. We assemble a large data set of ∼85 000 sources containing ∼18 500 known GCs, which was obtained by combining data from two different environments, the Virgo and the Fornax galaxy clusters. The machine learning methods are thoroughly investigated on different data representations, such as image data and tabular features extracted from images, and we test different scenarios, for example training and testing on sources of different galaxy clusters. We further explore methods from the field of explainable artificial intelligence, identifying that our models use similar indicators for detecting GCs as commonly employed in traditional methods. The obtained results demonstrate that modern machine learning techniques are an intriguing tool for generating GC catalogues from upcoming space-based wide-field surveys.

In the following section, the data are explained in more detail. Section 3 gives an overview of the used machine learning methods for tabular and image data. The results are presented in Sect. 4 and discussed in Sect. 5. We conclude in Sect. 7.

2. Data

We use the data from the Advanced Camera for Surveys (ACS) Virgo Cluster Survey (ACSVCS; Côté et al. 2004) and ACS Fornax Cluster Survey (ACSFCS; Jordán et al. 2007b), in which GCs are marginally resolved due to the close distances of the Virgo and Fornax galaxy clusters (16.5 Mpc and 20 Mpc, respectively; Mei et al. 2007; Blakeslee et al. 2009). GC catalogues were presented in Jordán et al. (2007a, 2015). Both the ACSVCS and the ACSFCS are surveys based on HST ACS observations in the F475W (∼g) and F850LP (∼z) bands of 100 massive early-type galaxies in Virgo and 43 galaxies in the Fornax galaxy cluster, respectively. We downloaded all available ACS data from the Hubble Legacy Archive¹, consisting of 98 galaxies in Virgo and all 43 galaxies in Fornax. For VCC 1049 and VCC 1261 no data were available. In the ACSVCS, the data have exposure times of 750 and 1210 seconds in the g- and z-bandpasses, respectively, and 760 (g-band) and 1220 (z-band) seconds in the ACSFCS.

2.1. Existing globular cluster catalogues

Extensive GC catalogues based on the ACS data exist for both galaxy clusters, presented in Jordán et al. (2009) for Virgo and Jordán et al. (2015) for Fornax. These catalogues were constructed using the methods detailed in Jordán et al. (2009) and are based on the data reduction procedures and initial selections presented in Jordán et al. (2004) for the ACSVCS and in Jordán et al. (2007b) for the ACSFCS.

Here, we summarise the most important steps. As described in Jordán et al. (2004), initial source catalogues were derived with SExtractor (Bertin & Arnouts 1996) after a surface brightness model of each galaxy was subtracted from the images. Then, initial cuts on the source magnitudes and shape were made, which excluded overly bright and elongated sources. Additionally, sources found within 0.5″ of the galaxy centres were omitted to not include nuclear star clusters. Then, all sources were fitted with point-spread function-convolved King models (King 1966) to obtain structural and photometric parameters such as magnitudes, half-light radii, and the concentration c.

This initial sample still contained contaminants from faint foreground stars and background galaxies. These were further reduced with a broad colour cut of 0.5 < (g − z) < 1.9 mag and by removing extended as well as unresolved sources, keeping only sources with half-light radii of 0.75 pc < r_h < 10 pc. Then, model-based clustering was used to divide the remaining sources into two components. While the contaminant component is assumed to be a fixed component based on a control field, the GC component model considers source magnitudes and sizes. Based on these parameters, the probability p_GC that a source is a GC can be calculated. While the ACSVCS GC catalogue presented in Jordán et al. (2009) only contains sources with p_GC ≥ 0.5, a slightly different selection was made for the ACSFCS GC catalogue (Jordán et al. 2015), which also contains sources with p_GC < 0.5.

In total, the ACSVCS catalogue contains 12763 GC candidates and the ACSFCS catalogue contains 9136 sources, 6275 of them with p_GC ≥ 0.5. In the following, we only use these 6275 sources as GC candidates in Fornax. In summary, this thorough work of detecting, cleaning, and modelling the sources in the ACSVCS and ACSFCS projects has produced an extensive data set of 19038 GCs.

2.2. Data preparation

While the many steps that were taken to obtain the ACSVCS and ACSFCS GC catalogues have produced a rather clean set of GCs, in this work we wish to explore how well different machine learning methods can reproduce these GC catalogues starting from an unprocessed initial data set that was produced by simple source detection methods. For this reason, we derived our own source catalogues from the archival ACS data and only use the GC catalogues to label some of the detected sources as GCs. The data that are fed to the machine learning methods have not gone through any colour or magnitude cuts and also contain contaminants of many kinds, such as foreground stars, background galaxies, and image artefacts.

To obtain our data, we took the following steps for each of the ACSFCS and ACSVCS galaxies. First, a median filter was used to remove the smooth galaxy background using a 7 × 7 pixel filter in both bandpasses. Testing with different filter sizes gives similar results. Then, sources were detected in the residual images using the segmentation routines of Photutils (Bradley et al. 2020). We used a 3 × 3 pixel Gaussian kernel for filtering and selected all sources that have at least two pixels which are 3σ above the local median background. Standard deblending parameters with 32 levels were chosen. From this initial source list, we only kept those sources that were detected in both filters and that are at least five pixels from the edges of the detector gap that bisects the two ACS charge-coupled devices (CCDs) of the wide field channel (WFC).

Then this source list is matched with the ACSFCS and ACSVCS catalogues, labelling matched sources as GCs. In a last step, simple photometry is performed to get a measurement of the aperture magnitudes. We measured the magnitudes for each source using three, four, and five pixel apertures and corrected those for foreground extinction using the dust maps from Schlegel et al. (1998). Additionally, we measured the concentration index CI of these apertures as the magnitude difference between each three, four, or five pixel aperture and a one pixel aperture. In the following, we use only these aperture magnitudes and do not apply any aperture correction as those would need additional information on the type of object that is observed. However, such corrections will need to be made eventually if the data is finally used for GC science (e.g. Jordán et al. 2015). The extracted features are described in Appendix A.
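The photometric features described above can be illustrated with a minimal sketch. The zero point, extinction value, and fluxes below are placeholders rather than ACS calibration values, and the sign convention of the concentration index (one pixel magnitude minus larger-aperture magnitude) is an assumption, since the text only states that it is a magnitude difference.

```python
import math

def aperture_magnitude(flux, zero_point, extinction=0.0):
    """Convert an aperture flux into an extinction-corrected magnitude."""
    if flux <= 0:
        return float("nan")  # no meaningful magnitude for non-positive flux
    return -2.5 * math.log10(flux) + zero_point - extinction

def concentration_index(mag_1px, mag_kpx):
    """Magnitude difference between a 1 px and a k px aperture (assumed sign)."""
    return mag_1px - mag_kpx

# Hypothetical fluxes (arbitrary units) in 1, 3, 4, and 5 pixel apertures.
fluxes = {1: 40.0, 3: 160.0, 4: 200.0, 5: 220.0}
ZERO_POINT = 26.0  # placeholder, not the ACS zero point
A_G = 0.05         # placeholder foreground extinction in mag

mags = {r: aperture_magnitude(f, ZERO_POINT, A_G) for r, f in fluxes.items()}
ci = {r: concentration_index(mags[1], mags[r]) for r in (3, 4, 5)}
```

With this convention, a more extended source, which gains more flux in the larger aperture, has a larger concentration index than a compact one.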

Unfortunately, not all sources from the ACSVCS and ACSFCS catalogues were detected in our simple pipeline. In Virgo, 304 (2.4%) of 12 699 catalogue GCs² could not be found, 69 of which are fainter than g = 26 mag. Often, those faint sources were only found in one filter. In the Fornax galaxies, 114 (1.8%) of 6275 GCs were not found.

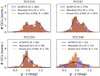

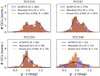

In total, our source lists contain 84 929 sources, 63 162 in Virgo and 21 767 in Fornax. Out of those, 18 556 are matched to GC candidates in the ACSFCS and ACSVCS catalogues, 12 395 in Virgo and 6161 in Fornax. Consequently, 21.8% of our sources are matched to catalogue sources and are labelled as GCs. The remaining sources are labelled as non-GCs. Figure 1 illustrates this sample in colour-magnitude diagrams. The data set statistics are summarised in Table 1.
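As a quick consistency check, the counts quoted in this section can be recomputed from each other. The sketch below only uses numbers stated in the text; the variable names are illustrative.

```python
# Counts quoted in the text for each galaxy cluster.
virgo = {"sources": 63162, "catalogue_gcs": 12699, "missed": 304}
fornax = {"sources": 21767, "catalogue_gcs": 6275, "missed": 114}

# Matched GCs are the catalogue GCs minus those our pipeline did not detect.
matched_virgo = virgo["catalogue_gcs"] - virgo["missed"]
matched_fornax = fornax["catalogue_gcs"] - fornax["missed"]

total_sources = virgo["sources"] + fornax["sources"]
total_gcs = matched_virgo + matched_fornax
gc_fraction = total_gcs / total_sources

missed_fraction_virgo = virgo["missed"] / virgo["catalogue_gcs"]
missed_fraction_fornax = fornax["missed"] / fornax["catalogue_gcs"]
```

The recomputed values reproduce the 12 395 and 6161 matched GCs, the 84 929 sources in total, and the GC fraction of 21.8%.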

Fig. 1. Colour-magnitude diagrams for the different samples based on four pixel aperture magnitudes that are corrected for foreground extinction. We differentiate between GCs as the ones that were matched to the ACSVCS and ACSFCS catalogues and non-GCs.

Table 1. Statistics of the assembled data sets.

As visible from Fig. 1, the colour-magnitude distributions for the sources matched with catalogue GCs are very similar between Virgo and Fornax, but the contaminant distributions vary slightly. One reason for this is the larger distance to Fornax (20 Mpc instead of 16.5 Mpc). In combination with the almost equal exposure time, this creates a cut-off of detected sources at brighter magnitudes than in Virgo. Additionally, the non-GC sources in Virgo show a small overdensity at m4_g − m4_z ∼ 0.2 mag. Inspecting other parameters and the image cut-outs of these sources finds them to be dominated by compact sources with low concentration indices (CI ∼ 1). We therefore believe that these sources are foreground stars, which are not visible in the Fornax sample because of their overall low numbers (< 0.5% of Virgo sources).

In addition to tabular feature data, we want to test machine learning methods on image data of the sources. For this reason, we created 20 × 20 pixel cut-outs in the g- and z-bands of all detected sources from the residual images which were previously used for source detection. The source centroid was placed into the centre of each image. Figure 2 shows four examples of such cut-outs. This illustrates that while some elongated sources (like in the top right panel) can be excluded as GCs easily by eye, the case becomes more difficult for fainter sources.

Fig. 2. Examples of image data of GCs (left) and non-GCs (right). The sources have different magnitudes and the plotted flux scale differs from source to source.

3. Methods

To get a thorough understanding of our data set and the problem of extracting GCs from photometric surveys, we evaluate the capability of several machine learning models with different degrees of complexity. We apply such models on tabular features extracted from the images that are commonly used to identify GCs as well as the images directly. In the following, we briefly review the used methods as well as the evaluation scenarios and metrics used throughout the remainder of this document. A detailed mathematical description of the considered models can be found in Appendix B.

3.1. Notation

We employ a uniform notation to describe both the data and the models. By $x^{(i)} \in \mathbb{R}^N$, we denote the tabular feature vector of the $i$th source in our training data set, while $y^{(i)} \in \{0, 1\}$ is the corresponding label with $y^{(i)} = 1$ if source $i$ is a GC and $y^{(i)} = 0$ otherwise. Feature vectors in general are denoted by $x \in \mathbb{R}^N$.

Machine learning models are described as functions $p : \mathbb{R}^N \to \mathbb{R}$ that map from the feature space into the real space, from which a class label $y_m(x)$ is inferred via thresholding

$$y_m(x) = \begin{cases} 1 & \text{if } p(x) > \theta, \\ 0 & \text{otherwise,} \end{cases}$$

with threshold $\theta$ (e.g. $\theta = 0.5$). For models that work directly with the image data, $x^{(i)} \in \mathbb{R}^{d \times M \times M}$ denotes instead the $M \times M$ pixel-sized image with $d$ filter bands of source $i$ in our training data set, while $y^{(i)} \in \{0, 1\}$ is again the corresponding label. In this case, $p : \mathbb{R}^{d \times M \times M} \to \mathbb{R}$ maps from the image space into the real space and $x \in \mathbb{R}^{d \times M \times M}$.
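The thresholding step can be sketched in a few lines. The scores are made-up model outputs, and treating a score exactly equal to the threshold as a non-GC is an assumption about the boundary case.

```python
def classify(score, theta=0.5):
    """Return 1 (GC) if the model output exceeds the threshold, else 0."""
    return 1 if score > theta else 0

# Hypothetical model outputs p(x) for four sources.
scores = [0.92, 0.50, 0.13, 0.67]

labels_default = [classify(s) for s in scores]
labels_strict = [classify(s, theta=0.8) for s in scores]
```

Raising the threshold from 0.5 to 0.8 removes the source with score 0.67 from the list of predicted GCs.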

3.2. GC detection using tabular data

For the task of detecting GCs using tabular feature data, we investigate logistic regression, support vector machines, neural networks, tree-based algorithms and nearest neighbour classifiers.

3.2.1. Logistic regression

Logistic regression is a linear classifier, which means that the decision rule for identifying GCs depends linearly on the input x. The decision rule of logistic regression can be visualised as a hyperplane with normal vector W (weights) and offset b (biases) in the input space that separates the two classes. This yields a high degree of interpretability, since the learned weights W directly tell us which features in x are important for classifying a source as a GC. If such a separation via a hyperplane is not possible, the data is not linearly separable and more expressive models like support vector machines, neural networks, and trees are required to achieve better results.
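A minimal sketch of the logistic regression decision rule, assuming the usual sigmoid output; the weights, bias, and feature values are hypothetical and not fitted to any data.

```python
import math

def sigmoid(z):
    """Logistic function mapping a real number to the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def logistic_regression(x, weights, bias):
    """Probability-like output p(x) = sigmoid(W . x + b)."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return sigmoid(z)

# Hypothetical weights: the third feature has zero weight and is ignored.
W = [2.0, -1.0, 0.0]
b = -0.5

p_on_plane = logistic_regression([0.25, 0.0, 3.0], W, b)   # W . x + b = 0
p_gc_side = logistic_regression([1.0, 0.0, 3.0], W, b)     # W . x + b = 1.5
p_ignored = logistic_regression([1.0, 0.0, -7.0], W, b)    # same, feature 3 changed
```

Sources on the hyperplane receive an output of exactly 0.5, and changing a feature with zero weight leaves the output untouched, which is what makes the learned weights directly interpretable.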

3.2.2. Support vector machines

Similar to logistic regression, linear support vector machines (Boser et al. 1992) use a hyperplane W to distinguish classes, but the hyperplane is chosen such that the distance to data points close to it is maximised. To deal with non-linearly separable data, the kernel trick (Aizerman 1964) is commonly applied: instead of using the features x directly, new features are obtained by applying a kernel function κ(⋅, ⋅) to x and the training samples x(i), κ(x(i), x). A prominent choice for the kernel is the Gaussian radial basis function κ(x(i), x) = exp(−γ ∥x(i) − x∥²) with hyperparameter γ ∈ ℝ+. This is equivalent to mapping the features x into a high-dimensional vector space where a linear separation of classes is easier.
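The Gaussian radial basis function can be written down directly. The sketch below is illustrative only; the helper `kernel_features` is a hypothetical name for the step of comparing a source with every training sample.

```python
import math

def rbf_kernel(x_i, x, gamma):
    """Gaussian radial basis function exp(-gamma * ||x_i - x||^2)."""
    squared_distance = sum((a - b) ** 2 for a, b in zip(x_i, x))
    return math.exp(-gamma * squared_distance)

def kernel_features(x, training_samples, gamma):
    """New features for x: its kernel value with each training sample."""
    return [rbf_kernel(x_i, x, gamma) for x_i in training_samples]

# Hypothetical two-dimensional training samples and query point.
training = [[0.0, 0.0], [3.0, 4.0]]
features = kernel_features([0.0, 0.0], training, gamma=0.1)
```

The kernel value is one for identical vectors and decays with squared distance at a rate set by γ.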

3.2.3. Neural networks

Neural networks extend the previous methodologies to deep architectures, where an input is processed by sequentially applying the following operations multiple times: (i) linearly map the input (as in logistic regression), (ii) apply a non-linear activation function ϕ, and (iii) use the output as the new input. By training this hierarchy of functional modules (also called layers) using the error backpropagation algorithm (Linnainmaa 1970; Werbos 1982; Rumelhart et al. 1986), neural networks find a suitable non-linear transformation F(x) of the input features to linearly separate the underlying classes. In fact, most often the last layer of a neural network is logistic regression applied on the transformed feature vectors F(x) (see Appendix B.1.3). In the special case of linear activation functions (ϕ(x) = x), neural networks become identical to logistic regression.
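The statement about linear activation functions can be checked numerically: two stacked layers with ϕ(x) = x collapse into a single linear map, so that applying the final logistic output would give plain logistic regression. The weights below are arbitrary illustrative values, and the sketch only covers the layers before the final sigmoid.

```python
def matvec(matrix, vector):
    """Matrix-vector product for nested lists."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]

def matmul(a, b):
    """Matrix-matrix product for nested lists."""
    columns = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in columns] for row in a]

def layer(x, weights, bias, phi):
    """One layer: linear map followed by the activation function phi."""
    return [phi(z + b) for z, b in zip(matvec(weights, x), bias)]

def identity(z):
    return z

def relu(z):
    return max(0.0, z)

W1, b1 = [[1.0, -2.0], [0.5, 1.0]], [0.0, -1.0]
W2, b2 = [[2.0, 1.0]], [0.5]
x = [1.0, 2.0]

# Two layers with linear activation ...
deep_linear = layer(layer(x, W1, b1, identity), W2, b2, identity)
# ... equal one layer with weights W2 W1 and bias W2 b1 + b2.
W_eff = matmul(W2, W1)
b_eff = [z + b for z, b in zip(matvec(W2, b1), b2)]
single_linear = layer(x, W_eff, b_eff, identity)

# A non-linear activation breaks this equivalence.
deep_relu = layer(layer(x, W1, b1, relu), W2, b2, identity)
```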

Although deep neural networks often reach outstanding performance levels (LeCun et al. 2015), they suffer from a lack of interpretability (Arrieta et al. 2020; Linardatos et al. 2021). Several approaches have been proposed to explain the output of a neural network retrospectively. Model-agnostic methods, such as LIME (Ribeiro et al. 2016), train linear surrogate models that behave like the parent model locally (around a single feature vector) to generate explanations. Alternatively, one can identify which changes to the input feature vector x most strongly affect the classification output of the model. This information can, for instance, be obtained from the model gradient ∇xp(x) (Sundararajan et al. 2017; Montavon et al. 2017). In addition, neural network architectures like TabNet³ (Arik & Pfister 2021) and BagNet (Brendel & Bethge 2019) have recently been proposed that are more interpretable by design and provide insight into which features the network used for its prediction. To further increase the transparency of neural networks, techniques like Monte Carlo dropout (Gal & Ghahramani 2016) can be used to provide both a class prediction and a sensible estimate of the neural network’s uncertainty (see Appendices B.1.3 and D).

3.2.4. Decision trees and forests

A decision tree (Breiman et al. 1984) is a tree-structured series of questions that are used to figure out which class an input x belongs to. It is created by repeatedly splitting the training data into subsets (branches) by finding appropriate conditions for elements of the feature vector x. The reason why a source is assigned a certain class is given by the decision path in the tree and is hence completely comprehensible.

However, decision trees are prone to overfitting the training data. This downside can be alleviated by building ensembles of decision trees, so-called RFs (Breiman 2001), where each tree is grown from a random sub-selection of features, that is, elements in x on which conditions can be applied (called feature bagging) and training data samples (called bootstrapping). In this case, the final classification is given by the majority vote of all trees in the ensemble. In a RF, all trees are created independently. Instead, one can use a technique called gradient boosting, where trees are sequentially added to the ensemble and subsequent trees learn to correct the mistakes of their predecessors.
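The ensemble idea can be sketched with a toy forest of decision stumps (trees of depth one). This is a simplified illustration, not the Scikit-Learn implementation: each stump sees one bootstrap sample and one randomly chosen feature, and the toy data, seed, and function names are all assumptions.

```python
import random
from collections import Counter

def fit_stump(X, y, feature):
    """Depth-1 tree: best threshold and direction on a single feature."""
    best = None
    for t in sorted({row[feature] for row in X}):
        for sign in (1, -1):
            preds = [1 if sign * (row[feature] - t) >= 0 else 0 for row in X]
            errors = sum(p != label for p, label in zip(preds, y))
            if best is None or errors < best[0]:
                best = (errors, feature, t, sign)
    return best[1:]

def predict_stump(stump, x):
    feature, t, sign = stump
    return 1 if sign * (x[feature] - t) >= 0 else 0

def fit_forest(X, y, n_trees, seed=0):
    rng = random.Random(seed)
    forest = []
    for _ in range(n_trees):
        rows = [rng.randrange(len(X)) for _ in X]  # bootstrapping
        feature = rng.randrange(len(X[0]))         # feature bagging
        forest.append(fit_stump([X[i] for i in rows], [y[i] for i in rows], feature))
    return forest

def predict_forest(forest, x):
    """Majority vote over all trees in the ensemble."""
    votes = Counter(predict_stump(stump, x) for stump in forest)
    return votes.most_common(1)[0][0]

# Toy data: class 1 has larger values in both (hypothetical) features.
X = [[float(i), float(2 * i)] for i in range(20)]
y = [0] * 10 + [1] * 10
forest = fit_forest(X, y, n_trees=15)
```

An odd number of trees avoids ties in the vote. Gradient boosting differs in that trees are added sequentially rather than independently, which this sketch does not cover.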

Both for RFs and boosted trees, the decision pathways can be investigated – although less conveniently than for a single decision tree. A more global view of the inner workings of RFs and boosted trees can be obtained by looking at which features have been most important for building the decision trees of the ensemble. Moreover, by using model-agnostic methods like LIME, explanations for individual classifications can be generated as well.

3.2.5. k nearest neighbours

A k nearest neighbour (kNN) classifier searches for the k sources in the training data that are closest, for example, with respect to the Euclidean norm, to the source we want to classify and assigns it the label that the majority of the k nearest neighbours have. Thus, different from the previous methods, no parameters or decision rules are learned from the training data, but the training data itself is used during inference time⁴. One benefit of this method is that we can directly look at the training samples (and their distances to x) that were used to make the class prediction for x. However, a downside is that the inference time grows with the training set size, making it often slower than alternative methods like neural networks and RFs.
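A brute-force kNN classifier fits in a few lines. The sketch below uses the Euclidean norm and returns the neighbours alongside the label, so that the training samples behind a prediction can be inspected; the toy data are hypothetical.

```python
import math
from collections import Counter

def knn_predict(x, train_X, train_y, k=3):
    """Majority label of the k training samples closest to x."""
    distances = sorted(
        (math.dist(x_i, x), y_i) for x_i, y_i in zip(train_X, train_y)
    )
    neighbours = distances[:k]
    votes = Counter(label for _, label in neighbours)
    return votes.most_common(1)[0][0], neighbours

# Toy training set with two well-separated groups.
train_X = [[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [5.0, 5.0], [5.0, 6.0], [6.0, 5.0]]
train_y = [0, 0, 0, 1, 1, 1]

label_a, neighbours_a = knn_predict([0.2, 0.1], train_X, train_y)
label_b, neighbours_b = knn_predict([5.5, 5.5], train_X, train_y)
```

Every prediction requires the distance to all training samples, which is why the inference time of this brute-force variant grows linearly with the training set size.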

3.3. GC detection using image data

For the task of detecting GCs from image data, we use again the kNN and RF algorithms from the previous section as baselines. In addition, we also apply CNNs (Fukushima & Miyake 1982; LeCun et al. 1998), which are currently one of the most prominent models for image recognition tasks (LeCun et al. 2015).

Similar to a normal neural network (Sect. 3.2.3), a CNN is a hierarchy of functional units, with the main difference being that in initial layers, linear maps take the form of convolutions with learnable filters (weights) over the spatial structure of the input. Like classical neural networks, CNNs suffer from a lack of interpretability. Although gradient and surrogate methods like LIME could be used to identify which pixels of the input image contributed most to the model’s prediction, this only leads to limited insights due to the low spatial resolution of GC cut-out images. For this reason, we further investigate a variant of the CNN architecture that replaces the logistic regression layer at the end of the CNN architecture with a method that is more interpretable by default (Papernot & McDaniel 2018) – in this case, kNN. This works as follows: instead of using the image data for the kNN search, we project training images x(i) and the test image x into the latent space of the CNN, x(i) ↦ F(x(i)) and x ↦ F(x), and use these representations for the kNN algorithm⁵. This allows us to investigate the k nearest neighbours used to predict the class label in the original image space (or even the tabular feature space), increasing the transparency of the algorithm without impairing performance. Again, as in Sect. 3.2.3, Monte Carlo dropout can be used during inference time to provide both a class prediction and an uncertainty estimate from CNNs (see Appendix D).

3.4. Data splits and rescaling

We look at two specific cases that mimic how we would realistically approach the problem of identifying GCs from photometric surveys:

1. The data are available as a collection of sources from different clusters, with a fraction of sources being labelled. In this case, we merged the Fornax and Virgo data sets. The resulting data set was randomly split in a 76:4:20 ratio into training, validation, and test data. For cross-validation, we repeated this process ten times to generate different splits.

2. Training data are available only for one cluster (e.g. Virgo) and the trained model is used to find GCs in the galaxies of another cluster (e.g. Fornax). In this case, training and validation data were generated by randomly splitting the sources of, for example, Virgo in a 95:5 ratio, while the sources of Fornax were only used for testing.

The validation data were used to tune hyperparameters and select the best model, while the test data were only used to measure the performance of the final model.
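The first scenario can be sketched as a repeated random split of source indices. The use of seeds 0 to 9 and the rounding of the split sizes are assumptions made for illustration; the text only specifies the 76:4:20 ratio and the ten repetitions.

```python
import random

def split_indices(n, fractions, seed):
    """Randomly split range(n) into training, validation, and test indices."""
    rng = random.Random(seed)
    indices = list(range(n))
    rng.shuffle(indices)
    n_train = round(fractions[0] * n)
    n_val = round(fractions[1] * n)
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]  # the remainder, about 20% of the sources
    return train, val, test

N_SOURCES = 84929  # merged Virgo and Fornax source list
splits = [split_indices(N_SOURCES, (0.76, 0.04, 0.20), seed) for seed in range(10)]
```

For the second scenario, the same function could be applied to the sources of one cluster only with fractions of 0.95 and 0.05, which leaves the third list empty, while all sources of the other cluster serve as test data.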

We further rescaled all tabular features such that they spanned similar value ranges⁶. Each feature k is rescaled using a general formula with two scaling parameters, αk and βk, which are calculated from the training split and then applied to the training, validation, and test data. Details on how αk and βk have been chosen for individual features k can be found in Appendix C. The image data are not rescaled.
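The key point of this procedure, namely that the scaling parameters come from the training split only, can be sketched as follows. The specific form used here, (x − α)/β with α the training mean and β the training standard deviation, is an assumption for illustration; the actual choices per feature are those of Appendix C.

```python
import statistics

def fit_scaling(train_values):
    """Estimate (alpha, beta) from the training split only (assumed form)."""
    alpha = statistics.fmean(train_values)
    beta = statistics.pstdev(train_values) or 1.0  # guard against zero spread
    return alpha, beta

def rescale(values, alpha, beta):
    """Apply the same shift and scale to any split."""
    return [(v - alpha) / beta for v in values]

# Hypothetical magnitudes of one feature in the training and test splits.
train_mag = [20.0, 22.0, 24.0, 26.0]
test_mag = [23.0, 28.0]

alpha, beta = fit_scaling(train_mag)
train_scaled = rescale(train_mag, alpha, beta)
test_scaled = rescale(test_mag, alpha, beta)
```

Reusing the training parameters for the validation and test data keeps information from those splits out of the model.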

In the training data, sources that contain ‘NaN’⁷ tabular feature values are dropped during training. For the validation and test data, ‘NaN’ entries are replaced by the median value of the corresponding tabular feature obtained from the training split. Some of the tabular features were dropped from the data altogether, for example, non-meaningful features like sky coordinates or features with a high fraction of ‘NaN’ values. ‘NaN’ values in the images are replaced by 0.
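The handling of missing tabular values can be sketched as below. Computing the medians after dropping incomplete training rows is an assumption, since the text does not specify the order of these two steps; the feature values are made up.

```python
import math
import statistics

def drop_nan_rows(rows):
    """Training split: discard sources with any missing feature value."""
    return [row for row in rows if not any(math.isnan(v) for v in row)]

def column_medians(rows):
    """Per-feature medians, computed from complete training rows."""
    return [statistics.median(column) for column in zip(*rows)]

def impute(rows, medians):
    """Validation and test splits: replace missing values by training medians."""
    return [[m if math.isnan(v) else v for v, m in zip(row, medians)] for row in rows]

nan = float("nan")
train_rows = [[1.0, 10.0], [nan, 20.0], [3.0, 30.0], [5.0, 50.0]]
test_rows = [[nan, 7.0], [2.0, nan]]

train_clean = drop_nan_rows(train_rows)
medians = column_medians(train_clean)
test_filled = impute(test_rows, medians)
```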

3.5. Evaluation metrics

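In the following, we denote the test data set by 𝒯. For convenience, we introduce the following function

$$\delta(x, y) = \begin{cases} 1 & \text{if } y_m(x) = y, \\ 0 & \text{otherwise,} \end{cases}$$

to test whether the model prediction agrees with the corresponding test label. With this setup, we can define the following quantities: the number of True Positives (TP), True Negatives (TN), False Positives (FP) and False Negatives (FN):

$$\mathrm{TP} = \sum_{(x, y) \in \mathcal{T}} y \, \delta(x, y), \qquad \mathrm{TN} = \sum_{(x, y) \in \mathcal{T}} (1 - y) \, \delta(x, y),$$

$$\mathrm{FP} = \sum_{(x, y) \in \mathcal{T}} (1 - y) \, [1 - \delta(x, y)], \qquad \mathrm{FN} = \sum_{(x, y) \in \mathcal{T}} y \, [1 - \delta(x, y)].$$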

In other words, TP and TN are the numbers of actual GCs and non-GCs correctly identified by the model, respectively, while FP and FN are the numbers of sources wrongly classified as GCs and as non-GCs, respectively.

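To evaluate our model, we are interested in identifying how reliably GCs are found, but also how many GCs are erroneously proposed by the model. To do this, we use the True Positive Rate (TPR) and False Positive Rate (FPR)

$$\mathrm{TPR} = \frac{\mathrm{TP}}{\mathrm{TP} + \mathrm{FN}}, \qquad \mathrm{FPR} = \frac{\mathrm{FP}}{\mathrm{FP} + \mathrm{TN}}.$$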

Thus, the TPR is the fraction of GCs found from the test data set, while the FPR is the fraction of non-GCs that have been misclassified as a GC. In our case, the goal is to detect as many real GCs as possible, meaning that we strive for a TPR close to one and a FPR close to zero.

Since our data set often contains many more sources that are non-GCs, it might still be that a large fraction of detected GCs are false positives even if the TPR and FPR values are very good. For this reason, we also look at the False Discovery Rate (FDR)

which tells us how many of the GCs the model predicts are actually false positives.
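The effect of class imbalance on the FDR can be checked with a short calculation. The sketch below uses the standard definitions of the three rates. The counts in the example are hypothetical and chosen only to show that a seemingly good TPR and FPR can coexist with a poor FDR when non-GCs dominate.

```python
def confusion_counts(labels, predictions):
    """Count (TP, TN, FP, FN) for binary labels, with 1 = GC and 0 = non-GC."""
    pairs = list(zip(labels, predictions))
    tp = sum(1 for y, p in pairs if y == 1 and p == 1)
    tn = sum(1 for y, p in pairs if y == 0 and p == 0)
    fp = sum(1 for y, p in pairs if y == 0 and p == 1)
    fn = sum(1 for y, p in pairs if y == 1 and p == 0)
    return tp, tn, fp, fn


def rates(tp, tn, fp, fn):
    """Return (TPR, FPR, FDR) from the four confusion counts."""
    tpr = tp / (tp + fn)   # fraction of real GCs that are found
    fpr = fp / (fp + tn)   # fraction of non-GCs classified as GCs
    fdr = fp / (fp + tp)   # fraction of proposed GCs that are not GCs
    return tpr, fpr, fdr


# Hypothetical, strongly imbalanced test set: 100 GCs and 10 000 non-GCs.
tpr, fpr, fdr = rates(tp=90, tn=9700, fp=300, fn=10)
print(f"TPR = {tpr:.2f}, FPR = {fpr:.2f}, FDR = {fdr:.2f}")
```

In this made-up case, 90% of the GCs are recovered and only 3% of the non-GCs are misclassified, yet about three quarters of the proposed GCs are false positives.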

Most of the models used provide a scalar output for each source, from which the class label is inferred using a threshold θ. For instance, some models return the probability of a source being a GC, and the source is classified as a GC if this probability exceeds θ, for example θ = 0.5. The trade-off between TPR and FPR can be adjusted through θ: by increasing θ, only sources for which the model is more confident are classified as GCs, reducing the number of FPs (but possibly also the number of TPs). How the TPR changes with respect to the FPR is captured by the Receiver Operating Characteristic (ROC) curve, which plots the TPR against the FPR for varying thresholds. The ROC curve is bounded by TPR = 0, FPR = 0 (all sources are classified as non-GCs) and TPR = 1, FPR = 1 (all sources are classified as GCs). How a model responds to changing the threshold can be summarised by the area under the ROC curve (AUC ROC), with values closer to one being better, meaning that the threshold can be set such that high TPRs are achieved even at low FPRs.
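To make the threshold sweep concrete, the following sketch builds a ROC curve and its area from a handful of scores. It is a pure-Python illustration with hypothetical scores and labels. A source is counted as a GC here if its score is at least θ, which is an assumed convention, and the area is computed with the trapezoidal rule.

```python
def roc_points(labels, scores):
    """(FPR, TPR) pairs obtained by sweeping the threshold over all scores."""
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    points = [(0.0, 0.0)]  # threshold above every score: nothing is a GC
    for theta in sorted(set(scores), reverse=True):
        tp = sum(1 for y, s in zip(labels, scores) if s >= theta and y == 1)
        fp = sum(1 for y, s in zip(labels, scores) if s >= theta and y == 0)
        points.append((fp / n_neg, tp / n_pos))
    return points


def auc(points):
    """Area under the ROC curve via the trapezoidal rule."""
    return sum(
        (x1 - x0) * (y0 + y1) / 2.0
        for (x0, y0), (x1, y1) in zip(points, points[1:])
    )


# Hypothetical model outputs (probability of being a GC) and true labels.
labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.3, 0.1]

points = roc_points(labels, scores)
area = auc(points)
```

Lowering θ moves along the curve from (0, 0) towards (1, 1). The area equals the fraction of (GC, non-GC) pairs in which the GC receives the higher score, which is 8 out of 9 for this toy example.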

3.6. Software implementation

We implemented the aforementioned models in Python 3.8.8 using the Scikit-Learn 0.24.2 (Pedregosa et al. 2011), PyTorch 1.10.0 (Paszke et al. 2019), and CatBoost 1.0.3 (Prokhorenkova et al. 2018) libraries. Simulations were performed on a commercial CPU (AMD Ryzen 9 5900HS at 3.3 GHz) and GPU (NVIDIA GeForce RTX 3060 Laptop GPU). The data sets and code with hyperparameters are publicly available on GitHub.

4. Results

4.1. Globular cluster detection

We applied the models described in Sect. 3.2 to the tabular feature data in two scenarios: (i) predicting GCs from a mixture of sources from both clusters (Table 2) and (ii) training the models on data from the Virgo cluster to find GCs in the Fornax cluster (Table 3). As a cross-reference to the boosted tree implementation of Scikit-Learn (AdaBoost), we also deployed CatBoost, developed by Yandex, which promises good performance even with the provided default hyperparameters (Prokhorenkova et al. 2018).

Table 2. Classifying GCs from the Virgo and Fornax tabular data sets.

Less complex models like logistic regression perform suboptimally in both scenarios, missing around 25−40% of detectable GCs while producing a high number of false positives (FPR ∼ 5% and FDR ∼ 16−20%). The best performance is reached by tree-based algorithms like RFs and boosted trees as well as deep neural networks, that is, neural networks with more than two layers. RFs and boosted trees in particular reach a high true positive rate of around 90% while producing the lowest number of false positives (FPR ∼ 2−3% and FDR ∼ 6−8%). For instance, in the case of predicting GCs in Fornax, the number of false positives is almost halved compared to logistic regression. In contrast, deep neural networks are capable of finding slightly more GCs (TPR ∼ 90−94%), but with the downside of also producing more false positives (FPR ∼ 2−4% and FDR ∼ 10%).

The obtained results for both scenarios are consistent, with training on sources from mixed clusters leading to slightly better performance levels. This is to be expected because the data of both surveys were not obtained under identical intrinsic conditions, as Fornax is ∼5 Mpc more distant and hence completeness levels are different. The same GC will appear fainter and with a slightly smaller angular size in Fornax than in Virgo. However, it is promising that a model trained on data from one survey can be used to detect GCs in another one, given that the data statistics agree sufficiently.

The second evaluation scenario allows us to evaluate the test performance of each model per Fornax galaxy (Table 3, left). This mostly leads to an increase of the FDR as some low-mass galaxies only contain a small number of GCs. Hence, the model also only detects a small number of GCs, of which a larger fraction are false positives even if the FPR is rather low.

Table 3. Training on tabular data from Virgo and evaluating on Fornax data.

On the image data, both kNN and RFs suffer from a drop in performance, which is to be expected since both models are known to perform best on tabular data (Tables 4 and 5). However, both CNNs and CNNs combined with kNN as a last layer perform well in both scenarios, reaching similar or even slightly better performance levels than tree-based methods on the tabular data. Thus, both CNN-based methods on the image data as well as tree-based methods on the tabular data reach good performance levels, that is, high TPR and low FPR and FDR, on both of the investigated scenarios. Similar results are obtained when training models on data of the Fornax cluster and testing on Virgo (see Tables E.1 and E.2). ROC curves for several models are shown in Appendix F.

Table 4. Classifying GCs from the combined image data sets of Virgo and Fornax.

Table 5. Training on image data of Virgo and evaluating on Fornax image data.

In both cases, model performance strongly depends on the magnitude range of the sources, as Fig. 3 shows. For example, for sources with intermediate values of m4_g (22 mag < m4_g ≤ 24.5 mag), RFs trained on tabular data reach a TPR of ∼97.22−98.83% and a FDR of ∼2.31−6.48% while CNNs trained on image data reach a TPR of ∼98.15−99.53% and a FDR of ∼4.05−5.90%. For fainter sources (m4_g > 24.5 mag), TPR and FDR become worse but remain above ∼90% and below ∼15%, respectively, for magnitudes of up to 25.5 mag. Especially compared to logistic regression – which also only reaches a FDR of ∼10.5−13.8% in the magnitude range 22−24.5 mag – more complex models are capable of generalising towards sources with much lower or higher magnitudes. For example, CNNs still reach a TPR of ∼81% and a FDR of ∼22% for sources in the magnitude range of 25.5−26 mag, as well as ∼52% and ∼24%, respectively, for the range of 26−26.5 mag, but in general the performance drops in magnitude ranges where only few sources are present in the input data. Similar results are observed when training on data from Virgo and evaluating on data from Fornax (see Appendix G).

Fig. 3. Model performance for sources of different magnitude ranges, separated into bins of size 0.5 mag, for the case of training on data of the Virgo cluster and evaluating on data of Fornax. At the top, the number of GCs and non-GCs per bin are shown.
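A per-bin evaluation of this kind can be sketched as follows. This is a minimal illustration, not the code behind the figure: the function name, the toy sources, and the half-open bin convention [lower, lower + 0.5) are assumptions, and bins without GCs or without detections return None instead of a rate.

```python
import math
from collections import defaultdict


def binned_rates(magnitudes, labels, predictions, width=0.5):
    """TPR and FDR per magnitude bin, keyed by the lower bin edge."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "fn": 0, "tn": 0})
    for mag, y, p in zip(magnitudes, labels, predictions):
        lower = math.floor(mag / width) * width
        key = ("tp" if y else "fp") if p else ("fn" if y else "tn")
        counts[lower][key] += 1

    result = {}
    for lower in sorted(counts):
        c = counts[lower]
        n_gc = c["tp"] + c["fn"]         # labelled GCs in this bin
        n_detected = c["tp"] + c["fp"]   # sources the model calls GCs
        result[lower] = {
            "TPR": c["tp"] / n_gc if n_gc else None,
            "FDR": c["fp"] / n_detected if n_detected else None,
            "n_gc": n_gc,
            "n_non_gc": c["fp"] + c["tn"],
        }
    return result


# Hypothetical sources: magnitude, true label (1 = GC), model prediction.
mags = [23.1, 23.3, 23.4, 25.6, 25.7, 25.9]
labels = [1, 1, 0, 1, 1, 0]
preds = [1, 1, 0, 1, 0, 1]

per_bin = binned_rates(mags, labels, preds)
```

Reporting the number of GCs and non-GCs alongside the rates, as in the top panel of the figure, helps to judge how much a rate in a sparsely populated bin can be trusted.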

4.2. Model interpretability

Although model performance is an important indicator, it is far from the only criterion that should be considered when investigating the usability of a model (Arrieta et al. 2020; Linardatos et al. 2021). Especially when the results of a machine learning model are utilised in subsequent projects, it is important that models also provide a degree of interpretability that allows proper investigation of the model’s decision process and the data used to train the model. Although an extensive evaluation of model interpretability in the form of a survey is out of scope for this work, we demonstrate for selected examples how more insight into the inner workings of machine learning models can be obtained.

In case of tree-based algorithms like RFs, a first overview of what the model focuses on can be acquired by looking at which features have been most important in building the decision trees of the ensemble. For a RF trained on tabular data of Virgo, we can see that, as expected, features such as orientation (position angle of the source) are least important, while concentration indices and magnitudes are most important (Fig. 4, left).

Fig. 4. Feature importance of a RF trained on Virgo data (left) and explanations for individual RF predictions generated with LIME (right).
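How such a feature ranking can be read off a trained forest is sketched below with Scikit-Learn. The three synthetic features are hypothetical stand-ins for the tabular features, and the impurity-based `feature_importances_` attribute is only one possible importance measure. Whether it is the measure shown in Fig. 4 is an assumption.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

rng = np.random.default_rng(0)
n_sources = 2000

# Synthetic stand-ins: two informative features and one uninformative angle.
concentration = rng.normal(size=n_sources)
magnitude = rng.normal(size=n_sources)
orientation = rng.uniform(0.0, 180.0, size=n_sources)  # unrelated to the label

X = np.column_stack([concentration, magnitude, orientation])
y = ((concentration + 0.5 * magnitude) > 0).astype(int)  # toy "GC" label

forest = RandomForestClassifier(n_estimators=100, random_state=0)
forest.fit(X, y)

# Impurity-based importances are normalised to sum to one.
names = ["concentration", "magnitude", "orientation"]
ranking = sorted(
    zip(names, forest.feature_importances_), key=lambda item: item[1], reverse=True
)
for name, importance in ranking:
    print(f"{name:15s} {importance:.3f}")
```

In this toy setting the uninformative angle ends up at the bottom of the ranking, mirroring the behaviour described for the position angle of the sources.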

Explanations for individual classifications (x, ym(x)) can be obtained using the model-agnostic LIME method. In LIME, a linear surrogate model is trained that approximates the behaviour of the RF in the local neighbourhood of the input x. This is achieved by randomly generating inputs x̃ around x, evaluating them using the RF to get its predictions ym(x̃), and using the (x̃, ym(x̃)) tuples as the training set for the surrogate model. To get more expressive explanations, the features of x̃ are further discretised into value ranges. Since the surrogate model is linear, the feature ranges with the highest absolute weights are then used as an explanation for the original model’s decision. To guarantee that the created explanations are somewhat reliable, we use the statistical stability indices introduced in Visani et al. (2020) for LIME.
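The idea of a local linear surrogate can be illustrated with a few lines of NumPy. This is a simplified sketch in the spirit of LIME and not the LIME package itself: the discretisation into value ranges and the stability indices are omitted, a simple analytic function stands in for the trained RF, and all names and parameter values are assumptions.

```python
import numpy as np


def local_surrogate(predict, x, n_samples=2000, scale=0.5, kernel_width=1.0, seed=0):
    """Fit a distance-weighted linear model to `predict` around the input x."""
    rng = np.random.default_rng(seed)
    x = np.asarray(x, dtype=float)

    # Randomly generate inputs around x and evaluate the black-box model on them.
    samples = x + scale * rng.normal(size=(n_samples, x.size))
    targets = predict(samples)

    # Samples closer to x get a larger weight (exponential kernel).
    distances = np.linalg.norm(samples - x, axis=1)
    weights = np.exp(-(distances ** 2) / kernel_width ** 2)

    # Weighted least squares via rescaling rows by sqrt(weight).
    design = np.column_stack([np.ones(n_samples), samples - x])
    root_w = np.sqrt(weights)
    coef, *_ = np.linalg.lstsq(design * root_w[:, None], targets * root_w, rcond=None)
    return coef[0], coef[1:]  # intercept and one slope per feature


def black_box(X):
    """Stand-in for a trained classifier: depends on features 0 and 1 only."""
    return 1.0 / (1.0 + np.exp(-(3.0 * X[:, 0] - 0.2 * X[:, 1])))


intercept, slopes = local_surrogate(black_box, x=[0.1, 0.0, 0.0])
order = np.argsort(-np.abs(slopes))  # features ranked by absolute local weight
```

The slopes with the largest absolute values identify the features that drive the prediction near x. In this toy case, the unused third feature receives a weight close to zero.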

A demonstration of LIME is shown in the right panels of Fig. 4 for four different sources – two which are classified as non-GCs and two which are classified as GCs by the RF. For the two non-GCs, we picked examples where the images can be used to easily evaluate explanations provided by LIME. In the top example, LIME states that the concentration indices and magnitudes are too low, meaning that the source is not sufficiently extended and too faint to be a GC. In the bottom example, LIME states the opposite: the large concentration indices as well as the large area covered by the source are the reasons why it is not classified as a GC. At least in these straightforward cases, the explanations provided by LIME are consistent with what one would expect from looking at the images of the sources. For the two sources classified as GCs, the LIME explanation focuses on different features, in particular the g and z-band magnitudes and the concentration indices. This is encouraging since magnitudes (and consequently colours) as well as concentration indices are traditionally used to identify star clusters in photometric data (e.g. Amorisco et al. 2018; Adamo et al. 2020; Thilker et al. 2022). Moreover, for both GCs, the provided explanations are very similar, showcasing a certain degree of consistency of LIME. This is also true for explanations generated for other GCs (see Appendix H).

In the case of the CNN with a kNN layer, the images of the training sources that have been used for the prediction can be directly investigated together with their labels and distances to the input image x ∈ ℝ^(d × M × M) in the latent space F(⋅) of the CNN. For instance, in Fig. 5 we show source 71 of the Fornax galaxy FCC 47 which has been falsely classified as a non-GC. The k nearest neighbours show that similar images exist in the training data which are either classified as non-GCs or only classified as GCs with a low confidence. We can further see that many of these sources show an elongated structure around the central source which is also slightly present in source 71 of FCC 47 and might be the reason why it has not been classified as a GC.

Fig. 5. Images of the k nearest neighbours with labels (surrounding) used to classify source 71 of FCC 47 (centre). The nearest neighbours are not found in the image space x, but in the latent space of the CNN F(x).
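Retrieving the neighbours that support a prediction can be sketched as follows. The `latent` function below is a deliberately trivial, hypothetical stand-in for the CNN feature map F(x), and the tiny 2 × 2 "images" and their labels are made up. Only the lookup logic is meant to carry over.

```python
import math


def latent(image):
    """Hypothetical stand-in for F(x): mean and maximum pixel value."""
    flat = [pixel for row in image for pixel in row]
    return (sum(flat) / len(flat), max(flat))


def nearest_neighbours(query, train_images, train_labels, k=3):
    """Return (index, label, distance) of the k closest training images in latent space."""
    f_query = latent(query)
    ranked = sorted(
        (math.dist(f_query, latent(image)), index)
        for index, image in enumerate(train_images)
    )
    return [(index, train_labels[index], distance) for distance, index in ranked[:k]]


def gc_fraction(neighbours):
    """Fraction of the retrieved neighbours that are labelled as GCs."""
    return sum(label for _, label, _ in neighbours) / len(neighbours)


# Toy training set (1 = GC, 0 = non-GC) and a query image.
train_images = [[[0, 1], [1, 0]], [[0, 9], [9, 0]], [[1, 1], [1, 1]], [[8, 8], [8, 8]]]
train_labels = [1, 0, 1, 0]
query = [[0, 2], [2, 0]]

neighbours = nearest_neighbours(query, train_images, train_labels, k=3)
confidence = gc_fraction(neighbours)
```

Because the neighbours are returned together with their labels and distances, the training images behind a given classification can be displayed and inspected directly, as done for source 71 of FCC 47.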

5. Discussion

5.1. False negatives and false positives

We first explore the properties of sources that are either false negatives or false positives – even in the best performing models – to understand whether these misclassified sources are informative about inherent problems in the modelling.

Figure 6 shows a colour-magnitude diagram of all sources labelled as GCs in the original data. Sources that were not found by the RFs (applied to the tabular data) or CNNs (applied to the image data) are highlighted in colour. This figure illustrates that the false negatives tend to be faint sources, many of them with m4_g > 26 mag, much fainter than the bulk of GCs. The faintness of these sources could be the reason for the misclassification, because identification of fainter sources is generally more difficult due to relatively higher backgrounds and noise levels.

Fig. 6. Colour-magnitude diagram showing the recovered GCs in grey. Sources in pink and blue refer to sources labelled as GCs that were not found (false negatives) by the RF or CNN method applied to the full data set, respectively.

Exploring the false negatives further, Fig. 7 shows the g and z-band images of a few GCs that were not detected by the CNN. For some sources, reasons for the failure of detection can be identified from these images, such as image artefacts in one filter, extended backgrounds, or proximity to detector edges. However, for other sources the reasons cannot be inferred from the images by eye. For those, interpretable methods as presented above might hold additional information, which is illustrated in Fig. 5 for source 71 of FCC 47 (top left source in Fig. 7).

Fig. 7. Examples of sources that are labelled as GCs, but were not found by the CNN on the image data (false negatives). While for some sources, the reason for the non-detection is not immediately obvious, others show extended backgrounds or are located close to the detector edges.

Similarly, exploring the images of false positives provides insights into why a source was misclassified only in some cases. Figure 8 shows examples of several false positives produced by the RF. While some of the sources are clearly image artefacts, for example at the detector edges, others are indistinguishable from GCs by eye.

Fig. 8. Examples of false positives identified with the RF method. While some sources are indistinguishable from GCs by eye, image artefacts at the detector edges are also among the false positives.

However, we have reasons to believe that at least some of these false positives are in fact true GCs which were not labelled as such in the ACSFCS and ACSVCS catalogues. To illustrate this, Fig. 9 shows a cut-out of the ACSFCS image of the edge-on S0 galaxy FCC 170. According to the RF method trained on Virgo galaxies, this galaxy has 25 false positives and as the figure shows, 24 of them are in the central high surface brightness region of the galaxy. This region was not included in the ACSFCS catalogue, likely because subtracting a surface brightness model of this galaxy is challenging due to the X-shaped bulge and the thin disk (e.g. Pinna et al. 2019; Fahrion et al. 2021). As we used a simple median background filter, we have included sources in these regions and find several sources which were classified as GCs by the RF. That at least the brightest of those are in fact GCs can be seen by cross-referencing with the sample of spectroscopically confirmed GCs from Fahrion et al. (2020b).

Fig. 9. Zoom into the central region of the HST ACS F475W image of FCC 170. Sources in blue are GCs from the ACSFCS catalogue (Jordán et al. 2015) while sources in cyan were identified as GCs by the RF, but are not labelled as such in the original ACSFCS catalogue (false positives). The crosses mark spectroscopically confirmed GCs from Fahrion et al. (2020b).

This closer inspection of false negatives and positives illustrates that already excluding sources near the detector edges in addition to the detector gap leads to a cleaner sample. Nonetheless, at these low rates, consequences for inferred properties of GC systems such as luminosity functions should be minimal, especially since the existing catalogues are not perfect either. However, we note that low-mass galaxies might be affected more due to the intrinsic lower number of GCs.

5.2. Model performance and generalisation to new data

The number of true and false positives can be adjusted for most models via the decision threshold, which has to be set depending on the application the model is used for. For example, by increasing the threshold, the fraction of false positives will decrease, although with the drawback that fewer of the real GCs present in the data will be found as well.

In general, the trained models reach good performance levels and are suitable for detecting GCs in photometric data. Our results agree with a recent study evaluating explainable machine learning for extracting ultra-compact dwarfs and GCs from ground-based imaging of the Fornax cluster, which reports true positive rates of 0.89−0.97 and false discovery rates of 0.04−0.07 (Mohammadi et al. 2022), although on a much smaller data set of ∼7700 sources containing ∼500 GCs and for six instead of only two bands, making an exact comparison impossible. Recently published studies on detecting star clusters from HST data using machine learning also report similar results: Pérez et al. (2021) use a CNN (StarcNet) and a data set of ∼15 000 sources from the LEGUS galaxies with observations in five bands for training, validation, and testing. They report a TPR of ∼81% and a FDR of ∼19% on a cluster/non-cluster classification task, reaching a TPR of ∼93% and a FDR of ∼7% if testing is restricted to high-mass objects (153 sources). Whitmore et al. (2021) report a TPR of ∼82% for a similar cluster/non-cluster classification task for sources from five galaxies of the PHANGS-HST sample using two popular CNN architectures (Resnet18 and VGG19-BN) trained via transfer learning on ∼5500 sources of ten LEGUS galaxies. When applying their trained models only to isolated objects and for detecting compact and symmetric star clusters, they reach a TPR of ∼92%. Again, due to the differences in the investigated data sets and evaluation schemes, an exact comparison with our results is difficult.

It is especially encouraging that models trained on sources of one cluster generalise to other clusters (e.g. training on Virgo to find GCs in Fornax). However, this should be taken with a grain of salt, since generalisability is not guaranteed when applying the trained model on data that lies outside of the training domain. For instance, applying our models to NGC 1427a, a star-forming dwarf galaxy in Fornax (Mora et al. 2015; Lee-Waddell et al. 2018), leads to many false detections of sources that likely are not GCs but rather young star clusters and stellar associations.

Both the models trained on image and tabular data reach similar maximum performance levels, most likely due to noise and imperfections both in the recorded data as well as the ground-truth labels used to train and evaluate the models. Still, the performance might be further improved by enriching the data, for example by including more filter bands, by increasing the amount of labelled data for training, or by fine-tuning models by providing more class labels than just non-GC and GC. For the latter case, the catalogues of young star clusters created within the LEGUS or PHANGS projects (e.g. Whitmore et al. 2021; Pérez et al. 2021) could be used to build a comprehensive sample of GCs and young star clusters for training models. However, when including young star clusters, possibly additional features that encompass the specifics of young star clusters in comparison to mostly spherical GCs need to be included (see e.g. Whitmore et al. 2011 or Deger et al. 2022). Furthermore, observed data might be extended using mock data, although great care has to be put into guaranteeing that artificially created data is representative of recorded data. For the data volumes expected for future wide field missions such as Euclid or the Nancy Grace Roman Space Telescope, labelled data will be greatly outweighed by unlabelled data, hence potential methodologies to be explored in future work are unsupervised and semi-supervised approaches.

5.3. Model complexity

We identified ensemble-based tree methods on tabular data and CNN-based methods on image data as the two best-performing approaches. Compared to CNNs, models like RFs have the advantage of being easy to set up: they require no feature rescaling of the data, contain only a small number of tunable hyperparameters, generally perform and generalise well due to being ensemble-based, and are appropriately interpretable. In contrast, CNNs can be directly applied to images, but lack interpretability and come with many degrees of freedom that can be tuned, such as the model architecture, used activation functions, regularisation techniques and hyperparameters. This certainly makes tree-based models the more attractive choice for the task of detecting GCs. Nevertheless, CNNs have the potential of outperforming RFs especially for increased data complexities (e.g. more filters) due to their capability of learning to identify appropriate and task-dependent features in the images automatically.

5.4. Model interpretability

We illustrated the application of interpretable methods to provide more insight into model decisions and to help explore the training data set, for example, to find unexpected labels or artefacts. Interpretable methods are important to increase the trustworthiness of models, easing the decision process for whether model predictions can be trusted when applying them on data beyond the initial training domain (Arrieta et al. 2020; Linardatos et al. 2021).

In particular, the model-agnostic LIME method confirms that the investigated models focus on the proper tabular features for detecting GCs. However, an extensive study of the quality of explanations provided by LIME is out of scope for this work. Hence, there is no guarantee that for all instances, the explanations obtained via LIME are helpful or completely accurate in describing the original model. In fact, how to evaluate the quality of generated explanations is an active field of research (Zhou et al. 2021). The quality of generated explanations could be quantified in future work via human-centred surveys or by deriving fidelity metrics that score how accurately the surrogate explanation describes the model’s decision.

6. Example science case: Colour distributions

Eventually, the goal of building a machine learning pipeline for detecting GCs is to use the obtained catalogues in scientific analyses, for example, to study galaxy properties. Although the overlap between model-generated and original catalogues is high, it is not perfect – especially not on the level of individual galaxies. Thus, to demonstrate the applicability of model-generated GC catalogues for scientific use-cases, we provide an example of a measure of interest – the g − z colour distribution of GCs in a host galaxy – that can be derived from our model output and compare it to corresponding results derived from the original catalogues.

Figure 10 shows (g − z) colour distributions for four Fornax galaxies. We compare the original ACSFCS catalogue, the matched sources labelled as GCs after cross-referencing with the ACSFCS catalogue, and the sources our model, here shown for the CNN, identifies as GCs. For this comparison, we applied the average aperture corrections of Ag = 0.237 mag and Az = 0.347 mag as given in Jordán et al. (2009) to transform our m4_g apertures to corrected magnitudes. We note that the choice of this aperture correction might slightly affect the colour distributions. The colour distributions as recovered from the CNN model agree with the ACSFCS catalogue, especially for massive galaxies with a large number of GCs. For low-mass galaxies like FCC 255 or FCC 106 that only have a small number of GCs, the difference becomes larger due to low-number statistics, which are strongly affected by the classification of individual sources. Nonetheless, even in the extreme case of a galaxy like FCC 106 with only 15 GCs, the recovered colour distribution is comparable to the ACSFCS result. Testing this for all Fornax galaxies shows that the mean colour from the ACSFCS catalogue is reproduced within 0.02 mag for systems with more than 100 GCs. For galaxies like FCC 106 with fewer than 20 GCs, the mean colour is still within 0.12 mag of the ACSFCS value. Likely, any results inferred from these distributions concerning, for instance, the assembly history would be similar for both distributions.

Fig. 10. Four (g − z) colour distributions of galaxies in the Fornax cluster. Blue histograms show the original ACSFCS data, red are all matched GCs in our data set and orange histograms show the sources identified as GCs by the CNN. The corresponding lines are kernel density estimations for visualisation of the histogram shapes. The legend states the number of sources in each sample.
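The colour computation and the comparison of mean colours can be sketched as follows. The two correction values are the ones quoted in the text. Everything else is an assumption made for illustration: the name `m4_z` for the z-band aperture magnitude, the convention that the aperture correction is subtracted from the aperture magnitude, and the example magnitudes.

```python
A_G = 0.237  # average g-band aperture correction in mag (value quoted in the text)
A_Z = 0.347  # average z-band aperture correction in mag (value quoted in the text)


def corrected_colour(m4_g, m4_z, a_g=A_G, a_z=A_Z):
    """(g - z) colour from aperture magnitudes.

    Assumes corrected magnitude = aperture magnitude - aperture correction.
    """
    return (m4_g - a_g) - (m4_z - a_z)


def mean_colour(colours):
    """Mean of a list of colours."""
    return sum(colours) / len(colours)


# Hypothetical aperture magnitudes (g, z) for sources in two catalogues of one galaxy.
catalogue = [(23.5, 22.4), (24.1, 23.2), (22.8, 21.5)]
model_detected = [(23.5, 22.4), (24.1, 23.2), (23.9, 22.9)]

mean_catalogue = mean_colour([corrected_colour(g, z) for g, z in catalogue])
mean_model = mean_colour([corrected_colour(g, z) for g, z in model_detected])
offset = abs(mean_model - mean_catalogue)  # difference in mean colour, in mag
```

Under this sign convention the corrections shift every colour by Az − Ag = 0.11 mag. The offset between the two catalogues therefore does not depend on the correction and reflects only which sources each catalogue contains.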

7. Conclusions

In this paper, we determine the performance of various machine learning techniques of varying complexity for the detection of GCs in archival HST data of the Fornax and Virgo galaxy clusters. We employ several methods on both image data and tabular data extracted from the image data using different evaluation strategies. Our main results are:

- Tree-based or hierarchical models like RFs and neural networks reach the best performances, outperforming simpler methods such as logistic regression, nearest neighbours classification, and (linear) support vector machines.

- RFs on tabular data as well as CNNs on image data reach comparable performance levels, recovering ∼90−94% of GCs under test conditions, with only ∼6−8% of detected GCs being false positives.

- In the magnitude range 22 < m4_g ≤ 24.5 mag, the true positive rate even reaches 98−99% with a false discovery rate < 5%.

- Although a lower false positive rate would be preferred, exploring false positives in more detail shows that at least some of those are actual GCs that were not labelled as such in the original catalogue.

- Our experiments show that models trained on data of one environment can be applied to data of other environments if similar conditions on galaxy types and distance are met.

- Explainable artificial intelligence methods provide additional ways of getting insight into the decision process of trained models as well as the training data. Using such methods shows that magnitudes, colours, and concentration indices are most important for identifying GCs, in agreement with traditional detection methods.

- As one exemplary science case, we compare GC colour distributions as derived from our best models to the distributions from the original catalogues. This shows that the distributions are recovered even at very low GC numbers (< 20), indicating that science results derived from our detected sources will agree with the results from the original catalogues.

- The collected tabular and image data sets consisting of 18 556 GCs and 84 929 sources in total are made publicly available for future research.

This study gives an encouraging outlook on the potential of machine learning when applied to future data from upcoming wide-field survey facilities such as Euclid or the Nancy Grace Roman Space Telescope. The presented approach builds on the effort that has been put into collecting clean catalogues of a relatively small number of galaxies. Given our results, we are confident that machine learning models trained by cross-referencing these catalogues will assist in creating novel catalogues of similar quality, but containing many more sources using less processed data. Combined with the currently developed interpretable methods (and coupled with traditional approaches), such models promise to produce reliable and comprehensible results, which is essential if machine learning methods are to be applied to future data volumes too large for manual inspection.

For a detailed description of F(x), see Appendix B.1.5.

Not a Number (IEEE 2019).

Acknowledgments

We thank the referee Brad Whitmore for helpful comments and suggestions that helped to polish and improve this manuscript. We thank Andreas Baumbach, Akos Kungl, and Guido De Marchi for valuable feedback on the manuscript. D.D. and K.F. acknowledge support through the European Space Agency fellowship programme. D.D. further thanks his colleagues at ESA’s Advanced Concepts Team for their ongoing support. Based on observations made with the NASA/ESA Hubble Space Telescope, and obtained from the Hubble Legacy Archive, which is a collaboration between the Space Telescope Science Institute (STScI/NASA), the Space Telescope European Coordinating Facility (ST-ECF/ESA) and the Canadian Astronomy Data Centre (CADC/NRC/CSA). This research made use of Astropy (http://www.astropy.org), a community-developed core Python package for Astronomy (Astropy Collaboration 2013, 2018). This research made use of Photutils, an Astropy package for detection and photometry of astronomical sources (Bradley et al. 2020). Furthermore, this research made use of the open source libraries Scikit-Learn (Pedregosa et al. 2011), PyTorch (Paszke et al. 2019), NumPy (Harris et al. 2020), Matplotlib (Hunter 2007), pandas (Reback et al. 2020; McKinney 2010), tqdm (da Costa-Luis et al. 2021) and CatBoost (Prokhorenkova et al. 2018).

References

- Adamo, A., Hollyhead, K., Messa, M., et al. 2020, MNRAS, 499, 3267 [Google Scholar]

- Aizerman, M. A. 1964, Autom. Remote Control, 25, 821 [Google Scholar]

- Amorisco, N. C., Monachesi, A., Agnello, A., & White, S. D. M. 2018, MNRAS, 475, 4235 [NASA ADS] [CrossRef] [Google Scholar]

- Arik, S. O., & Pfister, T. 2021, Proceedings of the AAAI Conference on Artificial Intelligence, 35, 6679 [Google Scholar]

- Arrieta, A. B., Díaz-Rodríguez, N., Del Ser, J., et al. 2020, Inf. Fusion, 58, 82 [CrossRef] [Google Scholar]

- Ashman, K. M., & Zepf, S. E. 1992, ApJ, 384, 50 [Google Scholar]

- Astropy Collaboration (Robitaille, T. P., et al.) 2013, A&A, 558, A33 [NASA ADS] [CrossRef] [EDP Sciences] [Google Scholar]

- Astropy Collaboration (Price-Whelan, A. M., et al.) 2018, AJ, 156, 123 [Google Scholar]

- Beasley, M. A. 2020, Globular Cluster Systems and Galaxy Formation (Cham: Springer International Publishing), 245

- Beasley, M. A., Baugh, C. M., Forbes, D. A., Sharples, R. M., & Frenk, C. S. 2002, MNRAS, 333, 383

- Bertin, E., & Arnouts, S. 1996, A&AS, 117, 393

- Bialopetravičius, J., & Narbutis, D. 2020a, A&A, 633, A148

- Bialopetravičius, J., & Narbutis, D. 2020b, AJ, 160, 264

- Bialopetravičius, J., Narbutis, D., & Vansevičius, V. 2019, A&A, 621, A103

- Blakeslee, J. P., Jordán, A., Mei, S., et al. 2009, ApJ, 694, 556

- Boser, B. E., Guyon, I. M., & Vapnik, V. N. 1992, Proceedings of the Fifth Annual Workshop on Computational Learning Theory, 144

- Bradley, L., Sipőcz, B., Robitaille, T., et al. 2020, https://doi.org/10.5281/zenodo.4044744

- Breiman, L. 2001, Mach. Learn., 45, 5

- Breiman, L., Friedman, J., Olshen, R., & Stone, C. 1984, Classification and Regression Trees (Monterey: Wadsworth and Brooks)

- Brendel, W., & Bethge, M. 2019, Seventh International Conference on Learning Representations (ICLR 2019)

- Brodie, J. P., & Strader, J. 2006, ARA&A, 44, 193

- Cantiello, M., D’Abrusco, R., Spavone, M., et al. 2018, A&A, 611, A93

- Cantiello, M., Venhola, A., Grado, A., et al. 2020, A&A, 639, A136

- Ćiprijanović, A., Kafkes, D., Perdue, G. N., et al. 2021, ArXiv e-prints [arXiv:2111.00961]

- Côté, P., Marzke, R. O., & West, M. J. 1998, ApJ, 501, 554

- Côté, P., Blakeslee, J. P., Ferrarese, L., et al. 2004, ApJS, 153, 223

- D’Abrusco, R., Cantiello, M., Paolillo, M., et al. 2016, ApJ, 819, L31

- da Costa-Luis, C., Larroque, S. K., Altendorf, K., et al. 2021, https://doi.org/10.5281/zenodo.5517697

- De Bórtoli, B. J., Caso, J. P., Ennis, A. I., & Bassino, L. P. 2022, MNRAS, 510, 5725

- Deger, S., Lee, J. C., Whitmore, B. C., et al. 2022, MNRAS, 510, 32

- Fahrion, K., Lyubenova, M., Hilker, M., et al. 2020a, A&A, 637, A27

- Fahrion, K., Lyubenova, M., Hilker, M., et al. 2020b, A&A, 637, A26

- Fahrion, K., Lyubenova, M., van de Ven, G., et al. 2021, A&A, 650, A137

- Forbes, D. A., & Forte, J. C. 2001, MNRAS, 322, 257

- Forbes, D. A., & Remus, R.-S. 2018, MNRAS, 479, 4760

- Forbes, D. A., Bastian, N., Gieles, M., et al. 2018a, Proc. R. Soc. London Ser. A, 474, 20170616

- Forbes, D. A., Read, J. I., Gieles, M., & Collins, M. L. M. 2018b, MNRAS, 481, 5592

- Fremling, C., Hall, X. J., Coughlin, M. W., et al. 2021, ApJ, 917, L2

- Fukushima, K., & Miyake, S. 1982, Competition and Cooperation in Neural Nets (Springer), 267

- Gal, Y., & Ghahramani, Z. 2016, International Conference on Machine Learning (PMLR), 1050

- Geisler, D., Lee, M. G., & Kim, E. 1996, AJ, 111, 1529

- Harris, W. E., Blakeslee, J. P., & Harris, G. L. H. 2017a, ApJ, 836, 67

- Harris, W. E., Ciccone, S. M., Eadie, G. M., et al. 2017b, ApJ, 835, 101

- Harris, C. R., Millman, K. J., van der Walt, S. J., et al. 2020, Nature, 585, 357

- Hudson, M. J., & Robison, B. 2018, MNRAS, 477, 3869

- Hunter, J. D. 2007, Comput. Sci. Eng., 9, 90

- IEEE 2019, IEEE Standard for Floating-Point Arithmetic (Revision of IEEE 754-2008), 1

- Jordán, A., Blakeslee, J. P., Peng, E. W., et al. 2004, ApJS, 154, 509

- Jordán, A., McLaughlin, D. E., Côté, P., et al. 2007a, ApJS, 171, 101

- Jordán, A., Blakeslee, J. P., Côté, P., et al. 2007b, ApJS, 169, 213

- Jordán, A., Peng, E. W., Blakeslee, J. P., et al. 2009, ApJS, 180, 54

- Jordán, A., Peng, E. W., Blakeslee, J. P., et al. 2015, ApJS, 221, 13

- King, I. R. 1966, AJ, 71, 64

- Laureijs, R., Amiaux, J., Arduini, S., et al. 2011, ArXiv e-prints [arXiv:1110.3193]

- LeCun, Y., Bottou, L., Bengio, Y., & Haffner, P. 1998, Proc. IEEE, 86, 2278

- LeCun, Y., Bengio, Y., & Hinton, G. 2015, Nature, 521, 436

- Lee-Waddell, K., Serra, P., Koribalski, B., et al. 2018, MNRAS, 474, 1108

- Li, Y., Ni, Y., Croft, R. A. C., et al. 2021, Proc. Natl. Acad. Sci., 118, 2022038118

- Linardatos, P., Papastefanopoulos, V., & Kotsiantis, S. 2021, Entropy, 23, 18

- Linnainmaa, S. 1970, Master’s Thesis (in Finnish), Univ. Helsinki

- Lomelí-Núñez, L., Mayya, Y. D., Rodríguez-Merino, L. H., Ovando, P. A., & Rosa-González, D. 2022, MNRAS, 509, 180

- McKinney, W. 2010, in Proceedings of the 9th Python in Science Conference, eds. S. van der Walt, & J. Millman, 56

- Mei, S., Blakeslee, J. P., Côté, P., et al. 2007, ApJ, 655, 144

- Mohammadi, M., Mutatiina, J., Saifollahi, T., & Bunte, K. 2022, Astron. Comput., 39, 100555

- Montavon, G., Lapuschkin, S., Binder, A., Samek, W., & Müller, K.-R. 2017, Pattern Recognit., 65, 211

- Mora, M. D., Chanamé, J., & Puzia, T. H. 2015, AJ, 150, 93

- Müller, O., & Schnider, E. 2021, Open J. Astrophys., 4, 3

- Nair, V., & Hinton, G. E. 2010, Proceedings of the 27th International Conference on Machine Learning, 807

- Papernot, N., & McDaniel, P. 2018, ArXiv e-prints [arXiv:1803.04765]

- Paszke, A., Gross, S., Massa, F., et al. 2019, in Advances in Neural Information Processing Systems 32, eds. H. Wallach, H. Larochelle, A. Beygelzimer, et al. (Curran Associates, Inc.), 8024

- Pedregosa, F., Varoquaux, G., Gramfort, A., et al. 2011, J. Mach. Learn. Res., 12, 2825

- Pérez, G., Messa, M., Calzetti, D., et al. 2021, ApJ, 907, 100

- Pinna, F., Falcón-Barroso, J., Martig, M., et al. 2019, A&A, 623, A19

- Prokhorenkova, L., Gusev, G., Vorobev, A., Dorogush, A. V., & Gulin, A. 2018, in Advances in Neural Information Processing Systems, eds. S. Bengio, H. Wallach, H. Larochelle, et al. (Curran Associates, Inc.), 31

- Reback, J., McKinney, W., Van den Bossche, J., et al. 2020, https://doi.org/10.5281/zenodo.3715232

- Reina-Campos, M., Trujillo-Gomez, S., Deason, A. J., et al. 2021, MNRAS, 513, 3925

- Ribeiro, M. T., Singh, S., & Guestrin, C. 2016, Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, 1135

- Richtler, T. 2003, in The Globular Cluster Luminosity Function: New Progress in Understanding an Old Distance Indicator, eds. D. Alloin, & W. Gieren, 635, 281

- Rumelhart, D. E., Hinton, G. E., & Williams, R. J. 1986, Nature, 323, 533

- Saifollahi, T., Janz, J., Peletier, R. F., et al. 2021, MNRAS, 504, 3580

- Schlegel, D. J., Finkbeiner, D. P., & Davis, M. 1998, ApJ, 500, 525

- Spergel, D., Gehrels, N., Baltay, C., et al. 2015, ArXiv e-prints [arXiv:1503.03757]

- Srivastava, N., Hinton, G., Krizhevsky, A., Sutskever, I., & Salakhutdinov, R. 2014, J. Mach. Learn. Res., 15, 1929

- Sundararajan, M., Taly, A., & Yan, Q. 2017, International Conference on Machine Learning (PMLR), 3319

- Tarsitano, F., Bruderer, C., Schawinski, K., & Hartley, W. G. 2022, MNRAS, 511, 3330

- Thilker, D. A., Whitmore, B. C., Lee, J. C., et al. 2022, MNRAS, 509, 4094

- Valenzuela, L. M., Moster, B. P., Remus, R.-S., O’Leary, J. A., & Burkert, A. 2021, MNRAS, 505, 5815

- Villaescusa-Navarro, F., Anglés-Alcázar, D., Genel, S., et al. 2021, ApJ, 915, 71

- Villanueva-Domingo, P., Villaescusa-Navarro, F., Anglés-Alcázar, D., et al. 2021, ArXiv e-prints [arXiv:2111.08683]

- Visani, G., Bagli, E., Chesani, F., Poluzzi, A., & Capuzzo, D. 2020, Journal of the Operational Research Society (Taylor & Francis), 1

- von Marttens, R., Casarini, L., Napolitano, N. R., et al. 2021, MNRAS, submitted [arXiv:2111.01185]

- Wang, S., Chen, B., Ma, J., et al. 2021, A&A, 658, A51

- Wei, W., Huerta, E. A., Whitmore, B. C., et al. 2020, MNRAS, 493, 3178

- Werbos, P. J. 1982, System Modeling and Optimization (Springer), 762

- Whitmore, B. C., Chandar, R., Kim, H., et al. 2011, ApJ, 729, 78

- Whitmore, B. C., Lee, J. C., Chandar, R., et al. 2021, MNRAS, 506, 5294

- Zhou, J., Gandomi, A. H., Chen, F., & Holzinger, A. 2021, Electronics, 10, 593

Appendix A: Used features

The features of the tabular data are described below. They were extracted using Photutils' segmentation⁹ and aperture photometry routines after subtracting a median background from the HST ACS images of each galaxy. Not all quantities produced by these routines are used in the modelling; the source coordinates, for example, are not relevant.

- m3_g, m3_z, m4_g, m4_z, m5_g, and m5_z are the aperture magnitudes in the two filters extracted using radii of three, four, and five pixels, respectively.

- CI3_g, CI3_z, CI4_g, CI4_z, CI5_g, and CI5_z are the concentration indices, defined as the magnitude difference between a one-pixel aperture and an aperture of three, four, or five pixels, respectively.

- colour refers to m4_g - m4_z.

- eccentricity, eccentricity_z, orientation, and orientation_z are the eccentricity and orientation (position angle) of each source in the g and z bands, respectively.

- semimajor_sigma, semiminor_sigma, semimajor_sigma_z, and semiminor_sigma_z are measurements of the semimajor and semiminor axis lengths of each source in the g and z bands, respectively.

- area and area_z refer to the area of each source in the g and z bands, respectively.

- min_value, max_value, min_value_z, and max_value_z are the minimum and maximum flux values of each source in the g and z bands, respectively.

- segment_flux and segment_flux_z are the fluxes of each source as computed by Photutils' segmentation routines.
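The derived features in the list above (aperture magnitudes, concentration indices, and colour) follow from simple arithmetic on aperture fluxes. The snippet below is a minimal sketch of that arithmetic, not the extraction pipeline itself: the flux values, the `aperture_magnitude` helper, the shared zero point of 0, and the sign convention of the concentration index (one-pixel magnitude minus larger-aperture magnitude) are all assumptions made for illustration.

```python
import numpy as np


def aperture_magnitude(flux, zero_point=0.0):
    """Convert an aperture flux to a magnitude.

    The zero point is a placeholder; real g and z zero points differ.
    """
    return -2.5 * np.log10(flux) + zero_point


# Hypothetical aperture fluxes of one source, keyed by aperture radius in pixels.
flux_g = {1: 40.0, 3: 180.0, 4: 230.0, 5: 260.0}
flux_z = {1: 70.0, 3: 330.0, 4: 420.0, 5: 470.0}

features = {}
for band, fluxes in (("g", flux_g), ("z", flux_z)):
    m1 = aperture_magnitude(fluxes[1])
    for r in (3, 4, 5):
        m_r = aperture_magnitude(fluxes[r])
        features[f"m{r}_{band}"] = m_r
        # Concentration index: magnitude difference between the 1 px
        # aperture and the r px aperture (sign convention assumed).
        features[f"CI{r}_{band}"] = m1 - m_r

# Colour as defined in the list above.
features["colour"] = features["m4_g"] - features["m4_z"]
```

With this sign convention, extended sources have larger concentration indices than point-like ones, because more of their flux falls outside the one-pixel aperture.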

Appendix B: Models

For all models with learnable parameters we use L2 regularisation. For instance, for a parameter $b \in \mathbb{R}^N$, a penalty term proportional to $\lVert b \rVert^2$, with $\lVert \cdot \rVert$ being the absolute value, is added to the loss $\mathcal{L}$.

B.1. Expanded notation

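In the following, the machine learning models used are described as functions $f : \mathbb{R}^N \to \mathbb{R}$ that map from the feature space to the real numbers, $x \mapsto f(x)$. This output can be further squashed by another function $g$, for example to obtain a probability estimate to which we can apply a decision rule to obtain the model prediction $y_m(x)$ with $y_m : \mathbb{R}^N \to \{0, 1\}$,

$$
y_m(x) =
\begin{cases}
1 & \text{if } g(f(x)) > \theta, \\
0 & \text{otherwise},
\end{cases}
$$

where $\theta$ is a threshold value, for instance $\theta = 0.5$, and $g(f(x)) = p(x)$. For the methods discussed here, $g$ is either a logistic function ($\sigma : \mathbb{R} \to [0, 1]$),

$$
\sigma(x) = \frac{1}{1 + e^{-x}},
$$

the sign function ($\mathrm{sign} : \mathbb{R} \to \{-1, 1\}$),

$$
\mathrm{sign}(x) =
\begin{cases}
1 & \text{if } x > 0, \\
-1 & \text{otherwise},
\end{cases}
$$

or the identity function.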
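The decision rule can be illustrated with a short sketch. The helper names `logistic` and `predict` and the example scores are hypothetical; the only ingredients taken from the text are the squashing function $g$, the threshold $\theta = 0.5$, and the strict inequality $p(x) > \theta$.

```python
import numpy as np


def logistic(f):
    """Logistic function mapping real-valued scores to [0, 1]."""
    return 1.0 / (1.0 + np.exp(-f))


def predict(f_values, g=logistic, theta=0.5):
    """Apply g to the model output f(x) and threshold at theta."""
    p = g(np.asarray(f_values, dtype=float))
    return (p > theta).astype(int)


# Hypothetical model outputs f(x) for five sources.
scores = np.array([-2.0, -0.1, 0.0, 0.3, 4.0])
labels = predict(scores)
```

For the logistic function and $\theta = 0.5$, thresholding $p(x)$ is equivalent to checking the sign of $f(x)$, so a score of exactly zero is not classified as a GC here.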

B.1.1. Logistic regression

Given a feature vector $x \in \mathbb{R}^N$, logistic regression provides a probability estimate for its class affiliation,

$$
p(x) = \sigma\big(f(x; W, b)\big), \qquad f(x; W, b) = W x + b,
$$

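where $W \in \mathbb{R}^{1 \times N}$ and $b \in \mathbb{R}$ are learnable parameters. To ease notation, we drop the dependence on $W$ and $b$ in the following. An input $x$ is classified as a GC if $p(x) > 0.5$, which is identical to requiring $f(x) > 0$. The parameters $W$ and $b$ are obtained by minimising a cross-entropy loss

$$
\mathcal{L} = -\sum_i \left[ y^{(i)} \log p\big(x^{(i)}\big) + \big(1 - y^{(i)}\big) \log\big(1 - p\big(x^{(i)}\big)\big) \right], \tag{B.6}
$$

where the sum runs over the training sources $x^{(i)}$ with labels $y^{(i)} \in \{0, 1\}$.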
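As an illustration of how such a model is fitted, the following sketch trains a logistic regression by plain gradient descent on a cross-entropy loss with an L2 penalty. It is not the training setup of this work: the toy data, the learning rate `lr`, the regularisation strength `lam`, the use of the mean rather than the sum over sources, and the choice of gradient descent as optimiser are all assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy data (hypothetical): two Gaussian blobs in N = 2 feature dimensions.
N = 2
X = np.vstack([rng.normal(-1.0, 1.0, (100, N)), rng.normal(1.0, 1.0, (100, N))])
y = np.concatenate([np.zeros(100), np.ones(100)])


def sigma(f):
    return 1.0 / (1.0 + np.exp(-f))


def loss(W, b, lam):
    """Mean cross-entropy plus an L2 penalty on W and b."""
    p = np.clip(sigma(X @ W + b), 1e-12, 1.0 - 1e-12)
    cross_entropy = -np.mean(y * np.log(p) + (1.0 - y) * np.log(1.0 - p))
    return cross_entropy + lam * (W @ W + b**2)


W, b = np.zeros(N), 0.0
lam, lr = 1e-3, 0.1  # assumed hyperparameters
initial_loss = loss(W, b, lam)

for _ in range(500):
    p = sigma(X @ W + b)
    # Gradients of the regularised loss with respect to W and b.
    grad_W = X.T @ (p - y) / len(y) + 2.0 * lam * W
    grad_b = np.mean(p - y) + 2.0 * lam * b
    W -= lr * grad_W
    b -= lr * grad_b

final_loss = loss(W, b, lam)
# Classify as GC if f(x) > 0, which is equivalent to p(x) > 0.5.
accuracy = np.mean((X @ W + b > 0) == (y == 1))
```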

B.1.2. Support vector machines

Similar to logistic regression, a linear support vector machine classifies a source with feature vector $x \in \mathbb{R}^N$ as a GC if $\mathrm{sign}[f(x)] > 0$, with $W$ now given by

$$
W = \sum_i w_i\, z^{(i)}\, x^{(i)},
$$

where $w_i \geq 0$ are learnable parameters and $z^{(i)} = 2y^{(i)} - 1$. $W$ and $b$ are optimised using a hinge loss,

$$
\mathcal{L} = \sum_i \max\!\left(0,\; 1 - z^{(i)} f\big(x^{(i)}\big)\right),
$$

which increases the margin between the decision boundary and the data points close to it. To deal with data that are not linearly separable, the input features $x$ can be mapped into a high-dimensional vector space, $\varphi(x)$, resulting in

$$
f(x) = \sum_i w_i\, z^{(i)}\, \varphi\big(x^{(i)}\big) \cdot \varphi(x) + b,
$$

which depends only on the inner products of the mapped feature vectors. The kernel trick states that these products can be reduced to inner products in the original feature space, weighted by some kernel $\kappa(\cdot, \cdot)$, allowing us to write our model as

$$
f(x) = \sum_i w_i\, z^{(i)}\, \kappa\big(x^{(i)}, x\big) + b.
$$

The linear support vector machine is recovered by choosing $\kappa(x^{(i)}, x) = x^{(i)} \cdot x$, in which case the model differs from logistic regression only in the choice of the loss function.
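The relation between the kernel form and the linear form can be checked numerically. In the sketch below, the training points, the weights `w`, and the bias `b` are hypothetical values chosen by hand (they are not fitted with a hinge loss), and `rbf_kernel` with `gamma=0.5` is only one example of a non-linear kernel.

```python
import numpy as np


def linear_kernel(a, b):
    return a @ b


def rbf_kernel(a, b, gamma=0.5):
    """Example of a non-linear kernel (gamma is an assumed value)."""
    return np.exp(-gamma * np.sum((a - b) ** 2))


def decision_function(x, X_train, y_train, w, b, kernel):
    """Kernel form: f(x) = sum_i w_i z_i kappa(x_i, x) + b, with z_i = 2 y_i - 1."""
    z = 2 * y_train - 1
    return sum(w_i * z_i * kernel(x_i, x) for w_i, z_i, x_i in zip(w, z, X_train)) + b


# Hypothetical training set and (unfitted) parameters.
X_train = np.array([[0.0, 1.0], [1.0, 0.0], [2.0, 2.0]])
y_train = np.array([0, 0, 1])
w = np.array([0.5, 0.5, 1.0])
b = -1.0
x = np.array([1.5, 1.0])

# With the linear kernel, the kernel form equals W x + b with W = sum_i w_i z_i x_i.
f_kernel = decision_function(x, X_train, y_train, w, b, linear_kernel)
W = (w * (2 * y_train - 1)) @ X_train
f_linear = W @ x + b

# The same weights give a different, non-linear decision function with an RBF kernel.
f_rbf = decision_function(x, X_train, y_train, w, b, rbf_kernel)
is_gc = np.sign(f_kernel) > 0
```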

B.1.3. Neural networks

A neural network is a hierarchy of functional units

where $\circ$ denotes the composition of functions, $f^{(2)} \circ f^{(1)}(x) = f^{(2)}(f^{(1)}(x))$. The functional units $f^{(l)}$ are called the layers $l$ of the neural network and the individual elements of each layer are called neurons ($f^{(l)}_j$ is the output of neuron $j$ of layer $l$). Each layer has a stereotypical structure, which in our case is given by

Here, $\delta(x)$ is the dropout operator, which randomly sets a fraction of the elements of its input to zero during training to avoid overfitting (Srivastava et al. 2014). The $W^{(l)} \in \mathbb{R}^{n^{(l)} \times n^{(l-1)}}$ are learnable weights that map from the $n^{(l-1)}$ neurons of layer $l - 1$ to the $n^{(l)}$ neurons of layer $l$, and the $b^{(l)} \in \mathbb{R}^{n^{(l)}}$ are learnable biases. $\phi(x) = \max(0, x)$ is the rectified linear unit (ReLU) activation function (Nair & Hinton 2010), which is widely used in the literature. In our case, a probabilistic estimate for the class of an input is obtained by applying a logistic activation function to the output, $p(x) = \sigma(f(x))$. Therefore, a source $x$ is classified as a GC if $p(x) > 0.5$.

The dropout operator $\delta$ is usually applied only during training, but it can also be used at inference time (Gal & Ghahramani 2016). In this case, evaluating $f(x)$ multiple times results in a distribution of values for $p(x)$. Thus, for each source, the mean of the sampled distribution provides a class label and its width provides a sensible estimate of the network's uncertainty (see Appendix D).

The network parameters $W^{(l)}$ and $b^{(l)}$ are obtained for all layers $l \in [0, n]$ by minimising a cross-entropy loss (Eq. B.6) using the error backpropagation algorithm.
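The use of dropout at inference time can be sketched as follows. This is an illustration with randomly initialised, untrained weights, not the network of this work: the layer sizes, the dropout rate, the placement of dropout after the ReLU activation of each hidden layer, and the rescaling by $1/(1 - \mathrm{rate})$ are assumptions of the sketch.

```python
import numpy as np

rng = np.random.default_rng(1)


def relu(a):
    return np.maximum(0.0, a)


def sigma(f):
    return 1.0 / (1.0 + np.exp(-f))


def dropout(h, rate, active):
    """Randomly zero a fraction `rate` of the elements (inverted-dropout scaling assumed)."""
    if not active or rate == 0.0:
        return h
    mask = rng.random(h.shape) >= rate
    return h * mask / (1.0 - rate)


def forward(x, params, rate=0.2, sample=True):
    """Fully connected network; dropout is applied to hidden activations if sample=True."""
    h = x
    for W, b in params[:-1]:
        h = dropout(relu(W @ h + b), rate, sample)
    W, b = params[-1]
    return sigma((W @ h + b)[0])


# Hypothetical architecture: 4 input features, two hidden layers, one output.
sizes = [4, 8, 8, 1]
params = [
    (rng.normal(0.0, 0.5, (n_out, n_in)), np.zeros(n_out))
    for n_in, n_out in zip(sizes[:-1], sizes[1:])
]
x = rng.normal(size=4)

# Repeated stochastic evaluations yield a distribution of p(x).
samples = np.array([forward(x, params) for _ in range(200)])
p_mean, p_width = samples.mean(), samples.std()

# Without dropout the output is deterministic.
p_det = forward(x, params, sample=False)
```

A source would then be labelled a GC if `p_mean > 0.5`, with `p_width` serving as a rough per-source uncertainty.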

B.1.4. k nearest neighbours

In the notation introduced above, the decision function of kNN can be written as

$$
p(x) = \frac{1}{k} \sum_{i \in \mathcal{N}_k(x)} y^{(i)},
$$

where $\mathcal{N}_k(x)$ is the set containing the indices of the $k$ nearest neighbours of $x$ in the training data set. A source is classified as a GC if $p(x) > 0.5$.
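A minimal sketch of this rule is given below. The training points and labels are made up, and the Euclidean distance is an assumed choice of metric; `knn_probability` is a hypothetical helper name.

```python
import numpy as np


def knn_probability(x, X_train, y_train, k=3):
    """Fraction of the k nearest training sources that are labelled as GCs."""
    distances = np.linalg.norm(X_train - x, axis=1)  # Euclidean metric (assumed)
    neighbours = np.argsort(distances)[:k]           # indices of the k nearest sources
    return y_train[neighbours].mean()


# Hypothetical training data: two non-GCs near the origin, three GCs near (1, 1).
X_train = np.array([[0.0, 0.0], [0.1, 0.2], [1.0, 1.0], [1.1, 0.9], [0.9, 1.2]])
y_train = np.array([0, 0, 1, 1, 1])

p = knn_probability(np.array([0.8, 0.8]), X_train, y_train, k=3)
is_gc = p > 0.5
```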

B.1.5. Convolutional neural network

Here, we use the following architecture

with

and $f^{(l)}$, $a^{(l)}$, $\phi$, and $\delta$ given by Eqs. (B.13) to (B.15). The $c^{(l)}$ are convolutional layers, where $W^{(l)}$ is a set of learnable filters that are convolved with the input $x \in \mathbb{R}^{d \times M \times M}$. $P_\mathrm{max}(x)$ is the max-pooling operator, which reduces the dimension of $x$ by replacing neighbouring pixels with a single pixel containing their maximum value. For instance, a $2 \times 2$ max-pooling operator returns $P_\mathrm{max}\begin{pmatrix} 0 & 1 \\ 2 & 3 \end{pmatrix} = 3$. A probabilistic estimate for the class of an input is obtained by applying a logistic activation function to the output, $p(x) = \sigma(f(x))$. Therefore, a source $x$ is classified as a GC if $p(x) > 0.5$.
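The max-pooling operator can be sketched in a few lines. The function `max_pool2d` below is a hypothetical helper that assumes non-overlapping windows (stride equal to the window size) and drops any rows or columns that do not fill a complete window.

```python
import numpy as np


def max_pool2d(x, size=2):
    """Max-pool an array of shape (d, M, M) over non-overlapping size x size windows."""
    d, h, w = x.shape
    h_out, w_out = h // size, w // size
    x = x[:, : h_out * size, : w_out * size]  # drop incomplete border windows
    # Split each spatial axis into (window index, position inside window).
    windows = x.reshape(d, h_out, size, w_out, size)
    return windows.max(axis=(2, 4))


# The example from the text: a single 2 x 2 patch is reduced to its maximum.
patch = np.array([[[0, 1], [2, 3]]])
pooled_patch = max_pool2d(patch)

# A two-channel 4 x 4 image is reduced to two channels of 2 x 2 pixels.
image = np.arange(2 * 4 * 4).reshape(2, 4, 4)
pooled_image = max_pool2d(image)
```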

Appendix C: Feature rescaling