| Issue |

A&A

Volume 600, April 2017

|

|

|---|---|---|

| Article Number | A68 | |

| Number of page(s) | 16 | |

| Section | Astrophysical processes | |

| DOI | https://doi.org/10.1051/0004-6361/201629903 | |

| Published online | 31 March 2017 | |

Stable laws and cosmic ray physics

LAPTh, Université Savoie Mont Blanc & CNRS, 9 chemin de Bellevue, BP 110, 74941 Annecy-le-Vieux, France

e-mail: This email address is being protected from spambots. You need JavaScript enabled to view it.

Received: 14 October 2016

Accepted: 19 December 2016

Abstract

Context. In the new “precision era” for cosmic ray astrophysics, scientists making theoretical predictions cannot content themselves with average trends, but need to correctly take into account intrinsic uncertainties. The space-time discreteness of the cosmic ray sources, together with a substantial ignorance of their precise epochs and locations (with the possible exception of the most recent and close ones) play an important role in this sense.

Aims. We elaborate a statistical theory to deal with this problem, relating the composite probability P(Ψ) to obtain a flux Ψ at the Earth and the single-source probability p(ψ) to contribute with a flux ψ. The main difficulty arises from the fact that p(ψ) is a “heavy tail” distribution, characterized by power-law or broken power-law behavior up to very large fluxes, for which the central limit theorem does not hold, and leading to distributions different from Gaussian. The functional form of the distribution for the aggregated flux is nonetheless unchanged by its own convolution, that is, it belongs to the so-called stable laws class.

Methods. We analytically discuss the regime of validity of the stable laws associated with the distributions arising in cosmic ray astrophysics, as well as the limitations to the treatment imposed by causal considerations and partial source catalog knowledge. We validate our results with extensive Monte Carlo simulations, for different regimes of propagation parameters and energies.

Results. We find that relatively simple recipes provide a satisfactory description of the probability P(Ψ). We also find that a naive Gaussian fit to simulation results would underestimate the probability of very large fluxes, that is, several times above the average, while overestimating the probability of relatively milder excursions. At large energies, large flux fluctuations are prevented by causal considerations, while at low energies, a partial knowledge of the recent and nearby population of sources plays an important role. A few proposals have been recently discussed in the literature to account for spectral breaks reported in cosmic ray data in terms of local contributions. We apply our newly developed theory to assess their probabilities, finding that they are relatively small, typically at the 0.1% level or smaller, never exceeding 1%.

Conclusions. The use of heavy tail distributions is relevant in assessing how likely a measured cosmic ray flux is to depart from the average expectation in a given model. The existing mathematical theory leading to stable laws can be adapted to the case of interest via some recipes that closely reproduce numerical simulations and are relatively easy to implement.

Key words: astroparticle physics / cosmic rays / diffusion / supernovae: general / methods: statistical

© ESO, 2017

1. Introduction

A striking property of the (Galactic) cosmic ray spectra is their power-law behavior over many decades in energy. Power laws are omnipresent in nature (as well as in many domains of interest for human activities), typically associated with self-similar/scale invariant phenomena. For example, the photon spectra, thought to be tracers of the parent charged cosmic ray particle spectra, of most sources in high-energy astrophysics (supernova remnants, pulsar wind nebulae, diffuse radiations, etc.) at different wavelengths from radio to gamma-rays are also well described by (broken) power laws, typically with spectral index α in the range −3 < α < −1. Even the interstellar magnetic field turbulence is well-described by a power-law power spectrum, which in turn justifies a power-law behavior for the rigidity dependence of the diffusion coefficient describing cosmic ray propagation in the Galaxy.

Not surprisingly, then, the customary calculation of the cosmic ray flux at the Earth postulates a universal power-law source term, further assuming the limit of a continuous distribution of sources, both spatially and temporally, in a volume modeling the Galaxy: often, a simple cylinder or an effectively infinite slab. This is an approximation, as the distribution of cosmic ray sources is actually discrete. This approximation is valid if the distribution of sources is sufficiently dense in space and in time to be described as a continuum, much like in thermodynamics when the details of microscopic states corresponding to a given macroscopic state are ignored. This approximation is well-suited for describing the average expectations for the flux at Earth associated to a given hypothesis on propagation parameters, source spectra, and energetics. However, the discrete nature of cosmic ray sources should affect other observables, for instance, the time variation of the flux measured, which is nonetheless less important for all but a few phenomenological consequences.

Indeed, recently, several experiments have established that the cosmic ray spectrum, even within the three decades of energy probed by a single experiment such as AMS02, cannot be satisfactorily described by a single power-law (for a recent review see Sect. 2 in Serpico 2015). This is observed for protons, helium nuclei, and some heavier species. Several articles have attempted to explain this phenomenology as the result of the discrete nature of cosmic ray sources in space and time (as reviewed in Sect. 3.3 of Serpico 2015 and also in Bernard et al. 2012). While specific scenarios fitting the data can be found, the likelihood of these solutions, given our statistical knowledge of the source distribution and rate, is unknown. This is related to the conceptual difficulty that the probability distribution function p of a single source contributing a flux ψ at Earth is not a Gaussian function, but rather a heavy-tail/power law distribution (see Bernard et al. 2012, and arguments leading to Eqs. (21) and (24) in Sect. 3.2) which, if extrapolated to very large fluxes, does not even have a finite variance; the central limit theorem for the sum flux probability distribution P(Ψ) cannot be applied. Ironically, the same mathematical property that makes a phenomenological description of the cosmic ray observables so simple (“power laws”) raises difficulties in theoretical probabilistic assessments starting from p(ψ). While this problem has been overtly recognized (Lee 1979; Lagutin & Nikulin 1995), the quantitative consequences of this fact for cosmic ray physics are still a matter of debate. Far from being academic, the problem also has important implications for the theoretical limitations in the extraction of propagation parameters, as well as comparing theoretical models up to current experimental precision. Ultimately, barring an unrealistic, fully deterministic model of the sources of cosmic rays, theoretical predictions in this field are intrinsically statistical in nature. In fact, theoretical calculations concerning ultra-high-energy cosmic ray observables have already routinely used ensemble techniques for estimating the flux and mass composition uncertainties (see e.g., Ahlers et al. 2013). Important differences arise, however, due to the quasi-ballistic propagation and the non-negligible loss-effects ruling the extragalactic propagation regime.

Here, we aim at establishing a systematic theory to evaluate these effects for Galactic cosmic rays. Our main focus is on analytical treatment, but all major conclusions are validated by extensive numerical simulations. By itself, the numerical part of our work represents a major novel contribution to the understanding of the consequence of the discrete nature of sources for cosmic ray phenomenology. Also, novel when compared to previous publications in this domain, we analyze the effects of physical limitations on idealized mathematical extrapolations, such as the eventual failure of diffusion equations to cope with constraints such as causality or a priori knowledge about the discrete source distribution. Whenever a comparison with observations is performed, we refer to the proton flux, which is the best measured one due to high statistics, and for which the effects discussed here should be most prominent. Still, the same formalism can apply to any nuclear species, and could also be extended to leptons (at least qualitatively; for a pioneering earlier investigation of the lepton channel, see Mertsch 2011).

As an application, we then focus on the compatibility of several experimental results on interstellar protons (such as AMS02, Pamela, CREAM) with our current understanding of cosmic ray propagation, properly taking the theoretical uncertainty into account.

This paper is organized as follows. In Sect. 2, we describe the problem and introduce the main difficulties that make its solution challenging, as well as our strategy to approach them. Section 3 deals with the technical aspects of the analytical treatment proposed, including a discussion of the limitations of an overly naive approach. The reader uninterested by these subtleties and mostly interested in the validation of our description and the phenomenological implications of our work can quickly gloss over this part and focus on the following sections. In Sect. 4, we compare our theory with extensive simulations: this allows us to validate the theory, better defining its regimes of validity, also clarifying when one should be able to draw conclusions relying solely on analytical arguments without the need for long computing time. A few applications to cases of phenomenological interest are reported in Sect. 5. Finally, in Sect. 6, we conclude.

2. Description of the problem

Galactic cosmic rays are accelerated at discrete sources, the position and age of which is not known individually, only statistically. From the statistical distribution of these sources, we can infer the statistical distribution of the cosmic ray flux in the solar neighborhood. In this section, we describe the issues raised by this program. The cosmic ray (CR) flux Ψ obeys a diffusion equation which, in its simplest incarnation boils down to the form (energy dependence implicit): ![Mathematical equation: \begin{equation} \left[\frac{\partial} {\partial t}-K\,\nabla^2\right]\Psi={\cal Q}\,,\label{DiffEq1} \end{equation}](/articles/aa/full_html/2017/04/aa29903-16/aa29903-16-eq8.png) (1)where K is the spatial diffusion coefficient supposed to be uniform in the diffusion volume and

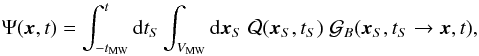

(1)where K is the spatial diffusion coefficient supposed to be uniform in the diffusion volume and  is the source term. Additional terms accounting for convection, reacceleration, and energy losses are of little relevance for the species and the energy ranges of interest in the following and can be anyway dealt with at very least with standard techniques like the ones implemented in numerical codes such as GALPROP or DRAGON or semi-numerical ones such as USINE. The solution to this equation can be formally written as an integral over the Galactic volume and over the past Galactic history as:

is the source term. Additional terms accounting for convection, reacceleration, and energy losses are of little relevance for the species and the energy ranges of interest in the following and can be anyway dealt with at very least with standard techniques like the ones implemented in numerical codes such as GALPROP or DRAGON or semi-numerical ones such as USINE. The solution to this equation can be formally written as an integral over the Galactic volume and over the past Galactic history as:  (2)where VMW is the volume of the Galaxy, given by VMW ≃ 2 hπR2 if the Galaxy is modeled as a cylinder of radius R and half-thickness h, of age tMW, and

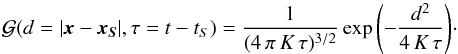

(2)where VMW is the volume of the Galaxy, given by VMW ≃ 2 hπR2 if the Galaxy is modeled as a cylinder of radius R and half-thickness h, of age tMW, and  is the Green function obtained by solving the analog of Eq. (1) with a temporal and spatial delta at the RHS, and the appropriate boundary conditions. As a reminder, the Green function of the pure diffusive problem (without boundary) with delta centered at spatial position xS and time tS would simply write

is the Green function obtained by solving the analog of Eq. (1) with a temporal and spatial delta at the RHS, and the appropriate boundary conditions. As a reminder, the Green function of the pure diffusive problem (without boundary) with delta centered at spatial position xS and time tS would simply write  (3)There are a few issues related to the computation of the CR flux, which we now briefly describe.

(3)There are a few issues related to the computation of the CR flux, which we now briefly describe.

First, the source term  is expected to be a sum of discrete injection points in space-time (the spatial and time scale of likely accelerators being assumed much shorter than propagation length and time), whose actual positions and epochs are unknown. This is the so-called myriad model approach (Higdon & Lingenfelter 2003) where cosmic rays are sourced by a constellation of point-like objects and not by a continuous jelly. It would then be formally correct to write

is expected to be a sum of discrete injection points in space-time (the spatial and time scale of likely accelerators being assumed much shorter than propagation length and time), whose actual positions and epochs are unknown. This is the so-called myriad model approach (Higdon & Lingenfelter 2003) where cosmic rays are sourced by a constellation of point-like objects and not by a continuous jelly. It would then be formally correct to write  (4)leading to

(4)leading to  (5)Commonly Eq. (1), sourced by a discrete sum, is replaced with a continuous proxy corresponding to its ensemble average. Specifically, the source term writes (for simplicity we assume a unique source term, i.e., qi = q):

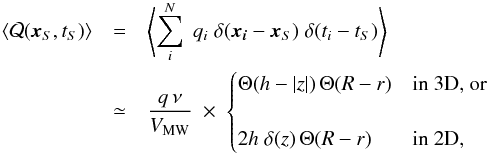

(5)Commonly Eq. (1), sourced by a discrete sum, is replaced with a continuous proxy corresponding to its ensemble average. Specifically, the source term writes (for simplicity we assume a unique source term, i.e., qi = q):  (6)where q is the single source spectrum (particles per unit energy) and ν the source rate per unit time. For example, assuming supernova remnants to be the sources of CRs, a rate of three explosions per century is reasonable. In the second equality, we assume a homogeneous distribution of sources lying within a cylindrical approximation of the Galaxy with radius R. Along the vertical direction, the sources are either uniformly distributed inside a disk with half-thickness h (3D case), or pinched inside an infinitesimally thick disk (2D case). The generalization to a different distribution is straightforward.

(6)where q is the single source spectrum (particles per unit energy) and ν the source rate per unit time. For example, assuming supernova remnants to be the sources of CRs, a rate of three explosions per century is reasonable. In the second equality, we assume a homogeneous distribution of sources lying within a cylindrical approximation of the Galaxy with radius R. Along the vertical direction, the sources are either uniformly distributed inside a disk with half-thickness h (3D case), or pinched inside an infinitesimally thick disk (2D case). The generalization to a different distribution is straightforward.

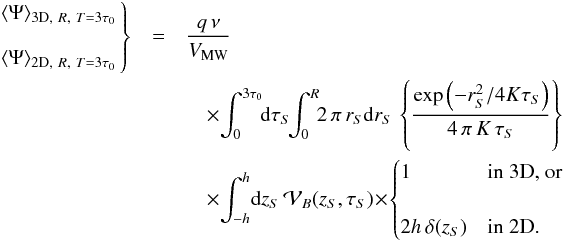

This source term leads to the theoretical average flux:  (7)This is only true on average. We expect the flux observed at the Earth to be ruled by a probability distribution function (pdf) P(Ψ). This function also depends on the actual value of N of the underlying discrete sources. The average flux becomes

(7)This is only true on average. We expect the flux observed at the Earth to be ruled by a probability distribution function (pdf) P(Ψ). This function also depends on the actual value of N of the underlying discrete sources. The average flux becomes  (8)Obtaining the probability P(Ψ), entering, for example, in Eq. (8), requires a “change of variables”, from space-time location to flux space. To this end, we exploit the fact that there is a straightforward relation between { tS,xS } and the flux obtained by a single source, ψS, located at { tS,xS }. For example, Eq. (8) can be rewritten in terms of the pdf for the flux from a single source, p(ψ), as

(8)Obtaining the probability P(Ψ), entering, for example, in Eq. (8), requires a “change of variables”, from space-time location to flux space. To this end, we exploit the fact that there is a straightforward relation between { tS,xS } and the flux obtained by a single source, ψS, located at { tS,xS }. For example, Eq. (8) can be rewritten in terms of the pdf for the flux from a single source, p(ψ), as  (9)Obtaining p(ψ) (or rather its cumulative distribution) is the main subject of Sect. 3.2. Let us briefly note that the link is formally written as

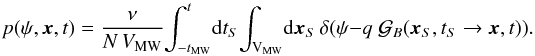

(9)Obtaining p(ψ) (or rather its cumulative distribution) is the main subject of Sect. 3.2. Let us briefly note that the link is formally written as  (10)Once p(ψ) is known, the pdf for the sum flux P(Ψ) can be computed thanks to the convolution of the individual probabilities p(ψi) under the constraint

(10)Once p(ψ) is known, the pdf for the sum flux P(Ψ) can be computed thanks to the convolution of the individual probabilities p(ψi) under the constraint  . The probability P(Ψ) is formally written as

. The probability P(Ψ) is formally written as  This relation is based on the disputable yet natural assumption that the sources are not correlated with each other. The probability that two sources yield the fluxes ψ1 and ψ2 , respectively, is thus given by the product p(ψ1)p(ψ2).

This relation is based on the disputable yet natural assumption that the sources are not correlated with each other. The probability that two sources yield the fluxes ψ1 and ψ2 , respectively, is thus given by the product p(ψ1)p(ψ2).

A second problem imposes itself as follows. If we try to use the generalization of Eq. (9) to compute, for example, the second moment of the flux, ⟨ Ψ2 ⟩, and hence the expected variance of the flux, the expression formally diverges since the underlying p(ψ) has a power-law tail, p(ψ) ∝ ψ− α−1, with 1 <α< 2 (as derived e.g., in (Bernard et al. 2012)). It turns out that despite the fact that (for instance) the variance of p(ψ) is formally infinite, thanks to a so-called generalized central limit theorem, the resulting probability P(Ψ), for large N, has a universal shape, a so-calledstable law, only dependent on α and independent of N, but for a rescaling. More details on this are given in Sect. 3.1. Hence, this difficulty does not appear so severe, since meaningful statistical quantities (such as confidence levels or quantiles) can still be computed in this limit. A relatively minor complication is that the index α of the pdf p(ψ) is in fact energy dependent and depends on the propagation model as well. We introduce two limiting behaviors of α in Sect. 3.2, which allow for a satisfactory description of the distribution over a wide range of fluxes.

The third issue is that some of the above-mentioned properties depend on the fact that the behavior p(ψ) ∝ ψ− α−1 formally extends to infinity. Physically, however, there is no such thing as an infinite flux: an obvious cutoff is imposed, for instance, by the empirically established absence of a source that is too close and/or too recent; of course, this depends on the level of credibility attributed to independent astrophysical information, such as available catalogs. In Sects. 3.3 and 3.5 we see the impact of this constraint on the maximum flux. A more subtle reason for having an effective cutoff to the domain of the probability distribution function p(ψ) is the fact that Eq. (1) is non-relativistic, and does not automatically ensure that causality is fulfilled. Accounting exactly for this is outside of the scope of our study, but we show that this introduces an effective cutoff that is particularly relevant at high energies (see Sects. 3.3 and 3.4). In either case, however, the conceptual problem that arises is that for a pdf p(ψ), with a finite support, the asymptotic pdf for the sum of the fluxes variable Ψ is now a Gaussian due to the standard central limit theorem (moments are, in fact, finite). It turns out that stable laws still provide acceptable descriptions of the pdf P(Ψ) up to the fluxes of phenomenological interest, and that for the values of N and Ψ of actual interest one is relatively far from the Gaussian limit and much closer to the stable law limit. This aspect is described in Sect. 3.3.

Before concluding this Section, a couple of comments are in order.

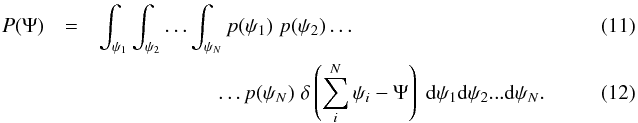

First, we shall limit ourselves to discussing the probabilities of departures of fluxes from their average only at a single energy. A natural generalization would be to discuss the probability that the flux at the Earth departs from its expected average value by more than a certain amount at two or more energies. Fluxes at different energies (especially near ones) are not expected to be independent, rather strongly correlated, which can be formally described, for instance, via the following inequality in the conditional probability ![Mathematical equation: \begin{eqnarray} &&P([\Psi(E_1)>\langle \Psi (E_1)\rangle] \cap[\Psi(E_2)>\langle\Psi (E_2)\rangle] ) \nonumber \\&& \neq P(\Psi(E_1)>\langle \Psi (E_1)\rangle)\,P(\Psi(E_2)>\langle\Psi (E_2) \rangle). \end{eqnarray}](/articles/aa/full_html/2017/04/aa29903-16/aa29903-16-eq46.png) (13)A manifestation of this property if second moments are finite is

(13)A manifestation of this property if second moments are finite is  (14)Estimating the probability of the most significant deviation among the different energy bins provides, however, an upper limit to the true probability of observing the actual flux excursion at different energy bins, the former being necessarily equal or lower due to the unitarity property of pdfs.

(14)Estimating the probability of the most significant deviation among the different energy bins provides, however, an upper limit to the true probability of observing the actual flux excursion at different energy bins, the former being necessarily equal or lower due to the unitarity property of pdfs.

Second, while the effects discussed in our paper are the result of replacing the true underlying discrete source distribution with a continuum space-time distribution that describes its smooth average, and are thus accounting for the partial (only “probabilistic”) knowledge we have on the sources frequency and position, this is different from what is usually intended by the “effects of the granularity” of the sources, in statistical mechanics, for example. These would typically manifest themselves in higher-order correlation functions (again, if finite, otherwise via conditional probabilities), like the correlations of fluxes measured at different times, or at the same time at different locations, etc. We emphasize that all source explosion models sharing the same time and volume average would give us the same flux ⟨ Ψ ⟩, while the above higher-order correlation observables could be different. In particular, in the myriad model approach that we follow here, we assume point-like sources not to be correlated with each other. For example, a model where 20 SN explode at the same time in the Galaxy every 800 yr does not satisfy this condition, and it will yield a different probability distribution function P(Ψ) for the flux than a model where 1 SN explodes randomly in the Galaxy every 40 yr. Both models have the same average flux but fluctuations around that mean do not follow the same law, and time correlations, for example, are different. On the other hand, our formalism can still provide a good approximation of strongly correlated source models: if the sources are strongly correlated in space and time (e.g., the 20 SN in the example above were to explode within a few pc distance of one another), simply downscaling ν and increasing q by a corresponding factor would provide a satisfactory approximation for all but very small scale correlations.

3. Technical aspects

3.1. Generalized central limit theorem: mathematical statement

The central limit theorem states that the sum Ψ of a large number N of independent, identical, and stochastic variables ψ is distributed according to a Gaussian law whose variance  is N times larger than the variance

is N times larger than the variance  of each individual contribution ψ. In our case though, the pdf of ψ has a power-law tail p(ψ) ∝ ψ− α−1 with 1 <α< 2. The average flux ⟨ ψ ⟩ from a single source is defined but its variance is not and the central limit theorem cannot be applied, at least in its mundane form.

of each individual contribution ψ. In our case though, the pdf of ψ has a power-law tail p(ψ) ∝ ψ− α−1 with 1 <α< 2. The average flux ⟨ ψ ⟩ from a single source is defined but its variance is not and the central limit theorem cannot be applied, at least in its mundane form.

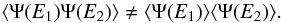

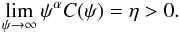

A more general form of the theorem has been discussed in section 1.8 of Nolan (2012), for example, and can be readily applied to our problem. We simply recall here the salient features of the version that can be adapted to the myriad model. Let ψ be a random variable with probability law p(ψ) defined on R+. The tail behavior can be captured by the cumulative distribution function (also called survival function) C(ψ) such that  (15)That function encodes the probability for the flux of a single source to be larger than ψ. As shown in what follows, its asymptotic behavior, which turns out to be an essential ingredient in the proof of the generalized theorem, has the simple power-law form

(15)That function encodes the probability for the flux of a single source to be larger than ψ. As shown in what follows, its asymptotic behavior, which turns out to be an essential ingredient in the proof of the generalized theorem, has the simple power-law form  (16)We denote by Ψ = ψ1 + ··· + ψN the sum of N independent and identically p distributed random variables ψi. By introducing the rescaled flux

(16)We denote by Ψ = ψ1 + ··· + ψN the sum of N independent and identically p distributed random variables ψi. By introducing the rescaled flux  (17)the generalized central limit theorem states that the probability law P of SN converges for large N toward the law S [ α,1,1,0;1 ] among the class of stable laws, in the notation of Nolan (2012). Note that the spread parameter σN of the stable law depends on the constant η and increases as N1 /α instead of

(17)the generalized central limit theorem states that the probability law P of SN converges for large N toward the law S [ α,1,1,0;1 ] among the class of stable laws, in the notation of Nolan (2012). Note that the spread parameter σN of the stable law depends on the constant η and increases as N1 /α instead of  in the Gaussian case. The general stable law function S [ α,β,γ,δ;1 ] also depends on additional arguments beyond the stability index α, notably a skewness parameter β, a scale parameter γ, and a location parameter δ. The last index sets the type of parameterization used, as several exist in the literature. For instance, the normal or Gaussian distribution centered around μ and with variance σ2 is denoted in this notation as

in the Gaussian case. The general stable law function S [ α,β,γ,δ;1 ] also depends on additional arguments beyond the stability index α, notably a skewness parameter β, a scale parameter γ, and a location parameter δ. The last index sets the type of parameterization used, as several exist in the literature. For instance, the normal or Gaussian distribution centered around μ and with variance σ2 is denoted in this notation as  . We recall that a pdf that is invariant under the product of convolution is described as stable. This is the case for well-known examples such as the Gaussian, Cauchy, and Lévy distributions. But this class of functions is broad, as shown by Paul Lévy in his study of sums of independent identically distributed terms in the 1920s (Lévy 1925). Most of the densities and distribution functions that it encompasses cannot be expressed analytically by closed-form expressions, although their Fourier transforms are tractable. That is why stable laws, such as the Pareto-Levy distribution S [ α,1,1,0;1 ] , which we use hereafter, are not common in the field of astrophysics, but are relatively well known in finance for instance (Mandelbrot 1960; Uchaikin & Zolotarev 1999). Nowadays, computer programs such as Mathematica (Wolfram Research, Inc. 2016) can easily be used to compute these special functions.

. We recall that a pdf that is invariant under the product of convolution is described as stable. This is the case for well-known examples such as the Gaussian, Cauchy, and Lévy distributions. But this class of functions is broad, as shown by Paul Lévy in his study of sums of independent identically distributed terms in the 1920s (Lévy 1925). Most of the densities and distribution functions that it encompasses cannot be expressed analytically by closed-form expressions, although their Fourier transforms are tractable. That is why stable laws, such as the Pareto-Levy distribution S [ α,1,1,0;1 ] , which we use hereafter, are not common in the field of astrophysics, but are relatively well known in finance for instance (Mandelbrot 1960; Uchaikin & Zolotarev 1999). Nowadays, computer programs such as Mathematica (Wolfram Research, Inc. 2016) can easily be used to compute these special functions.

3.2. Application of the theorem to the probability of measuring a Galactic CR flux

To compute the spread σN that comes into play in the stable law followed by the total flux ΨN, we need to determine the spectral index α so that limψ → ∞C(ψ)ψα = η. We recall that C(ψ) is the survival probability to get a flux higher than ψ at the Earth. This probability can be obtained by integrating the density of a single source in space and time over the phase space region where it produces a flux larger than ψ.

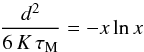

As we are interested in the limit where the flux ψ is large, if not infinite, the source must be local and young. In that case, CR propagation is the same as if the magnetic halo was infinite and diffusion was dominating the other processes such as convection and spallation. The region in space and time that yields a flux ψ may be derived from the boundless propagator of Eq. (3), which we translate into:  (18)For simplicity, we have introduced the dimensionless time variable x ≡ τ/τM, where the timescale τM is defined as the maximal age for a source to provide a flux ψ. That value is reached when the source is located at d = 0 and is equal to τM = (a/ψ)2/3. It is straightforward to check that for a given value K of the diffusion coefficient, and hence at a given CR energy E, all space-time points in the plane (x,d2) satisfying the condition

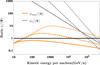

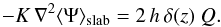

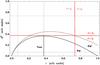

(18)For simplicity, we have introduced the dimensionless time variable x ≡ τ/τM, where the timescale τM is defined as the maximal age for a source to provide a flux ψ. That value is reached when the source is located at d = 0 and is equal to τM = (a/ψ)2/3. It is straightforward to check that for a given value K of the diffusion coefficient, and hence at a given CR energy E, all space-time points in the plane (x,d2) satisfying the condition  (19)are characterized by the same flux ψ ≡ ψ(r = 0,τ = τM). This corresponds to the thick blue line drawn in Fig. 1. Points below that curve yield a flux ψ′>ψ(0,τM), while points above it produce a flux ψ′<ψ(0,τM).

(19)are characterized by the same flux ψ ≡ ψ(r = 0,τ = τM). This corresponds to the thick blue line drawn in Fig. 1. Points below that curve yield a flux ψ′>ψ(0,τM), while points above it produce a flux ψ′<ψ(0,τM).

|

Fig. 1 Below the thick line: space and time region where a source contributes a flux |

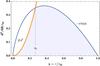

One can thus compute the cumulative distribution C(ψ) (sources with fluxes larger than ψ) by integrating the space and time density distribution of a single source ρ(r,t) (assumed constant in the following) over the region in phase space located below the blue line of Fig. 1. In the cylindrical approximation, N denoting the number of sources contributing to the total flux Ψ allows us to write:  (20)There is no closed-form expression for C(ψ) in general, but two relevant limiting situations can be discussed. Given ψ, if τM is such that the key length parameter (6 KτM)1/2 is smaller than the typical thickness of the Galactic disk, h, the result will be equivalent to that of a uniform density in a 3D volume, hence

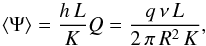

(20)There is no closed-form expression for C(ψ) in general, but two relevant limiting situations can be discussed. Given ψ, if τM is such that the key length parameter (6 KτM)1/2 is smaller than the typical thickness of the Galactic disk, h, the result will be equivalent to that of a uniform density in a 3D volume, hence  (21)We highlight that the coefficient η depends on the space-time probability density to find a source lying in the neighborhood of the observer; as does the typical flux spread σN. We may nevertheless express it as a function of the average flux ⟨ Ψ ⟩ to demonstrate how the various CR parameters at stake come into play. For the sake of clarity, the value of ⟨ Ψ ⟩ has been derived here assuming that the Galactic disk is an infinitesimally thick slab. Taking the 2D model neglecting radial boundary conditions leads to the simple expression

(21)We highlight that the coefficient η depends on the space-time probability density to find a source lying in the neighborhood of the observer; as does the typical flux spread σN. We may nevertheless express it as a function of the average flux ⟨ Ψ ⟩ to demonstrate how the various CR parameters at stake come into play. For the sake of clarity, the value of ⟨ Ψ ⟩ has been derived here assuming that the Galactic disk is an infinitesimally thick slab. Taking the 2D model neglecting radial boundary conditions leads to the simple expression  (22)whose numerical value is very close to the actual result, as shown in the Appendix. Qualitatively, we expect that the behavior outlined in Eq. (21) is always attained for sufficiently high fluxes, since those require very close sources, although when this regime is attained depends on the energy E via the diffusion coefficient K. Lower fluxes, however, are also yielded by sources located “far away” from us, with respect to the scale h. In the limit where these dominate, one can effectively model the Galaxy as an infinitesimally thick disk, so that the only quantity that matters is the 2D (surface) density μ(r,t) which may be expressed as

(22)whose numerical value is very close to the actual result, as shown in the Appendix. Qualitatively, we expect that the behavior outlined in Eq. (21) is always attained for sufficiently high fluxes, since those require very close sources, although when this regime is attained depends on the energy E via the diffusion coefficient K. Lower fluxes, however, are also yielded by sources located “far away” from us, with respect to the scale h. In the limit where these dominate, one can effectively model the Galaxy as an infinitesimally thick disk, so that the only quantity that matters is the 2D (surface) density μ(r,t) which may be expressed as  (23)In this 2D limit, one gets:

(23)In this 2D limit, one gets:  (24)We argue in the following that, depending on the regime of fluxes Ψ in which one is interested, either the 2D or the 3D distribution is relevant to the description of the problem. In any case, by virtue of the generalized version of the central limit theorem of Sect. 3.1, we know how to derive the pdf P(Ψ) for both cases. It is sufficient to take an index α = 5/3 (3D) or α = 4/3 (2D) and to compute the spread σN from Eq. (17) via the corresponding coefficients η3D and η2D. Notice that σN can be expressed as a function of the average flux ⟨ Ψ ⟩ and the various CR parameters. The number of sources N has cancelled out in the product ηN. When it is large enough, the asymptotic regime where the central limit theorem holds is reached and we expect

(24)We argue in the following that, depending on the regime of fluxes Ψ in which one is interested, either the 2D or the 3D distribution is relevant to the description of the problem. In any case, by virtue of the generalized version of the central limit theorem of Sect. 3.1, we know how to derive the pdf P(Ψ) for both cases. It is sufficient to take an index α = 5/3 (3D) or α = 4/3 (2D) and to compute the spread σN from Eq. (17) via the corresponding coefficients η3D and η2D. Notice that σN can be expressed as a function of the average flux ⟨ Ψ ⟩ and the various CR parameters. The number of sources N has cancelled out in the product ηN. When it is large enough, the asymptotic regime where the central limit theorem holds is reached and we expect ![Mathematical equation: \begin{equation} P(\Psi)\to \;\frac{1}{\sigma_{N}}\;S[\alpha,1,1,0;1]\left(\frac{\Psi-\langle\Psi\rangle}{\sigma_{N}}\right)\cdot \label{eq:pPsi} \end{equation}](/articles/aa/full_html/2017/04/aa29903-16/aa29903-16-eq105.png) (25)In order to comment upon the dependencies of the spread σN on the parameters of the problem, one may write

(25)In order to comment upon the dependencies of the spread σN on the parameters of the problem, one may write  (26)As the reader may have noticed, the number N of sources has disappeared from the rescaled quantity σN/ ⟨ Ψ ⟩. The latter encodes the statistical excursions of the total flux Ψ around its mean value ⟨ Ψ ⟩. Rescaled to the mean flux, the spread has the same dependence on the height L of the diffusive halo (∝1 /L) in both the 2D and 3D regimes. The thickness h of the Galactic disk only enters in the expression of η3D. The relative spread σN/ ⟨ Ψ ⟩ increases with CR energy through the diffusion coefficient K. It decreases as the rate ν of explosions is increased. Both K and ν enter through the ratio K/ν, with an exponent of 2/5 (3D) or 1/4 (2D).

(26)As the reader may have noticed, the number N of sources has disappeared from the rescaled quantity σN/ ⟨ Ψ ⟩. The latter encodes the statistical excursions of the total flux Ψ around its mean value ⟨ Ψ ⟩. Rescaled to the mean flux, the spread has the same dependence on the height L of the diffusive halo (∝1 /L) in both the 2D and 3D regimes. The thickness h of the Galactic disk only enters in the expression of η3D. The relative spread σN/ ⟨ Ψ ⟩ increases with CR energy through the diffusion coefficient K. It decreases as the rate ν of explosions is increased. Both K and ν enter through the ratio K/ν, with an exponent of 2/5 (3D) or 1/4 (2D).

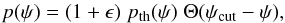

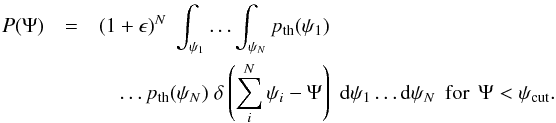

3.3. The case with an upper cut on the flux

Strictly speaking, applying the generalized version of the central limit theorem requires that the cumulative distribution function C(ψ) for a single source has a heavy-tail behavior up to an infinite flux ψ. This behavior should nevertheless break down since infinite fluxes, for example, are unphysical. However, one expects the stable law expression (25) for P(Ψ) to still provide a good approximation of the actual distribution up to some value of the total flux Ψ, should the underlying power-law behavior of the single source pdf p(ψ) be valid over a sufficiently large range. To quantify this, let us assume that the single source pdf follows the power-law behavior p(ψ) ∝ ψ− α−1, with 1 <α< 2, up to some critical value ψcut above which it vanishes. There may be several reasons for this heuristic argument to hold, some to be explored in the following. Schematically, one can write the probability of measuring a flux ψ from a single source as:  (27)where pth(ψ) is the idealized pdf with an infinite power-law tail, discussed in Sect. 3.1. Of course this may be too crude an approximation close to ψcut, but provided that ψcut is large compared to the fluxes of interest and the renormalization correction ϵ is small, it should not have a significant affect. In fact, given the Ansatz of Eq. (27), one can write the probability to measure a flux

(27)where pth(ψ) is the idealized pdf with an infinite power-law tail, discussed in Sect. 3.1. Of course this may be too crude an approximation close to ψcut, but provided that ψcut is large compared to the fluxes of interest and the renormalization correction ϵ is small, it should not have a significant affect. In fact, given the Ansatz of Eq. (27), one can write the probability to measure a flux  from N sources, among which N are located inside the light cone, as

from N sources, among which N are located inside the light cone, as  For values of Ψ such as Ψ <ψcut1, each individual value ψi must satisfy ψi<ψcut. Hence we get

For values of Ψ such as Ψ <ψcut1, each individual value ψi must satisfy ψi<ψcut. Hence we get  (30)Thus, forsufficiently large N , we expect

(30)Thus, forsufficiently large N , we expect ![Mathematical equation: \begin{eqnarray} &&P(\Psi)\approx(1+\epsilon)^{{N}}\;\frac{1}{\sigma_{N}}\;S[\alpha,1,1,0;1]\left(\frac{\Psi-\langle\Psi\rangle_{\rm th}}{\sigma_{N}}\right)\nonumber\\ && \text{for}\quad\Psi<\psi_{\rm cut}\;(\star), \label{eq:main_relation} \end{eqnarray}](/articles/aa/full_html/2017/04/aa29903-16/aa29903-16-eq125.png) (31)where the average flux ⟨ Ψ ⟩ th in the argument of the stable function corresponds to the uncut idealized pdf pth(ψ). The approximation of Eq. (31) is relatively general, but its actual usefulness depends on the precise values of ψcut and ϵ. For example, as long as Nϵ ≪ 1, the result does not really depend on ϵ. That condition turns out to be satisfied for the cases that are subsequently discussed. In the following subsections, we actually distinguish two evident physical reasons for imposing a cut on the tail of the pdf distribution.

(31)where the average flux ⟨ Ψ ⟩ th in the argument of the stable function corresponds to the uncut idealized pdf pth(ψ). The approximation of Eq. (31) is relatively general, but its actual usefulness depends on the precise values of ψcut and ϵ. For example, as long as Nϵ ≪ 1, the result does not really depend on ϵ. That condition turns out to be satisfied for the cases that are subsequently discussed. In the following subsections, we actually distinguish two evident physical reasons for imposing a cut on the tail of the pdf distribution.

3.4. Causality effect

To commence, propagation through diffusion should not violate causality, a condition which is not always satisfied by the propagator as defined in Eq. (3). Taking any finite age τ for a source leads to a non-zero value for  , whatever the distance d. This will not cause any problems for far and young sources for which the flux is exponentially suppressed, but for young and close objects, which happen to dominate large positive fluctuations of Ψ with respect to the average value ⟨ Ψ ⟩, this can lead to much larger flux contributions than physically allowed.

, whatever the distance d. This will not cause any problems for far and young sources for which the flux is exponentially suppressed, but for young and close objects, which happen to dominate large positive fluctuations of Ψ with respect to the average value ⟨ Ψ ⟩, this can lead to much larger flux contributions than physically allowed.

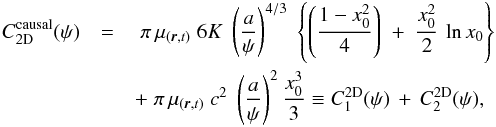

A correction for this effect can be quantified with reference to the space-time diagram of Fig. 1 by removing the portion where causality is violated from the region of phase space that contributes to the flux. The domain to be withdrawn extends above the orange curve defined by the light cone condition  , c being the speed of light in this case. The orange parabola allows us to carve away the domain in white that lies below the blue line, leaving only the light-blue shaded region to contribute to fluxes larger than ψ. The intersection between the blue (diffusion) and orange (light cone) curves takes place at x = x0 for which

, c being the speed of light in this case. The orange parabola allows us to carve away the domain in white that lies below the blue line, leaving only the light-blue shaded region to contribute to fluxes larger than ψ. The intersection between the blue (diffusion) and orange (light cone) curves takes place at x = x0 for which  (32)The cumulative distribution function C(ψ) has to be recalculated. It is given now by two different contributions corresponding to the integrals of the light-blue shaded area extending to the left (C1) and to the right (C2) of the vertical line at x0 in Fig. 1. The 2D and 3D results may be expressed as:

(32)The cumulative distribution function C(ψ) has to be recalculated. It is given now by two different contributions corresponding to the integrals of the light-blue shaded area extending to the left (C1) and to the right (C2) of the vertical line at x0 in Fig. 1. The 2D and 3D results may be expressed as:  (33)

(33)

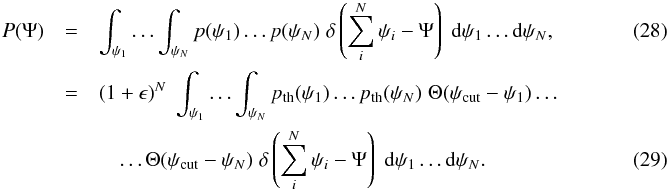

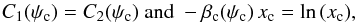

(34)The larger the flux ψ, the smaller the maximal age τM. In this limit, the coefficient β ∝ τM vanishes. As the orange parabola of Fig. 1 opens, the value of x0 tends to 1 and a large fraction of the space-time volume becomes causally disconnected. Thus, in the high flux limit, C1 vanishes while C2 increases. In the 2D regime, C2D(ψ) ∝ ψ-2 whereas in the 3D regime, C3D(ψ) ∝ ψ− 8/3. As mentioned previously, the latter eventually takes over the former for very large values of the flux ψ for which the typical distances of the sources are smaller than the thickness h of the Galactic disk. As 8/3 > 2, the variance associated to the pdf p(ψ) is now finite. According to the central limit theorem, the probability P(Ψ) converges toward a Gaussian when the number of sources N goes to infinity. We note, incidentally, that if the Galactic disk was infinitesimally thick, with h = 0, the 2D regime would apply with α = 2. The variance is in that case divergent but the generalized central limit theorem can be applied, with the consequence that the total flux pdf P(Ψ) also reaches a Gaussian form. Although causality arguments per se would allow arbitrarily large fluxes, we see that the whole discussion on stable laws loses its importance once a sizable fraction of the space-time volume is removed, since p(ψ) is too steep. In practice, one can account for these effects by abruptly cutting the “standard” power-law distribution pdf above a transition flux ψcut ≡ ψc, which we may define for instance via the condition C1(ψc) = C2(ψc). As we are interested in computing probabilities around the mean value ⟨ Ψ ⟩, let us compute ψc and compare it with ⟨ Ψ ⟩. To do so, we need to determine (ψc,xc) by solving the two following equations:

(34)The larger the flux ψ, the smaller the maximal age τM. In this limit, the coefficient β ∝ τM vanishes. As the orange parabola of Fig. 1 opens, the value of x0 tends to 1 and a large fraction of the space-time volume becomes causally disconnected. Thus, in the high flux limit, C1 vanishes while C2 increases. In the 2D regime, C2D(ψ) ∝ ψ-2 whereas in the 3D regime, C3D(ψ) ∝ ψ− 8/3. As mentioned previously, the latter eventually takes over the former for very large values of the flux ψ for which the typical distances of the sources are smaller than the thickness h of the Galactic disk. As 8/3 > 2, the variance associated to the pdf p(ψ) is now finite. According to the central limit theorem, the probability P(Ψ) converges toward a Gaussian when the number of sources N goes to infinity. We note, incidentally, that if the Galactic disk was infinitesimally thick, with h = 0, the 2D regime would apply with α = 2. The variance is in that case divergent but the generalized central limit theorem can be applied, with the consequence that the total flux pdf P(Ψ) also reaches a Gaussian form. Although causality arguments per se would allow arbitrarily large fluxes, we see that the whole discussion on stable laws loses its importance once a sizable fraction of the space-time volume is removed, since p(ψ) is too steep. In practice, one can account for these effects by abruptly cutting the “standard” power-law distribution pdf above a transition flux ψcut ≡ ψc, which we may define for instance via the condition C1(ψc) = C2(ψc). As we are interested in computing probabilities around the mean value ⟨ Ψ ⟩, let us compute ψc and compare it with ⟨ Ψ ⟩. To do so, we need to determine (ψc,xc) by solving the two following equations:  (35)which numerically yields xc ≈ 0.6226 in the 2D case and xc ≈ 0.6424 in the 3D case. Note that xc (or equivalently βc) does not depend on the cosmic ray energy, and is not very different between the 2D and 3D regimes. We can also define y = ψc/ ⟨ Ψ ⟩, which can be written as

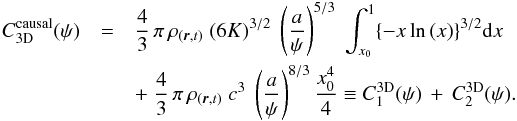

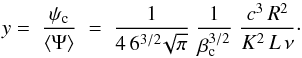

(35)which numerically yields xc ≈ 0.6226 in the 2D case and xc ≈ 0.6424 in the 3D case. Note that xc (or equivalently βc) does not depend on the cosmic ray energy, and is not very different between the 2D and 3D regimes. We can also define y = ψc/ ⟨ Ψ ⟩, which can be written as  (36)The estimate for y varies only by 14% between the 2D and 3D cases. As this difference is relatively small, we will take the average between these two values in the following discussion. Notice that y depends on energy through the diffusion coefficient K. Of course, for our discussion to be of any relevance, the stable law Eq. (31) should be valid for a total flux Ψ well in excess of its average ⟨ Ψ ⟩, that is, for values of y as large as possible. As an example, for the MED propagation model borrowed from Donato et al. (2004), we find that y ≃ 32 at 10 TeV while it reaches ~2 × 104 at 100 GeV.

(36)The estimate for y varies only by 14% between the 2D and 3D cases. As this difference is relatively small, we will take the average between these two values in the following discussion. Notice that y depends on energy through the diffusion coefficient K. Of course, for our discussion to be of any relevance, the stable law Eq. (31) should be valid for a total flux Ψ well in excess of its average ⟨ Ψ ⟩, that is, for values of y as large as possible. As an example, for the MED propagation model borrowed from Donato et al. (2004), we find that y ≃ 32 at 10 TeV while it reaches ~2 × 104 at 100 GeV.

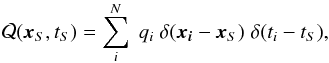

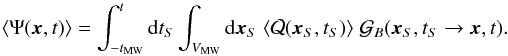

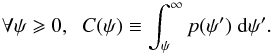

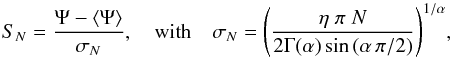

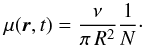

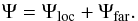

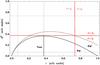

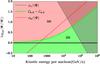

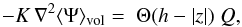

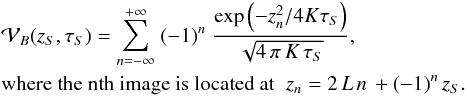

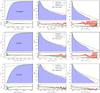

The evolution of ψc/ ⟨ Ψ ⟩ as a function of energy is displayed in gray in Fig. 2 for the three different benchmark propagation models discussed in Donato et al. (2004), namely MIN, MED and MAX, and whose parameters are in Table A.1. That ratio is always larger than ten for cosmic ray energies below 10 TeV. To be conservative, the causality constraint could be forgotten below the TeV scale as long as we are interested in values of Ψ not exceeding a few times the average. However, depending on the propagation model, the light cone cut-off may seriously impair the use of Eq. (31) above a few tens of TeV, an energy range probed by calorimetric instruments such as CREAM or CALET.

|

Fig. 2 Causal cutoff flux ψc/ ⟨ Ψ ⟩ (local environment cutoff flux ψmax/ ⟨ Ψ ⟩) as a function of energy is displayed in gray (orange) from top to bottom for the three propagation models MIN (dotted), MED (dashed) and MAX (solid). |

3.5. Absence of very close and/or young sources

Another natural limitation to the maximum flux that a single source may contribute to the measured CR flux Ψ comes from some (partial) deterministic information on nearby sources; for example, one knows the sources within some distance one by one, but not the ones that are far away; or one only knows that no source exists within some distance. In a schematic way, one may split the flux into the sum of a local contribution from known sources and a remote component, on which the only information is of statistical nature, as considered above:  (37)The situation may be intermediate, for instance one has a catalog whose completeness decreases with distance and age of the source, but accounting for this complication is unnecessary for what follows. For a given catalog, which is assumed to be complete within a given region of space-time, from which the contribution Ψloc can be “exactly” computed, one could repeat the reasoning of the previous section to determine the pdf associated to Ψfar. The cumulative distribution function C(ψ) of a remote source can be computed by carving out the space-time region covered by the catalog.

(37)The situation may be intermediate, for instance one has a catalog whose completeness decreases with distance and age of the source, but accounting for this complication is unnecessary for what follows. For a given catalog, which is assumed to be complete within a given region of space-time, from which the contribution Ψloc can be “exactly” computed, one could repeat the reasoning of the previous section to determine the pdf associated to Ψfar. The cumulative distribution function C(ψ) of a remote source can be computed by carving out the space-time region covered by the catalog.

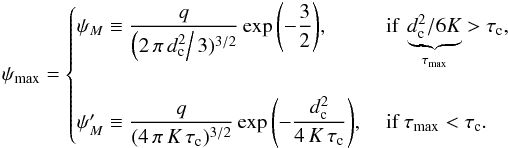

Let us develop instead a slightly simpler argument by determining the maximal flux ψcut ≡ ψmax one can expect from a source located at the inner boundary of the phase space region filled by the catalog. We can use it to find the closest and youngest object, hence deriving plausible values for the minimal distance and age below which no cosmic ray injection takes place. A source located at distance d, which exploded at time τ in the past contributes the flux  (38)In the most general case, we want to put lower limits on the distance (d>dc) and age (τ>τc) of remote sources in order to extract the cut-off, hereafter denoted by ψmax, above which the pdf p(ψ) vanishes. Constraining the distance d to be larger than dc translates into the maximal flux

(38)In the most general case, we want to put lower limits on the distance (d>dc) and age (τ>τc) of remote sources in order to extract the cut-off, hereafter denoted by ψmax, above which the pdf p(ψ) vanishes. Constraining the distance d to be larger than dc translates into the maximal flux  (39)which is reached for an age

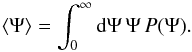

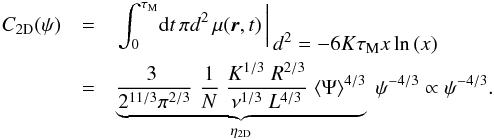

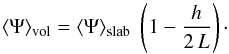

(39)which is reached for an age  . The flux ψM corresponds to the solid gray curve of Fig. 3. In this space-time diagram, the region below d = dc is excluded. From now on, two cases must be distinguished depending on the relative values of τmax and τc. If τmax>τc, the maximum flux that a remote source may provide is still ψM, a value reached at the space-time location (τmax,dc). On the other hand, if τmax<τc, the region to the left of the vertical line τ = τc being excluded, remote sources can no longer yield the flux ψM. The solid gray line lies entirely in the excluded portion of the phase space diagram of Fig. 3. In this regime, the maximal flux attainable by a remote source is

. The flux ψM corresponds to the solid gray curve of Fig. 3. In this space-time diagram, the region below d = dc is excluded. From now on, two cases must be distinguished depending on the relative values of τmax and τc. If τmax>τc, the maximum flux that a remote source may provide is still ψM, a value reached at the space-time location (τmax,dc). On the other hand, if τmax<τc, the region to the left of the vertical line τ = τc being excluded, remote sources can no longer yield the flux ψM. The solid gray line lies entirely in the excluded portion of the phase space diagram of Fig. 3. In this regime, the maximal flux attainable by a remote source is  . It corresponds to the dashed gray line that touches the allowed phase space region at location (τc,dc). In summary, imposing the constraint { d>dc,τ>τc } translates into ψ<ψmax with:

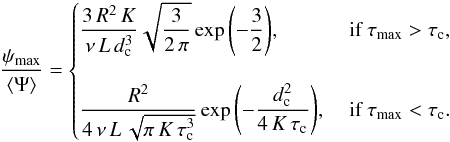

. It corresponds to the dashed gray line that touches the allowed phase space region at location (τc,dc). In summary, imposing the constraint { d>dc,τ>τc } translates into ψ<ψmax with:  (40)This value may be compared to the mean flux ⟨ Ψ ⟩ taken as the theoretical average corresponding to the slab model and derived in Appendix B. The relations (40) readily translate into

(40)This value may be compared to the mean flux ⟨ Ψ ⟩ taken as the theoretical average corresponding to the slab model and derived in Appendix B. The relations (40) readily translate into  (41)As a conservative example of this procedure, we have taken dc = 0.06 kpc as our lower limit on the distance, inspired by the closest known source G+276.5+19. In the same way, our lower boundary on the age comes from the youngest known source J0855-4644 for which τc = 2.7 kyr. These values are taken from the catalog compiled by Delahaye et al. (2010). Using relations (41), we have derived the ratio ψmax/ ⟨ Ψ ⟩ and plotted it in orange in Fig. 2 to compare it with ψc/ ⟨ Ψ ⟩. We observe a change of slope in the dependence of ψmax/ ⟨ Ψ ⟩ as a function of cosmic ray energy. This is particularly obvious in the MIN case (dotted orange curve) for which the diffusion coefficient is the smallest at low energies compared to the other propagation regimes MED and MAX. In the MIN configuration, the ratio ψmax/ ⟨ Ψ ⟩ increases with energy below 1 TeV. In this regime, K is small and the critical age

(41)As a conservative example of this procedure, we have taken dc = 0.06 kpc as our lower limit on the distance, inspired by the closest known source G+276.5+19. In the same way, our lower boundary on the age comes from the youngest known source J0855-4644 for which τc = 2.7 kyr. These values are taken from the catalog compiled by Delahaye et al. (2010). Using relations (41), we have derived the ratio ψmax/ ⟨ Ψ ⟩ and plotted it in orange in Fig. 2 to compare it with ψc/ ⟨ Ψ ⟩. We observe a change of slope in the dependence of ψmax/ ⟨ Ψ ⟩ as a function of cosmic ray energy. This is particularly obvious in the MIN case (dotted orange curve) for which the diffusion coefficient is the smallest at low energies compared to the other propagation regimes MED and MAX. In the MIN configuration, the ratio ψmax/ ⟨ Ψ ⟩ increases with energy below 1 TeV. In this regime, K is small and the critical age  exceeds the lower bound τc. According to Eq. (41), the ratio ψmax/ ⟨ Ψ ⟩ scales as K and increases with cosmic ray energy. At approximately 1 TeV, a change of regime occurs when τmax becomes smaller than τc, and the ratio ψmax/ ⟨ Ψ ⟩ scales as 1 /

exceeds the lower bound τc. According to Eq. (41), the ratio ψmax/ ⟨ Ψ ⟩ scales as K and increases with cosmic ray energy. At approximately 1 TeV, a change of regime occurs when τmax becomes smaller than τc, and the ratio ψmax/ ⟨ Ψ ⟩ scales as 1 / , decreasing with energy. The same trend is featured by the MED (dashed orange) and MAX (solid orange) curves, although in a milder way.

, decreasing with energy. The same trend is featured by the MED (dashed orange) and MAX (solid orange) curves, although in a milder way.

|

Fig. 3 Solid gray line: locus of source ages and distances giving the same flux as the maximal one ψM yielded by a source located at distance dc from the Earth, attained for |

As clearly shown in Fig. 2, the cut-off ψmax imposed by the catalog of local and recent sources is much more constraining than the value ψc yielded by the causality argument. The orange curves are basically always below the gray lines, which they intersect at energies in excess of 200 TeV, and for values of the ratio ψcut/ ⟨ Ψ ⟩ of approximately 1. The use of approximation (31) is therefore possible below the orange curves. It is effectively interesting for values of the cut-off ψmax larger than the average flux ⟨ Ψ ⟩. This is not the case for the MAX configuration. For the MIN and MED propagation models, the stable law (31) can still be applied on a fairly limited region in energy and total flux Ψ. As featured in Fig. 2, the ratio ψmax/ ⟨ Ψ ⟩ reaches a maximal value of ten at an energy of 1 TeV. The constraint from the catalog is therefore relatively strict. Most of the populations of sources that would otherwise lead to large values of the cosmic ray flux are excluded from the statistical analysis when the age and distance constraints are imposed. A word of caution is in order though since the completeness of a catalog is always questionable. Setting lower limits on the age and distance of nearby sources may be relatively subjective and eventually hazardous. That is why we have disregarded them in the Monte Carlo simulations that we discuss in the following section.

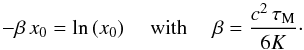

4. Comparison with numerical simulations

Until now, we have determined the range of fluxes Ψ for a given propagation setup over which the approximation described in Sect. 3.3 holds. However, identically to how the combined pdf P(Ψ) tends toward a Gaussian law when the conventional central limit theorem applies, the convergence to a stable law is only an asymptotic behavior. While exact results on the closeness to a stable law at finite N may exist in the mathematical literature, generic results are not useful in our case where a cut-off may be imposed on the individual flux pdf p(ψ). We thus need to validate the reliability of the analytical theory via extensive numerical simulations. Our aim is to study how the total flux pdf P(Ψ) converges to or departs from the stable law Eq. (25).

|

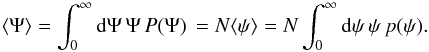

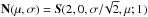

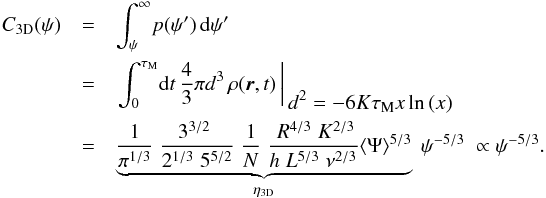

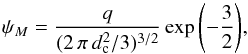

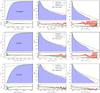

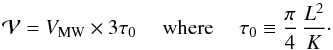

Fig. 4 For each row, the left and right cumulative blue histograms of 106 Monte Carlo realizations of Galactic populations of CR sources are displayed in the left and right panels, respectively, whereas the pdf P(Ψ) stands in the middle. The MED propagation model is used without taking into account convection, diffusive reacceleration and spallations. From top to bottom, the CR kinetic energy has been set equal to 100 GeV, 1 TeV, and 10 TeV. The solid green line indicates the theoretical prediction for the 2D model of the Galactic magnetic halo, whereas the dashed red curve corresponds to the 3D case. The residuals between theory and simulations are displayed below each histogram with their 1σ Poissonian error. |

4.1. Simulation settings

For each realization of Ψ, we simulate a Galactic population of sources in the framework of the 3D model of Eq. (6). Each source is generated at the random position xi within the Galactic disk, and with the random age τi. The positions xi are taken within an idealized cylindrical Milky Way Galaxy of radius R and half-thickness h, so that the spatial density of sources is homogeneous within the disk. The age of each source τi is taken between 0 and T, where T is the integration time of the simulation and is chosen to be 3πL2/ 4K, that is, approximately three times the typical diffusion time within the magnetic halo. The number of sources of a realization is N = Tν, with ν the rate of SN explosions, taken here to be three per century. Note that for a constant explosion rate of 3 SN/century and the choosen T, this means considering only sources younger than about 133 Myr at 100 GeV, so still much younger than the age of the Galaxy. In our model, this number reaches approximately 4 × 106 at a reference energy of 100 GeV. The spectrum of particles injected by each source is defined as  (42)where ℛ stands for the CR rigidity while the normalization q0 is set equal to 1 GeV-1. Notice that q does not come into play insofar as it can be factored out from our numerical results. The flux yielded by a source is computed assuming the CR propagation model MED, where we have safely neglected the effects of convection, diffusive reacceleration and spallations, which become negligible at the energies considered in our simulations. Without loss of generality, fluxes are derived for an observer placed at the radial and vertical centers of the Galactic disk, that is, at r = z = 0. The contributions ψi of the N sources of a given population are added to obtain one realization of Ψ. When the causality condition discussed in Sect. 3.4 is implemented, the contributions from sources lying outside the light cone of the observer are not included. We evaluated Ψ for each decade of energy between 100 GeV and 1 PeV. As we are interested in the pdf of the random variable Ψ, we simulated “only” 106 realizations of an idealized cylindrical Milky Way Galaxy, since we are not concerned with probabilities below the 10-5 level.

(42)where ℛ stands for the CR rigidity while the normalization q0 is set equal to 1 GeV-1. Notice that q does not come into play insofar as it can be factored out from our numerical results. The flux yielded by a source is computed assuming the CR propagation model MED, where we have safely neglected the effects of convection, diffusive reacceleration and spallations, which become negligible at the energies considered in our simulations. Without loss of generality, fluxes are derived for an observer placed at the radial and vertical centers of the Galactic disk, that is, at r = z = 0. The contributions ψi of the N sources of a given population are added to obtain one realization of Ψ. When the causality condition discussed in Sect. 3.4 is implemented, the contributions from sources lying outside the light cone of the observer are not included. We evaluated Ψ for each decade of energy between 100 GeV and 1 PeV. As we are interested in the pdf of the random variable Ψ, we simulated “only” 106 realizations of an idealized cylindrical Milky Way Galaxy, since we are not concerned with probabilities below the 10-5 level.

4.2. Simulation results

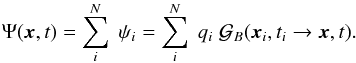

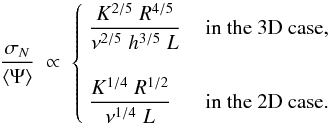

In Fig. 4, we show the results of the simulations that we carried out at 100 GeV, 1 TeV, and 10 TeV, with the light cone cut-off switched on. In each row, the left and right cumulative blue histograms of 106 realizations of Galactic populations of CR sources are displayed in the left and right panels, respectively, whereas the pdf P(Ψ) stands in the middle. Once normalized to unity, the cumulative histograms on the left and on the right directly yield the probability of getting a population sourcing a flux smaller or larger, respectively, than the value Ψ/ ⟨ Ψ ⟩ sim read on the horizontal axis. Notice that ⟨ Ψ ⟩ sim denotes the value of the flux averaged over the 106 realizations of our Monte Carlo. Due to finite sampling, results start to become unreliable for probabilities below 10-5, and are obviously not even defined below 10-6.

In each of the panels of Fig. 4, we also display the left and right cumulative distributions as well as the pdf corresponding to the theoretical predictions of Sect. 3.2. The dashed red line stands for the 3D case (i.e., α = 5/3) whereas the solid green curve refers to the 2D case (i.e., α = 4/3). In the argument of the stable distribution of Eq. (25), the average flux ⟨ Ψ ⟩ must be calculated exactly, assuming the same Milky Way magnetic halo as in the Monte Carlo simulations, namely a disk of radius 20 kpc and a maximal age for the SN explosions of T. Depending on whether the theoretical prediction is 2D or 3D, the sources are distributed along the vertical direction according to Eq. (6). As shown in the Appendix, the precise value of ⟨ Ψ ⟩ is slightly different from the approximation of Eq. (22). Once derived, the argument of the stable distribution (25) must be carefully rescaled to match the variable Ψ/ ⟨ Ψ ⟩ sim used on the horizontal axes of Fig. 4. For completeness, the residuals between the models (2D and 3D) and the histograms are also displayed below each panel. The shaded areas correspond to the one-sigma Poissonian uncertainty coming from the histogram binning. Finally, the dashed-dotted blue line depicts the results expected from a Gaussian distribution with the same average and variance as given by the simulations. That variance is finite insofar as the light cone cut-off condition is implemented.

For energies not in excess of 10 TeV, the first noticeable result is the remarkable good convergence of the simulations toward the analytical model based on stable distributions. At low fluxes, the pdf and the left cumulative distribution of the simulations are very well matched by the theoretical 2D curve, and this holds whatever the energy considered. On the other hand, the theoretical prediction of the 3D model is always the closest to the simulations for large fluxes. Whatever the regime, all histograms reproduce the theoretical probability within  (10%) down to the 10-4 level, and even with the order of magnitude below 10-5. Note that whatever the energy in the range extending from 100 GeV to 1 PeV, the simulations are not at all reproduced by the Gaussian law, featured by the dashed-dotted blue lines, which would be the limiting case for an infinite number N of sources according to the conventional central limit theorem. Stable laws are, on the contrary, an excellent approximation to our results, even though a cut has been imposed on the single source pdf p(ψ) from causality considerations and one would naively expect P(Ψ) to relax toward a Gaussian law.

(10%) down to the 10-4 level, and even with the order of magnitude below 10-5. Note that whatever the energy in the range extending from 100 GeV to 1 PeV, the simulations are not at all reproduced by the Gaussian law, featured by the dashed-dotted blue lines, which would be the limiting case for an infinite number N of sources according to the conventional central limit theorem. Stable laws are, on the contrary, an excellent approximation to our results, even though a cut has been imposed on the single source pdf p(ψ) from causality considerations and one would naively expect P(Ψ) to relax toward a Gaussian law.

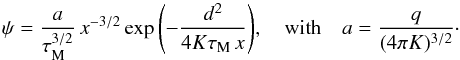

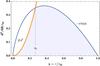

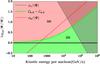

At fixed CR energy, we observe a transition occurring at some critical value ψh of the flux Ψ, above which the 3D (i.e., α = 5/3) stable law yields a better approximation than the 2D (i.e., α = 4/3) distribution. In order to derive an estimate for ψh, we should keep in mind that stable laws tend to be dominated by the contribution from a single object. The transition between the 2D and 3D regimes of the total flux pdf P(Ψ) should result from an evolution in the behavior of the individual flux pdf p(ψ) with respect to ψ. This change is, in turn, related to a modification in the spatial distribution of the sources. We remark that the closer the object, the higher the flux it yields. Let us now make an educated guess and define ψh as the critical flux above which the dominant sources are statistically very close to the observer, at a distance less than the half-thickness h of the Galactic disk. As seen by the observer, they are isotropically distributed and the 3D model applies. As objects yielding a flux less than ψh are farther, their spatial distribution reflects the flatness of the Galactic disk and the 2D model is best suited to describe the simulations. In the phase space diagram of Fig. 3, the flux ψh corresponds to the solid gray iso-flux curve where dc is replaced by h. A value can be derived from Eq. (39) and translates to the red solid line of Fig. 5 that depicts the behavior of the ratio ψh/ ⟨ Ψ ⟩ as a function of CR kinetic energy. That curve features the same trend as our results. As the CR energy increases, the 2D to 3D transition occurs at higher values of the flux relative to the average ⟨ Ψ ⟩. At low CR energy, the agreement is not very good though. The transition flux ψh falls beneath the average flux for energies below 300 GeV, a trend that is not observed in our simulations. In the upper-left panel of Fig. 4, the left cumulative histogram is very well matched by the 2D stable law. Furthermore, defining ψh as the maximal flux yielded by sources located at a distance dc exactly equal to h is somewhat arbitrary.

|

Fig. 5 Regions in the flux versus energy plane where the 2D or 3D stable law is best suited to computing the probability of an excess above the mean (MED propagation model assumed). For a fluctuation lying in the light red (green) region, one should use the 3D (2D) approximation corresponding to the index α = 5/3 (α = 4/3). The dashed green curve signals the transition between these regimes as estimated from the equality of the cumulative distributions, |

If we are interested in quantifying the probability of measuring a particular flux excess with respect to the mean, as is the case for the applications discussed in Sect. 5, we can alternatively define ψh as the value for which the 2D and 3D right cumulative distributions C(ψ) are equal. Following the notations of Eq. (15), the value of ψh is now given by the condition  . This leads to the dashed green line of Fig. 5 whose behavior with respect to CR energy exhibits the same trend as the red curve of the previous estimate. This time, the transition flux is always larger than the average ⟨ Ψ ⟩. The dashed green line separates the plot in two distinct regions. In the light green domain extending below the frontier, the simulations are well explained by the theoretical 2D stable law (i.e., α = 4/3). In the light red part of the diagram, the 3D stable distribution (i.e., α = 5/3) provides the best approximation. Above 1 TeV, our new value of the transition flux is smaller than the previous estimate derived from the Galactic disk half-thickness argument. Indeed, the cumulative distribution C(ψ) is obtained via an integration of the pdf p(ψ) from ψ upward. It contains information pertaining to the high-flux behavior of the pdf, and feels the 3D regime for smaller values of the flux compared to the other approach. Below 100 GeV, the 3D and 2D stable laws should be used above and beneath the mean flux, respectively, indicated in the plot by the solid black horizontal line.

. This leads to the dashed green line of Fig. 5 whose behavior with respect to CR energy exhibits the same trend as the red curve of the previous estimate. This time, the transition flux is always larger than the average ⟨ Ψ ⟩. The dashed green line separates the plot in two distinct regions. In the light green domain extending below the frontier, the simulations are well explained by the theoretical 2D stable law (i.e., α = 4/3). In the light red part of the diagram, the 3D stable distribution (i.e., α = 5/3) provides the best approximation. Above 1 TeV, our new value of the transition flux is smaller than the previous estimate derived from the Galactic disk half-thickness argument. Indeed, the cumulative distribution C(ψ) is obtained via an integration of the pdf p(ψ) from ψ upward. It contains information pertaining to the high-flux behavior of the pdf, and feels the 3D regime for smaller values of the flux compared to the other approach. Below 100 GeV, the 3D and 2D stable laws should be used above and beneath the mean flux, respectively, indicated in the plot by the solid black horizontal line.

|

Fig. 6 Same as in Fig 4 with a CR kinetic energy of 100 TeV. The upper row features the results of a simulation where the causality constraint is implemented whereas in the lower row, all sources are taken into account in the calculation of the flux Ψ, including those lying outside the light cone of the observer. |

The shaded area in the upper-right corner of Fig. 5 lies above the dashed gray curve featuring the ratio ψc/ ⟨ Ψ ⟩. In this region, causality is expected to limit the statistical excursions of the flux toward high values, and the stable law should overestimate the actual pdf P(Ψ). This trend is already present in the lower-right panel of Fig. 4, where the right cumulative blue histogram lies below the dashed red curve of the 3D stable law. For the simulations performed at 100 GeV and 1 TeV, the agreement is excellent. According to Fig. 5, the light cone cut-off starts to seriously affect the (not too large) fluctuations of the flux above an energy of 10 TeV. To illustrate this effect, we show the results of simulations realized at 100 TeV in Fig. 6, for which the causality constraint has been switched on (upper row) or off (lower row). The behavior of the pdf P(Ψ) is very different between the two cases. In the upper row, sources lying outside the light cone of the observer are removed. The discrepancy between the simulated histograms and the stable law predictions is striking, even for fluctuations only 30% larger than the mean. If, now, all the sources are allowed to contribute to the flux Ψ, the agreement between the histograms and the stable laws is recovered. In the lower-right panel of Fig. 6, the 2D prediction  for the right cumulative distribution accounts well for the Monte Carlo results up to a flux approximately ten times larger than the mean. For larger fluctuations, the 3D function

for the right cumulative distribution accounts well for the Monte Carlo results up to a flux approximately ten times larger than the mean. For larger fluctuations, the 3D function  is a good match to the histograms.

is a good match to the histograms.

One last remark is in order. According to Fig. 5, the ratio ψc/ ⟨ Ψ ⟩ drops below unity for energies above 100 TeV. In this energy range, we expect the effect of the causal cut to be so important that stable laws are poor representations of the actual pdf P(Ψ), which they overshoot by a large margin. As discussed above, this occurs as soon as the flux exceeds its mean value. But for values of Ψ lower than the average ⟨ Ψ ⟩, the 2D stable law prediction (solid green curve) is still in excellent agreement with the histograms, whether the light cone cut-off condition is implemented or not. In this regime, small fluxes are involved with two consequences. Distant sources dominate the faint signal received by the observer and their spatial distribution reflects the flatness of the Galactic disk, which is 2D in nature. Moreover, they yield a flux ψ well below the light cone cut-off ψc to which they are totally insensitive. Notice the excellent agreement between the simulations and the 2D stable law predictions in all the left panels of Figs. 4 and 6. We must finally conclude that for fluxes smaller than the mean, the pdf P(Ψ) has asymptotically relaxed toward the (2D) stable distribution of Eq. (25), even though this is not the case in the high-flux regime. Stable laws seem to be robust descriptions of the pdf P(Ψ) as soon as the condition of Eq. (16) is fulfilled over some range of values of ψ.

Probability that a source configuration leads to a 3σ fluctuation above and below the flux measured by AMS02 and PAMELA.

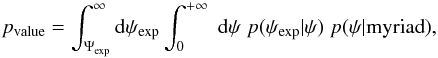

5. Applications