Fig. 2


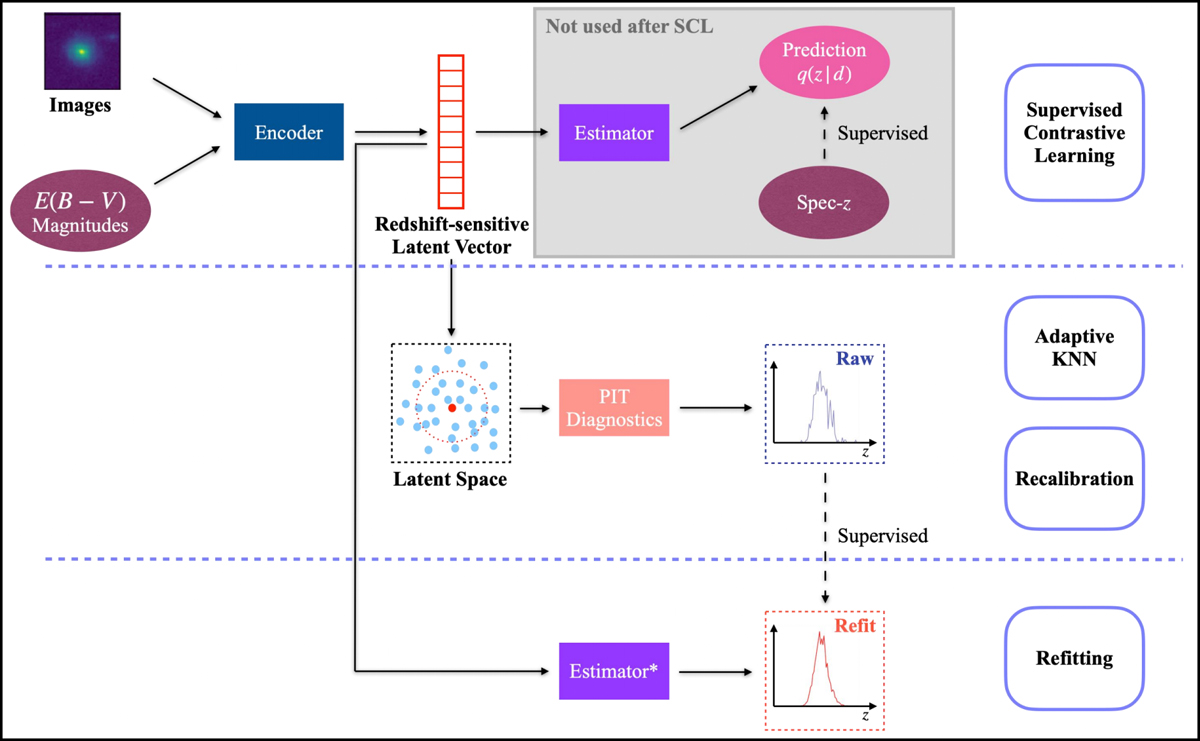

Graphic illustration of our method, CLAP, for photometric redshift probability density estimation. The development of a CLAP model consists of several procedures: supervised contrastive learning (SCL), adaptive KNN, recalibration, and refitting. The SCL framework is based on neural networks. It uses an encoder network to project multi-band galaxy images and additional input data (i.e. galactic reddening E(B – V), magnitudes) to low-dimensional latent vectors, which form a latent space that encodes redshift information and on which a distance metric is defined. The spectroscopic redshift labels are used to supervise the redshift outputs predicted by an estimator network, which drives the extraction of redshift information, but the trained estimator and its outputs are no longer used once the latent space is established (indicated by the shaded region). These outputs are uncalibrated and should not be regarded as the final estimates produced by CLAP. The adaptive KNN and the KNN-enabled recalibration are implemented locally on the latent space via diagnostics with the probability integral transform (PIT), constructing raw probability density estimates from the known redshifts of the PIT-selected nearest neighbours. The raw estimates are then used as labels to retrain the estimator from scratch in a refitting procedure with the trained encoder fixed, restoring an end-to-end model ready to process imaging data and produce the desired estimates. Lastly, we combine the estimates from an ensemble of CLAP models developed following these procedures (not shown in the figure).
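
The KNN step described in the caption can be illustrated with a minimal sketch. It is not the CLAP implementation: the function names, the Euclidean distance metric, the fixed `k`, the histogram binning, and the random stand-in data are all assumptions made for illustration. The PIT-based selection of neighbours and the recalibration are not reproduced here. The sketch only shows how a raw density estimate can be built from the known redshifts of a query's nearest neighbours in a latent space, and how a PIT value is read off that estimate.

```python
import numpy as np

def knn_redshift_density(latents, redshifts, query, k, bin_edges):
    """Histogram density built from the redshifts of the k nearest latent neighbours.

    Assumes at least one neighbour redshift falls inside bin_edges.
    """
    dists = np.linalg.norm(latents - query, axis=1)  # Euclidean metric (assumed)
    nearest = np.argsort(dists)[:k]                  # indices of the k closest points
    counts, _ = np.histogram(redshifts[nearest], bins=bin_edges)
    widths = np.diff(bin_edges)
    return counts / (counts.sum() * widths)          # integrates to 1 over the bins

def pit(density, bin_edges, z_true):
    """Probability integral transform: CDF of the estimate evaluated at z_true."""
    widths = np.diff(bin_edges)
    cdf = np.concatenate([[0.0], np.cumsum(density * widths)])
    return float(np.interp(z_true, bin_edges, cdf))

# Hypothetical stand-in data: random latent vectors and redshifts in [0, 1].
rng = np.random.default_rng(0)
latents = rng.normal(size=(500, 16))
redshifts = rng.uniform(0.0, 1.0, size=500)
bin_edges = np.linspace(0.0, 1.0, 51)

density = knn_redshift_density(latents, redshifts, latents[0], k=50, bin_edges=bin_edges)
pit_value = pit(density, bin_edges, redshifts[0])
```

For a well-calibrated set of estimates, the PIT values collected over many galaxies with known redshifts should be close to uniformly distributed on [0, 1], which is what makes the PIT usable as a local diagnostic.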
