| Issue |

A&A

Volume 529, May 2011

|

|

|---|---|---|

| Article Number | A27 | |

| Number of page(s) | 28 | |

| Section | Numerical methods and codes | |

| DOI | https://doi.org/10.1051/0004-6361/201014949 | |

| Published online | 24 March 2011 | |

SEREN – a new SPH code for star and planet formation simulations

Algorithms and tests⋆

1

Department of Physics and AstronomyUniversity of Sheffield, Hicks Building, Hounsfield Road, Sheffield S3 7RH, UK

e-mail: D.Hubber@sheffield.ac.uk

2

School of Physics and Astronomy, Cardiff University, Queens Buildings, The Parade, Cardiff, CF24 3AA, Wales, UK

3

Institute for Theoretical Astrophysics, University of Oslo, Pb 1029 Blindern, 0315 Oslo, Norway

4

Centre of Mathematics for Applications, University of Oslo, Pb 1053 Blindern, 0316 Oslo, Norway

5 Astronomical Institute, Academy of Sciences of the Czech Republic, Boční II 1401, 141 31 Praha 4, Czech Republic

Received: 6 May 2010

Accepted: 29 January 2011

We present SEREN, a new hybrid Smoothed Particle Hydrodynamics and N-body code designed to simulate astrophysical processes such as star and planet formation. It is written in Fortran 95/2003 and has been parallelised using OpenMP. SEREN is designed in a flexible, modular style, thereby allowing a large number of options to be selected or disabled easily and without compromising performance. SEREN uses the conservative “grad-h” formulation of SPH, but can easily be configured to use traditional SPH or Godunov SPH. Thermal physics is treated either with a barotropic equation of state, or by solving the energy equation and modelling the transport of cooling radiation. A Barnes-Hut tree is used to obtain neighbour lists and compute gravitational accelerations efficiently, and an hierarchical time-stepping scheme is used to reduce the number of computations per timestep. Dense gravitationally bound objects are replaced by sink particles, to allow the simulation to be evolved longer, and to facilitate the identification of protostars and the compilation of stellar and binary properties. At the termination of a hydrodynamical simulation, SEREN has the option of switching to a pure N-body simulation, using a 4th-order Hermite integrator, and following the ballistic evolution of the sink particles (e.g. to determine the final binary statistics once a star cluster has relaxed). We describe in detail all the algorithms implemented in SEREN and we present the results of a suite of tests designed to demonstrate the fidelity of SEREN and its performance and scalability.

Key words: hydrodynamics / methods: numerical / stars: formation

Further information and additional tests of SEREN can be found at the web-page http://www.astro.group.shef.ac.uk/seren.

© ESO, 2011

1. Introduction

Star formation problems are amongst the most demanding in computational astrophysics, requiring a large number of physical processes to be modeled (e.g. hydrodynamics, self-gravity, optically thick radiative cooling, gas chemistry, ionization, gas-ion coupling, magneto-hydrodynamics, radiative and mechanical feedback) over a very large range of physical conditions (i.e. gas densities from ~ 10-20 g cm-3 to ~ 10 + 1 g cm-3, and gas temperatures from ~ 10 K to ~ 107 K). It is non-trivial to include all of the above physics in a single code which works over such a wide range of physical conditions and produces accurate results in an efficient manner. There are also often multiple methods available to model these processes, and one must choose the most appropriate and/or optimal method to study a given problem. The principal choice is whether to use an Eulerian grid-based code to simulate the fluid dynamics, or a Lagrangian particle-based code. Grid-based schemes are capable of modelling incompressible fluid dynamics accurately and efficiently, but for highly compressible fluids, where the density can take a large range of values, expensive adaptive-mesh-refinement techniques are required. Particle-based schemes, such as smoothed particle hydrodynamics, do not model hydrodynamical processes as well as grid-based schemes (Agertz et al. 2007), but they can model highly compressible fluids through a large range of scales with ease. This makes particle codes well suited to modelling self-gravitating fluids such as those involved in star formation. A number of publicly available codes using either static or adaptive-mesh-refinement grids (e.g. ZEUS, Stone & Norman 1992;FLASH, Fryxell et al. 2000; RAMSES, Teyssier 2002; ENZO, Abel et al. 2002) or particles (e.g. GADGET, Springel et al. 2001; GADGET2, Springel 2005; VINE, Wetzstein et al. 2009; Nelson et al. 2009) are available, and have been applied to a variety of different phenomena in interstellar gas dynamics, star and galaxy formation, and cosmology.

Here we present SEREN, a new multi-dimensional self-gravitating hydrodynamics and N-body code. SEREN uses the smoothed particle hydrodynamics (SPH) algorithm to model fluid dynamics, in combination with tree-gravity and hierarchical block-timestepping routines. It also includes a variety of specialist routines designed to tackle star and planet formation problems, such as sink particles (Bate et al. 1995), and a 4th order Hermite N-body integrator (Makino & Aarseth 1992) to follow the ballistic evolution of a star cluster once its gas has been accreted or dispersed.

The purposes of this paper are (i) to describe the algorithms implemented in SEREN, and (ii) to demonstrate the fidelity of SEREN – i.e. that the algorithms are coded correctly and reproduce known results in tests, so that future publications presenting simulations performed with SEREN can refer to this paper for a full description of the code. In Sect. 2, we give a brief overview of SEREN and all of its main features,and compare these features with those available in other available astrophysical SPH codes. In Sect. 3, we describe in detail the SPH algorithms used. In Sect. 4, we describe the implementation of self-gravity in SPH. In Sect. 5, we briefly discuss the available thermal physics modules, including the transport of heating, cooling and ionizing radiation. In Sect. 6, we discuss the integration schemes and time-stepping criteria. In Sect. 7, we discuss the implementation of sink particles. In Sect. 8, we discuss the 4th order Hermite N-body integrator and the additional features contained within it (e.g. binary identification). In Sect. 9, we discuss the implementation of the Barnes-Hut tree, and how it is used to determine neighbour lists and calculate gravitational accelerations. In Sect. 10, we present a large suite of tests, to demonstrate that SEREN simulates correctly the physical processes it is intended to capture. In Sect. 11, we discuss the memory optimisations used. In Sect. 12, we discuss the techniques used to parallelise SEREN using OpenMP, and we demonstrate how SEREN scales on shared-memory machines. In Sect. 13, we outline the major features that are still to be implemented.

2. Overview of SEREN and other codes

SEREN is a multi-dimensional self-gravitating SPH and N-body code. It has been designed for star and planet formation problems, but it can easily be adapted to simulate other astrophysical phenomena. SEREN is written in Fortran 95 (with some Fortran 2003 features) and is parallelised using OpenMP. It is written in a highly modular style, with a large number of features that can be switched on or off using Makefile options and conditional compilation flags. It can be compiled for one, two or three-dimensions, although it is most optimal in three-dimensional mode. We list here the main algorithms and features included in SEREN:

-

standard SPH (e.g. Monaghan 1992), “grad-h” SPH (Springel & Hernquist 2002; Price & Monaghan 2004b), and Godunov SPH(Inutsuka 2002; Cha & Whitworth 2003);

-

kernel-softened self-gravity (Price & Monaghan 2007b);

-

artificial dissipation (Lattanzio & Monaghan 1985; Balsara 1995; Monaghan 1997; Morris & Monaghan 1997; Price 2008);

-

2nd-order Runge-Kutta, 2nd-order Predictor-Corrector and 2nd-order kick-drift-kick and drift-kick-drift Leapfrog integration schemes;

-

hierarchical block time-stepping (e.g. Hernquist & Katz 1989);

-

periodic boundary conditions, including periodic gravity (Hernquist et al. 1991; Klessen 1997);

-

several particle types: self-gravitating gas particles, non-gravitating inter-cloud particles, static or non-static boundary particles;

-

octal-spatial trees for neighbour-searching and gravity (Barnes & Hut 1986; Pfalzner & Gibbon 1996);

-

simple isothermal, polytropic or barotropic equations of state, solution of the energy equation with associated radiation transport (Stamatellos et al. 2007), and propagation of ionizing radiation using HEALPix rays (Bisbas et al. 2009);

-

sink particles (Bate et al. 1995);

-

4th order Hermite N-body integrator (Makino & Aarseth 1992);

-

identification of binaries and calculation of binary properties (e.g. Aarseth 2003).

We control which algorithms are used in SEREN using Makefile options, so only the employed subroutines are compiled and included in the executable file. The parameters which determine how the selected algorithms function are set in a separate parameters file. In Sects. 3 to 9, we describe in more detail the implementation of these algorithms in SEREN.

Several other SPH codes are available to the astrophysics community for performing simulations of self-gravitating hydrodynamics. While these codes share a common set of basic features, most contain specialised algorithms to model certain astrophysical processes, or are optimised to perform a particular class of simulation. We briefly discuss the algorithms and features in other available astrophysical SPH codes, in order to highlight to potential users the relative merits of each code for solving particular problemsand how they contrast with the features implemented in SEREN. We only discuss here those codes that have a refereed or archived publication containing details of the implementation and tests.

2.1. GADGET and GADGET 2

GADGET (Springel et al. 2001) and GADGET 2 (Springel 2005) are written in C and parallelised using MPI. While the original GADGET code was designed to investigate galaxy formation problems, GADGET 2 was designed to investigate large-scale cosmological problems such as galaxy cluster formation and the formation of structure in the Universe (e.g. Springel et al. 2005). MPI can be used very efficiently when the work distributed to all CPUS is automatically load-balanced. Therefore, the approximately uniform (large-scale) density distribution used in cosmological simulations is a problem that an MPI code like GADGET 2 can handle efficiently on very large clusters with 1000 s of CPUs (e.g. Springel 2005). GADGET 2 uses a Peano-Hilbert space-filling curve in order to determine how to distribute the particles amongst the available processors. This improves the scalability, by reducing communication overheads. GADGET 2 uses a conservative SPH formulation combined with solving the entropy equation for the thermal physics (Springel & Hernquist 2002). Particle properties can be integrated using either a Leapfrog-KDK or Leapfrog-DKD integration scheme, in combination with a hierarchical block-timestep scheme. The calculation of gravitational forces is split into short and long-range computations; short range forces are computed using a Barnes-Hut tree (which is efficient for clumpy density distributions), and long-range forces are computed using a particle-mesh scheme (which is efficient for smoother density distributions). GADGET 2 contains the ability to model several different particle types relevant to galaxy and cosmology simulations, namely gas, cold-dark matter and star particles. Star particles usually represent a whole cluster of stars, in comparison to sink particles in SEREN which represent individual stars, or unresolved small, multiple systems.

2.2. GASOLINE

GASOLINE (Wadsley et al. 2004) is written in Fortran and is parallelised for shared-memory machines. GASOLINE uses the standard formulation of SPH (e.g. Monaghan 1992) with (α, β) viscosity (Monaghan & Gingold 1983) and a Balsara switch (Balsara 1995) for reducing unwanted shear viscosity. GASOLINE can use two separate trees; a K-D tree for neighbour searching and a Barnes-Hut octal tree (Barnes & Hut 1986) for calculating gravitational forces. The code computes multipole moments up to hexadecapole-order to compute gravitational forces efficiently, but only uses the geometrical MAC for evaluating the cell-interaction list. Ewald summation is also available for simulating periodic boxes. GASOLINE contains a number of options for treating thermal physics, including an implicit integrator for solving the energy equation. A number of cooling and ionisation processes can be selected, as well as a simple heating-feedback prescription due to star formation. GASOLINE uses a Leapfrog-KDK integration scheme for advancing particle positions and velocities, along with a standard hierarchical block-timestep scheme.

2.3. VINE

VINE (Wetzstein et al. 2009; Nelson et al. 2009) is written in Fortran 95 and parallelised using OpenMP. As with GADGET and GADGET 2, VINE has been designed to investigate galaxy and cosmological problems. VINE has also been parallelised using OpenMP. It has been tested on up to 128 CPUs and scales well provided the problem size is large enough. VINE uses a nearest-neighbour binary tree (e.g. Benz et al. 1990) to compute gravitational forces and to search for neighbours efficiently. VINE also has the facility to use GRAPE boards (e.g. Makino et al. 2003) and thus can significantly speed up the calculation of the gravitational forces for particular problems. VINE does not use a conservative form of SPH, but rather uses the traditional form of SPH (Monaghan 1992). VINE contains a variety of particle types similar to GADGET 2, such as gas, cold-dark matter and star particles.

2.4. MAGMA

MAGMA (Rosswog & Price 2007) is an Smoothed Particle Magneto-hydrodynamics (SPMHD) code which is parallelised using OpenMP. MAGMA has been designed to model compact objects, such as binary-neutron stars. MAGMA uses the conservative “grad-h” SPH scheme for computing hydro and gravitational forces, and “Euler potentials” for solving the ideal MHD equations; this enforces div B = 0 by design. The code includes dissipative artificial viscosity, conductivity and resistivity terms, with switches such as time-dependent viscosity (Morris & Monaghan 1997) for reducing dissipation. Thermal physics includes an equation-of-state for modelling the nucleon fluid at super-nuclear densities, and a method for modelling neutrino emission. No additional particle types (e.g. sink particles) are included. Gravitational forces are computed with a binary tree (Benz et al. 1990) and particle-particle interactions are computed with kernel-softened gravity (Price & Monaghan 2007b). Particle positions and velocities are integrated with a second-order predictor-corrector scheme, using an individual timestep scheme.

2.5. EvoL

EvoL (Merlin et al. 2010) is written in Fortran 95 and is parallelised using MPI. EvoL was designed to investigate cosmological structure, galaxy-cluster and galaxy formation problems, similar to GADGET 2 and VINE. As with other galaxy/cosmological codes, EvoL can model self-gravitating gas, cold dark matter and star particles. EvoL models the gas-dynamics using a modified “grad-h” SPH formulation, and also contains terms that correct for unequal-mass particles. Gravity is calculated using a Barnes-Hut tree, and neighbouring particle gravitational forces are computed with a conservative scheme similar to Price & Monaghan (2007b), but using the number density instead of the mass density, which again is beneficial when using unequal mass particles. EvoL uses a Leapfrog-KDK scheme for integrating particle positions and velocities. A standard hierarchical block-timestep scheme is employed, along with the instantaneous timestep-reduction procedure (Saitoh & Makino 2009) to ensure the timesteps used for neighbouring particles are not greatly different. EvoL also contains the ability to evolve the particle positions using the X-SPH method (e.g. Monaghan 1992) which can prevent particle interpenetration.

3. Smoothed particle hydrodynamics

SPH is a Lagrangian hydrodynamics scheme which uses particles to represent the fluid (Gingold & Monaghan 1977; Lucy 1977). SEREN contains three different variants of SPH: the standard implementation (Monaghan 1992), the conservative “grad-h” implementation (Springel & Hernquist 2002; Price & Monaghan 2004b) and the Godunov implementation (Cha & Whitworth 2003; Eqs. (9), (10)). The “grad-h” implementation is the favoured, default implementation in SEREN.

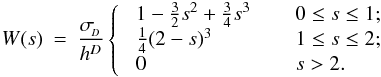

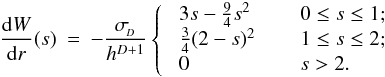

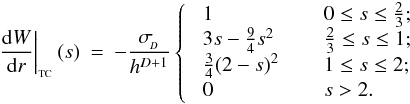

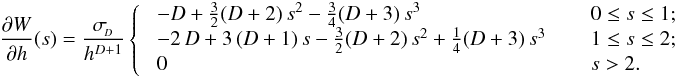

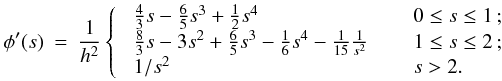

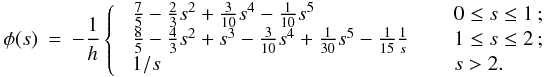

In SPH, particle properties are smoothed over a length scale, h, called the smoothing length, using a weighting function, W(r,h), called the kernel function. The fluid is thus still a continuous medium despite being represented by a finite number of discrete particles. The volume over which a particle is smoothed is called its smoothing kernel. Particle i interacts hydrodynamically with all other SPH particles, j, that lie inside the smoothing kernel of i (gather), and/or whose own smoothing kernels overlap i (scatter). These particles are referred to as the neighbours of i. The smoothing length determines the spatial resolution and can in principle be set to any value. The simplest choice is to keep h uniform in space and constant in time, throughout the simulation. However, to take advantage of the Lagrangian nature of SPH, it is often desirable to set the smoothing length of an SPH particle to be of order the local mean particle separation. The resolution then automatically adapts to the local conditions, providing an adaptability that is much more difficult to achieve with grid codes. SEREN contains two choices for the kernel function, both of which have finite extent, rMAX = ℛh: the M4 cubic spline kernel (Monaghan & Lattanzio 1985) with ℛ = 2, and the quintic spline kernel (Morris 1996) with ℛ = 3. Detailed properties of these kernels are given in Appendix A.

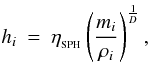

Since “grad-h” is the default implementation of SPH in SEREN, we briefly describe its main features here. In order to guarantee conservation of momentum, angular momentum and energy, the SPH fluid equations are derived from the Euler-Lagrange equations. This requires that the smoothing length of a particle be either constant, a function of the particle’s co-ordinates, or a function of some property that is itself a function of the particle’s co-ordinates. We follow Springel & Hernquist (2002) and Price & Monaghan (2004b) in making the smoothing length a function of the density. Specifically, for particle i we put  (1)where mi is the mass of particle i, ρi is the SPH density at the position of particle i, D is the spatial dimensionality, and

(1)where mi is the mass of particle i, ρi is the SPH density at the position of particle i, D is the spatial dimensionality, and  is a parameter that controls the mean number of neighbours,

is a parameter that controls the mean number of neighbours,  in one, two and three dimensions respectively. ρi is calculated using

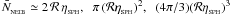

in one, two and three dimensions respectively. ρi is calculated using  (2)where rij ≡ ri − rj, and the summation includes particle i itself. Since the smoothing length is needed in order to calculate the density in Eq. (2) and vice versa in Eq. (1), hi and ρi are obtained by iteration.

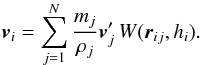

(2)where rij ≡ ri − rj, and the summation includes particle i itself. Since the smoothing length is needed in order to calculate the density in Eq. (2) and vice versa in Eq. (1), hi and ρi are obtained by iteration.

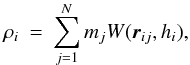

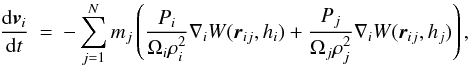

Once h and ρ are evaluated for all particles, the terms in the SPH fluid equations can be computed. The momentum equation is  (3)where Pi is the pressure of particle i, ∇iW is the gradient of the kernel function at the position of particle i, and

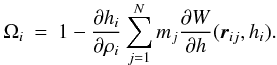

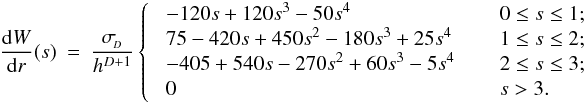

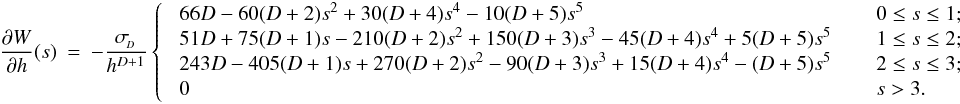

(3)where Pi is the pressure of particle i, ∇iW is the gradient of the kernel function at the position of particle i, and  (4)Ωi is a dimensionless quantity that corrects for the spatial variability of h. ∂hi/∂ρi is obtained explicitly from Eq. (1). ∂W/∂h is obtained from the kernel function (see Appendix A). The SPH energy equation is

(4)Ωi is a dimensionless quantity that corrects for the spatial variability of h. ∂hi/∂ρi is obtained explicitly from Eq. (1). ∂W/∂h is obtained from the kernel function (see Appendix A). The SPH energy equation is  (5)where vij ≡ vi − vj. Since the mass of each particle is constant, and the density is computed using Eq. (2), there is no need to solve the SPH continuity equation.

(5)where vij ≡ vi − vj. Since the mass of each particle is constant, and the density is computed using Eq. (2), there is no need to solve the SPH continuity equation.

The summations in Eqs. (2)–(5) are formally over all particles in the simulation. However, since the kernels used in SEREN both have finite extent, the summations are actually only over the neighbours of particle i. SEREN uses a Barnes-Hut tree (Barnes & Hut 1986) to obtain neighbour lists. The procedures for constructing and walking the tree are described in Sect. 9.

3.1. Artificial viscosity and conductivity

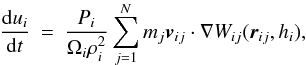

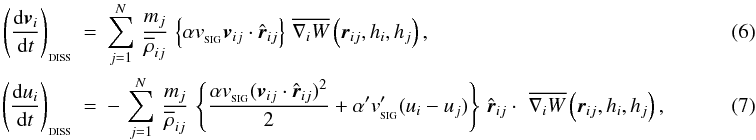

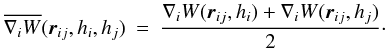

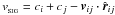

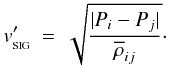

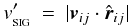

In most formulations of SPH, artificial viscosity terms are needed to ensure that shocks are captured, i.e. that converging particle streams do not interpenetrate, but rather form a contact discontinuity, and that kinetic energy is converted into thermal energy at the shock, thereby generating entropy. SEREN includes two different forms of artificial viscosity: the standard (α,β) formulation (Monaghan & Gingold 1983), and the formulation based on Riemann solvers (Monaghan 1997). The Monaghan-Riemann formulation is the default in SEREN, and involves adding the following extra terms to the momentum and energy equations,  where α and α′ are user specified coefficients of order unity,

where α and α′ are user specified coefficients of order unity,  and

and  are signal speeds,

are signal speeds,  , and

, and  (8)This form of artificial dissipation is chosen as the default because (a) it has a physically informed motivation; and (b) it can be generalised to model dissipation in other quantities while giving just as good results as the standard (α, β) viscosity when modelling shocks. The dissipation term on the right-hand side of Eq. (6) and the first term on the right-hand side of Eq. (7) represent artificial viscosity – i.e. exchange of momentum between neighbouring particles which are approaching or receding from one another, and conversion of the kinetic energy lost into thermal energy – and they are moderated by the signal speed

(8)This form of artificial dissipation is chosen as the default because (a) it has a physically informed motivation; and (b) it can be generalised to model dissipation in other quantities while giving just as good results as the standard (α, β) viscosity when modelling shocks. The dissipation term on the right-hand side of Eq. (6) and the first term on the right-hand side of Eq. (7) represent artificial viscosity – i.e. exchange of momentum between neighbouring particles which are approaching or receding from one another, and conversion of the kinetic energy lost into thermal energy – and they are moderated by the signal speed  . The second term on the right-hand side of Eq. (7) represents artificial conductivity, and acts to smooth out gradients in the specific internal energy. For purely hydrodynamic simulations, Price (2008) advocates that the artificial conductivity be moderated by the signal speed

. The second term on the right-hand side of Eq. (7) represents artificial conductivity, and acts to smooth out gradients in the specific internal energy. For purely hydrodynamic simulations, Price (2008) advocates that the artificial conductivity be moderated by the signal speed  (9)However, in self-gravitating simulations this can drain thermal energy from dense condensations, thereby artificially accelerating gravitational contraction. Wadsley et al. (2006) have proposed the alternative signal speed

(9)However, in self-gravitating simulations this can drain thermal energy from dense condensations, thereby artificially accelerating gravitational contraction. Wadsley et al. (2006) have proposed the alternative signal speed  (10)for artificial conductivity. Both Eqs. (9) and (10) are included as options in SEREN. We note that when the Godunov-SPH formulation is selected, we can disable the artificial viscosity since the Riemann solver should allow us to capture shocks accurately. We may need to retain the artificial conductivity sinceour simple implementation of a Riemann solver into SPH does not address that problem.

(10)for artificial conductivity. Both Eqs. (9) and (10) are included as options in SEREN. We note that when the Godunov-SPH formulation is selected, we can disable the artificial viscosity since the Riemann solver should allow us to capture shocks accurately. We may need to retain the artificial conductivity sinceour simple implementation of a Riemann solver into SPH does not address that problem.

3.1.1. Artificial viscosity switches

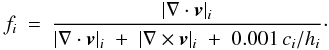

Artificial viscosity can have undesirable side effects. In the absence of shocks it can lead to kinetic energy being dissipated at an unacceptably high rate, i.e. much faster than would happen with physical viscosity; this is an important consideration in simulations of interstellar turbulence. It can also deliver an unacceptably high shear viscosity, and thereby corrupt shear flows; this is an important consideration in simulations of the long-term evolution of accretion discs. A number of devices has been proposed to reduce the artificial viscosity in regions where it is not needed. Three such viscosity limiters are included in SEREN. The first is the switch proposed by Balsara (1995) in which α is multiplied by the dimensionless quantity  , where

, where  (11)In regions of strong compression (i.e. shocks), the | ∇·v | terms tend to dominate over the | ∇ × v | term, so fi → 1. In regions where vorticity dominates (i.e. shear flows), the | ∇ × v | term dominates, so fi → 0.

(11)In regions of strong compression (i.e. shocks), the | ∇·v | terms tend to dominate over the | ∇ × v | term, so fi → 1. In regions where vorticity dominates (i.e. shear flows), the | ∇ × v | term dominates, so fi → 0.

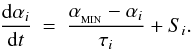

The second device (which can be used in conjunction with the first) is time-dependent viscosity (Morris & Monaghan 1997). In time-dependent viscosity, each particle i has its own value of αi, which evolves according to the equation  (12)Here

(12)Here  is the default value of αi, and τi is the e-folding time on which αi relaxes to

is the default value of αi, and τi is the e-folding time on which αi relaxes to  , if the source term,

, if the source term,  (13)vanishes (cf. Rosswog et al. 2000). Reasonable results are obtained with αMIN = 0.1, since a small residual artificial viscosity is needed to suppress high-frequency particle noise. The e-folding time is given by

(13)vanishes (cf. Rosswog et al. 2000). Reasonable results are obtained with αMIN = 0.1, since a small residual artificial viscosity is needed to suppress high-frequency particle noise. The e-folding time is given by  with

with  (i.e. roughly a sound-crossing time for the smoothing kernel). The source term ensures that if particle i enters a shock, αi quickly increases towards αMAX ~ 1, but as soon as the shock is passed it decays back to

(i.e. roughly a sound-crossing time for the smoothing kernel). The source term ensures that if particle i enters a shock, αi quickly increases towards αMAX ~ 1, but as soon as the shock is passed it decays back to  . If we use (α,β) viscosity, then we set βi = 2αi.

. If we use (α,β) viscosity, then we set βi = 2αi.

The third device is the pattern-matching switch described by Cartwright & Stamatellos (2010). This switch is very effective in pure Keplerian discs, i.e. non-self-gravitating equilibrium discs modelled in the frame of reference of the central star, but has not yet been adapted to work in more general situations.

4. Self-gravity

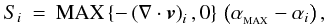

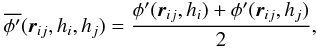

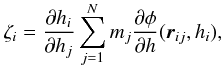

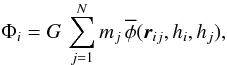

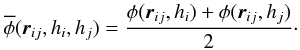

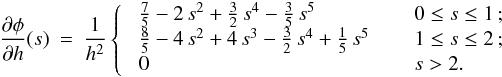

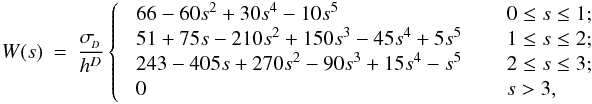

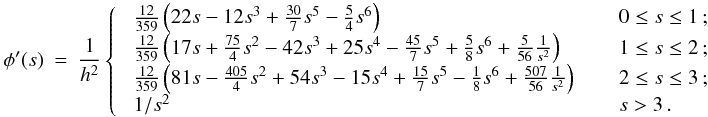

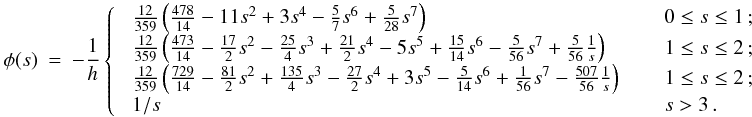

Although the calculation of gravitational accelerations resembles an N-body problem, with forces between point masses, one should – for consistency with the calculation of SPH accelerations – take proper account of fact that the underlying density field, given by Eq. (2), is actually continuous, and the gravitational potential is related to this continuous density field by Poisson’s equation, ∇2Φ = 4 π G ρ. Price & Monaghan (2007b) derive the equations of self-gravitating SPH by including the gravitational potential in the Lagrangian, and then proceeding as in Price & Monaghan (2004a). It is then necessary to introduce two additional kernel functions, the gravitational acceleration kernel (φ′) and the gravitational potential kernel (φ, called the softening kernel by Price & Monaghan 2007b),  As with the basic kernel function, W, and its other derivatives, these new gravitational kernels are computed in advance, on a grid, and stored, so that subsequently values can be obtained efficiently by interpolation. The forms of both of these kernels are discussed in Appendix A. Using these kernels, Price & Monaghan (2007b) show that the gravitational acceleration of particle i is

As with the basic kernel function, W, and its other derivatives, these new gravitational kernels are computed in advance, on a grid, and stored, so that subsequently values can be obtained efficiently by interpolation. The forms of both of these kernels are discussed in Appendix A. Using these kernels, Price & Monaghan (2007b) show that the gravitational acceleration of particle i is  (16)where

(16)where  (17)

(17) (18)and Ωi is given by Eq. (4). The two summation terms in Eq. (16) are, respectively, the kernel-softened gravitational acceleration, and the “grad-h” corrections that account for adaptive smoothing lengths. The ζi term is calculated and stored when other SPH quantities are calculated (i.e. ρi, (∇·v)i, Ωi, etc.). To compute ζi requires ∂φ/∂h, which can be calculated and stored, once the form of W has been specified (see Appendix A). The gravitational potential at the position of particle i due to all other particles is

(18)and Ωi is given by Eq. (4). The two summation terms in Eq. (16) are, respectively, the kernel-softened gravitational acceleration, and the “grad-h” corrections that account for adaptive smoothing lengths. The ζi term is calculated and stored when other SPH quantities are calculated (i.e. ρi, (∇·v)i, Ωi, etc.). To compute ζi requires ∂φ/∂h, which can be calculated and stored, once the form of W has been specified (see Appendix A). The gravitational potential at the position of particle i due to all other particles is  (19)where

(19)where  (20)If we choose standard SPH or Godunov SPH, the second summation in Eq. (16) is omitted, and the total energy is not as well conserved (see Price & Monaghan 2007b).

(20)If we choose standard SPH or Godunov SPH, the second summation in Eq. (16) is omitted, and the total energy is not as well conserved (see Price & Monaghan 2007b).

To compute gravitational accelerations exactly, using Eqs. (16)–(18), requires a summation over all particle pairs and is therefore an  process. To speed up the computation of gravitational accelerations, SEREN uses a Barnes-Hut tree (Barnes & Hut 1986). The resulting gravitational accelerations are not exact, but the resulting small fractional errors are considered acceptable, since there are other comparable or larger sources of error. The implementation of the gravity tree is described in Sect. 9.

process. To speed up the computation of gravitational accelerations, SEREN uses a Barnes-Hut tree (Barnes & Hut 1986). The resulting gravitational accelerations are not exact, but the resulting small fractional errors are considered acceptable, since there are other comparable or larger sources of error. The implementation of the gravity tree is described in Sect. 9.

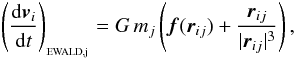

4.1. Periodic gravity

Cosmological simulations (e.g. Springel et al. 2005) and simulations of turbulent molecular clouds (e.g. Klessen et al. 2000) often set out to model a representative piece of an infinite (or much more extended) medium, by assuming that the infinite medium consists of an infinite number of replicas of the main computational domain, extending periodically in all directions, and then employing periodic boundary conditions. For purely hydrodynamic simulations, periodic wrapping is sufficient to give acceptable boundary conditions. When self-gravity is invoked, we must include a contribution to the acceleration from all the replicas of the computational domain, extending to infinity. SEREN does this using the Ewald method (Hernquist et al. 1991; Klessen 1997). If the computational domain is a cube of side-length L, the total gravitational acceleration exerted on particle i by all of the infinite replicas of particle j (but not directly by the particle j itself) is  (21)where

(21)where  (22)and α = 2/L. The first summation in Eq. (22) is over all replicas in all directions (i.e. all n-space) and the second summation is over all phase-space (i.e. all k-space). The summations converge rapidly and can be truncated for | r − nL | < 3.6L and k2 < 40π2/L2. SEREN computes the dimensionless correction forces for a wide range of separations and tabulates the values in a look-up table.

(22)and α = 2/L. The first summation in Eq. (22) is over all replicas in all directions (i.e. all n-space) and the second summation is over all phase-space (i.e. all k-space). The summations converge rapidly and can be truncated for | r − nL | < 3.6L and k2 < 40π2/L2. SEREN computes the dimensionless correction forces for a wide range of separations and tabulates the values in a look-up table.

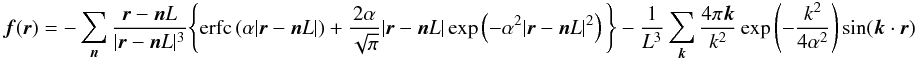

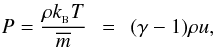

5. Thermal physics

SEREN contains several equation-of-state (EOS) algorithms which can be selected using Makefile options. In all cases we assume that the gas is ideal, and so the pressure and specific internal energy are related by  (23)where

(23)where  is Boltzmann’s constant,

is Boltzmann’s constant,  is the mean gas-particle mass, and γ is the ratio of specific heats. With Options 1 to 3 below there is no need to solve the SPH energy equation, whereas with Options 4 and 5 there is.

is the mean gas-particle mass, and γ is the ratio of specific heats. With Options 1 to 3 below there is no need to solve the SPH energy equation, whereas with Options 4 and 5 there is.

1. Isothermal equation of state. If the gas is isothermal at temperature  ,

,  (24)with constant isothermal sound speed,

(24)with constant isothermal sound speed,  .

.

2. Polytropic equation of state. The polytropic EOS has the form  (25)where K is the polytropic constant and η is the polytropic exponent; the polytropic index is n = (η − 1)-1.

(25)where K is the polytropic constant and η is the polytropic exponent; the polytropic index is n = (η − 1)-1.

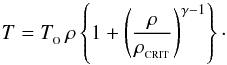

3. Barotropic equation of state. SEREN includes a barotropic equation of state of the form  (26)This mimics the behaviour of interstellar gas in molecular clouds, where the gas is optically thin to its cooling radiation and approximately isothermal (at TO ~ 10 K) when the density is low (ρ < ρCRIT ~ 10-13 g cm-3), and optically thick to its own cooling radiation and approximately adiabatic (e.g. with γ ≃ 5/3) at higher densities (ρ > ρCRIT).

(26)This mimics the behaviour of interstellar gas in molecular clouds, where the gas is optically thin to its cooling radiation and approximately isothermal (at TO ~ 10 K) when the density is low (ρ < ρCRIT ~ 10-13 g cm-3), and optically thick to its own cooling radiation and approximately adiabatic (e.g. with γ ≃ 5/3) at higher densities (ρ > ρCRIT).

4. Adiabatic equation of state. We integrate the internal energy equation explicitly (using Eq. (5)) and then calculate the thermal pressure from Eq. (23). Changes in the specific internal energy are solely due to compressional and/or viscous heating.

5. Radiative cooling. The method of Stamatellos et al. (2007) is used to capture realistically the main effects of radiative heating and cooling (in the optically thin, thick and intermediate regimes), but without the expense of a full radiative transfer calculation. This algorithm uses local functions of state (namely the density, temperature and gravitational potential) to compute an approximate optical depth to infinity, and hence to obtain an approximate cooling rate. This cooling rate is then used to solve the energy equation implicitly, and hence to determine the thermal evolution of the gas.

6. Ionising radiation. SEREN also includes the option to model a single discrete source of ionising radiation (i.e. an OB star or tight cluster of OB stars) using the algorithm of Bisbas et al. (2009). This algorithm generates an isotropic distribution of HEALPix rays, which are split into smaller child rays wherever finer resolution is needed. The rays propagate until they reach the ionisation front, where they are terminated. Particles well inside the Hii region are given a high temperature (~10 000 K) and particles well outside the Hii region are treated with one of the EOS algorithms listed above. There is a region with thickness of order the local smoothing length in which the temperature variation is smoothed, so as to avoid problems associated with abrupt temperature discontinuities.

6. Time integration

6.1. Integration schemes

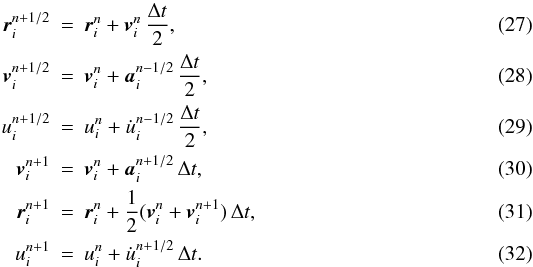

SEREN offers a choice of four integration schemes: 2nd-order Runge-Kutta, 2nd-order Leapfrog (kick-drift-kick and drift-kick-drift) and 2nd-order Predictor-Corrector. The default choice isthe 2nd-order Leapfrog drift-kick-drift:  The main advantage of this scheme is that it only requires one acceleration calculation per timestep, as opposed to two in the 2nd-order Runge-Kutta scheme. Leapfrog schemes (both the Leapfrog kick-drift-kick and drift-kick-drift) are symplectic (i.e. they conserve phase-space) and so they are more stable for orbital integration (for example, in disc simulations). They are also, in principle, time-reversible for constant, global timesteps. The use of block time-stepping breaks exact time-reversibility (see Sect. 6.3), and also breaks exact momentum and angular momentum conservation. The other integration schemes are included because some perform better than the Leapfrog scheme in non–self-gravitating problems, and to allow comparison with other codes that use different integrators.

The main advantage of this scheme is that it only requires one acceleration calculation per timestep, as opposed to two in the 2nd-order Runge-Kutta scheme. Leapfrog schemes (both the Leapfrog kick-drift-kick and drift-kick-drift) are symplectic (i.e. they conserve phase-space) and so they are more stable for orbital integration (for example, in disc simulations). They are also, in principle, time-reversible for constant, global timesteps. The use of block time-stepping breaks exact time-reversibility (see Sect. 6.3), and also breaks exact momentum and angular momentum conservation. The other integration schemes are included because some perform better than the Leapfrog scheme in non–self-gravitating problems, and to allow comparison with other codes that use different integrators.

6.2. Optimal timesteps

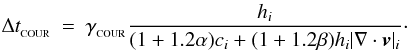

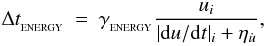

SEREN calculates (but does not explicitly use) the optimal timestep for particle i, Δti, by determining the minimum value of three separate timesteps. The first is based on a modified Courant condition of the form  (33)The denominator contains the hi | ∇·v | i term (which is frame-independent) instead of the absolute speed, | v | i (which is normally used in the Courant condition). The terms involving α and β in the denominator account for particles that are in the vicinity of shocks. The second timestep condition is an acceleration condition similar to those used in some N-body codes, i.e.

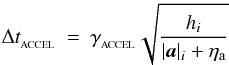

(33)The denominator contains the hi | ∇·v | i term (which is frame-independent) instead of the absolute speed, | v | i (which is normally used in the Courant condition). The terms involving α and β in the denominator account for particles that are in the vicinity of shocks. The second timestep condition is an acceleration condition similar to those used in some N-body codes, i.e.  (34)where ηa is a small positive acceleration to ensure the denominator does not at any time fall to zero. The third timestep condition is the heating condition, which limits the fractional change in the internal energy per timestep,

(34)where ηa is a small positive acceleration to ensure the denominator does not at any time fall to zero. The third timestep condition is the heating condition, which limits the fractional change in the internal energy per timestep,  (35)where

(35)where  is a small positive heating rate to ensure the denominator does not fall to zero. This timestep criterion is only used when the SPH energy equation (Eq. (5)) is solved explicitly. If we solve the energy equation implicitly (e.g. Stamatellos et al. 2007), we only use the Courant and acceleration timesteps, Eqs. (33) and (34), to compute the optimal timestep for particle i, Δti.

is a small positive heating rate to ensure the denominator does not fall to zero. This timestep criterion is only used when the SPH energy equation (Eq. (5)) is solved explicitly. If we solve the energy equation implicitly (e.g. Stamatellos et al. 2007), we only use the Courant and acceleration timesteps, Eqs. (33) and (34), to compute the optimal timestep for particle i, Δti.

6.3. Hierarchical block timesteps

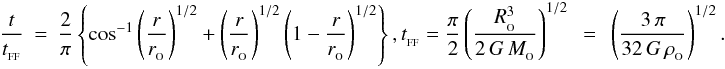

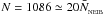

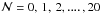

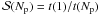

SEREN uses hierarchical block time-stepping (e.g. Aarseth 2003) to reduce the run-time of a simulation. In a typical star formation simulation, only a small fraction of the particles might require very small timesteps, for example those passing through a shock or those near the centre of a condensation. If a global timestep is used, accelerations are recalculated for all particles, irrespective of whether the recalculation is really needed. Instead, we allow each particle to have its own timestep, chosen from a binary hierarchy of possible values, Δtn = 2n ΔtMIN, where n = 0,1,2,...,nMAX. Particle i is then allocated the largest value of Δtn from this hierarchy that is smaller than its optimal timestep, Δti (based on Eqs. (33)–(35)). By restricting the ratio of timesteps to integer powers of 2, we ensure that the particles are always synchronised at the end of the largest timestep, ΔtMAX = 2nMAX ΔtMIN.

The acceleration of a particle is then recalculated with a frequency determined by its allocated timestep, Δtn. The most expensive parts of this recalculation are those associated with walking the trees. At any time, the positions, velocities and thermodynamic properties of particles whose accelerations do not yet need to be recalculated are simply estimated by extrapolation.

The timestep for a particle is recalculated at the end of its current timestep, using Eqs. (33) to (35). When the allocated timestep of a particle decreases (i.e. it moves to lower n in the hierarchy), there is no problem, because any lower timestep in the hierarchy is automatically synchronised with the higher one from which the particle is descending. On the other hand, this is not necessarily the case when a particle’s allocated timestep increases (i.e. it moves to higher n in the hierarchy). In this situation, we have to check that the lower timestep is correctly synchronised with the higher one before we can move the particle up (i.e. increase its allocated timestep). In addition, we only allow a particle to increase its allocated timestep one level at a time.

As shown by Saitoh & Makino (2009), SPH can perform poorly when neighbouring particles have very different timesteps. For example, in a high Mach-number shock, the particles may interpenetrate because particles from the low-density pre-shock gas have much longer timesteps than those in the high-density post-shock gas, and therefore in a single timestep they advance deep into the shocked region. SEREN mitigates this effect by broadcasting each particle’s allocated timestep to all it neighbours. If one of the neighbours j of particle i has an allocated timestep which is more than two levels higher in the hierarchy(i.e. more than a factor 4 longer; tj > 4 ti), the neighbour’s timestep is automatically reduced to tj = 4 ti as soon as the timestep hierarchy is correctly synchronised.

7. Sink particles

Sink particles are used in SPH to allow simulations of star formation to be followed longer (Bate et al. 1995). Gravitational collapse inevitably leads to high densities, short smoothing lengths, high accelerations, and therefore short timesteps. Under these circumstances, even the use of block time-stepping (Sect. 6.3) cannot prevent run-times from becoming impractically long. To circumvent this problem, we replace dense condensations with sink particles. A sink particle possesses the collective properties of the condensation it represents (i.e. mass, centre-of-mass position and net momentum) but does not retain any information about the internal structure and evolution of the condensation. Thus SPH particles that would otherwise have continued evolving inexorably towards higher density (thereby using up ever increasing amounts of CPU-time) are instead excised from the simulation. This means that the dynamics of the remaining more diffuse gas, and the formation of additional condensations, can be followed in an acceptable run-time. The assumption is made that – in the absence of feedback from the resulting protostar – the only important effect that the material inside a sink particle will have on its surroundings is due to its gravitational field. Thus sink particles interact gravitationally, but not hydrodynamically, with other sink and SPH particles.

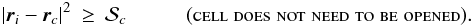

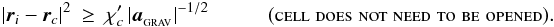

A sink particle is created when an SPH particle satisfies all the stipulated sink-creation criteria. These criteria are divided into default criteria and optional criteria. The SPH particle which triggers the formation of a sink is referred to as the seed particle. The default criteria for sink creation are then (i) that the SPH density of the seed particle is greater than  ; and (ii) that there is no other sink particle within 2rSINK of the seed particle (i.e. a sink particle should not be formed overlapping a pre-existing sink particle). In principle,

; and (ii) that there is no other sink particle within 2rSINK of the seed particle (i.e. a sink particle should not be formed overlapping a pre-existing sink particle). In principle,  and

and  can be chosen independently. However, the results are only realistic if the material going initially into a sink particle is resolved. The default procedure in SEREN is to set rSINK = ℛhs, where hs is the smoothing length of the seed particle. This means that different sink particles have slightly different radii. The option exists in SEREN to prescribe a universal

can be chosen independently. However, the results are only realistic if the material going initially into a sink particle is resolved. The default procedure in SEREN is to set rSINK = ℛhs, where hs is the smoothing length of the seed particle. This means that different sink particles have slightly different radii. The option exists in SEREN to prescribe a universal  .

.

The four optional sink-creation criteria are (iii) that the mean density of the seed particle and all its neighbours exceeds  (this ensures that a stochastic density fluctuation does not result in the formation of a sink); (iv) that the SPH velocity divergence of the seed particle is negative, (∇·v)s < 0 (this ensures that the particles going into the sink are condensing, and not being sheared apart); (v) that the SPH acceleration divergence of the seed particle is negative, (∇·a)s < 0 (this ensures that the condensation is not being torn apart by tidal forces); (vi) that the total mechanical energy of the seed particle and its neighbours (kinetic plus gravitational potential energy in the centre-of-mass frame) is negative.

(this ensures that a stochastic density fluctuation does not result in the formation of a sink); (iv) that the SPH velocity divergence of the seed particle is negative, (∇·v)s < 0 (this ensures that the particles going into the sink are condensing, and not being sheared apart); (v) that the SPH acceleration divergence of the seed particle is negative, (∇·a)s < 0 (this ensures that the condensation is not being torn apart by tidal forces); (vi) that the total mechanical energy of the seed particle and its neighbours (kinetic plus gravitational potential energy in the centre-of-mass frame) is negative.

Only one sink particle can be created in any one timestep; otherwise the possibility would exist to generate multiple overlapping sinks. At each timestep, SEREN loops over all the SPH particles, and finds those whose SPH density (or, if required, mean density) exceeds  . These candidate seed particles are then ordered in a list of decreasing SPH density (or mean density), and SEREN runs through this list until it finds a seed particle that satisfies all the creation criteria, and creates a sink particle out of this seed particle and all its neighbours.

. These candidate seed particles are then ordered in a list of decreasing SPH density (or mean density), and SEREN runs through this list until it finds a seed particle that satisfies all the creation criteria, and creates a sink particle out of this seed particle and all its neighbours.

An SPH particle i is accreted by an existing sink particle s if (a) the SPH particle lies inside the sink-particle’s radius, | ri − rs | ≤ rSINK, and (b) the kinetic plus gravitational energy of the two-body system comprising the sink-particle and the SPH-particle is negative. The SPH particle’s mass, linear and angular momentum are then assimilated by the sink particle, and the SPH particle itself is removed from the simulation. When determining which SPH particles are accreted by which sink particles, we first compile a list of all the SPH particles which are to be accreted by each sink, and only when these lists are complete do we update the sink properties (mass, position, momentum) to account for the SPH particles it has just assimilated. This is necessary because otherwise the accretion process would depend on the order in which the SPH particles were interrogated.

8. N-body integrator

Simulations of star formation very often result in the formation of multiple stellar systems. Such simulations are modelled with hydrodynamical codes until most of the gas has been accreted by protostars, or dispersed by feedback; this is referred to as the accretion phase. In the absence of magnetic fields and feedback, the accretion phase is driven entirely by the competition between thermal pressure, viscosity, and gravity. The system then enters the ballistic phase, in which N-body dynamics modify the final clustering and binary properties, typically over a period of several tens of crossing times (Van Albada 1968). SPH simulations are often terminated after the accretion phase and not evolved through the ballistic phase.

SEREN includes an N-body integrator, so that it can follow both the accretion phase and the ballistic phase, in a single simulation. SEREN switches from an SPH simulation of the accretion phase, to an N-body simulation of the ballistic phase, if one of two conditions are met: either the simulation has reached the end-time stipulated in the parameters file, or a critical fraction of the original gas mass has been accreted by sink particles. At the switch-over, SEREN identifies any SPH particles which are strongly bound to a particular sink. On the assumption that these SPH particles are either about to be accreted by that sink, or will form a tightly bound disc around it (and eventually be accreted or form a planetary system), they are instantaneously accreted by the sink to which they are bound. This ensures that their contribution to the overall gravitational potential is not suddenly lost at the switch-over.

One problem that corrupts N-body codes is inaccuracies resulting from close interactions. These can build up over the course of a simulation, or materialise quickly in near head-on interactions, causing large energy errors. A variety of techniques has been employed to alleviate this problem, such as using very short timesteps (e.g. Portegies Zwart et al. 2001), gravity-softening (Aarseth 2003) or transformation of the equations of motion (e.g. KS regularization, Stiefel & Scheifele 1971). In SEREN, the N-body code retains the kernel-softened gravity used in the SPH code, in order to ensure that the gravitational accelerations are computed consistently between the two parts of a simulation. This has the advantage of preventing large energy errors due to close interactions, but has the disadvantage of preventing the formation of close binaries (separations less than  ).

).

8.1. Hermite integrator

The N-body integrator of choice is a fourth-order Hermite integrator (Makino 1991; Makino & Aarseth 1992). The Hermite integrator has been presented in two different forms in the literature, either as a fourth-order leapfrog scheme or as a fourth-order predictor-corrector scheme (Aarseth 2003). SEREN uses the predictor-corrector version of the Hermite integrator. Both forms are considered superior to other 4th-order N-body integrators, in the sense of giving better energy conservation and allowing longer timesteps (Makino 1991; Aarseth 2003). The leapfrog version of the Hermite scheme also maintains many of the properties of a traditional 2nd order leapfrog integrator (for example, it is symplectic), but it is of higher order by virtue of using both the acceleration and its first time derivative.

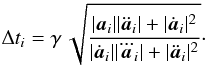

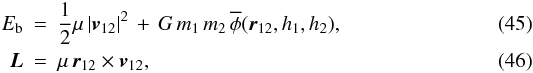

The N-body code uses a global timestep informed by the Aarseth (2001) criterion,  (36)Here

(36)Here  ,

,  and

and  are, respectively, the 1st, 2nd and 3rd time derivatives of the acceleration, calculated at the end of the previous timestep; γ is an accuracy factor of order ~ 0.1 (Makino & Aarseth 1992). Next we calculate the acceleration and its time-derivative (sometimes called the jerk) at the beginning of the step. The acceleration is given by

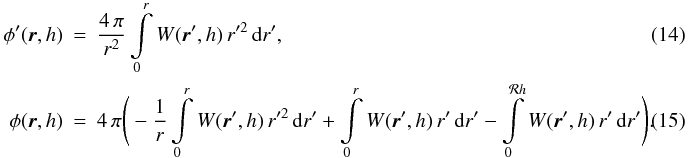

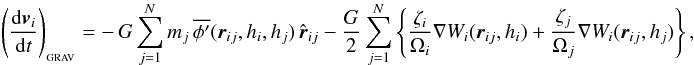

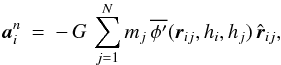

are, respectively, the 1st, 2nd and 3rd time derivatives of the acceleration, calculated at the end of the previous timestep; γ is an accuracy factor of order ~ 0.1 (Makino & Aarseth 1992). Next we calculate the acceleration and its time-derivative (sometimes called the jerk) at the beginning of the step. The acceleration is given by  (37)where

(37)where  is the same gravitational-acceleration kernel as used in calculating kernel-softened gravitational accelerations in SPH (Eq. (14)). The kernel-softening means we must account for the rate of change of the kernel function and include extra terms in the expression for the jerk (Makino & Aarseth 1992). Using the same notation as in Sect. 4, the expression for the jerk becomes

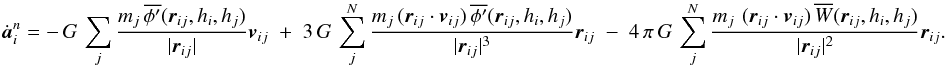

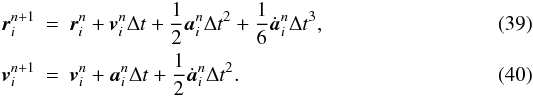

is the same gravitational-acceleration kernel as used in calculating kernel-softened gravitational accelerations in SPH (Eq. (14)). The kernel-softening means we must account for the rate of change of the kernel function and include extra terms in the expression for the jerk (Makino & Aarseth 1992). Using the same notation as in Sect. 4, the expression for the jerk becomes  (38)The particle positions and velocities are then advanced to the end of the timestep,

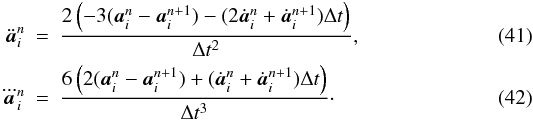

(38)The particle positions and velocities are then advanced to the end of the timestep,  We calculate the acceleration and jerk again using the new positions and velocities. We can thus calculate the second and third time derivatives at the beginning of the step (Makino & Aarseth 1992),

We calculate the acceleration and jerk again using the new positions and velocities. We can thus calculate the second and third time derivatives at the beginning of the step (Makino & Aarseth 1992),  Finally, we add the higher order terms to the position and velocity vectors,

Finally, we add the higher order terms to the position and velocity vectors,  The values of a,

The values of a,  ,

,  and

and  computed at the end of the timestep allow the code to calculate the next time step using Eq. (36). This is not possible on the very first timestep, and there we use explicit equations to calculate

computed at the end of the timestep allow the code to calculate the next time step using Eq. (36). This is not possible on the very first timestep, and there we use explicit equations to calculate  and

and  (e.g. Aarseth 2001, his Eqs. (6) and (7)); all subsequent timesteps are determined using

(e.g. Aarseth 2001, his Eqs. (6) and (7)); all subsequent timesteps are determined using  and

and  from Eqs. (41) and (42).

from Eqs. (41) and (42).

8.2. Identification of multiple systems

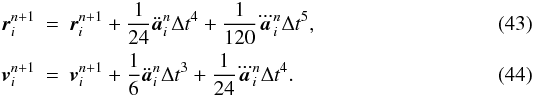

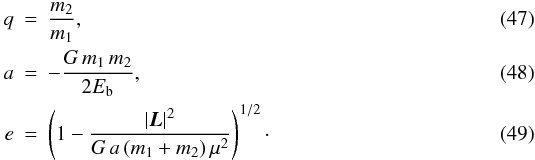

During the N-body simulation, SEREN automatically searches for binaries and hierarchical triples and quadruples. There is no single robust method for identifying a bound, stable multiple system that contains an arbitrary number of components. We use a simple two-stage procedure. The first stage is to identify all binary systems present at the current time. This involves calculating the two-body energies of all star-pairs in the simulation. If (a) stars 1 and 2 are found to be mutually most-bound (i.e. the two-body energy of stars 1 and 2 is a minimum and negative for both stars); and (b) stars 1 and 2 are not bound to any other stars (i.e. the two-body energies of 1 and 2 with all other stars are positive), then they are identified as a bound binary system. If the primary and secondary masses are m1 and m2 respectively, the instantaneous relative displacement is r12 ≡ r1 − r2, and the instantaneous relative velocity is v12 = v1 − v2, then the two-body energy and angular momentum are where μ = m1m2/(m1 + m2). The orbital binary parameters are then given by

where μ = m1m2/(m1 + m2). The orbital binary parameters are then given by  The next stage is to search for hierarchical systems. In order to facilitate this search, each binary found in the previous step is replaced by a single ghost-binary particle. We then repeat the procedure performed in the first stage, searching for any mutually most-bound pairs, but now using the ghost-binaries and the remaining unattached stars. If a ghost-binary is found to be most-bound to a single star and vice versa, they are identified as an hierarchical triple, and the orbit of the system is calculated as above and recorded. If two ghost-binaries are found to be most-bound to each other, then they are recorded as an hierarchical quadruple.

The next stage is to search for hierarchical systems. In order to facilitate this search, each binary found in the previous step is replaced by a single ghost-binary particle. We then repeat the procedure performed in the first stage, searching for any mutually most-bound pairs, but now using the ghost-binaries and the remaining unattached stars. If a ghost-binary is found to be most-bound to a single star and vice versa, they are identified as an hierarchical triple, and the orbit of the system is calculated as above and recorded. If two ghost-binaries are found to be most-bound to each other, then they are recorded as an hierarchical quadruple.

9. Tree

SEREN uses an implementation of the Barnes-Hut tree (Barnes & Hut 1986; Pfalzner & Gibbon 1996) to rapidly obtain neighbour lists for SPH interactions, and to efficiently calculate gravitational accelerations. The Barnes-Hut tree is an octal-spatial decomposition tree that splits the volume of the tree cells at each level into eight equal-volume cubic sub-cells (or four equal-area square sub-cells in 2D) – recursively until only a few, or zero, particles remain in each sub-cell. The cells at which a branch of the tree terminates are called leaf cells. SEREN decomposes the particles as an ensemble, in a similar manner to the algorithm described by Pfalzner & Gibbon (1996) (as distinct from the original Barnes-Hut method, which considers one particle at a time as the tree structure is built). The Pfalzner & Gibbon algorithm makes it easier to parallelise the tree-build routine using OpenMP.

We construct two separate trees, one for particles that experience hydrodynamic accelerations and one for particles that experience gravitational accelerations. This is advantageous because these accelerations are computed using different cell properties. In the case where all SPH particles are self-gravitating, we can build the tree structure once, copy this structure to the second tree, but then stock the two trees (i.e. calculate the properties of the tree cells) separately. Since the timestep criteria restrict how far particles can move in any one timestep, the tree structure will not change appreciably from one timestep to the next. Therefore we only build the tree structure every ~10 timesteps, but restock it every timestep.

9.1. Neighbour searching

The Barnes-Hut neighbour tree is constructed using all SPH particles. For each cell, we record (i) the position of centre of the bounding box containing all particles in the cell; (ii) the maximum distance of all particles from the bounding box centre; (iii) the maximum smoothing length of all particles in the cell, and, if it is a leaf cell; (iv) the identifiers of all particles contained in the cell. Storing these quantities enables us to find neighbours efficiently, either by gather (i.e. all particles for which  ), or by scatter (i.e. all particles for which

), or by scatter (i.e. all particles for which  ), or both. When we perform a tree-search of this type, we obtain a potential neighbour list, which is guaranteed to contain all of the true neighbours but normally also contains non-neighbours. There is no need to cull this list, because all non-neighbours which are passed to the SPH routines have no effect, since the kernel and its derivatives are zero for non-neighbours.

), or both. When we perform a tree-search of this type, we obtain a potential neighbour list, which is guaranteed to contain all of the true neighbours but normally also contains non-neighbours. There is no need to cull this list, because all non-neighbours which are passed to the SPH routines have no effect, since the kernel and its derivatives are zero for non-neighbours.

9.2. Tree gravity

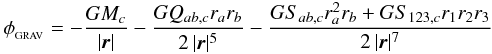

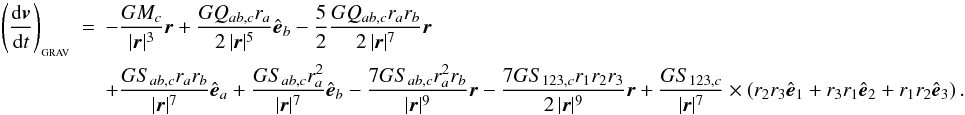

The Barnes-Hut gravity tree is built using only self-gravitating SPH particles. For each cell, we record by default (i) the total mass of the cell; (ii) the position of the centre of mass, and, if it is a leaf cell; (iii) the IDs of all SPH particles contained in the cell. Additionally, we can compute and store higher-order multipole terms, in order to calculate the gravity of a cell to greater accuracy. A multipole expansion can in principle be made up to any order, although it is usually optimal to truncate after only a few terms. The monopole term is simply the centre of mass term for each cell and the dipole term is always zero if calculated with respect to the centre of mass of the cell. In SEREN, we provide the option to include either the quadrupole moment terms, or the quadrupole and octupole moment terms. The equations for the quadrupole and octupole moment tensors of a cell are given in Appendix B. The quadrupole moment tensor is a traceless symmetric matrix, Q, meaning there are 5 independent terms to be stored for each cell. The octupole moment tensor is a more complicated rank-3 tensor, S, whose symmetries result in 10 independent terms which must be stored for each cell. The gravitational potential at the position of particle i due to cell c, up to octupole order, is  (50)where r = ri − rc is the position of particle i relative to cell c, (r1,r2,r3) are the Cartesian components of r, and we employ the Einstein summation convention (i.e. we sum over repeated indices). If we define

(50)where r = ri − rc is the position of particle i relative to cell c, (r1,r2,r3) are the Cartesian components of r, and we employ the Einstein summation convention (i.e. we sum over repeated indices). If we define  to be the unit vector in the ath Cartesian direction, the gravitational acceleration of particle i, due to cell c, up to octupole order, is

to be the unit vector in the ath Cartesian direction, the gravitational acceleration of particle i, due to cell c, up to octupole order, is  (51)When walking the gravity tree, the code must interrogate cells to decide whether to use the multipole expansion or to open up the cell and interrogate its child cells. This decision is determined by the multipole-acceptance criterion (MAC). SEREN includes a simple Geometric MAC and a GADGET-style MAC; it also includes a new Eigenvalue MAC, which uses the eigenvalues of the quadrupole moment terms to determine whether to open a cell.

(51)When walking the gravity tree, the code must interrogate cells to decide whether to use the multipole expansion or to open up the cell and interrogate its child cells. This decision is determined by the multipole-acceptance criterion (MAC). SEREN includes a simple Geometric MAC and a GADGET-style MAC; it also includes a new Eigenvalue MAC, which uses the eigenvalues of the quadrupole moment terms to determine whether to open a cell.

9.2.1. Geometric MAC

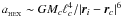

The Geometric MAC uses the size of the cell, ℓc (i.e. its longest corner-to-corner length), and its distance from the particle, | ri − rc | , to calculate the angle the cell subtends at the particle, θci = ℓc/|ri − rc|. If θc is smaller than some pre-defined tolerance,  , the gravitational acceleration due to the cell is given by the multipole expansion, Eq. (51). If this criterion is not satisfied, the cell is opened and the sub-cells on the next level are interrogated in the same way. If a leaf cell is opened, we store the identifiers of all the particles contained in it, and compute their contribution to the net gravitational acceleration directly (using Eqs. (16)–(18)). For computational efficiency, the code calculates and stores for each cell the quantity

, the gravitational acceleration due to the cell is given by the multipole expansion, Eq. (51). If this criterion is not satisfied, the cell is opened and the sub-cells on the next level are interrogated in the same way. If a leaf cell is opened, we store the identifiers of all the particles contained in it, and compute their contribution to the net gravitational acceleration directly (using Eqs. (16)–(18)). For computational efficiency, the code calculates and stores for each cell the quantity  . An unnecessary square-root operation is then avoided by applying the geometric MAC in the form

. An unnecessary square-root operation is then avoided by applying the geometric MAC in the form  (52)

(52)

9.2.2. GADGET-style MAC

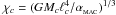

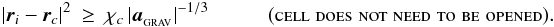

Springel et al. (2001) have formulated another type of MAC for the SPH code GADGET. This MAC uses an approximation to the leading error term in the multipole expansion to calculate for each cell the smallest distance from the cell at which the multipole expansion can be used. GADGET includes quadrupole moment corrections, and so the leading error term is the octupole term. However, Springel et al. suggest that the octupole moment term is small in a homogeneous density field, in which case the hexadecapole term is the largest error term. For a cell c of total mass Mc and linear size ℓc, an approximation to the magnitude of the acceleration of particle i due to the hexadecipole term is  . If

. If  is less than some user-defined fraction of the total gravitational acceleration of particle i, i.e. aHEX < αMAC | aGRAV | , the multipole expansion is used; otherwise cell c must be opened to the next level. Since the current acceleration of particle i is not yet available, the code uses the acceleration from the previous timestep as an approximation. The code therefore calculates and stores for each cell the quantity

is less than some user-defined fraction of the total gravitational acceleration of particle i, i.e. aHEX < αMAC | aGRAV | , the multipole expansion is used; otherwise cell c must be opened to the next level. Since the current acceleration of particle i is not yet available, the code uses the acceleration from the previous timestep as an approximation. The code therefore calculates and stores for each cell the quantity  , and then applies the GADGET-style MAC in the form

, and then applies the GADGET-style MAC in the form  (53)When the quadrupole and octupole moment terms are not used, the leading error term is the quadrupole term. Therefore an approximation to the acceleration of the quadrupole term,

(53)When the quadrupole and octupole moment terms are not used, the leading error term is the quadrupole term. Therefore an approximation to the acceleration of the quadrupole term,  , is used instead. In this case the code calculates and stores for each cell the quantity

, is used instead. In this case the code calculates and stores for each cell the quantity  , and then applies the GADGET-style MAC in the form

, and then applies the GADGET-style MAC in the form  (54)Since we do not have a value of

(54)Since we do not have a value of  on the very first timestep, we use the Geometric MAC with θMAC = 1.0 to obtain an initial estimate and then revert to Equations. (53) and (54). Equations (53) and (54) do not guarantee a maximum fractional force error, but rather attempt to set an upper limit on the error contribution from each cell. It is therefore possible that the error is larger than desired. Therefore we use the Geometric MAC with θMAC = 1.0, alongside the GADGET-style MAC, as a safety measure for the rare cases where Eqs. (53) and (54) are inadequate.

on the very first timestep, we use the Geometric MAC with θMAC = 1.0 to obtain an initial estimate and then revert to Equations. (53) and (54). Equations (53) and (54) do not guarantee a maximum fractional force error, but rather attempt to set an upper limit on the error contribution from each cell. It is therefore possible that the error is larger than desired. Therefore we use the Geometric MAC with θMAC = 1.0, alongside the GADGET-style MAC, as a safety measure for the rare cases where Eqs. (53) and (54) are inadequate.

9.2.3. Eigenvalue MAC

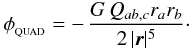

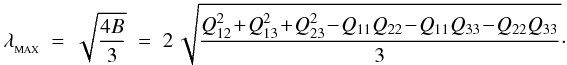

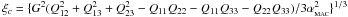

We introduce here a new Eigenvalue MAC, based on the quadrupole moment terms of a cell. Salmon & Warren (1994) originally suggested using higher-order multipole moments directly to formulate a MAC, but they only used upper limits to the multipole moment terms to constrain the leading error term of the multipole expansion. This resulted in a more conservative MAC than was actually required to achieve the desired accuracy, and hence more expensive tree walks. The Eigenvalue MAC is formulated by determining the maximum values of the gravitational potential (or acceleration) due to the quadrupole moment terms of a cell. The quadrupole moment tensor is a real, symmetric and traceless matrix. It therefore has three real eigenvalues, λ1,λ2,λ3. From Eq. (50), the gravitational potential due to the quadrupole moment term is  (55)The term in the numerator, Qab,crarb, is the quadratic form between the quadrupole matrix Q and the vector r. It can be shown (e.g. Riley et al. 1997) that the quadratic form has a maximum absolute value given by | λMAX | | r | 2, where

(55)The term in the numerator, Qab,crarb, is the quadratic form between the quadrupole matrix Q and the vector r. It can be shown (e.g. Riley et al. 1997) that the quadratic form has a maximum absolute value given by | λMAX | | r | 2, where  is the largestin magnitude of the three eigenvalues. We therefore solve the eigenvalue equation,

is the largestin magnitude of the three eigenvalues. We therefore solve the eigenvalue equation, ![\begin{eqnarray} \label{EQN:DEPRESSEDCUBIC} {\rm det}\left[Q-\lambda\,I\right]&=&A\,+\,B\lambda\,+\,C\lambda^2\,-\,\lambda^3\;\,=\;\,0,\\ A&=&-\,Q_{33}Q_{12}^2\,-\,Q_{22}Q_{13}^2\,-\,Q_{11}Q_{23}^2\,+\,2Q_{12}Q_{13}Q_{23}\,+\,Q_{11}Q_{22}Q_{33}\hspace{1.0cm}\equiv\;\,{\rm det}[Q],~~~~~~~~~~~~~~~~~\\ B&=&Q_{12}^2\,+\,Q_{13}^2\,+\,Q_{23}^2\,-\,Q_{11}Q_{22}\,-\,Q_{11}Q_{33}\,-\,Q_{22}Q_{33},\\ C&=&Q_{11}\,+\,Q_{22}\,+\,Q_{33}\hspace{7.2cm}\equiv\;\,{\rm Tr}[Q].~~~~~~~~~~~~~~~~~ \end{eqnarray}](/articles/aa/full_html/2011/05/aa14949-10/aa14949-10-eq185.png) Since, by design, C = Tr [ Q ] = 0, Eq. (56) is a depressed cubic equation, i.e. a cubic equation with no quadratic term. Since also Q is real and symmetric, the eigenvalues are real, and Eq. (56) can be solved by the method of Vieta (e.g. Martin 1998). In particular, the largest eigenvalue is

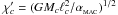

Since, by design, C = Tr [ Q ] = 0, Eq. (56) is a depressed cubic equation, i.e. a cubic equation with no quadratic term. Since also Q is real and symmetric, the eigenvalues are real, and Eq. (56) can be solved by the method of Vieta (e.g. Martin 1998). In particular, the largest eigenvalue is  (60)We therefore require that the magnitude of the quadrupole moment potential, | φQUAD | = GλMAX/2 | ri − rc | 3, be less than some user-defined fraction of the total potential, | φQUAD | < αMAC | φGRAV | . The code approximates

(60)We therefore require that the magnitude of the quadrupole moment potential, | φQUAD | = GλMAX/2 | ri − rc | 3, be less than some user-defined fraction of the total potential, | φQUAD | < αMAC | φGRAV | . The code approximates  with the value from the previous timestep, and calculates and stores for each cell the quantity

with the value from the previous timestep, and calculates and stores for each cell the quantity  . The Eigenvalue MAC is then applied in the form

. The Eigenvalue MAC is then applied in the form  (61)Equation (61) does not guarantee a maximum fractional error, but attempts to limit the error contribution from each cell. Therefore we also use the Geometric MAC with θMAC = 1.0, alongside the Eigenvalue MAC, as an extra safety measure.

(61)Equation (61) does not guarantee a maximum fractional error, but attempts to limit the error contribution from each cell. Therefore we also use the Geometric MAC with θMAC = 1.0, alongside the Eigenvalue MAC, as an extra safety measure.

9.2.4. SPH-neighbour cell-opening criterion

The multipole expansions used in SEREN assume that each SPH particle in a cell is a point-mass. In contrast, the derivation of the equation of motion takes account of the finite extent of an SPH particle (i.e. kernel-softened gravity; see Sect. 4). The SPH-neighbour criterion therefore requires that any cell that might contain neighbours of particle i be opened. The code calculates and stores for each cell the quantity  , where the maximum is over all the particles j in the cell; dc is the maximum extent of the smoothing kernels of the particles in cell c. The overall cell-opening criterion then takes the form

, where the maximum is over all the particles j in the cell; dc is the maximum extent of the smoothing kernels of the particles in cell c. The overall cell-opening criterion then takes the form  (62)This additional criterion adds an extra overhead to calculating the properties of the cells, and also to the gravity walk, since there are now two cell-opening criteria to check. However, in highly clustered geometries such as those found in gravitational collapse problems, this extra check brings significant accuracy and speed benefits.

(62)This additional criterion adds an extra overhead to calculating the properties of the cells, and also to the gravity walk, since there are now two cell-opening criteria to check. However, in highly clustered geometries such as those found in gravitational collapse problems, this extra check brings significant accuracy and speed benefits.

10. Tests

We have performed a large number of standard and non-standard tests to demonstrate that the algorithms in SEREN have been implemented correctly and perform well. It is not practical to test all possible combinations of the options available in SEREN, and we have therefore chosen tests which demonstrate the performance of particular algorithms. Where possible, we compare the test results with known analytic or semi-analytic solutions. Where an algorithm has been developed in another SPH code and the subroutine then imported into SEREN (e.g. the radiative cooling module of Stamatellos et al. 2007), or has been written directly into SEREN as an independent module (e.g. the HEALPix module for treating ionising radiation; Bisbas et al. 2009), the testing is not described here, and the interested reader is referred to the original paper.

10.1. Generation of initial conditions

The generation of initial conditions in SPH often needs careful consideration, since particle noise and edge effects can impact negatively on test simulations such as those described here. For example, random initial conditions suffer from Poisson-noise in the particle distribution which leads to high-frequency noise in the density and particle accelerations. A safer approach is to generate a so-called “glass” distribution of particles. A glass is a semi-regular structure in which all the particles are roughly equidistant from each other.

In order to generate a glass, we initially place equal-mass particles randomly in a periodic box. The particles are evolved using SEREN, with artificial viscosity to dissipate the kinetic energy, until the particles have settled into an equilibrium structure. We use an isothermal EOS and a Courant factor of γCOUR = 0.2. Once settled, the particle boxes can be replicated and joined together to create larger settled particle distributions, and uniform-density spheres can be cut from a box. All of the glass distributions used in this paper are set-up using this method. We note that glass-structures can be set up with different methods (e.g. “repulsive” gravity in GADGET2, Springel 2005).

|

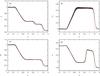

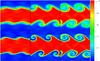

Fig. 1 Results of the adiabatic shock test using the “grad-h” SPH formulation (Price & Monaghan 2004a) showing a) the density, b) the x-velocity, c) the thermal pressure, and d) the specific internal energy after a time t = 1.0. The black dots represent the results from the SPH simulation and the red lines show the semi-analytic solution obtained using a Riemann solver. |

Initial conditions for the adiabatic Sod test (Cols. 2 and 3), and for the colliding flows test (Cols. 4 and 5).

10.2. Adiabatic Sod test (Sod 1978)