Fig. 5


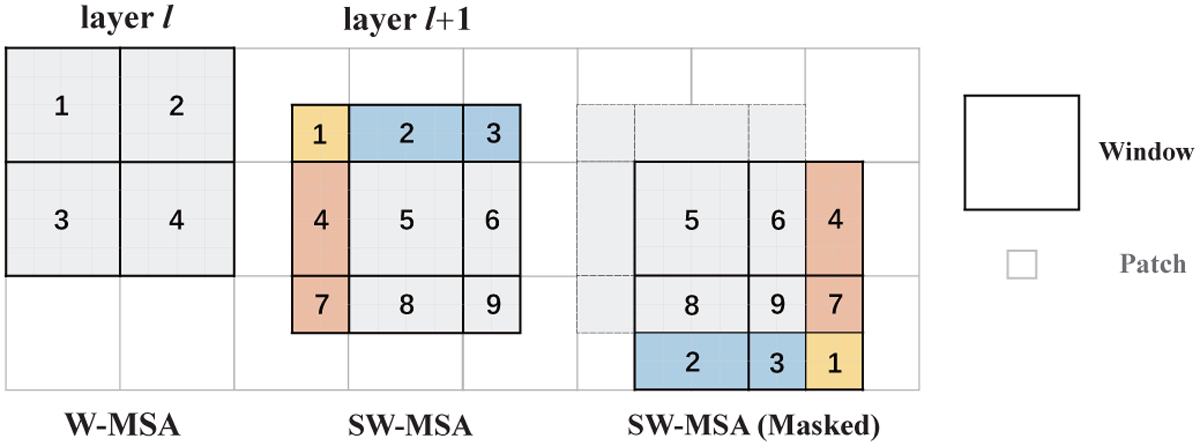

Attention calculation process in the Swin Transformer block. The left panel shows W-MSA applied in layer l, and the middle panel shows SW-MSA applied in the next layer; the two always appear in pairs. The right panel shows how attention is calculated in SW-MSA: the windows are shifted to form new complete windows, and a masking operation is then applied so that attention is calculated only within each window. The black squares represent windows with a size of 4 × 4, and the grey squares represent patches.
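
The shift-and-mask step described in the caption can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the 8 × 8 patch grid, the shift of half a window, the additive mask value of -100, and the helper names `window_partition` and `shifted_window_mask` are all assumptions chosen for illustration. Only the 4 × 4 window size comes from the figure.

```python
import numpy as np


def window_partition(x, m):
    """Split an (H, W) array into non-overlapping m x m windows.

    Returns an array of shape (num_windows, m * m).
    """
    h, w = x.shape
    x = x.reshape(h // m, m, w // m, m)
    return x.transpose(0, 2, 1, 3).reshape(-1, m * m)


def shifted_window_mask(h, w, m, shift):
    """Additive attention mask for shifted windows (hypothetical helper).

    Patches that end up in the same window after the cyclic shift but come
    from different regions of the original grid get a large negative value,
    so a softmax over the scores effectively ignores those pairs.
    """
    region = np.zeros((h, w), dtype=np.int64)
    bands = (slice(0, -m), slice(-m, -shift), slice(-shift, None))
    label = 0
    for rows in bands:
        for cols in bands:
            region[rows, cols] = label  # one label per original region
            label += 1
    ids = window_partition(region, m)            # (num_windows, m * m)
    differ = ids[:, :, None] != ids[:, None, :]  # pairwise region mismatch
    return np.where(differ, -100.0, 0.0)         # assumed mask value


H = W = 8          # assumed grid of 8 x 8 patches
M = 4              # window size from the figure
SHIFT = M // 2     # assumed shift of half a window

patches = np.arange(H * W).reshape(H, W)
# Cyclic shift: move the grid up and to the left so new windows are complete.
shifted = np.roll(patches, shift=(-SHIFT, -SHIFT), axis=(0, 1))
mask = shifted_window_mask(H, W, M, SHIFT)  # shape (4, 16, 16)
```

In this sketch the mask would be added to the attention scores of each window before the softmax. The first window contains patches from a single region, so its mask is all zeros; the remaining windows mix regions and therefore contain masked pairs.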
