Fig. 2

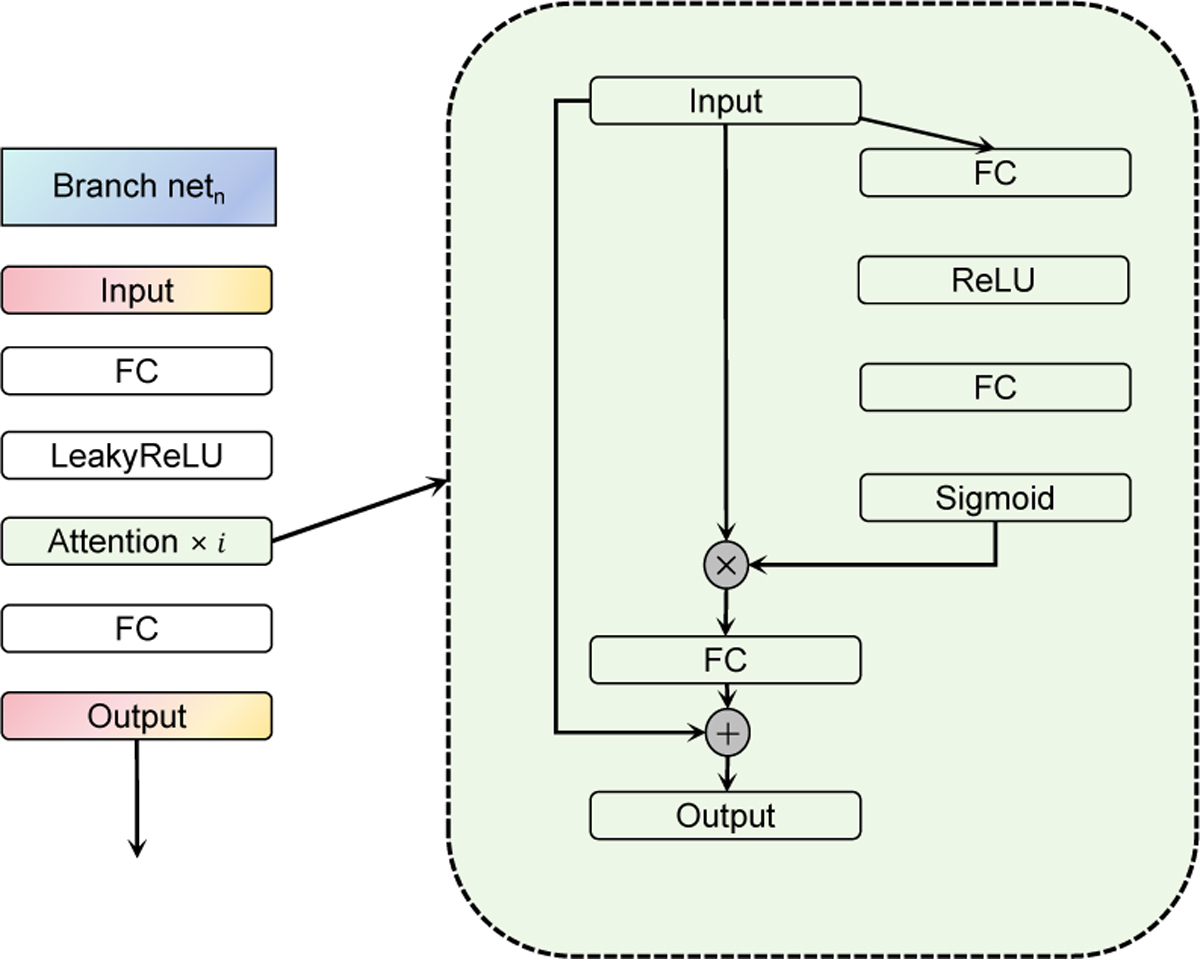

Detailed architectures of the branch network netn and the attention mechanism, where FC denotes a fully connected layer. The input to branch netn first passes through an FC layer for initial feature extraction and then undergoes feature amplification by the attention mechanism, where i denotes the layer number. The branch networks net1, net2, and net3 use 3, 6, and 6 layers, respectively.
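The data flow described in the caption can be sketched roughly as follows. This is a minimal illustration, not the authors' implementation: the caption does not specify the form of the attention mechanism, so the softmax gate, the `1 + a` amplification, the `tanh` activation, the layer widths, and all function names here are assumptions. The sketch also reads the 3, 6, and 6 layers as the number of stacked attention layers in each branch.

```python
import math
import random


def fc(x, weights, bias):
    """Fully connected layer: y = W x + b."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]


def softmax(v):
    """Numerically stable softmax over a list of floats."""
    m = max(v)
    exps = [math.exp(a - m) for a in v]
    total = sum(exps)
    return [e / total for e in exps]


def init_fc(n_in, n_out, rng):
    """Random weights and zero bias for an FC layer (illustrative init)."""
    scale = 1.0 / math.sqrt(n_in)
    weights = [[rng.uniform(-scale, scale) for _ in range(n_in)]
               for _ in range(n_out)]
    return weights, [0.0] * n_out


def attention_layer(h, params):
    """ASSUMED attention form: an FC layer produces softmax weights,
    which amplify the features elementwise as h * (1 + a)."""
    weights, bias = params
    a = softmax(fc(h, weights, bias))
    return [hi * (1.0 + ai) for hi, ai in zip(h, a)]


def build_branch(n_in, n_hidden, n_layers, seed=0):
    """One branch: an initial FC layer followed by n_layers attention layers."""
    rng = random.Random(seed)
    return {
        "fc": init_fc(n_in, n_hidden, rng),
        "attn": [init_fc(n_hidden, n_hidden, rng) for _ in range(n_layers)],
    }


def branch_forward(x, branch):
    """Initial feature extraction (FC), then layer-by-layer amplification."""
    h = [math.tanh(v) for v in fc(x, *branch["fc"])]
    for params in branch["attn"]:  # i = 1 .. n_layers
        h = attention_layer(h, params)
    return h


# Layer counts taken from the caption; input and hidden widths are made up.
LAYERS = {"net1": 3, "net2": 6, "net3": 6}
branches = {name: build_branch(4, 8, n) for name, n in LAYERS.items()}
out = branch_forward([0.1, -0.2, 0.3, 0.5], branches["net1"])
```

Under these assumptions each attention layer preserves the feature width, so the per-branch depth can vary without changing the shape of the branch output.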
