Fig. 8

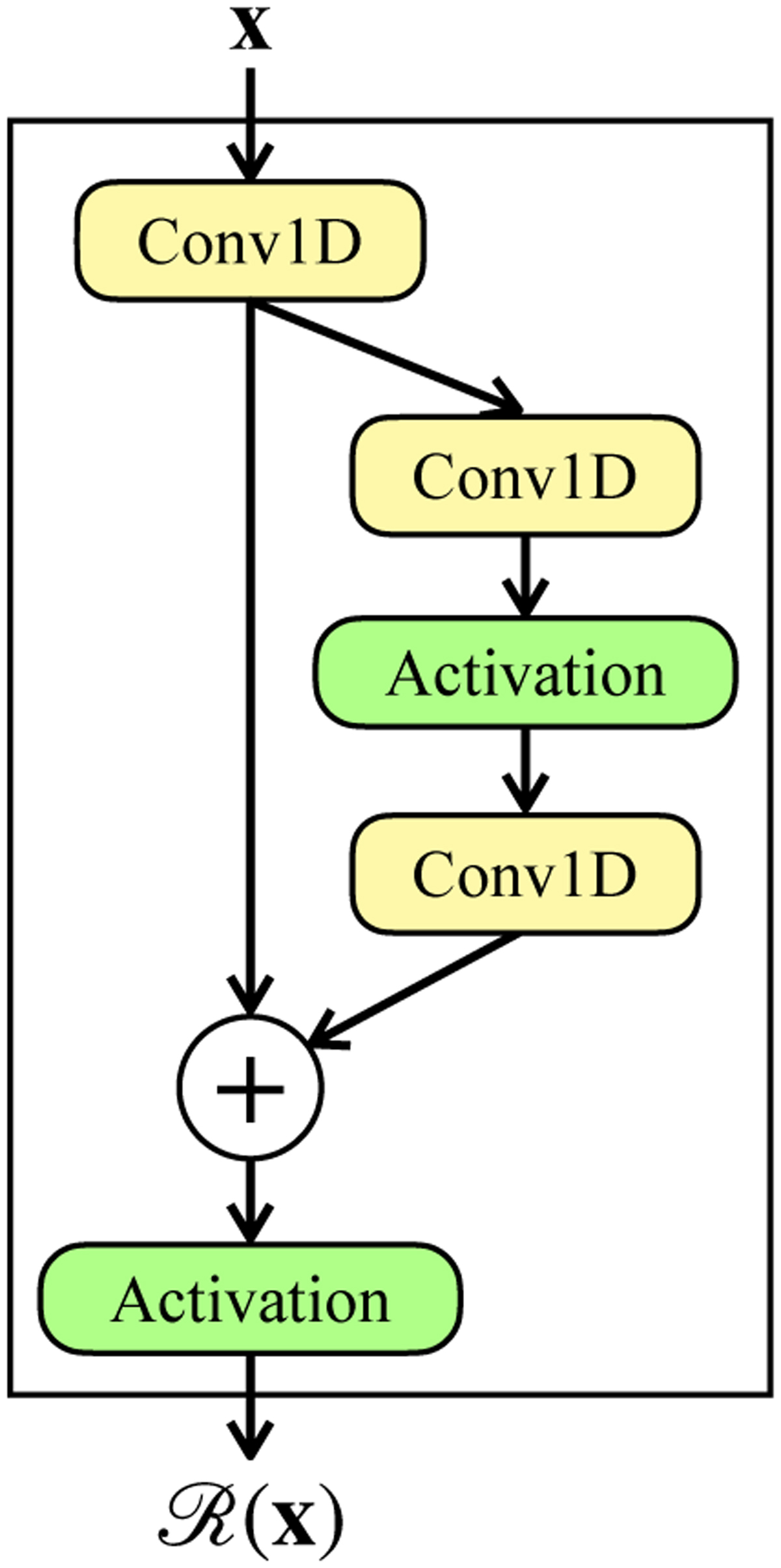

Residual block in SANSA. An input vector x is first passed through a convolutional layer, and a copy of the output tensor is retained. The output then passes consecutively through a pair of convolutional layers that introduce nonlinearity while preserving the shape of the tensor. The result is added element-wise to the retained copy (i.e., a parallel identity path), and the sum is passed through a nonlinear activation to obtain the final output of the block. The latter two convolutional layers thus learn a residual nonlinear mapping. (Note that zero-padding is applied in all convolutions to preserve the feature shape in subsequent layers of the network.)
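
The data flow in this caption can be illustrated with a minimal sketch in plain Python. This is not the SANSA implementation: the single-channel 1D setting, the ReLU activation, the placement of the nonlinearity inside the convolution pair, and the names `conv1d_same`, `relu`, and `residual_block` are all assumptions made for illustration only.

```python
# Minimal single-channel 1D sketch of a residual block with zero-padding.
# Assumptions (not from the source): ReLU activation, odd kernel sizes,
# nonlinearity applied after the first convolution of the pair.

def conv1d_same(x, kernel):
    """Zero-padded 1D convolution (cross-correlation) that preserves len(x)."""
    k = len(kernel)
    assert k % 2 == 1, "odd kernel size assumed so that padding is symmetric"
    pad = k // 2
    padded = [0.0] * pad + list(x) + [0.0] * pad
    return [sum(kernel[j] * padded[i + j] for j in range(k))
            for i in range(len(x))]

def relu(x):
    """Element-wise rectified linear activation."""
    return [max(0.0, v) for v in x]

def residual_block(x, k_in, k_a, k_b):
    h = conv1d_same(x, k_in)        # first convolutional layer
    skip = list(h)                  # retained copy (identity path)
    r = relu(conv1d_same(h, k_a))   # first layer of the pair, with nonlinearity
    r = conv1d_same(r, k_b)         # second layer of the pair
    summed = [a + b for a, b in zip(r, skip)]  # add residual to the copy
    return relu(summed)             # final nonlinear activation

x = [1.0, -2.0, 3.0, 0.5]
out = residual_block(x, [0.0, 1.0, 0.0], [0.2, 0.5, 0.2], [0.1, -0.3, 0.1])
print(len(out) == len(x))  # zero-padding keeps the shape unchanged
```

If both kernels of the pair are set to zero, the residual branch contributes nothing and the block reduces to the activation applied to the retained copy, which is the sense in which the pair learns only a residual correction.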
