Fig. B.1

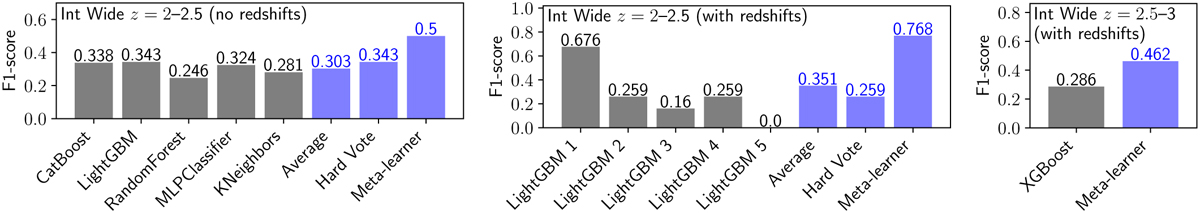

Examples of the F1-scores obtained from individual base-learners and from the model ensembling methods. Left: Selection of quiescent galaxies at z = 2–2.5 from the Int Wide catalogue using Euclid photometry, without prior knowledge of the galaxy redshifts. As described in the text, the meta-learner performs a non-linear fusion of the individual classifiers, resulting in a significantly higher F1-score than that obtained by any of the individual base-learners or by the two other ensemble methods (averaging and hard-voting). Centre: Impact of ensembling a LightGBMClassifier model whose hyperparameters are well tuned for this problem (LightGBM 1) with four other LightGBM models that have poorly tuned hyperparameters (LightGBM 2, 3, 4, 5). In this case, averaging the model predictions and hard-voting both produce poor results, but the meta-learner is able to identify the low-quality class predictions and weight them accordingly. Right: Application of a meta-learner to a classification model produced by a single base-learner, in this case XGBoostClassifier. In this circumstance, the meta-learner performs 'error correction', resulting in a significant improvement in the quality of the classifier.
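To make the two simpler ensemble rules named in the caption concrete, the sketch below computes averaging and hard-voting predictions and their F1-scores in plain Python. It is only an illustration: the function names (`f1_score`, `average_ensemble`, `hard_vote_ensemble`), the 0.5 decision threshold, and the toy labels and probabilities are assumptions made up for this example and are not taken from the paper or its data. The toy set-up loosely mimics the centre panel, with one well-behaved model and two poor ones.

```python
# Toy illustration of averaging vs. hard-voting ensembles.
# All names, the 0.5 threshold, and the numbers are hypothetical.

def f1_score(y_true, y_pred):
    """F1 = 2*TP / (2*TP + FP + FN) for binary labels (1 = positive)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0

def to_labels(probs, threshold=0.5):
    """Convert class-1 probabilities to hard 0/1 labels."""
    return [1 if p >= threshold else 0 for p in probs]

def average_ensemble(model_probs, threshold=0.5):
    """Average the class-1 probabilities of all models, then threshold."""
    n_models = len(model_probs)
    mean_probs = [sum(col) / n_models for col in zip(*model_probs)]
    return to_labels(mean_probs, threshold)

def hard_vote_ensemble(model_probs, threshold=0.5):
    """Threshold each model separately, then take a strict majority vote."""
    votes = [to_labels(p, threshold) for p in model_probs]
    n_models = len(votes)
    return [1 if 2 * sum(col) > n_models else 0 for col in zip(*votes)]

# Made-up labels and class-1 probabilities for six objects.
y_true = [1, 1, 0, 0, 1, 0]
good_model = [0.9, 0.8, 0.1, 0.2, 0.7, 0.3]   # stands in for a well-tuned model
poor_model_1 = [0.4, 0.6, 0.6, 0.3, 0.2, 0.7]  # stand-ins for poorly tuned models
poor_model_2 = [0.3, 0.4, 0.7, 0.6, 0.4, 0.4]
all_models = [good_model, poor_model_1, poor_model_2]

f1_good = f1_score(y_true, to_labels(good_model))
f1_avg = f1_score(y_true, average_ensemble(all_models))
f1_vote = f1_score(y_true, hard_vote_ensemble(all_models))

print(f"best single model: {f1_good:.2f}")
print(f"averaging:         {f1_avg:.2f}")
print(f"hard-voting:       {f1_vote:.2f}")
```

In this toy case both fixed combination rules score below the single good model, because each gives the poor models the same say as the good one. A meta-learner, by contrast, is itself a trained model that takes the base-learner outputs as input features, so it can learn to down-weight unreliable predictions; it is not implemented here.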
