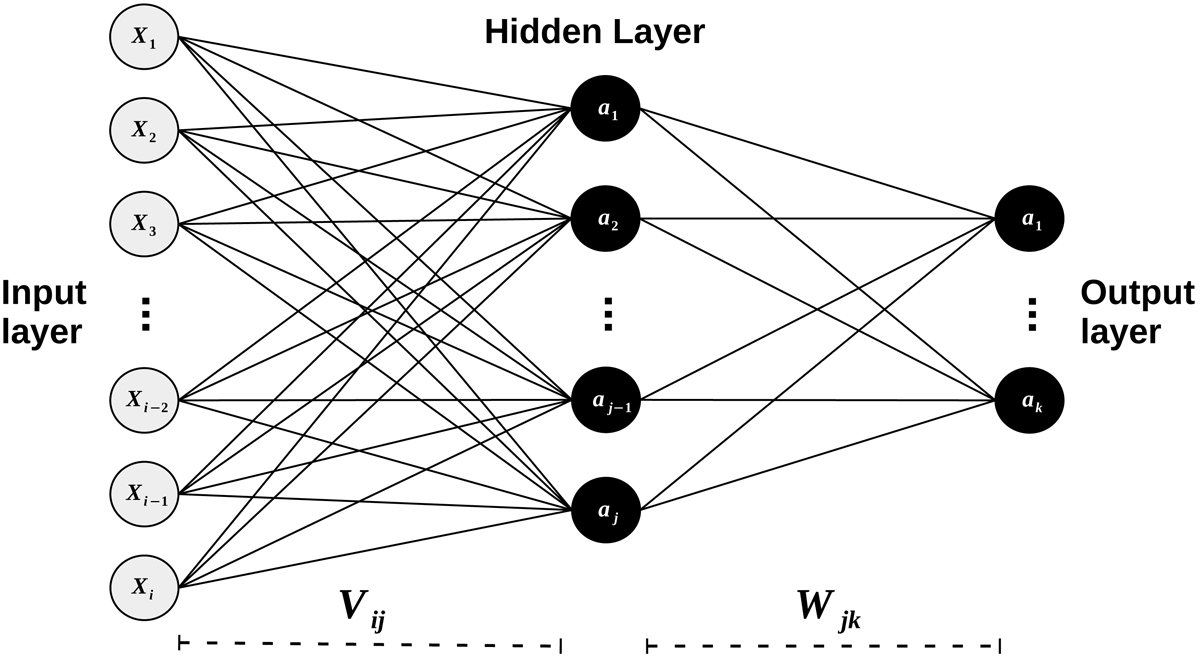

Fig. 1.

Schematic view of a simple neural network with only one hidden layer. The light dots are input dimensions. The black dots are neurons with the linking weights represented as continuous lines. Learning with this network relies on Eqs. (1)–(8). X[1, …, i] are the dimensions for one input vector, a[1, …, j] are the activations of the hidden neurons, a[1, …, k] are the activations of the output neurons, while $V_{ij}$ and $W_{jk}$ represent the weight matrices between the input and hidden layers, and between the hidden and output layers, respectively.
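As a rough illustration of the caption, the forward pass through such a one-hidden-layer network can be sketched as follows. This is a minimal sketch under assumptions the caption does not state: a logistic (sigmoid) activation, no bias terms, and weight matrices stored as nested lists indexed `V[i][j]` and `W[j][k]`. The function names `sigmoid`, `layer` and `forward` are hypothetical, and the sketch covers only the propagation of one input vector, not the learning procedure of Eqs. (1)–(8).

```python
import math
from typing import List

Matrix = List[List[float]]


def sigmoid(h: float) -> float:
    """Logistic activation (an assumption: the caption names no activation)."""
    return 1.0 / (1.0 + math.exp(-h))


def layer(inputs: List[float], weights: Matrix) -> List[float]:
    """Activations of one layer: out[c] = sigmoid(sum_r inputs[r] * weights[r][c]).

    `weights` has one row per incoming dimension and one column per neuron,
    following the V_ij / W_jk index order of the caption. No bias term is used.
    """
    n_out = len(weights[0])
    return [
        sigmoid(sum(x * row[c] for x, row in zip(inputs, weights)))
        for c in range(n_out)
    ]


def forward(x: List[float], V: Matrix, W: Matrix) -> List[float]:
    """Propagate one input vector X through the hidden layer to the output layer."""
    hidden = layer(x, V)     # hidden activations a_j, computed with V_ij
    return layer(hidden, W)  # output activations a_k, computed with W_jk


if __name__ == "__main__":
    # Hypothetical example: 2 input dimensions, 3 hidden neurons, 1 output neuron.
    x = [0.5, -1.0]
    V = [[0.1, -0.2, 0.3], [0.4, 0.0, -0.1]]
    W = [[0.2], [-0.5], [0.7]]
    print(forward(x, V, W))
```

With every weight set to zero, each neuron outputs sigmoid(0) = 0.5 whatever the input, which is a quick sanity check on the layer shapes.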
