| Issue |

A&A

Volume 531, July 2011

|

|

|---|---|---|

| Article Number | A86 | |

| Number of page(s) | 20 | |

| Section | Numerical methods and codes | |

| DOI | https://doi.org/10.1051/0004-6361/201016374 | |

| Published online | 20 June 2011 | |

Towards a new generation of multi-dimensional stellar evolution models: development of an implicit hydrodynamic code

1 École Normale Supérieure de Lyon, CRAL (UMR CNRS 5574), Université de Lyon 1, France

e-mail: This email address is being protected from spambots. You need JavaScript enabled to view it.

2

Physics and Astronomy, University of Exeter, Stocker Road, Exeter, EX4 4QL, UK

Received: 20 December 2010

Accepted: 6 March 2011

Abstract

This paper describes the first steps in the development of a new multi-dimensional time implicit code devoted to the study of hydrodynamical processes in stellar interiors. The code solves the hydrodynamical equations in spherical geometry and is based on the finite volume method. Radiation transport is taken into account within the diffusion approximation. Realistic equation of state and opacities are implemented, allowing study of a wide range of the problems characteristic of stellar interiors. We describe the numerical method and various standard tests performed to validate the method in detail. We present preliminary results devoted to describing stellar convection. We first performed a local simulation of convection in the surface layers of a A-type star model. This simulation tested the ability of the code to address stellar conditions and to validate our results, since they can be compared to similar previous simulations based on explicit codes. We then present a global simulation of turbulent convective motions in a cold giant envelope, covering 80% in radius of the stellar structure. Although our implicit scheme is unconditionally stable, we show that in practice there is a limitation on the time step that prevents the flow moving over several cells during a time step. Nevertheless, in the cold giant model we reach a hydro CFL number of 100. We also show that we are able to address flows with a wide range of Mach numbers (10-3 ≲ Ms ≲ 0.5), which is impossible with an anelastic approach. Our first developments are meant to demonstrate that applying an implicit scheme to a stellar evolution context is perfectly thinkable and to provide useful guidelines for optimising the development of an implicit multi-dimensional hydrodynamical code.

Key words: hydrodynamics / convection / methods: numerical / stars: interiors

© ESO, 2011

1. Introduction

With the advent of massively parallel computers and the development of advanced numerical techniques like adaptive mesh refinement and massive parallelisation, astrophysical fields like cosmology, star formation, or stellar/galactic environment studies have recently made remarkable steps forward. Despite this revolution in computational astrophysics, our physical understanding of stellar interiors and evolution still largely relies on one-dimensional calculations. Implicit 1D stellar evolution codes were widely developed during the past century, gaining more and more in sophistication through improvements in the input physics and implementation of an increasing number of complex physical processes such as time-dependent turbulent convection, rotation, or MHD processes. Their description, however, still mostly relies on simplified, phenomenological approaches, with a predictive power hampered by the use of several free parameters. These approaches have now reached their limits for understanding stellar structure and evolution. The development of multi-dimensional hydrodynamical simulations becomes crucial for progress in the field of stellar physics and for the enormous observational efforts aimed at producing data of unprecedented quality, as expected from the asteroseismological space projects CoRoT and Kepler.

Several efforts have been devoted to the development of 2D and 3D tools for studying some aspects and specific problems of stellar structure and evolution. Impressive progress and developments have been achieved in multi-dimensional hydrodynamics and MHD models of stellar atmospheres and photospheres (see e.g. Freytag et al. 1996; Stein & Nordlund 1998; Asplund et al. 2000; Bigot et al. 2006; Nordlund & Stein 2009). In stellar interiors, many studies of convection, rotation, and magnetic fields are performed with anelastic hydrodynamic solvers, which filter out sound waves and have the priceless advantage of not being restricted to the Courant-Friedrich-Lewy (CFL hereafter) time step limit, a severe limitation for explicit compressible hydrodynamic solvers (Brun & Toomre 2002; Guzik 2011, and references therein). Anelastic approaches, however, are restricted to the study of flows characterised by very low Mach numbers and small thermodynamic fluctuations from the background (see discussions in Almgren et al. 2006; Meakin & Arnett 2007a).

Other groups use 2D/3D explicit hydrodynamical simulations to follow the properties on short snapshots of turbulent convection, mixing processes, or nuclear burning in stellar interiors such as cores and burning shells of massive stars or during the core He flash in low-mass stars (Dearborn et al. 2006; Meakin & Arnett 2007b; Eggleton et al. 2008; Mocák et al. 2009; Arnett et al. 2009). However, owing to the intrinsic time-step limitation of explicit codes, simulating those processes on time scales that are significantly larger than the dynamical one and relevant to stellar evolution would be prohibitively expensive. To overcome this problem, increasing efforts are now devoted to developing algorithms for low Mach-number, compressible stellar flows (Hujeirat & Thielemann 2009; Nonaka et al. 2010). However, many stellar regions and evolutionary phases are characterised by low-to-moderate Mach-number flows, which would ideally be described by fully time-implicit solvers.

We only know two multi-dimensional implicit codes applied to stellar physics problems, namely the 2D stellar evolution code ROTORC developed by Deupree (1990) and the 2D code VULCAN of Livne (1993). The work of Deupree is pioneering in the field of multi-dimensional implicit stellar evolution, with ROTORC applied to the study of stellar convection and rotation in stars of different masses. However, the numerical method involved in this code cannot compete with current, advanced numerical algorithms, because it is based on a non-conservative finite-difference scheme with the implicit solver as an extension to the 2D of the Henyey method. This method is widely used in 1D stellar evolution calculations but cumbersome (if not impossible) to parallelise, thus it significantly restricts the spatial resolution for multi-dimensional calculations. VULCAN is based on a two-step method consisting of an implicit Lagrangian step followed by an explicit remapping step on an arbitrary grid. The code has been essentially applied to studying core-collapse supernovae (see e.g. Livne et al. 2004; Burrows et al. 2007) and novae explosions (see e.g. Glasner & Livne 1995; Livne & Arnett 1995; Glasner et al. 1997; Livne 1999). Although VULCAN offers the possibility of solving advection implicitly, to our knowledge in these works the authors performed multi-dimensional computations by solving advection explicitly and radiation implicitly. Thus, we cannot gauge the capacity of this code to describe long-term stellar evolution problems, in general, and convective flows in various stellar interiors, in particular. Hujeirat (2005a,b) develops a multi-dimensional implicit MHD solver, but the applications are oriented more towards high-energy processes (e.g. accretion on a black hole). Finally, we mention the work of Toth et al. (1998) and Keppens et al. (1999), which describe the implementation and tests of various implicit methods for multi-dimensional computations of steady state and time-dependent problem in (magneto)hydrodynamic.

In this context, we started to develop a multi-dimensional time implicit code, guided by the motivation of improving the description of stellar interiors during different phases of evolution. The holy grail is to develop a tool that allows the 3D description of a complete star during thermal, nuclear, or accretion time scales that would be relevant to various stellar evolution phases. Our primary interests focus on the description of time-dependent convection in the envelope of pulsating stars like Cepheids, a long-standing problem in asteroseismology, or during the very early phases of stellar evolution (early pre-main sequence) following the hydrodynamical phase of a proto-star formation. Improving our description of these very early stages of evolution is crucial to a more accurate analysis of current observations and, in particular, to derivation of a key property for understanding star formation, namely the initial mass function (see e.g. discussion in Baraffe et al. 2002). For the above-mentioned problems, neither an anelastic nor a low-Mach approach is appropriate, since the convective flows are characterised by very low (M ≪ 0.01) to moderate (0.1 ≲ M ≲ 1) Mach numbers from the deep interior to the stellar surface. Also, a wide range of other astrophysicaly interesting problems can be addressed with such a tool, providing a wealth of applications on a long-term future and a revival of the field of stellar physics.

Our goal is exceedingly challenging, with a long way to go before achievement. This first paper describes our method and the first tests performed to describe convection in stellar interiors. Because implicit schemes are much more expensive in terms of CPU than explicit ones, part of the astrophysical, computational community casts doubts on the advantage and applicability of the former to any stellar physics problem. Our first developments are meant to demonstrate that the use of an implicit scheme in a stellar evolution context is perfectly thinkable. For this purpose, we present two examples of stellar convection for which we derive the exact CFL numbers and discuss the advantages and limits of an implicit approach. Even though the implicit method used throughout the paper has no stability limit on the time step (see Sect. 3.2.2), the time step cannot take arbitrarily high values for both technical and accuracy reasons. We show in this paper that both reasons are actually related and that the time step cannot grow much larger than the shortest time scale of the flow for crossing a grid cell. Technically this implies that the solution change is moderate between two time steps, insuring a safe convergence in the non-linear solver. In addition, this is also a good accuracy criterion, since advection over more than one grid cells is subject to strong numerical damping. Our first numerical experiments are meant to provide useful guidelines for developing an implicit multi-dimensional hydrodynamical code.

The paper is organised as follows. We describe in Sect. 2 our physical model and in Sect. 3 our numerical method. We present a test case in Sect. 4 and results for stellar models in Sect. 5. In Sect. 6, we conclude the paper and discuss future numerical advancements that are planned to optimise the code.

2. Equations

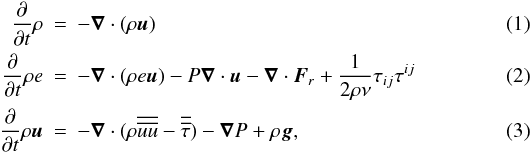

We solve the equations describing the evolution of density, momentum, and internal energy, taking into account external gravity and radiative diffusion:

where ρ is the density, e the specific internal energy, u the velocity, P the gas pressure, Fr the radiative flux (see below), g the gravitational acceleration, and  the viscous stress tensor, whose components are given by

the viscous stress tensor, whose components are given by  (4)

(4)

where eij is the strain rate tensor and ν the viscosity coefficient. The expected value of the molecular viscosity in stellar interiors implies large Reynolds numbers characterising the flow (up to 1012). It is therefore impossible to model all scales of the flow, from the stellar scale down to the dissipation scale, on current generation of computers. As a result, any numerical simulations of stellar interiors should be interpreted in the large eddy simulation (LES) paradigm, in which only large-scale motions are resolved on the grid. In the standard LES, the effect of subgrid-scale motions (i.e. turbulence) is taken into account by introducing a so-called subgrid scale (SGS) model. A classical example is the effective viscosity coefficient proposed in Smagorinsky (1963). On the other hand, the MILES approach (for Monotone Integrated Large Eddy simulation, see e.g. Boris et al. 1992) relies solely on the numerical viscosity of the scheme, which results from discretisation, to mimic the subgrid scale dissipation. In our case, a standard LES approach should rely on an SGS model relevant for compressible flow in a stratified medium, which to our knowledge has not been designed yet. It is far from certain that the use of standard SGS models, mostly relevant for incompressible and homogenous flow, will improve our results. Therefore, we choose to follow the MILES strategy and all the computations of stellar convection presented in this paper are based on the advection-diffusion equations without explicit viscosity.

We solve the internal energy density equation. Some of the popular numerical codes that have been used to study stellar convection solve the entropy equation (e.g. ASH code, Pencil code), which is equivalent to the internal energy equation. A better approach to energy conservation would be to solve the total energy equation, as for instance in the COBOLD code (see Freytag and collaborators) and in the PROMPI code (see Meakin & Arnett 2007b). In the future we plan to implement a total energy equation solver, which might be more appropriate for problems like stellar pulsations.

For stellar calculations, we treat radiation transport in the diffusion limit approximation. This approximation is suitable for an optically thick region but becomes inaccurate in the photosphere and optically thin regions. In this framework, the radiative flux Fr writes as  (5)

(5)

where the photon conductivity krad is given by  (6)

(6)

with κ the Rosseland opacity of the gas, which is interpolated from the OPAL tables (Iglesias & Rogers 1996).

The equations are closed with the equation of state:  (7)

(7)

Our equation of state is tabulated and includes the partial ionisation of several chemical elements (determined by the Saha-Bolztmann equations) from hydrogen to silicium and takes Coulombian pressure into account. The chemical abundances are set to the solar values. For details see Aubert et al. (1996). We consider here stellar envelopes and therefore we do not take nuclear reactions into account, which concern the central region of the star.

For the geometry, we adopt spherical coordinates. We assume azimuthal symmetry so the only independent coordinates are r (the radius) and θ (the colatitude). In general, the coordinates (r,θ) span a domain [rin,rout] × [θ1,θ2] . We use periodic conditions in the tangential direction. For boundary conditions at the top and bottom of the domain we impose

-

1.

non-penetrative conditions:ur = 0 at r = rin, rout;

-

2.

stress-free conditions:

at r = rin, rout;

at r = rin, rout; -

3.

constant energy flux F⋆ at the bottom of the domain r = rin;

-

4.

an energy flux at the top of the domain given by

(8)

(8)

where Tout is the temperature of the cells in the top layer of the domain. Finally, we would like to stress that we adopted 2D geometry to simplify the development of the code, but extension to 3D is planned in the future.

3. Numerical method

We present below our numerical method, describing separately the spatial (Sect. 3.1) and temporal (Sect. 3.2) discretisations.

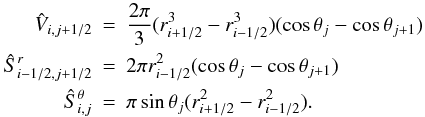

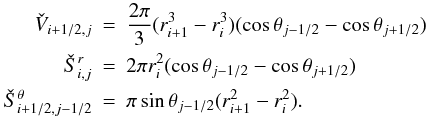

3.1. Spatial discretisation

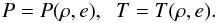

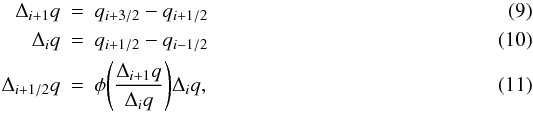

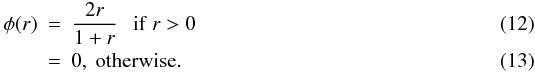

3.1.1. One-dimensional advection scheme

The equations are discretised on a staggered grid, using a finite volume approach. Cell interfaces location are denoted by xi,i = 1...Nx and cell centres by xi + 1/2,i = 1...Nx − 1. In the staggered grid approach, scalar quantities (e.g. density, internal energy, pressure, temperature, etc.) are located at cell centres, while velocity components are located at cell boundaries.

In the finite volume approach, the physical equations are integrated over a control volume to yield the evolution law for the physical quantities (mass, internal energy, momentum) in each cell. Fluxes are computed at cell interfaces, yielding a conservative scheme with respect to advection. For scalar quantities (density and internal energy) at xi + 1/2, the control volume is the cell [xi,xi + 1] with volume Vi + 1/2 and surfaces Si, Si + 1. For the velocity component at xi, the control volume is the cell [xi − 1/2,xi + 1/2] , with volume  and surfaces Ŝi − 1/2, Ŝi + 1/2.

and surfaces Ŝi − 1/2, Ŝi + 1/2.

We use the Van Leer method to compute the fluxes (see van Leer 1977; Stone & Norman 1992). To compute the flux at the control volume interfaces, it is necessary to perform interpolation since not all quantities are available at the considered interface. We define “barred” quantities (i.e.  ,

,  ) as quantities that are interpolated at the interface by taking a simple average between the values available on each side of the interface, for instance

) as quantities that are interpolated at the interface by taking a simple average between the values available on each side of the interface, for instance  . For a stable and TVD advection, it is necessary to introduce some limiting process in the interpolation scheme of advected quantities. Here we proceed with an upwind limited interpolation similar to the MUSCL method. In each cell a linear reconstruction of the solution is performed. For instance, we describe below the procedure for cell i + 1/2 and cell centred variable q. We first compute the limited slope Δi + 1/2q of the linear reconstruction in the cell by using the values of the neighbouring cells with a limiting process:

. For a stable and TVD advection, it is necessary to introduce some limiting process in the interpolation scheme of advected quantities. Here we proceed with an upwind limited interpolation similar to the MUSCL method. In each cell a linear reconstruction of the solution is performed. For instance, we describe below the procedure for cell i + 1/2 and cell centred variable q. We first compute the limited slope Δi + 1/2q of the linear reconstruction in the cell by using the values of the neighbouring cells with a limiting process:

where φ is the slope limiter. In the following we use the Van Leer limiter:  With such a reconstruction scheme, we reconstruct at each interface right and left values qR and qL:

With such a reconstruction scheme, we reconstruct at each interface right and left values qR and qL:

Depending on the sign of the velocity, one of these quantities is used to compute the flux at the interface. For instance, at interface xi one has

This defines the “tilded” quantities in the equations shown below. For the interfaces located at i + 1/2, the same reconstruction is performed and the interface value is chosen depending on the sign of  .

.

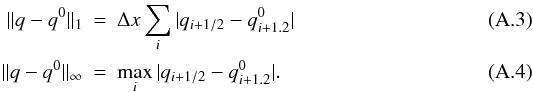

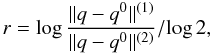

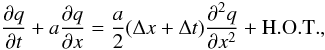

The pressure gradient at the interface is computed with a second-order, centred difference. The overall scheme is expected to be second order in space in the smooth regions of the flow. We present in Appendix A an order study for the linear advection problem.

After integration over the corresponding control volume and discretisation, the 1D Euler equations become

3.1.2. Gravity

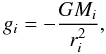

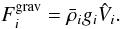

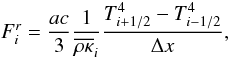

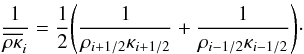

Since we are solving the internal energy equation, gravity only enters the momentum equation. We assume that gravity remains constant and spherically symmetric so that the gravitational acceleration at radius ri is given by  (21)

(21)

where G is the gravitational constant and Mi the mass contained inside the radius ri. Our stellar models do not extend to the centre of the star so Mi is computed assuming M1 = Mcore, where Mcore is determined from the original stellar model.

Under the assumption of spherical symmetry, the gravitational force enters the radial momentum equation only through the additional source term:  (22)

(22)

The assumption of spherical symmetry could become invalid if the star developed significant non-radial oscillations. The convective flows, which are not symmetric, do not alter the mass distribution symmetry significantly. Implementation of a full solver for the Poisson equation is planned for the future.

3.1.3. Radiative diffusion

The radiative flux through the cell interface is computed with a second-order central difference  (23)

(23)

where  (24)

(24)

3.1.4. Extension to 2D and spherical geometry

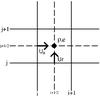

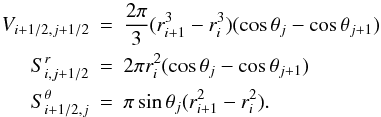

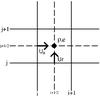

|

Fig. 1 Staggered mesh in 2D. |

Extension to 2D is done in the simplest possible way. Fluxes are computed along both directions following the 1D algorithm sketched above and added together in the update step (see below). Figure 1 shows the location of all quantities on our staggered 2D mesh. We avoid dimensional splitting (e.g. Strang splitting) that would increase the cost of the solver since several (implicit) substeps would have to be computed to advance the solution over one time step. It is clear in this case that the accuracy of the method as deduced from 1D analysis does not extend to multi-dimensional computation. One solution to improve the accuracy of the code would be to implement a genuinely multi-dimensional method (see e.g. Colella 1990; LeVeque 1997). Another possibility would be to increase the spatial order of the 1D reconstruction scheme to obtain a better accuracy in multi-dimensional computations. This is left for future developments.

We describe below how spherical geometry is taken into account in our spatial discretisation: in our finite volume approach, the cell (i + 1/2,j + 1/2) spans the parameter space domain [ri,ri + 1] × [θj,θj + 1] . The geometrical factors in the 2D version of cell-centred equations read as  These geometrical factors account for the geometrical effect associated with spherical coordinates. There are similar expression for the radial momentum and the tangential momentum equations (see Appendix B); however, due to the vector nature of the velocity, there are additional geometric source terms that appear in the momentum equations. In the radial momentum equation, the geometric source term reads as

These geometrical factors account for the geometrical effect associated with spherical coordinates. There are similar expression for the radial momentum and the tangential momentum equations (see Appendix B); however, due to the vector nature of the velocity, there are additional geometric source terms that appear in the momentum equations. In the radial momentum equation, the geometric source term reads as  (28)

(28)

and the geometric term in the tangential momentum is  (29)

(29)

The full semi-discretised formulae are detailed in Appendix B.

Spherical coordinates have severe drawbacks in the discretisation of a sphere. First, since r = 0 is a singular point of the coordinates, the cell size reduces to zero toward the centre. They are therefore inappropriate for modelling the central regions of the star. Also, the cell size increases toward the surface, whereas finer resolution is needed in these regions (see Sect. 5.2). Calhoun et al. (2008) shows how to design structured grids that overcome these limitations.

3.2. Temporal method

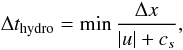

3.2.1. CFL time steps

When an explicit method is used to integrate a discrete set of equations in time, there is a constraint on the time step that results from the CFL condition. The CFL condition states that the physical domain of dependence of the solution should be included in the numerical domain of dependence, otherwise the numerical scheme cannot be convergent. For an explicit scheme, this yields a constraint on the time step that has to be smaller than the CFL time step to perform a stable computation (independently of any accuracy consideration).

Determining the stability limit for a given explicit scheme is not always trivial, if not impossible, especially in the non-linear and multi-dimensional cases. See, for instance, Hirsch (1990) for a careful derivation of the CFL time step for popular schemes. The CFL time steps that we define in this section are based on very simple arguments and should only be considered as a guideline for the maximum stable time step.

For the hydrodynamic equations, which are hyperbolic equations, the CFL condition states that the time step should not be longer than the crossing time of the fastest wave over a cell. In this case the maximum stable time step is equal to  (30)

(30)

where |u| + cs is the velocity of the fastest wave (u is the velocity of the background flow).

When time-dependent diffusion processes are considered, for instance in our case radiative diffusion, the corresponding CFL time step is related to the typical time scale for diffusion in a cell:  (31)

(31)

where χ = krad/(ρcp) is the radiative diffusivity coefficient, and cp the specific heat at constant pressure. For advection-diffusion problem, which are of mixed nature, the CFL time step is taken to be the minimum values of Eqs. (30) and (31).

We also define here a useful time step that we call the advective time scale. It is defined by  (32)

(32)

Its definition is inspired by the hydro time step (30) where we have set the velocity of the wave to be simply u instead of u + cs. This time step characterises the time needed for the flow to cross one cell. We stress that, for a code solving the standard compressible hydrodynamic equations with an explicit method, this time step is not a stability limit. We introduce this time scale since, as shown later, it corresponds to a natural limit for the time step; however, for anelastic codes, this time step represents the stability limit, since sound waves are filtered out in this approach.

With the definition of these three time steps, we can define the corresponding CFL numbers for a given numerical time step Δt:  (33)By adopting an implicit integration strategy, our goal is to release the stability limit on the time step. Therefore, to appreciate the performance of our solver, we carefully monitor the values of these three CFL numbers.

(33)By adopting an implicit integration strategy, our goal is to release the stability limit on the time step. Therefore, to appreciate the performance of our solver, we carefully monitor the values of these three CFL numbers.

In 2D, the time steps defined above are computed independently in both directions and the CFL time step is defined as the minimum of these values.

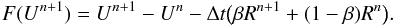

3.2.2. Implicit strategy

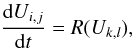

After the spatial discretisation method described in the previous section, one obtains a system of coupled ODEs (see Appendix B):  (34)

(34)

where Uk,l is a vector gathering all the quantities appearing below time derivatives (i.e. ρ, ρe, ρur, and ρuθ, multiplied by the appropriate volumes), and R(Uk,l) contains all fluxes and sources s:  (35)To obtain an update formula we now apply an implicit temporal method to integrate (Eq. (34)) in time:

(35)To obtain an update formula we now apply an implicit temporal method to integrate (Eq. (34)) in time:  (36)

(36)

where Rn (resp. Rn + 1) is the r.h.s. of equation (34) evaluated at time n (resp. n + 1). The first-order accurate Backward Euler method corresponds to β = 1. The second-order accurate Crank-Nicholson corresponds to β = 1/2. Both the Backward Euler and the Crank-Nicholson methods are A-stable, which implies that they are unconditionally stable1. For stiff equations, a more relevant property is the L-stability of the scheme (see e.g. LeVeque 2007). The Backward Euler method is L-stable but the Crank-Nicholson method is not. This implies that the latter can have difficulties with stiff problems and produce spurious oscillations. Indeed, when computing stellar models with high values of the radiative CFL (see the A-type star model in Sect. 5.1), we have found that it is more stable to use a value of β that is slightly higher than 1/2, for instance β = 0.55. In the future, we plan to implement the second-order backward differentiation formula, which is a second-order implicit multistep method having the A- & L-stability property.

Equation (36) describes a system of non-linear equations that defines the solution at the new time step, Un + 1. We use ρ, e, ur, and ut as independent variables, so the quantities that appear in the time derivatives in our equations are thus composite quantities of the primitive variables.

3.2.3. Newton-Raphson procedure

To solve for the new time step ρn + 1, en + 1,  ,

,  , one has to solve the set of non-linear equations F(Un + 1) = 0, where the residual F is defined as

, one has to solve the set of non-linear equations F(Un + 1) = 0, where the residual F is defined as  (37)

(37)

Here, we proceed with a Newton-Raphson procedure. The procedure to find Un + 1 is initialised by taking the current solution as an initial guess for the new solution:  (38)

(38)

At each Newton-Raphson iteration we solve for the corrections δU(k):  (39)

(39)

where J is the Jacobian matrix of the residual vector F. The current iteration is then updated with  (40)

(40)

where 0 < λ ≤ 1 is introduced to prevent divergence of the procedure. The classical Newton-Raphson update corresponds to λ = 1. A straightforward line-searching algorithm is applied to find the value of λ that ensures a decrease in the residual. However, we found that when solving the non-linear radiative diffusion term at very high radiative CFL numbers (~106), the first Newton-Raphson iteration always increases the residual. Different tests have shown that this seems unavoidable, and since the system would eventually converge within a few iterations, we always enforce λ = 1 during the first iteration in this particular case.

We stop the iteration procedure when the relative correction drops below a certain level:  (41)

(41)

where U0 are typical values of the variables. For the density and internal energy we simply choose the corresponding values at iteration k, but for the velocities we choose max(u,cs), where u is the value of the velocity at iteration k, and cs is the sound speed. In our computations we set ϵ = 10-6.

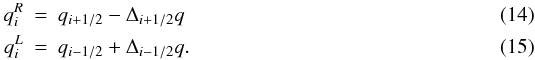

3.2.4. Jacobian matrix computation

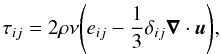

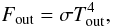

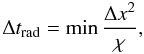

The Jacobian matrix elements are the partial derivatives of the numerical scheme Eqs. (37) with respect to all numerical variables. We compute the partial derivatives by finite differencing. The location of non-zero elements of the Jacobian depends exactly on the stencil of the numerical scheme (i.e. which variables contribute to a given equation). It is therefore easy to determine the non-zero structure (also known as the sparsity pattern) of the Jacobian matrix. Any Jacobian computation technique should then take advantage of the non-zero pattern to compute the Jacobian matrix elements. We use here the Coloured FiniteDifferencing technique (CFD hereafter, see Curtis et al. 1974; Gebremedhin et al. 2005), which minimises the number of function evaluation needed to compute the Jacobian matrix. The CFD algorithm uses the non-zero pattern of the Jacobian to group independent columns (i.e. variables) of the Jacobian matrix, i.e. those that do not share a non-zero element on the same line, into “colours”. This yields a “column compressed” representation of the Jacobian. The number of colours ng, which is also the number of columns of the compressed Jacobian matrix, is roughly equal to the maximum number of non-zero elements found in a row. This number depends only on the stencil of the scheme and is (ideally) independent of the dimension of the Jacobian matrix. To compute the matrix elements, the CFD algorithm perturbs all variables associated with the same colour and recomputes the non-linear equations. The matrix elements are then obtained by a straightforward finite difference against a reference value of the equations. The number of non-linear function evaluations is therefore equal to N(ng + 1), where N is the size of the matrix. As ng is independent from N, the CFD algorithm is expected to scale as N.

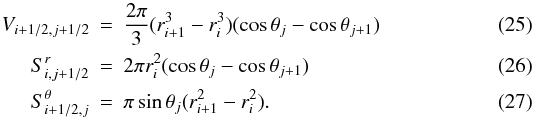

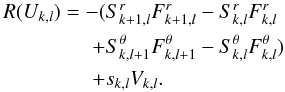

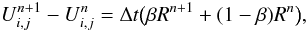

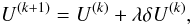

|

Fig. 2 Time spent in computing the Jacobian matrix (crosses) and in solving one linear system with MUMPS (dots) for different matrix sizes on a Pentium III Xeon quad-core CPU at 3 GHz. |

In our code, we use the CFD algorithm that is implemented in the Trilinos library (see Heroux et al. 2003). The graph-colouring algorithm is the “greedy” algorithm proposed in Curtis et al. (1974). We find that for our matrices this algorithm typically produces a number of colour nG = 51 ± 1 for matrix sizes ranging from 2304 (202 domain) to 652 864 (4002 domain). Therefore, the number of colours is roughly independent of the matrix size, and the algorithm scales as N. This is illustrated in Fig. 2, where we plot the time needed to compute the Jacobian matrix for different spatial resolutions.

3.2.5. Linear solver

The Newton-Raphson procedure requires the solution of the linear Eq. (39). Equation (39) is a sparse linear problem that can be solved by either a direct method (i.e. LU decomposition) or an iterative method. Iterative solvers (e.g. GMRES, BiCGStab) perform usually faster than direct methods but they rely heavily on preconditioning to improve (or even obtain) convergence. On the other hand, direct solvers are more accurate and robust but they become very expensive as the size of the linear system increases. For convenience, and since our 2D approach allows it, we decided to use a direct solver for developing the code. We use in our code the MUMPS solver (MUltifrontal Massively Parallel Solver, see Amestoy et al. 2001, 2006). MUMPS is able to handle general non-symmetric matrices; i.e., it is not restricted to tridiagonal or pentadiagonal matrices. MUMPS achieve robustness by detecting null pivots during the elimination phase and accuracy by applying a few (usually 2 or 3) steps of iterative refinement in the postprocessing phase.

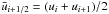

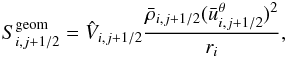

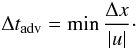

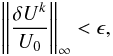

|

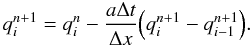

Fig. 3 Accoustic modes in the (z,ω) plane for Δt = 0.04 (left) and Δt = 0.5 (right). |

We use the advantage of knowing the non-zero structure of the Jacobian matrix to perform the symbolic factorisation of the linear system only one time, at the beginning of a simulation. Later on, only the numerical factorisation is performed with a given r.h.s. vector to obtain the solution of the linear system. The cost of the direct solver is illustrated in Fig. 2 where the time needed to solve one linear system is shown for various spatial resolutions. The linear solver dominates the time needed to compute the Jacobian matrix. With a simple linear regression in the log-log space, we find that in our case (i.e. for the Jacobian matrices that result from our temporal and spatial discretisation) the time needed to solve one linear system scales as N1.38. For 2D runs with resolution up to 5002, the number of variables is under one million. Linear systems are in this case still tractable with direct methods. The memory requirement for the LU decomposition ranges from 10 Mb for 2304 degrees of freedom to 9.4 Gb for 652 864 degrees of freedom. It is clear that larger systems (e.g. 3D problems) will require too much memory and computational time to be tractable. Therefore, we plan in the future to implement iterative solvers that are less expensive both in terms of memory and CPU time.

3.2.6. Time step control

In an implicit method, one has to introduce a time step control strategy, usually based on empirical grounds. We found that the following three strategies are useful, in the order of the most to the least basic:

-

Convergence-based:

If the Newton-Raphson procedure converges within five iterations, increase the time step by a factor of 1.5. If NR converges in more than ten iterations, decrease the time step by a factor of 2.

-

Relative change-based:

Compute relative change in the solution between time step n and n + 1. If it is lower than 10%, increase the time step by a factor of 1.5. If it is larger than 20%, decrease time step by a factor of 2.

-

Advection-based:

Set the time step so that CFLadv = 1.

The last strategy is physically motivated since it ensures that the fluid does not move across more than one cell width during one time step. For the preliminary results presented in this paper, we have used the first, least stringent, and least physical, strategy. In the future we plan to compare these three strategies, both in terms of accuracy and computational time. Finally, in some cases, we also provide maximum/minimum values for the CFL number or the time step.

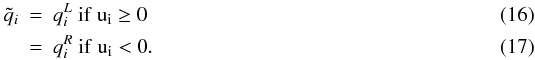

4. Test: oscillations of an entropy bubble

Run parameters for the entropy bubble test.

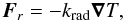

Basic tests (see Appendix A) have been successfully done for code validation during its first stages of development, so we focus here on a test that is more relevant to the physical processes we would like to investigate. It was taken from Dintrans & Brandenburg (2004, DB hereafter). We considered an isothermal stratified atmosphere with constant gravity perturbed by an entropy bubble. We computed the oscillations of this bubble around its equilibrium position, and we analysed the spectrum of internal gravity and sound waves driven by the bubble. The initial setup, parameters, and units normalisation are similar to DB and are therefore not reproduced here. The Brunt-Väisälä frequency of the atmosphere is N ~ 0.82. For this test, Eqs. (1)–(3) were solved with the viscous terms included. The kinematic viscosity coefficient ν was set to 5 × 10-4 in all runs. The thermal conductivity is set to zero, as we were interested in the adiabatic evolution of the system. We used a Cartesian version of the code2 and considered a domain (x,z) ∈ [− 0.5,0.5] × [0,1] . Periodic boundary conditions were used in the horizontal directions and non-penetrative, stress-free conditions were used at the bottom and top boundaries. All the run parameters are summarised in Table 1. For each run we set the time step to a constant value.

As in DB, we describe each wave mode by two integers l,n ≥ 0, which are the number of nodes in the horizontal and vertical directions, respectively. In this test we looked at two types of waves:

-

1.

vertical sound waves that have frequenciesω = (n + 1)π. These waves have l = 0 since they have no horizontal structure;

-

2.

internal gravity waves that have frequencies ω < N and are characterised by l ≠ 0 (internal gravity modes cannot propagate in the vertical direction).

See DB for the derivation of these properties. These two kinds of waves occupy well-separated regions of the frequency spectrum. Vertical sound waves have vertical periods P ≤ 2 and will therefore be more sensitive to the time step. Internal gravity modes have longer periods P ≳ 7.3 and will therefore be less sensitive to the time step. This test thus provides a good analysis of the influence of the time step on the accuracy of the numerical results.

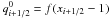

We first analysed the vertical sound wave spectrum by using method 2 described in DB, for runs 1 & 2 (cf. Table 1). In this method, the wave spectrum is represented in the (z,ω) plane. For any given time step Δt, the Nyquist theorem states that no periodic signal with frequency higher than ωN = π/Δt can be resolved. Also the temporal Fourier transform has a frequency resolution that is Δω = 2π/T, where T is the duration of the simulation. The results are shown in Fig. 3 for two different time steps. For Δt = 0.04, we recognise the signature of the n = 0,1,2 modes. The n = 3 mode is below the range of the isocontour levels. Furthermore, all the modes are located at the correct frequencies (within Δω ≃ 0.125). For Δt = 0.5, one has ωN = 2π thus normally allowing only for modes n = 0 and n = 1. In the map, one can recognise the signature of the n = 0,1,2 modes. None of them is located at the correct frequency, and it is surprising to find the n = 2 mode whose eigenfrequency is above the Nyquist frequency. In this case it is clear that the time step is too large to accurately resolve the sound waves. To analyse the gravity modes, we used the method developed in DB: we projected the velocity field on the anelastic eigenvectors. With this method we obtained the individual mode amplitudes cln which are the coefficients in the eigenvector expansion. With such a decomposition it is straightforward to obtain the mode frequency. We checked that, for both runs, the gravity mode frequencies match the analytical predictions to the percent level.

Stellar parameters of the models considered in this paper.

This test highlights the importance of the choice of the time step to correctly describe the physical process of interest. In the present case, sound-wave amplitudes are much lower than the amplitudes of the gravity modes (the initial setup is almost in hydrostatic equilibrium), and therefore they do not play an important role in this problem. One can then safely use a higher time step (e.g. Δt = 0.5) to study the internal gravity modes, as shown by the excellent agreement with predicted frequencies. Obviously, one could not do that if the initial setup was intended to be far from hydrostatic equilibrium, since sound waves would at least be as important as internal gravity modes.

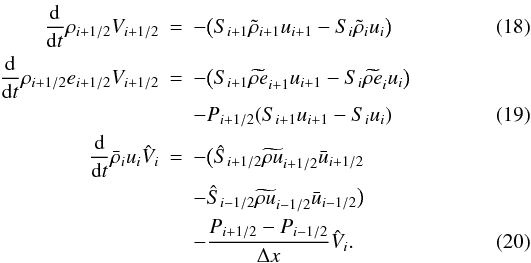

5. Stellar models

We now test our code on realistic stellar conditions.

5.1. Local simulation: A-type star

Numerical parameters for the A-star run.

We present in this section results of convection in A-type stars (see model I in Table 2), since comparison with previous models of such stars, calculated by different groups using explicit codes (see Sofia & Chan 1984; Freytag et al. 1996), are possible. These types of stars are weakly convective and possess two convectively unstable zone near their surface: around T ~ 40 000 K due to HeII ionisation and near T ~ 10 000 K due to HeI/H ionisation. Usually the HeI/H surface convective zone is strong, but very narrow, whereas the HeII convective zone is broader but much weaker. To model these convective regions it is therefore sufficient to focus on the surface layers of the star by performing local simulations.

Our initial model starts at the photosphere, i.e. the location where τ = 2/3 and Teff = 8500 K. This particular choice inhibits the convection in the HeI/H ionisation zone, which is located at the photosphere, so we do not expect to model this convective region correctly. A better modelling of this region should include a part of the stellar atmosphere in the computational domain, as is done for instance by Freytag et al. (1996). Our initial models are produced with a 1D stellar structure code (see Baraffe & El Eid 1991) where the mixing length theory (MLT hereafter) prescription for convection has been turned off. The resulting fully radiative models are very close to the MLT models (even near the surface) since convection is too weak, in terms of energy transport, to significantly modify the structure. The model presented here extends down to a depth where the temperature is T = 100 000 K, where the star is radiatively stable, so that the numerical domain includes a stable, radiative, buffer zone at the bottom of the domain. Since our simulation focusses on a very small fraction of the star, the geometry is Cartesian-like (but we use spherical geometry). In terms of physical dimensions, the physical domain has an average width of 90 000 km and a height of 37 500 km.

To construct the initial model for the multi-dimensional code, we simply copy the 1D structure along the tangential direction. However, the number of tangential grid points has to be carefully chosen in order to keep an acceptable aspect ratio of the cells. The number of grid points in the tangential direction therefore depends on the number of grid points in the radial direction through the radial extend of the cells.

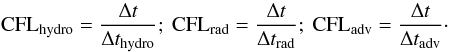

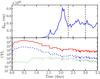

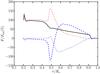

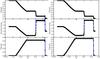

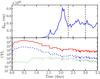

We discuss below the results of our run. Table 3 summarises important parameters. τKH is the thermal time scale defined as the ratio between the total internal energy in the computational domain divided by the luminosity of the model. The last column of Table 3 shows the memory requirement for the LU decomposition. Calculations were done on an AMD “Istanbul” processor (6 cores at 2.7 Ghz). Figure 4 shows the evolution as a function of time of the total kinetic energy in the domain (used to track the development and saturation of the convective instability) and the evolution of the different CFL numbers defined in Sect. 3.2.1. At the beginning, the model undergoes a relaxation toward hydrostatic equilibrium, and the CFL number rapidly reaches values >105. Eventually, the velocity field that develops as a result of the relaxation process triggers the convective instability, which corresponds to an increase in the kinetic energy to a significant level. As the first vortices develop, the time step decreases since the Newton-Raphson procedure would not converge otherwise. After ~2.3 days of simulated time, we reach a quasi-steady state that lasts until the end of the simulation at t ~ 3.5 days. In this phase, the time step remains roughly constant with Δt = 18 ± 2.6 s, corresponding to CFLhydro = 9.8 ± 1.4 and CFLrad = 400 ± 60 (the second value indicated correspond to the standard mean deviation). In this quasi steady state, convection is fully developed, and we observe a complex vortex dynamics involving vortex pairing/merging and the formation of downdrafts. These phenomena are characteristic of turbulent convection (see snapshot in Fig. 5).

|

Fig. 4 Evolution of the total kinetic energy (top panel) and CFL numbers (lower panel, solid: radiative; dashed: hydro; dotted: advection) for the A-type star run. The vertical dashed lines show the time interval on which the averaged fluxes shown in Fig. 7 are computed. |

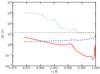

Figure 6 shows the radial profiles of the different CFL time steps defined in Sect. 3.2.1. The CFL time steps are first computed in each cell of the domain, and the radial profiles shown in Fig. 6 are then deduced by taking the minimum value along the tangential direction for each radius. We first note that the radiative CFL time step provides the most stringent constraint. Solving the radiative diffusion with an explicit method would require a time step lower than Δt = 3 × 10-2 s and would make any calculation cumbersome. The hydro CFL time step increases with radius, so that the hydro limitation on the time step would come from the bottom of the numerical domain. This is expected since we are using a uniform grid, and at the bottom of the envelope, the temperature, and hence the velocity of the sound waves, are the largest. The advective time scale shows the region where the flow velocities are the largest, i.e. near the surface. The time step of the actual simulation clearly corresponds to the lowest value of the advective time scale. If the time step was much longer than the advective time scale, the solution would change significantly since eddies would be moving across more than one grid cell. In this case, however, the Newton-Raphson procedure would have convergence problems since the initial guess (the solution at time step n) would strongly depart from the correct solution. Since our time step strategy is based on limiting the number of iterations, the time step would be automatically decreased. Therefore, we do not expect our current implicit method to work with much larger time steps, and we identify the minimum value of the advective time scale as a natural (physical) limit for the time step.

|

Fig. 5 Snapshot of our simulation of an A-type star. The axes are Cartesian coordinates normalised by the stellar radius and with the origin at the star centre. |

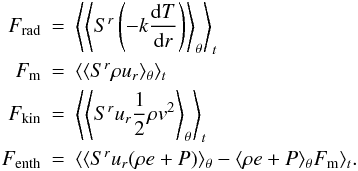

We now analyse the properties of the fully developed convective state. We computed the average of the radiative, mass, kinetic, and enthalpy fluxes:

These quantities have the dimension of a flux multiplied by a surface (i.e. a luminosity), in order to take geometrical effects introduced by the spherical coordinates into account. We however use the term “flux” in the following to make the discussion clearer. The total flux is defined as the sum of the enthalpy, radiative, and kinetic energy fluxes.

These quantities have the dimension of a flux multiplied by a surface (i.e. a luminosity), in order to take geometrical effects introduced by the spherical coordinates into account. We however use the term “flux” in the following to make the discussion clearer. The total flux is defined as the sum of the enthalpy, radiative, and kinetic energy fluxes.

Figure 7 shows the radial profile of the averaged fluxes defined above. The averaging was done on the interval 2.3–3 days (represents 1408 time steps) but we checked that, by taking smaller windows (but still large enough to have several hundreds of time step), we would obtain similar results. Figure 7 shows the typical profile of the enthalpy flux in the HeII convective zone, as expected from general properties of convection: an inward/outward directed flux, with an outward part stronger than the inward flux. Also the kinetic energy flux is typical of what is expected from convection: downward, as a result of the acceleration of the downdrafts by gravity. The outward convective flux reaches a maximum amplitude of about 0.68% of the stellar flux. Depending on the time interval on which the averaging was done, this maximum value ranges between 0.6% and 0.77% of the stellar flux. This corresponds to the prediction of MLT for an α parameter in the range 1.35−1.4. As discussed above, we do not expect to have realistic results for the H/HeI top convective zone since our outer boundary condition at the photosphere inhibits convection in this region. However, despite the lack of resolution (only a few grid points resolve the ionisation region), we still find a non zero average convective flux with an amplitude of ~10-4F⋆. For the aforementioned value of α, tuned to match the HeII zone, the MLT predicts a convective flux in the HeI/H region, which has a location and an amplitude consistent with our results.

We now compare our results with the work of Sofia & Chan (1984) and Freytag et al. (1996), who have performed numerical models of a A-type star with the same parameters as ours (model A5 of Sofia & Chan paper, model AT85g41N3 of Freytag et al. paper). Sofia & Chen also considered models extending to the photosphere, but they did not find the small convective flux that appears near the surface in our simulation, most certainly because of the lack of resolution in their computations (their resolution is twice lower than our). Since only Freytag et al. (1996) resolve the HeI/H region properly, we restrict the comparison to the HeII convective zone. For this region, Sofia & Chan (1984) found a convective flux between 0.15 and 0.2% of the stellar flux (depending on the aspect ratio of their model) and Freytag et al. (1996) found a convective flux about 0.12% of the stellar flux. This is significantly lower than the amplitude deduced from our simulations. This difference likely results from different input physics (metallicity, EOS, opacities).

|

Fig. 6 Radial profiles of the CFL time steps for the A-type star run (see text). From bottom to top: radiation (solid red), hydro (dash blue), and advection (dot green). The horizontal dash-dotted line indicates the time step of the snapshot under consideration. |

|

Fig. 7 Radial profiles of averaged fluxes for the A-type star run (dashed line: enthalpy flux, dotted line: kinetic energy flux). The solid line is the convective flux as predicted by the mixing length theory with a mixing length parameter α = 1.375. The box in the upper left corner is a zoom of the surface layer of the star. |

|

Fig. 8 Properties of the sampled grid for the giant model. Left panel: pressure scale height to radial cell size ratio. Right panel: cell aspect ratio when the radial mesh is extended in the tangential direction. |

Our results on A-type stars show that we could successfully describe 2D convective patterns in realistic stellar conditions with our fully implicit scheme. Thanks to this approach, the time step in our computation is not limited by sound waves or by radiative diffusion. However, we have shown that the time step is limited by the velocity of the fluid itself, which determines the rate of change in the solution. The hydro CFL cannot be much larger than 10 when convective motions are fully developed. On the other hand, the radiative CFL number is very high (~400). This is expected for the local models of “hot” stars that have very short radiative time scales in the low-density, photospheric region. The rather low value of the hydro CFL number and the high value of the radiative CFL number suggest that, for this type of local model, a mixed approach (explicit hydrodynamic + implicit radiation) could allow substantial speedup to be reached as compared to a fully explicit/implicit approach. However, our goal with the present simulation of A-type stars is not to compete with previous simulations performed with explicit codes, which are doing an excellent job for this type of star with very tiny atmospheric and subphotospheric convective zones. Also a realistic description of convection requires a 3D approach. The present study is meant to test the behaviour and the reliability of the implicit code to model convection under realistic conditions that have been already studied. Nevertheless, we stress that a fully implicit scheme remains very efficient at computing the initial development of convective instability, since the time step can grow to high values as long as velocities remain low.

5.2. Global simulation: cold giant star

The next step is to compute models involving a significant fraction of the star. We consider here a model with parameters corresponding to a cold giant star (model II in Table 2).

|

Fig. 9 Properties of the uniform grid for the giant model with Nr = 217. Left panel: pressure scale height to radial cell size ratio. Right panel: cell aspect ratio when the radial mesh is extended in the tangential direction. |

5.2.1. Initial model and grid design

For this model, MLT predicts that about 50% of the stellar envelope is convective. The initial model for the multi-dimensional simulations is based on a 1D MLT model. Starting from a radiative model, as previously done for the A-type star, yields difficulties since a radiative structure departs strongly from the convective solution. The MLT model might also depart from the real convective state, but one can expect this difference to be less pronounced than with a fully radiative model. We model a significant fraction of the star (80%) in order to have a large radiative zone below the convective zone. Our numerical domain therefore extends radially from 0.2 R⋆ to R⋆, where R⋆ is the radius of the star as defined by the location of the photosphere. This yields a pressure contrast of roughly six orders of magnitude (~14 pressure scale heights) and a density contrast of almost five orders of magnitude throughout the whole domain. Throughout the convective zone the pressure contrast is ~400 (~6 pressure scale heights) and the density contrast is ~50. Previous works that also consider an extended radial domain are the “star-in-a-box” simulations of Freytag et al. (2002), Woodward et al. (2003), and Dobler et al. (2006), where the full star is embedded in a Cartesian domain. Dobler et al. (2006) consider a very simplified stellar model, with a very low-density stratification (a factor of ~10). The two other works consider more realistic stellar models of super giant and AGB stars and obtain convection over multiple pressure scale heights. We also mention Brun & Palacios (2009), which presents a numerical simulation of the inner ~50% of a red giant star with the anelastic code ASH. Their density stratification in their convective zone (which occupies the whole numerical domain) is about two orders of magnitude.

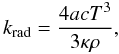

Our initial model extends up to the photosphere. The photospheric region is characterised by the ionisation regions of HeII and HeI/H, with “bumps” in the opacity profile. In addition, the surface region is characterised by the lowest values of the pressure scale height within the whole stellar structure. A non-uniform radial mesh would be necessary to solve the different scales present in the star. Our 1D stellar structure code uses such an adapted radial grid, which ensures a very good resolution of all features throughout the star. This is illustrated in the left panel of Fig. 8, where we have sampled the initial 1D model to create a radial mesh with a suitable number of grid points (200 in this case).

However, when considering multi-dimensional calculations, this grid presents a flaw: extension in the tangential direction yields intractable aspect ratio of the cells. This is illustrated in the right panel of Fig. 8, which shows the radial profile of the cell aspect ratio when the former radial mesh is simply extended in the tangential direction by putting 256 cells over a tangential angular width of π/2. Inspection of Fig. 8 shows that a constant number of cells per scale height translates into a large variation of the cell aspect ratio. In the particularly demanding region near the photosphere the aspect ratio drops below 10-2, which is unacceptable for performing numerical simulations.

It is therefore obvious that to perform multi-dimensional simulations from the deep stellar interior to the surface, one has to make a compromise on the resolution when using a single grid. A solution to this problem would be to use a system of nested grids where the grids are refined in both the radial and tangential directions as moving near the surface of the star. Here, we choose to consider a computational grid with an uniform grid spacing in both Δr and Δθ and apply a special treatment for the surface layers (see below). Due to spherical coordinates, the aspect ratio of the cells decreases with the radius. The radial step is chosen so that the cells in the middle of the domain are square. The right panel of Fig. 9 shows that our cells have an aspect ratio of 3 at the bottom of our domain and 0.6 at the top of the domain. As a result, the surface of the star is not resolved well, as shown by the number of grid points per pressure scale height (see left panel of Fig. 9).

5.2.2. Simulation setup

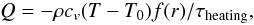

Since we cannot accurately describe the surface of the star, we choose to apply a heating function that drives an isothermal region in the outer, badly resolved, region of the star. To do so, we include in our energy equation a heating source term on the right-hand side:  (42)

(42)

where f(r) is a spatially varying function that is equal to 1 above a given radius rc and zero elsewhere, with a smooth transition in between, τheating is the typical cooling time scale and T0 is the forcing temperature. This method has been used for the “star-in-box” simulation presented in Dobler et al. (2006, see also references therein). The parameter T0 is chosen to be the temperature of the gas at rc in the initial model of the star. For the model presented in this section, rc = 0.95R ⋆ , T0 = 32 750 K, and τheating = 104 s. For comparison, the value of the radiative time scale (as defined in Eq. (31)) decreases exponentially from ~ 3.5 × 105 s to ~ 3.5 × 103 s in this region so our value of τheating insures that the source term acts on a short enough time scale to keep the outer region in a roughly isothermal state.

For the value of T0 used in our model, we do not have the HeI/H ionisation region near the surface. Also we prevent any convection motion in this region since the isothermal structure is stably stratified. There is essentially no radiative flux throughout this region, because the energy entering this region is evacuated by the source term instead.

This treatment of the surface layers is very basic, and is only used as a preliminary solution to produce our first results. We are currently investigating other more physically based methods to treat the surface layers.

5.2.3. Results

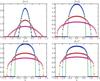

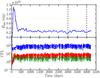

We now present the results of the run (summarised in Table 4). Figure 10 shows the evolution of the kinetic energy and CFL numbers. As in the previous section, we see that, during the initial phase of relaxation/development of convection, we reach high CFL numbers, with the hydro CFL number reaching a maximum value around 103. As the convective instability develops, we see a quick and strong rise in the kinetic energy in the domain, followed by a slower decrease as the model relaxes toward equilibrium. After 500 days, the kinetic energy tends toward a constant value, indicating that a quasi steady-state is reached. The time step remains roughly constant with a mean value of Δt = 2.5 × 104 ± 5 × 103 s, corresponding to CFLhydro = 100 ± 20, and CFLrad = 7.6 ± 1.5. The second value indicated corresponds to the standard mean deviation. Therefore in this kind of model, we see that the radiative time scale is much lower than the hydro time scale. Figure 11 shows a snapshot of the convective motions in the star.

|

Fig. 10 Evolution of the total kinetic energy (top panel) and CFL numbers for the giant star run (lower panel, solid red: radiative; dashed blue: hydro; dotted green: advection). The vertical dashed lines show the time interval on which the averaged fluxes shown in Fig. 12 were computed. |

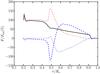

We computed the averaged fluxes defined in Sect. 5.1 between t = 3000 days and t = 4000 days (corresponding to 3452 time steps). The resulting radial profiles are shown in Fig. 12. We see that thermal equilibrium has not been reached yet, since the total flux throughout the envelope is not constant. We see a significant upward enthalpy flux in the ~40% outer radius of the star. Also, we see a very important downward kinetic energy flux in the convective zone. Around r ~ 0.5 R ⋆ , we observe strong bumps both in the radiative and in the enthalpy flux. This region corresponds to the interface between the convective zone and the radiative zone. When downdrafts cross the interface, the low efficiency of radiation prevent them from warming up, and as a result, they are subject to a very strong braking by buoyancy forces, which prevent them to significantly overshoot in the radiative zone. This is why the interface region is strongly perturbed whereas the inner radiative zone remains unperturbed. Finally, we checked the range of Mach numbers in the domain. The average Mach number of the flow extends from ~10-3 at the bottom of the envelope to ~0.15 at the top of the convective zone. However, Mach numbers in the range 0.30−0.40 can be reached locally, in the downdrafts. We stress that such a range of Mach number cannot be addressed by anelastic codes, which are restricted to low Mach number flows (Ms ≲ 10-2 − 10-1).

|

Fig. 11 Snapshot of the cold giant star simulation. The axis are Cartesian coordinates normalised by the stellar radius and with the origin at the star centre. |

Figure 13 shows the radial profiles of the different CFL time steps (as in Fig. 6). As already mentioned above, we see that the radiative CFL time step is much longer than the hydro time step; however, the strong decrease in the radiative time scale in the isothermal surface layer comes from the decrease in density in this region. We can expect that, if the surface regions were better resolved, the radiative time scale would drop to a lower value than the hydro time step, and we would recover locally a situation similar to the A-type star model. Here again the shortest hydro CFL time step is found at the bottom of the envelope, therefore we reach a higher hydro CFL number than for the A-type star model because our model extends deeper in the star. Finally, as discussed in the previous section, we see that the actual time step is roughly equal to the lowest value of the advective time scale, which is located in the convective zone. In this case, since the envelope extends deeper, our implicit scheme allows for reaching high values of the hydro CFL number by removing the limitation coming from the deeper layers of the star.

The results presented in this section show that it is meaningful to adopt a fully implicit approach for computing models of deep convective zones. Indeed, we show here an example of a situation where substantial CFL numbers could be reached by overcoming the time step limitation imposed by deep layers of the stellar envelope. This is possible since the flow velocity is low in those regions. In the surface convective region, however, we are still prone to the time step limitation imposed by the flow movement across grid cells.

Finally, the computation of such deep stellar envelopes also poses the problem of how to achieve the thermal relaxation of the convective envelope on an acceptable time scale. Indeed, the thermal time scale of our numerical domain is  (43)

(43)

whereas the simulation only ran for 4235 days ~11.6 years of stellar time. This is, however, an unavoidable difficulty that is common to all numerical approaches.

6. Conclusion

We have presented a new numerical code devoted to stellar interiors. The code solves the full set of the compressible Euler equations and radiative diffusion with a spatially and temporally accurate implicit scheme.

As a first step, we computed stellar convection following two approaches: a local approach for a relatively hot star (see Sect. 5.1) and a global approach for a cold giant star (see Sect. 5.2). In the first case, we were able to simulate the convective motions in the outer layers of a A-type star. For this type of model, we achieve a mean CFL number of 10 for hydro and of 400 for radiation. The rather low value of the hydro CFL number suggests that, in this particular case, a mixed approach (explicit hydro + implicit radiation) would result in a substantial speeding up of the calculation compared to a fully explicit or implicit approach. In the second case, we modelled the turbulent convective motions that transport energy in the bulk of a cold giant star. Our domain includes both the outer convective region and the bottom, stable, radiative region. For this model, we could reach a mean CFL number of 100 for hydro and of 7 for radiation. Such values of the hydro CFL numbers are encouraging and suggest that we might gain computational time thanks to our implicit approach.

The aim of this paper is to show the feasibility of using a fully implicit method for the accurate computation of time-dependent flows. We stress that our goal is, however, not to demonstrate that the code is successful in the sense of efficient production. Implicit methods are computationally much more expensive than explicit methods because they need to solve a set of non-linear equations. The efficiency of an implicit code is essentially set by the efficiency of the method used to solve this difficult task. The general framework for solving the non-linear system is the Newton-Raphson method. For tests and development purposes, we chose here a very simple approach where the Jacobian matrix is computed at each iteration, and where the linear system is solved exactly inside the Newton-Raphson loop. This results in a robust, but expensive non linear solver. A more efficient class of non linear solvers include inexact Newton-Krylov methods, which are Newton-Raphson methods where an iterative method is used to solve the linear system, see e.g. see (Knoll & Keyes 2004, for a review). These methods are Jacobian free methods and gain computational time by using an iterative method to approximately solve the linear system during the Newton-Raphson loop (hence the name “inexact”). These methods are very popular in computational physics and we plan to implement and test them in a future version of the code. Once the most efficient non-linear solvers currently available are implemented, we will be able to benchmark our code against explicit ones.

|

Fig. 12 Radial profiles of averaged fluxes for the giant star run (dashed-dotted line: radiative flux; dashed line: enthalpy flux; dotted line: kinetic energy flux; solid line: total flux). |

|

Fig. 13 Radial profiles of the CFL time steps for the giant star run (see text). From bottom to top: hydro (dashed blue), advection (dotted green), and radiation (solid red). The horizontal dash-dotted line indicates the time step of the snapshot under consideration. |

Concerning our numerical method, we found that the maximum time step that we can use without being faced with convergence issues in the Newton-Raphson procedure is closely related to the fluid velocity in the numerical domain. We introduced the advective time scale  (44)

(44)

and showed that for the computation of a time-dependent flow the time step cannot grow much above the minimum value of this time step. The definition of Eq. (44) should be adapted in the multi-dimensional case. In other words, our numerical method cannot handle fluid movements across several cells, which is intuitively and physically understandable. We stress that such a criterion is also suggested by accuracy concerns. This limitation on the time step stems from the fact that in order to advance the solution over one time step, one has to solve a system of non-linear equations with a Newton-Raphson procedure. For time step larger than the advective time scale, the fluid moves across several cells and the solution changes significantly between two time steps. This change is essentially the limiting factor for increasing the time step, since the Newton-Raphson procedure encounters problems when relative changes between two time steps are too large. We stress that one could improve this by finding a better initial guess for the Newton-Raphson procedure, instead of simply taking the solution of the last time step. In 1D implicit simulations, a time extrapolation from preceding time steps has been used successfully to produce an initial guess for the Newton procedure. However, in multi-dimensional flows, this procedure does not necessarily gives a satisfactory guess of the solution. Indeed our experience did not show any improvement by using such an extrapolated guess.

Our first developments show that using a fully implicit code to describe stellar interiors is realistic, providing a powerful tool for studying a full stellar structure characterised by low-to-moderate Mach numbers. Our test performed on the envelope of a giant star indeed highlights the possibility to extend the spatial domain to a large fraction of the star (80% in radius), where Mach numbers cover values from less than 1% to a few tens of percent. More generally, this implicit tool will be efficient for computing relaxation toward steady state solutions and very low Mach number flows, and to avoid the CFL constraint due to a particular location of the numerical domain. In the later case, it is possible to have a moderate Mach number flow in another region of the domain (this region would then be responsible for the limitation of the time step), as illustrated by the red giant case mentioned above.

Our first developments also stress the numerical difficulty of modelling a full star with a grid-based code. Since the typical length scales (e.g. pressure scale height) vary significantly between the deep interior of the star and the surface, it is not possible to resolve both the interior and the surface layers on a single grid. We plan to overcome this difficulty by implementing a system of nested grids refined in both the radial and tangential directions. Among other future developments of the code, we plan to extend it to 3D and to implement iterative solvers, rather than a direct method, involving parallelisation. Among our first astrophysical applications, we quote (i) the very early stages of evolution of low-mass stars, bridging the gap between the hydrodynamical phase of star formation and the quasi-static pre-main sequence evolutionary phase, (ii) the study of convection in pulsating stars, and (iii) the effect of fast rotation on convection (in low-mass stars, massive stars). But clearly, a wide range of astrophysical problems can be studied with such a tool. Based on our experience, we hope to achieve these future developments and the first astrophysical applications within a reasonable period. We also hope that our efforts demonstrate the feasibility of applying an implicit multi-dimensional hydrodynamical code to stellar interiors and will motivate the computational astrophysics community to develop such a tool.

Not all implicit methods are A-stable; e.g. the Adams-Moulton methods are implicit but only conditionally stable.

Cartesian coordinates are used in this particular case because of analytical solutions in this geometry.

Acknowledgments

M.V. acknowledges support from a Newton International Fellowship during part of this work. The numerical simulations were achieved thanks to the resources of the PSMN (“Pôle Scientifique de Modélisation Numérique”) at the ENS de Lyon. The authors are indebted to Cédric Mulet-Marquis and Emmanuel Lévêque for their significant contribution to an earlier version of the code. The authors are particularly thankful to Bernd Freytag and Ewald Mueller for many valuable and useful discussions which considerably helped the elaboration of this work. We also thank Sacha Brun for discussion and advice. Part of this work was funded by the European Research Council under the European Communitys 7th Framework Programme (FP7/2007-2013 Grant Agreement No. 247060) and by the French “Programme National de Physique Stellaire” (PNPS). Finally, the authors thank the anonymous referee for his/her useful comments that helped to improve the paper.

References

- Almgren, A. S., Bell, J. B., Rendleman, C. A., & Zingale, M. 2006, ApJ, 637, 922 [NASA ADS] [CrossRef] [Google Scholar]

- Amestoy, P. R., Duff, I. S., L’Excellent, J.-Y., & Koster, J. 2001, SIAM J. Matrix Anal. Applic., 23, 15 [Google Scholar]

- Amestoy, P. R., Guermouche, A., & Pralet, S. 2006, Parallel Computing, 32, 136 [Google Scholar]

- Arnett, D., Meakin, C., & Young, P. A. 2009, ApJ, 690, 1715 [CrossRef] [Google Scholar]

- Asplund, M., Ludwig, H., Nordlund, Å., & Stein, R. F. 2000, A&A, 359, 669 [NASA ADS] [Google Scholar]

- Aubert, O., Prantzos, N., & Baraffe, I. 1996, A&A, 312, 845 [NASA ADS] [Google Scholar]

- Baraffe, I., & El Eid, M. F. 1991, A&A, 245, 548 [NASA ADS] [Google Scholar]

- Baraffe, I., Chabrier, G., Allard, F., & Hauschildt, P. H. 2002, A&A, 382, 563 [NASA ADS] [CrossRef] [EDP Sciences] [Google Scholar]

- Bigot, L., Kervella, P., Thévenin, F., & Ségransan, D. 2006, A&A, 446, 635 [NASA ADS] [CrossRef] [EDP Sciences] [Google Scholar]

- Boris, J. P., Grinstein, F. F., Oran, E. S., & Kolbe, R. L. 1992, Fluid Dyn. Res., 10, 199 [NASA ADS] [CrossRef] [Google Scholar]

- Brun, A. S., & Palacios, A. 2009, ApJ, 702, 1078 [NASA ADS] [CrossRef] [Google Scholar]

- Brun, A. S., & Toomre, J. 2002, ApJ, 570, 865 [NASA ADS] [CrossRef] [EDP Sciences] [Google Scholar]

- Burrows, A., Dessart, L., Ott, C. D., & Livne, E. 2007, Phys. Rep., 442, 23 [NASA ADS] [CrossRef] [Google Scholar]

- Calhoun, D. A., Helzel, C., & LeVeque, R. J. 2008, SIAM Rev., 50, 723 [CrossRef] [Google Scholar]

- Colella, P. 1990, J. Comput. Phys., 87, 171 [NASA ADS] [CrossRef] [Google Scholar]

- Curtis, A. R., Powell, M. J. D., & Reid, J. K. 1974, IMA J. Appl. Math., 13, 117 [Google Scholar]

- Dearborn, D. S. P., Lattanzio, J. C., & Eggleton, P. P. 2006, ApJ, 639, 405 [NASA ADS] [CrossRef] [Google Scholar]

- Deupree, R. G. 1990, ApJ, 357, 175 [NASA ADS] [CrossRef] [Google Scholar]

- Dintrans, B., & Brandenburg, A. 2004, A&A, 421, 775 [NASA ADS] [CrossRef] [EDP Sciences] [Google Scholar]

- Dobler, W., Stix, M., & Brandenburg, A. 2006, ApJ, 638, 336 [NASA ADS] [CrossRef] [Google Scholar]

- Eggleton, P. P., Dearborn, D. S. P., & Lattanzio, J. C. 2008, ApJ, 677, 581 [NASA ADS] [CrossRef] [Google Scholar]

- Freytag, B., Ludwig, H., & Steffen, M. 1996, A&A, 313, 497 [NASA ADS] [Google Scholar]

- Freytag, B., Steffen, M., & Dorch, B. 2002, Astron. Nachr., 323, 213 [NASA ADS] [CrossRef] [EDP Sciences] [Google Scholar]

- Gebremedhin, A. H., Manne, F., & Pothen, A. 2005, SIAM Rev., 47, 629 [NASA ADS] [CrossRef] [MathSciNet] [Google Scholar]

- Glasner, S. A., & Livne, E. 1995, ApJ, 445, L149 [NASA ADS] [CrossRef] [Google Scholar]

- Glasner, S. A., Livne, E., & Truran, J. W. 1997, ApJ, 475, 754 [NASA ADS] [CrossRef] [Google Scholar]

- Guzik, J. A. 2011, Ap&SS, in press [arXiv:1005.5406] [Google Scholar]

- Heroux, M., Bartlett, R., Hoekstra, V. H. R., et al. 2003, An Overview of Trilinos, Tech. Rep. SAND2003-2927, Sandia National Laboratories [Google Scholar]

- Hirsch, C. 1990, Numerical Computation Of Internal & External Flows: Computational Methods for Inviscid and Viscous Flows (New York, NY, USA: John Wiley & Sons, Inc.) [Google Scholar]

- Hujeirat, A. 2005a, A&A, 430, 893 [NASA ADS] [CrossRef] [EDP Sciences] [Google Scholar]

- Hujeirat, A. 2005b, Comput. Phys. Commun., 168, 1 [NASA ADS] [CrossRef] [Google Scholar]

- Hujeirat, A. A., & Thielemann, F. 2009, MNRAS, 400, 903 [NASA ADS] [CrossRef] [Google Scholar]

- Iglesias, C. A., & Rogers, F. J. 1996, ApJ, 464, 943 [NASA ADS] [CrossRef] [Google Scholar]

- Keppens, R., Toth, G., Botchev, M., & van der Ploeg, A. 1999, Int. J. Num. Meth. Fluids, 30, 335 [Google Scholar]

- Knoll, D. A., & Keyes, D. E. 2004, J. Comp. Phys., 193, 357 [Google Scholar]

- LeVeque, R. J. 1997, J. Comp. Phys., 131, 327 [NASA ADS] [CrossRef] [Google Scholar]

- LeVeque, R. J. 2007, Finite Difference Methods for Ordinary and Partial Differential Equations: Steady-State and Time-Dependent Problems (SIAM) [Google Scholar]

- Livne, E. 1993, ApJ, 412, 634 [NASA ADS] [CrossRef] [Google Scholar]

- Livne, E. 1999, ApJ, 527, L97 [NASA ADS] [CrossRef] [PubMed] [Google Scholar]

- Livne, E., & Arnett, D. 1995, ApJ, 452, 62 [NASA ADS] [CrossRef] [Google Scholar]

- Livne, E., Burrows, A., Walder, R., Lichtenstadt, I., & Thompson, T. A. 2004, ApJ, 609, 277 [NASA ADS] [CrossRef] [Google Scholar]

- Meakin, C. A., & Arnett, D. 2007a, ApJ, 665, 690 [NASA ADS] [CrossRef] [Google Scholar]

- Meakin, C. A., & Arnett, D. 2007b, ApJ, 667, 448 [NASA ADS] [CrossRef] [Google Scholar]

- Mihalas, D., & Mihalas, B. W. 1999, Foundations of radiation hydrodynamics, ed. Dover [Google Scholar]