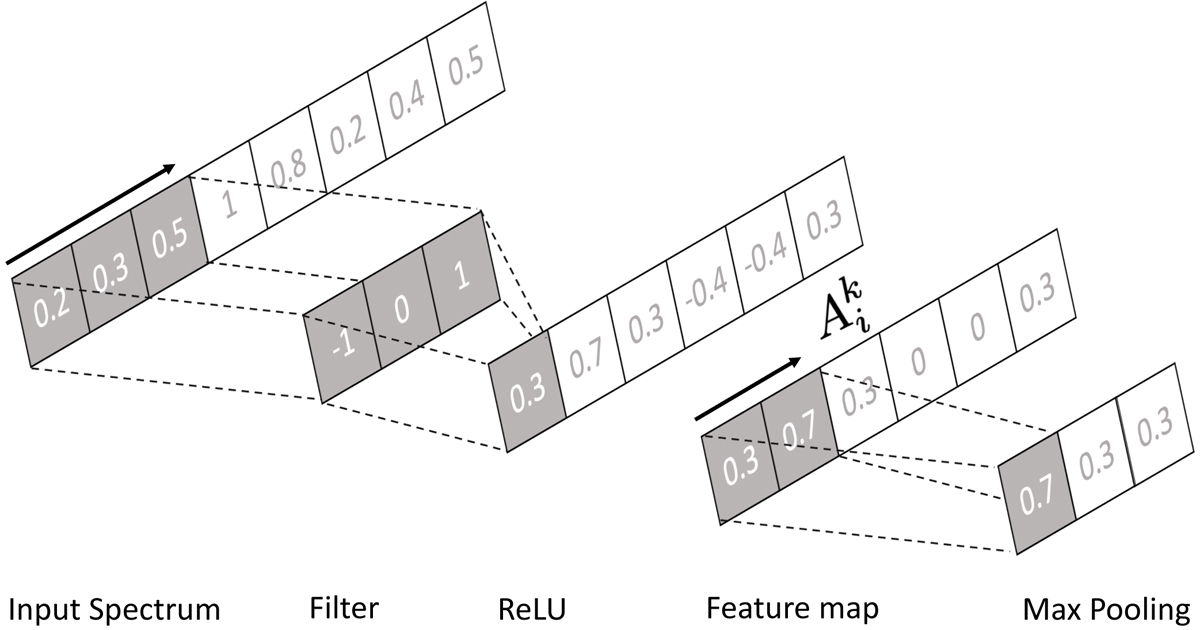

Fig. 4.

Schematic for the generation of a single feature map. A filter (kernel) with a receptive field of three pixels scans over the input in strides of one. At each position, the dot product between the input window and the kernel is taken to form a single output in the next layer. This process is referred to as a convolution. The resulting output is then passed through a ReLU activation function, which introduces a nonlinearity into the network by setting all negative values to zero. The feature map can then optionally be passed through a max pooling function, which scans across the map in strides of two and retains only the largest value in each window. The convolution process makes the network efficient by promoting sparse connections and weight sharing, while the pooling layer further reduces the number of parameters and promotes invariance of the network output to small shifts and rotations of the input.
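The three steps described in the caption can be traced on a toy input. The following is a minimal pure-Python sketch, not the network used in the article: the input values, the kernel weights, and the pooling window of two are illustrative assumptions (the caption only specifies a three-pixel kernel, a convolution stride of one, and a pooling stride of two).

```python
def conv1d(signal, kernel, stride=1):
    """Slide the kernel over the signal (no padding) and take the
    dot product at each position, as described in the caption."""
    k = len(kernel)
    return [
        sum(s * w for s, w in zip(signal[i:i + k], kernel))
        for i in range(0, len(signal) - k + 1, stride)
    ]


def relu(values):
    """Set all negative values to zero."""
    return [max(0, v) for v in values]


def max_pool1d(values, size=2, stride=2):
    """Keep only the largest value in each window.
    The window size of 2 is an assumption for this sketch."""
    return [
        max(values[i:i + size])
        for i in range(0, len(values) - size + 1, stride)
    ]


# Hypothetical input and kernel weights, chosen only for illustration.
signal = [2, 1, 0, 3, 5, 4, 1, 0]
kernel = [1, 0, -1]

conv_out = conv1d(signal, kernel)   # [2, -2, -5, -1, 4, 4]
feature_map = relu(conv_out)        # [2, 0, 0, 0, 4, 4]
pooled = max_pool1d(feature_map)    # [2, 0, 4]
```

The same three kernel weights are reused at every position (weight sharing), and each output depends on only three neighbouring inputs (sparse connections), which is why the eight-pixel input needs just three weights here.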
