| Issue |

A&A

Volume 575, March 2015

|

|

|---|---|---|

| Article Number | A86 | |

| Number of page(s) | 11 | |

| Section | Numerical methods and codes | |

| DOI | https://doi.org/10.1051/0004-6361/201424167 | |

| Published online | 02 March 2015 | |

Super-resolution method using sparse regularization for point-spread function recovery

Laboratoire AIM, UMR CEA-CNRS-Paris 7, Irfu, Service d’Astrophysique, CEA

Saclay,

91191

Gif-sur-Yvette Cedex,

France

e-mail:

This email address is being protected from spambots. You need JavaScript enabled to view it.

Received: 9 May 2014

Accepted: 3 September 2014

Abstract

In large-scale spatial surveys, such as the forthcoming ESA Euclid mission, images may be undersampled due to the optical sensors sizes. Therefore, one may consider using a super-resolution (SR) method to recover aliased frequencies, prior to further analysis. This is particularly relevant for point-source images, which provide direct measurements of the instrument point-spread function (PSF). We introduce SParse Recovery of InsTrumental rEsponse (SPRITE), which is an SR algorithm using a sparse analysis prior. We show that such a prior provides significant improvements over existing methods, especially on low signal-to-noise ratio PSFs.

Key words: techniques: image processing / methods: numerical

© ESO, 2015

1. Introduction

The weak gravitational lensing is one of the most promising tools to probe the dark matter distribution in the universe. The idea is to infer, from a billion images of galaxies, the shape distortions due to dark matter gravitational lensing and then estimate the dark matter mass density along different lines of sight. The Euclid mission (launch planned for 2020) provides the data for such a purpose (ESA/SRE 2011). Nevertheless, galaxy images are distorted due to the point-spread function (PSF). Therefore, it is critical to know this distortion accurately. It can be modeled in first approximation as a convolution of the desired image by the PSF of the telescope, which is typically space and time-varying. In practice, isolated stars provide PSF measurements at different locations in the field of view. Nevertheless, these stars images can be aliased as it is the case in Euclid, given the CCD sensor sizes. On the other hand, the surveys are generally designed so that different images of the same stars are available and likely to be with different subpixel offsets on the sensor grid. Moreover, one may consider that nearby star images give to some extend the same local PSF. We, thus, can consider that different low-resolution versions of the same PSF are available in practice, so that one may apply a super-resolution (SR) method to recover aliased frequencies.

This paper precisely tackles this problem. The SR is a widely studied topic in general image processing literature. Yet, some methods have been specifically proposed for astronomical data. For instance, there is the software IMCOM (Rowe et al. 2011) and PSFEx, which proposes an SR option. The IMCOM provides an oversampled output image from multiple undersampled input images, assuming that the PSF is fully specified. Since it does not deal with the PSF restoration itself, we use PSFEx as our main reference. The PSFEx performs SR by minimizing the sum of two terms. The first term is a weighted quadratic distance, relating the underlying PSF to each of the low resolution measurements. The second term consists of the square l2 norm of the difference between the underlying PSF and a smooth first guess. This term is meant for regularization. In the proposed algorithm, we introduce a new regularization scheme based on the optimization variable sparsity in a suitable dictionary.

|

Fig. 2 Adapted observation model. This time we consider an isolated star as an input, and unlike in the general model, the output of the block “Blur” is the PSF. |

|

Fig. 3 SR general scheme. The deblurring does not apply to our case, since we want to precisely estimate the blur. |

Section 2 presents the general principle of SR along with some state-of-the-art methods in astronomical domain. In Sect. 3, we present the proposed algorithm in details, which is followed with some numerical experiments in Sect. 4. We conclude by summarizing the main results and giving some perspectives.

2. Super-resolution overview

2.1. Notations

We adopt the following notation conventions:

-

we use bold low case letters for vectors;

-

we use bold capital case letters for matrices;

-

the vectors are treated as column vectors unless explicitly mentioned otherwise.

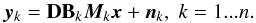

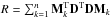

The underlying high resolution (HR) image of size d1p1 × d2p2 is written in lexicographic order (for instance, lines after lines) as a vector of pixels values x = (x1,...,xq)T, where q = d1p1d2p2, and d1 and d2 are respectively the line and column downsampling factors. We consider n LR observations. The vector of pixels values yk = (yk1,...,ykp)T denotes the kth LR observation written in lexicographical order with p = p1p2 and k = 1...n.

2.2. Observation model

We assume that x does not change during the acquisition of the n LR images so that we have  (1)The variable Mk is a warp matrix of size q × q. It represents the motions of the observations relative to each other and those in general need to be estimated. The variable Bk is a blur matrix of size q × q. It accounts for different blurs (the atmosphere blur, which is particularly considerable for ground based telescopes, the system optics blur, the imaging system shaking, etc.). The variable D is a matrix of size p × q, which simply realizes a downsampling operation. Finally, nk is a noise vector of q elements. This model is illustrated in Fig. 1.

(1)The variable Mk is a warp matrix of size q × q. It represents the motions of the observations relative to each other and those in general need to be estimated. The variable Bk is a blur matrix of size q × q. It accounts for different blurs (the atmosphere blur, which is particularly considerable for ground based telescopes, the system optics blur, the imaging system shaking, etc.). The variable D is a matrix of size p × q, which simply realizes a downsampling operation. Finally, nk is a noise vector of q elements. This model is illustrated in Fig. 1.

In our case, we are interested in estimating the PSF, or in other terms, the telescope’s contribution to the blur. We consider that the PSF varies slowly in the field so that the blocks “Warping” and “Blur” in Fig. 1 may be swapped, for slow motions between observations. Therefore, the block diagram can be adapted as in Fig. 2. This model still holds in presence of atmospheric blur (for ground-based telescopes) and jitter movements, if the LR images are extracted from the same exposure.

In the general case, the model (1) may simply be written as  (2)where Wk is a p × q matrix accounting for warping, blur, and downsampling.

(2)where Wk is a p × q matrix accounting for warping, blur, and downsampling.

2.3. SR techniques in astronomy

Generally, SR techniques involve three steps, which may be combined or performed separately. The registration step consists in evaluating the relative motions between different observations, so that their samples can be arranged on a common grid. It is critical that the precision of this registration should be smaller than the pixels dimensions over the target upsampling factors. Since these relative motions are arbitrary, this grid is non-uniform. The next step would be to interpolate this grid in such a way to get a regularly sampled HR image. This image is blurry and noisy. Therefore, the final step is a restoration procedure. This scheme is summarized in Fig. 3. In the next sections, we describe some SR techniques dedicated to astronomical images, but one may refer to Park et al. (2003) for more details on various SR frameworks in general image processing literature.

2.3.1. Shift-and-add method

The most simple SR method is certainly the shift-and-add method. It is performed in three steps. First, the images are upsampled to the target resolution. Then, they are shifted on a common grid and averaged. It has been used in astronomy for a long time, particularly for ground based telescopes. This method is simple, fast and is used for comparisons in the numerical experiments part. It has been shown that this method provides an optimal solution in the sense of the maximum likelihood (ML) with additive white Gaussian noise (WGN) and when only pure integer translation motions are considered with respect to the finer grid (Elad & hel Or 2001). The interpolation operator should be DT (from Eq. (1)), which comes down to a simple zero-padding interpolation. It has been shown in the same work that the matrix  is diagonal in this simple case. Thus, after registration and stacking of the interpolated images, each pixel value should be divided by the corresponding R diagonal coefficient.

is diagonal in this simple case. Thus, after registration and stacking of the interpolated images, each pixel value should be divided by the corresponding R diagonal coefficient.

2.3.2. PSFEx method

The software PSFEx is an open source program, which has been used in many projects such as the Dark Energy Survey (Mohr et al. 2012) or CFHTLS1 for PSF modeling. It takes a catalog of objects extracted from an astronomical image using SExtractor as input, which is also an open source tool for point sources (or stars) extraction. This catalog contains information about extracted point sources, such as signal-to-noise ratio (S/N), luminosity, full width at half maximum (FWHM), centroid coordinates, multiple flags related to saturation or blending, etc. Based on these measurements (performed in SExtractor) and some user provided parameters, PSFEx selects which sources are proper for PSF modeling. Afterwards, it constructs a PSF model, provides a fitting with an analytic function, and computes some of the PSF geometrical features. The PSF model construction may simply consist in optimally combining the input sources images for denoising, but it may also involve SR if these images are also undersampled. This SR functionality is our second reference for comparisons2.

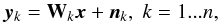

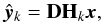

The desired HR image is now a matrix X of size dp × dp, where d is the downsampling factor and the kth observation Yk is a matrix of size p × p with k = 1...n. The coordinates of the centroid of the kth observation are denoted (ik,jk). Assuming that the images are bandlimited, the samples of the LR images can be interpolated from the desired HR image, thanks to the Shannon sampling theorem (Shannon 1949). In theory, this interpolation should involve a 2D sinus cardinal (sinc) kernel with an infinite support, which is not convenient for practical implementation. One can instead use a support compact function, which approximates the sinus cardinal. Let h(.,.) denote such a function. The estimate of the sample (i,j) of the kth observation is given by ![Mathematical equation: \begin{equation} \hat{y}_{k,ij} = \sum_l\sum_m h\left[ l-d(i-i_k),m-d(j-j_k)\right]x_{ij}. \label{intp_rel} \end{equation}](/articles/aa/full_html/2015/03/aa24167-14/aa24167-14-eq35.png) (3)Then, we can define the cost function

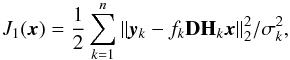

(3)Then, we can define the cost function  (4)where fk accounts for possible luminosity differences. The parameter

(4)where fk accounts for possible luminosity differences. The parameter  is related to the local background variance and any other uncertainty on the pixel value. These parameters need to be estimated.

is related to the local background variance and any other uncertainty on the pixel value. These parameters need to be estimated.

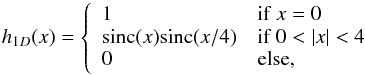

The PSFEx uses a Lanczos4 interpolant, which is defined in 1D as  (5)so that h(x,y) = h1D(x)h1D(y).

(5)so that h(x,y) = h1D(x)h1D(y).

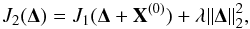

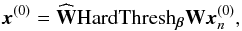

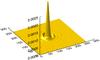

Finally, PSFEx minimizes the cost function defined as  (6)where Δ = X − X(0) and X(0) is a median image computed from the LR observations. This second term is meant for regularization purposes if the problem is ill-conditioned or undetermined (n<d2). One particularity of point-source images (see Fig. 4) is that one knows that the light comes from a single point, which is generally inferred to be the light blob’s centroid. Thus, one only needs the images centroid coordinates to perform the registration, which is implicitly done in Eq. (3).

(6)where Δ = X − X(0) and X(0) is a median image computed from the LR observations. This second term is meant for regularization purposes if the problem is ill-conditioned or undetermined (n<d2). One particularity of point-source images (see Fig. 4) is that one knows that the light comes from a single point, which is generally inferred to be the light blob’s centroid. Thus, one only needs the images centroid coordinates to perform the registration, which is implicitly done in Eq. (3).

|

Fig. 4 Simulated optical point-spread function. |

3. Sparse penalty

3.1. Sparsity-based approaches

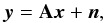

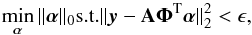

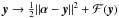

Using a sparsity prior to regularize a linear inverse problem has proven very effective in a large variety of domains and, in particular, in astronomical data processing (see Starck et al. 2010 and the references therein). It has recently led to impressive results for 3D weak gravitational lensing mass map reconstruction (Leonard et al. 2014). Let us consider the following general linear inverse problem:  (7)where x ∈ℝp is the signal to recover, y ∈ℝm are the noisy measurements, and n is an additive noise. The variable A is a matrix of size m × p, which might be ill-conditioned, and in the general case m can be smaller than p. To regularize this problem, the signal x is assumed to be sparsely represented in an appropriate overcomplete dictionary Φ: x = ΦTα, where there is only a few non zero values in the vector α. We can define the l0 pseudo-norm of α as

(7)where x ∈ℝp is the signal to recover, y ∈ℝm are the noisy measurements, and n is an additive noise. The variable A is a matrix of size m × p, which might be ill-conditioned, and in the general case m can be smaller than p. To regularize this problem, the signal x is assumed to be sparsely represented in an appropriate overcomplete dictionary Φ: x = ΦTα, where there is only a few non zero values in the vector α. We can define the l0 pseudo-norm of α as  (8)where k is the number of non-zero values in alpha. Thus, one way to tackle the problem stated in Eq. (7) under the sparsity assumption would be to solve the following

(8)where k is the number of non-zero values in alpha. Thus, one way to tackle the problem stated in Eq. (7) under the sparsity assumption would be to solve the following  (9)where ϵ is related to the noise variance. In practice, for most problems, the signals of interest are not strictly sparse, but this assumption can be relaxed to compressible signals; when expressed in a suitable dictionary, the coefficients of a compressible signal exhibit a polynomial decrease of their sorted absolute values:

(9)where ϵ is related to the noise variance. In practice, for most problems, the signals of interest are not strictly sparse, but this assumption can be relaxed to compressible signals; when expressed in a suitable dictionary, the coefficients of a compressible signal exhibit a polynomial decrease of their sorted absolute values: ![Mathematical equation: \begin{equation} |\alpha_{(i)}| \leq C i^{-\frac{1}{b}},\;\;1\leq i\leq q,\;b \in ]0,1] \end{equation}](/articles/aa/full_html/2015/03/aa24167-14/aa24167-14-eq64.png) (10)where C is a constant. This tends to be verified for a well-chosen dictionary.

(10)where C is a constant. This tends to be verified for a well-chosen dictionary.

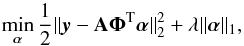

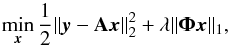

Besides, the problem 9 is combinatorial and is untrackable in most practical applications. One instead uses the l1 norm as a relaxation of the l0 pseudo-norm and solves a convex optimization problem of the form:  (11)where the parameter λ balances the sparsity against the data fidelity. This formulation is known as the augmented Lagrangian form of the basis pursuit denoising (BPDN) problem (Chen et al. 2001). In solving this problem, one seeks a sparse way to synthesize the wanted signal from the atoms of the dictionary Φ. This prior is referred to as the synthesis prior in the sparse recovery literature. Another way of promoting sparsity is through the analysis prior, which consists of seeking a solution, which has a sparse representation in the transform domain, without imposing the signal to be written as a linear combination of some dictionary atoms. This is done by solving the following problem:

(11)where the parameter λ balances the sparsity against the data fidelity. This formulation is known as the augmented Lagrangian form of the basis pursuit denoising (BPDN) problem (Chen et al. 2001). In solving this problem, one seeks a sparse way to synthesize the wanted signal from the atoms of the dictionary Φ. This prior is referred to as the synthesis prior in the sparse recovery literature. Another way of promoting sparsity is through the analysis prior, which consists of seeking a solution, which has a sparse representation in the transform domain, without imposing the signal to be written as a linear combination of some dictionary atoms. This is done by solving the following problem: (12)where the matrix Φ transforms x in the new representation domain. The problems 11 and 12 are not equivalent when the matrix Φ is not unitary. Therefore, a choice has to be made between the two, which is discussed in the next section.

(12)where the matrix Φ transforms x in the new representation domain. The problems 11 and 12 are not equivalent when the matrix Φ is not unitary. Therefore, a choice has to be made between the two, which is discussed in the next section.

3.2. Method

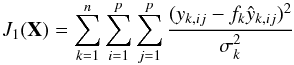

Now, let consider Eq. (3). It can be rewritten as  (13)where x and ŷk are, respectively, the desired matrix and the kth observation estimate written this time as column vectors in lexicographic order (we used the lines order); besides, Hk is a Toeplitz matrix (Gray 2006) of size d2p2 × d2p2, which contains the values of the kernel h(.,.) appearing in Eq. (3) and D is a decimation matrix of size p2 × d2p2 with d being the downsampling factor. We are assumming that the images are squared for convenience but they might be non-squared as well. In the same way, we can redefine the objective function of Eq. (4) as

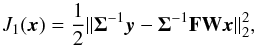

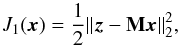

(13)where x and ŷk are, respectively, the desired matrix and the kth observation estimate written this time as column vectors in lexicographic order (we used the lines order); besides, Hk is a Toeplitz matrix (Gray 2006) of size d2p2 × d2p2, which contains the values of the kernel h(.,.) appearing in Eq. (3) and D is a decimation matrix of size p2 × d2p2 with d being the downsampling factor. We are assumming that the images are squared for convenience but they might be non-squared as well. In the same way, we can redefine the objective function of Eq. (4) as  (14)where yk is the kth observation rewritten consistently with x and ŷk. The function J1(x) is nothing but the log-likelihood associated with the observation model in the case of an uncorrelated Gaussian noise that is stationary for each observation up to a scalar factor. It can be written in an even more compact way as

(14)where yk is the kth observation rewritten consistently with x and ŷk. The function J1(x) is nothing but the log-likelihood associated with the observation model in the case of an uncorrelated Gaussian noise that is stationary for each observation up to a scalar factor. It can be written in an even more compact way as  (15)where W is obtained by concatening vertically the matrices DHk, F is a diagonal matrix constructed by repeating the coefficients fkp2 times for k = 1...n, and Σ is constructed the same way using the coefficients σk. Therefore, we can simply write

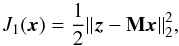

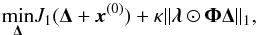

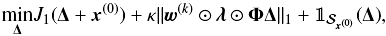

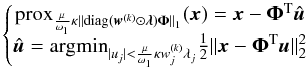

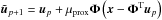

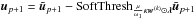

(15)where W is obtained by concatening vertically the matrices DHk, F is a diagonal matrix constructed by repeating the coefficients fkp2 times for k = 1...n, and Σ is constructed the same way using the coefficients σk. Therefore, we can simply write  (16)where M is a matrix of size np2 × d2p2. The SPRITE method constrains the minimization of this objective function using an analysis prior. Instead of using a single lagrangian multiplier as in Eq. (12), the analysis coefficients has individual weights κλi, where i is the coefficient index in the transform domain. This leads to the following formulation of the problem:

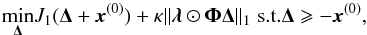

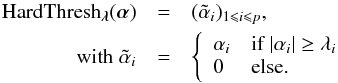

(16)where M is a matrix of size np2 × d2p2. The SPRITE method constrains the minimization of this objective function using an analysis prior. Instead of using a single lagrangian multiplier as in Eq. (12), the analysis coefficients has individual weights κλi, where i is the coefficient index in the transform domain. This leads to the following formulation of the problem:  (17)where Δ = x − x(0) is defined as in 6, x(0) is a first guess, and λ is now a vector of the same size as ΦΔ, ⊙ denoting the pointwise product.

(17)where Δ = x − x(0) is defined as in 6, x(0) is a first guess, and λ is now a vector of the same size as ΦΔ, ⊙ denoting the pointwise product.

Additionally, the PSF or equivalently the telescope optical impulse response is by definition a positive valued function (Thompson 1969). Therefore, we want the reconstruction x to have positive entries. This additional constraint is integrated as follows:

(18)where

(18)where

is a pointwise inequality, or equivalently,

is a pointwise inequality, or equivalently,

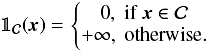

(19)where

(19)where  is the set of vectors t ∈

ℝd2 p2

satisfying the pointwise inequality

is the set of vectors t ∈

ℝd2 p2

satisfying the pointwise inequality  and

and  𝒮x(0)

is its indicator function (see Appendix B). The impact of this constraint is emphasized in Appendix C.

𝒮x(0)

is its indicator function (see Appendix B). The impact of this constraint is emphasized in Appendix C.

As we show in the Sect. 3.4.3, the choice of the parameters λ and κ relies on the noise expected on the analysis coefficients of the solution estimate. The choice of a vector regularization parameter rather than a single scalar is precisely motivated by the fact that this noise might be non-stationary.

As stated before, the problem 17 is not equivalent to its synthesis version, if the dictionary Φ is redundant. The synthesis prior is expected to be efficient if the desired solution can be accurately written as a sparse linear combination of the chosen dictionary atoms, which we cannot assume to be true for every PSF profiles. In contrast, the analysis prior appears to be more flexible. Moreover, in the cases where the problem would be ill-conditioned or underdetermined, the analysis prior would definitely be more suitable since it involves far less variables.

A similar l1 penalty has already been applied for SR. An example may be found in Yamagishi et al. (2012), where the cost function is minimized using variants of the alternating direction method of multipliers (ADMM). Moreover, advantages of such approaches over quadratic regularizers have been shown in many related problems in image and signal processing.

The use of the l1 norm as a relaxation for an l0 penalty has a well-known drawback, which is that it tends to bias the solution. Indeed with a l1 norm penalty, the problem resolution involves soft thresholding operations, which affect both weak and strong entries unlike a hard thresholding, which would only affect the weak and therefore unwanted entries. Formal definitions of soft and hard thresholding are given in Appendix B.

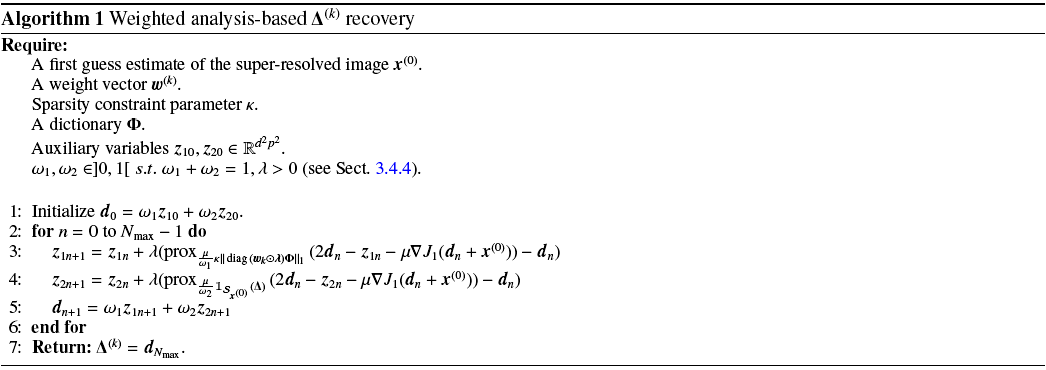

This is particularly unsuitable for scientific data analysis. The reweighting l1 minimization proposed in Candès et al. (2008) is one way to tackle this issue, while staying in the proof of convergence sets. Indeed, it consists of solving a succession of l1 minimization problems of the form

(20)where w(k) is a weighting vector for the transform coefficients at the kth minimization. Each entry of w(k) is calculated as a decreasing function of the corresponding transform coefficient magnitude in the (k − 1)th minimization. This way, the strong transform coefficients are less penalized than the weaker ones in the new minimization. One may refer to Appendix D for quantitative study of the reweighting effect.

(20)where w(k) is a weighting vector for the transform coefficients at the kth minimization. Each entry of w(k) is calculated as a decreasing function of the corresponding transform coefficient magnitude in the (k − 1)th minimization. This way, the strong transform coefficients are less penalized than the weaker ones in the new minimization. One may refer to Appendix D for quantitative study of the reweighting effect.

3.3. Algorithm

In the proposed method, the reweighting scheme is performed according to Candès et al. (2008):

-

1.

Set k = 0, for each entry in the weighting vector w(k), set

.

. -

2.

Solve the problem 20 yielding a solution Δ(k).

-

3.

Compute α(k) = ΦΔ(k).

-

4.

Update the weight vector according to

, where σj is the noise standard deviation expected at the jth transform coefficient, see Sect. 3.4.3.

, where σj is the noise standard deviation expected at the jth transform coefficient, see Sect. 3.4.3. -

5.

Terminated on convergence or when reaching the maximum number of iterations; otherwise, go to step 2.

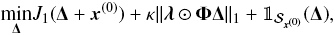

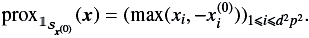

The step 2 resolution is detailed in Algorithm 1. We use the generalized forward-backward splitting introduced in Raguet et al. (2011). It requires the computation of proximity operators associated with the regularization functions in 20. One may refer to Appendix B for an introduction to proximal calculus.

Since the dictionary Φ is redundant, we do not have a closed-form expression for the proximity operator. Yet, it can be calculated as  (21)where û can be estimated using a forward-backward algorithm (Bauschke et al. 2011) as follows:

(21)where û can be estimated using a forward-backward algorithm (Bauschke et al. 2011) as follows:

-

1.

Set p = 0, initialize u0 = 0.

-

2.

.

. -

3.

.

. -

4.

Terminate on convergence or when reaching the maximum number of iterations, otherwise go to step 2.

The thresholding operator SoftThresh is defined in Appendix

B. The operator

is simply the orthogonal projector onto the set

is simply the orthogonal projector onto the set  defined in the previous section; it is given explicitly Appendix B.

defined in the previous section; it is given explicitly Appendix B.

A full description of SPRITE is provided in Algorithm 2.

3.4. Parameter estimation

The data fidelity term in the problem 20 is defined as ![Mathematical equation: \begin{equation} \left\lbrace \begin{matrix}J_1(\vec{x}) = \frac{1}{2}\sum_{k=1}^n\sum_{i=1}^p\sum_{j=1}^p\frac{(y_{k,ij}-f_k \hat{y}_{k,ij})^2}{\sigma_k^2} \\ \hat{y}_{k,ij} = \sum_l\sum_m h\left[ l-d(i-i_k),m-d(j-j_k)\right]x_{ij} \end{matrix}\right., \label{sprite_cost} \end{equation}](/articles/aa/full_html/2015/03/aa24167-14/aa24167-14-eq126.png) (22)where

(22)where  is the desired image and h(.,.) is a 2D Lanczos kernel. As stated in Sect. 3.2, it can be written as

is the desired image and h(.,.) is a 2D Lanczos kernel. As stated in Sect. 3.2, it can be written as (23)if we write x in lexicographic order as a vector. The following parameters are required:

(23)if we write x in lexicographic order as a vector. The following parameters are required:

-

for the data fidelity term parameters the noise standard deviationin the LR images σk, the photometric flux fk, and the shift parameters (ik,jk);

-

a first guess x(0) (see problem 20);

-

the sparsity constraint parameters κ, λ = (λi)i and the dictionary Φ;

-

algorithmic parameter such as the gradient step size μ, the relaxation parameter λ in Algorithm 1, and the gradient step size μprox in Eq. (21) resolution.

3.4.1. Data fidelity parameters

Noise standard deviations

At the first step of the algorithm, the noise standard deviations in the low resolution images can be robustly estimated using the median absolute deviation (MAD) estimator (Starck et al. 2010).

Subpixel shifts

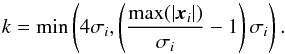

The subpixel shifts between the images are estimated based on the low resolution images centroids positions. Those are calculated on the low resolution images after a hard thresholding operation. For the image xi, the threshold is chosen as  (24)In this way, we only keep pixels with a high S/N for the centroid estimation. We then estimate the centroid positions using the iteratively weighted algorithm introduced in Baker & Moallem (2007). The thresholding operation undoubtedly biases the estimated centroid position, but the resulting estimated shifts are expected to be unbiased, up to the finite sampling and noise effects.

(24)In this way, we only keep pixels with a high S/N for the centroid estimation. We then estimate the centroid positions using the iteratively weighted algorithm introduced in Baker & Moallem (2007). The thresholding operation undoubtedly biases the estimated centroid position, but the resulting estimated shifts are expected to be unbiased, up to the finite sampling and noise effects.

Photometric flux

The flux parameters are calculated by integrating the low resolution images on a fixed circular aperture centered on their centroids estimates. At Euclid resolution (see Sect. 4), we obtained quite accurate flux estimates in simulations using a radius of 3 pixels for the aperture. These parameters define the matrix M in Eq. (23).

All these parameters are automatically calculated without requiring any user input.

3.4.2. First guess computation

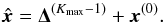

As one can see in the Algorithm 2, the final image is computed as  (25)This implies that the noise and any artifact in x(0) which does not have a sparse decomposition in Φ will be present in the final solution. Therefore, one has to be careful at this step. To do so, we compute a noisy first guess

(25)This implies that the noise and any artifact in x(0) which does not have a sparse decomposition in Φ will be present in the final solution. Therefore, one has to be careful at this step. To do so, we compute a noisy first guess  using a shift-and-add, as presented in Sect. 2.3.1. Then we apply a wavelet denoising to

using a shift-and-add, as presented in Sect. 2.3.1. Then we apply a wavelet denoising to  . In other terms, we transform

. In other terms, we transform  in a “sparsifying” wavelet dictionary W. We threshold each wavelet scale in such a way to keep only the coefficients above the noise level expected in the scale. Finally, we apply a reconstruction operator, which is a dictionary

in a “sparsifying” wavelet dictionary W. We threshold each wavelet scale in such a way to keep only the coefficients above the noise level expected in the scale. Finally, we apply a reconstruction operator, which is a dictionary  verifying

verifying  to the thresholded coefficients (see Starck et al. 2010). We note β = (βi)i, a vector made of the denoising thresholds for each wavelet scale. We set βi at 5σi, where σi is the noise standard deviation in the ith wavelet scale. The first guess is finally computed as

to the thresholded coefficients (see Starck et al. 2010). We note β = (βi)i, a vector made of the denoising thresholds for each wavelet scale. We set βi at 5σi, where σi is the noise standard deviation in the ith wavelet scale. The first guess is finally computed as  (26)which robustly removes the noise without breaking important features. One can refer to Appendix A for (σi)i estimation.

(26)which robustly removes the noise without breaking important features. One can refer to Appendix A for (σi)i estimation.

3.4.3. The choice of dictionary and regularization parameter

Regularization parameter

The regularization parameter κ can be set, according to a desired level of significance. Indeed, it can be seen that the transform domain vector û is constrained into weighted l∞-ball of radius μκ in Eq. (21) and can be interpreted as the non-significant part of the wanted signal current estimate. To set this radius according to the expected level of noise for each transform coefficient, we propagate the noise on the data vector z from Eq. (23) through μΦMTM and estimate its standard deviation at each transform coefficient, which sets the parameters λj. In practice, this can be done in two ways. We can either run a Monte-Carlo simulation of the noise in z and take the empirical variance of the sets of realizations of each transform coefficient. On the other hand, if Φ is a wavelet dictionary and if the noise is expected to be stationary in each wavelet scale, then we only need to compute a single standard deviation per scale. This can be done by estimating the noise in each scale of the wavelet transform of the gradient at each iteration (up to the factor μ) using a MAD, for instance. Indeed, the residual z − M(dn + x(0)) tend to be consistent with the noise in z, so that it can be used as a noise realization. With a stationary noise in each input image, the two approaches give very close estimates of the noise standard deviation and the second one is far less demanding in terms of complexity. As a result, coefficients below κλj are considered as part of the noise and one only needs to set the global parameter κ to tune the sparsity constraint according to the noise level.

Dictionary

The choice of the dictionary impacts the performance of the algorithm. We considered two transforms: a biorthogonal undecimated wavelet transform with a 7/9 filter bank and the second generation starlet transform (Starck et al. 2011). These two transforms are generic and not specifically tuned to a given PSF profile.

3.4.4. Algorithmic parameters

Gradient steps sizes

The gradient step size μ in Algorithm 1 needs to be chosen just in ![Mathematical equation: \hbox{$\left]0,2/\rho(\mathbf{M}^{\rm T}\mathbf{M})\right[$}](/articles/aa/full_html/2015/03/aa24167-14/aa24167-14-eq152.png) , where ρ(.) denotes the spectral radius of a square matrix. In the same way, μprox needs to be chosen in

, where ρ(.) denotes the spectral radius of a square matrix. In the same way, μprox needs to be chosen in ![Mathematical equation: \hbox{$\left]0,2/\rho(\bm{\Phi}\bm{\Phi}^{\rm T})\right[$}](/articles/aa/full_html/2015/03/aa24167-14/aa24167-14-eq154.png) .

.

Relaxation parameter

The parameter λ in Algorithm 1 needs to be chosen in ![Mathematical equation: \hbox{$\left]0,\min\left(\frac{3}{2},\frac{1+2/{\rho(\mathbf{M}^{\rm T}\mathbf{M})\mu}}{2}\right)\right[$}](/articles/aa/full_html/2015/03/aa24167-14/aa24167-14-eq155.png) (Raguet et al. 2011). This parameter tunes the updating speed of the auxiliary variables in Algorithm 1. In practice, we use μ = 1 /ρ(MTM) and λ = 1.4.

(Raguet et al. 2011). This parameter tunes the updating speed of the auxiliary variables in Algorithm 1. In practice, we use μ = 1 /ρ(MTM) and λ = 1.4.

3.4.5. User parameters

It is important to mention that the user only has to set the parameter κ and the dictionary with the other parameters being automatically estimated. In all our experiments, we took κ = 4, which is quite convenient, if we assume Gaussian noise in the data. The dictionary choice will be emphasized in the next section.

4. Numerical experiments

This section presents the data used, the numerical experiments realized as a mean to compare three SR techniques (shift-and-add, PSFEx method, and our method) and the results.

4.1. Data set

The PSFs provided are optical PSFs computed using a fast Fourier transform of the exit pupil. They are not a system PSF, so they do not include a jitter or detector response. A set of PSFs covering the whole field of view is provided. They are monochromatic PSFs at 800 nm and are derived from tolerance analysis. They account for manufacturing and alignments errors and thermal stability of the telescope. Manufacturing and alignment errors are partially compensated by a best focus optimization, while thermal stability effects are simulated by a small displacement of the optics that are not compensated on a short-time scale. The optical model used is dated from 2011 and is prior to the current reference model (provided by Astrium, which has been awarded the payload module contract in 2013). In particular, the 2011 model does not contain the latest definition of the pupil mask. The pupil, however, includes central obscuration and a three-vane spider. This is the model that has been used for the science feasibility studies that led to the acceptance of the Euclid mission.

|

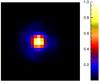

Fig. 5 Critically sampled PSF. |

|

Fig. 6 PSF sampled at Euclid resolution with different offsets and noise. |

4.2. Simulation

In the Euclid mission, the actual sampling frequency is about 0.688 times the Nyquist frequency that we define as twice the telescope spatial cut-off frequency (Cropper et al. 2013). Therefore we target an upsampling factor of 2, which gives a sufficient bandpass to recover the high frequencies. The PSF is typically space-varying, and this is particularly true for wide field of view instruments as Euclid telescope (ESA/SRE 2011). Thus, the data set contains simulated PSF measurements on a regular 18 × 18 grid on the field of view. The original PSF models are downsampled to twice Euclid resolution. For each PSF, four randomly shifted “copies” are generated and downsampled to Euclid resolution (see Figs. 5 and 6 below). These LR images are of size 84 × 84. Different levels of WGN are added. We define the signal level as its empirical variance that is calculated in a 50 × 50 patch centered on the HR PSF main lobe. For each algorithm, we used these four images to reconstruct a PSF which has twice their resolutions in lines and columns.

4.3. Quality criterion

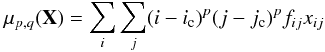

For an image X = (xij)i,j, the weighted central moments are defined as  (27)with (p,q) ∈ℕ2, (ic,jc) are the weighted image centroid coordinates, and F = (fij)i,j is an appropriate weighting function (typically a Gaussian function). The ellipticity parameters are then defined as follows: The vector ϵ = [e1,e2] is an important tool, since if measured on a large set of galaxies, it can be statistically related to the dark matter induced geometrical distortions and finally its mass density. Furthermore, this ellipticity parameters are magnitude invariant and approximately shift invariant. The error on ellipticity is therefore an interesting criteria for quality assessment. Thus, we used the mean absolute error for each ellipticity parameter,

(27)with (p,q) ∈ℕ2, (ic,jc) are the weighted image centroid coordinates, and F = (fij)i,j is an appropriate weighting function (typically a Gaussian function). The ellipticity parameters are then defined as follows: The vector ϵ = [e1,e2] is an important tool, since if measured on a large set of galaxies, it can be statistically related to the dark matter induced geometrical distortions and finally its mass density. Furthermore, this ellipticity parameters are magnitude invariant and approximately shift invariant. The error on ellipticity is therefore an interesting criteria for quality assessment. Thus, we used the mean absolute error for each ellipticity parameter,  (28)and the associated empirical standard deviations.

(28)and the associated empirical standard deviations.

Moreover, the PSF size is also an important characteristic of the PSF kernel. For example, it has been shown in Paulin-Henriksson et al. (2008) that the PSF size largely contributes to the systematic error in weak gravitational lensing surveys. Therefore, we use it in quality assessment by computing the mean absolute error on the FWHM. The FWHM is estimated by fitting a modified Lorentzian function on the PSF images. We used routines from a publicly available library3.

4.4. Results and discussion

Figures 5 and 6 show a simulated PSF that is sampled at almost the Nyquist rate and the two LR shifted and noisy PSF derive, with S/N of around 30dB.

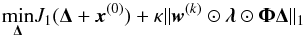

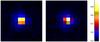

Figure 7 shows an example of super-resolved PSF at 30dB of S/N, from four LR images and the corresponding error maps that are defined as the absolute value of the difference between the original HR noise free PSF and the PSF reconstructions for each algorithm. This error map standard deviation is at least 30% lower with SPRITE.

|

Fig. 7 PSF reconstruction and error map at 30dB for three methods: from the left to the right, shift-and-add, PSFEx, and SPRITE. The error image standard deviation is at least 30% smaller with SPRITE. |

|

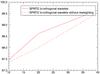

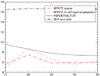

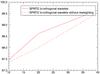

Fig. 8 Errors in log on ellipticity parameters versus the S/N. For S/N = 10 dB, SPRITE achieves around 6 dB less than others methods, which corresponds to a factor of e6 on a linear scale. |

Figure 8 shows smaller errors and errors dispersions are achieved with SPRITE algorithm, especially at low S/N. One can note that the dispersion is slightly smaller with biorthogonal undecimated wavelet. The error on the FWHM given in percent in Fig. 9 is smaller with SPRITE. In practice, there is more variability in the PSF (wavelength and spatial dependency, time variations...) so that the real problem will be more underdetermined. Thanks to the multiple exposures on the one hand, and that the spatial variations of the PSF are expected to be slow on the other hand, the real problem could actually be very well constrained. Moreover, these results suggest that even better results could be achieved by using more adapted dictionaries, built either from PSF model or through a dictionary learning algorithm (Beckouche et al. 2013).

5. Complexity and performances

The simulations were run on a typical desktop computer. Let us suppose that we have n LR images of sizes p1 × p2 and that we choose an upsampling factor d in lines and columns. As stated before, we took p1 = p2 = 84, n = 4, and d = 2 in our numerical experiments. Under this setting, it takes roughly 60 s and 1 GB of physical memory to compute a super-resolved PSF. More generally, the computational complexity of the algorithm is in O(np1p2d2log (p1p2d2)), which is related to the implementation of the matrices M from Eq. (16) and MT using FFT.

|

Fig. 9 Mean absolute error on the full width at half maximum (FWHM) in percent. SPRITE achieves on average an error of 6% on the FWHM which is 2% less than PSFEX in average. |

6. Software

Following the philosophy of reproducible research (Buckheit & Donoho 1995), the algorithm introduced in this paper and the data used are available online4. We used the following calls for the SPRITE executable:

-

run_sprite -t 2 -s 4 -r 2 -F -N data_file output_fileoutput_directory for the second genration Starlet transform;

-

run_sprite -t 24 -s 4 -r 2 -F -N data_file output_file output_directory for the undecimated biorthogonal wavelet transform.

The options “-s” and “-r” set the parameter κ (see Sect. 3.4.3) and the upsampling factor for both lines and columns respectively. The options “-F” and “-N” indicate that the photometric flux and the noise might have different levels in the LR images and need to be estimated.

7. Conclusion

We introduced SPRITE, which is a super-resolution algorithm based on sparse regularization. We show that adding a sparse penalty in the recovery leads to far better accuracy in terms of ellipticity error, especially at low S/N.

Quantitatively, we achieved

-

a 30% lower error on the reconstruction itself at 30dBof S/N;

-

around 6dB less than other methods on the shape parameters, which corresponds to a factor of e6 on a linear scale, at 10 dB of S/N;

-

6%of error on the FWHM in average, which 2% less than PSFEX.

However this algorithm does not handle the PSF spatial variations. Thus one natural extension of this work would be to simultaneously perform SR and dimensionality reduction assuming only one LR version of each PSF, as it is the case in practice strictly speaking, but by using a large PSF set. The PSF wavelength dependency would also be an interesting aspect to investigate.

These codes and the associated documentation may be found on the website http://www.astromatic.net/.

References

- Baker, K. L., & Moallem, M. M. 2007, Optics Express, 15, 5147 [NASA ADS] [CrossRef] [Google Scholar]

- Bauschke, H. H., Burachik, R. S., Combettes, P. L., et al. 2011, Fixed-Point Algorithms for Inverse Problems in Science and Engineering (Springer), 185 [Google Scholar]

- Beckouche, S.,Starck, J. L., &Fadili, J. 2013, A&A, 556, A132 [NASA ADS] [CrossRef] [EDP Sciences] [Google Scholar]

- Blumensath, T., Yaghoovi, M., & Davies, M. E. 2007, in IEEE International Conference on Acoustics, Speech and Signal Processing, Honolulu, USA [Google Scholar]

- Buckheit, J., & Donoho, D. 1995, in Wavelets and Statistics, eds. A. Antoniadis, & G. Oppenheim (New York: Springer), Lect. Notes Stat., 103, 55 [Google Scholar]

- Candès, E.,Wakin, M., &Boyd, S. 2008, J. Fourier Analysis and Applications, 14, 877 [CrossRef] [Google Scholar]

- Chen, S.,Donoho, D., &Saunders, M. 2001, SIAM Rev., 43, 129 [Google Scholar]

- Combettes, P. L., &Wadjs, V. R. 2005, Simul., 4, 1168 [Google Scholar]

- Cropper, M.,Hoekstra, H.,Kitching, T., et al. 2013, MNRAS, 431, 3103 [NASA ADS] [CrossRef] [Google Scholar]

- Elad, M., & hel Or, Y. 2001, IEEE Transactions on Image Processing, 10, 1187 [Google Scholar]

- ESA/SRE. 2011, EUCLID Mapping the geometry of the dark universe, Tech. Rep., ESA [Google Scholar]

- Gray, R. M. 2006, Foundations and Trends® in Communications and Information Theory, 2, 155 [Google Scholar]

- Leonard, A.,Lanusse, F., &Starck, J.-L. 2014, MNRAS, 440, 1281 [NASA ADS] [CrossRef] [Google Scholar]

- Mohr, J. J., Armstrong, R., Bertin, E., et al. 2012, in SPIE Conf. Ser., 8451 [Google Scholar]

- Park, S. C.,Park, M. K., &Kang, M. G. 2003, IEEE Signal Processing Magazine, 3, 1053 [Google Scholar]

- Paulin-Henriksson, S.,Amara, A.,Voigt, L.,Refregier, A., &Bridle, S. L. 2008, A&A, 484, 67 [Google Scholar]

- Raguet, H.,Fadili, J., &Peyré, G. 2011, SIAM J. Imag. Sci., 6, 1199 [Google Scholar]

- Rodgers, J. L., &Nicewander, A. W. 1988, The American Statistician, 42, 59 [Google Scholar]

- Rowe, B., Hirata, C., & Rhodes, J. 2011 [arXiv:1105.2852v2] [Google Scholar]

- Shannon, C. 1949, Proc. IRE, 37, 10 [NASA ADS] [CrossRef] [Google Scholar]

- Starck, J.-L., Murtagh, F., & Fadili, J. 2010, Sparse Image and Signal Processing: Wavelets, Curvelets, Morphological Diversity (New York, NY, USA: Cambridge University Press) [Google Scholar]

- Starck, J.-L., Murtagh, F., & Bertero, M. 2011, in Handbook of Mathematical Methods in Imaging, ed. O. Scherzer (New York: Springer), 1489 [Google Scholar]

- Thompson, B. J. 1969, Science, 164, 170 [CrossRef] [Google Scholar]

- Yamagishi, M.,Ono, S., &Yamada, I. 2012, CAS, 111, 465, 49 [Google Scholar]

Appendix A: First guess noise

We keep the same notations as in Sect. 3.4.2. We note xwi as the ith scale of  wavelet transform in W. As the noise in

wavelet transform in W. As the noise in  is correlated, the estimation of σi is not straightforward, so we proceed as follows:

is correlated, the estimation of σi is not straightforward, so we proceed as follows:

-

1.

-

2.

-

3.

.

.

The factor 1.4826 comes from the assumption that the noise is approximately Gaussian. Finally, the factor k must be sufficiently high so that all the noise will remain in the residual  . We took k = 5. The minimization in step 2 usually takes up to five iterations to converge. For the finer scales,

. We took k = 5. The minimization in step 2 usually takes up to five iterations to converge. For the finer scales,  is quite close to σi. But for coarser scales,

is quite close to σi. But for coarser scales,  is significantly overestimated (it might be more than 10 times greater than σi). We implicitly assumed that the noise in the wavelet scales is stationary, which is reasonable apart from the edges effects due to the wavelet transform.

is significantly overestimated (it might be more than 10 times greater than σi). We implicitly assumed that the noise in the wavelet scales is stationary, which is reasonable apart from the edges effects due to the wavelet transform.

Appendix B: Proximal calculus

Let ℋ be a finite-dimensional Hilbert space (typically a real vector space) equipped with the inner product ⟨ .,. ⟩ and associated with the norm ∥ . ∥. A real-valued function ℱ defined on ℋ is

-

proper if its domain, as defined by domℱ = { x ∈ ℋ / ℱ(x) < + ∞ }, is non-empty;

-

lower semicontinuous (LSC) if liminfx → x0ℱ(x) ≥ ℱ(x0).

We define Γ0(ℋ) as the class of all proper LSC convex real-valued function defined on ℋ.

|

Fig. C.1 Errors in log scale on ellipticity parameters versus the S/N. The positivity constraint significantly improves the accuracy. |

Moreau (1962) introduced the notion of proximity operator as a generalization of a convex projection operator. Let ℱ ∈ Γ0(ℋ). Then the function  achieves its minimum at a unique point denoted by proxℱ(α), (∀α ∈ ℋ). The operator proxℱ is the proximity operator of ℱ. The indicator function of a closed convex subset

achieves its minimum at a unique point denoted by proxℱ(α), (∀α ∈ ℋ). The operator proxℱ is the proximity operator of ℱ. The indicator function of a closed convex subset

of ℋ is the function defined on ℋ by

of ℋ is the function defined on ℋ by

(B.1)It is clear from the definitions that the proximity operator of

(B.1)It is clear from the definitions that the proximity operator of  𝒞 is the orthogonal projector onto

𝒞 is the orthogonal projector onto  . Thus, for

. Thus, for  , which is defined in Sect. 3.2, and for x ∈ℝd2 p2

, we have,

, which is defined in Sect. 3.2, and for x ∈ℝd2 p2

, we have,

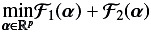

(B.2)Now we suppose that ℋ =ℝp, and we want to solve

(B.2)Now we suppose that ℋ =ℝp, and we want to solve

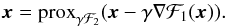

(B.3)where ℱ1,ℱ2 ∈ Γ0(ℝp). Many problems in signal and image processing may be formulated this way, where ℱ1 would be the data attachment function and ℱ2 would constrain this solution based on prior knowledges. It has been shown (Combettes & Wadjs 2005) that if ℱ1 is differentiable with a β-Lipschitz continuous gradient, then the problem (B.3) admits at least one solution and that its solutions, for γ> 0, verify the fixed point equation,

(B.3)where ℱ1,ℱ2 ∈ Γ0(ℝp). Many problems in signal and image processing may be formulated this way, where ℱ1 would be the data attachment function and ℱ2 would constrain this solution based on prior knowledges. It has been shown (Combettes & Wadjs 2005) that if ℱ1 is differentiable with a β-Lipschitz continuous gradient, then the problem (B.3) admits at least one solution and that its solutions, for γ> 0, verify the fixed point equation,  (B.4)This suggests the following iterative scheme,

(B.4)This suggests the following iterative scheme,  (B.5)for appropriate values of the parameter γn. This type of scheme is known as forward-backward (FB) algorithm: a forward gradient step using ℱ1 and a backward step involving ℱ2 through its proximity operator. Some examples of FB algorithms that have been shown to converge to a solution of (B.3) can be found in Bauschke et al. (2011). A popular example of proximity operator is the one associated with ℱ = λ ∥ . ∥ 1 with λ ∈ℝ:

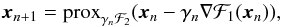

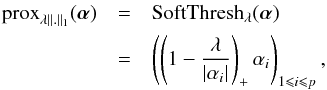

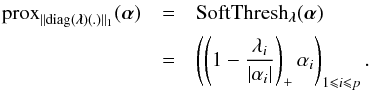

(B.5)for appropriate values of the parameter γn. This type of scheme is known as forward-backward (FB) algorithm: a forward gradient step using ℱ1 and a backward step involving ℱ2 through its proximity operator. Some examples of FB algorithms that have been shown to converge to a solution of (B.3) can be found in Bauschke et al. (2011). A popular example of proximity operator is the one associated with ℱ = λ ∥ . ∥ 1 with λ ∈ℝ:  (B.6)where p is the dimension of ℋ, αi the components of alpha in the basis associated with ∥ . ∥ l1 and (.)+ = max(.,0). One may refer to Bauschke et al. (2011) for proximity operator properties and examples. For

(B.6)where p is the dimension of ℋ, αi the components of alpha in the basis associated with ∥ . ∥ l1 and (.)+ = max(.,0). One may refer to Bauschke et al. (2011) for proximity operator properties and examples. For  , the proximity operator associated with the weighted l1 norm ∥ diag(λ)(.) ∥ 1 is given by

, the proximity operator associated with the weighted l1 norm ∥ diag(λ)(.) ∥ 1 is given by  (B.7)The hard thresholding operator defined as

(B.7)The hard thresholding operator defined as  (B.8)is often used instead in practice. See Blumensath et al. (2007) for more insight on the hard thresholding behavior in terms of convergence.

(B.8)is often used instead in practice. See Blumensath et al. (2007) for more insight on the hard thresholding behavior in terms of convergence.

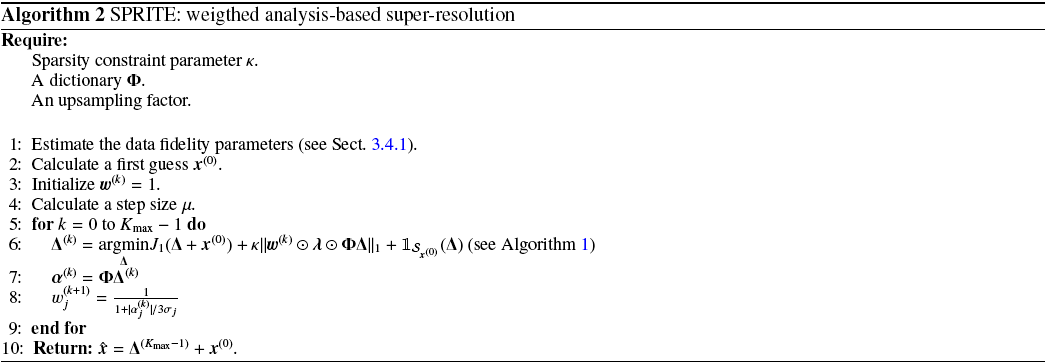

Appendix C: Positivity constraint

|

Fig. C.2 Map of negative values in the PSF reconstruction map (in absolute value) at 15 dB. On the left, SPRITE has a positivity constraint; on the right, SPRITE without positivity constraint. |

We can drop the positivity constraint by simply solving  (C.1)at step 6 in Algorithm 2. We did a similar numerical experiment as the one presented in Sect. 4 to quantify the impact of this constraint. The comparison is given in Fig. C.1. One can see that the positivity constraint is actually important from the point view of the ellipticity parameters at low S/N. This result is illustrated in Fig. C.2. With the positivity constraint, the reconstruction is less influenced by the negative oscillations in the data, due to noise; thus it yields a better robustness. Even if the negative residual values in the final PSF are 1000 order of magnitude smaller than the peak value, it is sufficient to considerably bias the ellipticity measurements.

(C.1)at step 6 in Algorithm 2. We did a similar numerical experiment as the one presented in Sect. 4 to quantify the impact of this constraint. The comparison is given in Fig. C.1. One can see that the positivity constraint is actually important from the point view of the ellipticity parameters at low S/N. This result is illustrated in Fig. C.2. With the positivity constraint, the reconstruction is less influenced by the negative oscillations in the data, due to noise; thus it yields a better robustness. Even if the negative residual values in the final PSF are 1000 order of magnitude smaller than the peak value, it is sufficient to considerably bias the ellipticity measurements.

Appendix D: Reweighting

As stated in Sect. 3.2, the reweighting scheme used in SPRITE is meant to mitigate the bias due to the l1 norm penalty. To verify this, we basically did the same as for the positivity in the previous section. Thus, we ran Algorithm 2 with Kmax = 1 and Kmax = 2 and we compute in each case the mean correlation coefficient in Pearson sense (Rodgers & Nicewander 1988)

|

Fig. D.1 Mean correlation coefficients (× 100) between the SPRITE PSF reconstructions and the reference images versus the S/N; the reweighting reduces the global bias. |

between the reference images and the reconstructions for different S/N. The result is given in Fig. D.1. As expected, the reweighting improves the correlation and consequently, reduces the global bias on the reconstruction.

All Figures

|

Fig. 1 General observation model. See Sect. 2.2 for a detailed description. |

| In the text | |

|

Fig. 2 Adapted observation model. This time we consider an isolated star as an input, and unlike in the general model, the output of the block “Blur” is the PSF. |

| In the text | |

|

Fig. 3 SR general scheme. The deblurring does not apply to our case, since we want to precisely estimate the blur. |

| In the text | |

|

Fig. 4 Simulated optical point-spread function. |

| In the text | |

|

Fig. 5 Critically sampled PSF. |

| In the text | |

|

Fig. 6 PSF sampled at Euclid resolution with different offsets and noise. |

| In the text | |

|

Fig. 7 PSF reconstruction and error map at 30dB for three methods: from the left to the right, shift-and-add, PSFEx, and SPRITE. The error image standard deviation is at least 30% smaller with SPRITE. |

| In the text | |

|

Fig. 8 Errors in log on ellipticity parameters versus the S/N. For S/N = 10 dB, SPRITE achieves around 6 dB less than others methods, which corresponds to a factor of e6 on a linear scale. |

| In the text | |

|

Fig. 9 Mean absolute error on the full width at half maximum (FWHM) in percent. SPRITE achieves on average an error of 6% on the FWHM which is 2% less than PSFEX in average. |

| In the text | |

|

Fig. C.1 Errors in log scale on ellipticity parameters versus the S/N. The positivity constraint significantly improves the accuracy. |

| In the text | |

|

Fig. C.2 Map of negative values in the PSF reconstruction map (in absolute value) at 15 dB. On the left, SPRITE has a positivity constraint; on the right, SPRITE without positivity constraint. |

| In the text | |

|

Fig. D.1 Mean correlation coefficients (× 100) between the SPRITE PSF reconstructions and the reference images versus the S/N; the reweighting reduces the global bias. |

| In the text | |

Current usage metrics show cumulative count of Article Views (full-text article views including HTML views, PDF and ePub downloads, according to the available data) and Abstracts Views on Vision4Press platform.

Data correspond to usage on the plateform after 2015. The current usage metrics is available 48-96 hours after online publication and is updated daily on week days.

Initial download of the metrics may take a while.