| Issue | A&A Volume 525, January 2011 | |
|---|---|---|
| Article Number | A7 | |
| Number of page(s) | 13 | |
| Section | Cosmology (including clusters of galaxies) | |
| DOI | https://doi.org/10.1051/0004-6361/201015044 | |
| Published online | 26 November 2010 | |

Dark energy constraints from a space-based supernova survey

1 Laboratoire de Physique Nucléaire et des Hautes Energies, UPMC Univ. Paris 6, UPD Univ. Paris 7, CNRS IN2P3, 4 place Jussieu, 75005 Paris, France


2 Université Paris-sud, 91405 Orsay, France

Received: 26 May 2010

Accepted: 26 September 2010

Abstract

Aims. We present a forecast of dark energy constraints that could be obtained from a large sample of distances to Type Ia supernovae detected and measured from space.

Methods. We simulate the supernova events as they would be observed by a EUCLID-like telescope with its two imagers, assuming those would be equipped with 4 visible and 3 near infrared swappable filters. We account for known systematic uncertainties affecting the cosmological constraints, including those arising through the training of the supernova model used to fit the supernovae light curves.

Results. Using conservative assumptions and Planck priors, we find that an 18-month survey would yield constraints on the dark energy equation of state comparable to the cosmic shear approach in EUCLID: a variable two-parameter equation of state can be constrained to ~0.03 at z ≃ 0.3. These constraints are derived from distances to about 13 000 supernovae out to z = 1.5, observed in two cones of 10 and 50 deg². These constraints do not require measuring a nearby supernova sample from the ground.

Conclusions. Provided swappable filters can be accommodated on EUCLID, distances to supernovae can be measured from space and contribute to obtaining the most precise constraints on dark energy properties.

Key words: cosmological parameters / dark energy

© ESO, 2010

1. Introduction

About ten years ago, distances to about 50 Type Ia supernovae (SNe Ia) enabled two teams (Riess et al. 1998; Perlmutter et al. 1999) to independently constrain the kinematics of the expansion of the universe and present the first evidence for acceleration at late times (typically later than z ≃ 0.5). This acceleration was ascribed to a mysterious component baptised dark energy. The very cause of this late acceleration remains unknown, and the expansion history is not yet constrained well enough to uniquely characterise the phenomenon. There is no known candidate to embody this dark energy, and working groups have been set up to review possible observational strategies that could significantly increase our knowledge of it. Two working group reports (Albrecht et al. 2006; Peacock et al. 2006) have identified a set of observational approaches to constrain dark energy, and outlined generic large and difficult projects, mainly (but not only) space-based, that could significantly improve our knowledge of dark energy.

Four main dark energy probes were identified: the cosmic shear correlations as a function of angle and redshift, the measurement of the acoustic peak in the galaxy correlation function, the measurement of distances to Type Ia supernovae, and cluster counts. All these measurements have to be carried out as a function of redshift in order to constrain dark energy. Forecasts and merits of these methods were discussed in both reports which stress that crossing methods is mandatory, since the anticipated measurements face partly unknown systematic uncertainties. Regarding the merits of the considered probes, a short summary of the findings is that cosmic shear correlations are the most promising and most demanding approach, baryon acoustic oscillations (BAO) is the simplest (in terms of analysis), and distances to supernovae the most mature. One strong incentive to use multiple probes, beyond redundancy, is that General Relativity predicts a specific relation between the expansion history and the growth of structures that can be uniquely tested by comparing expansion history from supernovae and BAO, to growth rate from cosmic shear, cluster counts and redshift distortions. (The measurement of redshift distortions can be extracted from the same data as BAO.)

The Hubble diagram of Type Ia supernovae remains today the best dark energy probe, at least if one focuses on results rather than forecasts. Ambitious space-based supernova programs have been imagined and presented right after the discovery of accelerated expansion1 that would observe O(1000) supernovae from space. More recently, a small visible-imaging mission concept, DUNE, considered the observation of about 10 000 supernovae up to z = 1 from space (Réfrégier et al. 2006). This mission concept was later extended to the near-infrared (NIR) and proposed to ESA under the same name (Réfrégier 2009). New space missions are currently being developed in order to constrain dark energy, both in North America (the JDEM mission, now called WFIRST) and in Europe (the EUCLID mission, which incorporates the second DUNE concept). We propose here a space-based supernova survey that could plausibly be implemented on the EUCLID project, provided a filter wheel is accommodated into the visible camera. For the first DUNE concept, suppressing the filter wheel had been considered and had a negligible impact on the mission cost and complexity. The supernova survey forecast we present here relies on the experience gained in analysing the ground-based SNLS survey (Guy et al. 2010; Conley et al. 2010).

We will first describe the instrument suite we simulate. We follow in Sect. 3 with the proposed supernova survey. The proposed methodology for the analysis and the associated Fisher matrix are the subjects of Sect. 4. Our results are presented in Sect. 5. We discuss in Sect. 5.2 how the key issues related to distance biases might be addressed. We summarise in Sect. 6.

2. Instrument suite

In its current design, EUCLID is equipped with a visible and a NIR imager (Laureijs 2009). (It also carries a slitless NIR spectrograph, but the SN survey we discuss here does not rely on it.) We assume that both imagers simultaneously observe the same part of the sky through a dichroic; if they were instead observing contiguous patches, this would only marginally affect the efficiency of the proposed surveys.

2.1. General features

We assume the following figures and features:

- The primary mirror diameter is 1.2 m, with a central occultation of 0.5 m.

- Both the visible and NIR imagers cover 0.5 deg². The survey efficiency driver is the field of view of the NIR imager.

- The pixel sizes are 0.1″ for the visible channel and 0.3″ for the NIR channel.

- We assume a quantum efficiency of 0.8 for the NIR detectors. The CCD sensors for the visible channel are assumed to have a quantum efficiency peaking at 0.93 at 600 nm, and falling to 0.5 at 900 nm. The red sensitivity is probably conservative.

- We assume that the NIR imager has a 20 electrons read-out noise and a 0.1 el./s/pix dark current. Both numbers are not particularly optimistic.

- The visible imager has a 4 electrons read-out noise and a 0.002 el./s/pix dark current. Both have a negligible impact on broad-band photometry of faint sources.

- At variance with the current EUCLID design, we assume that both imagers are equipped with filters, with a filter changing device such as a filter wheel.

- The filters transmit at most 90% of the light, and the optics transmission (excluding filter and CCD) is about 80% at 1.2 μm.

- The image quality (IQ) is due to two components: one independent of wavelength equal to 0.17″ (FWHM) and a diffraction contribution for a 1.2 m mirror. Within this model, the FWHM IQ increases from 0.2″ at 0.5 μm to 0.35″ at 1.5 μm. The contribution of pixel spatial sampling will be considered later.

- The light background is taken from Leinert et al. (1998). We assume that the survey takes place at 60 degrees of ecliptic latitude, which corresponds roughly to a 20% increase of background light with respect to the ecliptic pole.
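The two-component image quality model in the list above can be sketched numerically. This is a minimal illustration, not the authors' code: it assumes that the two components add in quadrature and that the diffraction width is 1.22 λ/D (both are assumptions of this sketch, as is the function name `iq_fwhm_arcsec`). Under these assumptions the model gives about 0.20″ at 0.5 μm and about 0.36″ at 1.5 μm, close to the values quoted.

```python
import math

ARCSEC_PER_RAD = 180.0 * 3600.0 / math.pi


def iq_fwhm_arcsec(wavelength_um, mirror_diameter_m=1.2,
                   constant_term_arcsec=0.17, diffraction_factor=1.22):
    """Toy image-quality model (FWHM, arcsec).

    Assumptions of this sketch (not stated in the text):
    - the diffraction width is diffraction_factor * lambda / D,
    - the two components add in quadrature.
    """
    wavelength_m = wavelength_um * 1e-6
    diffraction = (diffraction_factor * wavelength_m / mirror_diameter_m
                   * ARCSEC_PER_RAD)
    return math.hypot(constant_term_arcsec, diffraction)


for lam in (0.5, 1.0, 1.5):
    print(f"{lam:.1f} um -> {iq_fwhm_arcsec(lam):.2f} arcsec")
```

The central occultation of the mirror is ignored here; it would modify the diffraction pattern but is not needed to recover the quoted orders of magnitude.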

|

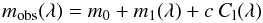

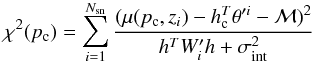

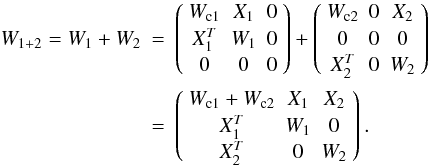

Fig. 1 Overall transmission of the bands of both imaging systems as they are simulated for this study. The EUCLID baseline set consists of 3 NIR filters (as assumed here) and a single “r + i + z” visible filter. |

2.2. Filter set

Cosmological constraints using supernovae derive from comparing measurements across redshifts. In order to make the comparison as robust as possible, we propose a filter set logarithmic in wavelength, with 7 bands: 4 bands in the visible channel, corresponding roughly to g,r,i and z from the SDSS system (Fukugita et al. 1996), and 3 bands on the NIR channel roughly matching y, J and H. The NIR filters are already included in the EUCLID concept, but the visible filters are not. The whole filter set covers the [450,1660] nm interval, with the dichroic split at 950 nm. The overall transmission of the simulated bands is displayed in Fig. 1. The sky brightness we assume in our simulations and the collecting power of the instrument (provided as magnitude zero-points) can be found in Table 1.

Table 1. Characteristics of the bands considered in this work.

3. Characteristics of the supernova survey

Supernovae are transient events and the primary distance indicator is the amplitude of the light curve(s), which we assume to be measured via broad-band imaging. In order to properly sample supernova light curves, one needs to repeatedly image the same pointings, in as many bands as possible. We require that the rest frame wavelength region from 4000 to 6800 Å is measured for all events, and sampled by (at least) three filters: this roughly corresponds to measuring the B,V and R (rest frame) standard bands. Current distance estimators only require two bands (they can of course accommodate more bands). Requiring three bands will either allow one to use more elaborate distance estimators, or to use the built-in redundancy to compare derived distances. Requiring that the same rest frame wavelengths are measured at all redshifts makes the comparison of supernova brightnesses as independent as possible from a supernova model. Although we require three bands to measure a distance, the observing strategy proposed below provides us with more than three bands at all redshifts. The duration of the survey is arbitrarily chosen as 1.5 years, i.e. about one third of a five-year mission. This range of survey time provides O(10^4) events, i.e. an order of magnitude more than current cosmological samples (e.g. Amanullah et al. 2010). Alterations of the survey duration will be considered in Sect. 5.1.

3.1. Photometric precision requirements

The depth of the observations is driven by the maximum target redshift, and by three requirements: (1) the measurements should be accurate enough so that the distance estimate has a measurement uncertainty that remains significantly smaller than the observed scatter of the Hubble diagram (namely around 0.15 mag for modern surveys, see e.g. Guy et al. 2007; Kessler et al. 2009; Guy et al. 2010; Conley et al. 2010); (2) the same supernova parameter space should be observable at all redshifts in order to avoid the shortcomings of Malmquist bias corrections; (3) the amplitude of the three bands roughly matching rest frame B,V,R bands should be measured at an accuracy better than or comparable to the measured “colour smearing”, namely about 0.025 mag, see Guy et al. (2007, 2010). Colour smearing2 refers to the spread of colour-colour relations of SNe Ia: it is the scatter of single-band light curve amplitudes one has to add to measurement uncertainties in order to properly describe the observed spread of colour-colour relations of SNe Ia. One can for example model the rest frame V − R colour from B − V. The scatter of this colour–colour relation is properly described by assuming that the B,V and R peak magnitudes scatter independently by σc ≃ 0.025 mag around a two-parameter model. One can estimate the colour smearing as a function of wavelength (Guy et al. 2010), and find that the B,V,R region is less scattered than bluer bands. The colour smearing contributes to the Hubble diagram scatter, but does not account for the entirety of the measured ~0.15 mag r.m.s.

In practice, the requirement (2) about Malmquist bias indicates that events one magnitude fainter than the average at the highest redshift should be easily detected. We find that requirement (3) is the most demanding, and the survey setup we present later fulfils these three requirements.

3.2. Redshifts

Supernova redshifts are obviously needed to assemble a Hubble diagram. Obtaining SNe Ia spectra from the ground is technically feasible up to z ~ 1. At higher redshifts, the required exposure times increase very rapidly with redshift because the SNe Ia flux decreases rapidly in the near UV (bluer than ~3600 Å), and the atmosphere glow increases rapidly towards the red. A large spectroscopic follow-up of supernovae at z > 1 is hence out of reach of current ground-based instruments, and even if adaptive optics, OH suppression and larger telescopes will certainly help very significantly, massive statistics will likely remain out of reach during at least the next decade.

Since acquiring a spectrum for each supernova was a core goal of the proposed SNAP concept3, its baseline design included a high-throughput low-resolution spectrograph. In this concept, the supernova spectroscopy requirements drove the mirror size and limited the statistics to O(2000) supernovae, because of the long exposures required for spectroscopy of distant supernovae.

We believe that obtaining supernova spectra one at a time with a small field instrument is not a realistic goal for O(10^4) events, especially if aiming at redshifts beyond unity. Wide field space-based slitless spectroscopy may be considered, but the S/N ratio of slitless spectra is naturally poor, because every pixel integrates the sky background spectrum in the whole bandwidth: spectra at a modest magnitude H ~ 24 seem out of reach of the EUCLID slitless spectrograph. Space-based spectroscopy with synthetic slits (as originally proposed for the SPACE project, later merged with DUNE into EUCLID) is significantly more sensitive, and for a supernova program on a joint imaging-spectroscopy-with-slits mission (such as the original EUCLID concept), one should obviously consider obtaining supernova spectra in parallel with repeated imaging.

So, for O(10^4) supernovae, we should consider the case where supernova spectra cannot be acquired for all events. Since the Hubble diagram obviously requires redshifts, we consider the following alternatives:

- Acquiring host galaxy spectra “after the fact” using wide field spectroscopy. The target density would be below 500 deg⁻² and hence perfectly suited to the multi-fiber spectrographs being considered today (WFMOS and BigBoss are typical examples). For the redshift range and area we will consider below, this would typically require a few hundred hours of integration on a 4-m class telescope, which seems acceptable, thanks to the multiplex factor.

- Relying on photometric redshifts of host galaxies, using both visible and NIR bands. This would cause a loss of accuracy of cosmological parameters. However, one would still have to collect a sample of spectroscopic redshifts of faint galaxies in order to train the photometric redshift algorithms.

- Relying on photometric redshifts of supernovae. These are now known to be more accurate than photometric redshifts of host galaxies (Palanque-Delabrouille et al. 2010; Kessler et al. 2010a), thanks to the homogeneity of the events. However, this approach weakens the identification step since the redshift will minimise the difference between measurements and expectations for a Type Ia supernova. It also introduces correlated uncertainties between distance and redshift which would require a careful study.

We assume in what follows that supernovae have high quality redshifts, i.e. that spectroscopic redshifts of host galaxies are acquired. From here on, we will allow for a conservative 20% loss in supernovae statistics due to this process.

3.3. Why space?

Two aspects favour a space-based supernova survey: first, ground-based surveys suffer from variations of atmospheric transparency and image quality across observing epochs. This causes photometric uncertainty floors which can be overcome from space. Second, photometry of faint sources in the NIR from the ground is notoriously difficult and requires extreme exposure times on 8-meter class telescopes, because of the bright atmospheric glow. The “1 μm barrier” practically limits supernova ground-based surveys to z = 1 or significantly below, if one imposes that supernovae at all redshifts are measured in the same rest frame wavelength range. Space offers NIR photometry with sensitivities similar to the visible range, and hence opens supernova surveys to the z > 1 range. Accessing NIR bands also improves supernova surveys in two respects: it allows one to measure objects on a large wavelength lever arm, which is mandatory to characterise their colour variations. It also allows one to measure distances to supernovae using redder bands than allowed from the ground (we will consider rest frame I band in Sect. 3.6), which limits the effects of colour variations among the sample. Finally, accumulating deep NIR photometry of galaxies improves galaxy typing and photometric redshifts (over visible-only measurements); the latter may be useful even for supernova cosmology if obtaining spectroscopic redshifts of the supernova hosts turns out to be impractical.

3.4. Supernova surveys cadence and coverage

The imaging survey should be run in “rolling search mode” where a given patch of the sky is observed repeatedly, in order to discover and measure variable objects. The required wavelength range to cover is bound to the redshift range aimed at: in order to derive distances as independent as possible of any supernova model, the same rest frame spectral region should be used to derive distances at all redshifts.

Monitoring a single cone at the depth required at the highest redshift of the survey delivers a supernova redshift distribution where moderate and low redshifts are essentially missing. We hence propose a two-cone approach: a deep survey of 10 deg² up to z = 1.55, and a wide survey of 50 deg² up to z = 1.05. We consider different area ratios in Sect. 5.1. The deep survey typically requires integrations 4 times longer than the wide. Note that both surveys are on purpose volume limited in order to avoid the shortcomings of selection bias corrections.

We simulate a survey duration of 1.5 years of calendar time, with the wide and deep survey observations interleaved. The footprint of the deep survey is assumed to be outside the wide, so that its low redshift sample (where statistics are precious) adds to that of the wide. We draw the simulated samples from a measured SNe Ia volumetric rate as a function of redshift (Ripoche 2008; see also Perrett et al. 2010), which may be parametrised as

$$ R(z) = 1.53\times 10^{-4} \left[ (1+z)/1.5 \right]^{2.14} h_{70}^3\ \mathrm{Mpc^{-3}\ yr^{-1}} $$

where years should be understood in the rest frame. Since these measurements stop around z = 1 and rates at larger redshifts are highly uncertain, we assume that the volumetric rate remains constant above z = 1 (to z = 1.5). The rates proposed in Mannucci et al. (2007) (accounting for events “lost to extinction”) yield statistics (to z = 1.5) ~25% larger than what we simulate, with a similar redshift distribution. Our simulation accounts for “side effects” by requiring that all epochs corresponding to rest frame phases from −15 to +30 days from maximum light are measured. As a consequence the event statistics increase if the survey monitors smaller areas over a longer period, with a constant total observing time. The accuracies we discuss later ignore 20% of the events, in order to allow for losses in the measurement process (failures to obtain redshifts for example). Accounting for these losses, the deep and wide surveys deliver about 4000 and 9000 events respectively. The simulated statistics are provided as a function of redshift in Table 2. Events at higher redshifts can be detected but they fail the quality cuts for deriving distances.
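The rate parametrisation and the phase-coverage requirement can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' simulation: the function names are hypothetical, and the treatment of the survey edges (an event counts only if its peak falls at least 15(1 + z) observer days after the survey start and 30(1 + z) observer days before its end) is one plausible reading of the requirement above.

```python
def snia_rate(z):
    """Volumetric SN Ia rate in h70^3 Mpc^-3 yr^-1 (rest-frame years).

    Follows the parametrisation in the text, held constant above z = 1.
    """
    z_eff = min(z, 1.0)
    return 1.53e-4 * ((1.0 + z_eff) / 1.5) ** 2.14


def usable_time_fraction(z, survey_days=1.5 * 365.25,
                         phase_min=-15.0, phase_max=30.0):
    """Fraction of the survey during which a SN at redshift z can peak
    and still have rest-frame phases phase_min..phase_max observed.

    Assumption of this sketch: the lost time is the rest-frame phase
    window stretched by time dilation, (phase_max - phase_min) * (1 + z).
    """
    lost_days = (phase_max - phase_min) * (1.0 + z)
    return max(0.0, 1.0 - lost_days / survey_days)


for z in (0.5, 1.0, 1.5):
    print(f"z={z:.1f}  rate={snia_rate(z):.3e}  "
          f"usable fraction={usable_time_fraction(z):.2f}")
```

With this reading, doubling the survey duration at fixed total observing time (hence halving the area) raises the usable fraction at every redshift, which is the trend described in the text.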

Table 2. Expected number of SNe Ia events in a 1.5-year survey.

We settle for a cadence of 5 observer days, but the photometric accuracies that matter to derive distances do not depend on this choice at first order, since those mainly depend on the overall integrated light. However, a significantly coarser sampling might compromise photometric identification. The integration times per filter at each epoch are provided in Table 3 and were chosen in order to provide a measurement of light curve amplitudes to a precision of 2.5% rms on average at the highest redshifts of each survey.

Table 3. Integration times (in seconds) per visit.

In 5 days of calendar time, the total exposure times amount to 56 and 40 h for the wide and deep surveys respectively, which corresponds to 80% of the 120 h available.
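The time budget above can be cross-checked with simple arithmetic. The sketch below assumes that each survey area is tiled by non-overlapping pointings of the 0.5 deg² field of view and that overheads are ignored (both are assumptions of this sketch, as are the variable names). It recovers the 80% occupancy and a deep-to-wide integration ratio of about 3.6, in line with the "4 times longer" quoted in Sect. 3.4.

```python
FIELD_OF_VIEW_DEG2 = 0.5   # imager field of view per pointing (Sect. 2.1)
CADENCE_HOURS = 5 * 24     # one cadence period of 5 observer days

# Areas and exposure totals per cadence period, as quoted in the text.
SURVEYS = {
    "wide": {"area_deg2": 50.0, "hours_per_cadence": 56.0},
    "deep": {"area_deg2": 10.0, "hours_per_cadence": 40.0},
}


def seconds_per_pointing(survey):
    """Total exposure per pointing and per visit, ignoring overheads."""
    n_pointings = survey["area_deg2"] / FIELD_OF_VIEW_DEG2
    return survey["hours_per_cadence"] * 3600.0 / n_pointings


occupancy = sum(s["hours_per_cadence"] for s in SURVEYS.values()) / CADENCE_HOURS
wide_s = seconds_per_pointing(SURVEYS["wide"])
deep_s = seconds_per_pointing(SURVEYS["deep"])

print(f"occupancy = {occupancy:.0%}")
print(f"wide: {wide_s:.0f} s per pointing, deep: {deep_s:.0f} s per pointing")
print(f"deep / wide = {deep_s / wide_s:.2f}")
```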

Both surveys are conducted in 7 bands, covering g to H (450 < λ < 1700 nm). One may argue that the bluest bands of the deep survey and the reddest bands of the wide survey are not strictly needed to measure supernovae distances, but since visible and NIR observations are assumed to happen in parallel, dropping blue visible bands for the deep survey, or red NIR bands for the wide survey does not save any observing time.

Note that we have not discussed the collection of a nearby sample (typically O(1000) events at 0.03 < z < 0.1). As a baseline, and at variance with the SNAP project, we stick to a self-contained imaging survey, in order to realistically limit cross-calibration and detection bias issues.

3.5. Identification of SNe Ia and contamination

SNe Ia exhibit reproducible rest frame colours (σ(B − V) ≃ 0.1, see e.g. Fig. 8 of Astier et al. 2006), and even more reproducible colour relations. For example, the rest frame U-band amplitude can be predicted to better than ~0.04 from B- and V-bands (Astier et al. 2006). SNe Ia not only occupy narrow subspaces of multicolour spaces, but also exhibit very reproducible light curve shapes that make it possible to discriminate against most of the core-collapse events (Poznanski et al. 2002; Johnson & Crotts 2006; Rodney & Tonry 2009). Studies of the photometric selection of SNe Ia have been conducted on the SNLS data and their preliminary conclusions are encouraging (Ripoche 2007; Bazin 2008). These studies rely on host galaxy photometric redshifts and most of their identification failures are due to wrong assumed redshifts. The supernova survey we are considering here would be in a more favourable situation than these studies: it measures 7 bands (whilst SNLS has at most 4), and we assume that host galaxy spectroscopic redshifts will be available (whilst SNLS studies used host galaxy photometric redshifts).

In order to estimate the contamination by core-collapse supernovae in a sample selected in colour–colour subspaces, we would need a large enough sample of multi-band measurements of such events. These should soon be available, thanks at least to the SDSS supernova survey, the Lick Observatory Supernova Search, and the Palomar Transient Factory, but they are not available yet. Therefore, in order to bound the impact of core-collapse contamination on the SNe Ia distance-redshift relation, we resort to studying how clipping around the Hubble line rejects other supernova types, following Conley et al. (2010).

Core collapse supernovae are classified in Type Ib and Ic, and Type II. Type Ib and Ic events are often merged into a “Ibc” type (see e.g. Richardson et al. 2002; Li et al. 2010). Type II events are about 3 times more frequent than Ibc (Li et al. 2010, Fig. 9), but two thirds of those are Type II-plateau (II-p) which are easily identified from their very flat light curves. Other Type II events represent about the same rate as Ibc, but their light curves rise in a few days, whereas SNe Ia rise in more than 15 days. So, Type II events might add a small contribution to Ibc interlopers, and we will now concentrate on evaluating the impact of a Ibc contamination in the Hubble diagram.

We model the Ibc population absolute magnitude distribution as a Gaussian offset by Δbc from the Ia population with rms σbc. We expect the rate of Ibc to be proportional to the star formation rate, that we take from Hopkins & Beacom (2006), and the amount of Ibc events follows from fbc(z = 0), the ratio of the Ibc rate to the Ia rate at z = 0. The adopted Ia rate was presented in Sect. 3.4.

We simulate a mix of Ia and Ibc events, fit the Hubble diagram with a sixth degree polynomial, and iteratively clip events beyond 3σ from the fit and refit, until no event is clipped. We simulate Ia events with a Gaussian scatter of 0.15 mag around the Hubble line. For Ibc events, it is unclear how applying the brighter-slower and brighter-bluer corrections derived for SNe Ia would affect their absolute magnitude distribution. We might guess that some part of their brightness scatter is due to extinction in their host galaxy, and that brighter-bluer corrections would narrow their distance modulus distribution. We will consider below two estimates of the Ibc brightness scatter, one as observed and one significantly lower. Note that Richardson et al. (2002) estimate an intrinsic magnitude scatter corrected for host galaxy extinction which differs little from raw estimates. The contamination depends on the bright end of the Ibc luminosity function which is not well constrained: Richardson et al. (2002) propose a distribution extending well beyond the Ia average brightness, while the brightest Ibc event of Li et al. (2010) is 0.5 mag fainter than the average Ia. Both results are however compatible at the ~10% CL, given the modest statistics involved4. We propose three scenarios for the Ibc population, each with two values for σbc, detailed in Table 4:

- (R02) the Ibc population in Richardson et al. (2002) amounts to about 16% of the SNe Ia, is fainter by 1.4 mag than SNe Ia and has a rms scatter of 1.4 mag.

- (R02 bright) Richardson et al. (2002) see a mild indication of a bright component of Ibc, representing about 5% of the SNe Ia.

- (L10) Li et al. (2010) have a much more complete survey and find a volumetric rate of Ibc which is about 80% of the Ia rate. Their average Ibc is fainter by 2.4 mag than their average Ia with an r.m.s of 1.2 mag. As we just noted, the magnitude distribution of the measured sample does not contain Ibc events under the Ia peak.
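The clipping procedure described before the scenario list can be sketched on a toy sample. This is an illustration under explicit assumptions, not the authors' simulation: it works with residuals to a fiducial Hubble line, draws redshifts uniformly, takes σ as the r.m.s. of the currently kept residuals, and uses the "R02" numbers with an Ibc-to-Ia ratio growing as (1 + z). The function name `clip_hubble_residuals` is hypothetical.

```python
import numpy as np


def clip_hubble_residuals(z, resid, degree=6, nsigma=3.0):
    """Fit resid(z) with a polynomial, clip beyond nsigma, refit,
    and stop when no further event is clipped."""
    keep = np.ones(z.size, dtype=bool)
    while True:
        coeffs = np.polyfit(z[keep], resid[keep], degree)
        delta = resid - np.polyval(coeffs, z)
        sigma = delta[keep].std()  # assumption: sigma = rms of kept events
        new_keep = keep & (np.abs(delta) < nsigma * sigma)
        if new_keep.sum() == keep.sum():
            return coeffs, keep
        keep = new_keep


rng = np.random.default_rng(1)

# SNe Ia: 0.15 mag Gaussian scatter around the (fiducial) Hubble line.
n_ia = 20000
z_ia = rng.uniform(0.1, 1.5, n_ia)  # toy redshift distribution
res_ia = rng.normal(0.0, 0.15, n_ia)

# Ibc interlopers ("R02"): 1.4 mag fainter, 1.4 mag rms, ratio 0.16 * (1 + z).
f_bc0, delta_bc, sigma_bc = 0.16, 1.4, 1.4
z_cand = rng.uniform(0.1, 1.5, int(n_ia * f_bc0 * 2.5))
z_bc = z_cand[rng.uniform(size=z_cand.size) < (1.0 + z_cand) / 2.5]
res_bc = rng.normal(delta_bc, sigma_bc, z_bc.size)

z = np.concatenate([z_ia, z_bc])
resid = np.concatenate([res_ia, res_bc])
is_ia = np.arange(z.size) < n_ia

coeffs, keep = clip_hubble_residuals(z, resid)
ia_kept = keep[is_ia].mean()
bc_kept = keep[~is_ia].mean()
print(f"Ia kept: {ia_kept:.3f}, Ibc kept: {bc_kept:.3f}")
```

In this toy setup almost all Ia events survive while most Ibc events are rejected, which is the qualitative behaviour the text relies on; the residual bias is carried by the Ibc events that remain inside the clipping window.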

Contamination affects cosmology by biasing the average distance modulus. However, a redshift independent bias has no effect on cosmology, and we report in Table 4 the slope of the bias as a function of redshift dδμ/dz, which fairly describes most of the effect.

Table 4. Various scenarios considered for the Ibc contamination.

We find that under the three scenarios, the effect on cosmology is small. Even in our “L10” scenario, where the contamination is suspiciously large compared to the fraction of Ibc identified by high redshift SNe Ia surveys, the effect on cosmology remains below the systematic uncertainty σ(eM) = 0.01 (defined later in Eq. (5)) that we will consider as a baseline. The clipping process has a negligible impact on the SNe Ia statistics. A rough estimate of the size of the effect we find can be readily computed, for e.g. the first line of the table: the average offset of Ibc within the Ia ± 3σ window is 0.047, the fraction of the Ibc population within the same window amounts to ~20%, the Ibc to Ia number ratio at z = 0 is assumed to be 0.16, and it increases with redshift as ~(1 + z). The evolution of the Ibc induced distance bias reads 0.047 × 0.2 × 0.16 = 0.0015.
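The rough estimate above can be reproduced with a truncated Gaussian. The sketch below assumes the "R02" numbers (Ibc fainter by 1.4 mag with 1.4 mag rms) and a clipping window of ±3 × 0.15 mag centred on the Ia Hubble line; the function names are hypothetical. Under these assumptions the mean Ibc offset inside the window comes out near 0.047 mag and the fraction of Ibc inside the window near 16%, the same order as the ~20% quoted.

```python
import math


def norm_pdf(x):
    """Standard normal probability density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def norm_cdf(x):
    """Standard normal cumulative distribution."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def ibc_in_window(delta_bc=1.4, sigma_bc=1.4, sigma_ia=0.15, nsigma=3.0):
    """Fraction and mean offset of a Gaussian Ibc population falling
    inside the +/- nsigma * sigma_ia window around the Ia Hubble line."""
    lo = (-nsigma * sigma_ia - delta_bc) / sigma_bc
    hi = (nsigma * sigma_ia - delta_bc) / sigma_bc
    fraction = norm_cdf(hi) - norm_cdf(lo)
    # Mean of a Gaussian truncated to [lo, hi] (in standardised units).
    mean_offset = delta_bc + sigma_bc * (norm_pdf(lo) - norm_pdf(hi)) / fraction
    return fraction, mean_offset


fraction, mean_offset = ibc_in_window()
bias_slope = mean_offset * fraction * 0.16  # times the Ibc/Ia ratio at z = 0
print(f"fraction in window = {fraction:.3f}")
print(f"mean offset in window = {mean_offset:.3f} mag")
print(f"bias slope ~ {bias_slope:.4f} mag per unit redshift")
```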

One might argue that a Gaussian distribution is inadequate to describe the tails of the Ibc distribution, but more populated tails also exhibit shallower slopes, and both alterations have a tendency to cancel each other. Note that our estimates ignore the potential rejection from light curve shapes and colours of Ibc events, which is certainly a conservative assumption.

Our results might look at odds with other attempts. Homeier (2005) follows a similar approach and finds sizable effects on cosmology, and we attribute the difference to the absence of clipping. More recently, Kessler et al. (2010b) proposed a supernova photometric identification challenge. The provided simulated sample contains a large fraction of events for which the phase coverage and the signal to noise ratio are inadequate for a distance measurement, even if they were genuine Type Ia events. In our simulation, such events are excluded a priori and do not count as identification failures. We also benefit in our simulation from assuming spectroscopic redshifts as this reduces the Hubble diagram scatter. Regarding the identification using colours, Type Ia events were generated in Kessler et al. (2010b) with a colour smearing of 0.1 mag, which we now know to be about 4 times too large, and leads to overestimating mis-identifications.

We eventually ignore the efficiency loss due to photometric selection, and integrate the effects of possible contaminations into a drift of absolute magnitude (see Sect. 4.3.4 and Eq. (5)). Note that if spectroscopic redshifts of host galaxies are acquired and the wrong host is assigned to a supernova, this event will likely fail the photometric typing cuts and hence will not pollute the Hubble diagram.

3.6. Rest frame I-band Hubble diagram

The wide survey provides the possibility of constructing a rest frame I-band Hubble diagram out to z ≃ 0.9, with about 7000 events. Encouraging cosmological results were recently obtained from rest frame I-band measurements from the ground (Freedman et al. 2009). I-band distances have a smaller contribution of colour to the distance estimate than B-band distances, and are hence significantly more robust to systematics related to colour modelling and calibration. They also exhibit a smaller scatter. Measuring distances to the same events using independent measurements constitutes a very appealing test of the methods and possible biases. It seems unlikely that collecting full rest frame I-band light curves from the ground for an O(1000) event sample reaching z = 0.9 will become feasible in the next decade. In what follows, we conservatively do not include the cosmological constraints that could be obtained from this second built-in supernova survey.

4. Methodology

4.1. Point source photometry

In deriving the photometric accuracy of a measurement, we assume that the photometry of supernovae is carried out using PSF photometry5. The pixel sampling is accounted for, and we checked that the position of the source centre within a pixel does not significantly change the signal to noise ratio. For most of our supernova measurements, the contribution of the shot noise from the source itself is not negligible.

4.2. Light curve fitter training

We use the SALT2 model (Guy et al. 2007) to generate light curves. In this framework, light curves are described by 4 parameters: a date of maximum light (in B-band), an overall amplitude of the light curve X0, an X1 parameter closely related to stretch factor or decline rate, and a colour at B maximum. Since light curve shapes are not derived (yet) from explosion models, the light curve models rely on a training sample, and then reflect the quality of this training sample. Since we should aim at obtaining a supernova sample significantly larger and of better quality than before, the sample itself should be used to train the light curve fitter. This self-training procedure is only possible for light curve fitters which do not provide distance estimates, such as SALT and SALT2 (Guy et al. 2005, 2007) and SIFTO (Conley et al. 2008). Those do not assume any relation between redshift and brightness and only predict light curve shapes in observer bands and flux ratios between bands or epochs. They leave to a later stage the event parameter combination that will be used as a distance estimator. Supernova model training causes specific uncertainties: model noise due to the finite training sample and the calibration uncertainties of the latter, and a specific distance uncertainty pattern due to self-training.

SNe Ia exhibit a variety of rest frame colours, but different colours of the same event seem closely related. To account for correlated colour variability, one can take guidance from light extinction (by e.g. dust) and model the observed magnitude (for example at time of maximum B light) as:

$$ m(\lambda) = m_0 + m_1(\lambda) + c\, C_l(\lambda) $$ (1)

where m0 and c vary from event to event, whilst the “naked” flux m1 and the colour law Cl constitute the model (supposedly for all events). We can choose the scaling of Cl so that c = (B − V)max + const. Cl (called the “colour law” in the SALT2 parlance) determines the colour relations, e.g. the slopes measured in rest frame colour-colour planes. When a similar model is developed for extinction by dust, the equivalent of the Cl function is determined from data. For example Cardelli et al. (1989) propose a one-parameter family of extinction laws commonly called “Cardelli laws”. For a supernova model, Cl can also be extracted from data, however up to an arbitrary constant which does not affect colour-colour positions6. Guy et al. (2005, 2007) derive Cl for SNe Ia and find it significantly different from Cardelli laws. Similarly, the SNe rest frame colour relations (e.g. U − B vs. B − V) found in Conley et al. (2008) are different from the ones expected from Cardelli laws.

The Cardelli laws not only predict colour-colour slopes, but they also relate colour variations to total extinction. Extinction causes a brighter-bluer relation, characterised by RB = 4.1 on average for the Milky Way dust. The brighter-bluer relation can again be measured for supernovae (by minimising the Hubble diagram residuals), and one finds a value around 3.2 (Guy et al. 2010), significantly smaller than 4.1. We will come back to this point in Sect. 4.3.4.

Since both SNe colour relations and total to selective extinction point away from extinction laws for Milky Way dust, we should make provision in the survey design for studying both aspects using supernovae, and in particular be in a position to measure both colour relations and the brighter-bluer correlation without any assumption or prior. It is essential not to restrict the possibilities of SN colour variations to those described by the Cardelli laws. Even if dust extinction parametrisations adequately described supernova colour relations, the known variations of dust properties would still require precise supernova measurements to define which dust is in action: the fact that “regular” dust is unlikely to be the first cause of supernova colour variations does not really change the requirements of a supernova survey. The causes of SNe Ia colour variability are unclear, and collecting as many colours as possible for each SN event will likely improve our understanding of these matters.

As noted above, the data scatter around these empirical models of SNe Ia colour relations beyond measurement uncertainties (Guy et al. 2007, 2010). We referred to this extra noise as colour smearing and used a value of σc = 0.025 mag to set the depth of the observations. This finite colour smearing might indicate a fundamental limitation of extinction-inspired models to describe SNe Ia colour variability.

4.3. Fisher Matrix

We want to forecast uncertainties of cosmological parameters expected from various survey scenarios, and various hypotheses regarding sources and size of systematic uncertainties. The Fisher matrix framework is sufficient for our purpose.

Sources of uncertainties can be regarded either as extra noise or as extra parameters, and the choice is only a matter of convenience, as discussed on a practical example in Appendix A. We settle for adding parameters corresponding to uncertainty sources, and consider 6 parameter sets:

1. the cosmological parameters;
2. the parameters of the SN events themselves;
3. parameters describing the (rest frame) colours of an average supernova;
4. parameters describing the colour law, i.e. how these colours change from event to event;
5. parameters describing the brighter-slower and brighter-bluer relations, and the intrinsic brightness of a supernova;
6. the photometric zero-points of the light curve measurements, i.e. how instrumental fluxes are converted to physical fluxes.

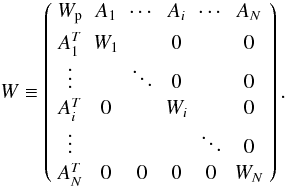

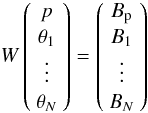

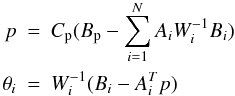

We use least squares estimators. Usually, supernova cosmology fits are carried out in two successive steps: the measured light curves are first fitted to yield the light curve parameters, and the cosmology is then fitted using these light curve parameters (and their uncertainties). We use here a mathematically equivalent approach where a single simultaneous fit considers all parameters at once. Appendix A discusses why these two approaches are strictly equivalent. We settle here for the simultaneous fit because the same events are assumed to be used both to train the light curve fitter and to measure cosmology. In particular, photometric calibration uncertainties affect cosmology both directly and through the light curve fitter training. Within a simultaneous fit, propagating the uncertainties (with correlations between events) does not require any particular care. One obvious drawback of the simultaneous fit is that considering the supernova event parameters on the same footing as all the other ones considerably increases the size of the least-squares problem and uncomfortably lengthens the required matrix inversion (as experienced in Kim & Miquel 2006). In Appendix B, we describe how we take advantage of the specific structure of our least squares problem to rephrase the linear algebra using only small-sized matrices.
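The statement that an uncertainty source can be treated either as extra noise or as extra parameters can be checked numerically on a toy linear model. The sketch below is not the code used for this forecast: the derivative matrices `A` and `B`, the noise level and the prior width are arbitrary illustrative assumptions. It compares the parameter covariance obtained by marginalising a full Fisher matrix (nuisance parameters with a Gaussian prior) with the one obtained by folding the same nuisance into a correlated noise covariance.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy sizes and values (assumptions made for illustration only)
n_meas, n_par, n_nuis = 40, 3, 2
A = rng.normal(size=(n_meas, n_par))    # d(data)/d(parameters of interest)
B = rng.normal(size=(n_meas, n_nuis))   # d(data)/d(nuisance), e.g. zero-points
N = np.diag(np.full(n_meas, 0.05**2))   # measurement noise covariance
P = np.diag(np.full(n_nuis, 0.01**2))   # Gaussian prior covariance on nuisance

# (a) Nuisance as extra parameters: full Fisher matrix, prior added to the
#     nuisance block, then marginalisation by inverting the whole matrix.
W = np.linalg.inv(N)
F = np.block([[A.T @ W @ A, A.T @ W @ B],
              [B.T @ W @ A, B.T @ W @ B + np.linalg.inv(P)]])
cov_extra_params = np.linalg.inv(F)[:n_par, :n_par]

# (b) Nuisance as extra noise: the same source enters the data covariance.
C = N + B @ P @ B.T
cov_extra_noise = np.linalg.inv(A.T @ np.linalg.inv(C) @ A)

same = np.allclose(cov_extra_params, cov_extra_noise, rtol=1e-6, atol=1e-12)
print("covariances agree:", same)
```

The agreement follows from the Woodbury identity, so the choice between the two descriptions is indeed one of convenience. Keeping the sources as explicit parameters makes it easy to switch individual priors on and off.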

We now enter into the details of the chosen parametrisations.

4.3.1. Dark energy parameters

We follow the commonly used equation of state (EoS) effective parametrisation due to Chevallier & Polarski (2001): w(z) = w0 + wa z/(1 + z).
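As an illustration of how this parametrisation enters distances, the following minimal sketch evaluates w(z), the corresponding dark energy density evolution, and a dimensionless luminosity distance in a flat universe. It is a sketch under stated assumptions rather than the code used here: the function names are hypothetical, radiation is neglected, and the integral uses a simple trapezoidal rule.

```python
import numpy as np

def w_cpl(z, w0=-1.0, wa=0.0):
    """Equation of state w(z) = w0 + wa z/(1+z)."""
    return w0 + wa * z / (1.0 + z)

def de_density_ratio(z, w0=-1.0, wa=0.0):
    """rho_DE(z)/rho_DE(0), from integrating 3 (1 + w) / (1 + z) over z."""
    return (1.0 + z) ** (3.0 * (1.0 + w0 + wa)) * np.exp(-3.0 * wa * z / (1.0 + z))

def lum_dist_flat(z, om=0.27, w0=-1.0, wa=0.0, n=2001):
    """H0 d_L / c for a flat universe (matter + dark energy, radiation neglected)."""
    zz = np.linspace(0.0, z, n)
    inv_e = 1.0 / np.sqrt(om * (1.0 + zz) ** 3
                          + (1.0 - om) * de_density_ratio(zz, w0, wa))
    # trapezoidal rule for the comoving distance integral
    comoving = np.sum(0.5 * (inv_e[1:] + inv_e[:-1]) * np.diff(zz))
    return (1.0 + z) * comoving

# Distance modulus difference at z = 1 between two equations of state
d_ref = lum_dist_flat(1.0)
d_alt = lum_dist_flat(1.0, w0=-0.9, wa=0.3)
delta_mu = 5.0 * np.log10(d_alt / d_ref)
print("delta mu at z=1:", delta_mu)
```

The default ΩM = 0.27 matches the fiducial model used in Sect. 5; the alternative (w0, wa) values are arbitrary.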

4.3.2. Event parameters

We use the SALT2 parameters: an overall amplitude, a parameter very close to stretch-factor, a date of maximum (B-band) light, and a rest frame colour at maximum.

4.3.3. Average magnitude of a supernova and colour law

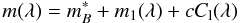

Following the SALT2 model and Eq. (1), we model the peak magnitudes of supernovae as:

$$ m(\lambda) = m^*_B + m_1(\lambda) + c\, C_l(\lambda) $$ (2)

where λ refers to a rest frame wavelength. The two functions m1 and Cl describe respectively the magnitude of an average supernova as a function of rest frame wavelength and the colour law. We choose m1(λB) = 0, Cl(λB) = 0 and Cl(λV) = −1, so that $m^*_B$ represents the actual magnitude in rest frame B band, and c = B − V + constant is the colour of the event. Determining m1 and Cl from the data is part of the light curve fitter training. Rather than modelling the functions themselves, we model offsets to the same functions extracted from the SALT2 model, by defining:

$$ m_1(\lambda) = m_1^{\rm SALT2}(\lambda) + p_1(\lambda), \qquad C_l(\lambda) = C_l^{\rm SALT2}(\lambda) + p_2(\lambda) $$ (3)

where p1 and p2 are polynomials with N1 and N2 parameters to be fitted respectively. The role of these two parametrised functions is to correct broadband inaccuracies of an otherwise correct “narrow band” modelling of supernovae and their colour variations. These inaccuracies are typically expected from photometric calibration errors of the training sample, and do not require sharp corrections. We settle for 10 parameters for each polynomial, which covers spectral resolutions coarser than ~20. Our results do not degrade significantly with larger values, up to ~20 parameters, where the Fisher matrix becomes numerically singular, because the broadband data do not contain enough information at these higher spectral resolutions.

These two polynomials can be defined either as affecting the amplitude of a light curve (at the central wavelength of the considered filter) or as multiplicative factors affecting the supernova flux before integration in the passband. We eventually settle for the second approach because it is more realistic.

4.3.4. Distance estimator parameters

The simplest way to model the brighter-slower and brighter-bluer correlations is via linear coefficients in the distance modulus (see e.g. Tripp 1998; Astier et al. 2006):

$$ \mu = m^*_B - \mathcal{M} + \alpha X_1 - \beta c $$ (4)

where X1 may either be the actual X1 parameter from SALT2 or any other empirical parameter describing light curve width. ℳ is the intrinsic B-band magnitude of a supernova of null X1 and c. Note that a rest frame band different from B can be chosen, as well as a different colour, as in Freedman et al. (2009). All supernova cosmology works regard the brighter-slower relation (α) as empirical and explicitly or implicitly fit for it. Putting the β parameter on an equal footing is much less common because the assumption that colour variations among supernovae are caused by dust extinction readily provides a value (or a range of values) for β together with a prediction for the Cl function above. Many supernova works assume that the brighter-bluer relation of SNe, parametrised by our β parameter, is due to reddening by dust similar to Milky Way dust. Although one should indeed expect that some amount of dust extinction is at play, other sources might dominate colour variations among events. Fitting for β constitutes a more general approach than assuming its value, especially since the values found depart from Milky Way dust reddening (e.g. Tripp 1998, and references therein; Astier et al. 2006; Freedman et al. 2009; Kowalski et al. 2008; Amanullah et al. 2010). Assuming that colour variations of supernovae are due to Milky Way like dust not only increases the scatter of distances but also may cause artefacts such as evidence for a Hubble bubble (Conley et al. 2007). In Albrecht et al. (2009), the difference between the expectation that the brighter-bluer relation of SNe follows reddening by Milky Way dust and evidence from measurements that it is not the case is described as a systematic uncertainty. We will not follow this route: since distances depend on this measurable β parameter (or its equivalent in a more complex distance estimator), it has to be measured, whatever the source of colour variation is.

In the distance modulus sketched above, α and β may be either global coefficients or depend on redshift, or depend on the environment of the supernova (characterised for example by host galaxy colours). We also emulate a possible unnoticed evolution of supernovae intrinsic brightness (or a smooth redshift dependent distance bias) by allowing a redshift dependent intrinsic brightness:

$$ \mathcal{M}(z) = \mathcal{M}_0 + e_{\rm M}\, z $$ (5)

where ℳ0 and eM are parameters and eM is constrained by a Gaussian prior (around a null fiducial value). We will consider later several setups for α, β and ℳ. We will use a fiducial value of σ(eM) = 0.01, and we sketch a scheme to constrain it from the survey in Sect. 5.2. This modelling of the “uncertainty floor” is significantly different from the assumptions in Linder & Huterer (2003), where distance shifts in redshift bins are assumed independent, and the uncertainty in a given redshift bin depends on the highest redshift reached by the survey.
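To make the role of these nuisance parameters concrete, the short sketch below evaluates a Tripp-like distance modulus, μ = m*B − ℳ(z) + αX1 − βc, with an intrinsic brightness drifting linearly with redshift. The function names and all numerical values (event parameters, α, β, ℳ0) are illustrative assumptions and are not taken from the forecast; only the drift amplitude of 0.01 corresponds to the fiducial σ(eM) quoted above.

```python
def intrinsic_magnitude(z, M0, eM=0.0):
    """Intrinsic brightness with a linear drift in redshift."""
    return M0 + eM * z

def distance_modulus(mB, x1, c, z, alpha, beta, M0, eM=0.0):
    """Tripp-like estimator: mu = m*_B - M(z) + alpha * X1 - beta * c."""
    return mB - intrinsic_magnitude(z, M0, eM) + alpha * x1 - beta * c

# Illustrative (assumed) values for one event and for the global coefficients
event = dict(mB=24.0, x1=0.5, c=0.05, z=1.0)
nuisance = dict(alpha=0.14, beta=3.2, M0=-19.3)

mu_ref = distance_modulus(**event, **nuisance)
mu_drift = distance_modulus(**event, **nuisance, eM=0.01)
shift = mu_drift - mu_ref   # equals -eM * z for this model
print("distance modulus shift at z=1:", shift)
```

A drift of this kind is smooth in redshift, which is why it competes directly with the dark energy parameters and has to be bounded by a prior.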

4.3.5. Photometric calibration

Photometric calibration accuracy is naturally a major issue for supernova surveys. Supernova cosmology “only” requires relative calibration, in the sense that the heart of the method consists in comparing the flux of events across redshifts: cosmological results are insensitive to the overall flux scale. Supernova fluxes are however to be measured in different bands: distant supernovae should be measured in redder observer bands than nearby supernovae. Since supernova fluxes are calibrated against standard stars, the calibration uncertainty arises in the first place from our limited knowledge of the fluxes of these standards, more precisely of the ratio of their fluxes in different bands. A second contribution to flux calibration uncertainty arises from the measurement process itself, i.e. the systematic accuracy of the supernova to standards ratio measurement. We will examine later the influence of the uncertainty of photometric calibration zero-points, where these uncertainties should account for both sources. We typically assume that photometric zero points are known to 1% including the conversion to fluxes. This is conservative in view of currently obtained precisions in the visible (see Regnault et al. 2009; and Guy et al. 2010, for the total uncertainty).

Table 5. Dark energy constraints when considering specific subsets of uncertainties.

4.4. Cosmological priors

To forecast the measurement precision of supernova surveys one has to complement the distance measurements by other constraints, because distances alone cannot efficiently separate the various universe densities and the equation of state parameters. However, the parameter combination probed by distances to moderate redshifts makes them unique. We will complement the proposed measurements with “Planck priors”. For a geometrical probe like distances to SNe, these Planck priors essentially consist in a single constraint on the geometrical parameters (ΩM, ΩDE, w0, wa). Supernova distances yield a second strong constraint in the same parameter space, which is not enough to reduce the allowed region in the (w0, wa) plane. Planck priors are more efficient to complement BAO surveys for dark energy because CMB constrains a combination of geometrical parameters and sets the size of the BAO “standard ruler”. As an extra constraint for our supernova survey, we settle for the simplest one: flatness. In using CMB priors for dark energy, one should take care not to use dark energy information available through the ISW effect, because this information available on large scales might not be reliably extracted. This is achieved by exactly enforcing the “geometrical degeneracy” (see e.g. Albrecht et al. 2009; Mukherjee et al. 2008). Within this framework, using the full Fisher matrix or a single one-dimensional geometrical constraint makes little difference. Our “Planck priors” hence reduce to a measurement of the shift parameter R to 0.32% relative accuracy (see Mukherjee et al. 2008, Table 1), together with flatness.
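A single one-dimensional constraint of this kind contributes a rank-one term to the Fisher matrix. The sketch below illustrates this for the parameters (ΩM, w0, wa) of a flat universe, using the usual definition of the shift parameter, R = √ΩM H0 r(zCMB)/c. It relies on several assumptions that are not taken from this paper: radiation is neglected in the expansion rate, the last-scattering redshift is set to 1090, derivatives are taken by finite differences, and the function names are hypothetical.

```python
import numpy as np

Z_CMB = 1090.0  # assumed last-scattering redshift (illustrative)

def shift_parameter(om, w0, wa, n=4000):
    """R = sqrt(Omega_M) * H0 r(z_CMB) / c, flat universe, radiation neglected."""
    u = np.linspace(0.0, np.log(1.0 + Z_CMB), n)   # u = ln(1+z)
    zp1 = np.exp(u)
    z = zp1 - 1.0
    de = zp1 ** (3.0 * (1.0 + w0 + wa)) * np.exp(-3.0 * wa * z / zp1)
    e = np.sqrt(om * zp1 ** 3 + (1.0 - om) * de)
    f = zp1 / e                                    # dz / E = (1+z) / E du
    integral = np.sum(0.5 * (f[1:] + f[:-1]) * np.diff(u))
    return np.sqrt(om) * integral

def planck_prior_fisher(theta, rel_acc=0.0032, step=1e-4):
    """Rank-one Fisher matrix from a measurement of R with relative accuracy rel_acc."""
    theta = np.asarray(theta, dtype=float)
    r0 = shift_parameter(*theta)
    grad = np.empty(theta.size)
    for i in range(theta.size):
        d = np.zeros(theta.size)
        d[i] = step
        grad[i] = (shift_parameter(*(theta + d))
                   - shift_parameter(*(theta - d))) / (2.0 * step)
    g = grad / (rel_acc * r0)
    return np.outer(g, g)

# Fiducial flat LambdaCDM point: (Omega_M, w0, wa)
F_planck = planck_prior_fisher([0.27, -1.0, 0.0])
print("rank of the prior Fisher matrix:", np.linalg.matrix_rank(F_planck))
```

Adding this matrix to the supernova Fisher matrix (with flatness already imposed through ΩDE = 1 − ΩM) removes one degenerate direction in the geometrical parameter space, which is all the prior is meant to do here.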

5. Results

We simulate a fiducial flat ΛCDM universe with ΩM = 0.27. The equation of state is parametrised as w(z) = w0 + wa z/(1 + z). We assume that the scatter of the Hubble diagram for perfect measurements exactly matching the average supernova model (i.e. without colour smearing) is 0.12 mag. Current estimates of this quantity are below 0.10 (Guy et al. 2010), but since it can only be obtained by subtraction of identified uncertainties that might have been inadvertently inflated, we choose to stay on the safe side. We assume that the colour smearing (see Sect. 3.1) causes peak SN magnitudes in a single band to scatter around the model by a quantity σc = 0.025 (see Guy et al. 2010) unless otherwise specified. σc = 0.01 was assumed in Kim & Miquel (2006).

We restrict the rest frame central wavelength of the bands entering the fit to [3800–7000] Å, which leaves 3 to 4 bands per event. Enlarging this range formally improves the performance but breaks the requirement that similar rest frame ranges are used to derive distances at all redshifts. In a real survey, the whole information would of course be used, in particular to study the supernovae colours.

We will now study the survey performance regarding constraints on the equation of state. Following Albrecht et al. (2006), we define the pivot redshift zp as the one where the EoS uncertainty is minimal, and wp ≡ w(zp). As performance indicators, we report σ(wp), the uncertainty on the EoS evolution σ(wa), and the reciprocal of their product7, often used as a figure of merit (FoM). The quantity σ(wp) can be regarded as the ability of the project to challenge the cosmological constant paradigm.
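These indicators follow directly from the marginalised 2×2 covariance of (w0, wa). A minimal sketch is given below: the function name and the covariance values are assumptions chosen for illustration and are not results of this work. Writing x = z/(1 + z), the variance of w is σ²(w0) + 2x cov(w0, wa) + x² σ²(wa), which is minimal at x = −cov(w0, wa)/σ²(wa).

```python
import numpy as np

def pivot_summary(cov):
    """Pivot redshift, sigma(wp), sigma(wa) and FoM from cov(w0, wa)."""
    s00, s0a, saa = cov[0, 0], cov[0, 1], cov[1, 1]
    x = -s0a / saa                        # x = zp / (1 + zp) minimising var[w]
    zp = x / (1.0 - x)                    # meaningful when 0 <= x < 1
    sig_wp = np.sqrt(s00 - s0a ** 2 / saa)
    sig_wa = np.sqrt(saa)
    fom = 1.0 / (sig_wp * sig_wa)
    return zp, sig_wp, sig_wa, fom

# Arbitrary illustrative covariance: sigma(w0) = 0.1, sigma(wa) = 0.5,
# correlation coefficient -0.95 (assumed values)
cov_w0wa = np.array([[0.01, -0.0475],
                     [-0.0475, 0.25]])
zp, sig_wp, sig_wa, fom = pivot_summary(cov_w0wa)
print(zp, sig_wp, sig_wa, fom)
```

Since σ(wp) and σ(wa) are uncorrelated by construction, the FoM computed this way equals the inverse square root of the determinant of the (w0, wa) covariance, and so does not depend on the choice of pivot.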

In Table 5, we turn various uncertainty sources on and off, in setups labelled from A to Z. The setup A only considers photometry Poisson noise and Hubble diagram scatter, and the baseline scenario Z adds all other uncertainty sources discussed above. Some combinations of uncertainty sources are provided in between, in order to identify major uncertainty drivers. Lines B to F display the effect of one source at a time: the single source that most degrades the performance is the intrinsic brightness drift with redshift σ(eM), followed by the photometric calibration (zp). In lines G to M, both intrinsic drift with redshift (σ(eM) = 0.01) and colour smearing (σc = 0.025) are allowed, and we add other sources. We note that adding the supernova model fit alone (lines H, I, J compared to G) does not significantly degrade the performance and that adding in the calibration uncertainty (K compared to G) has a sizable effect. But the most dramatic effect is the combination of supernova model training and calibration uncertainty (lines H, K and L), because the N1 parameters of p1 (Eq. (3)) and the zero-points play the same role, the first set in the supernova frame and the latter in the observer frame. This highlights the role of calibration uncertainty through the light curve fitter training, and is supported by the findings of current ground-based surveys (Guy et al. 2010). Turning on or off the colour law fitting (N2, comparing lines G and I, or L and Z) has essentially no impact on the performance: there is indeed no benefit in relying on an assumption (such as a Cardelli law) for this part of the model.

One might be surprised that calibration uncertainties do not ruin the proposed survey since current ground-based supernova surveys face calibration-induced uncertainties comparable to statistics with “only” a few hundred events (Guy et al. 2010; Conley et al. 2010). There are two key differences: first, these surveys have to face cross-calibration issues with the nearby sample; second, the observed rest frame region varies with redshift, and the comparison of events across redshifts then heavily relies on the supernova model and inherits its uncertainties. This illustrates our requirement that all events be observed in the same rest frame range.

Comparing colour distributions along redshift constitutes a sensitive handle on astrophysics conditions evolving with time. This test is independent of the supernova model training, in the sense that the training does not aim at matching these distributions. The photometric calibration accuracy directly limits the comparison of colours at different redshifts: with zero-points defined at 0.01, colours can only be compared to ~0.015. Given the natural colour spread of ~0.1, this is a test at σ/7 level, which does not benefit from more than ~50 events in a redshift slice. For supernovae, the sensitivity of evolution tests constitutes another strong incentive to improve the calibration precision to a few per mil level.
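The numbers quoted in this paragraph can be recovered with a short calculation, sketched below under the assumption that a colour is the difference of two bands whose zero-point errors are independent, and that the statistical uncertainty on the mean colour of N events is the colour spread divided by √N. The variable names are illustrative.

```python
import math

sigma_zp = 0.01       # zero-point uncertainty per band (value quoted in the text)
colour_spread = 0.1   # natural colour spread (value quoted in the text)

# A colour involves two bands: independent zero-point errors add in quadrature.
colour_floor = math.sqrt(2.0) * sigma_zp

# Ratio of the natural spread to the calibration floor ("sigma/7 level").
ratio = colour_spread / colour_floor

# Number of events per redshift slice at which the statistical error on the
# mean colour, colour_spread / sqrt(N), reaches the calibration floor.
n_saturation = (colour_spread / colour_floor) ** 2

print(colour_floor, ratio, n_saturation)
```

Beyond this number of events the comparison is limited by calibration rather than statistics, which is why tightening the zero-points directly sharpens the evolution test.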

5.1. Variations of performance when altering parameters of the baseline.

Table 6. Effect of altering survey parameters of the baseline.

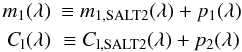

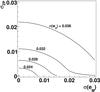

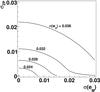

Table 6 illustrates the effect of parameters that determine the cosmological performance, and varies them one at a time. We can check that increasing the spectral resolution of the supernova model (N1 and N2) has almost no effect. If an intrinsic resolution σint better than 0.10 is confirmed, this yields a ~10% improvement of the FoM. Doubling the evolution systematics σ(eM) degrades it by 16%. Improving the zero-point accuracy by a factor of 2 improves it by 30%. The joint effect of calibration uncertainty and distance biases on σ(wp) is displayed in Fig. 2. One might also note that reducing statistics significantly alters the performance. Conversely, doubling the statistics improves the FoM by 50%. We should probably stress here that the wide survey should not be regarded as doable from the ground, because supernova distances at z ~ 1 make use of y and J bands. We finally vary the wide and deep survey allocations within a constant overall observing time, and note that this is not a key parameter. Reducing the deep survey marginally improves the FoM but at the expense of reducing the high redshift statistics, which is the most precious to tackle evolution issues. One might also regard the rest frame I-band Hubble diagram as an extension of the wide survey that would improve the constraints. If, following the SNAP approach, one includes 1000 nearby supernovae at z = 0.05 (measured from the ground), assuming that cross-calibration uncertainties are not worse than assumed here, and ignoring potential bias issues, the FoM reaches 110.

Fig. 2 Contour levels of σ(wp) as a function of calibration uncertainty σZP (equal for all filters), and the distance evolution uncertainty σ(eM) (defined in Eq. (5)).

One should seriously consider the possibility that there are subclasses of SNe Ia depending on environment as suggested by Mannucci et al. (2006). Sullivan et al. (2006, 2010) propose some observational evidence for different average properties of SNe occurring in passive and star-forming galaxies. It is not yet clear if these different environments produce different supernovae, or if these different environments sample differently the same parent population. We will consider however here the most dramatic case, where two environments produce two different event species described by different parameter sets, namely different α, β, ℳ (see Eq. (4)) and different supernova models (different p1 and p2 functions, see Eq. (3)). We consider that the host galaxy colours allow one to assign each event to one category. In the case of the admixture not evolving with redshift and categories having the same photometric quality, the variance of the cosmological estimators is mathematically the same as for a single species scenario, as shown in Appendix C. For a more realistic scenario, we varied the admixture with redshift from 30/70% at z = 0 to 70/30% at z = 1.5, and variances of the cosmological parameters do not increase by more than 1%.

So, if it turns out that SNe Ia consist of an admixture of species that can be tagged using host galaxy colours or properties of the supernova light curve, the proposed strategy retains its performance. Note that even if events are assigned the wrong category, the cosmological parameters remain unbiased as long as the fraction of interlopers does not evolve with redshift. We also considered the possible evolution with redshift of the α and β parameters (defined in Eq. (4)) by fitting those in redshift bins of 0.1, and variances are changed at the percent level. Even considering simultaneously event sub-classes and redshift bins results in only a minute degradation of performance.

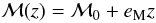

Finally, we replaced the evolution of ℳ(z) defined in Eq. (5) by:

$$ \mathcal{M}(z) = \mathcal{M}_0 + e_{\rm M} \frac{ [ \partial {\rm Log} d_L/\partial w_0 ] (z)} {[ \partial {\rm Log} d_L/\partial w_0 ] (z=1)} $$

where the denominator has been chosen so that σ(eM) represents the uncertainty at z = 1 as in Eq. (5). This functional dependence makes eM degenerate with w0. The choice of a systematic mimicking the dependence on w0 was inspired by Amara & Réfrégier (2008) where, in the weak shear framework, it is regarded as a worst case. However, in our case, for the same strength of the prior, we find that the FoM are larger than (or equal to) those obtained with the linear model of Eq. (5).

5.2. Constraining a redshift dependent bias of distances

As discussed in the previous paragraph, cosmological constraints from supernovae depend on our ability to constrain the systematic drift of distances with redshift. We investigate in this section a few handles that might be used to bound the effect or correct for it.

The proposed survey provides accurate colours of host galaxies extending to the observer NIR. This enables one to estimate host galaxy properties by comparing measurements to synthetic galaxy spectra, and analyse if derived distances (or other supernovae properties) depend on the host galaxy properties. This is the strategy followed in Sullivan et al. (2010), where evidence for a dependence of distances with host galaxy stellar mass is presented, and found harmless for cosmology. With the supernova statistics we are considering here, this approach becomes even stronger because it can be applied within a modest redshift range where photometric calibration issues affect all supernovae and host galaxies in the same way.

The metallicity of exploding white dwarfs is expected to increase (at least on average) with cosmic time. Metallicity certainly influences the amount of 56Ni synthesised in the explosion (Timmes et al. 2003), but we have no hint yet that standard distance estimators do not correct for this evolution. One might even argue that the range of environments found at, e.g., small redshifts efficiently “trains” distance estimators so that they remain unbiased as redshift varies.

However, in order to bound a possible redshift dependent distance bias we propose to use the near UV flux variations that explosion models correlate to metallicity variations (Hoeflich et al. 1998; Lentz et al. 2000; Sauer et al. 2008). Although the models do not necessarily agree on the size of the effects, they define the 2500–4000 Å spectral region as sensitive to admixtures in the progenitor material of other elements than Carbon and Oxygen (the ones of the baseline scenario). SNe Ia exhibit a colour diversity, but different colours appear to be tightly connected (see e.g. Astier et al. 2006; Conley et al. 2008; Folatelli et al. 2010). Defining U∗ as the 2500–3200 Å region, the rest frame combination (U∗ − B) − 5.4(B − V) is, from observations, the smallest scatter combination of the (U∗,B,V) triplet (Guy et al. 2010) (up to a multiplicative constant). Using synthetic data from Lentz et al. (2000), we can check that this three-band combination is both sensitive to metallicity, and nicely correlates to distance biases due to evolving metallicities. Quantitatively, when varying metallicity, the distance shift δμ varies as 0.1[(U∗ − B) − 5.4(B − V)], which allows one to constrain δμ at the 0.01 level in the presence of calibration uncertainties of 0.01 per band. The U∗ band is observable in the proposed survey beyond z = 0.6; it lies below the supernova model spectral region and is therefore ignored in the training.
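The quoted 0.01 level can be checked by propagating the per-band calibration uncertainty through the three-band combination. The sketch below assumes that the calibration errors of the three rest frame bands are independent, which is an assumption made here for illustration; the combination (U∗ − B) − 5.4(B − V) is first rewritten as U∗ − 6.4 B + 5.4 V.

```python
import math

sigma_band = 0.01   # calibration uncertainty per band (value quoted in the text)
slope = 0.1         # delta mu per unit of the colour combination (from the text)

# (U* - B) - 5.4 (B - V) = U* - 6.4 B + 5.4 V
coefficients = {"U*": 1.0, "B": -(1.0 + 5.4), "V": 5.4}

# Independent band errors (assumed) add in quadrature, weighted by the coefficients.
sigma_combination = sigma_band * math.sqrt(sum(a * a for a in coefficients.values()))
sigma_delta_mu = slope * sigma_combination

print(sigma_combination, sigma_delta_mu)
```

Under these assumptions the calibration-induced uncertainty on δμ stays slightly below 0.01, consistent with the statement above.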

Beyond the decline rate paradigm, the early phases of the light curve are expected to encode metallicity (see Hoeflich et al. 1998). Given the envisaged statistics, minute departures from the average light curve shape can be detected and correlated with other observables. One key quality of light curve shape indicators is that they remain unaffected by calibration issues.

6. Discussion

6.1. Comparison with weak shear performance

The ability of space-based measurements of the weak shear to constrain the cosmological model, and in particular its dark energy sector, was studied in detail in Amara & Réfrégier (2008) (and references therein). This work supports the strategy developed for the EUCLID project (Laureijs 2009), and identifies the measurement of the shear (intimately related to the measurement of second moments of galaxies) as the key systematic. The shear measurement uncertainty translates to a systematic uncertainty floor of the shear angular power spectrum. Current shear measurement techniques (Bridle et al. 2010) achieve a systematic uncertainty that would limit σ(wp) to about 0.05 (Fig. 11 of Amara & Réfrégier 2008, interpolated for the floor reached by the best algorithm in Table 5 of Bridle et al. 2010). One should note that this performance is obtained on simulations where, in particular, the PSF of the imaging system used to measure the shear is perfectly known. The EUCLID project requires that a more stringent floor be reached (Laureijs 2009), leading to σ(wp) ≃ 0.02.

Using the current performance of ground-based distance measurements to supernovae, we conservatively derive an EoS constraint σ(wp) ≃ 0.03 from a space-based survey. Such a constraint is in a position to genuinely complement EoS constraints from shear correlations. The two approaches are complementary not only because their redshift-dependent biases are unrelated: on the one hand, distance tests are independent of growth rate and have to be complemented (by e.g. Planck priors) in order to constrain the EoS; on the other hand, shear correlation tomography can autonomously constrain the EoS by assuming a given relation (from e.g. General Relativity) between distances and growth rate.

6.2. Summary

We have proposed a two-cone supernova survey conducted from space with a modified EUCLID setup: we assumed that both the visible and the NIR imagers are equipped with swappable filters. We find that it is possible to accurately measure more than 10⁴ supernovae at 0.15 < z < 1.55 in 18 months of survey. The photometric accuracy is tailored to match the measured intrinsic variability of colour relations of supernovae at the highest redshift of the survey. All events are measured in the 7 instrumental bands, and the BVR rest frame bands are covered at all redshifts. Our analysis of supernova distances relies on conservative assumptions and current know-how. It integrates many nuisance effects, such as the light curve fitter noise, together with the impact of a conservative photometric calibration uncertainty, both directly on cosmology and through the light curve fitter training. Our approach ensures that the interplay of identified uncertainties is properly accounted for. The proposed observing strategy also collects the data to build a rest frame I-band Hubble diagram to z ≃ 0.9, with ~7000 events.

Our results are encouraging: even when these realistic nuisance effects are included, competitive constraints on the dark energy equation of state can be obtained using a simple geometrical Planck prior. Within a two-parameter dark energy model, the EoS can be constrained to ~0.03 at z ≃ 0.3.

see e.g. http://snap.lbl.gov/

The expression was introduced in Kessler et al. (2009).

Assuming the disagreement is real, it might be due to the fact that the Li et al. (2010) sample comes from a search targeting nearby galaxies and could be missing events preferentially occurring in dwarf galaxies.

We assume that the object position is perfectly known: first it does not improve the flux variance, and second, we are studying here a supernova survey where the object is measured at the same position in an image series.

The Cl(λ) family reported in Cardelli et al. (1989) for extinction by dust is also obtained from colour–colour slopes. The arbitrary constant can however be determined by requiring that Cl(λ) is 0 for λ going to infinity. Observations extending to ~5 μm allow the authors to carry out the extrapolation. This is not possible in the supernova framework, and Cl(λ) is extracted from colour–colour relations up to an additive constant. SALT2 chooses Cl(λB) = 0.

This quantity is equal to Det(Cov(w0,wa))^(−1/2), and scales as the figures of merit inspired from Albrecht et al. (2006).
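As a minimal sketch of how this quantity can be evaluated, the snippet below computes Det(Cov(w0,wa))^(−1/2) from the three independent entries of a 2×2 covariance matrix, and compares it with 1/[σ(wp) σ(wa)]. It assumes the linear parametrisation w(a) = w0 + wa(1 − a) for the pivot uncertainty; the function names and the covariance values are hypothetical and are not results of this paper.

```python
import math

def figure_of_merit(var_w0, var_wa, cov_w0wa):
    """Det(Cov(w0, wa))^(-1/2) for a 2x2 covariance matrix."""
    det = var_w0 * var_wa - cov_w0wa ** 2
    return 1.0 / math.sqrt(det)

def pivot_uncertainty(var_w0, var_wa, cov_w0wa):
    """sigma(w_p): smallest uncertainty of w(a) = w0 + wa (1 - a) over a."""
    return math.sqrt(var_w0 - cov_w0wa ** 2 / var_wa)

# Hypothetical covariance entries, for illustration only.
var_w0, var_wa, cov = 0.01, 0.25, -0.045
fom = figure_of_merit(var_w0, var_wa, cov)
sigma_wp = pivot_uncertainty(var_w0, var_wa, cov)
fom_from_pivot = 1.0 / (sigma_wp * math.sqrt(var_wa))
print(fom, fom_from_pivot)
```

The two numbers agree because the determinant factorises as Var(wa) times σ²(wp), which is why the pivot uncertainty and this figure of merit carry closely related information.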

Acknowledgments

We are grateful to Peter Nugent for providing us with the simulated spectra discussed in Lentz et al. (2000), and to Andrew Howell for fruitful exchanges.

References

- Albrecht, A., Bernstein, G., Cahn, R., et al. 2006, [arXiv:astro-ph/0609591]

- Albrecht, A., Amendola, L., Bernstein, G., et al. 2009, [arXiv:0901.0721]

- Amanullah, R., Lidman, C., Rubin, D., et al. 2010, ApJ, 716, 712

- Amara, A., & Réfrégier, A. 2008, MNRAS, 391, 228

- Astier, P., Guy, J., Regnault, N., et al. 2006, A&A, 447, 31

- Bazin, G. 2008, PhD Thesis, Université Denis Diderot, IRFU-08-09-T

- Bridle, S., Balan, S. T., Bethge, M., et al. 2010, MNRAS, 405, 2044

- Cardelli, J. A., Clayton, G. C., & Mathis, J. S. 1989, ApJ, 345, 245

- Chevallier, M., & Polarski, D. 2001, Int. J. Mod. Phys. D, 10, 213

- Conley, A., Carlberg, R. G., Guy, J., et al. 2007, ApJ, 664, L13

- Conley, A., Sullivan, M., Hsiao, E. Y., et al. 2008, ApJ, 681, 482

- Conley, A., Guy, J., Sullivan, M., et al. 2010, submitted

- Folatelli, G., Phillips, M. M., Burns, C. R., et al. 2010, AJ, 139, 120

- Freedman, W. L., Burns, C. R., Phillips, M. M., et al. 2009, ApJ, 704, 1036

- Fukugita, M., Ichikawa, T., Gunn, J. E., et al. 1996, AJ, 111, 1748

- Guy, J., Astier, P., Nobili, S., Regnault, N., & Pain, R. 2005, A&A, 443, 781

- Guy, J., Astier, P., Baumont, S., et al. 2007, A&A, 466, 11

- Guy, J., Sullivan, M., Conley, A., et al. 2010, A&A, 523, A7

- Hoeflich, P., Wheeler, J. C., & Thielemann, F. K. 1998, ApJ, 495, 617

- Homeier, N. L. 2005, ApJ, 620, 12

- Hopkins, A. M., & Beacom, J. F. 2006, ApJ, 651, 142

- Johnson, B. D., & Crotts, A. P. S. 2006, AJ, 132, 756

- Kessler, R., Becker, A. C., Cinabro, D., et al. 2009, ApJS, 185, 32

- Kessler, R., Cinabro, D., Bassett, B., et al. 2010a, ApJ, 717, 40

- Kessler, R., Conley, A., Jha, S., & Kuhlmann, S. 2010b [arXiv:1001.5210]

- Kim, A. G., & Miquel, R. 2006, Astropart. Phys., 24, 451

- Kowalski, M., Rubin, D., Aldering, G., et al. 2008, ApJ, 686, 749

- Laureijs, R. 2009 [arXiv:0912.0914]

- Leinert, C., Bowyer, S., Haikala, L. K., et al. 1998, A&AS, 127, 1

- Lentz, E. J., Baron, E., Branch, D., Hauschildt, P. H., & Nugent, P. E. 2000, ApJ, 530, 966

- Li, W., Leaman, J., Chornock, R., et al. 2010, MNRAS, submitted [arXiv:1006.4612]

- Linder, E. V., & Huterer, D. 2003, Phys. Rev. D, 67, 081303

- Mannucci, F., Della Valle, M., & Panagia, N. 2006, MNRAS, 370, 773

- Mannucci, F., Della Valle, M., & Panagia, N. 2007, MNRAS, 377, 1229

- Mukherjee, P., Kunz, M., Parkinson, D., & Wang, Y. 2008, Phys. Rev. D, 78, 083529

- Palanque-Delabrouille, N., Ruhlmann-Kleider, V., Pascal, S., et al. 2010, A&A, 514, A63

- Peacock, J. A., Schneider, P., Efstathiou, G., et al. 2006, ESA-ESO Working Group on Fundamental Cosmology, Tech. rep.

- Perlmutter, S., Aldering, G., Goldhaber, G., et al. 1999, ApJ, 517, 565

- Perrett, K., Sullivan, M., Conley, A., Gonzales-Gaitan, S., & Carlberg, R. 2010, in prep.

- Poznanski, D., Gal-Yam, A., Maoz, D., et al. 2002, PASP, 114, 833

- Réfrégier, A. 2009, Exper. Astron., 23, 17

- Réfrégier, A., Boulade, O., Mellier, Y., et al. 2006, Proc. SPIE, 6265, [arXiv:astro-ph/0610062]

- Regnault, N., Conley, A., Guy, J., et al. 2009, A&A, 506, 999

- Richardson, D., Branch, D., Casebeer, D., et al. 2002, AJ, 123, 745

- Riess, A. G., Filippenko, A. V., Challis, P., et al. 1998, AJ, 116, 1009

- Ripoche, P. 2007, PhD Thesis, Université Aix-Marseille II

- Ripoche, P. 2008, Moriond Proceedings (Cosmology)

- Rodney, S. A., & Tonry, J. L. 2009, ApJ, 707, 1064

- Sauer, D. N., Mazzali, P. A., Blondin, S., et al. 2008, MNRAS, 391, 1605

- Sullivan, M., Le Borgne, D., Pritchet, C. J., et al. 2006, ApJ, 648, 868

- Sullivan, M., Conley, A., Howell, D. A., et al. 2010, MNRAS, 406, 782

- Timmes, F. X., Brown, E. F., & Truran, J. W. 2003, ApJ, 590, L83

- Tripp, R. 1998, A&A, 331, 815

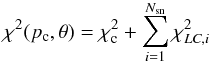

Appendix A: Simultaneous versus successive least squares fits

We compare here two approaches for the least-squares fits in the supernova framework. In the traditional approach, one first fits the event light curves separately to extract the event parameters, and then fits the cosmology to distances constructed from these event parameters. In the less conventional approach we present here, light curves and cosmology are fitted simultaneously, by minimising the sum:

$$\chi^2 = \chi^2_{\rm cosmo} + \chi^2_{\rm LC} \tag{A.1}$$

with

$$\chi^2_{\rm cosmo} = \sum_i \frac{\left[\mu(p_c, z_i) + \mathcal{M} - \mu_i(\theta_i)\right]^2}{\sigma_{\rm int}^2} \tag{A.2}$$

$$\chi^2_{\rm LC} = \sum_i \sum_{k=1}^{N_i} \frac{\left[f_{ik} - \phi_{ik}(\theta_i)\right]^2}{\sigma_{ik}^2} \tag{A.3}$$

where $i$ indexes SN events, $k = 1..N_i$ indexes the measurements $f_{ik}$ of an event, $\phi$ is the supernova model ($\phi_{ik}(\theta_i)$ being its prediction for measurement $f_{ik}$), $\theta_i$ are the parameters of event $i$ ($\theta$ denotes the ensemble of event parameters), $p_c$ are the cosmological parameters, $\mu(p_c,z)$ is the distance modulus at redshift $z$, $\mu_i(\theta_i)$ is the measured distance modulus of event $i$ (a linear combination of event parameters), and $\mathcal{M}$ is a combination of the intrinsic magnitude of a supernova and $H_0$. $\sigma_{\rm int}$ is the intrinsic dispersion of a supernova (defined as the “observed” Hubble diagram scatter for ideal measurements), and $\sigma_{ik}$ refers to measurement uncertainties. The first $\chi^2$ term fits cosmology, the second fits light curves, and both terms are related through the $\theta_i$ parameters.
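The comparison this appendix sets out to make can be illustrated numerically with a deliberately simplified toy model. The sketch assumes each event has a single parameter θ_i, a constant light curve model φ(θ_i) = θ_i, and a distance modulus equal to θ_i; the cosmological prediction μ(p_c, z_i) + ℳ is passed in as a plain number per event. All function names and data values are hypothetical.

```python
import random

def lc_chi2(theta, f, sigma):
    """Light curve chi2 of one event for the toy model phi(theta) = theta."""
    return sum(((fi - theta) / s) ** 2 for fi, s in zip(f, sigma))

def fit_event(f, sigma):
    """Separate light curve fit: weighted mean and its variance."""
    w = [1.0 / s ** 2 for s in sigma]
    theta_hat = sum(wi * fi for wi, fi in zip(w, f)) / sum(w)
    return theta_hat, 1.0 / sum(w)

def two_step_chi2(mu_model, events, sigma_int):
    """Fit each event first, then compare fitted distances to the model."""
    total = 0.0
    for m, (f, sigma) in zip(mu_model, events):
        theta_hat, var = fit_event(f, sigma)
        total += (m - theta_hat) ** 2 / (sigma_int ** 2 + var)
        total += lc_chi2(theta_hat, f, sigma)  # does not depend on cosmology
    return total

def simultaneous_chi2(mu_model, events, sigma_int):
    """Joint chi2, minimised over each theta_i at fixed cosmology."""
    w0 = 1.0 / sigma_int ** 2
    total = 0.0
    for m, (f, sigma) in zip(mu_model, events):
        w = [1.0 / s ** 2 for s in sigma]
        # Closed-form minimum of the joint chi2 for this linear toy model.
        theta = (w0 * m + sum(wi * fi for wi, fi in zip(w, f))) / (w0 + sum(w))
        total += w0 * (m - theta) ** 2 + lc_chi2(theta, f, sigma)
    return total

random.seed(1)
sigma_int = 0.12
mu_model = [0.0, 0.3, -0.2]  # stands for mu(p_c, z_i) + M, arbitrary values
events = []
for m in mu_model:
    sigma = [0.05, 0.1, 0.2, 0.08]
    events.append(([random.gauss(m, s) for s in sigma], sigma))

joint = simultaneous_chi2(mu_model, events, sigma_int)
split = two_step_chi2(mu_model, events, sigma_int)
print(joint, split)
```

For this linear toy model the two values agree to rounding error. For a real, non-linear light curve model the agreement would only hold to first order in error propagation.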

If one minimises separately the light curve parts with respect to $\theta_i$, $\chi^2_{\rm LC}$ may be approximately rewritten:

$$\chi^2_{\rm LC} \simeq \sum_i \left[ \chi^2_{{\rm LC},i}(\hat\theta_i) + \frac{1}{2} (\theta_i - \hat\theta_i)^T W_i \, (\theta_i - \hat\theta_i) \right] \tag{A.4}$$

where $\hat\theta_i$ minimises $\chi^2_{{\rm LC},i}$ (the contribution of event $i$ to $\chi^2_{\rm LC}$) and $W_i$ is its second derivative matrix w.r.t. $\theta_i$. The approximation would be exact if the supernova model were linear with respect to its parameters. The approximation holds for error propagation at first order, and will be used only for this purpose. In order to compare the simultaneous fit of $p_c$ and all $\theta_i$ with the two-step process, we will compute $\chi^2(p_c, \tilde\theta(p_c))$, where $\tilde\theta(p_c)$ denotes the $\theta$ value that minimises the global $\chi^2$ (A.1) for a given value of $p_c$. A tedious calculation yields:

$$\chi^2(p_c, \tilde\theta(p_c)) = \sum_i \frac{\left[\mu(p_c, z_i) + \mathcal{M} - \hat\mu_i\right]^2}{\sigma_{\rm int}^2 + {\rm Var}(\hat\mu_i)} + \sum_i \chi^2_{{\rm LC},i}(\hat\theta_i) \tag{A.5}$$

which corresponds to the standard cosmology fit that one would perform after a separate fit of all events to yield $\hat\mu_i \equiv \mu_i(\hat\theta_i)$