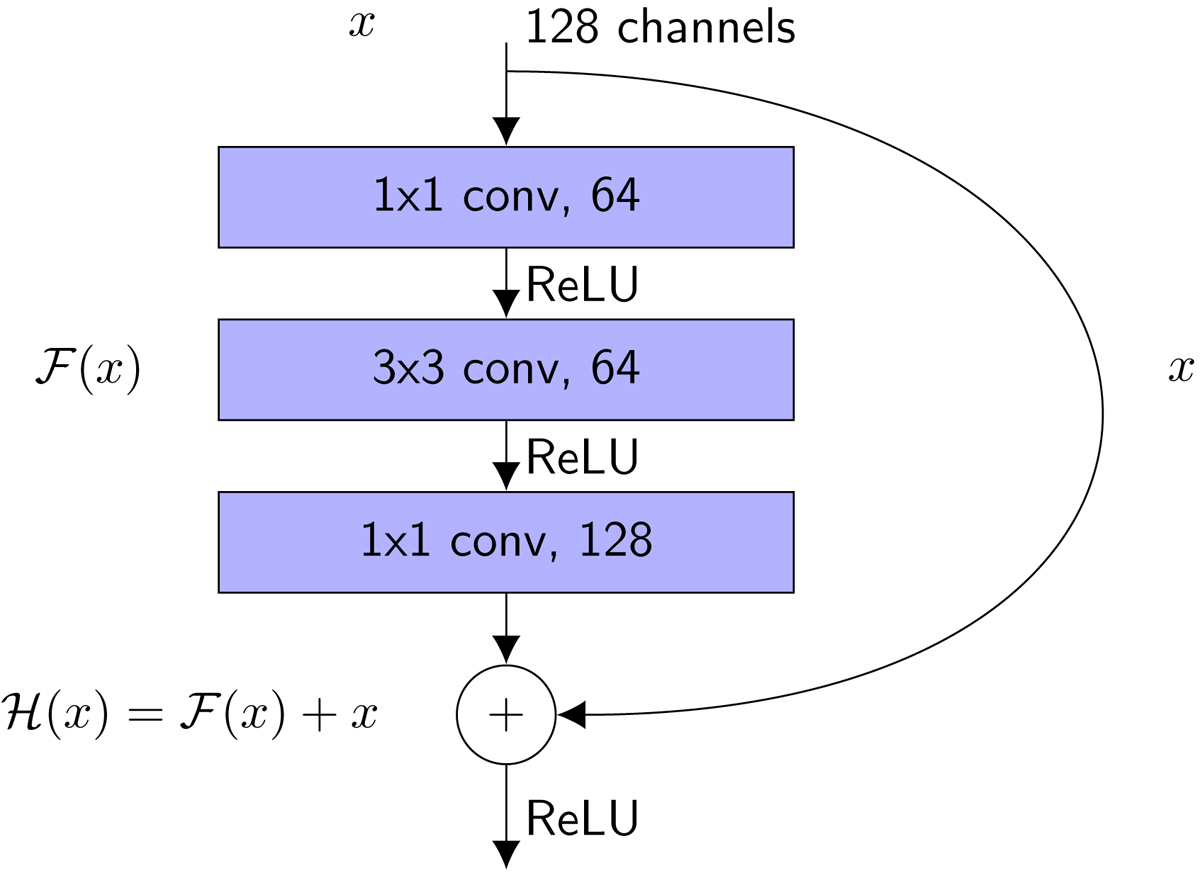

Fig. 2.

Example building block of a ResNet, consisting of three sequential convolutional layers. The input is a 128-channel activation map, which is passed through 64 1 × 1 convolutional filters that extract a 64-channel feature map. After a ReLU activation, these features are passed through 64 3 × 3 convolutional filters. The first layer compresses the channels before the 3 × 3 convolutional layer, which reduces the number of trainable parameters. The ReLU activation is then applied again, and the final 1 × 1 convolutional layer expands the number of channels back to 128. Finally, these outputs are summed with the inputs via a skip connection and passed through a ReLU activation.
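The parameter saving from the 1 × 1 compression can be checked with a short count. The sketch below assumes standard 2D convolutions with one bias per output channel (the caption does not say whether biases or batch normalization are used), and compares the block against a hypothetical single 3 × 3 convolution applied directly to all 128 channels. The function names `conv_params` and `bottleneck_params` are illustrative only.

```python
def conv_params(c_in: int, c_out: int, k: int, bias: bool = True) -> int:
    """Trainable parameters of a k x k convolution mapping c_in to c_out channels."""
    # Weights: one k x k kernel per (input channel, output channel) pair.
    # Bias (assumed): one scalar per output channel.
    return c_out * c_in * k * k + (c_out if bias else 0)


def bottleneck_params(c: int = 128, c_mid: int = 64, bias: bool = True) -> int:
    """Parameters of the three-layer block: 1x1 compress, 3x3, 1x1 expand."""
    return (
        conv_params(c, c_mid, 1, bias)        # 1x1: 128 -> 64 channels
        + conv_params(c_mid, c_mid, 3, bias)  # 3x3: 64 -> 64 channels
        + conv_params(c_mid, c, 1, bias)      # 1x1: 64 -> 128 channels
    )


# The identity skip connection and the ReLUs add no trainable parameters.
bottleneck = bottleneck_params()
plain_3x3 = conv_params(128, 128, 3)  # hypothetical baseline, not from the figure

print(bottleneck)  # 53504
print(plain_3x3)   # 147584
```

Under these assumptions the whole three-layer block has roughly 36% of the parameters of a single 3 × 3 layer operating on all 128 channels, because the expensive 3 × 3 kernels only ever see 64 channels.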
